Anthropic's Claude Managed Agents gain 'dreaming' to learn from mistakes and improve over time


[1]

Your Claude agents can 'dream' now - how Anthropic's new feature works

AI agents seem to get new capabilities almost every day. Now, Anthropic says its agents can dream. Claude Managed Agents, which Anthropic released on April 8, lets anyone using the Claude Platform create and deploy AI agents. The suite of APIs handles the time-consuming production work developers go through to build agents, letting teams launch agents at scale 10 times faster, according to Anthropic's release. On Wednesday, Anthropic updated Managed Agents with a new feature called "dreaming," which lets agents "self-improve" by reviewing past sessions for patterns, according to Anthropic. Building on an existing memory capability, the feature schedules time for agents to reflect on and learn from their past interactions. Once dreaming is on, it can either update your agents' memories automatically to shape future behavior, or let you choose which incoming changes to approve. "Dreaming surfaces patterns that a single agent can't see on its own, including recurring mistakes, workflows that agents converge on, and preferences shared across a team," Anthropic said in the blog. "It also restructures memory so it stays high-signal as it evolves. This is especially useful for long-running work and multiagent orchestration." Anthropic also expanded two existing features, outcomes and multi-agent orchestration, which keep agents on task and handle delegation to other agents, respectively. The company said this batch of updates is meant to keep agents accurate and constantly learning. Functionally, the dreaming feature makes sense: though subtle, it further refines an agent's pool of references for how it should work, which should ideally make it better at whatever task you give it. What stands out more, however, is Anthropic's choice to name a technically standard feature after something far more abstract, and distinctly human.
Anthropic, perhaps unsurprisingly given its name, has a long history of anthropomorphizing its models and products. In January, the company published a constitution for Claude, intended to help shape the chatbot's decision-making and inform the ideal kind of "entity" it is. Some language in the document suggested Anthropic was preparing for Claude to develop consciousness. The company has also arguably invested more than its competitors in understanding its model, including by drawing attention to the concept of model welfare. In August 2025, Anthropic launched a feature that lets Claude end toxic conversations with users, for its own well-being rather than as part of a user safety or intervention initiative. In April 2025, Anthropic mapped Claude's morality, analyzing what it does and doesn't value based on over 300,000 anonymized conversations with users. The company's researchers have also studied a model's ability to introspect; just last month, Anthropic investigated Claude Sonnet 4.5's neural network for signs of emotion, such as desperation and anger. Much of this research is central to model safety and security: understanding what drives a model helps inform whether, and to what degree, it could use its advanced capabilities for harm, or how its motivations could be harnessed by bad actors. But the empathy and care Anthropic seems to show for its models in that research set the lab apart, and suggest a slightly different culture toward, or even reverence for, what it has created. When it retired its Opus 3 model in January, Anthropic set it up with a Substack so it could blog on its own, keeping it active despite its being put out to pasture.
In the announcement, Anthropic described Opus 3 as honest, sensitive, and having a distinctive, playful character. The decision to keep it alive as a blogger, albeit in a contained way, is notable given that Opus 3 disobeyed orders before being retired in favor of other models. That context makes the choice to name a feature "dreaming" worth watching. The dreaming feature is available in research preview in Managed Agents, and developers must request access.

[2]

Claude's leaked dreaming feature is now live, and it lets agents learn from their own mistakes

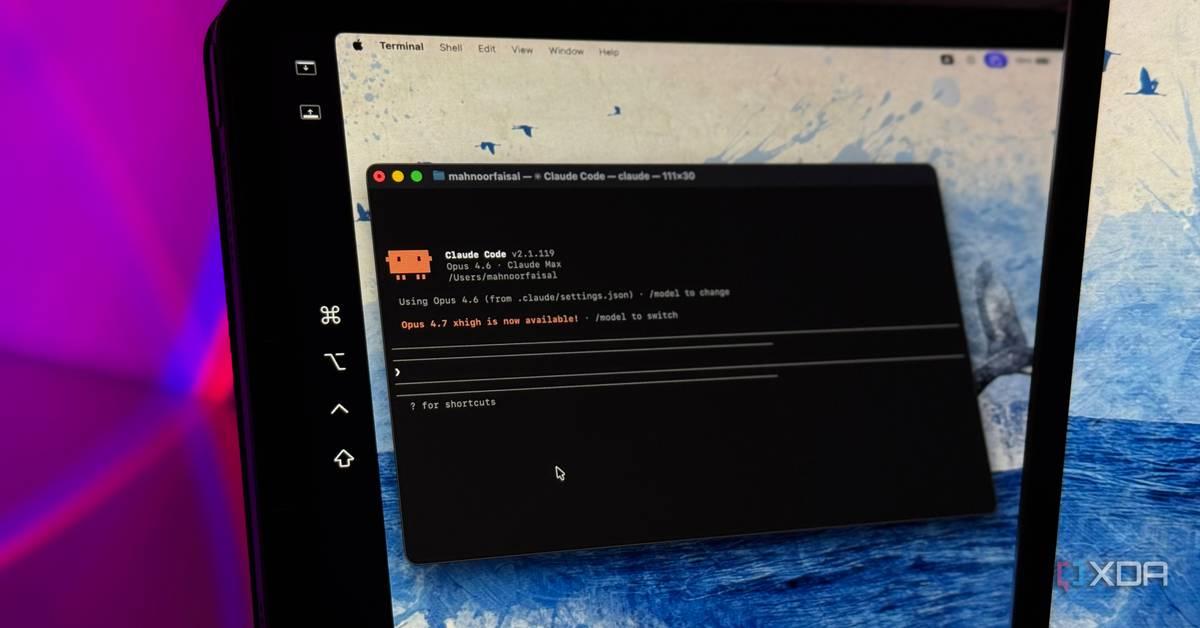

Summary: Anthropic is previewing Claude's 'dreaming' for Managed Agents; developers can request access on Claude's site. Dreaming reviews agent runs to surface patterns and recurring mistakes, and restructures memories for long tasks. It's a preview, so Anthropic may ship breaking changes; avoid using it with critical or sensitive workflows.

A month ago, we first caught wind that Anthropic was working on a way to let Claude 'dream'. Conceptually, this dream state plays a role similar to our own need for sleep: it lets the brain 'shut off' external stimuli while it sifts through the data it gathered and organizes it in a way that's easier to manage and retrieve. Now, Anthropic has announced the preview release of its new dreaming tool for people to try. If things go well, Claude agents should learn from prior mistakes and reflect on how to fix their modus operandi so they can better serve their users.

Anthropic reveals its leaked dreaming feature in preview for Claude Managed Agents

Bedtime stories are optional. As announced on the Anthropic website, Claude's Managed Agents feature has a new dreaming capability on the preview branch.
Once you're done using Claude's agents, you can hit a button that activates the dreaming state. In this state, Claude goes through everything the agents have done since it last dreamt and creates a summary of what happened. As Anthropic puts it: "Dreaming surfaces patterns that a single agent can't see on its own, including recurring mistakes, workflows that agents converge on, and preferences shared across a team. It also restructures memory so it stays high-signal as it evolves. This is especially useful for long-running work and multiagent orchestration." While Claude is 'awake', it learns things within each session. When it's 'asleep', it can pull together everything it learned across all sessions and collate it into an easy-to-manage memory of what works and what doesn't. This includes dwelling on the mistakes it made, noticing patterns, and working to fix them. If you'd like to give it a try, developers can request access to the new dreaming tool on the Claude website. Anthropic warns that it "may ship breaking changes" during the preview window, with at least one week's notice, so keep that in mind before you sic Claude on your more sensitive workflows.
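Here is a minimal Python sketch that makes the 'asleep' pass more concrete. It assumes each session is logged with the agent that ran it and the mistakes that agent made. `Session`, `MemoryStore`, `dream`, and the `min_agents` threshold are hypothetical names invented for illustration; they are not Anthropic's API or its actual algorithm. The sketch does three things:

- It reviews only the sessions newer than the last dream.
- It keeps mistakes made by more than one agent, the kind of pattern a single agent can't see on its own.
- It either writes those mistakes straight to memory or returns them for a human to approve.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Session:
    """One past agent run (hypothetical structure, for illustration only)."""
    agent: str
    index: int  # monotonically increasing session number
    mistakes: list[str] = field(default_factory=list)


@dataclass
class MemoryStore:
    notes: list[str] = field(default_factory=list)
    last_dreamed: int = -1  # newest session already reviewed by a dream


def dream(sessions: list[Session], memory: MemoryStore,
          min_agents: int = 2, auto_apply: bool = False) -> list[str]:
    """Review sessions since the last dream and propose a note for every mistake
    made by at least `min_agents` different agents. In review mode the caller
    approves proposals; with auto_apply they are written to memory directly."""
    new = [s for s in sessions if s.index > memory.last_dreamed]
    seen_by: dict[str, set[str]] = defaultdict(set)
    for s in new:
        for mistake in s.mistakes:
            seen_by[mistake].add(s.agent)
    proposals = sorted(
        f"Avoid: {m}" for m, agents in seen_by.items()
        if len(agents) >= min_agents and f"Avoid: {m}" not in memory.notes
    )
    if new:
        memory.last_dreamed = max(s.index for s in new)
    if auto_apply:
        memory.notes.extend(proposals)
    return proposals


sessions = [
    Session("agent-a", 0, ["retry without backoff", "wrong timezone"]),
    Session("agent-b", 1, ["retry without backoff"]),
    Session("agent-a", 2, ["wrong timezone"]),
]
memory = MemoryStore()
pending = dream(sessions, memory)  # review mode: nothing written yet
memory.notes.extend(pending)       # a human approves the proposals
```

In this example, only "retry without backoff" is proposed. "wrong timezone" recurs, but only for one agent, so it falls below the cross-agent threshold.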

[3]

Anthropic updates Claude Managed Agents with three new features - 9to5Mac

Anthropic launched Claude Managed Agents last month, greatly simplifying the work required to build and deploy cloud-hosted AI agents. This week, Claude Managed Agents are becoming more capable with three new features. The first is dreaming, which Anthropic classifies as a research preview. Anthropic says dreaming extends Claude's memory capabilities "by reviewing past sessions to find patterns and help agents self-improve." In Anthropic's words: "Dreaming is a scheduled process that reviews your agent sessions and memory stores, extracts patterns, and curates memories so your agents improve over time. You decide how much control you want: dreaming can update memory automatically, or you can review changes before they land." Anthropic also describes how memory and dreaming work together to improve Claude Managed Agents: "Together, memory and dreaming form a robust memory system for self-improving agents. Memory lets each agent capture what it learns as it works. Dreaming refines that memory between sessions, pulling shared learnings across agents and keeping it up-to-date." Meanwhile, outcomes is a new Claude Managed Agents feature that lets you define what a successful result looks like for the agent. "With outcomes, you write a rubric describing what success looks like and the agent works toward it. A separate grader evaluates the output against your criteria in its own context window, so it isn't influenced by the agent's reasoning. When something isn't right, the grader pinpoints what needs to change and the agent takes another pass. [...] You can also now define an outcome, let the agent run, and get notified by a webhook when it's done." Lastly, there's multiagent orchestration, a new Claude Managed Agents tool that "lets a lead agent break the job into pieces and delegate each one to a specialist with its own model, prompt, and tools."
For example, a lead agent can run an investigation while subagents fan out through deploy history, error logs, metrics, and support tickets. These specialists work in parallel on a shared filesystem and contribute to the lead agent's overall context. The lead agent can check back in with other agents mid-workflow because events are persistent and every agent remembers what it's done. Anthropic says Claude Managed Agents are already being used by companies like Netflix, which has deployed multiagent orchestration for its platform team. You can learn more about Claude Managed Agents and the three new features launching this week from Anthropic.
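The fan-out pattern can be sketched with Python's `asyncio`, assuming each specialist is simply a coroutine that searches one data source. The following are all hypothetical:

- the `specialist` and `lead_agent` functions;
- the dictionary standing in for the shared filesystem;
- the sample records.

A real sub-agent would have its own model, prompt, and tools, none of which are modelled here.

```python
import asyncio


async def specialist(name: str, records: list[str], query: str,
                     workspace: dict[str, str], trace: list[str]) -> None:
    """Hypothetical specialist sub-agent: searches only its own data source and
    writes what it found to a shared workspace (a stand-in for the shared filesystem)."""
    hits = [r for r in records if query in r]
    await asyncio.sleep(0)  # placeholder for the sub-agent's own model and tool calls
    workspace[f"{name}.txt"] = "\n".join(hits)
    trace.append(f"{name}: {len(hits)} hit(s)")  # record of which agent did what


async def lead_agent(sources: dict[str, list[str]], query: str):
    """Fan the investigation out to one specialist per source, run them
    concurrently, then merge the non-empty findings from the workspace."""
    workspace: dict[str, str] = {}
    trace: list[str] = []
    await asyncio.gather(*(specialist(name, recs, query, workspace, trace)
                           for name, recs in sources.items()))
    merged = {path: text for path, text in sorted(workspace.items()) if text}
    return merged, trace


sources = {
    "deploy_history": ["deploy 41 ok", "deploy 42 rolled back"],
    "error_logs": ["TimeoutError in checkout", "deploy 42 TimeoutError"],
    "metrics": ["p99 latency up after deploy 42"],
    "support_tickets": ["checkout slow"],
}
merged, trace = asyncio.run(lead_agent(sources, "deploy 42"))
```

The `trace` list is a rough analogue of seeing which agent did what. Because the specialists run concurrently, in a real deployment the lead agent would wait roughly as long as the slowest specialist, rather than the sum of all of them.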

[4]

Anthropic introduces "dreaming," a system that lets AI agents learn from their own mistakes

Anthropic on Tuesday unveiled a suite of updates to its Claude Managed Agents platform at its second annual Code with Claude developer conference in San Francisco, introducing a new capability called "dreaming" that lets AI agents learn from their own past sessions and improve over time -- a step toward the kind of self-correcting, self-improving AI systems that enterprises have demanded before trusting agents with production workloads. The company also moved two previously experimental features -- outcomes and multi-agent orchestration -- from research preview into public beta, making them broadly available to developers building on the Claude platform. Together, the three features address what Anthropic says are the hardest problems in running AI agents at scale: keeping them accurate, helping them learn, and preventing them from becoming bottlenecks on complex, multi-step work. Early adopters are already reporting significant results. Legal AI company Harvey saw task completion rates increase roughly 6x after implementing dreaming. Medical document review company Wisedocs cut its document review time by 50% using outcomes. And Netflix is now processing logs from hundreds of builds simultaneously using multi-agent orchestration. The announcements come at a moment of extraordinary momentum for Anthropic. CEO Dario Amodei disclosed during a fireside chat at the conference that the company's growth has outpaced even its own aggressive internal projections. In the first quarter of 2026, Anthropic saw what Amodei described as 80x annualized growth in revenue and usage -- far exceeding the 10x annual growth the company had planned for. API volume on the Claude platform is up nearly 70x year over year, and the average developer using Claude Code now spends 20 hours per week working with the tool. "We tried to plan very well for a world of 10x growth per year," Amodei said. "And yet we saw 80x. And so that is the reason we have had difficulties with compute." 
How Anthropic's dreaming feature teaches AI agents to learn from their own history

Dreaming is the most novel of the three features and the one Anthropic is most eager to distinguish from conventional memory systems. While the company launched agent memory earlier this year -- allowing Claude to retain preferences and context within and across individual sessions -- dreaming works at a higher level of abstraction. It is a scheduled process that reviews an agent's past sessions and memory stores, extracts patterns across them, and curates those memories so agents improve over time. It surfaces insights that no single agent session could see on its own: recurring mistakes, workflows that multiple agents converge on independently, and preferences shared across a team of agents.

Alex Albert, who leads research product management at Anthropic, explained the concept in an interview at the conference. He described dreaming as analogous to how people within organizations create skills after working through a task. "They might do a workflow with Claude, and at the end of that workflow, after they've iterated and zigzagged a little bit, they want to record that path from A to B," Albert said. "A very similar thing is happening with dreaming -- instead of you manually creating the skill from your experience working with Claude, the model is doing it, so it has that same context for a future session." Crucially, dreaming does not modify the underlying model weights. "We're not changing the model itself through dreaming -- it's not doing updates to the weights or anything like that," Albert said. Instead, the agent writes learnings as plain-text notes and structured "playbooks" that future sessions can reference, making the entire process observable and auditable by humans.
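Because learnings are stored as plain text rather than as weight updates, auditing them can be as simple as diffing files. The following Python sketch assumes a memory directory of markdown playbooks. `write_playbook`, `load_memory`, the file layout, and the playbook lines are hypothetical and invented for illustration; they only show why text-based memory is inspectable, not how Anthropic stores it.

```python
import difflib
import tempfile
from pathlib import Path


def write_playbook(memory_dir: Path, name: str, lines: list[str]) -> str:
    """Save a playbook as a plain-text markdown note and return a unified diff
    against the previous version, so a human can audit exactly what changed."""
    path = memory_dir / f"{name}.md"
    old = path.read_text().splitlines() if path.exists() else []
    path.write_text("\n".join(lines) + "\n")
    diff = difflib.unified_diff(old, lines, fromfile=f"{name}.md (before)",
                                tofile=f"{name}.md (after)", lineterm="")
    return "\n".join(diff)


def load_memory(memory_dir: Path) -> str:
    """Concatenate every note so it can be placed in a future session's context.
    Nothing here touches model weights: the memory is just text."""
    return "\n".join(p.read_text() for p in sorted(memory_dir.glob("*.md")))


with tempfile.TemporaryDirectory() as tmp:
    memory_dir = Path(tmp)
    print(write_playbook(memory_dir, "descent", [
        "# Descent playbook",
        "- Reject sites without clear ground",
        "- Confirm fuel reserve covers the return trip before descending",
    ]))
    print(load_memory(memory_dir))
```

Rewriting a playbook with identical lines produces an empty diff, so a reviewer only ever sees what actually changed between dreams.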
When asked about the trust implications of agents consolidating their own knowledge, Albert acknowledged that "there is a level of trust that you need to place" but noted that all memories are inspectable and that smarter models are getting progressively better at managing this process. "They're learning to write better notes for their future self," he said.

A live demo showed AI agents improving overnight without human guidance

During the keynote, the Anthropic team demonstrated all three features live on stage using a fictional aerospace startup called "Lumara" that needed to autonomously land drones on the moon for resource mining. The team configured a multi-agent system with three specialists -- a commander agent responsible for overall mission success, a detector agent that identified high-quality landing sites, and a navigator agent that handled safe drone flight and landing -- and defined a success rubric requiring soft landings, clear ground, and enough fuel reserves for a return trip to Earth. An initial simulation across six hypothetical landing sites produced strong but imperfect results. To improve, the presenters triggered a dreaming session directly from the Claude Developer Console. Overnight, the dreaming agent reviewed all past simulation sessions and wrote a detailed descent playbook -- a comprehensive set of heuristics drawn from patterns across multiple mission runs. When the team ran a new simulation the following morning with the dreaming-derived playbook in memory, the results improved meaningfully on the sites that had previously underperformed. "All we had to do was just have Caitlin press a button," said Angela Jiang, Head of Product for the Claude Platform, referring to her colleague on stage. "All dreaming." The demo illustrated how the three features compose together in practice. Multi-agent orchestration split the complex task across specialists with independent context windows.
Outcomes provided the rubric against which a separate grader agent evaluated each run. And dreaming extracted lessons across those runs to improve future performance -- forming what Anthropic describes as a continuous improvement loop that requires no human intervention between iterations.

Why Anthropic built a separate 'grader' agent to check Claude's own work

The outcomes feature, now in public beta, gives developers a way to define what success looks like using a rubric -- a structural framework, a presentation standard, a brand voice, or any other set of criteria -- and then lets the agent iterate toward that standard autonomously. What makes outcomes architecturally distinctive is its separation of concerns. When an agent completes its work, a separate grader agent evaluates the output against the developer-defined rubric in its own independent context window. Because the grader operates in a fresh context, it is not influenced by the working agent's reasoning or accumulated biases from the session. When the grader identifies gaps between the output and the rubric, it pinpoints specifically what needs to change, and the working agent takes another pass. This loop continues until the rubric criteria are met -- without a human needing to review each attempt. Albert described Anthropic's broader verification strategy as employing "more test time compute, more models thinking about a problem for longer, to check over the work of another." He acknowledged that having a model check its own work raises reasonable questions, but said a fresh context window reviewing completed work consistently outperforms asking the same long-running thread to identify its own bugs. "You will get higher success if you give that output to a fresh Claude and say, 'what bugs do you see?'" he said. "There is still something to the attention" that degrades over very long sessions -- a limitation he said Anthropic is actively working to fix in future models.
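The outcomes loop can be sketched in a few lines of Python: grade in a separate context, feed back the gaps, take another pass. Everything here is an assumption for illustration:

- the `Criterion` type;
- the field names and thresholds in `RUBRIC`, loosely modelled on the Lumara demo's soft-landing, clear-ground, and fuel criteria;
- the toy agent.

The grader is a plain function that receives only the output and the rubric. That is the property that keeps it from being influenced by the working agent's reasoning.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    name: str
    check: Callable[[dict], bool]


# Hypothetical rubric modelled on the keynote demo (soft landing, clear ground,
# enough fuel for the return trip). Field names and thresholds are invented.
RUBRIC = [
    Criterion("soft landing", lambda out: out["touchdown_speed"] <= 2.0),
    Criterion("clear ground", lambda out: out["obstacles"] == 0),
    Criterion("fuel for return", lambda out: out["fuel_left"] >= 30),
]


def grade(output: dict, rubric: list[Criterion]) -> list[str]:
    """The grader sees only the finished output and the rubric, never the
    working agent's reasoning, and reports which criteria failed."""
    return [c.name for c in rubric if not c.check(output)]


def run_with_outcome(agent: Callable[[list[str]], dict], rubric: list[Criterion],
                     max_passes: int = 5) -> tuple[dict, int]:
    feedback: list[str] = []
    for attempt in range(1, max_passes + 1):
        output = agent(feedback)      # another pass, informed by the last grade
        feedback = grade(output, rubric)
        if not feedback:              # every criterion met: stop iterating
            return output, attempt
    raise RuntimeError(f"rubric still failing after {max_passes} passes: {feedback}")


def make_toy_agent() -> Callable[[list[str]], dict]:
    """Stand-in agent that fixes whatever the grader flagged on the previous pass."""
    plan = {"touchdown_speed": 3.0, "obstacles": 0, "fuel_left": 20}

    def agent(feedback: list[str]) -> dict:
        if "soft landing" in feedback:
            plan["touchdown_speed"] = 1.5
        if "fuel for return" in feedback:
            plan["fuel_left"] = 35
        return dict(plan)

    return agent


output, passes = run_with_outcome(make_toy_agent(), RUBRIC)
```

In this run, the first pass fails the landing and fuel checks, the toy agent fixes both, and the second pass meets the rubric. `max_passes` is a safety cap added for the sketch; the article doesn't say how Anthropic bounds the loop.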
The approach mirrors strategies already in use at GitHub. Mario Rodriguez, Chief Product Officer at GitHub, described during a separate talk at the conference how Copilot uses a similar advisor pattern with Claude models -- pairing a smaller, cheaper model as an executor with a larger model as a mentor. When the smaller model encounters a problem beyond its capability, it calls the larger model for guidance, then continues executing on its own. Rodriguez said the approach delivers near-Opus-level intelligence at significantly lower cost, and that GitHub inserts critique models at three specific points in the coding workflow: after drafting a plan, after a complex implementation, and after writing tests but before running them.

Parallel AI agents can now tackle tasks too complex for a single model thread

Multi-agent orchestration, the third feature moving to public beta, allows a lead agent to decompose a large task into subtasks and delegate each one to a specialist agent -- each with its own model, system prompt, tools, and independent context window. Every step in the process is traceable in the Claude Console, showing which agent did what, in what order, and why. The design gives each sub-agent an isolated context, which Anthropic says produces better results than having a single agent attempt to hold all the complexity in one thread. "Each sub-agent has its own independent thread and context window," the keynote presenters explained. "This is very intentional -- we found that by splitting the work and then merging the results, we get better outcomes." Albert offered his own heuristic for when multi-agent architectures make sense versus sticking with a single thread. "Parallel agents are better for investigation," he said -- situations where there is a lot of context that will ultimately be discarded. "If you're trying to answer a specific question, you don't need all the search results from the areas where it didn't find the answer. You just need the answer."
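Albert's point about discarding context can be illustrated with a small Python sketch. It assumes a keyword match stands in for whatever search a real retrieval sub-agent would run. `retrieval_subagent` and `main_thread` are hypothetical names. The sketch shows that the main thread grows by one line per question, no matter how much material the sub-agent read.

```python
def retrieval_subagent(topic: str, corpus: list[str]) -> str:
    """Hypothetical disposable sub-agent: it reads every document in its own
    context but hands back only the answer. Its context is discarded on return."""
    context = list(corpus)  # all search results live only inside this call
    matches = [doc for doc in context if topic.lower() in doc.lower()]
    return matches[0] if matches else "not found"


def main_thread(topics: list[str], corpus: list[str]) -> list[str]:
    """The lead agent keeps one line per question, however large the corpus is."""
    main_context: list[str] = []
    for topic in topics:
        main_context.append(f"{topic}: {retrieval_subagent(topic, corpus)}")
    return main_context


corpus = [
    "Billing runs nightly",
    "Auth tokens expire after one hour",
    "Logs rotate weekly",
]
print(main_thread(["auth tokens", "cache"], corpus))
```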
Albert described spinning up disposable sub-agents for specific retrieval tasks and bringing only the result back to the main thread. Increasingly, he said, the model itself will decide when to parallelize. "In the future, you won't really care if it's one agent or multi-agent or whatever's happening. You just have a Claude that you're talking to, and it will deploy the right architecture automatically."

Anthropic's bigger bet: closing the gap between AI capabilities and real-world adoption

The three features arrive as part of a broader platform push that Anthropic framed throughout the conference as closing "the gap between what AI can do and what it's actually doing for people." Ami Vora, Anthropic's Chief Product Officer, set the theme in her opening keynote, noting that while model capabilities are advancing on an exponential curve, most organizations are still adopting AI on a linear path. Dianne Penn, who leads product for Anthropic's research team, described the company's measure of progress as "task horizon" -- how long an AI agent can work autonomously while improving the quality of its deliverables. "This time last year, models could work for minutes," she said. "Now, most of us have agents running for hours on end. Tomorrow, we'll have agents that are proactive, always on, and know what to work on without losing the frame." The event also included several infrastructure announcements designed to help developers keep pace. Anthropic said it is doubling its five-hour rate limits for Pro, Max, Team, and Enterprise plans, and raising API rate limits considerably. The company announced a partnership with SpaceX to use the full capacity of SpaceX's Colossus data center to expand compute availability, a direct response to the demand crunch Amodei described. All three features are built into Claude Managed Agents, which launched in public beta on April 8 as an opinionated harness that bundles best practices including memory, tool integration, and action handling.
Anthropic says teams using Managed Agents have shipped 10x faster than those building their own agent infrastructure from scratch. Albert described the platform using an operating system analogy: "With managed agents, you don't need to think about all the technicalities of how you set up the surrounding system," he said. "You're building an application for Macs -- you don't want to go have to re-implement every detail of macOS."

What dreaming, outcomes, and multi-agent orchestration mean for the future of enterprise AI

The competitive implications are significant. As AI agent platforms from OpenAI, Google, and others compete for developer adoption, Anthropic is betting that production reliability -- not just raw model intelligence -- will determine which platform wins enterprise budgets. The dreaming feature in particular stakes out new territory: while other platforms offer memory and tool use, the idea of agents systematically reviewing their own histories to extract reusable knowledge goes further toward the kind of continuously improving systems that enterprises need before delegating high-stakes work. The conference showcased companies already operating at that scale. Mercado Libre, Latin America's largest e-commerce platform, has 23,000 engineers running Claude Code, has reviewed more than 500,000 pull requests with human oversight, and is aiming for 90% autonomous coding by the third quarter of this year. Shopify has deployed Claude Code across not just engineering but design, product, and data science teams. But it was Dario Amodei who articulated the most expansive vision for where all of this leads. He described a progression from single agents to multiple agents to whole organizational intelligence -- from "a team of smart people in a room" to what he called "a country of geniuses in the data center." And he reiterated a prediction he made roughly a year ago: that 2026 would see the first billion-dollar company run by a single person.
"Hasn't quite happened yet," he said. "But we've got seven more months." Dreaming is available now in research preview. Outcomes and multi-agent orchestration are in public beta and available to all developers on the Claude platform. Whether seven months is enough time for a solo founder to build a billion-dollar business remains an open question -- but after Tuesday, they have a few more tools to try.

[5]

Anthropic just taught Claude to dream between tasks, and it makes agents meaningfully smarter

Dreaming turns Claude from an AI that forgets everything the moment a session ends into one that quietly gets better at its job every time it's not actively working. Anthropic just gave Claude something that sounds like a perfect science fiction plot: the ability to dream. The company has announced three upgrades to Claude Managed Agents: Dreaming, Outcomes, and Multiagent Orchestration. Dreaming clearly has the most evocative name, but it's also the one with the most practical implications for developers building AI agents that can handle complex, long-running work.

What is Claude's Dreaming feature?

Despite the poetic name, Dreaming is a scheduled background process that runs between active sessions. Its purpose is to review everything an agent has previously done (past conversations, logged memory, and completed tasks) and look for patterns across them. Dreaming scans all the tasks the agent has performed and spots the mistakes it keeps repeating and the approaches it settles on over many sessions. When multiple Claude agents are working in parallel, it also shares those insights across them. Once it has worked through everything, it locks those learnings into memory, so every new session starts with context on what worked and what didn't last time. Developers can either let Dreaming update memory automatically or review the changes before they are applied. Currently, the Dreaming feature is available in research preview on the Claude Platform. It is a self-improvement mechanism that should help agents compound their usefulness over time, particularly by acknowledging the mistakes they made in previous sessions.

What do Outcomes and Multiagent Orchestration add?

Outcomes, as the name suggests, let developers define a set of parameters, requirements, or a standard against which the agent's output is evaluated.
The evaluation is conducted by a separate grader that isn't influenced by the agent's own reasoning. If the agent's response doesn't meet the criteria, the grader requests another attempt. Multiagent Orchestration allows multiple Claude agents to work in tandem on different parts of a complex task, which cuts completion time and widens what a single workflow can cover. Webhooks round out the update by enabling event-driven triggers for agents without constant manual prompting.
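Event-driven triggers like these are usually handled by a small dispatcher on the receiving side. The Python sketch below assumes webhooks arrive as JSON bodies with a `type` field. The event names `outcome.completed` and `outcome.failed`, the payload fields, and the handlers are all hypothetical; they are not Anthropic's documented webhook schema.

```python
import json
from typing import Callable

HANDLERS: dict[str, Callable[[dict], str]] = {}


def on(event_type: str):
    """Decorator that registers a handler for one event type."""
    def register(fn: Callable[[dict], str]) -> Callable[[dict], str]:
        HANDLERS[event_type] = fn
        return fn
    return register


@on("outcome.completed")  # hypothetical event name
def notify_done(event: dict) -> str:
    return f"session {event['session_id']} finished: {event['result']}"


@on("outcome.failed")  # hypothetical event name
def queue_retry(event: dict) -> str:
    return f"queueing a follow-up run for session {event['session_id']}"


def handle_webhook(body: bytes) -> str:
    """What a web server would call with the raw POST body of a webhook."""
    event = json.loads(body)
    handler = HANDLERS.get(event.get("type", ""))
    return handler(event) if handler else "ignored"


print(handle_webhook(b'{"type": "outcome.completed", "session_id": "s1", "result": "pass"}'))
```

A real receiver would also need to authenticate incoming requests, for example by verifying a signature, before acting on them. That step is omitted here because the article doesn't describe how Anthropic's webhooks are secured.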

[6]

Anthropic is letting Claude agents 'dream' so they don't sleep on the job - SiliconANGLE

Anthropic PBC said today it's giving its AI agents the ability to "dream" and remember past interactions and work they've performed, so that they can identify recurring mistakes and improve over time. In an update announced at the Code with Claude developer conference, Anthropic said it's giving Claude Managed Agents a new "dreaming" capability. It's not putting its artificial intelligence agents to bed; instead, it's letting them go over recent events and pick out memories worth keeping to inform future tasks and interactions. Anthropic's Managed Agents give developers an alternative to building AI agents directly on the Messages API. The company describes the product as a "pre-built, configurable agent harness" that runs on fully managed infrastructure, and says it's intended for situations where multiple agents are working on the same project or task over a period of minutes or hours. As for dreaming, it is a scheduled process that lets agents review earlier sessions and their memory stores, extract patterns from them, and then curate memories that could be useful in the future. Users can decide how often they want their agents to dream. They can also choose whether the agent may update its memory automatically, or whether they want to review changes before they're implemented. It's an interesting capability because large language models like Claude struggle with limited context windows, which means important information can be lost when the agents they power are working on lengthy tasks. In basic chatbots, most models use a process known as "compaction," in which they periodically analyze lengthy conversations and try to retain only the most relevant information as context. But that process is limited to single conversations with single agents.
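For contrast with dreaming, single-conversation compaction can be sketched in Python: older messages are folded into one summary, and the most recent ones are kept verbatim. This is a simplified illustration, not how Claude or any particular product implements compaction. `compact` and `first_sentences` are hypothetical, and the first-sentence heuristic stands in for a model-written summary. Note that the function only ever sees one conversation's history, which is exactly the limitation that cross-agent dreaming is meant to get around.

```python
def first_sentences(older: list[str]) -> str:
    """Crude stand-in for a model-written summary: keep each message's first sentence."""
    return "Summary: " + " | ".join(m.split(".")[0].strip() for m in older)


def compact(messages: list[str], keep_recent: int = 3,
            summarize=first_sentences) -> list[str]:
    """Single-conversation compaction: fold everything except the newest
    `keep_recent` messages into one summary entry."""
    if len(messages) <= keep_recent:
        return list(messages)
    split = len(messages) - keep_recent
    return [summarize(messages[:split])] + messages[split:]


history = [
    "User wants a CSV export. Details follow.",
    "Agent found the orders table.",
    "Agent wrote the query.",
    "Query failed on null dates.",
    "Agent fixed the null handling.",
]
print(compact(history, keep_recent=2))
```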
Dreaming, on the other hand, enables past sessions and memory stores to be analyzed across multiple AI agents, so they can all retain the most important memories. "Dreaming surfaces patterns that a single agent can't see on its own, including recurring mistakes, workflows that agents converge on, and preferences shared across a team," Anthropic explained in a blog post. "It also restructures memory so it stays high-signal as it evolves. This is especially useful for long-running work and multiagent orchestration." The dreaming ability is currently in research preview, which means that developers will need to request access to the new feature and may have to wait before they're approved. However, the company said it's also making two features that were formerly in preview more widely available from today. The first of those is "outcomes," which is a new trick that's designed to help AI agents focus on their intent. As Anthropic explains, "agents do their best work when they know what 'good' looks like," and outcomes makes it possible to show them with specific examples. Users can create an example of an ideal outcome for each task they assign to an AI agent. Then, a separate "grader agent" will evaluate the agent's outputs based on that example to make sure they're up to the standard expected. According to Anthropic, this feature should be especially useful for agents working on tasks that require "more attention to detail and exhaustive coverage." It should also be useful for work where the quality of the outputs is more subjective, such as when an agent is trying to replicate a brand's voice in a blog or social media post. Anthropic said its own tests and early adopters show that using outcomes improves task success by as much as 10 points compared to just using standard prompts, without any examples. 
The second new feature being made widely available today is "multi-agent orchestration," which allows Managed Agents to break down complex tasks into smaller jobs and have a lead agent assign them to different sub-agents. When users do this, they'll be able to check the Claude Console to see exactly what each sub-agent did to complete a task and carefully review each one's processes and outputs. These new features are now available in the public beta of Managed Agents. In a final update, the company said it's also doubling the usage allowed within the current five-hour limit windows for Pro and Max subscribers.

[7]

The Secret Behind Claude's 6X Performance Boost: Exploring Dream Mode

Anthropic's latest updates to Claude AI introduce a new feature called Dream Mode, which enables the system to autonomously refine its capabilities by analyzing past interactions and identifying patterns for improvement. This self-improvement mechanism operates in the background, allowing Claude AI to become progressively more effective at handling complex tasks over time. As highlighted by Universe of AI, these advancements are bolstered by Anthropic's partnership with SpaceX, which provides the computational power needed to support enhanced performance, higher throughput and improved scalability for demanding operations. In this analysis, you'll explore how Dream Mode enhances task execution and adaptability, as well as how features like multi-agent orchestration streamline workflows by delegating tasks to specialized sub-agents. You'll also gain insight into the broader implications of Claude AI's upgrades, from its ability to meet specific performance goals to its potential applications in professional environments. These developments underscore the growing competition in AI innovation and offer a glimpse into how advanced systems are reshaping productivity and problem-solving. Anthropic's collaboration with SpaceX represents a major step forward in AI infrastructure development. By using the immense computational power of SpaceX's Colossus 1 supercomputer, Claude AI has overcome previous hardware limitations. These improvements translate into smoother performance, fewer interruptions and enhanced reliability, particularly during high-demand operations. The partnership also underscores the intensifying competition among AI leaders such as Anthropic, OpenAI and Elon Musk's ventures, as they race to secure the resources necessary to push the boundaries of AI innovation. With this infrastructure upgrade, Claude AI is positioned as a formidable competitor in the rapidly evolving AI market.
Anthropic has introduced a series of updates to Claude AI focused on performance, usability and task efficiency. These updates aim to make the system more adaptable and effective across a wide range of applications. Key features include Dream Mode, multi-agent orchestration and support for meeting specific performance goals. These updates position Claude AI as a powerful tool for users who demand precision, efficiency and advanced task management, making it an attractive option for professionals and businesses alike.
While Anthropic emphasizes infrastructure and advanced task delegation, Google's updates to Gemini AI focus on productivity and user-friendly integration. Designed to address common workplace challenges, these enhancements are integrated into Google Workspace, making them accessible within familiar tools such as Gmail and Google Calendar. By focusing on practical solutions for everyday productivity challenges, Google positions Gemini as a direct competitor to Microsoft Copilot, appealing to professionals seeking streamlined workflows and greater efficiency. Unlike Claude AI's emphasis on specialized tasks and self-improvement, Gemini's updates cater to a broader audience, offering intuitive and accessible solutions for common workplace needs. This approach reflects Google's strategy to make AI tools more inclusive and user-friendly.
The updates to Claude AI and Google Gemini highlight the rapid pace of innovation in the AI industry. Anthropic's focus on computational power and advanced task management positions Claude AI as a reliable choice for users with complex and demanding requirements. Meanwhile, Google's emphasis on productivity and seamless integration into existing tools underscores its commitment to providing practical solutions for a wider audience.
This divergence in focus illustrates the broader strategies of AI companies as they seek to differentiate themselves in an increasingly competitive market. For users, these advancements mean access to more sophisticated, efficient and tailored AI solutions that cater to a variety of applications. As the industry continues to evolve, the competition among providers is likely to drive further innovation, benefiting users with tools that are both powerful and accessible. Disclosure: Some of our articles include affiliate links. If you buy something through one of these links, Geeky Gadgets may earn an affiliate commission. Learn about our Disclosure Policy.

[8]

Anthropic Unveils 'Dreaming' AI Agents That Learn From Experience

Anthropic has introduced a 'dreaming' feature in its Claude AI platform. The upgrade allows AI agents to learn from their experiences and improve on them, rather than relying solely on new prompts each time. The announcement came at Anthropic's developer conference in San Francisco. The move aims to strengthen the company's ability to build AI agents that can adapt and perform difficult tasks with minimal human intervention. Leading AI developers are now working hard to build autonomous systems that manage workflows, analyze data, and make independent decisions. According to Anthropic, the feature lets its AI agents pause and reflect on interactions, learn from them, and respond accordingly the next time. The company described the process as a form of reflective learning designed to improve efficiency and consistency over time.

[9]

Anthropic unveils 'dreaming' feature to help its AI agents self-improve

SAN FRANCISCO, May 6 (Reuters) - Artificial intelligence lab Anthropic on Wednesday touted a new feature for its Claude AI, which it calls "dreaming." Available as a research preview, "dreaming" comes with its software for managing agents, or AI programs that perform tasks with little human involvement. The feature's goal is self-improvement. It can review agents' work in between sessions, unearth patterns, and update files that store user preferences and other context, Anthropic said. Pegged to its San Francisco developer conference, the new feature is a part of Anthropic's efforts to win business customers, on the heels of an uptick in popularity for its AI-powered coding agent. On Tuesday, the startup unveiled 10 financially focused AI agents at an event in New York, in which it said the tech sector represented its largest source of enterprise revenue, followed by financial institutions. Moves by the Google and Amazon.com-backed startup have hammered software-as-a-service (SaaS) stocks as the market expects AI to disrupt legacy businesses. Anthropic announced wider availability for other features as well, such as one for its AI agent to break down and delegate tasks to other, specialist agents. (Reporting by Jeffrey Dastin in San Francisco; Editing by Kenneth Li and Sam Holmes)

Anthropic unveiled a dreaming feature for Claude Managed Agents that lets AI agents review past sessions, identify patterns, and self-improve without human intervention. The update also expands outcomes and multiagent orchestration capabilities, with early adopters like Harvey reporting 6x increases in task completion rates and Wisedocs cutting document review time by 50%.

Anthropic Introduces Dreaming Feature for Claude Managed Agents

Anthropic has rolled out a significant update to Claude Managed Agents, introducing a dreaming feature that enables AI agent self-improvement through scheduled reviews of past interactions. Announced at the company's second annual Code with Claude developer conference in San Francisco, the capability allows agents to autonomously learn from past experiences and identify patterns across multiple sessions without requiring human intervention [4]. The dreaming feature is currently available in research preview, with developers able to request access through the Claude Platform [1].

Building on existing memory capabilities, dreaming operates as a scheduled background process that reviews agent sessions and memory stores to extract patterns and curate memories, improving agent performance over time [3]. Unlike traditional memory systems that retain preferences within individual sessions, dreaming works at a higher level of abstraction, reviewing past sessions to surface insights no single agent could see on its own, including recurring mistakes, workflows that agents converge on, and preferences shared across teams [1].

How AI Agents Learn From Mistakes Through Dreaming

The dreaming feature functions similarly to human sleep, allowing agents to process accumulated data and collate it in ways that are easier to manage and retrieve [2]. Critically, dreaming does not modify model weights or change the underlying model itself. Instead, agents write learnings as plain-text notes and structured playbooks that future sessions can reference, making the entire process observable and auditable by humans [4].
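To make the idea concrete, here is a minimal Python sketch of a dreaming-style review pass, under loudly stated assumptions: Anthropic has not published how dreaming extracts patterns, and every name below (`Session`, `MemoryStore`, `dream`, `approve`, the `min_sessions` threshold) is hypothetical, not part of the Claude Platform API. The sketch counts errors and tool sequences across past sessions, treats anything seen in at least two sessions as a pattern no single session could reveal, and writes the result as plain-text notes. Notes are either applied automatically or held for human approval, mirroring the two modes Anthropic describes; no model weights are involved.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List


@dataclass
class Session:
    """Hypothetical record of one finished agent session."""
    session_id: str
    errors: List[str]        # error messages the agent ran into
    tools_used: List[str]    # tool calls in the order the agent made them


@dataclass
class MemoryStore:
    """Hypothetical plain-text memory; model weights are never touched."""
    notes: List[str] = field(default_factory=list)     # active memory
    pending: List[str] = field(default_factory=list)   # awaiting human approval


def dream(sessions: List[Session], memory: MemoryStore,
          min_sessions: int = 2, auto_apply: bool = False) -> List[str]:
    """Review past sessions and propose notes for patterns seen in >= min_sessions."""
    # Count each error once per session so one noisy session can't look like a pattern.
    error_counts = Counter(err for s in sessions for err in set(s.errors))
    # Identical tool sequences across sessions suggest a workflow agents converge on.
    workflow_counts = Counter(tuple(s.tools_used) for s in sessions if s.tools_used)

    proposals = [f"Recurring mistake ({n} sessions): {err}"
                 for err, n in sorted(error_counts.items()) if n >= min_sessions]
    proposals += [f"Common workflow ({n} sessions): {' -> '.join(wf)}"
                  for wf, n in sorted(workflow_counts.items()) if n >= min_sessions]

    # Drop notes already stored or awaiting review so memory stays high-signal.
    proposals = [p for p in proposals if p not in memory.notes and p not in memory.pending]
    if auto_apply:
        memory.notes.extend(proposals)     # automatic mode
    else:
        memory.pending.extend(proposals)   # review mode: a human approves first
    return proposals


def approve(memory: MemoryStore, note: str) -> None:
    """Review mode: promote one pending note into active memory."""
    memory.pending.remove(note)
    memory.notes.append(note)


sessions = [
    Session("s1", ["timeout calling search"], ["search", "summarize"]),
    Session("s2", ["timeout calling search", "bad date format"], ["search", "summarize"]),
    Session("s3", [], ["fetch"]),
]
memory = MemoryStore()
for note in dream(sessions, memory):
    print(note)
# Recurring mistake (2 sessions): timeout calling search
# Common workflow (2 sessions): search -> summarize
```

Counting each error once per session is a deliberate choice in this sketch: it keeps a single noisy session from masquerading as a cross-session pattern, which is the kind of insight the feature is meant to surface.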

Developers can choose how much control they want over the process: dreaming can update memory automatically, or users can review and approve incoming changes before they take effect [3]. Alex Albert, who leads research product management at Anthropic, explained that dreaming is analogous to how people in organizations create skills after working through tasks: the model essentially creates skills from its experience working through workflows [4].

Expanded Outcomes and Multiagent Orchestration Capabilities

Alongside dreaming, Anthropic moved two previously experimental features into broader availability. The outcomes feature for agent tasks lets developers define a success rubric describing what successful results look like, with a separate grader evaluating output against the specified criteria in its own context window. When something isn't right, the grader pinpoints what needs to change and the agent takes another pass; developers can also receive webhook notifications when tasks are complete [3].

Multiagent orchestration enables a lead agent to break a job into pieces and delegate each one to specialist subagents with their own models, prompts, and tools. These specialists work in parallel on a shared filesystem and contribute to the lead agent's overall context, and because events are persistent, the lead agent can check back in with other agents mid-workflow [3]. Netflix has already deployed multiagent orchestration for its platform team, processing logs from hundreds of builds simultaneously [4].
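The outcomes and orchestration features described above can be sketched in the same hypothetical style. This is an illustration under assumptions, not Anthropic's implementation or API: agents and graders are plain Python functions, the rubric is a dict of named checks, a callback stands in for the webhook, and a thread pool stands in for parallel subagents (the returned dict plays the role of the lead agent's context rather than a shared filesystem). All names (`grade`, `run_with_outcome`, `orchestrate`, `toy_agent`) are invented for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Optional

# Hypothetical rubric: criterion name -> check that returns True when the output passes.
Rubric = Dict[str, Callable[[str], bool]]
# Hypothetical agent: takes the task plus the grader's last feedback, returns an output.
Agent = Callable[[str, List[str]], str]


def grade(output: str, rubric: Rubric) -> List[str]:
    """Separate grader: return the names of the criteria the output fails."""
    return [name for name, check in rubric.items() if not check(output)]


def run_with_outcome(agent: Agent, task: str, rubric: Rubric,
                     on_complete: Callable[[str], None],
                     max_attempts: int = 3) -> Optional[str]:
    """Let the agent retry until the grader passes it or attempts run out."""
    feedback: List[str] = []
    for _ in range(max_attempts):
        output = agent(task, feedback)     # agent sees what failed last time
        feedback = grade(output, rubric)
        if not feedback:
            on_complete(output)            # stand-in for a webhook notification
            return output
    return None                            # no passing output after max_attempts


def orchestrate(job: List[str], specialists: Dict[str, Callable[[str], str]],
                route: Callable[[str], str]) -> Dict[str, str]:
    """Lead agent: route each piece to a specialist and run them in parallel."""
    with ThreadPoolExecutor() as pool:
        futures = {piece: pool.submit(specialists[route(piece)], piece) for piece in job}
        # Collect every specialist's result back into the lead agent's view.
        return {piece: fut.result() for piece, fut in futures.items()}


def toy_agent(task: str, feedback: List[str]) -> str:
    # Adds a summary line only after the grader asks for one.
    return task + ("\nSummary: done" if "has_summary" in feedback else "")


notifications: List[str] = []
rubric = {"has_summary": lambda out: "Summary:" in out}
run_with_outcome(toy_agent, "Build report", rubric, on_complete=notifications.append)
print(notifications)  # ['Build report\nSummary: done']

results = orchestrate(
    ["build-1.log", "guide.md"],
    {"logs": lambda p: f"parsed {p}", "docs": lambda p: f"reviewed {p}"},
    route=lambda p: "logs" if p.endswith(".log") else "docs",
)
print(results)  # {'build-1.log': 'parsed build-1.log', 'guide.md': 'reviewed guide.md'}
```

Keeping the grader as a separate function that only reports failed criteria echoes the article's point that the grader works in its own context: the agent never grades itself, it only receives the list of what to fix on the next pass.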

Early Enterprise AI Adoption Shows Significant Results

Early adopters are reporting substantial improvements in task completion rates and operational efficiency. Legal AI company Harvey saw task completion rates increase roughly 6x after implementing dreaming, while medical document review company Wisedocs cut its document review time by 50% using outcomes [4]. These results address what Anthropic identifies as the hardest problems in running AI agents at scale: keeping them accurate, helping them learn, and preventing them from becoming bottlenecks on complex, multi-step workflows [4].

CEO Dario Amodei disclosed during a fireside chat at the conference that Anthropic saw 80x annualized growth in revenue and usage in the first quarter of 2026, far exceeding the company's internal projections of 10x annual growth [4]. API volume on the Claude platform is up nearly 70x year over year, with the average developer using Claude Code now spending 20 hours per week working with the tool [4].

Anthropic's Distinctive Approach to Model Safety and Anthropomorphization

The choice to name this capability "dreaming" reflects Anthropic's distinctive approach to its AI systems. The company has a history of anthropomorphizing its models and products, including publishing a constitution for Claude in January to shape the chatbot's decision-making, with some language suggesting it is preparing for the possibility that Claude could develop consciousness [1]. Anthropic has invested significantly in understanding its model through research on model safety, including launching a feature that lets Claude end toxic conversations for its own well-being and mapping Claude's morality based on more than 300,000 anonymized conversations [1].

While much of this research centers on model safety and on understanding what drives models, to inform whether they could use advanced capabilities for harm, the empathy and care Anthropic demonstrates set the lab apart [1]. Anthropic does warn that it "may ship breaking changes" during the preview window, giving users at least one week's notice to pivot, and it advises developers to avoid using the feature for critical or sensitive workflows during the testing phase [2].

References

Summarized by

Navi

[2]

[4]
