AI music floods Spotify as frustrated listeners build blockers and demand transparency

9 Sources

[1]

AI music is flooding streaming services -- but who wants it?

This is The Stepback, a weekly newsletter breaking down one essential story from the tech world. For more on how AI is changing music and the music industry, follow Terrence O'Brien. The use of generative AI in pop music started almost as a gimmick. There was a sense of experimentalism to 2018's I AM AI by Taryn Southern and 2019's Proto by Holly Herndon, albums that were created with significant assistance from AI. Others got in on the action too, exploring the outer limits of tools like Google's Magenta and even training their own models. But things quickly changed with the launch of Suno in December of 2023 and Udio in April of 2024. Suno and Udio allow users to quickly create entire compositions with a simple text prompt. AI-generated music was no longer the realm of technical experts and fringe experimenters; it was now accessible to anyone with an internet connection. This led to an influx of machine-made music hitting streaming platforms. In September of 2025, Deezer said that 28 percent of music uploaded was fully AI-generated. By the end of the year, that had grown to over 50,000 tracks per day, accounting for 34 percent of uploads. Both users and artists have expressed frustration, demanding streaming platforms do something to combat the growing problem that is watering down playlists and siphoning millions in royalties away from legitimate artists. Udio did not reply to a request for comment. Things have only gotten worse at Deezer, where daily uploads of AI-generated content have grown to 75,000, and are threatening to overtake actual human-made music. And Spotify removed over 75 million spam tracks in just 12 months. Deezer was the first major streaming platform to implement a system that detects and labels AI-generated content. The service also prevents its algorithm from recommending it and has demonetized 85 percent of the streams. 
In a recent press release, Deezer CEO Alexis Lanternier said that, "AI-generated music is now far from a marginal phenomenon and as daily deliveries keep increasing, we hope the whole music ecosystem will join us in taking action to help safeguard artist's rights and promote transparency for fans." Qobuz was next to implement a detection system. It also published an AI charter, promising that it would never use AI for its editorial or curation content. While the company stopped short of banning AI-generated content, it did lean into the discontent, saying, "The heart of Qobuz is and will remain human." Apple soon followed. Though its labeling system has an obvious flaw -- it relies on self-reporting. Apple Music "requires" labels and creators to voluntarily add Transparency Tags to their metadata. When asked how it was enforcing requirements, or what penalties, if any, there were for failing to label AI-generated content, Apple declined to comment and pointed me to an industry newsletter from early March that says it "defers to content providers to determine what qualifies as AI content." Spotify also opted for a voluntary system. It recently launched AI credits, which identify tracks made using generative AI. It's working with the standards group DDEX to create an industry standard for labeling AI content. It goes beyond blanket labeling, allowing artists to specify whether AI was used to create the lyrics, vocals, or backing music. Initial glimpses of those efforts began rolling out in mid-April, with DistroKid as its first partner. While DDEX counts most of the industry's heavyweights as members -- including Amazon, Google, Meta, Apple, Songtradr (home of Bandcamp), Pandora, BMI, UMG, Sony Music Entertainment, and Warner Music Group -- not everyone is necessarily on board with Spotify's standard yet. Spotify has come under criticism for its handling of AI slop and so-called ghost artists. 
But recently, it has gone out of its way to talk up its transparency efforts and its increased offensive against spam and impersonation. The company also recently launched a Verified by Spotify badge that is supposed to guarantee there's a human behind an artist profile. Sam Duboff, global head of Marketing & Policy, Spotify for Artists, told The Verge that it is experimenting with third-party detection tools, but that they still make a "material amount of incorrect assessments." Google also requires that AI-generated content be labeled, whether that's on YouTube or YouTube Music. While the company won't publicly detail how its systems to combat AI slop work, it has said that it's "building on... established systems that have been very successful in combatting spam and clickbait, and reducing the spread of low quality, repetitive content." It also says that failing to disclose can carry penalties, including the removal of content or suspension from the YouTube Partner program. In survey after survey, public opinion toward AI music is pretty unfavorable. A study by Deezer and Ipsos showed that 51 percent of respondents think AI will "lead to the creation of more low-quality, generic-sounding music." A poll conducted by The Hollywood Reporter and the Frost School of Music found that 66 percent of people never knowingly listen to music generated by AI. And 52 percent said they wouldn't even want to listen to music from their favorite artist if they knew it was made with help from AI. Researchers from Singapore also found significant negative bias toward AI-generated content. The paper's authors claim that this is because emotion plays such a central role in how we engage with music. They say that "due to its lack of expressive intent, AI-generated music may be perceived as less capable of conveying authentic emotion or fostering meaningful connections with listeners." Despite this, only Bandcamp has banned generative AI music outright. 
Of course, it says that "Music and audio that is generated wholly or in substantial part by AI is not permitted," but enforcing that policy is easier said than done. Bandcamp is not proactively scanning uploads to catch AI music. Instead it's relying on manual reports from users to flag suspicious content. The flood of AI music shows no signs of abating. The number of AI tracks uploaded has grown steadily over the last year, and according to Deezer's Director of Research, Manuel Moussallam, "It is likely that deliveries will keep increasing." If there is a silver lining, it's that while the number of generative AI uploads has grown by nearly 40 percent, there doesn't seem to be a marked increase in streams. Moussallam says, "The consumption after fraud removal is not gaining much traction and is still very concentrated on a few viral tracks." AI generated music accounts for as little as 1 percent of streams on Deezer as of April, up from about 0.5 percent in early November. But in that time the percentage of fraudulent streams of AI music has increased dramatically from "up to 70 percent" to 85 percent. That suggests people are seeking out AI music less often -- perhaps the novelty has worn off. YouTube Policy communications manager Jack Malon told The Verge the company is "involved in the active development of new industry standards for AI disclosures in music credits," though stopped shy of saying that it was collaborating with Apple or Spotify specifically. Google was heavily involved in the creation of C2PA for authenticating content, but it's been criticized for inconsistent implementation, potential for abuse, and creating a false sense of security. Neither Google nor Spotify seems ready to start demonetizing or excluding AI-generated music from its recommendation engine. Duboff says that, "Over time, we believe the use of AI in music will increasingly be a spectrum, not a binary. Tracks won't be 'categorically AI' or 'not AI at all' with no in between." 
Creations like Velvet Sundown, Breaking Rust, and Solomon Ray might be anomalies at the end of the day. They've generated more attention for being AI than they have for the quality of the music. Fully generative AI music will continue to be a threat to working musicians, session artists, library music composers, and the like. But they may struggle to find footing on the charts. However, artists are more frequently embracing AI, even if it's largely behind the scenes. It's worked its way into songwriting sessions in Nashville and replaced sampling for hip-hop producers, and Diplo says creatives need to adapt. (Or "just like give up and become an Uber driver until everyone has a Waymo.") Duboff says, "We hear from top artists, songwriters, and producers all the time who are incorporating AI technology into their creative processes." Companies are hesitant to penalize AI use in part because they expect it to become a standard tool in the industry. Even when launching its Verified by Spotify program, the company left the door open to AI acts saying, "the concept of artist authenticity is complex and quickly evolving." But when Suno users are churning out an entire Spotify's worth of AI slop every two weeks, the demand for dramatic steps is likely to grow. That Deezer / Ipsos study found that 45 percent of people would like to filter out all AI-generated music from their music streaming library. It's a solution that neither Deezer nor any other streaming service has committed to. And it would face its own steep hurdles, including an industry-wide standard for labeling that is consistently implemented, and robust, reliable AI detection tools. If someone wants to listen to Xania Monet, nobody should stand in their way. If you could flip a switch and instantly hide all generative AI music on Spotify, I bet a lot of people would.

[2]

I Made a Kitschy Pop Song With AI. It's Fast, Easy, and Completely Empty

AI can generate code, essays, pictures, podcasts, and more, so why not music and music videos? You've probably already heard AI music on TikTok, Spotify, YouTube, or even the radio without realizing it. Unfortunately, it's nearly as easy to create a song and music video using AI as it is to consume it. Take it from someone who spends a lot of time evaluating AI services: AI music is a mistake.

How Do You Make Music (and Music Videos) With AI?

Simply search for an AI music generator, and you will find countless such services. I used Suno, but its features and results aren't especially unique. Making a song largely involves clicking the Create button. You can give the AI as much or as little information as you want. For example, Suno let me choose a genre, generate lyrics (though I chose to write them myself), pick a vocal gender, and even upload an audio clip for reference, all at no cost. Then, seconds later, it produces a song. If this all sounds jarringly easy, that's because it is, unfortunately. Making music with AI doesn't require prompting, and the only actual creative work you need to do is pick a genre or the type of sound you want. I opted for a country pop song, and it sounds very much like something you might hear on the radio or on a Spotify playlist. You might raise an eyebrow at certain pronunciations, which I could fix by spelling them out phonetically in my lyrics, but otherwise, it's hard not to find the technology impressive. But why stop there? You can also make music videos with AI in a variety of ways. For example, you can prompt Gemini's Veo 3.1 AI video generator to create a video and then add your music of choice as a backing track. However, dedicated AI music video generator services also exist. I tried neural frames, which gives you an incredible amount of control over the music video generation process, down to the level of frame-by-frame adjustments. 
I provided neural frames with a song I generated with Suno, and then I let the AI choose a concept and style for my video. It then presented me with characters, a story description, a slider to choose how much I want my video to focus on vibe over story, and a storyboard, complete with images and descriptions for each scene. Finally, I chose a model from a list of options, and neural frames started generating dozens of clips it stitched into scenes to make my video. The above is just the beginning of what you can do with neural frames, considering you can also generate lyric videos or even ones in which AI characters lip sync songs. While the depth of neural frames is impressive, results are a lot more variable, considering the available models aren't at the cutting edge of AI video generation. However, with enough tweaking and spending (credits cost money), good results are possible.

AI Music Is Everywhere and Sometimes Impossible to Spot

Outside of picking out mispronunciations (you can hear one in my song above when the AI tries to sing the word 'AI' at certain moments and doesn't quite get it right) or listening keenly, the reality is that you likely can't distinguish most AI music from human-created music. Although some streaming services label AI-generated music, not all do, and they continue to face a torrent of AI music uploads. As an experiment, I generated a chill, lo-fi song with Suno (featured in the neural frames-generated video above). This required next to no work, since all I did was select my genre. The result should sound familiar to you if you listen to lo-fi music, as I do sometimes while working, courtesy of Lofi Girl. Lofi Girl notes that humans create all of the music and visuals in its videos. Many channels, however, don't have those scruples. When I search for lo-fi beats on YouTube, Lofi Girl is the first result, but a similar channel, called The Japanese Town, is the second. 
The latter channel doesn't mention AI anywhere, simply noting that The Japanese Town team creates all the artwork and music in its videos. However, it's all almost certainly the work of AI. The artwork in its videos includes distorted or nonsensical elements, and its streams have almost identical runtimes. Meanwhile, its thumbnails are nearly the same when it comes to style and composition, yet they remain distinct, a hallmark of AI image generation. Unless The Japanese Town is one of the most prolific collectives ever, creating hundreds of hours of unique music without so much as a footprint on the wider web, it's all AI-generated. I could easily wind up listening to AI-generated music without noticing it, which is why I steer clear of lo-fi music playlists from sources I don't trust. But if I can't always tell the difference, what does it matter where the music comes from?

Listening to AI Slop Is Wrong On Many Counts

It's one thing if you're a seasoned music producer who wants to experiment with sampling some AI-generated vocals in a new song, even if that comes with its own set of ethical considerations. It's another thing if you want to make a meme song to send to your friends. But knowingly spending your time listening to music that's wholly AI-generated is just gross. That kind of AI music is thoughtless slop that requires zero creative expression and next to no effort. It's a shallow imitation of one of the few things that makes life actually worth living (art), and it exists to fill the pockets of faceless ghouls who prompt an LLM instead of actual artists. You must also consider the potential legal ramifications of profiting from AI-generated music. 
PCMag's Jamie Lendino, executive editor of reviews and longtime audio producer, points out that "LLMs have scraped enough music and violated enough copyrights to present a reasonable facsimile of music in different genres" but notes that you "can never be sure it didn't copy a melody, which means you really shouldn't produce anything and present it as your own, lest you get sued." Beyond the legal issues, PCMag's Angela Moscaritolo, managing editor of consumer electronics, finds it concerning that people are using AI-generated music to spread misinformation and conspiracy theories. "Following the Artemis II mission, a friend sent me an Instagram Reel suggesting NASA faked the Apollo program Moon landings, featuring a song called 'Radiation_Fire' by an artist dubbed the Deep State Ramblers," she says. "Based on the singer's voice, I initially thought it was a Dolly Parton song, but a quick Google search proved it was AI." That Instagram post had over 53,000 likes as of publication. Even if none of the above gives you pause, AI music is usually just bad. Sure, not much might separate an AI-generated chill, lo-fi beat from a human-created one in terms of sheer musical quality, but listen to the country-pop song I made with AI and tell me it doesn't suck. The reason why you might mistake that song for something authentic is that it's generic by design. The underlying LLM trains on countless country-pop songs, which is how it creates a song that sounds exactly like others in the genre.

Don't Go Too Far With AI Music Generation

If you're in a garage band just getting off the ground and you want to upload your music to YouTube with more than a still image in the background, an app like neural frames is not only technically impressive but could also be useful. (Of course, aspiring videographers might rightly disagree.) 
AI music generators are just as impressive from a technical perspective, but I can't in good conscience recommend using them for anything beyond a silly experiment.

[3]

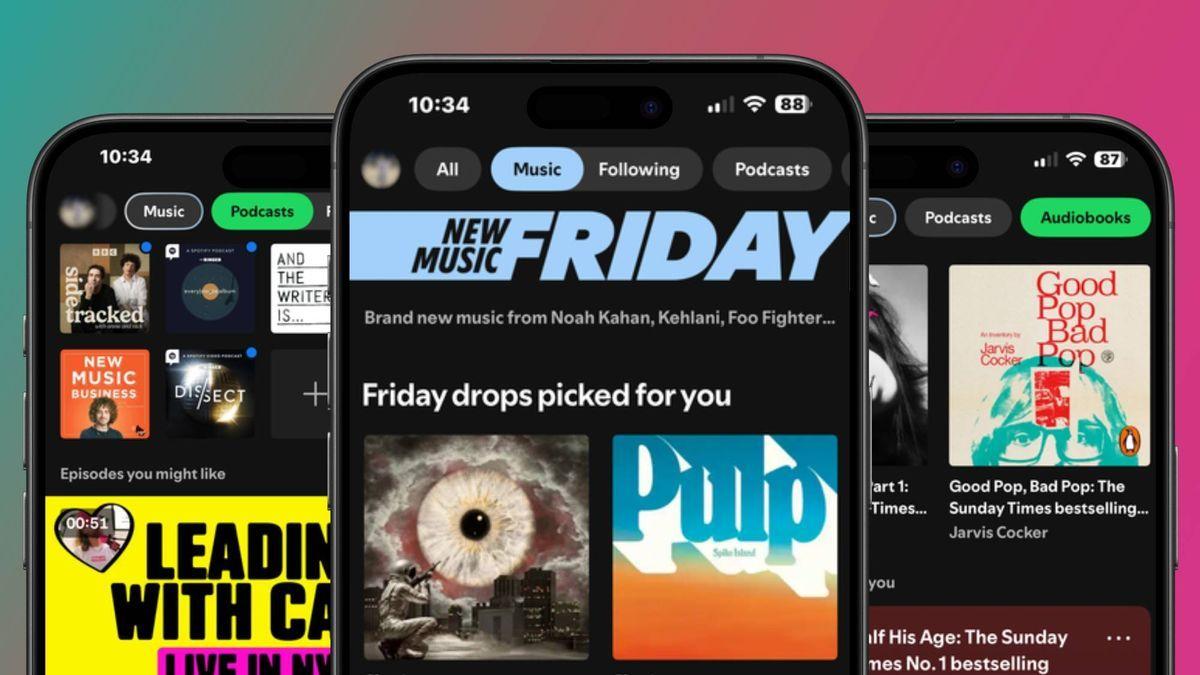

Why Spotify has no button to filter out AI music

In mid-2025, frustration boiled over for Cedrik Sixtus. Finding his Spotify playlists increasingly sprinkled with tracks he suspected were AI generated, the Leipzig-based software developer built a tool to automatically label and block them from his listening. He uploaded his Spotify AI Blocker to a couple of code-sharing websites, where hundreds have downloaded it. It filters out a growing list of more than 4,700 suspected AI artists, drawing on already existing community tracking efforts, and signs like unusually high release volumes and AI-style cover art, supplemented with external detection tools. "It is about choice - if you want to hear AI music or if you don't," says Sixtus who would prefer Spotify labelled and enabled filtering of AI-generated content itself. Sixtus's tool is installed initially via the web browser version of Spotify. He warns that using his software "may violate Spotify's terms of service". He isn't alone: feelings run deep on the community forum of the world's most popular music streaming service. While for Sixtus the issue is that AI music doesn't sound right, others simply don't want to listen to music made by a bot. Spotify has made some concessions to address such concerns. In April it launched a test feature which shows, in a song's credits, how an artist used AI. But it's a voluntary system based on what an artist tells their record label or distributor. "We know this isn't a complete solution on its own. Building a truly comprehensive system is a challenge that requires industry-wide alignment," Spotify said in April. Spotify's position is certainly a long way from actively identifying AI-generated music and giving users an option to filter it out. "It is a difficult - borderline existential - balancing act for Spotify," says Robert Prey, who studies streaming platforms at Oxford University's Internet Institute. 
Spotify is trying to avoid value judgments about how music is created, but risks eroding trust among listeners, artists and the wider industry if it fails to offer enough transparency, he explains. "It has to figure out what listeners want and how artists feel - all while AI is improving, being used more widely and becoming harder to detect," he adds. The arrival of AI tools for music is both seducing and unsettling the music world. Generative AI music services like Suno and Udio now produce increasingly polished, fully realised songs, complete with lyrics, vocals and instrumentation from simple text prompts in seconds. In one recent controlled test, part of a Deezer-Ipsos poll, 97% of listeners failed to correctly distinguish between AI-generated and human-made tracks. And tens of thousands of the AI tracks appear to be uploaded to streaming platforms daily, where they could dilute revenue pools for human artists - even if most currently attract few listens. Spotify, along with YouTube Music and Amazon Music, has so far avoided any clear user-facing labels or filters for AI-generated music, neither openly using detection tools nor requiring systematic self-disclosure - though that may change as industry standards develop. Widely suspected AI acts like Sienna Rose, Breaking Rust and The Velvet Sundown are essentially treated like any other artists by Spotify, even as the platform removes what it considers AI-related spam such as mass uploads and short tracks designed to game the system. "Our priority is addressing harmful uses [of AI] like spam and impersonation, rather than trying to filter music based on how it was made," a Spotify spokesperson said, adding AI in music also isn't a binary category but exists on a spectrum. Deezer - a smaller competitor to Spotify - has taken a stronger approach. 
Last year it began both tagging albums that contain AI generated tracks produced by Suno, Udio and similar, and excluding the tracks from algorithmic recommendations or human-made playlists. It uses its own in-house detection technology based on training AI models to spot statistical patterns in the sound itself, and recently began offering it for sale across the industry. "We're the only music streaming platform that has that in place," notes Jesper Wendel, its head of global communications. In March, Apple Music said it was introducing "transparency tags" and would eventually require that music labels and distributors self-disclose when new songs or related content involve AI. But, as with Spotify's song credit features, critics point out those are unlikely to be reliable as artists may rather not disclose AI use for fear of stigma - and how visible Apple's tags will be to listeners remains unclear. That AI music exists on a continuum does make labelling difficult, says Maya Ackerman, an expert in AI and computational creativity at Santa Clara University in California and co-founder and CEO of WaveAI, which has an AI tool to help musicians write song lyrics. While some tools are "prompt in, song out" - where AI labels would be straightforward - others are designed for co-creation, assisting with specific parts of the music-making process. If a musician uses those tools, at what point does that warrant a label? And, Ackerman adds, even with tools like Suno and Udio, users can put a lot of their creative selves into the outputs - feeding in their own lyrics or spending many hours iterating on the song's sound. "From a distance it looks like such an obvious 'yes, label AI music' but, once you zoom in, you realise it is a very complicated thing," she says. There is also the technical challenge of accurately detecting AI-generated tracks, with potentially serious consequences if human musicians are falsely labelled as AI. 
Even detecting fully AI-generated music can be fraught, notes Bob Sturm, who studies AI's disruption of music at the KTH Royal Institute of Technology in Sweden. AI detection systems are trained on outputs from existing AI music generation tools, but as those tools improve the software must be continually retrained, leading to what he characterises as a kind of "AI music arms race". It is a challenge, acknowledges Manuel Moussallam, Deezer's head of research, but the company's detection technology has so far maintained a low false positive rate, he says, and research to better understand hybrid cases, where AI is only partially used, is ongoing. Yet others see such concerns as a distraction. "There is a lobbying message to say 'we can't draw the line, and therefore we shouldn't do anything'," says David Hoffman, a professor at Duke University in North Carolina who studies the impact of AI-generated music on artists' livelihoods. He argues platforms should at least label fully AI-generated tracks and assess the scale of the remaining issue from there. And listeners appear to want labels: in the Deezer-Ipsos poll, around 80% of respondents said AI-generated music should be clearly labelled, though views on filtering were more divided. "Listeners deserve awareness," says singer-songwriter Tift Merritt, who works with Hoffman as a practitioner-in-residence at Duke, citing the way we provide nutritional labels on food or tell consumers if it is organic. What may really be stopping Spotify from embracing labelling and filtering is economics, many speculate. Spotify is trying to optimise for platform growth, says Prey from Oxford. Keeping recommendation systems as "unencumbered and free to operate as possible" helps with that. Detecting AI-generated content would add cost, Hoffman notes, and it may also be cheaper to serve up AI music. Past controversies fuel suspicion, critics note. 
Spotify has, at various points, been accused of commissioning and promoting lower-cost music for background-style playlists - claims it denies. "All tracks on our platform are delivered by third-party rightsholders like labels and distributors, and the payment model is the same for all of them: royalties are paid out of the revenue pool based on listening share," a Spotify spokesperson said. Meanwhile the area is evolving. The music industry's standards body, DDEX, is continuing to work on a broad industry standard for AI disclosures in music credits, though display will depend on the streaming platforms. And certain AI-generated content is required to be labelled from August 2026 under the EU AI Act; though how Spotify will implement those rules remains unclear. It feels like the "Wild West" for AI music right now says David Hesmondhalgh, professor of media, music and culture at the University of Leeds. But he also expects "some kind of order will emerge", as the early-2000s file-sharing panic ultimately led to today's streaming industry. And Spotify appears to be recognising the pressure, recently announcing features aimed at elevating human artistry, including SongDNA and "About the Song" which give premium users deeper insight into a track's origins and contributors. "We believe the right response to AI in music isn't any single policy, it's a combination of proactive controls, industry-wide standards, and a deeper investment in the human creativity behind every track," added the Spotify spokesperson.
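The blocklist approach described earlier for Sixtus's Spotify AI Blocker, skipping any track whose artist appears on a list of suspected AI acts, can be illustrated with a minimal Python sketch. This is not his code and makes no use of Spotify's API; the `Track` type, the `split_by_blocklist` function and the artist names are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    title: str
    artist: str


def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so near-identical spellings match."""
    return " ".join(name.casefold().split())


def split_by_blocklist(tracks, blocked_artists):
    """Return (kept, skipped) lists; a track is skipped if its artist is blocked."""
    blocked = {normalize(name) for name in blocked_artists}
    kept, skipped = [], []
    for track in tracks:
        target = skipped if normalize(track.artist) in blocked else kept
        target.append(track)
    return kept, skipped


# Hypothetical data: these names are placeholders, not entries from any real list.
blocklist = ["Example Synthetic Act"]
queue = [
    Track("Song A", "Some Human Band"),
    Track("Song B", "example  synthetic act"),
]
kept, skipped = split_by_blocklist(queue, blocklist)
print([t.title for t in kept])     # tracks that would still play
print([t.title for t in skipped])  # tracks that would be hidden or skipped
```

A list-based filter like this is only as good as the list behind it, which is why the accuracy of detection and the risk of wrongly flagging human musicians matter so much in the debate above.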

[4]

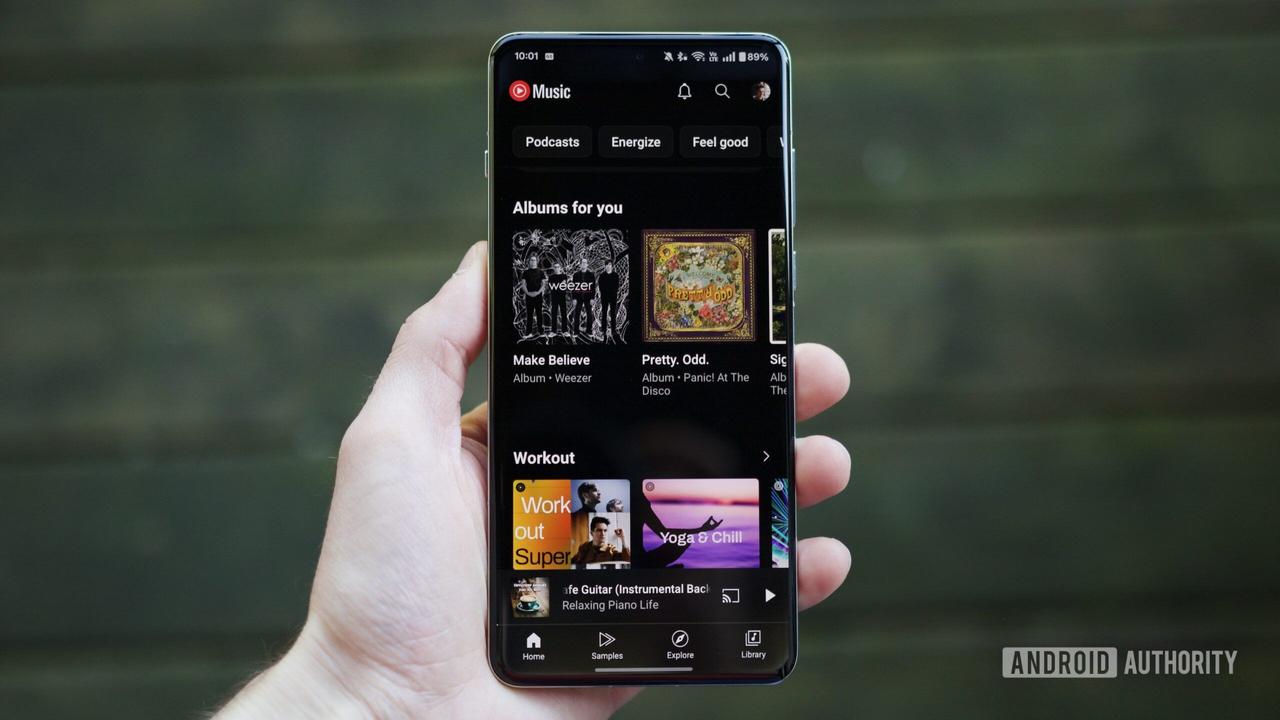

AI music is flooding streaming platforms. But listeners like it less and less

Music fans are becoming increasingly uncomfortable with AI songs, according to a recent report published by the music and entertainment insights company Luminate. The decline is especially notable with young listeners who are part of Gen Z and Gen Alpha. The study compared attitudes towards AI use in music creation from May to November of 2025. It found that overall interest dropped from -13% to -20% during that time period. "Across the board, what we found is that consumers are net negative," says Audrey Schomer, a media analyst and research editor at Luminate who authored the report, titled "Generative AI in Entertainment 2026: Examining Changes in Industry Strategies, Legal Challenges & Consumer Attitudes." "All that means is that people are more likely to feel uncomfortable than to feel comfortable with AI use." The results include partial AI usage (like for writing lyrics or creating vocals) as well as fully AI generated compositions or performances, though the latter is viewed in a more negative light. A significant portion of the people surveyed -- about a third -- feel indifferent towards AI music altogether. Schomer notes that the decline in interest is marked by people who changed their outlook from positive to negative from May to November. The Luminate report coincides with a rise in generative AI content across social media and streaming platforms. Last year, the French company Deezer implemented an AI detection tool to track and label how much "synthetic content" is uploaded to its streaming platform. Earlier this month, Deezer reported that approximately 44% of daily uploads are now AI generated tracks. 
But when it comes to listening behaviors, there's no sustained uptick to match; Deezer found that AI songs account for less than 3% of total streams on the platform, and a majority of those streams have been deemed fraudulent, meaning they're likely driven by bots rather than human listeners. (Deezer says it demonetizes these streams). In recent months, artists and advocates have raised concerns about how a spike in AI content on streaming services can affect how much real musicians get paid. That's because Spotify, Apple Music and several other companies rely on a pro rata model: if an artist's catalog accounts for a certain percentage of total streams on the platform, that's the percentage of total royalty payouts they receive. In February, several artists' rights groups from around the world published an open letter called "Say No To Suno" -- a reference to one of the largest AI song generators -- in which they claimed that AI content "dilutes the royalty pools of legitimate artists from whose music this slop is derived." Still, the hype around AI music isn't entirely fake. Several self-disclosed AI projects, including Xania Monet and Breaking Rust, have already landed on the Billboard charts. Monet is the artificially created avatar behind Mississippi poet Telisha "Nikki" Jones, who uses Suno to turn her words into R&B compositions and performances. According to Billboard, Monet signed a multimillion dollar record deal with Hallwood Media in the fall. For some singers, these developments raise serious concerns about the state of the industry. In March, R&B singer SZA told the magazine i-D that she feels "at war" with AI and the kind of content being created with it. "It's happening disproportionately with Black music," SZA said. "Why am I hearing AI covers of Olivia Dean, when Olivia Dean just came the f*** out? She can't even collect the streams. I'm also really offended by the type of Black music that's coming out of AI. Weird, stereotypical struggle music." 
Although Luminate's study did not ask listeners why their outlook on AI has shifted, Schomer suggests that musicians speaking out against AI could be moving the needle. "If people have any sort of affinities towards specific artists who have been active in some of those artist rights campaigns, then perhaps that rising awareness would lead people -- particularly young people -- to be more anti AI," she says. She also says that as AI becomes more common in everyday life, AI fatigue or brain fry (mental burnout from excessive AI use) could also be playing a role in changing attitudes, particularly for younger generations that have more anxieties about entering a rapidly changing workforce shaped by AI. "There's more and more concerns about jobs, and I think that Gen Z are probably among the biggest receivers of some of that messaging around contraction of job opportunities [and] entry level jobs," Schomer says. When it comes to music, Luminate's report found that sentiments are particularly negative towards new songs created by AI in the style or sound of an existing artist. Major AI song generators including Suno and Udio have faced copyright lawsuits for training their models on artists' music without authorization -- but several labels and publishers, including Warner Music Group and Universal Music Group, have struck licensing deals with these same AI tools. The agreements would compensate artists and songwriters for opting into having their likeness, voice or style used in AI creation. Last month, Taylor Swift became the latest artist to file several trademark applications that could be meant to protect her voice or image from being used in this way by AI tools. Looking ahead, several music generators and streaming services like Spotify have indicated that they'd like to create interactive ways for fans to remix and alter existing songs using AI. 
Given Luminate's findings, which indicate that people are least comfortable with AI usage to create new music that mimics the sound or style of existing artists, Schomer says building audience trust in those new features could pose a real challenge. "If the biggest decline among young users is on that particular kind of activity, it's the very thing that's being proposed to happen in these services," Schomer says. "I think that poses a potential uphill battle for the services to actually attract users and demonstrate that this is a good thing for the industry."

[5]

'It is about choice -- if you want to hear AI music or if you don't.' One Spotify user got so frustrated with AI slop that they created an 'AI blocker', but it 'may violate Spotify's terms of service'

* A Spotify user has developed their own AI song-filtering software
* It's been downloaded by hundreds of users, but its creator warns that it may violate Spotify's terms of service
* Over the past 12 months, Spotify told us it removed 'over 25 million AI tracks'

AI-generated music is a growing frustration for users of Spotify and other music streaming services -- and now one Spotify user has taken matters into their own hands.

To combat the flood of AI songs plaguing their algorithm, software developer Cedrik Sixtus has built an 'AI blocker' that labels and filters out AI-generated tracks from their listening sessions. Since developing the tool, Sixtus has shared it online, where it's been downloaded by hundreds of users via Spotify's web platform.

Speaking with the BBC, Sixtus summarized the aim of his filter tool quite simply, saying "it is about choice -- if you want to hear AI music or if you don't". He also told the BBC that using his software "may violate Spotify's terms of service" -- so you might want to proceed with caution if you're thinking of installing it, or a similar blocker.

So how does the tool work? The main objective of Sixtus' AI blocker is to filter out a list of over 4,700 'artists' that are suspected to be AI. This detection is based on community tracking methods, and factors in other characteristics such as album art and how often music is uploaded to the purported artist's profile.

While users like Sixtus are actively attempting to extinguish the AI flames, the big dog Spotify has yet to clearly label AI-generated songs, which Sixtus finds the most frustrating part.

Spotify has just celebrated its 20th anniversary, and to mark the milestone I spoke with Sten Garmark, the company's Head of Consumer Experiences. As part of our discussion he teased the company's future development plans, which include new measures to combat the rise of AI uploads.
Garmark told me that Spotify removed "over 25 million AI tracks" in the last year, and emphasized the company's ongoing commitment to tackling the issue. "We have rules against impersonation, and we've also helped set up new protectionist mechanisms for artists so that they can more securely control what goes up on their platform," he added. At the same time, Spotify has refrained from penalizing artists who use AI as a creative tool in small doses, and a lack of clarity over where it draws the line is one of the issues with its AI filtering system -- and it's one reason why loyal subscribers are slowly losing confidence in the service, argues Sixtus. "[Spotify] has to figure out what listeners want and how artists feel -- all while AI is improving, being used more widely and becoming harder to detect," he shared. Not only is the number of AI-generated uploads rising, the technology used to create AI songs is becoming increasingly accessible, meaning pretty much anyone can create a song, and an AI persona to go with it. In the current UK iOS App Store charts, AI music generator Suno sits in the number one spot in the music category, ahead of Spotify in third (Global Player is in second spot). If major streaming platforms don't start strengthening their AI filters now, it gives the developers of software such as Suno more time to perfect their technology, and fool more music fans -- and we could soon find we've passed the point of no return.
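The blocklist approach the article attributes to Sixtus' tool (filtering tracks whose artist appears on a list of suspected AI acts) can be sketched roughly as follows. This is a minimal illustration, not the tool's actual code: the `Track` type, the function names, and the artist names are all hypothetical, and the sketch makes no calls to Spotify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    # Hypothetical minimal track record; a real client would carry more fields.
    title: str
    artist: str


def normalize(name: str) -> str:
    """Trim whitespace and fold case so 'Some Artist ' matches 'some artist'."""
    return name.strip().casefold()


def split_by_blocklist(tracks, blocked_artists):
    """Return (kept, flagged): tracks off the blocklist, then tracks on it."""
    blocked = {normalize(name) for name in blocked_artists}
    kept, flagged = [], []
    for track in tracks:
        target = flagged if normalize(track.artist) in blocked else kept
        target.append(track)
    return kept, flagged


# Hypothetical data: these artist names are invented for illustration.
blocklist = ["Example AI Act", "Placeholder Persona"]
queue = [
    Track("Song One", "A Human Band"),
    Track("Song Two", "example ai act "),
    Track("Song Three", "Placeholder Persona"),
]

kept, flagged = split_by_blocklist(queue, blocklist)
print([t.title for t in kept])
print([t.title for t in flagged])
```

A name-based list like this is only as good as the community tracking behind it, which is why a mislabeled human artist would be filtered just as readily as a genuine AI persona.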

[6]

Spotify apparently has no solid plan to label AI-generated music

There's a quiet anxiety spreading through music streaming -- and Spotify, the platform more than half a billion people trust to soundtrack their lives, is doing remarkably little about it. AI-generated tracks are flooding streaming platforms at a pace that would've felt dystopian five years ago. Tens of thousands of them, every single day, slipping into the same playlists and recommendation queues as your favorite human artists. And most listeners wouldn't even know the difference -- research suggests the overwhelming majority can't tell them apart in a blind listen.

Listeners are already solving it themselves

So when people started noticing something felt off, they started doing something about it themselves. One developer in Germany got so fed up with suspected AI tracks bleeding into his Spotify playlists that he built his own tool to flag and block them. He uploaded it online. Hundreds of people downloaded it immediately. That alone should tell Spotify something.

But Spotify's response so far has been more of a corporate shrug than a genuine reckoning. The platform recently rolled out a feature that shows AI usage in a song's credits -- but only if the artist actually admits to it. Voluntary self-disclosure from people who might fear career damage for doing so. That's not transparency; that's just the appearance of it.

On the other hand, Deezer, far smaller and less powerful than Spotify, has already deployed its own detection technology and started tagging and filtering AI-generated content from its recommendations. Apple Music is at least moving toward mandatory disclosure. Spotify, the biggest platform in the room, is still standing at the doorway, saying it's complicated.

Yes, it's complicated but that's not an excuse

The line between AI-assisted and AI-generated is definitely blurry. A musician who uses AI to help write a verse is a different conversation from someone who typed a prompt and uploaded the result.
Experts in the field acknowledge this isn't a clean binary. Mislabeling a human artist as AI would be a serious mistake with real consequences. But here's the thing -- nobody is asking for perfection. What listeners want, what artists deserve, is a starting point. Label the fully AI-generated stuff, assess the scale of the grey area from there. The argument that it's too hard to do anything, so we shouldn't do anything, is starting to sound more like a convenient excuse.

Because there's money in this somewhere. AI-generated music is cheap to produce, potentially cheaper to serve, and doesn't require royalties the way human artists do. The incentive structures here aren't invisible. When the world's biggest music platform declines to ask too many questions about where its content comes from, it's worth wondering why.

A trust problem in the making

There's a version of this story where Spotify eventually gets it right -- where transparency tools, industry standards, and platform accountability catch up with the technology. That future might even be nearer than it seems, with regulatory pressure building and the music industry's standards bodies inching toward disclosure frameworks.

But right now, in the present, listeners are downloading third-party blockers and double-checking their playlists, as if they're reading the fine print on a suspicious contract. That's not the relationship a platform should want with its audience. Spotify has built its entire brand on helping people discover music they love. If people stop trusting what they're hearing, that brand means very little.

[7]

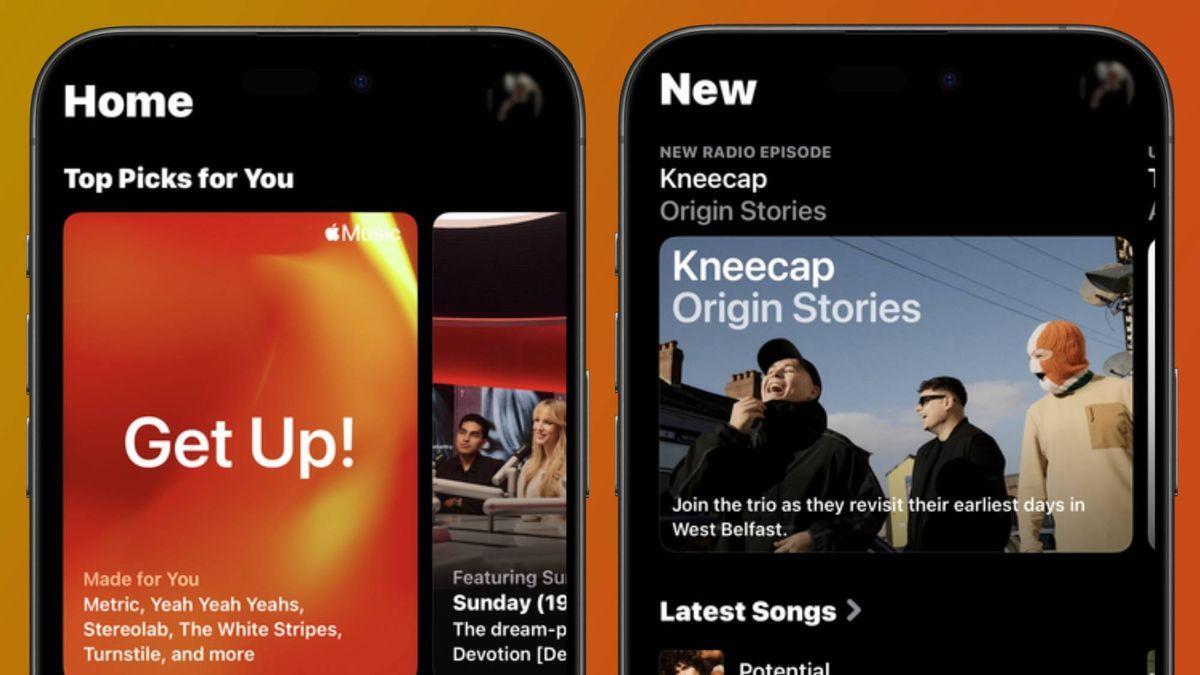

'Every label in the world is delivering AI': Apple Music executive says over a third of uploads are '100% AI' as it clamps down on AI fraud

* Oliver Schusser from Apple Music says a third of uploads are AI-generated
* Despite this, only 0.5% of all users are engaging with this content
* Apple Music has plans to combat the AI epidemic even further

Apple Music has become the latest music streaming service to be hit with the influx of AI-generated content, says its VP Oliver Schusser -- but it's reaching only a very small percentage of all users.

Speaking with Billboard ($/£), Schusser shed light on the state of AI music in Apple Music's library, sharing that "more than a third of what (Apple Music) get(s) today is actually what we would say is 100% AI". It goes to show that it's becoming easier for labels and distributors to submit music that's completely made using AI, and Apple Music isn't the only service that's facing this epidemic. Just last week, Deezer declared that nearly half of the new music submitted to the platform is AI-generated, resulting in the company's decision to stop offering hi-res versions of these songs.

So, how is Apple planning to put out the AI fire? Well, Schusser went into further detail in his interview. "We've never talked about this -- but we've developed technology in-house that would allow us to exactly see what music people are delivering us, what AI (model) it is and all that," he reveals, likely referring to Transparency Tags.

Back in March, Apple sent out a letter to industry partners revealing its plans to roll out 'Transparency Tags', a new metadata system to help flag AI-generated and AI-assisted music. This means labels and distributors can disclose whether AI has been used in a song's production when submitting to Apple Music. Though it's optional, Schusser made it clear that he "really need(s) the content providers and the labels to take responsibility".
There's no denying that fully AI-generated music is cropping up in the best music streaming services, but Schusser unveiled an interesting statistic that may come as a surprise: despite the rise, it's not having a huge impact on users' listening and engagement habits. "The reality is, the usage of the AI music on Apple Music is really tiny. I'm rounding, but it's below 0.5% of usage. We're just at the beginning here," he told Billboard -- but fraud is still rife.

This is another issue on which Apple Music is clamping down, but it's been doing this since the good old iTunes days: "This has been a 20-year journey because there was fraud, obviously, in iTunes already," Schusser said, which led to the introduction of Apple's fraud penalty. The company also doubled this penalty as of this year.

But the battle isn't over. As Schusser puts it, "We invest way more than anyone else in reducing and eliminating fraud. We implemented a fraud penalty four years ago, where if we catch someone, then we actually take the money and put it back in the pool. We need to monitor AI music because there's a correlation between AI and fraud". He also shared that Apple has seen a "60% reduction" in fraudulent uploads after implementing the penalty.

As it stands, I've been one of the lucky ones not to have run into AI-generated music flooding my recommendations in Apple Music as well as Spotify, though the latter has come under significant scrutiny for housing AI slop. Like other platforms, Spotify is also working to safeguard users, having removed 25 million AI tracks in the last 12 months, and is devising a solid AI combat strategy for the future.

[8]

AI Can Write a Song. It Can't Build a Career.

The music industry has seen disruption before. Vinyl gave way to cassettes, CDs to Napster, downloads to streaming. Each shift rewired how the music industry distributes and monetizes songs but did not change what music fundamentally is or the fact that humans have always created it. Artificial intelligence doesn't just change how music moves. It challenges who owns it and who gets paid for it.

The real threat of AI isn't that it can make songs. It's that it reveals how fragile the music industry is. For years, artists have operated inside a system where millions of streams translate into fractions of a cent, algorithms dictate visibility, and ownership is often diluted long before a song reaches an audience. The conversation around AI is not a battle between humans and machines over creativity. It's a structural shift that puts the entire artist economy at risk, and how we respond will determine if AI expands opportunities or quietly erodes them.

AUTOMATION, ACCOUNTABILITY AND CONTROL

At its best, AI is a powerful equalizer. For emerging artists without teams or budgets, it reduces the friction of getting started. What once required a label infrastructure can now be assembled independently. Tools can generate press materials, build websites, create visuals, and help develop production ideas. That matters because access, not talent, has been the primary barrier to entry into music for decades. Used responsibly, AI doesn't replace creativity. It gives artists more time to focus on what's needed to build their careers: songwriting, live performances, and audience connection. But that's only one side of the equation.

[9]

One-third of Apple Music uploads now fully AI-generated

Apple Music reports that over one-third of its uploads consist of 100% AI-generated music, according to Oliver Schusser, VP of Apple Music. The growing prevalence of AI submissions has prompted Apple to take action, despite only 0.5% of its users engaging with this content. Schusser highlighted how easy it has become for labels and distributors to submit fully AI-created tracks, noting that this issue is not unique to Apple Music. Similarly, Deezer recently stated that nearly half of new music submitted to its platform is AI-generated, leading it to stop offering high-resolution versions of these tracks. In response to the increase in AI content, Apple is developing an in-house technology likely associated with what it calls Transparency Tags. This system, announced earlier this year, will allow labels and distributors to indicate the use of AI in their music submissions, although participation remains optional. Despite the surge in AI-generated music, Schusser asserted that it has not significantly influenced user engagement on Apple Music. "The reality is, the usage of the AI music on Apple Music is really tiny. I'm rounding, but it's below 0.5% of usage," he stated. Apple Music has a long history of addressing fraud, dating back to its iTunes days, and continues to target fraudulent activity associated with AI music. As part of ongoing efforts, the company has doubled its fraud penalty this year. Schusser revealed that since implementing this penalty four years ago, Apple Music has observed a 60% reduction in fraudulent uploads. Other streaming platforms are also tackling the issue of AI music. Spotify has removed 25 million AI-generated tracks over the past year, indicating a broader industry concern regarding the authenticity of music content on streaming services.


AI-generated music is overwhelming streaming platforms like Spotify and Deezer, with uploads reaching 44% of daily content on some services. Listener frustration has grown so intense that users are building their own AI blockers, while platforms struggle to implement effective detection systems. The surge raises serious questions about artist royalties and the future of human-made music.

AI Music Overwhelms Streaming Platforms at Unprecedented Scale

AI music has evolved from experimental curiosity to existential crisis for streaming platforms. What began with pioneering albums like Taryn Southern's I AM AI in 2018 transformed dramatically with the December 2023 launch of Suno and Udio's April 2024 debut [1]. These tools democratized music creation, allowing anyone to generate complete compositions from simple text prompts.


The consequences have been staggering. Deezer reported that AI-generated music comprised 28 percent of uploads in September 2025, escalating to 50,000 tracks daily by year's end, or 34 percent of all uploads [1]. By early 2026, that figure climbed to 75,000 daily uploads, representing approximately 44 percent of daily uploads [4]. Spotify removed over 25 million AI tracks in just 12 months, according to Sten Garmark, the company's Head of Consumer Experiences [5](https://www.techradar.com/audio/spotify/it-is-about-choice-if-you-want-to-hear-ai-music-or-if-you-dont-one-spotify-user-got-so-frustrated-with-ai-slop-that-they-created-an-ai-blocker-but-it-may-violate-spotifys-terms-of-service).

Listener Frustration Drives DIY Solutions and Growing Backlash

Public sentiment toward AI music has soured dramatically. A Luminate study tracking attitudes from May to November 2025 found overall interest dropped from -13% to -20%, with listeners increasingly uncomfortable with both partial and full AI usage in music creation [4]. The decline proved especially pronounced among Gen Z and Gen Alpha listeners. Leipzig-based software developer Cedrik Sixtus exemplifies this listener frustration. Finding his Spotify playlists increasingly contaminated with suspected AI content, Sixtus built a Spotify AI Blocker that automatically labels and filters out tracks from over 4,700 suspected AI artists [3].


Hundreds have downloaded his tool from code-sharing websites, though Sixtus warns it "may violate Spotify's terms of service" [5](https://www.techradar.com/audio/spotify/it-is-about-choice-if-you-want-to-hear-ai-music-or-if-you-dont-one-spotify-user-got-so-frustrated-with-ai-slop-that-they-created-an-ai-blocker-but-it-may-violate-spotifys-terms-of-service). "It is about choice - if you want to hear AI music or if you don't," Sixtus told the BBC, expressing his preference for platforms to implement official labeling and filtering systems [3].

Royalty Issues and Content Dilution Threaten Human Artists

The AI music surge creates serious economic consequences for human artists. Streaming platforms like Spotify and Apple Music operate on a pro rata royalty model: if an artist's catalog represents a certain percentage of total streams, they receive that percentage of royalty payouts [4]. AI slop dilutes these pools, siphoning millions in royalties away from legitimate artists [1]. In February, several artists' rights groups published an open letter titled "Say No To Suno," claiming AI content "dilutes the royalty pools of legitimate artists from whose music this slop is derived" [4]. Despite the flood of uploads, actual listening remains minimal. Deezer found AI songs account for less than 3 percent of total streams, with most deemed fraudulent and driven by bots rather than human listeners [4]. The company has demonetized 85 percent of AI streams [1].
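The pro rata arithmetic described above can be made concrete with a small sketch. All figures here are hypothetical and chosen only to keep the numbers round: the pool size, the stream counts, and the catalog names are assumptions, and the 3 percent AI share is used as an illustrative upper bound on Deezer's "less than 3 percent" figure. The sketch also ignores demonetization and fraud filtering, which would return some of that share to the pool.

```python
def pro_rata_payouts(streams, royalty_pool):
    """Split royalty_pool in proportion to each catalog's share of total streams."""
    total = sum(streams.values())
    if total == 0:
        # No streams at all: nothing to distribute.
        return {name: 0.0 for name in streams}
    return {name: royalty_pool * count / total for name, count in streams.items()}


POOL = 1000.0  # hypothetical royalty pool, arbitrary currency units

# Hypothetical stream counts before any AI uploads are counted.
before = pro_rata_payouts({"artist_a": 100, "everyone_else": 870}, POOL)

# Same human streams plus 30 AI streams (3 percent of the new total of 1,000).
after = pro_rata_payouts(
    {"artist_a": 100, "everyone_else": 870, "ai_catalogs": 30}, POOL
)

print(round(before["artist_a"], 2))  # 103.09
print(round(after["artist_a"], 2))   # 100.0
```

The artist's own stream count never changes in this example; the payout falls only because the denominator grows, which is the dilution the open letter objects to.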

Platforms Implement Inconsistent AI Detection Tools and Transparency Tags

Deezer became the first major platform to implement AI detection tools, using proprietary technology that trains AI models to identify statistical patterns in sound [3]. The service labels AI-generated content, prevents algorithmic recommendations, and recently began selling its detection technology industry-wide. CEO Alexis Lanternier stated that "AI-generated music is now far from a marginal phenomenon and as daily deliveries keep increasing, we hope the whole music ecosystem will join us in taking action to help safeguard artist's rights and promote transparency for fans" [1]. Apple Music introduced transparency tags that let labels and creators voluntarily disclose AI content, though the system relies entirely on self-reporting with unclear enforcement [1].


Spotify launched AI credits in April, working with standards group DDEX to allow artists to specify whether AI created lyrics, vocals, or backing music [1]. However, Sam Duboff, Spotify's global head of Marketing & Policy for Artists, acknowledged that third-party detection tools still make a "material amount of incorrect assessments" [1].

The Challenge of Detection as AI Music Becomes Indistinguishable

The technical sophistication of AI music generators poses fundamental challenges for detection. In a controlled Deezer-Ipsos poll, 97 percent of listeners failed to correctly distinguish between AI-generated and human-made tracks [3]. Creating AI music requires minimal effort: users simply select genres, generate lyrics, and click create [2]. Services like Suno produce polished songs with vocals and instrumentation in seconds. Robert Prey, who studies streaming platforms at Oxford University's Internet Institute, describes Spotify's position as "a difficult - borderline existential - balancing act" [3]. The platform must avoid value judgments about how music is created while maintaining trust among listeners, artists, and the industry through adequate transparency. Maya Ackerman, an AI and computational creativity expert at Santa Clara University and CEO of WaveAI, notes that AI music exists on a continuum, making straightforward labeling difficult [3]. While some tools operate as "prompt in, song out" systems where labels would be straightforward, others involve varying degrees of human and AI collaboration.

Summarized by

Navi
