Google DeepMind's Decoupled DiLoCo transforms distributed AI training with resilient architecture

2 Sources

[1]

Decoupled DiLoCo: Resilient, Distributed AI Training at Scale

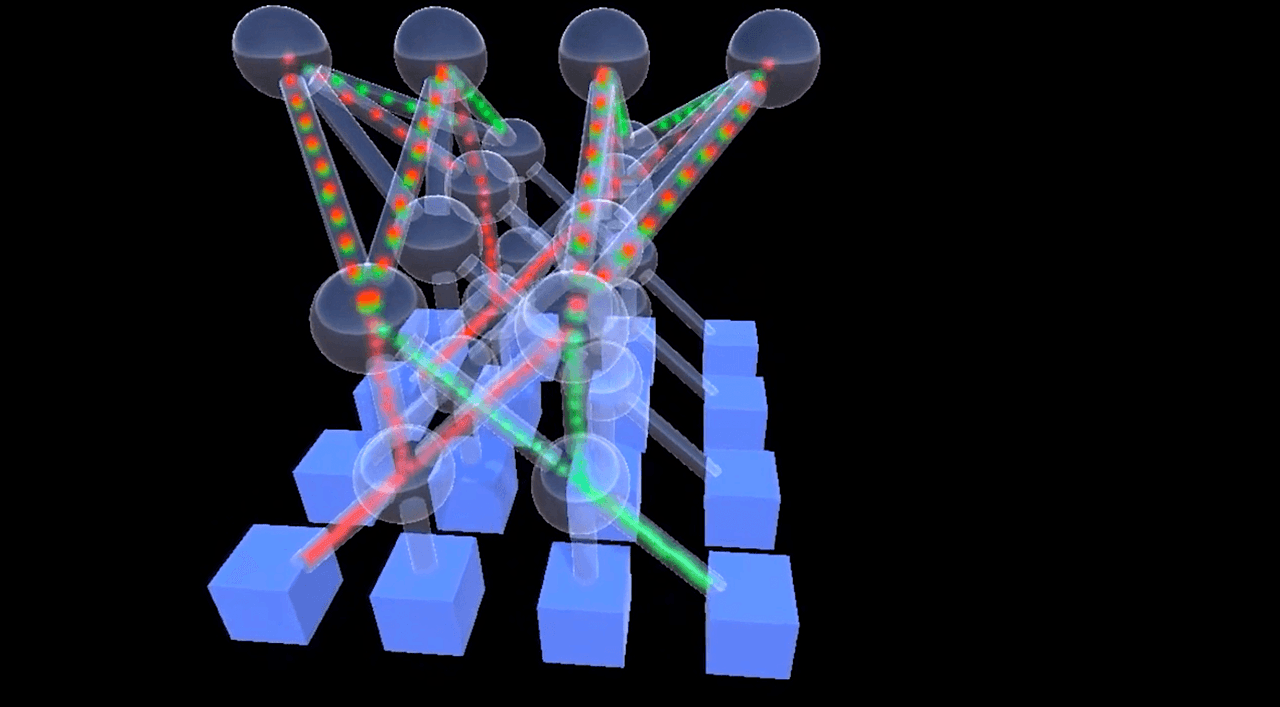

Our new distributed architecture helps train LLMs across distant data centers with lower bandwidth and greater hardware resiliency. Training a frontier AI model traditionally depends on a large, tightly coupled system in which identical chips must stay in near-perfect synchronization. This approach is highly effective for today's state-of-the-art models, but as we look toward future generations of scale, maintaining this level of synchronization across thousands of chips becomes a significant logistical challenge. Today, in a new paper, we are excited to share a new approach to this problem, called Decoupled DiLoCo (Distributed Low-Communication). By dividing large training runs across decoupled "islands" of compute, with asynchronous data flowing between them, this architecture isolates local disruptions so that other parts of the system can keep learning efficiently. The result is a more resilient and flexible way to train advanced models across globally distributed data centers. And crucially, Decoupled DiLoCo does not suffer the communication delays that made previous distributed methods like Data-Parallel impractical at global scale. As frontier models continue to grow in scale and complexity, we're exploring diverse approaches to train models across more compute, locations and varied hardware.

[2]

Google DeepMind's New Approach to Distributed Training of AI Models

Google's AI research arm DeepMind has introduced a decoupled distributed low-communication (DiLoCo) training architecture that could train advanced AI models across distributed datacentres. The architecture isolates local disruptions such as hardware failures and network issues so that other parts of the system can continue learning efficiently. This is achieved by partitioning large-scale training workloads into decoupled compute islands that exchange data asynchronously. "This enables large language model pre-training across geographically distant datacentres without requiring the tight synchronization that makes conventional approaches brittle at scale," says a blog post by DeepMind. DeepMind has shared a research paper titled "Decoupled DiLoCo for Resilient Distributed Pre-training", which explains how Decoupled DiLoCo breaks a global cluster of processors into independent asynchronous learners. The blog post explains that frontier AI models are trained on a large, tightly coupled system where identical chips must stay in near-perfect synchronisation. "This approach is highly effective for today's models, but as we look towards the future of scale, achieving such levels of sync across thousands of chips becomes a logistical challenge," it says. By dividing large training runs across decoupled "islands" of compute, with asynchronous data flowing between them, this architecture isolates local disruptions so that other parts of the system can keep learning efficiently. "The result is a more resilient and flexible way to train advanced models across globally distributed datacentres. And crucially, Decoupled DiLoCo does not suffer the communication delays that made previous distributed methods like Data-Parallel impractical at global scale," the post says.
In this process, each learner group works through its own data shard at its own speed while communicating parameter fragments to a central, lightweight synchroniser that aggregates them asynchronously. The synchroniser defines a threshold: the minimum number of independent trainers that must complete their work before the system moves training forward. This "minimum quorum" strategy is supported by an adaptive grace window (a buffer designed to maximise sample efficiency without giving up the system's speed) and token-weighted merging, a weighting scheme used to reconcile the divergent states of different learners. As a result, faster learners, or those that process more data, are weighted appropriately, maintaining model stability without sacrificing speed. DeepMind claims that the approach allows for zero global downtime and maintains a training goodput (Google's metric for measuring AI system efficiency) of nearly 90% even when hardware failures are simulated aggressively. The blog says this contrasts with traditional elastic methods, where goodput can fall up to 40% during runtime. Decoupled DiLoCo builds on two earlier advances: Pathways, which introduced a distributed AI system based on asynchronous data flow, and DiLoCo, which dramatically reduced the bandwidth required between distributed data centers, making it practical to train large language models across distant locations. "Decoupled DiLoCo brings those ideas together to train AI models more flexibly at scale. Built on top of Pathways, it enables asynchronous training across separate islands of compute (known as learner units) so that a chip failure in one area doesn't interrupt the progress of the others," says the research note. The infrastructure is also self-healing. In testing, the researchers used a method called "chaos engineering" to introduce artificial hardware failures during training runs.
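The article does not spell out the merge rule, so the following is only a minimal sketch of what token-weighted merging could look like, assuming each learner reports its parameter fragment together with the number of tokens it processed since the last merge, and that the synchroniser takes a token-weighted average. `LearnerUpdate` and `token_weighted_merge` are hypothetical names for illustration; the real system may instead weight parameter deltas that are fed to an outer optimizer.

```python
from dataclasses import dataclass


@dataclass
class LearnerUpdate:
    """Hypothetical message a learner unit sends to the synchroniser."""

    learner_id: str
    params: list[float]  # this learner's copy of a parameter fragment
    tokens: int          # tokens processed since the last merge


def token_weighted_merge(updates: list[LearnerUpdate]) -> list[float]:
    """Merge learner states by a token-weighted average of their parameters."""
    if not updates:
        raise ValueError("need at least one update to merge")
    dim = len(updates[0].params)
    if any(len(u.params) != dim for u in updates):
        raise ValueError("all updates must cover the same parameter fragment")
    total_tokens = sum(u.tokens for u in updates)
    if total_tokens <= 0:
        raise ValueError("no tokens processed; nothing to merge")

    merged = [0.0] * dim
    for u in updates:
        weight = u.tokens / total_tokens  # weights sum to 1 across learners
        for i, p in enumerate(u.params):
            merged[i] += weight * p
    return merged


# Usage: a fast learner that saw 3x more tokens dominates the merge.
fast = LearnerUpdate("learner-a", [1.0, 2.0], tokens=3000)
slow = LearnerUpdate("learner-b", [5.0, 6.0], tokens=1000)
print(token_weighted_merge([fast, slow]))  # [2.0, 3.0]
```

Under this rule each learner contributes in proportion to the data it actually processed, so a slower chip still counts without pulling the merged state as hard as a faster one.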
Decoupled DiLoCo continued the training process after the loss of entire learner units, and then seamlessly reintegrated them when they came back online. The system was tested using Google's Gemma 4 AI models, with Douillard claiming the team trained a 12-billion-parameter model across four separate U.S. regions using 2-5 Gbps of wide-area networking. "Decoupled DiLoCo is not only more resilient to failures, but is also practical for executing production-level, fully distributed pre-training. We successfully trained a 12 billion parameter model across four separate U.S. regions using 2-5 Gbps of wide-area networking (relatively achievable using existing internet connectivity between datacentre facilities, rather than requiring new custom network infrastructure between facilities)," a blog post by DeepMind says. The system achieved this training result more than 20 times faster than conventional synchronization methods. According to the post, this is because the system folds the required communication into longer periods of computation, avoiding the "blocking" bottlenecks where one part of the system must wait for another. The system also allowed the researchers to mix different generations of Tensor Processing Units (TPUs) in a single training run. This could substantially extend the useful life of existing hardware and increase the total compute available for model training in the future. During their experiments, chips from different generations running at different speeds still matched the ML performance of single-chip-type training runs, ensuring that even older hardware can meaningfully accelerate AI training, DeepMind says. What's more, because new generations of hardware don't arrive everywhere at once, being able to train across generations can alleviate recurring logistical and capacity bottlenecks.
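Some back-of-the-envelope arithmetic shows why infrequent, overlapped communication matters on a 2-5 Gbps link. The sketch below assumes the 12-billion-parameter model is exchanged in bf16 (2 bytes per parameter) with no compression and no protocol overhead; both are simplifying assumptions, and the function names and the 120-second compute figure are hypothetical. It also contrasts a blocking round (compute, then wait for the network) with an overlapped one in which communication is hidden behind computation, the effect the post describes.

```python
def transfer_seconds(num_params: float, bytes_per_param: int, link_gbps: float) -> float:
    """Seconds to send one full copy of the parameters over a `link_gbps` Gb/s link.

    Ignores compression, protocol overhead and link sharing (simplifying assumptions).
    """
    bits = num_params * bytes_per_param * 8
    return bits / (link_gbps * 1e9)


def round_seconds(compute_s: float, comm_s: float, overlapped: bool) -> float:
    """Wall-clock time for one round of compute plus one parameter exchange.

    Blocking: compute finishes, then everyone waits for the exchange.
    Overlapped: the exchange runs during compute, so only the longer one counts.
    """
    return max(compute_s, comm_s) if overlapped else compute_s + comm_s


if __name__ == "__main__":
    params = 12e9  # 12-billion-parameter model, as in the article
    for gbps in (2.0, 5.0):
        t = transfer_seconds(params, bytes_per_param=2, link_gbps=gbps)  # bf16 assumed
        print(f"{gbps:.0f} Gb/s: {t:.1f} s per full-parameter exchange")
    # Hypothetical 120 s of local compute per round against a 96 s exchange:
    print(round_seconds(120.0, 96.0, overlapped=False))  # 216.0
    print(round_seconds(120.0, 96.0, overlapped=True))   # 120.0
```

Under these assumptions a single full-parameter exchange takes about 96 s at 2 Gb/s and about 38 s at 5 Gb/s, so a scheme that synchronized on every optimizer step would likely spend most of its time waiting, while one that synchronizes rarely and overlaps the transfer with computation need not.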


Google DeepMind unveiled Decoupled DiLoCo, a new architecture that trains AI models across geographically distant data centers without tight synchronization. The system maintains nearly 90% training goodput even during hardware failures by dividing training into decoupled compute islands with asynchronous data flow, addressing a critical challenge as frontier models scale.

Google DeepMind Introduces Decoupled DiLoCo for Distributed AI Training

Google DeepMind has unveiled Decoupled DiLoCo (Distributed Low-Communication), a novel architecture designed to train AI models across geographically distant data centers with greater hardware resiliency [1]. The approach addresses a fundamental challenge in modern machine learning: as frontier models grow larger, maintaining near-perfect synchronization across thousands of chips becomes increasingly difficult. Traditional training methods rely on tightly coupled systems where identical chips must operate in lockstep, but this approach struggles at global scale.


The new system divides large-scale training workloads into decoupled compute islands that communicate through asynchronous data flow, effectively isolating local disruptions so other parts of the system continue learning efficiently [2]. This architecture enables large language model pre-training without requiring the tight synchronization that makes conventional approaches brittle at scale.

How Decoupled DiLoCo Achieves Resilient Distributed Pre-Training

The architecture breaks down global clusters into independent asynchronous learners, with each group processing its own data at its own pace [2]. These learner units communicate parameter fragments to a central lightweight synchronizer that aggregates them asynchronously. The system employs a "minimum quorum" strategy, defining a threshold for the minimum number of independent trainers required to complete tasks before moving training forward.

This approach is supported by an adaptive grace window (a buffer designed to maximize sample efficiency without sacrificing speed) and token-weighted merging, which appropriately weights faster learners capable of processing more data. DeepMind claims the system maintains training goodput of nearly 90% even when hardware failures are simulated aggressively, contrasting sharply with traditional elastic methods where goodput can fall up to 40% during runtime [2].
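The quorum and grace-window logic can be pictured as a rule for deciding when a synchronization round closes. In the minimal sketch below, which is an assumption rather than DeepMind's published algorithm, a round closes a fixed `grace_s` seconds after the `min_quorum`-th learner reports, and every learner that has reported by then is included. The `adapt_grace` helper is a hypothetical adaptation rule (widen the window when too many learners miss it, shrink it otherwise) standing in for the adaptive grace window, whose exact rule the article does not describe.

```python
from typing import Optional


def close_round(
    arrivals: dict[str, float], min_quorum: int, grace_s: float
) -> Optional[tuple[float, list[str]]]:
    """Decide when a synchronization round closes and whose updates it includes.

    arrivals maps a learner id to the time (seconds after the round opened) at
    which its update reached the synchronizer. Returns (close_time, included_ids),
    or None if fewer than min_quorum learners have reported.
    """
    ordered = sorted(arrivals.items(), key=lambda item: item[1])
    if len(ordered) < min_quorum:
        return None  # quorum not reached: keep waiting, do not advance the model
    quorum_time = ordered[min_quorum - 1][1]  # when the quorum was first satisfied
    close_time = quorum_time + grace_s        # grace window for near-stragglers
    included = [learner for learner, t in ordered if t <= close_time]
    return close_time, included


def adapt_grace(
    grace_s: float,
    late_fraction: float,
    target_late: float = 0.25,
    factor: float = 1.25,
    max_grace_s: float = 60.0,
) -> float:
    """Hypothetical adaptation rule: widen the window when too many learners miss it."""
    if late_fraction > target_late:
        return min(grace_s * factor, max_grace_s)
    return grace_s / factor


arrivals = {"learner-0": 10.0, "learner-1": 12.0, "learner-2": 15.0, "learner-3": 40.0}
close_time, included = close_round(arrivals, min_quorum=2, grace_s=5.0)
print(close_time, included)  # 17.0 ['learner-0', 'learner-1', 'learner-2']
late = 1 - len(included) / len(arrivals)  # 0.25 of learners missed the window
print(adapt_grace(5.0, late))  # 4.0
```

In this example the round closes at 17 s with the first three learners; the straggler's work would be folded into a later round instead of stalling everyone.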


Training Across Geographically Distant Data Centers With Modest Bandwidth

Decoupled DiLoCo builds on two earlier advances: Pathways, which introduced distributed AI systems based on asynchronous data flow, and the original DiLoCo, which dramatically reduced bandwidth requirements between distributed data centers [2]. Crucially, the system does not suffer the communication delays that made previous distributed methods like Data-Parallel impractical at global scale [1].

In testing with Gemma 4 AI models, researchers successfully trained a 12-billion-parameter model across four separate U.S. regions using just 2-5 Gbps of wide-area networking, achievable with existing internet connectivity rather than custom network infrastructure between facilities [2]. The system achieved this more than 20 times faster than conventional synchronization methods.
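For readers unfamiliar with the original DiLoCo, its core idea is that each worker takes many local optimizer steps between synchronizations, and the averaged parameter change is then treated as a "pseudo-gradient" for an outer optimizer with Nesterov momentum. The toy below applies that structure to a one-dimensional quadratic where each worker has a different target, standing in for non-identical data. It is a sketch, not DeepMind's code: plain SGD replaces the AdamW inner optimizer used in the DiLoCo paper, and all hyperparameter values are chosen only so the toy converges.

```python
def diloco_toy(
    targets: list[float],
    rounds: int = 50,
    inner_steps: int = 10,
    inner_lr: float = 0.1,
    outer_lr: float = 0.7,
    momentum: float = 0.9,
) -> float:
    """Toy DiLoCo-style training on a 1-D quadratic.

    Worker k minimizes 0.5 * (theta - targets[k]) ** 2 on its own "data",
    so the global optimum is the mean of the targets.
    """
    theta = 0.0     # global (outer) parameters, shared by all workers
    velocity = 0.0  # outer momentum buffer
    for _ in range(rounds):
        local_params = []
        for target in targets:
            th = theta  # each worker starts the round from the global parameters
            for _ in range(inner_steps):  # local steps, no communication
                th -= inner_lr * (th - target)  # gradient of the local loss
            local_params.append(th)
        # One communication per round: average the workers' parameters.
        outer_grad = theta - sum(local_params) / len(local_params)  # pseudo-gradient
        velocity = momentum * velocity + outer_grad
        theta -= outer_lr * (outer_grad + momentum * velocity)  # Nesterov outer step
    return theta


print(round(diloco_toy([1.0, 2.0, 3.0, 6.0]), 4))  # approaches 3.0, the mean target
```

The workers communicate once per round rather than once per inner step, so with `inner_steps=10` this toy exchanges parameters ten times less often than per-step synchronization would; that reduction in communication frequency is the source of DiLoCo's bandwidth savings.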

Self-Healing Infrastructure Withstands Hardware Failures

The infrastructure demonstrates self-healing capabilities through chaos engineering tests, in which researchers deliberately introduced artificial hardware failures during training runs [2]. Decoupled DiLoCo continued training after losing entire learner units, then seamlessly reintegrated them when they came back online. This enables zero global downtime, as a chip failure in one compute island doesn't interrupt progress in others.

As frontier LLMs continue expanding in scale and complexity, this architecture offers a practical path for training across more compute, locations, and varied hardware [1]. The ability to leverage globally distributed resources without custom infrastructure could accelerate development timelines while reducing dependency on concentrated computing clusters, which is particularly relevant as organizations face growing challenges in securing sufficient hardware capacity for next-generation models.
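The self-healing and zero-global-downtime claims can be illustrated with a small chaos-engineering-style simulation. The sketch below is a toy model, not DeepMind's test harness: each round, every online learner fails with probability `fail_prob`, stays offline for `downtime_rounds` rounds and then rejoins, and the global model advances only in rounds where at least `min_quorum` learners are online. All parameter names and values are hypothetical, and the fraction of rounds that advance is only a rough stand-in, not Google's goodput metric itself.

```python
import random


def progress_fraction(
    num_learners: int,
    min_quorum: int,
    rounds: int,
    fail_prob: float,
    downtime_rounds: int,
    seed: int = 0,
) -> float:
    """Fraction of rounds in which the global model advances under injected failures."""
    rng = random.Random(seed)
    back_online_at = [0] * num_learners  # round index at which each learner is healthy
    advanced = 0
    for r in range(rounds):
        for i in range(num_learners):
            # Chaos injection: a healthy learner may fail this round.
            if back_online_at[i] <= r and rng.random() < fail_prob:
                back_online_at[i] = r + downtime_rounds  # offline, then rejoins
        online = sum(1 for t in back_online_at if t <= r)
        if online >= min_quorum:
            advanced += 1  # enough learners to move training forward
    return advanced / rounds


for quorum in (4, 1):  # 4 = every learner required; 1 = any learner suffices
    frac = progress_fraction(4, quorum, rounds=1000, fail_prob=0.05, downtime_rounds=5)
    print(f"min_quorum={quorum}: {frac:.1%} of rounds advanced")
```

Because the injected failures depend only on the seed and not on the quorum setting, both runs replay an identical failure trace, so the quorum-of-one run can never advance in fewer rounds than the run that requires every learner. A real system would also need a rejoining learner to fetch the latest global parameters before resuming, which this toy omits.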

Summarized by Navi
