OpenAI, Nvidia, AMD and Microsoft unveil MRC protocol to accelerate large-scale AI training

3 Sources

[1]

Nvidia's MRC: When 'just Ethernet' isn't enough for gigascale AI - SiliconANGLE

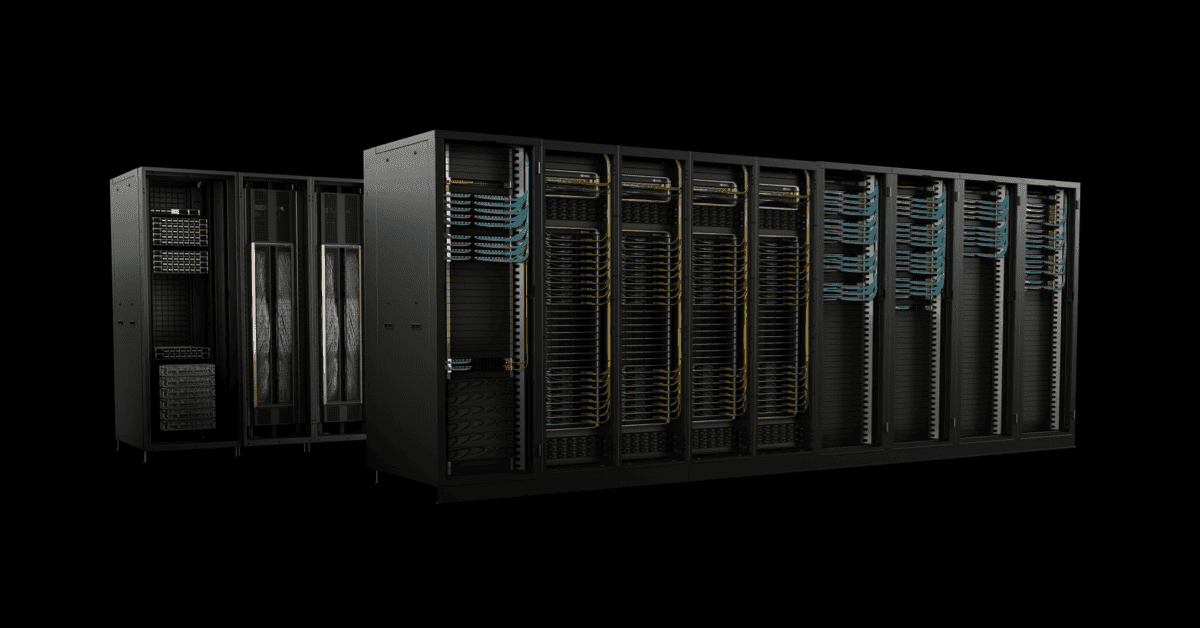

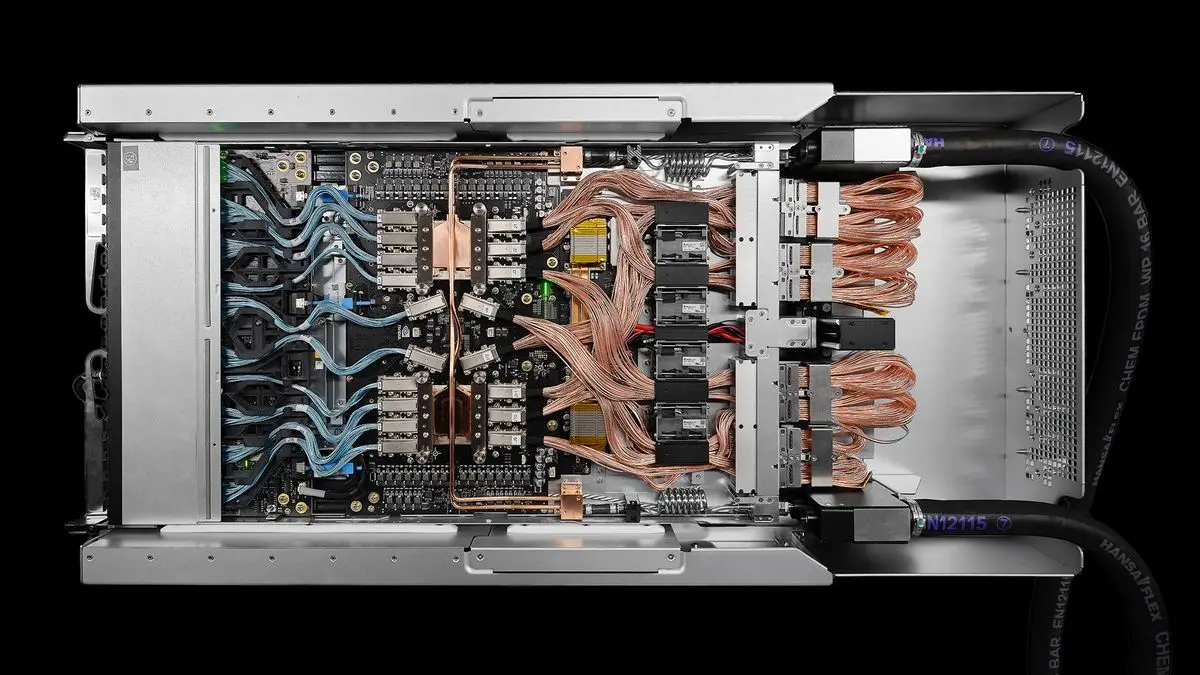

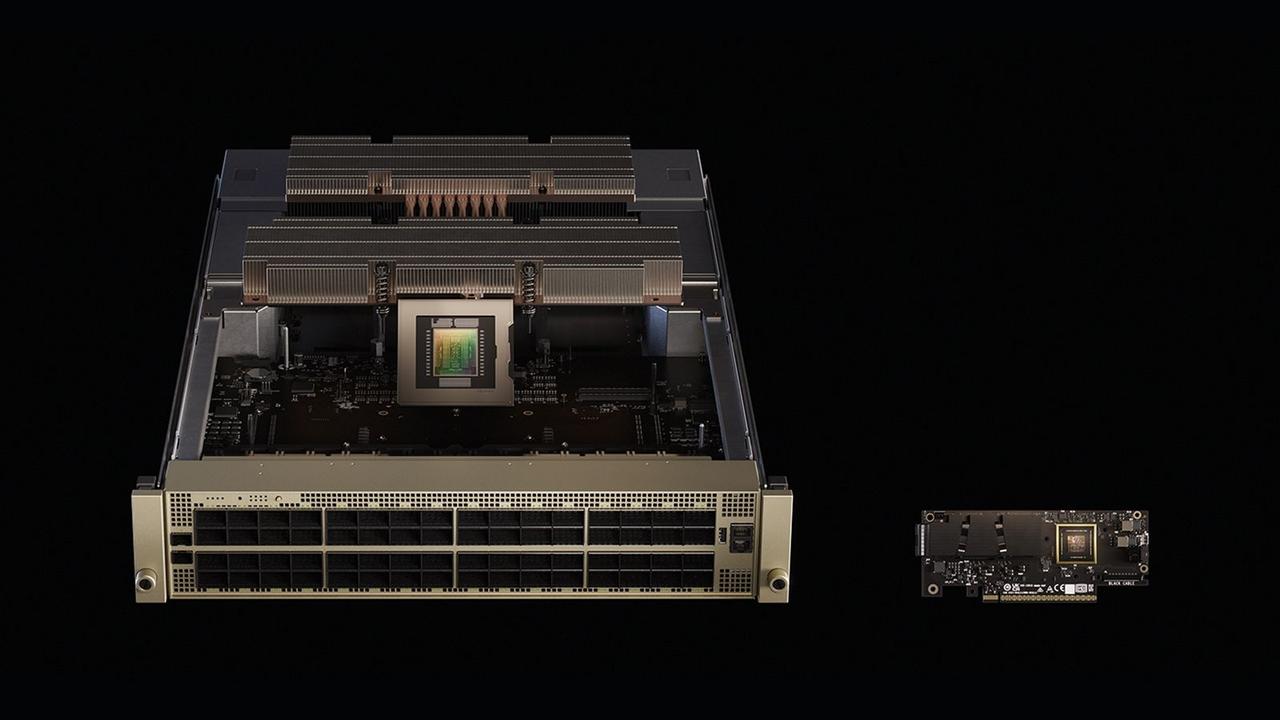

Nvidia Corp.'s latest networking innovations meet the needs of a new kind of network that supports the unique demands of artificial intelligence factories. Ethernet is no longer a generic plumbing choice but an enabler of high-performance AI. With today's unveiling of Multipath Reliable Connection, or MRC, on Spectrum-X Ethernet, Nvidia is pushing Ethernet even deeper into AI-native territory -- and doing so in partnership with OpenAI Group PBC and Microsoft Corp. On the surface, MRC is a new remote direct memory access or RDMA transport protocol, now open-sourced via the Open Compute Project. In reality, it's a production-proven way to keep tens or hundreds of thousands of graphics processing units fed and synchronized by using a single RDMA connection to stripe traffic across multiple paths and dynamically steer around congestion and failures. OpenAI has already used MRC on Spectrum-X to train recent frontier large language models powering ChatGPT and Codex, and Microsoft is deploying it in some of its largest AI factories built on GB200 systems. The important point is that MRC isn't a lab experiment but a set of algorithms that has already earned its place in some of the most demanding AI environments on the planet. There are three intertwined elements to the announcement: That openness is important to scaling MRC. Nvidia has been adamant that everything in Spectrum X is built on standard protocols, with no proprietary wire formats and no lock-in at the packet level. The "secret sauce" is in how they partition control logic among NICs, switches and host software, not in a closed protocol. MRC follows that pattern: Anyone can implement the spec, but Nvidia believes its execution on SpectrumX hardware, with deep telemetry and fabric control, will be hard to match. When a frontier model is being trained across tens or hundreds of thousands of GPUs, the network is effectively part of the compute pipeline. If a link flaps for a few milliseconds or a path gets congested, it's a stall in a multimillion-dollar training run and can cost big money. MRC addresses that problem in several ways. During a call, Nvidia Senior Vice President Gilad Shainer described MRC as extending the routing "brain" all the way to the host. The network interface card and the host-side management stack (in OpenAI's case, its own software) can actively participate in routing decisions, thereby overriding or influencing what the switches do. That's a major shift from classical Ethernet designs, where a hosted tenant has little or no control over the fabric. In more traditional cloud models, a hosted customer has visibility and control at the virtual machine or server level, but the network fabric remains opaque. OpenAI wanted to change that, acting as a "smart tenant" with the ability to govern routing policy, congestion responses and failure behavior from the server edge. MRC is the mechanism that reconciles that desire with the realities of a shared, hyperscale fabric. Another key piece is SpectrumX multiplane support. Large AI factories are increasingly built as multiplane networks. That is a separate, independent network plane that provides a full path between GPUs. Think of it as having multiple disjointed fabrics in parallel, each serving as an alternative route for the same east-west traffic. SpectrumX solves this. Hardware-accelerated load balancing across planes keeps latency predictable while scaling to hundreds of thousands of GPUs. Failures or maintenance events can be absorbed by shifting traffic between planes without disrupting training jobs. MRC sits on top of this, using multiplane awareness to exploit those parallel fabrics more intelligently. The result is a kind of AI-native Ethernet fabric where redundancy, performance and control are baked into the transport, not bolted on via box-by-box tinkering. Nvidia is careful to present MRC as "another protocol" on SpectrumX, not a replacement for everything else. Today, SpectrumX supports at least two main Ethernet transports for AI. Spectrum-X plus adaptive RDMA is a general-purpose AI Ethernet with adaptive routing in the switches and NIC-level optimization. Spectrum-X with MRC is an RDMA transport emphasizing multipath, host-driven routing and governance. There is also the Ultra Ethernet Consortium, which is a multivendor effort to define a new Ethernet RDMA-based fabric. I asked Shainer about the long-term implications of these Ethernet variants and he gave a very pragmatic answer. He does not see the world collapsing onto a single "winner" like UEC. Instead, he expects more variety: Different hyperscalers and AI providers will tune their transport protocols to their own workloads and operational models. In that context, MRC is a great example of a "custom Ethernet for AI" that's already running in production, while UEC is another evolving effort. Technically, MRC builds on RoCEv2 as defined by the InfiniBand Trade Association, then extends it with multipath, host-governed routing and the multiplane integration. Some concepts that surfaced in UEC discussions -- such as enhanced congestion control -- also show up in MRC, but wired into Nvidia's hardware and host stack. From a user point of view, the important bit is that SpectrumX gives you a choice: you can run Adaptive RDMA, you can run MRC, and there are other undisclosed variants SpectrumX can support that are specific to other large customers. One of the more interesting subtexts in my conversation with Shainer is the distinction between "hosted users" and "infrastructure owners." If you own the AI factory, you can program switches, NICs and hosts end-to-end; you can roll your own routing algorithms and congestion-control tweaks anywhere in the stack. If you're a hosted customer -- OpenAI on top of Microsoft, for example -- you typically only control the host. The network underneath is someone else's problem. MRC exists largely to bridge that gap. By embedding new logic in the SuperNIC and exposing it to host-side management, a tenant can make meaningful routing decisions that the fabric will honor, without direct switch access. That allows OpenAI, or others with similar models, to optimize for their specific training jobs -- changing routing strategies, reacting to congestion patterns, or tuning behavior per workload -- without owning the whole data center. That's an important pattern to watch as AI ecosystems get more layered and multiparty. We'll see more cases where a model provider wants near-owner-level control over routing and telemetry, even when they're running on someone else's iron. MRC is an early pattern for how that could be done safely over Ethernet. From an industry perspective, MRC and SpectrumX underscore three trends. First, AI is forcing Ethernet to specialize. Ten years ago, you could plausibly talk about "one Ethernet" dominating the data center. Today, we have a spectrum: shallow-buffer vs. deep-buffer switches, DCB vs. ECN-driven fabrics, a variety of RDMA variants, and now AI-specific transports such as MRC. Shainer's line that "there is Ethernet, and there is Ethernet, and there is another Ethernet" isn't just a joke -- it's the reality of the role the network plays in AI. Second, open specifications with proprietary implementations are becoming the norm. By pushing MRC into OCP, alongside contributions from AMD, Broadcom and Intel, Nvidia gains ecosystem credibility while still betting that its Spectrum-X implementation will perform best. It's the same playbook Nvidia has used in InfiniBand: standards on the wire, differentiation in silicon, and software. Third, UEC is now one of several options, not the ordained future. With MRC in production on GB200-based clusters at Microsoft and in OpenAI environments, Nvidia can point to a working, large-scale, open Ethernet transport that doesn't depend on the UEC kitchen to finish its meal. That doesn't kill UEC, but it does make the future feel more pluralistic -- one where hyperscalers, silicon vendors and model providers define and adopt the flavors that best match their economics and risk tolerance. For enterprise buyers and service providers, the practical takeaway is this: When evaluating "AI networking," don't stop at port speeds and buffer sizes. Ask which transport protocols the fabric supports, how they're implemented in NICs and switches, what telemetry and host-side control you get, and how quickly the system can respond to failure and congestion. In other words, treat the network as part of the AI architecture, not just a line item. Nvidia's MRC announcement, backed by OpenAI and Microsoft, is a strong reminder that in gigascale AI, Ethernet must mature and function as an AI-native fabric. With Spectrum-X, Nvidia is betting that the winning networks won't just be fast -- they'll be intelligent, programmable and tailored to the unique demands of AI factories.

[2]

OpenAI Got The Whole AI Squad To Accelerate Large-Scale AI Training - AMD, NVIDIA, Intel, Microsoft & Broadcom All-In On MRC

It's one thing to partner with either one or two major names in the AI segment, but OpenAI got AMD, NVIDIA, Intel, Microsoft, & Broadcom to accelerate large-scale AI training. OpenAI Announces Mega-Partnership With AMD, NVIDIA, Intel, Microsoft & Broadcom To Accelerate AI The new announcement from OpenAI comes as a supercomputer network partnership that aims to accelerate large-scale AI training. For this purpose, AMD, Broadcom, Intel, Microsoft, and NVIDIA are working with the firm to develop a new protocol called MRC (Multipath Reliable Connection) with the goal of improving GPU networking performance and resilience in large training clusters. OpenAI has released MRC today through the OCP (Open Compute Project) to facilitate the broader use of the protocol across AI firms. The problem that pushed the need for MRC is the transfer of data when training large AI models. It is stated that even a single transfer arriving late can disrupt the entire process, causing GPUs to sit idle. The main sources of this delay are linked to Network Congestion, link and device failures. The larger the size of the cluster, the more common this problem occurs. MRC is the fundamental approach for next-gen and large-scale supercomputer platforms for AI. OpenAI states that it has worked with AMD, Broadcom, Intel, Microsoft, and NVIDIA over the past two years to develop the protocol, which is built into the latest 800 Gb/s network interfaces, allowing AI firms to spread a single transfer across hundreds of uninterrupted paths, route around failures in microseconds, and also run simpler network control planes. Instead of treating each network interface as one 800Gb/s link, we split it into multiple smaller links. For example, one interface can connect to eight different switches. You can then build eight separate parallel networks, or planes, each operating at 100Gb/s, rather than a single 800Gb/s network. That change has a large effect on the shape of the cluster. A switch that can connect 64 ports at 800Gb/s can instead connect 512 ports at 100Gb/s. This lets you build a network fully connecting about 131,000 GPUs with only two tiers of switches. A conventional 800Gb/s network would require three or four tiers. OpenAI The MRC standard will extend the existing RDMA over RoCE (Converged Ethernet). This enables hardware-accelerated remote direct memory access for GPUs and CPUs. OpenAI has already deployed MRC across its supercomputers housing the NVIDIA GB200 "Blackwell" GPUs that are used to train Frontier models. These include Oracle Cloud Infrastructure (OCI) in Abilene, Texas, & Microsoft's Fairwater supercomputers. Currently, MRC has been used to train multiple OpenAI models across NVIDIA and Broadcom hardware. The RCP protocol will be fundamental to OpenAI's Stargate supercomputer, built by Oracle Cloud Infrastructure at Abilene, Texas. The supercomputer aims to deploy 10GW of AI compute by 2029 and has already deployed over 3GW in the past 3 months. With RCP available and open to the entire AI industry, it paves the way for cross-industry collaboration in solving the hardest problems within AI and advances the segment further. Follow Wccftech on Google to get more of our news coverage in your feeds.

[3]

From Innovation to Deployment Ready: AMD Advances AI Networking at Scale with MRC

What does it take to power the world's most demanding AI models, like those behind ChatGPT? At the most fundamental level, the world's most demanding AI models require massive GPU compute working in lockstep. As AI systems scale, efficiently bringing that compute together depends increasingly on the network that connects it. Hundreds of thousands of GPUs must continuously stay synchronized, exchange data, and recover quickly from inevitable disruptions. At this scale, the network directly determines how much compute can be utilized. Today, AMD, in collaboration with OpenAI, Microsoft, and other industry leaders, announced that it is contributing Multipath Reliable Connection (MRC) to the Open Compute Project (OCP), making this new network protocol available to the broader ecosystem. As a long-standing contributor to open ecosystems helping advance Ethernet for the era of AI, AMD is helping transform AI networking into an open, programmable, production-ready foundation for customers building AI infrastructure. For AMD, and the industry at large, MRC represents more than a new networking protocol for frontier-scale supercomputers. It is an important step toward a more open, programmable, and resilient foundation for AI infrastructure. As customers build larger AI clusters across cloud, enterprise, research, and sovereign AI environments, the industry needs networks that are not only fast in ideal conditions, but consistent, adaptive, and operationally practical in real world deployments. MRC: Built for AI networking at Scale MRC is designed specifically for large-scale AI training environments where traditional single-path networking models struggle. These workloads require continuous, high-speed communication, and even brief disruptions can impact overall system progress. Instead of sending traffic along a single path, MRC distributes packets across multiple paths simultaneously. This reduces congestion hotspots and limits latency variation that can slow synchronized training. When failures inevitably occur, MRC adapts quickly and allows traffic to reroute in near real-time, avoiding the delays associated with traditional network recovery. In practical terms, MRC helps turn the network into a shock absorber for AI infrastructure. Instead of forcing every event to become a disruption, MRC gives the network a way to adapt locally and quickly so workloads can continue making progress. That matters because performance at AI scale is not defined by peak bandwidth alone. It is defined by how much useful accelerator capacity remains productive under real-world conditions. AMD Contributions: From Development to Deployment AMD played a formative role in shaping how MRC works today. AMD co-led authorship of the MRC specification that defines next-generation AI networking and contributed advanced congestion control technology to improve performance under real-world conditions. More importantly, this isn't theoretical. AMD has implemented and deployed MRC, combined with AMD networking technology, at scale in test clusters with a leading cloud provider. This validation means the design reflects how networks actually perform under sustained AI workloads. "As GPUs and CPUs continue to drive compute, real bottleneck in scaling AI is the network. AMD, alongside OpenAI and Microsoft announced MRC, marking a major step forward for the industry. The programmability from AMD enables us to rapidly turn innovations like this into real-world performance at scale, where consistent, resilient throughput matters more than theoretical peak bandwidth." - Krishna Doddapaneni, CVP, Engineering, NTSG, AMD Programmability remains a key differentiator for AMD, as one of the only networking solutions that combines full hardware and software programmability with proven deployments, allowing networks to adapt as workloads evolve. Before the development of the MRC specification, AMD had a pre-standard implementation of an improved RoCEv2 transport protocol, which evolved into the MRC standard of today. This was due to the open programmability of the AMD Pensando™ Pollara 400 AI NIC, and that programmability contributed to the flexibility in obtaining early validation. As AMD being one of the first and only companies to implement MRC on a 400G NIC, we can accelerate a seamless transition to our AMD Pensando "Vulcano" 800G AI NIC, which also supports the MRC transport protocol. This combination of a defined specification, contributed technology, and implementation in testing positions AMD at the forefront of deploying MRC in real-world AI infrastructure. Redefining Performance for AI Infrastructure For AI at scale, performance is defined by how systems behave under real conditions, not peak bandwidth. Consistent throughput, effective congestion handling, and quick recovery from failures, while keeping GPUs synchronized and productive is what's optimal to power AI networking at scale. MRC can improve model efficiency and helps make the networking protocols connecting large-scale AI training across large GPU clusters highly reliable. By helping define, develop, and contribute to MRC, AMD, in collaboration with OpenAI, Broadcom, Intel, and Microsoft, is advancing AI networking from concept to practical, production-ready infrastructure.

Share

Copy Link

OpenAI has released Multipath Reliable Connection (MRC), a new networking protocol developed with Nvidia, AMD, Intel, Microsoft and Broadcom, through the Open Compute Project. The protocol addresses critical bottlenecks in AI training by distributing data across multiple paths, enabling networks to support up to 131,000 GPUs with microsecond failure recovery. MRC is already deployed in OpenAI's frontier model training and Microsoft's GB200-based AI factories.

OpenAI Releases MRC Protocol Through Open Compute Project (OCP)

OpenAI has unveiled Multipath Reliable Connection (MRC), a production-proven RDMA transport protocol designed to eliminate network bottlenecks in AI training environments. Developed over two years in collaboration with Nvidia, AMD, Intel, Microsoft, and Broadcom, MRC has been released through the Open Compute Project to facilitate broader adoption across the AI industry

2

. The protocol is already operational in some of the world's most demanding AI environments, including OpenAI's training infrastructure for ChatGPT and Codex, as well as Microsoft's Fairwater supercomputers housing Nvidia GB200 Blackwell GPUs1

2

. This isn't a lab experiment but a set of algorithms that has earned its place powering frontier large language models in production.

Source: CXOToday

Solving Critical Bottlenecks in Large-Scale AI Training

When training frontier models across tens or hundreds of thousands of GPUs, even a single data transfer arriving late can disrupt the entire process, causing expensive compute resources to sit idle during multimillion-dollar training runs

1

. The primary culprits are network congestion, link failures, and device disruptions that become increasingly common as cluster size grows2

. MRC addresses these challenges by distributing data across multiple paths simultaneously rather than relying on a single path. This approach to distributing data across multiple paths reduces congestion hotspots and limits latency variation that can slow synchronized training operations3

. When failures inevitably occur, MRC adapts quickly, allowing traffic to reroute in microseconds and avoiding the delays associated with traditional network recovery mechanisms2

.

Source: SiliconANGLE

AI Networking at Scale Through Multiplane Architecture

The protocol leverages a multiplane network design that fundamentally reshapes how gigascale AI factories are constructed. Instead of treating each network interface as one 800Gb/s link, MRC splits it into multiple smaller links. One interface can connect to eight different switches, enabling the construction of eight separate parallel networks or planes, each operating at 100Gb/s rather than a single 800Gb/s network

2

. This architectural shift has profound implications for cluster topology. A switch that can connect 64 ports at 800Gb/s can instead connect 512 ports at 100Gb/s, allowing a network to fully connect approximately 131,000 GPUs with only two tiers of switches, compared to the three or four tiers required by conventional 800Gb/s networks2

. Nvidia's Spectrum-X provides hardware-accelerated load balancing across these planes, keeping latency predictable while absorbing failures or maintenance events by shifting traffic between planes without disrupting training jobs1

.

Source: Wccftech

Host-Driven Routing and Smart Tenant Control

MRC extends the routing intelligence all the way to the host level, marking a major shift from classical Ethernet designs where hosted tenants have little control over the network fabric. According to Nvidia Senior Vice President Gilad Shainer, the network interface card and host-side management stack can actively participate in routing decisions, overriding or influencing what switches do

1

. OpenAI wanted to operate as a "smart tenant" with the ability to govern routing policy, congestion control, and failure behavior from the server edge. This capability is particularly valuable in hyperscale environments where traditional cloud models leave the network fabric opaque to customers who only have visibility at the virtual machine or server level1

. The protocol builds on RoCEv2 as defined by the InfiniBand Trade Association, then extends it with multipath capabilities and host-driven governance1

.Related Stories

AMD's Programmability Advantage in GPU Networking Performance

AMD played a formative role in developing MRC, co-leading authorship of the specification and contributing advanced congestion control technology

3

. The company has implemented and deployed MRC at scale in test clusters with a leading cloud provider, validating that the design performs under sustained AI workloads3

. Krishna Doddapaneni, CVP of Engineering at AMD, noted that "as GPUs and CPUs continue to drive compute, real bottleneck in scaling AI is the network"3

. AMD's programmability differentiator stems from its AMD Pensando Pollara 400 AI NIC, which enabled a pre-standard implementation of an improved RoCEv2 transport protocol that evolved into today's MRC standard. This programmability contributed to early validation flexibility, positioning AMD as one of the first companies to implement MRC on a 400G NIC and enabling a seamless transition to the AMD Pensando Vulcano 800G AI NIC3

.AI-Native Ethernet Fabrics and Industry Implications

Nvidia is careful to position MRC as "another protocol" on Spectrum-X rather than a replacement for existing approaches. The platform currently supports at least two main Ethernet transports for AI: Spectrum-X plus adaptive RDMA for general-purpose AI Ethernet with adaptive routing, and Spectrum-X with MRC emphasizing multipath and host-driven routing

1

. Shainer offered a pragmatic view on the relationship between MRC and the Ultra Ethernet Consortium, a multivendor effort to define new AI-native Ethernet fabrics. He expects more variety rather than a single winner, with different hyperscalers and AI providers tuning transport protocols to their own workloads and operational models1

. The protocol will be fundamental to OpenAI's Stargate supercomputers, built by Oracle Cloud Infrastructure in Abilene, Texas, which aims to deploy 10GW of AI compute by 2029 and has already deployed over 3GW in the past three months2

. With MRC now available to the entire AI industry through OCP, it paves the way for cross-industry collaboration in solving the hardest problems in AI infrastructure.References

Summarized by

Navi

Related Stories

Tech giants form optical interconnect alliance to solve AI infrastructure's data bottleneck

13 Mar 2026•Technology

Microsoft Unveils World's First AI Superfactory, Linking Data Centers Across 700 Miles

12 Nov 2025•Technology

NVIDIA's Spectrum-X Ethernet Revolutionizes AI Data Centers for Meta and Oracle

14 Oct 2025•Technology

Recent Highlights

1

Pope Leo XIV releases first AI encyclical calling for disarmament from monopolistic control

Policy and Regulation

2

Trump cancels AI executive order signing after tech CEOs skip event and industry pushback

Policy and Regulation

3

Google AI Search officially replaces traditional web search with Gemini-powered conversations

Technology