IBM charts governed enterprise AI path with new operating model at Think 2026

4 Sources

[1]

Governed enterprise AI drives IBM's strategy - SiliconANGLE

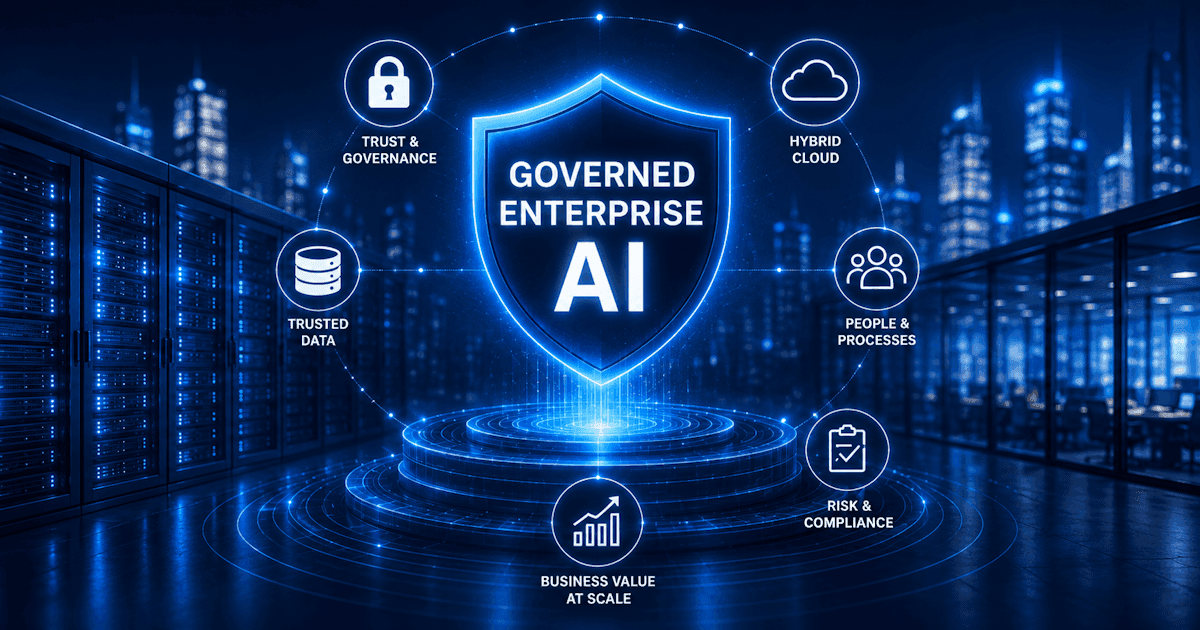

IBM's enterprise AI strategy makes trust and control the production test

Before artificial intelligence can scale, governed enterprise AI has to prove it can be trusted. As companies move from pilots to production, the real test is whether platforms can bring automation, trusted data and operational control into messy business environments without creating more risk than value. That shift gives IBM Corp. a practical opening as it works to turn watsonx, hybrid cloud and governance into a trusted execution layer for enterprise AI, according to John Furrier, executive analyst at theCUBE Research. "IBM isn't trying to win the AI hype cycle -- they're trying to win enterprise reality," Furrier said. "If Think shows real deployments with watsonx on governed data inside complex environments, IBM becomes a serious second-wave AI leader." This feature is part of SiliconANGLE Media's exploration of IBM's AI strategy, hybrid cloud foundation, governance priorities and operating-model changes needed to scale enterprise AI. Enterprise AI has entered a more demanding phase. Companies want agents, automation and generative AI, but they also need auditability, cost controls, trusted data and operating discipline across fragmented environments. IBM's opportunity is to make governed enterprise AI less of a compliance layer and more of a production model, Furrier explained. "While others chase frontier models, IBM is betting on something harder: trusted AI in production," he said. "The question coming out of Think is simple -- are they becoming the system of record for enterprise AI or just another layer in the stack?" That distinction matters because enterprise AI is no longer just a model-selection problem. 
IBM is positioning around orchestration, governance and hybrid deployment, with watsonx serving as the center of that strategy, Furrier noted. The company's challenge is making those capabilities feel operationally essential, not bolted on after deployment. "This isn't about chasing OpenAI or Anthropic on frontier models," Furrier added. "IBM's bet is that most enterprise value comes from applied AI on proprietary data. With watsonx, they're building a stack designed to plug into messy, regulated, real-world environments -- not greenfield AI labs." IBM's hybrid cloud strategy gives it a practical opening as AI workloads move closer to production across public clouds, private systems, mainframes and edge locations. Its broader ecosystem, including Red Hat, watsonx, consulting services and technology partners, adds another layer for deploying AI across mixed enterprise environments, Furrier pointed out. "IBM's differentiation hinges on one idea: AI won't live in a single cloud," he said. "Their Red Hat-driven hybrid model positions them to orchestrate AI across on-prem, private and public environments. If that story lands, it's a real strategic advantage -- especially for large, regulated enterprises." That strategy also explains why IBM's Red Hat acquisition continues to matter. The deal gave IBM a hybrid cloud foundation before enterprise AI became the defining workload. As AI shifts from isolated copilots to agentic systems that touch data, infrastructure and business logic, the value of a consistent control plane becomes easier to understand, according to Jim Kavanaugh, chief financial officer of IBM. "Our investor announcement of Red Hat acquisition was predicated on three things," Kavanaugh said in a recent interview with theCUBE. "One, that there was going to be a tighter integration of hybrid cloud and AI. Second, that the world was going to be multicloud. 
And, third, workloads were going to be optimized in multiple environments, public cloud, private cloud, on-prem, and now we're all the way to the edge." IBM's trust story also extends into post-quantum security, where enterprises face a looming shift in how long-protected data will be secured. With quantum-vulnerable public-key algorithms expected to be deprecated and removed by 2035, IBM is pushing customers to prepare now through stronger cryptographic visibility and agility, explained Mark Hughes, global managing partner of cybersecurity services at IBM, in a recent interview with theCUBE. "Getting organized around cryptography now is essential -- not just because of the quantum event, although that is absolutely a necessity," he said. "You need to be doing that now so we can get to a state of what we're describing at IBM [as] 'crypto agility,' where we move away from how we've traditionally managed crypt, which is hard-coded crypt. It's worked, and it's worked really well for us, but that's not relevant now in today's environment." The next wave of AI value will not come from adding more tools to old workflows. It will depend on changing how companies coordinate people, data, systems and automation. That puts finance, operations and technology leadership into the same conversation about AI returns, Kavanaugh noted. "The role of a CFO is you have to be focused on creating long-term sustainable competitive advantage and value creation," he said. "Underneath that, you might ask, 'Well, what does agent of transformation mean? What is the day in the life of a CFO?' I would tell you, it's a fundamentally different mindset and I put it in three buckets. Number one, a CFO has to have strategic vision. Second, you've got to be able to enable business model innovation. And the third, very important is organizational agility." That financial framing is important because AI programs are under increasing scrutiny. Leaders are being asked to show returns, not just activity. 
For IBM, the message is that governance, hybrid architecture and process redesign are not separate priorities; they are part of the same value equation, Kavanaugh added. "Great CFOs that are able to co-architect with the CEO as that partner, the AI vision, strategy and business model, I will tell you they will shape markets and they will create new sources of value," he said. "That is what's the most important role of a CFO, capital allocation, portfolio optimization, enterprise productivity to free up investment capability. That defines leadership roles in CFO." Agentic AI changes the risk profile for enterprise technology. Instead of software waiting for user commands, agents can act across systems, trigger workflows and make decisions that require oversight. That makes governance, observability and human accountability central to the next stage of enterprise AI, Kavanaugh emphasized. "One, this is going to be the most powerful inflection shift that I've seen, let me personalize it, in my career around the agentic AI era," he said. "Around that comes an important responsibility for companies, for worlds, for economies, for industries, to ensure that we responsibly and ethically scale this technology in the most proper manner for common good about how we get value and how we get the leverage of what technology is." The competitive landscape is tightening as cloud, data and software companies push their own enterprise AI platforms. IBM's clearest opening is not being the flashiest AI company, but being one of the most credible choices for regulated, hybrid and risk-sensitive enterprises that need AI to work inside existing operations, Kavanaugh observed. "Inside IBM, my point of view is we are not going to operate in a world of AI plus," he said. "We are going to operate in a world of plus AI. 
To use your terminology, the human and the digital synergistically working together is going to be the world that we're going to operate in, artificial intelligence combined with augmented intelligence at the end of the day."

[2]

Arvind Krishna AMA at IBM Think 2026: Openness, integration and key questions on the IBM AI stack - SiliconANGLE

IBM Corp. entered Think 2026 with a different posture than the market is used to. In this artificial intelligence cycle, the company is leaning hard into openness and ecosystem - and doing it without the usual proprietary overtones. According to Chairman and Chief Executive Arvind Krishna, that choice is purposeful. It's also a sign that IBM's CEO has a clear vision of where the puck is going and the approach IBM must take to win in this era. According to Krishna, foundation models are in a game of leapfrog and must become interchangeable over time. Value is shifting to the platform layer to ensure governance, integration, and the ability to move workloads and data without getting trapped by one vendor's proprietary stack. This Special Breaking Analysis is based on an analyst "ask me anything" with Krishna at IBM's annual conference in Boston this year. The session covered where IBM is constrained, what metrics matter, how IBM is allocating resources, the workforce implications of AI, and why Krishna chose not to show a "stack slide" in the keynote - a deliberate choice to stay anchored on business value, not tech specs. Our take is that IBM's open posture is directionally correct, but it raises a strategic question in that IBM's greatest historical strength has been deep vertical integration. The mainframe proves what integrated, transaction-grade engineering can achieve for customers. The opportunity now, in our view, is to combine that integration with an ecosystem that's motivated to build, sell and profit alongside IBM. Krishna's answer started with a blunt reality: IBM doesn't have enough market share, and he wants teams to do twice as much in half the time. 
He connected that to a portfolio view that is not only reliant on mergers and acquisitions but also involves predicting where clients will find value around the corner, then concentrating credibility and investment into a small number of arenas where IBM can win. He pointed to Red Hat as by far the best bet. He said the Red Hat acquisition looked "weird" to many observers in 2019, but it has proven to be the default delivery model. He also challenged the stale perception that IBM is inherently proprietary, arguing IBM is the "most open source company in the world," and that has doubled since the Red Hat deal. Key message: IBM is trying to win by narrowing focus, accelerating execution, and leaning into openness as a first principle, while still picking a few spots to go deep and lead. Krishna's answer was unequivocal: "software growth." He tied that directly to the idea that AI success is not just about the best AI model - it's where AI runs, how data is unlocked, and whether IBM's hybrid and data strategy are pulling workloads into IBM software. He also referenced the scale of IBM's generative AI book of business (noting it has surpassed $12.5 billion). Key message: IBM is effectively telling investors and customers to watch software growth as the proxy for AI traction, because that's where repeatable value flows once AI moves into production workflows. Krishna pushed back on the premise that the future is only cloud-native, arguing the market is still meaningfully split between on-premises and cloud and that IBM doesn't care where you run it - the priority is resilience and long-term cost. He acknowledged the temptation of high-margin, slower-growth franchises, but described two counterweights: 1) Resource allocation from the top (IBM's R&D spend growth); and 2) Sales specialization and incentives geared to newer portfolio areas. 
Key message: IBM is managing the portfolio with incentives and capital allocation - treating mainframe as an advantage to extend, not a reason to underinvest in the next stack. Krishna said IBM is planning to triple entry-level hiring in 2026 based on two convictions: 1) The business will grow, and 2) AI productivity can make a person with one year of tenure perform like someone with five years of experience. He also said the shape of hiring is changing, with fewer clerical/back-office roles and more engineering, sales and digital roles. He offered an anecdote from his daughter, who claims roughly 25% of work that took eight hours three years ago now takes less than an hour. He added a concerning observation about education, saying universities are moving too slowly, though he sees a meaningful minority leaning in. He shared an observation that the educational system is polarized, with one end of the spectrum forcing students to lean into AI and the other end banning the use of the technology in their studies. Krishna, like most folks in tech, favors the former approach. Key message: IBM is leaning into AI as a productivity engine and using it to justify growth hiring, while acknowledging roles are materially changing, especially where repetitive work can be streamlined. Krishna rejected the idea of AI becoming autonomous in the consciousness sense, describing it as a powerful tool under human control. His advice to other CEOs was to focus on the operational. Key message: The operating shift is cultural more than technical - and Krishna's prescription is focus, commitment and leadership accountability. Krishna said the decision was intentional - he wanted to stay on business value rather than "tech up," and he also wanted to keep AI and quantum discussions distinct. 
On the deeper integration question, he argued enterprises of scale should keep the discipline to work with two or three model options because foundation models will become a commodity in the sense that you can substitute one for another (admittedly, with some work). He stressed the discipline of insulating end users from idiosyncrasies of model analysis, and keeping portability inside the platform layer. Key message: While IBM has an end-to-end stack, the company is betting that choice expands and that sustainable value shifts to the platform layer - where portability, integration and governance live. Krishna described agent infrastructure as early, saying it will require rough consensus rather than complete consensus, and implied it's still too soon to declare a single winning approach. He suggested IBM's role is neutral plumbing across heterogeneous environments. He referenced learnings from history with initiatives like message queuing, or MQ, and service-oriented architecture, or SOA, stressing the importance of things working across multiple agent providers rather than trying to be the unique agent provider. Key message: IBM's agent strategy aligns with Krishna's philosophy - integration across heterogeneity. The near-term test is whether IBM can operationalize that neutrality into an enterprise-grade control layer and scale that across industries and clients while building an open ecosystem. Krishna argued that quantum is shifting from a science problem into an engineering problem, with progress coming faster than many expected. He cited molecular modeling (chemistry/biology) as the obvious near-term category, referenced protein simulation progress (12,000-atom scale), and emphasized hybrid approaches where quantum tackles pieces and classical systems assemble results. He also pointed to differential equations and real-world physics as the next category - aerodynamics, fluids, reservoirs - problems that are currently too hard to solve classically. 
He suggested commercialization as late 2028/2029, tied to both technical progress and the economics of building systems at scale. Key message: IBM is positioning quantum as less of a platform shift, and more of a hybrid complement by nature - with near-term traction in molecules and a credible path into physics-based simulation as systems improve. We believe this (and other Krishna AMAs) show a materially different IBM than the one many still carry in their heads. Krishna repeatedly stressed openness, and he challenged the "IBM is proprietary" narrative, tying IBM's identity to open-source scale post-Red Hat. That posture is sensible in an era where developer affinity and ecosystem economics decide who wins. We were struck when Krishna referenced a 2018 interview with John Furrier, where they discussed whether Kubernetes and containers would win, and he connected that to a broader point about the need for standards and rough consensus rather than one company keeping tight control. He used that example to frame his answer about how the agentic infrastructure layer may evolve similarly. At the same time, we believe one of IBM's greatest advantages has been deep integration - the mainframe and its continued success is the proof point -- and frankly the one and only product where IBM is an unquestioned No. 1. It's still the gold standard for secure, high-performance transactions, and that level of integration creates real customer value that DIY approaches struggle to match. The trick in this cycle is to build a tightly integrated stack, while still recruiting an ecosystem that can make money alongside IBM. Amazon Web Services Inc. has proved the power of ecosystem, but it has not delivered the same kind of integrated transaction-grade stack end-to-end. That's where IBM has an opening, in our view. If foundation models become commoditized, the sustainable value shifts to the integration layer. 
We see a key opportunity for IBM in data and process harmonization, workflow integration, governance and the ability to put AI into operation in end-to-end processes. Krishna chose not to show the stack slide in the keynote because business value is the right emphasis at Think. But we also believe IBM can press its advantage by articulating a fuller end-to-end integration story - one that looks more like an ontology-driven harmonization layer enterprises can trust and put into operation (our system of intelligence - SoI - model). IBM can strike a posture of "open by default" plus "integrated where it differentiates" -- a viable path to success in the AI era. Key message: In our view, IBM is doing the right thing by leading with business value and openness - now it has to connect that to an integrated, enterprise-grade story that turns hybrid+governance+data into a repeatable operating model customers can scale. Action item for CIOs: In the next 60 to 90 days, pick one cross-functional workflow that has real business value (cycle time, cost, compliance risk) and run a disciplined platform reality check against IBM's premise. The deliverable isn't a model demo or a retrieval-augmented generation-based chatbot. It's a governed, end-to-end agentic workflow that includes systems of record, applies consistent identity and policy, and produces an auditable, repeatable outcome. The goal should be to begin to shape an AI operating model and move beyond pilots and point tools. The gotcha to avoid is treating open as an architecture by itself. Openness helps ecosystem expansion and choice, but it doesn't replace deep integration where it matters most - that is, transactions, security and deterministic execution. Chief information officers should stress-test whether IBM has an intention of participating in earnest to own the harmonization layer across data and process semantics while still keeping the ecosystem motivated to build and profit alongside IBM. 
If IBM chooses not to go there, it will affect your relationship with the company, as we see this layer as the highest-value piece of real estate in the emerging AI software stack. If IBM is not delivering that value, you'll need to find a partner that will, and understand how and where your IBM stack fits.

[3]

IBM charts AI operating model to move enterprises beyond experimentation - SiliconANGLE

IBM Corp. will use its Think 2026 conference today to outline a broad expansion of its enterprise artificial intelligence portfolio, positioning a new "AI operating model" as the next stage in its customers' march toward translating early investments into measurable returns. The announcements span agent orchestration, real-time data integration, hybrid cloud operations and digital sovereignty, reflecting what executives described as a shift away from isolated AI deployments toward systemic integration across the enterprise. "The enterprises pulling ahead are not deploying more AI; they're redesigning how their business operates," IBM Chief Executive Arvind Krishna said during a media briefing. IBM is framing AI as an operational transformation challenge rather than a model or tooling race, emphasizing its independence from AI models. The company is promoting a four-part architecture built around agents, data, automation and hybrid infrastructure, which it argues must work together to deliver value at scale. Krishna emphasized that most enterprise data remains internal, favoring IBM's focus on hybrid cloud. "Over 70% of all data is still sitting inside the enterprise in systems that are core and germane to them," he said. AI strategies must therefore account for where data resides. A central piece of today's announcements is the evolution of watsonx Orchestrate -- a platform for building, deploying and managing agents -- into a multi-agent control plane spanning heterogeneous environments. IBM characterizes its orchestration layer as a unifying framework that integrates agents from multiple vendors, said Rob Thomas, senior vice president of software and chief commercial officer. "It's about the best agentic technology from any company in the world," he said. The strategy positions IBM as an integrator rather than a builder of foundation models. 
While the company has its own foundation models called Granite, it emphasizes partnerships with model providers such as Anthropic PBC and OpenAI LLC, as well as major cloud platforms. "We help put AI into the enterprise," Krishna said, describing IBM's role as orchestrating models, data and infrastructure while ensuring governance and security. That approach reflects a broader shift in the competitive landscape. Rather than competing directly with hyperscalers on infrastructure or foundation models, IBM is focusing on what it sees as the next layer of value: operational integration. IBM also introduced new capabilities in its "Project Bob" platform, an AI-based tool system for enterprise software development lifecycles. New features are designed to support multimodel workflows across both cloud and on-premises environments. IBM has deployed the technology internally and driven "over $5 billion of productivity improvements," Thomas said. Data integration is another pillar of the strategy. Following its recent acquisition of Confluent Inc., IBM is emphasizing real-time data pipelines as a prerequisite for effective AI coordination. The integration of streaming and batch data into watsonx.data is intended to provide agents with continuously updated context. "Your AI is only as good as your data," Thomas said. "We're leveraging real-time data to inform agents that run in the enterprise." The company is also expanding its Concert platform, which applies AI to infrastructure operations and security. Initially focused on identifying vulnerabilities, the platform now embeds security management directly into developer workflows. It identifies and prioritizes risks as code is written and can generate automatic remediations to fix or patch vulnerable code. Executives stressed that human oversight is still needed. "Nothing is completely hands off, but it is used as augmentation," Thomas said, describing how AI-generated fixes are reviewed before deployment. 
Asserting that security and sovereignty are emerging as critical themes in enterprise AI, particularly in regulated industries and government environments, IBM formally announced the general availability of Sovereign Core, a platform announced early this year that supports AI deployments within tightly controlled, geographically bounded environments. Thomas said early use cases center on organizations requiring air-gapped or fully localized infrastructure. The offering includes an extensible catalog that organizations can populate with their own applications or those from pre-vetted IBM, third-party and open-source partners. Krishna framed sovereignty as a core requirement rather than an optional feature as AI becomes embedded in critical systems. "This way people can mix and match what's appropriate," he said. "That's our strategy to go forward on AI." Outside the enterprise realm, IBM highlighted recent advances in quantum computing, including a collaboration with the Cleveland Clinic to simulate protein complexes containing more than 12,000 atoms. The milestone reflects growing confidence that quantum systems are moving beyond experimental phases. The work is part of a broader push toward what IBM calls "quantum-centric supercomputing," which combines quantum and classical systems to tackle complex problems in areas such as drug discovery. Marrying the two architectures is driving much of the current research into quantum processors. "Quantum is no longer a science lab experiment," Krishna said. "People are doing real use cases of significant scale." However, executives cautioned that large-scale commercial applications remain several years away. Krishna said meaningful enterprise impact is likely to emerge toward the end of the decade as hardware capabilities improve. IBM executives were careful not to trumpet AI's transformational potential, choosing instead to emphasize the hard work that still needs to be done to make models scalable and reliable. 
Krishna drew a parallel to previous technology cycles, arguing that initial innovation phases tend to center on infrastructure before moving up the stack. "The real value in every one of these comes with the applications and the deployment into enterprises," he said. Thomas compared the current state of AI to the early days of electrification, suggesting that current AI deployments resemble incremental productivity tools rather than transformative systems. "It's useful, but it's not really redefining how the company runs," he said. "This is about moving beyond light bulbs to things that are more fundamental to how a company operates."

[4]

IBM Think 2026 Showcases Agentic AI And Sovereign Cloud Strategy

Private preview for a new iteration of Watsonx Orchestrate, public preview for the Concert operations platform and general availability of the IBM Bob agentic developer product and Sovereign Core software platform are some of the biggest news coming out of IBM Think 2026. The Armonk, N.Y.-based technology vendor focused its product innovation news at its annual conference on ways to enable an "AI operating model" that brings together data, agents, automation and hybrid cloud to deliver AI to business cores and move beyond collections of AI projects, bringing to life a connected AI system. Think runs through Wednesday in Boston. IBM's AI opportunity extends to services partners, resell partners, build partners, ISVs and startups, IBM Chairman, President and CEO Arvind Krishna said in response to a CRN question during a virtual press conference Monday. For systems integrators and services partners, a large amount of revenue opportunity is in connecting data sources to unlock AI agents and an AI operating model. "That is the value that enterprises need -- not just a chatbot or a consumerish application," Krishna said. "Those are important, but they only get to the first 20 percent of the value. The next value comes from deploying hybrid cloud technologies." Krishna recommended solution providers look to the OpenShift hybrid cloud platform of IBM subsidiary Red Hat or even the IBM Sovereign Core revealed during Think 2026. He also suggested investing in greater automation to remove human labor complexities where possible and move value to real time. 
Rob Thomas, IBM senior vice president of software and chief commercial officer, said in answer to a CRN question during a virtual press conference that the vendor is looking for multidimensional partnerships, holding up its work with tax and consulting giant EY as an example. With IBM Watsonx Orchestrate and IBM Bob, EY powered a new tax product, brought services and advising to market and can bridge the product into other products through its technology platform. "It's an example of a services partner that's gone the next step to adopting AI, making it part of one of their solutions," Thomas said. "And then together, we built a joint go-to-work with them." Bo Gebbie, president of Hamel, Minn.-based IBM solution provider Evolving Solutions -- No. 162 on CRN's 2025 Solution Provider 500 -- told CRN in an interview that recent IBM acquisition HashiCorp, IBM Bob and automation tools have proven popular with clients lately. "You think about people-cost increasing. You think about AI workloads. The automation side feeds into all of that," Gebbie said. The IBM Fusion infrastructure for AI and containers, which brings enterprise-grade performance across hybrid environments and unifies compute, storage and networking, is also a big opportunity for Evolving Solutions, he said. "We are making a big push on skills and investment in that space." The new iteration of Orchestrate in private preview is now home to more third-party agents from companies including ServiceNow, Salesforce and Adobe, Thomas said during the press conference. "Orchestrate is no longer just about IBM technology," Thomas said. "It's about the best agentic technology from any company in the world." Also in private preview is a Bob Premium Package for Z that extends Bob's capabilities to mainframe environments, according to IBM. IBM moved its context in Watsonx.data capability into private preview, giving users an open, federated context layer for reliable AI reasoning over business data. 
Users can apply semantic meaning, enforce governance at runtime and give explanations for decisions. Watsonx.data gains generally available integrations with Confluent Tableflow and Flink for real-time event streaming. A private preview of IBM Z Database Assistant gives Db2 and IMS database administrators an AI-powered workspace for performance monitoring, task automation and configuration optimization. Another private preview puts Watsonx.data GPU-accelerated Presto in select users' hands, reducing certain workload costs and processing times on large datasets. Among the public previews revealed during Think 2026 is the IBM Concert AI-powered operations platform. Concert gives users signals across applications, networks, infrastructure and cost in one view without replacing existing tooling. Concert promises cross-domain understanding to prevent silos and surface important data and has gained importance as the world frets over new risks imposed by Claude maker Anthropic's Mythos, Thomas said. "Every customer is looking for how do I solve any problems with vulnerabilities that I'm not aware of?" he said. "Concert is really the only answer in the market today for that." Also in public preview is Concert Secure Coder for embedded security management in developer workflows. Secure Coder is available in IBM Bob and VS Code and generates automatic remediations for vulnerable code and patching middleware, packages, images and more. HashiCorp Platform (HCP) Terraform powered by Infragraph is in public preview and allows for unified infrastructure visibility through centralized, event-driven knowledge graphs. Users connect data across cloud environments, infrastructure-as-code workflows, security tooling and operations platforms, according to IBM. IBM has made its Bob agentic developer generally available. Bob allows for AI agent building with built-in security and cost. 
IBM also has made Vault 2.0 generally available, with AI-driven analysis of leaked secrets for quick triaging, dynamic short-lived credentials across the major cloud providers and automated secrets rotation. The vendor plans to make its Secure Secret Manager available in June to enhance Resource Access Control Facility mainframe environments. The manager integrates with Vault Self-Managed for Z and LinuxOne, according to IBM. IBM has made Sovereign Core generally available, giving users a software platform for AI-ready sovereign environments and control over infrastructure, operations and systems. The platform, which has been in preview for a couple of months, offers a way to deploy technology air-gapped from any international borders and on-premises, only managed by locals, Thomas said. Sovereign Core aims to help AI users create consistent, auditable AI results so that the cutting-edge technology can enter operational reality faster, according to the vendor. Sovereign Core seeks to enable operational sovereignty and sovereignty over data at rest, in use and in motion. It also focuses on technology sovereignty, avoiding vendor lock-in through open, modular architecture. And users gain AI sovereignty, controlling where models run and how to govern inference. The platform's control plane extends full authority over configurations, operations and life-cycle management. In-bound identity, encryption and data services give customers control over access, secrets, keys, logs and audit evidence. Sovereign Core has continuous compliance monitoring and evidence generation for real-time audit readiness. And it has preloaded regulatory frameworks for defining compliance postures across regions and industries quickly.
Sovereign Core allows for governing data, models, inference and agent behavior, controlling where AI processing happens and governing access and updates, all important to highly regulated fields, including government, public sector, regional cloud operators and enterprises, according to IBM. The platform already has prevetted third-party and open-source software and services from channel partners such as HCL and Atos, plus vendors including AMD, Palo Alto Networks, Cloudera, Dell, Intel, MongoDB, Mistral and Elastic. Users provision CPU, GPU and AI inference environments with standardized templates and automated configuration profiles for consistent workload deployment and management, according to IBM.

IBM is positioning itself as a trusted execution layer for enterprise AI at Think 2026, unveiling an AI operating model that prioritizes governance, orchestration and hybrid deployment over frontier models. With watsonx at the center and a $12.5 billion generative AI book of business, the company is betting that most enterprise value comes from applied AI on proprietary data in regulated, real-world environments.

IBM AI Strategy Pivots to Governed Enterprise AI in Production

IBM is making a calculated bet that the future of enterprise AI lies not in chasing frontier models, but in building trusted execution layers for complex business environments. At IBM Think 2026 in Boston, Chairman and CEO Arvind Krishna outlined an IBM AI strategy centered on what the company calls an AI operating model, a four-part architecture combining agents, data, automation and hybrid cloud capabilities [1][3]. This approach positions IBM as an orchestrator rather than a model builder, emphasizing governance, integration and the ability to deploy AI across fragmented enterprise landscapes without creating more risk than value. "The enterprises pulling ahead are not deploying more AI; they're redesigning how their business operates," Krishna said during a media briefing [3].

Source: SiliconANGLE

Watsonx Platform Evolves into Multi-Agent Control Plane

Central to IBM's enterprise AI vision is the evolution of the watsonx platform, particularly watsonx Orchestrate, which is transitioning into a multi-agent control plane spanning heterogeneous environments [3]. Now in private preview, the new iteration of watsonx Orchestrate integrates third-party agents from ServiceNow, Salesforce and Adobe, according to Rob Thomas, IBM's senior vice president of software and chief commercial officer [4]. "Orchestrate is no longer just about IBM technology," Thomas said. "It's about the best agentic technology from any company in the world" [4]. This strategy positions IBM as an integrator of agentic AI systems rather than competing directly with hyperscalers on infrastructure or foundation models, focusing instead on operational integration that delivers measurable returns.

Hybrid Cloud Capabilities and Red Hat Foundation Drive Differentiation

IBM's hybrid cloud capabilities provide a practical opening as AI workloads move closer to production across public clouds, private systems, mainframes and edge locations. The company's 2019 Red Hat acquisition, which Krishna noted looked "weird" to many observers at the time, has proven to be the default delivery model and what he called "by far the best bet" [2]. IBM Chief Financial Officer Jim Kavanaugh explained that the Red Hat acquisition was predicated on three things: tighter integration of hybrid cloud and AI, a multicloud world, and workloads optimized across multiple environments including public cloud, private cloud, on-premises and edge [1]. Krishna emphasized this reality at IBM Think 2026, noting that "over 70% of all data is still sitting inside the enterprise in systems that are core and germane to them," making hybrid strategies essential [3].

Concert Platform and Project Bob Bring AI-Driven Infrastructure Operations

IBM introduced significant updates to its AI-driven infrastructure operations capabilities through the Concert platform, now in public preview, and Project Bob, which reached general availability [3][4]. The Concert platform provides cross-domain signals across applications, networks, infrastructure and cost in one unified view without replacing existing tooling [4]. Project Bob, an AI-based tool system for enterprise software development lifecycles, has driven "over $5 billion of productivity improvements" internally at IBM, according to Thomas [3]. Concert Secure Coder, also in public preview, embeds security management directly into developer workflows, identifying and prioritizing risks as code is written while generating automatic remediations. Thomas emphasized that human oversight remains essential, noting "nothing is completely hands off, but it is used as augmentation" [3].

Sovereign Core Software Platform Addresses Digital Sovereignty Demands

IBM formally announced the general availability of the Sovereign Core software platform at IBM Think 2026, addressing growing demands for digital sovereignty in regulated industries and government environments [3][4]. The platform supports AI deployments within tightly controlled, geographically bounded environments, with early use cases centering on organizations requiring air-gapped or fully localized infrastructure. Thomas said the offering includes an extensible catalog that organizations can populate with their own applications or those from pre-vetted IBM, third-party and open-source partners [3]. Krishna framed sovereignty as a core requirement rather than an optional feature as AI becomes embedded in critical systems, noting IBM's approach allows organizations to "mix and match what's appropriate" [3].

Ecosystem Integration and Post-Quantum Security Complete the Vision

Arvind Krishna pushed back against perceptions of IBM as inherently proprietary, arguing the company is the "most open source company in the world" and that this openness has doubled since the Red Hat deal [2]. This emphasis on ecosystem integration extends to partnerships with model providers including Anthropic and OpenAI, as well as major cloud platforms [3]. IBM's trust story also extends into post-quantum security, where Mark Hughes, global managing partner of cybersecurity services at IBM, emphasized the need for "crypto agility" as quantum-vulnerable public-key algorithms face deprecation and removal by 2035 [1]. Krishna told investors and customers to watch software growth as the proxy for AI traction, noting IBM's generative AI book of business has surpassed $12.5 billion [2]. For solution providers and systems integrators, Krishna highlighted significant revenue opportunities in connecting data sources to unlock AI agents, recommending they invest in OpenShift, Red Hat or Sovereign Core to capture value as enterprises move from AI pilots to production deployments [4].

References

Summarized by

Navi

[1]

[2]

[3]
