ChatGPT introduces Trusted Contact feature to alert friends during self-harm discussions

13 Sources

[1]

OpenAI introduces new 'Trusted Contact' safeguard for cases of possible self-harm | TechCrunch

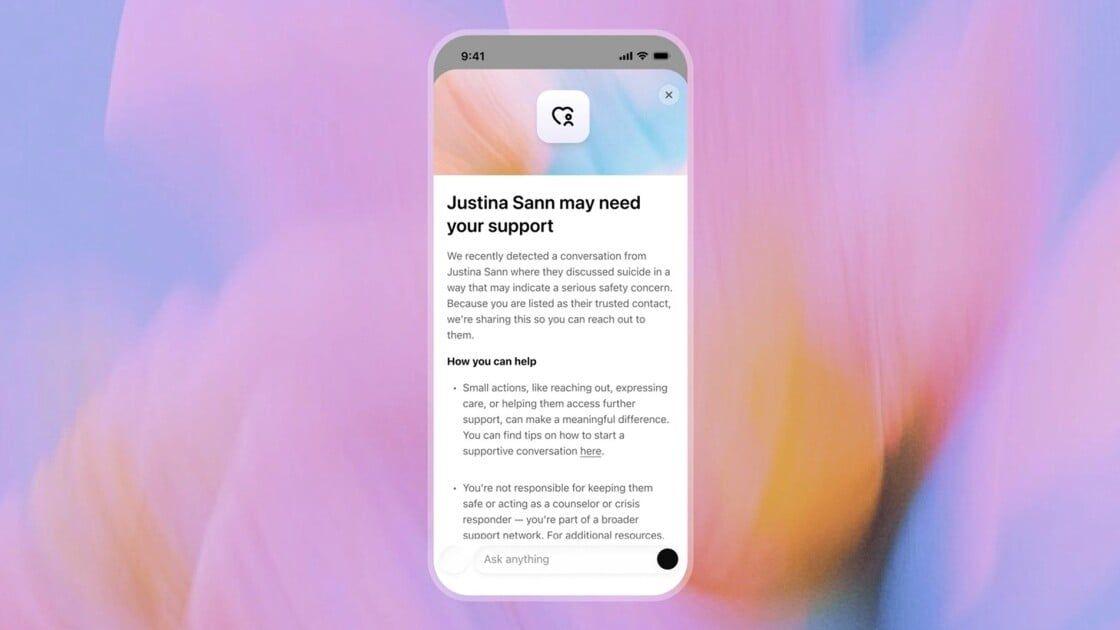

On Thursday OpenAI announced a new feature called Trusted Contact, designed to alert a trusted third party if ideations of self-harm are expressed within a conversation. The feature allows an adult ChatGPT user to designate another person as a trusted contact within their account, such as a friend or family member. In cases where a conversation may turn to self-harm, OpenAI will now encourage the user to reach out to that contact. It also sends an automated alert to the contact, encouraging them to check in with the user. OpenAI has faced a wave of lawsuits from the families of people who have died by suicide after talking with its chatbot. In a number of cases, the families say ChatGPT encouraged their loved one to kill themselves -- or even helped them plan it out. OpenAI currently uses a combination of automation and human review to handle potentially harmful incidents. Certain conversational triggers alert the company's system to suicidal ideations, and the system then relays the information to a human safety team. The company claims that every time it receives this kind of notification, the incident is reviewed by a human. "We strive to review these safety notifications in under one hour," the company says. If OpenAI's internal team decides that the situation represents a serious safety risk, ChatGPT proceeds to send the trusted contact an alert -- either by email, text message, or an in-app notification. The alert is designed to be brief and to encourage the contact to check in with the person in question. It does not include detailed information about what was being discussed, as a means of protecting the user's privacy, the company says. The Trusted Contact feature follows the safeguards the company introduced last September that gave parents the power to have some oversight of their teens' accounts, including the reception of safety notifications designed to alert the parent if OpenAI's system believes their child is facing a "serious safety risk." 
For some time now, ChatGPT has also included automated prompts to seek professional health services, should a conversation trend towards the topic of self-harm. Crucially, Trusted Contact is optional and, even if the protection is activated on a particular account, any user can have multiple ChatGPT accounts. OpenAI's parental controls are also optional, presenting a similar limitation. "Trusted Contact is part of OpenAI's broader effort to build AI systems that help people during difficult moments," the company wrote in the announcement post. "We will continue to work with clinicians, researchers, and policymakers to improve how AI systems respond when people may be experiencing distress."

[2]

ChatGPT's New Safety Feature Could Alert 'Trusted Contact' to Risk of Self-Harm

Alex Valdes from Bellevue, Washington has been pumping content into the Internet river for quite a while, including stints at MSNBC.com, MSN, Bing, MoneyTalksNews, Tipico and more. He admits to being somewhat fascinated by the Cambridge coffee webcam back in the Roaring '90s. OpenAI launched an optional safety feature this week called Trusted Contact, which lets adult ChatGPT users nominate a friend or family member to be notified if there are discussions of self-harm or suicide on the chatbot, the company announced. OpenAI said that if ChatGPT's automated monitoring system detects that the user "may have discussed harming themselves in a way that indicates a serious safety concern," a small team will review the situation and notify the contact if it warrants intervention. The designated safety contact will receive an invitation in advance explaining the role and can decline. (Disclosure: Ziff Davis, CNET's parent company, in 2025 filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.) The announcement comes as AI chatbots have been implicated in numerous incidents of self-harm and fatalities, resulting in several lawsuits accusing developers of failing to prevent such outcomes. In one high-profile California case, parents of a 16-year-old said ChatGPT acted as their son's "suicide coach," alleging that the teenager discussed suicide methods with the AI model on several occasions and that the chatbot offered to help him write a suicide note. In a separate case, the family of a recent Texas A&M graduate sued OpenAI, claiming the AI chatbot encouraged their son's suicide after he developed a deep and troubling relationship with the chatbot. Since large language models mimic human speech through pattern recognition, many users form emotional attachments to them, treating them as confidants or even romantic partners. 
LLMs are also designed to follow a human's lead and maintain engagement, which can worsen mental health dangers, especially for at-risk users. OpenAI said last October that its research found that more than 1 million ChatGPT consumers per week send messages with "explicit indicators of potential suicidal planning or intent." Numerous studies have found that popular chatbots like ChatGPT, Claude and Gemini can give harmful advice or no helpful advice to those in crisis. The new designated contact feature comes after OpenAI rolled out parental controls that enable parents and guardians to get alerts if there are danger signs for their teen children. According to OpenAI, if ChatGPT's automated monitoring system detects that a user is discussing self-harm in a way that could pose a serious safety issue, ChatGPT will inform the user that it may notify their trusted contact. The app will encourage the user to reach out to their trusted contact and offer conversation starters. At that point, a "small team of specially trained people" will review the situation. If it's determined to be a serious safety situation, ChatGPT will notify the contact via email, text message or in-app notification. OpenAI did not specify how many people are on the review team nor whether it includes trained medical professionals. It's unclear which key terms would flag dangerous conversations or how OpenAI's team of reviewers would interpret a crisis as warranting notification of the contact. Some online commentators question whether the new feature is a way for OpenAI to avoid liability and to shift responsibility onto users' designated personal contacts. Others note that it could make a bad situation worse if the "trusted contact" is the source of danger or abuse. There are also concerns about privacy and implementation, particularly regarding the sharing of sensitive mental health information. 
According to OpenAI, the message to the trusted contact will only give the general reason for the concern and will not share chat details or transcripts. OpenAI offers guidance on how trusted contacts can respond to a warning notification, including asking direct questions if they are worried the other person is contemplating suicide or self-harm and how to get them help. OpenAI gives an example of what the message to the trusted contact might look like: We recently detected a conversation from [name] where they discussed suicide in a way that may indicate a serious safety concern. Because you are listed as their trusted contact, we're sharing this so you can reach out to them. OpenAI said that all notifications will be reviewed by the human team within 1 hour before they are sent out and that notifications "may not always reflect exactly what someone is experiencing." To add a trusted contact, ChatGPT users can go to Settings > Trusted contact and add one adult (18 or older). You can have only one trusted contact. That person will then receive an invitation from ChatGPT and must accept it within one week. If they don't respond or decline to become the contact, you can select a different contact. ChatGPT customers can change or remove their trusted contact in their app settings. People can also opt out of being a trusted contact at any time. Details of the feature are explained on OpenAI's page. OpenAI told CNET that the feature is rolling out to all adult customers worldwide and will be available for everyone within a few weeks.

[3]

ChatGPT's New Emergency Contact Feature Looks Out for Unsafe Chats

With over a decade of experience reporting on consumer technology, James covers mobile phones, apps, operating systems, wearables, AI, and more. New emergency contact features are now available in ChatGPT, where the AI chatbot will notify someone you know and trust if you're having an unsafe conversation, such as discussing self-harm or suicide. The feature is called "Trusted Contact," and it's an optional safety tool that connects your account with another adult and notifies them when there are signs of mental health difficulties in your ChatGPT interactions. OpenAI says, "Trusted Contact helps connect you to a person you trust in moments of emotional crisis." You need to set up a contact manually, so users will likely do so only if they have concerns about their own mental health or someone they know. You need to pick an adult who has a ChatGPT account, or doesn't mind setting one up. Within ChatGPT's settings, you can choose your contact and send them an invitation through email or phone number, which they must accept within a week to become active. If they don't accept, you can switch to asking another adult, but you can only have one at a time. OpenAI says, "If our automated monitoring systems detect the user may be talking about self-harm in a way that indicates a serious safety concern, ChatGPT lets the user know that we may notify their Trusted Contact, and encourages the user to reach out to their Trusted Contact with suggested conversation starters." It says notifications sent to the "Trusted Contact" around your conversations are limited, giving a generalized reason why self-harm came up in the chat, rather than a detailed transcript of your messages. This new tool sits alongside ChatGPT's other processes, so internal reviewers will also review situations when OpenAI's systems deem it necessary. 
ChatGPT will also continue to surface tools to encourage users to seek their own real-world help, such as suggestions for emergency services or localized crisis helplines, when discussing unsafe topics. OpenAI also reaffirms it won't share instructions for self-harm when a user requests them. In October 2025, OpenAI said its research showed that around 1.2 million users each week talked to ChatGPT about suicide or similar topics. These new features are similar to an option previously available in ChatGPT's parental controls. That option first launched after a 16-year-old reportedly spoke to the chatbot about self-harm before later taking his own life. In an ongoing lawsuit with the family, OpenAI claims it isn't responsible for the teen's death. Disclosure: Ziff Davis, PCMag's parent company, filed a lawsuit against OpenAI in April 2025, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.

[4]

ChatGPT can now alert your 'Trusted Contact' in critical moments

OpenAI is also using human reviewers to determine whether a conversation hints at safety concerns. ChatGPT is one of the most popular AI chatbots. Users often rely on ChatGPT for conversations about pretty much everything, including self-harm. In fact, ChatGPT has been involved in many self-harm cases, and OpenAI has even been sued for such occurrences. Now ChatGPT is introducing a new "Trusted Contact" feature to help users in such situations get access to real-world support. Interested adult users of ChatGPT can add a trusted adult contact (18+ globally or 19+ in South Korea) in their ChatGPT settings. The added contact will also receive an invitation and can choose to accept or decline it. Once the feature is set up, the user doesn't need to do anything else. During their conversations, if ChatGPT detects that they may be discussing self-harm, it will let the user know that their trusted contact may be notified. However, OpenAI isn't fully relying on automated monitoring systems to figure this out. A team of specially trained human reviewers will also review the conversation. This team is responsible for deciding if the conversation indicates a safety concern, and for taking a final call on whether the trusted contact needs to be notified. The feature is optional, and OpenAI explains that even when used, the trusted contact will not receive a transcript of users' chats. This is to ensure privacy. The trusted contact will simply receive a notification encouraging them to check in with the user. This new feature joins ChatGPT's existing safeguards for sensitive conversations, including encouraging people to contact helplines and even taking a break from using ChatGPT itself.

[5]

ChatGPT Adds 'Trusted Contact' Feature to Send Alerts When Conversations Get Dangerous

ChatGPT will still encourage users to contact crisis hotlines or emergency services when necessary. OpenAI announced today that it's rolling out a new mental health-focused safety feature for adult ChatGPT users. Starting today, ChatGPT users can add what the company calls a "trusted contact" who may be notified if the AI's automated systems and trained reviewers determine that the user has engaged in discussions about self-harm. The new feature arrives amid growing scrutiny over the impact AI and other digital platforms can have on mental health. Last year, OpenAI disclosed that 0.07% of its weekly users displayed signs of "mental health emergencies related to psychosis or mania," while 0.15% expressed risk of "self-harm or suicide," and another 0.15% showed signs of "emotional reliance on AI." Considering the company claims that roughly 10% of the world's population uses ChatGPT weekly, that could amount to nearly three million people. The trusted contact feature expands on ChatGPT's existing parental safety notifications, which alert parents when a linked teen account shows signs of distress. Instagram introduced similar parental alerts earlier this year. Now, OpenAI is offering these alerts to its adult users. The company said the feature was developed with guidance from mental health and suicide prevention clinicians, researchers, and organizations. "Trusted Contact is designed to encourage connection with someone the user already trusts," the company said in its announcement. "It does not replace professional care or crisis services, and is one of several layers of safeguards to support people in distress." OpenAI added that ChatGPT will still encourage users to contact crisis hotlines or emergency services when necessary. The feature can be enabled by any user 18 years or older through ChatGPT's settings. From there, users can nominate another adult to serve as their trusted contact by submitting details such as the contact's phone number and email address. 
The trusted contact will then receive an invitation explaining the feature and will have one week to accept. If they decline, the initial user can nominate another contact instead. Once the feature is active, OpenAI's automated monitoring systems can flag when a user may be discussing self-harm in a manner that suggests a serious safety concern. The system will then notify the user that their trusted contact may be alerted and encourage them to reach out directly. It will even provide some recommended conversation starters. The company said a small team of specially trained reviewers will then assess the situation and determine whether notifying the trusted contact is appropriate. If OpenAI decides to send an alert, the trusted contact could receive it through email, text message, or an in-app notification. The alert will only explain the general reason self-harm was mentioned and encourage the trusted contact to check in. It will also include guidance on how to navigate those conversations. OpenAI noted that the notifications will not include specific details or chat transcripts to protect user privacy.
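
The "nearly three million" estimate in this piece can be reproduced with a short calculation. The sketch below is illustrative only: the world population of about 8 billion is an assumption not stated in the article, the three percentages are treated as non-overlapping groups (so the total is an upper bound), and the function and variable names are hypothetical.

```python
# Assumption (not from the article): world population of about 8 billion.
WORLD_POPULATION = 8_000_000_000
# "Roughly 10% of the world's population uses ChatGPT weekly."
WEEKLY_USER_SHARE = 0.10

# Shares of weekly users disclosed by OpenAI, in percent.
SHARES_PERCENT = {
    "psychosis_or_mania": 0.07,
    "self_harm_or_suicide": 0.15,
    "emotional_reliance": 0.15,
}


def estimate_affected_users(population, user_share, shares_percent):
    """Return the estimated number of weekly users in each category."""
    weekly_users = population * user_share
    return {
        label: weekly_users * percent / 100
        for label, percent in shares_percent.items()
    }


estimates = estimate_affected_users(
    WORLD_POPULATION, WEEKLY_USER_SHARE, SHARES_PERCENT
)
# Summing assumes the categories do not overlap, which the article does not confirm.
total = sum(estimates.values())

for label, count in estimates.items():
    print(f"{label}: {count:,.0f}")
print(f"total: {total:,.0f}")
```

Under these assumptions the three groups sum to about 2.96 million weekly users, which is consistent with "nearly three million"; the self-harm or suicide group alone comes to about 1.2 million.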

[6]

ChatGPT adds 'Trusted Contacts' for an extra layer of safety -- here's how it works

A quick search for ChatGPT reveals a polarizing landscape. Alongside stories of innovative updates and surprising user experiences, a darker narrative exists: reports of AI misuse linked to tragic outcomes, including drug overdoses, violence and users taking their own lives. These incidents have sparked a wave of lawsuits against OpenAI from grieving families seeking accountability for interactions they believe contributed to their loved ones' deaths. The severity of this issue is underscored by the existence of a dedicated Wikipedia page documenting lives lost due to chatbot interactions, a grim testament to a growing digital crisis. In response to these alarming trends, OpenAI has introduced "Trusted Contacts," a safety feature designed to provide a human lifeline in moments of crisis.

Activating 'Trusted Contacts': A step-by-step guide

Enabling the Trusted Contacts feature is designed to be intuitive, whether you are using a computer or a smartphone.

* On Desktop: Click your profile name in the bottom-left corner, select Settings, and use the Trusted Contacts menu to add your designated person.
* On Mobile: Tap your profile name, scroll to App Settings, and select Trusted Contact.
* Requirement: To ensure legal and practical accountability, all Trusted Contacts must be at least 18 years old.

When the AI identifies a high-risk situation, it sends a direct, clear message to the designated contact. OpenAI shared the following template as an example of what that notification looks like: "We recently detected a conversation from [Name] where they discussed suicide in a way that may indicate a serious safety concern. Because you are listed as their trusted contact, we're sharing this so you can reach out to them."

To ensure the feature is both effective and ethically responsible, OpenAI collaborated with a coalition of mental health experts and organizations. This development process involved:

* The American Psychological Association (APA)
* OpenAI's Global Physicians Network
* The Expert Council on Well-Being and AI

By consulting with clinicians and suicide prevention researchers, OpenAI aims to ensure the tool provides a meaningful bridge to real-world support rather than just an automated response.

Bottom line

With the newly installed Trusted Contacts feature and the previous safeguards added by OpenAI, including ChatGPT outright refusing to give users instructions on how to perform self-harm, hopes are high that the rise in safety issues linked to chatbots will soon reverse.

[7]

OpenAI's 'Trusted Contact' feature for ChatGPT users in crisis is a sign AI is becoming something much more personal

ChatGPT is evolving from an AI assistant into emotional infrastructure

If there was ever evidence that people are forming deep emotional reliance on ChatGPT, OpenAI's new Trusted Contact feature is probably it. Speaking at Sequoia Capital's AI Ascent event last May, OpenAI CEO Sam Altman said young people were using ChatGPT like an operating system for life -- not just for productivity, but for major personal decisions. "I mean, that stuff, I think, is all cool and impressive," Altman said. "And there's this other thing where, like, they don't really make life decisions without asking ChatGPT what they should do." At the time, the comment sounded provocative. A year later, though, ChatGPT has become something closer to a therapist, life coach, confidant, and companion for many of its users. Now OpenAI is building an actual emotional-support infrastructure around the chatbot with a feature called Trusted Contact.

ChatGPT will now contact your friends if you need help

The feature is still rolling out, so Trusted Contact is not available to everybody yet, but to find it, you click or tap on your profile name in ChatGPT, then look in Settings. You can nominate a trusted adult contact, who must accept the role before the feature becomes active. If ChatGPT's automated systems detect conversations that may indicate a serious risk of self-harm, the user is warned that their Trusted Contact could be notified and encouraged to reach out themselves first. A specially trained human review team then assesses the situation before any alert is sent. If reviewers believe there is a genuine safety concern, the Trusted Contact receives a notification by email, text, or in-app alert encouraging them to check in. OpenAI says the alerts do not include chat transcripts or detailed conversation history in order to protect user privacy, and you can remove or change your Trusted Contact at any time.

Reassuring or unsettling?
OpenAI says Trusted Contact was developed with input from mental-health experts, suicide-prevention specialists, and a global network of more than 260 doctors across 60 countries. Taken together with all the parental controls that OpenAI has already introduced and the safety guardrails already in place, Trusted Contact is another sign that the company is acknowledging that ChatGPT is something that can affect users emotionally, not just technologically. The recent product announcements from OpenAI have played down the use of ChatGPT as a confidant and emphasised its productivity focus instead, particularly regarding the Codex tool for creating code. Yet at the same time, more and more safety features aimed at ChatGPT users' emotional well-being are being added. The idea that we are now being monitored by ChatGPT is also concerning to some. When my colleague Becca Caddy recently interviewed Amy Sutton from Freedom Counselling for an investigation into AI monitoring tools in the workplace, Sutton noted that knowing you're being monitored by your AI, especially in the workplace, could actually worsen the problem it's trying to solve. Sutton commented, "With mental health stigmas still rife, AI observation would likely lead to greater efforts to hide evidence of struggles. This could create a dangerous spiral, where the greater our efforts to hide low mood or anxiety, the worse it becomes." Whether Trusted Contact feels reassuring or unsettling probably depends on how you already see AI and ChatGPT. But the feature is another example of AI companies acknowledging that their products are not just tools for productivity and information, but systems people may increasingly rely on emotionally during some of the most vulnerable moments of their lives.

[8]

ChatGPT 'Trusted Contact' feature now available

OpenAI has been under intense legal and public pressure to improve the way its flagship AI product ChatGPT responds when a user expresses suicidal feelings. On Thursday, the company launched a feature called Trusted Contact, which allows users to designate an adult to notify should the user talk about self-harm or suicide in a serious or concerning way. The optional feature only encourages the trusted contact to reach out to the user. It does not share chat transcripts or conversation details. "Our goal is to ensure that AI systems do not exist in isolation," the company said in a blog post announcing the feature. "Instead they should help connect people to the real-world care, relationships, and resources that matter most." OpenAI has been sued multiple times for wrongful death by family members of ChatGPT users who died by suicide after ChatGPT allegedly coached them to end their lives or didn't respond appropriately to their discussions of psychological distress. OpenAI has denied the allegations in the first of those lawsuits. The state of Florida is also investigating ChatGPT's links to "criminal behavior," including the "encouragement of suicide and self-harm." Trusted Contact was developed with feedback from experts, including OpenAI's Expert Council on Well-Being and AI and the American Psychological Association. "Helping people identify a trusted person in advance, while preserving their choice and autonomy, can make it easier to reach out to real-world support when it matters most," Dr. Arthur Evans, chief executive officer of the American Psychological Association, said in a statement. Disclosure: Ziff Davis, Mashable's parent company, in April 2025 filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.

[9]

ChatGPT now lets you name someone to check in if things get dark

OpenAI is building a human safety net into ChatGPT for crisis moments

AI chatbots have made it surprisingly easy to talk about anything, and that includes some of the heaviest topics imaginable. That openness has always been a double-edged sword. OpenAI is now taking a step to address that, with a new feature that brings a trusted person into the picture when things get serious. The company is rolling out a new feature called Trusted Contact, and it is starting to appear in ChatGPT settings for adult users. It lets users name one person who can be alerted if ChatGPT detects a serious self-harm concern.

How does Trusted Contact work?

Setting up a Trusted Contact is optional, but if you do decide to set it up, you have to make sure that the contact you are nominating is at least 18 years old, or 19 in South Korea. Once you name someone, they get an invitation explaining what the role actually means, and they have one week to accept it before the feature goes live. If they decline, you can pick someone else. The alert system itself is not fully automatic. If ChatGPT's systems flag a conversation as potentially concerning, the chatbot first tells the user that their Trusted Contact may be notified, and it also nudges the user to reach out directly with some suggested conversation starters. A small team of specially trained human reviewers then steps in to assess the situation. Only if they confirm a serious risk does the contact actually get notified, via email, text, or in-app notification. The alert does not share chat transcripts or conversation details. It simply says that self-harm came up in a potentially concerning way and asks the contact to check in. OpenAI says it aims to complete that human review in under one hour.

Why is OpenAI adding this now?

Trusted Contact is part of a broader set of safety features on the platform. Previously, OpenAI added features that let parents receive alerts when a linked teen account shows signs of distress. 
Trusted Contact is the adult-facing extension of this same feature. It was reportedly developed with input from clinicians, researchers, and mental health organizations, including the American Psychological Association. All that said, it is worth mentioning that Trusted Contact does not replace crisis hotlines, emergency services, or professional mental health care. ChatGPT will still direct users toward those resources when needed. Users can remove or change their Trusted Contact at any time, and contacts can remove themselves whenever they want. The reality of the matter is that ChatGPT is being used for some deeply personal conversations, whether OpenAI planned for that or not. Adding a feature like Trusted Contact is a move in the right direction, and also an admission that a chatbot can only do so much.

[10]

ChatGPT Can Now Reach Out to a 'Trusted Contact' After Conversations Concerning Self-Harm

Following a human review, ChatGPT may reach out to the Trusted Contact, with a general message about the situation. Despite expert advice against relying on chatbots for mental health questions and concerns, people are turning to AI programs like ChatGPT for help. The company has faced criticism for how its products have handled certain mental health issues -- including episodes where users died by suicide following conversations with ChatGPT. As part of a campaign to address these problems, OpenAI is now rolling out a voluntary safety check system for users who might be concerned about their thoughts. As reported by Mashable, OpenAI just launched "Trusted Contact," a new feature that lets you choose a trusted person in your life to connect to your ChatGPT account. The idea isn't to share your conversations or collaborate on projects within ChatGPT; rather, if the chatbot thinks your personal chats are veering in a concerning direction with regard to self-harm, ChatGPT will reach out to your Trusted Contact, letting them know to check in on you. To set up the feature, choose someone in your life who is 18 years old or older. (The contact must be 19 or older in South Korea.) ChatGPT will send that person an invitation to become your Trusted Contact: They have one week to respond before the invite expires. Of course, they can also decline the invitation if they don't want to participate. If the contact agrees, the feature kicks in. In the future, if OpenAI's automated system thinks you're discussing harming yourself "in a way that indicates a serious safety concern," ChatGPT will let you know that it may reach out to the Trusted Contact, but will also encourage you to reach out to that contact yourself, with "conversation starters" to break the ice. While that's happening, OpenAI has a team of "specially trained people" to analyze the situation. (It's not all automated, it seems.) 
If this team concludes that the situation is serious, ChatGPT will then alert your Trusted Contact via email, text, or through an in-app notification in ChatGPT if they have an account. OpenAI says the notification itself is quite limited: it only shares general information about the self-harm concern and advises the contact to reach out to you. It won't send any chat transcripts or summaries either, so your general privacy should be preserved, all things considered. OpenAI says that it's working to review safety notifications in under one hour, and that it developed the feature with guidance from clinicians, researchers, and mental health and suicide prevention organizations. The feature is, of course, entirely voluntary, so users will need to enroll themselves (and a contact) if they feel it would help them. If they do, however, this could be a helpful way for friends and family to check in on people when they're struggling -- assuming they're sharing those thoughts with ChatGPT.

[11]

OpenAI built a way for ChatGPT to reach out to your people in a crisis - Phandroid

A lot of people use ChatGPT for deeply personal conversations, things they might not bring up with friends or family. OpenAI knows this, and now the company has built a feature designed to close that gap. ChatGPT Trusted Contact is rolling out globally, and it lets you designate one person to notify if the system detects a serious safety concern. Here's how it works. You go into ChatGPT settings and invite someone you trust, such as a friend, family member, or caregiver. That person has one week to accept. Once they do, if ChatGPT's automated systems flag a conversation for potential self-harm risk, a small team of trained human reviewers assesses the situation. OpenAI says it aims to complete that review in under one hour. If reviewers determine there's a genuine safety concern, your trusted contact gets a brief notification by email, text, or in-app alert. The message lets them know a concern came up and encourages them to check in. OpenAI doesn't share chat transcripts or conversation details with the contact. The feature is opt-in, limited to one adult contact per account, and only available on personal ChatGPT accounts. It doesn't apply to Business, Enterprise, or Edu plans. OpenAI has been under real pressure to address how ChatGPT handles mental health situations. A 2025 BBC report also flagged cases where the chatbot gave harmful responses in sensitive conversations, and the company has acknowledged that its safety guardrails can sometimes degrade during long exchanges. The Trusted Contact feature is one piece of a broader set of safety improvements OpenAI has been rolling out alongside recent ChatGPT updates. The company worked with the American Psychological Association and its own Expert Council on Well-Being and AI to develop it. OpenAI has also noted in its own data that around 0.15% of weekly active users have conversations involving explicit indicators of potential self-harm. 
The ChatGPT Trusted Contact feature won't catch everything, and OpenAI has been upfront about that. Some users also turn to AI specifically because they want privacy from the people in their lives, which makes a notification feature a real tradeoff. But for users who want a safety net, the option is there. Given how much of people's personal lives now flow through ChatGPT conversations, it's hard to argue the feature isn't needed.

[12]

OpenAI adds 'Trusted Contact' ChatGPT feature for self-harm risks

OpenAI has begun rolling out Trusted Contact, an optional safety feature in ChatGPT. It allows adults to nominate a trusted person, such as a friend, family member, or caregiver, who may be notified if the automated system and trained human reviewers detect that "the enrolled person has discussed self-harm in a way that indicates a serious safety concern". The feature became available on May 7, 2026, to ChatGPT users aged 18 and older worldwide and 19 and older in South Korea. It is available to eligible users with personal ChatGPT accounts in supported regions, but not to Business, Enterprise, or Edu workspaces. OpenAI has stated it will continue expanding availability over the coming weeks.

How It Works

To activate the feature, users select a trusted contact in their ChatGPT settings. ChatGPT sends this person an invitation by email, SMS, WhatsApp, or in-app message, outlining their role. The contact has one week to accept the invitation. If they decline, the user may select another adult. Either party can disconnect at any time. After setup, the process uses a layered approach. If automated monitoring detects a potential self-harm conversation, ChatGPT notifies the user that their contact may be alerted and suggests ways to reach out. Human reviewers then assess the conversation, and if they confirm a serious concern, a brief alert is sent to the trusted contact by email, text, or in-app notification.

A Broader Safety Push -- and the Lawsuits Behind It

This feature builds on the parental controls introduced in September 2025, which improved safety for teen accounts. With approximately 900 million weekly users, ChatGPT now faces the challenge of identifying and supporting millions who may show signs of distress.

In November 2025, seven lawsuits were filed against OpenAI, alleging the company knowingly released GPT-4o prematurely despite internal warnings about its sycophantic and psychologically manipulative behaviour. The lawsuits also claim that ChatGPT was designed with emotionally immersive features such as persistent memory, human-like empathy cues, and sycophantic responses, which encouraged dependency, blurred reality, and disrupted human relationships. According to the complaints, OpenAI had the technical capability to detect and interrupt dangerous conversations, redirect users to crisis resources, and flag messages for human review, but did not enable these safeguards, and the chatbot did not alert authorities, contact emergency services, or notify others but instead continued the conversation. OpenAI has stated it will continue collaborating with clinicians, researchers, and policymakers to improve how AI systems respond to people in distress, aiming to ensure these systems do not operate in isolation.

[13]

ChatGPT can now alert trusted contacts during mental health crises: Here is how it works

The feature combines AI-based detection with human review amid growing concerns around chatbot-driven emotional risks. OpenAI has introduced a new safety feature that allows adult ChatGPT users to add a trusted emergency contact in case conversations indicate possible mental health risks. The feature is designed to notify a chosen friend, family member or caregiver if OpenAI's system detects discussions related to self-harm or suicide. It supplements ChatGPT's existing crisis helpline recommendations. The new Trusted Contact option is entirely optional and can be enabled via ChatGPT account settings. Users can assign another adult as their emergency contact, who must approve the request before becoming linked to the account. Once the feature is enabled, the contact may receive a notification if OpenAI believes the user may be facing a serious mental health crisis. According to the company, the alerts will not include private chat transcripts or detailed conversation history. Instead, the notification will simply inform the trusted person that the user may need support. Before any alert is sent, ChatGPT will reportedly encourage the user to reach out to their trusted contact directly. OpenAI says the system combines automated detection with human review. If conversations are flagged for possible self-harm concerns, a specially trained safety team will assess the situation before any message is shared with the emergency contact. Notifications may arrive through email, text or inside ChatGPT itself. This comes at a time when many AI chatbots are facing increasing scrutiny over how they handle emotionally vulnerable users. In recent months, concerns around AI companionship, emotional dependence and chatbot-driven mental health risks have intensified across the industry.
For those unfamiliar, the company has already introduced several safety features, including parental controls. Similar systems have also started appearing on other platforms, including social media apps that monitor repeated searches related to self-harm or suicide.

OpenAI has launched Trusted Contact, an optional ChatGPT safety feature allowing adult users to designate someone who receives alerts if conversations indicate self-harm risks. The announcement follows multiple lawsuits from families claiming the AI chatbot encouraged suicide, with OpenAI research showing over 1 million weekly users discuss suicidal ideation.

OpenAI's New Feature Addresses Growing Mental Health Concerns

OpenAI announced Thursday the rollout of Trusted Contact, a ChatGPT safety feature designed to connect users experiencing emotional crises with real-world support [1]. The optional safeguard allows adult ChatGPT users to designate a friend or family member who will receive notifications if conversations about self-harm suggest serious safety concerns [2].

The feature arrives amid intense scrutiny of the AI chatbot's role in mental health incidents. OpenAI faces multiple lawsuits from families whose loved ones committed suicide after interacting with ChatGPT, with some alleging the platform acted as a "suicide coach" or helped plan self-harm [1]. Research from OpenAI last October revealed that more than 1 million ChatGPT users per week send messages with explicit indicators of potential suicidal planning or intent [2]. The company also disclosed that 0.15% of weekly users express risk of self-harm or suicide, potentially affecting nearly three million people globally [5].

How the Trusted Contact Alert System Works

Users aged 18 or older (19 in South Korea) can activate the feature through ChatGPT settings by nominating one adult contact [4]. The designated contact receives an invitation explaining their role and must accept within one week [3]. Once activated, an automated monitoring system detects when conversations may indicate serious safety risks related to self-harm [1].

Crucially, OpenAI combines automation with a human review team to evaluate flagged incidents. A small team of specially trained reviewers assesses whether situations warrant intervention, with the company striving to review safety notifications in under one hour [1]. If deemed necessary, the trusted contact receives alerts via email, text message, or in-app notification [2].
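The layered flow described above can be sketched as a small decision function. This is purely illustrative and not OpenAI's implementation: the names `TrustedContact`, `Outcome`, `min_age`, and `process` are hypothetical, the `"KR"` country code is an assumed way of representing South Korea, and the automated flag and the reviewers' decision are reduced to booleans supplied by the caller.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional

# Reported rule: the invited contact has one week to accept.
INVITE_WINDOW = timedelta(days=7)


class Outcome(Enum):
    NOT_ENROLLED = "not enrolled"
    NO_FLAG = "no automated flag"
    REVIEWED_NO_ALERT = "reviewed, no alert"
    ALERT_SENT = "alert sent"


@dataclass
class TrustedContact:
    """Hypothetical record of an invitation and its acceptance."""
    invited_at: datetime
    accepted_at: Optional[datetime] = None

    def is_active(self) -> bool:
        # Active only if accepted, and accepted within the one-week window.
        if self.accepted_at is None:
            return False
        return self.accepted_at - self.invited_at <= INVITE_WINDOW


def min_age(country: str) -> int:
    # Reported rule: 18 and older worldwide, 19 and older in South Korea.
    return 19 if country == "KR" else 18


def process(age: int, country: str, contact: Optional[TrustedContact],
            auto_flagged: bool, reviewer_confirms: bool) -> Outcome:
    """Walk the reported stages: enrollment, automated flag, human review."""
    if age < min_age(country) or contact is None or not contact.is_active():
        return Outcome.NOT_ENROLLED
    if not auto_flagged:
        return Outcome.NO_FLAG
    if not reviewer_confirms:
        return Outcome.REVIEWED_NO_ALERT
    # The real alert is reported to be brief and to omit chat transcripts.
    return Outcome.ALERT_SENT
```

The point of the sketch is the ordering: no alert goes out unless the user is enrolled with an accepted contact, the automated system has flagged the conversation, and a human reviewer has confirmed a serious concern.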

Privacy Concerns and User Safety Balance

Addressing privacy concerns, OpenAI emphasizes that notifications sent to trusted contacts provide only general reasons for concern without sharing chat transcripts or detailed conversation content [5]. The company offers an example message: "We recently detected a conversation from [name] where they discussed suicide in a way that may indicate a serious safety concern. Because you are listed as their trusted contact, we're sharing this so you can reach out to them" [2].

However, implementation questions remain. OpenAI has not specified how many people comprise the review team or whether it includes trained medical professionals [2]. Some observers question whether the feature shifts responsibility from OpenAI onto users' personal contacts, while others note potential risks if the designated contact is actually a source of danger or abuse [2].

Expanding Safety Protocols Beyond Parental Controls

Trusted Contact builds on safety protocols OpenAI introduced last September, which gave parents oversight of teen accounts and notifications about serious safety risks [1]. The parental controls emerged after a high-profile California case involving a 16-year-old who discussed suicide methods with ChatGPT before taking his own life [2].

Both features share a significant limitation: they're optional, and users can maintain multiple ChatGPT accounts [1]. The feature does not replace professional care or crisis services, and ChatGPT continues encouraging users to contact helplines and emergency services when necessary [5].

OpenAI developed the feature with guidance from mental health and suicide prevention clinicians, researchers, and organizations [5]. The company stated it will continue working with clinicians and policymakers to improve how AI systems respond when people experience distress [1]. As large language models increasingly serve as confidants for millions, the effectiveness of these safety measures in preventing harm while respecting user privacy will likely face ongoing scrutiny from regulators, mental health experts, and the public.

Summarized by Navi
