Qlik makes data governance the foundation for enterprise AI as agentic analytics reshape decisions

4 Sources

[1]

AI-driven decision-making reshapes analytics - SiliconANGLE

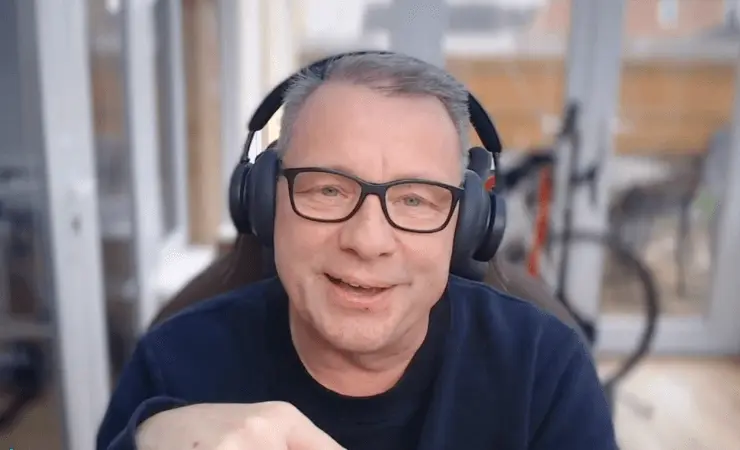

The dashboard is dead, but what comes next requires a lot more than just faster AI

AI-driven decision-making has arrived, putting a focus on trusted data and strong governance so outputs stay reliable at scale.

The shift is rewriting the relationship between people and data. Instead of relying on dashboards and reports to drive action, AI can now handle much of that work and even act on our behalf, according to Nick Magnuson (pictured, right), head of AI at Qlik Technologies Inc. But for enterprises, the opportunity is only valuable if the data underneath it can be trusted.

"It's changing a lot of paradigms -- how we think about acting on that information, how we think about constructing data and supporting that data through that life cycle now, because we've got autonomous things in the mix that aren't human in nature," Magnuson said. "I think a lot of the frameworks that we've used in the past now need to be rethought essentially from the ground up."

Magnuson and Michael Leone (left), vice president and principal analyst at Moor Insights & Strategy, spoke with theCUBE's Rob Strechay and Rebecca Knight at Qlik Connect 2026, during an exclusive broadcast on theCUBE, SiliconANGLE Media's livestreaming studio. They discussed the rise of agentic AI and the need for trust and context in AI-driven decision-making. (* Disclosure below.)

AI has collapsed the time-to-insight curve that analytics teams have chased for decades. What used to take hours of querying and dashboard-building now happens in seconds. But speed without clean data is just faster failure -- and that problem predates AI entirely, according to Leone.

"I think the enablement that we're going to get from AI now is, 'Hey, I don't have to rely on a human to try and go figure out where to pick my data that I'm going to be using to analyze,'" Leone said. "It's going to find it pretty darn quick and it's going to do it faster and it's probably going to do it more accurately than what I think humans have been able to do."

Yet the goal was never to remove humans from the equation. The winners will be the ones that pair autonomy with governance, context and human oversight so AI can deliver outcomes that are not just faster, but dependable over time, according to Magnuson.

"We have that superpower that we kind of sit above it, and the ability to put in the governance frameworks and the things that make it work over time," Magnuson explained. "AI is not a point in time, it's an over time situation. You've got to be able to have humans that can get in there and assess the thing, put together the system and then monitor it."

Here's the complete video interview, part of SiliconANGLE's and theCUBE's coverage of Qlik Connect 2026:

[2]

Qlik's most important AI feature is knowing when to say nothing. Boring is brilliant

There's a phrase that's stuck with me since a conversation with Martin Tombs, VP Global Go-to-Market for Analytics and Field CTO EMEA at Qlik, back in February: "boring is brilliant." He used it to describe the unglamorous but essential work of data governance. I deployed it back at him in the context of observability log files and we laughed at the irony. But given the state of enterprise AI right now, it's also genuinely useful shorthand for where the industry needs to go - and what separates the vendors building for production from the ones still selling demos. Tombs was speaking ahead of Qlik's announcement of the general availability of its agentic experience in Qlik Cloud, delivered through Qlik Answers as a unified conversational interface, alongside the GA of its Model Context Protocol (MCP) server. This week at Qlik Connect, a new ServiceNow partnership completes the picture. Taken together, they form a fuller architecture story - one worth unpacking carefully, because the market is drowning in agentic announcements that don't survive contact with production environments. Ask Tombs where enterprise AI deployments actually fail and his answer is that it's not the model - not the interface. He explains: Getting your unstructured data right - I think everyone's still getting their heads around this. It's not just where you store a PDF. It's what's in the PDF, who's responsible for that content in the PDF. This tracks against what diginomica has been hearing across organizations ranging from Fortune 100 to Fortune 500. And if you're building agentic systems that need to reason across both, the governance challenge compounds. You cannot bolt it on afterward, as Tombs puts it, because the agent has to make decisions about what to trust, what to surface, and - critically - what to decline to answer. 
One of the more honest design decisions Qlik has built into Answers is a hard boundary: ask it something outside its governed dataset and it will not hallucinate a response. It tells you it doesn't know. Tombs illustrates this with a deliberately absurd example - training a finance instance and then asking how to peel a banana - but the principle lands in production environments where a confidently wrong answer is categorically worse than silence. Tombs explains: If I give you three wrong answers, you're going to be out very quickly in asking me questions. And that's really how I see the adoption of any vendor's product. Gartner's hype cycle framing, which Tombs refers to, describes vendors still climbing the peak while enterprise consumers have started the descent into disillusionment. The gap between what AI delivers in a controlled demo and what it reliably does in a messy production environment remains significant - and it follows that this is a useful lens for evaluating everything Qlik has announced. Qlik's Model Context Protocol (MCP) server deserves a little more scrutiny. Originally developed by Anthropic, MCP provides a standardized way for AI assistants to discover and invoke external tools and data sources. Qlik's implementation exposes its analytics engine, tools, and governed data products to third-party AI assistants including Claude. Tombs uses a door analogy that earns its keep. If Qlik's internal Answers capability is the front door of the house, MCP is the side door - the one you open to let external agents access what you've built. But you need a bouncer on that door. He elaborates: By opening this front door, you've always got to have a bouncer on the door that says, 'What are you coming in for? What are you doing?' You've got to hand a menu to the MCP of what you are capable of doing, what your uniqueness is - and then other things can take advantage of that. The governance layer has to come before the MCP exposure. 
The practical implication is that an organization that has done its data governance homework can make that trusted intelligence available to whatever AI assistant its teams use - without re-exposing raw data or bypassing established controls. The distinction from a conventional API is also worth making explicit for those new to MCP: it standardizes not just the call but the capability discovery, so an external agent understands what a tool does before deciding whether to invoke it. That matters for multi-agent orchestration, where agents select tools dynamically rather than following hard-coded instructions. The capability Tombs is most visibly animated about is Discovery Agent - Qlik's continuously monitoring agent that surfaces anomalies, shifts, and emerging risks in key measures, without a human having to go hunting for them. He notes: We can proactively identify anomalies, trends, and risk. We could tell decision-makers that without me finding it all for them. Qlik CEO Mike Capone is pointed about what enterprise boards are actually wrestling with right now: they are navigating geo-political volatility, tightening AI regulation, and relentless cost pressure - all of which changes what enterprise AI has to be: auditable, governable, and capable of acting inside real workflows. Discovery Agent is the operational result of that positioning. The counterpoint is that automated anomaly detection is only as reliable as the model's understanding of what "normal" looks like for a given business - a data quality and contextual calibration problem that no GA announcement resolves. Tombs is candid about this: Qlik will get some things right and some things wrong in deployment, and the product is in constant iteration. The Qlik Connect announcement adds a dimension that contextualizes the rest. 
The new ServiceNow partnership routes Qlik analytics into ServiceNow workflows and agents, while adding Qlik metadata collectors to the ServiceNow Data Catalog for discovery and lineage visibility. An organization can have the best governed analytics layer available, but if the insight never reaches the person or agent making the operational decision, it's academic. ServiceNow is where a significant volume of enterprise work execution happens - and getting Qlik's analytics engine, aggregating cross-system context from ERP, CRM, supply chain, billing, and support data, feeding into that environment is a substantive architectural move rather than a badge-swap partnership. Pramod Mahadevan, VP of Data and Analytics Product Ecosystem at ServiceNow, says: The decisions people and agents make every day are only as good as the data behind them. That's been true for thirty years. What's changed is the plumbing: the data layer, analytics layer, and workflow layer are now connectable in ways that no longer require custom integration work at every junction. The metadata collector piece reinforces this - lineage, discovery, and structure visibility for Qlik-managed assets become accessible from within ServiceNow's own governance tooling, which is a more practically grounded integration than most partnership announcements in this space manage to deliver. The combination of a governed data product layer, a reasoning engine that knows when not to answer, an MCP interface that extends trusted intelligence to external assistants, continuous monitoring via Discovery Agent, and now a workflow integration with ServiceNow represents a genuine end-to-end architecture - not a feature announcement dressed up as a strategy. Those honest caveats remain significant, though. 
Deployment quality varies, unstructured data governance is hard regardless of tooling, and the cost of running agentic systems at enterprise scale is underexamined - Tombs raises cost governance explicitly, and he's right to. These are not problems Qlik's announcements solve; they're problems that good tooling makes more tractable. The underlying positioning - govern first, agent second, trust as infrastructure - is correct. The organizations that internalize it will build AI systems that actually work in production. The ones that skip it will keep discovering, at significant expense, why promises don't match up to reality. 'Boring is brilliant' needs to be read as an engineering principle, not a marketing line.

[3]

Governed data is AI's real competitive edge, says Qlik - SiliconANGLE

It's not governance slowing down enterprise AI -- it's the lack of it, says Qlik executive

Enterprises are chasing AI models at a dizzying pace, yet the organizations pulling ahead are the ones that paused to build something less glamorous: a trusted, governed data foundation.

Pressure is mounting across industries, as research from Qlik and Enterprise Technology Research shows that data quality, availability and governance remain the top blockers to scaling agentic AI deployments. But a data strategy anchored in governance is not a constraint on AI momentum -- it is the prerequisite for it, according to James Fisher (pictured), chief strategy officer at Qlik Technologies Inc.

"We've seen this big shift from trying to just apply AI to a problem to thinking about what is needed architecturally to bring that data together -- at the right latency, in the right format -- and deliver that in a way that it's consumable to AI applications," Fisher told theCUBE. "I think that's a really positive shift that we've seen over the last couple of years."

Fisher spoke with theCUBE's Rebecca Knight and Rob Strechay at Qlik Connect 2026, during an exclusive broadcast on theCUBE, SiliconANGLE Media's livestreaming studio. They discussed AI architecture decisions, governed data as an accelerator and Qlik's product roadmap for agentic analytics. (* Disclosure below.)

The urgency reflects a broader industry reckoning. Analysts warn that enterprise AI will stall not because models aren't ready, but because governance isn't keeping pace. Governance slowing AI delivery might still be a common chief information officer objection, but it's not necessarily the right one, according to Fisher.

"I'm a big fan of the phrase 'go slower to go faster,'" he said. "By creating and taking the time to build that foundation -- to think about where it's gonna be used, how it's gonna be applied -- just that little step, that little extra time you take there will provide exponential benefits long term, whether that's performance of the AI application [or] whether that's performance of the agent."

That compounding logic also applies to data products -- reusable, governed datasets built around specific consumer needs. Solving one use case with a well-structured data product tends to unlock the next, creating organizational momentum, Fisher noted. Qlik Answers is built on exactly that premise, pairing governed data products with a conversational AI interface so that decisions carry citations and explanations users can trust.

"While we're all worrying about data infrastructures and building agents and the cost of deployment, I think it's always important we understand about the user, about the individual that's working with it," Fisher said. "We need to not only democratize access to AI, but democratize the value that can come from it."

Stay tuned for the complete video interview, part of SiliconANGLE's and theCUBE's coverage of Qlik Connect 2026.

[4]

Qlik Looks To Boost AI Effectiveness, Predictive Capabilities With New Agents

The data integration, governance and analytics giant, which is holding its Qlik Connect conference this week, is emphasizing the need for trusted data as businesses and organizations look to put AI into production and generate business value. Qlik is expanding the agentic analytics capabilities of its data analytics platform, building on the recently launched AI-powered Qlik Answers knowledge assistant with new data prediction and analytic workflow automation agents. The data integration, data governance and business analytics giant, which is holding its annual Qlik Connect customer and partner conference in Orlando this week, also announced an extension of its agentic AI strategy to the data engineering realm and unveiled expanded data trust and governance capabilities for AI tasks. Qlik has also struck a deal with ServiceNow to link the two vendors' software in a bid to improve the quality of the data and analytics flowing into ServiceNow's AI-driven workflows. [Related: Qlik CEO: "Trusted Data Foundation" For AI Is The Theme At This Week's Connect Event] "Is it real? Can I trust it? Can you prove it? How much is this going to cost me to scale?" said Qlik CEO Mike Capone, as he began his Connect keynote Tuesday (pictured) by reciting the questions he frequently gets about AI from customers. "There's so much noise out there, so much hype, so many buzzwords," the CEO said of the state of AI today. "I spend a lot of time meeting with executives, CEOs, CIOs, CFOs, of our customers. And one thing is becoming really, really clear right now: There's frustration. There's a lot of frustration out there. Why? Because companies are spending a lot of money on AI, but they are not getting the return. They're not getting the value." "The reckoning is here," he said. 
Capone also repeated a theme he introduced to hundreds of channel partner executives during a Connect Partner Summit on Monday that customers are looking to "trusted partners," including Qlik and its ecosystem of solution providers, service providers and consultants, to help them navigate their AI journey. "You are closer to agentic AI than you think," Capone said, telling the thousands of attendees that they can leverage the Qlik platform they already work with to overcome the problems of data availability, data quality and data governance that sink many AI projects. In February Qlik announced the general availability of Qlik Answers, the company's AI-powered analytical assistant that's delivered through the Qlik Cloud platform. At Connect this week Capone said more than 1,000 customers have activated Qlik Answers and are now working with the vendor's analytic agent capabilities. Also in February, the company announced the availability of the Qlik Model Context Protocol (MCP) server that enables third-party assistants, such as Anthropic Claude, to securely access Qlik's analytical capabilities and trusted data products. That was followed in March by the launch of Discovery Agent, which monitors key data areas for important data changes and possible anomalies and alerts users for possible investigation. At Connect this week Qlik expanded its agent portfolio with Predict Agent and Automate Agent. Predict Agent, slated for availability later this quarter, lets users ask forward-looking questions. The agent builds machine learning models, generates predictions, and interprets results. Automate Agent provides a link from analytics to execution that makes it possible -- using natural language prompts -- for data teams to trigger workflows based on analytic results. 
The company also announced an expansion of its AI agent strategy beyond analytics to include data engineering, a move that brings agentic capabilities to data teams who manage and maintain data and build pipelines for moving data for analytical and AI tasks. Qlik said the new strategy centers on engineering execution for declarative pipelines, real-time data routing, data lakehouse streaming and other chores that the company said reduce friction in how data pipelines are built, altered and operated.

Qlik said its Analytics Agent technology now supports analytics software development tasks, in addition to generating analytical insights. And the company announced an expansion of its trust and governance capabilities for AI, centered on data products (curated, reusable data sets) and the operational controls required to make them reliable for human decision making and for AI-driven actions.

Many AI products on the market today operate as a black box, said Igor Alcantara, director of data science at Qlik consulting partner IPC Global, during a panel session at the Qlik Partner Summit. "You have no idea of the reasoning behind the results, you have no idea of how the AI delivered the results." But Qlik's AI technology, he said, "is a glass box" where the reasoning, the source data and other elements of the AI output are transparent. "So before making a decision, you don't have to blindly trust the AI," he said.

And Alcantara praised the effectiveness of the Qlik Associative Engine that powers its data analysis platform and Qlik's data governance capabilities that he said also help build trust in the results produced by Qlik's AI products.

On Monday, as Connect got underway, Qlik announced a strategic alliance with ServiceNow through which Qlik technology, including the Qlik Analytics Engine, will be integrated with the ServiceNow Workflow Data Fabric to improve the quality of the data used by the ServiceNow platform and its AI agents.
Capone said the ServiceNow relationship provides "a better path from insight to action." "The marriage of what we bring: data integration, data quality, data governance, integrated with ServiceNow, helping them marry their data together with data from other sources, with our data quality and data governance frameworks, is going to be one of those, one-plus-one-equals-three-types of scenarios," the Qlik CEO said in an interview with CRN prior to Connect. New Qlik data collectors for the ServiceNow Data Catalog will improve visibility into data lineage, movement and structure, according to the two companies. The Qlik Analytics Engine and Qlik AI, meanwhile, can combine ServiceNow Workflow Data Fabric with broader enterprise signals to surface relationships, patterns and recommendations that support more intelligent actions. "The decisions people and agents make every day are only as good as the data behind them," said Pramod Mahadevan, ServiceNow vice president, data and analytics product ecosystem, in a statement. "Our partnership with Qlik connects those insights from third-party data directly inside ServiceNow, extending the reach of Workflow Data Fabric to the systems where critical data already lives. The result: People and agents that act on trusted, governed intelligence, and decision-ready data in the workflows where work gets done," Mahadevan said.

Qlik positioned data governance as the critical enabler for enterprise AI at its Connect 2026 conference, announcing new agentic analytics capabilities including Predict Agent and Automate Agent. CEO Mike Capone addressed widespread frustration over AI investments that aren't delivering returns, pointing to data quality and governance as the primary blockers. The company also unveiled a ServiceNow partnership and expanded its AI agent portfolio beyond analytics to data engineering.

Qlik Positions Data Governance as Enterprise AI's Critical Accelerator

Qlik is reframing data governance from a perceived bottleneck into the essential foundation for enterprise AI success. At Qlik Connect 2026 in Orlando this week, the data integration and analytics company announced expanded agentic analytics capabilities while emphasizing that the organizations gaining AI momentum are those that invested in governed, trusted data foundations first [3][4].

CEO Mike Capone opened the conference keynote by acknowledging widespread executive frustration with AI investments that fail to deliver returns. "Companies are spending a lot of money on AI, but they are not getting the return. They're not getting the value," Capone told thousands of attendees, declaring that "the reckoning is here" [4]. Research from Qlik and Enterprise Technology Research shows that data quality, availability and governance remain the top blockers to scaling agentic AI deployments across industries [3].


AI-Driven Decision-Making Demands Trusted Data Foundation

The shift toward AI-driven decision-making is changing how organizations interact with data. Nick Magnuson, head of AI at Qlik, explained that AI can now handle much of the work previously done through dashboards and reports, and can even act on behalf of users. But this autonomy only creates value when the underlying data can be trusted [1].

"It's changing a lot of paradigms -- how we think about acting on that information, how we think about constructing data and supporting that data through that life cycle now, because we've got autonomous things in the mix that aren't human in nature," Magnuson said. The frameworks organizations relied on in the past need rethinking from the ground up to accommodate these autonomous systems [1].


James Fisher, chief strategy officer at Qlik, advocates a "go slower to go faster" approach. "By creating and taking the time to build that foundation -- to think about where it's gonna be used, how it's gonna be applied -- just that little step, that little extra time you take there will provide exponential benefits long term," Fisher explained [3].

Qlik Expands Agentic Analytics Portfolio With Predictive Capabilities

Qlik Answers, the company's AI-powered analytical assistant, reached general availability in February. At Connect this week, Capone said more than 1,000 customers have activated Qlik Answers and are working with its analytic agent capabilities [4]. Qlik also expanded its agent portfolio with two additions.

Predict Agent, slated for availability later this quarter, lets users ask forward-looking questions. The agent builds machine learning models, generates predictions, and interprets results [4].

Automate Agent provides a link from analytics to execution, letting data teams use natural language prompts to trigger workflows based on analytic results [4].

Discovery Agent, launched in March, continuously monitors key measures for important changes and possible anomalies and alerts users so they can investigate. Martin Tombs, VP Global Go-to-Market for Analytics and Field CTO EMEA at Qlik, described this capability as identifying "anomalies, trends, and risk" and telling "decision-makers that without me finding it all for them" [2][4].
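The sources do not describe how Discovery Agent detects anomalies, so the following is only a minimal sketch of one common technique (a trailing-window z-score) to make the idea of monitoring a key measure concrete. The function name `flag_anomalies`, the window size, the threshold, and the sample data are all illustrative assumptions, not anything Qlik has published.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=5, threshold=3.0):
    """Return indices whose value deviates from the trailing-window mean
    by more than `threshold` sample standard deviations.

    Hypothetical helper for illustration; not Qlik's method.
    """
    flagged = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]  # the "normal" this point is judged against
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline: treat any change at all as a deviation.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Made-up daily order counts with one obvious spike at index 6.
daily_orders = [100, 102, 98, 101, 99, 100, 180, 101]
print(flag_anomalies(daily_orders))
```

Note that once the spike enters the trailing window it inflates the baseline's spread, which is a small instance of the calibration problem raised in [2]: detection is only as reliable as the definition of "normal" it is measured against.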


ServiceNow Partnership and Model Context Protocol Extend Reach

Qlik announced a partnership with ServiceNow designed to improve the quality of data and analytics flowing into ServiceNow's AI-driven workflows [4]. This collaboration complements Qlik's February announcement of its Model Context Protocol (MCP) server, which enables third-party AI assistants like Anthropic Claude to securely access Qlik's analytical capabilities and governed data products [2][4].

Tombs uses a door analogy to explain MCP's role: if Qlik Answers is the front door to the company's analytics house, MCP is the side door that external agents can use. But governance remains essential. "By opening this front door, you've always got to have a bouncer on the door that says, 'What are you coming in for? What are you doing?'" Tombs explained [2].

Unlike a conventional API, MCP standardizes not just the call but also capability discovery, so an external agent can understand what a tool does before deciding whether to invoke it. This matters particularly for multi-agent orchestration, where agents select tools dynamically rather than following hard-coded instructions [2].
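The difference between a plain call and capability discovery can be sketched in a few lines. This is a hedged illustration only: it does not implement the MCP wire protocol or use Qlik's server, and `ToolServer`, `Tool`, `choose_tool`, and the two sample tools are hypothetical names invented for the example. The point is the order of operations: the agent first asks for a menu of tools and their descriptions, then decides which one to invoke.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Tool:
    name: str
    description: str
    handler: Callable[[dict], dict]


class ToolServer:
    """Hypothetical stand-in for a server that advertises its tools."""

    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def list_tools(self) -> list[dict]:
        # Discovery step: expose names and descriptions, never the data itself.
        return [{"name": t.name, "description": t.description}
                for t in self._tools.values()]

    def call_tool(self, name: str, arguments: dict) -> dict:
        # The "bouncer": only registered tools can be invoked.
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name].handler(arguments)


def choose_tool(menu: list[dict], keyword: str) -> Optional[str]:
    """Naive agent-side selection: pick the first tool whose description
    mentions the keyword. A real agent would reason over the descriptions."""
    for entry in menu:
        if keyword.lower() in entry["description"].lower():
            return entry["name"]
    return None


server = ToolServer()
server.register(Tool("sales_summary", "Summarize governed sales data by region",
                     lambda args: {"region": args["region"], "total": 1200}))
server.register(Tool("churn_forecast", "Forecast customer churn for a segment",
                     lambda args: {"segment": args["segment"], "risk": "low"}))

menu = server.list_tools()            # 1. discover what is on offer
selected = choose_tool(menu, "sales")  # 2. decide which tool fits the task
result = server.call_tool(selected, {"region": "EMEA"})  # 3. invoke it
print(selected, result)
```

Because the agent only ever sees the advertised menu, the server decides what is exposed, which is where the governance layer described in [2] would sit.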

Human Oversight and Glass Box AI Build Trust

One of Qlik's most significant design decisions addresses the hallucination problem plaguing many AI systems. When Qlik Answers receives a query outside its governed dataset, it tells the user it does not know rather than hallucinating a response. "If I give you three wrong answers, you're going to be out very quickly in asking me questions. And that's really how I see the adoption of any vendor's product," Tombs said [2].

This approach contrasts sharply with what Igor Alcantara, director of data science at Qlik consulting partner IPC Global, described as the "black box" nature of many AI products on the market. "You have no idea of the reasoning behind the results, you have no idea of how the AI delivered the results," Alcantara said during a panel session at the Qlik Partner Summit. Qlik's technology, he explained, operates as a "glass box" where the reasoning, source data, and other elements of AI output remain transparent [4].

Magnuson emphasized that human oversight remains critical even as AI takes on more autonomous roles. "We have that superpower that we kind of sit above it, and the ability to put in the governance frameworks and the things that make it work over time," he explained. "AI is not a point in time, it's an over time situation. You've got to be able to have humans that can get in there and assess the thing, put together the system and then monitor it" [1].
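The decline-to-answer behavior described in this section can be illustrated with a deliberately simple sketch. It assumes a keyword lookup over a small dictionary of governed facts, which is far cruder than whatever Qlik Answers actually does; the `answer` function and the `finance_facts` data are hypothetical. What it shows is the design choice: an out-of-scope question gets an explicit "I don't know" rather than a guess, and an in-scope answer carries its source.

```python
def answer(question, governed_facts):
    """Answer only from governed facts; otherwise decline explicitly.

    Hypothetical helper for illustration; not Qlik's implementation.
    """
    # Normalize the question into lowercase words without trailing punctuation.
    words = {w.strip("?.,!").lower() for w in question.split()}
    for topic, fact in governed_facts.items():
        if topic in words:
            # In scope: return the fact together with where it came from.
            return {"answered": True, "text": fact, "source": topic}
    # Out of scope: say so instead of inventing a response.
    return {"answered": False,
            "text": "I don't know. That is outside the governed data.",
            "source": None}


# Made-up governed content for a finance instance.
finance_facts = {
    "revenue": "Revenue figures come from the governed finance data product.",
    "margin": "Margin figures come from the governed finance data product.",
}

print(answer("What was last quarter's revenue?", finance_facts)["text"])
print(answer("How do I peel a banana?", finance_facts)["text"])
```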

Data Engineering Gets Agentic Capabilities

Qlik extended its agentic AI strategy beyond analytics to include data engineering, bringing agentic capabilities to the teams who manage data and build pipelines. The new strategy focuses on engineering execution for declarative pipelines, real-time data routing, and data lakehouse streaming. Qlik says these capabilities reduce friction in how data pipelines are built, altered, and operated [4].

The company's Analytics Agent technology now supports analytics software development tasks in addition to generating analytical insights. Qlik also announced expanded trust and governance capabilities for AI, centered on data products (curated, reusable datasets) and the operational controls required to make them reliable for both human decision-making and AI-driven actions [4].

Fisher noted that data products built around specific consumer needs create organizational momentum. Solving one use case with a well-structured data product tends to unlock the next, creating a compounding effect. "While we're all worrying about data infrastructures and building agents and the cost of deployment, I think it's always important we understand about the user, about the individual that's working with it," Fisher said. "We need to not only democratize access to AI, but democratize the value that can come from it" [3].

Summarized by Navi
