Google unveils enterprise AI agents platform as Cloud reaches $70B revenue with 48% growth

22 Sources

[1]

Google makes an interesting choice with its new agent building tool for enterprises | TechCrunch

Google CEO Sundar Pichai opened the Google Cloud Next conference on Wednesday with a video in which he announced one of the company's biggest new products: Gemini Enterprise Agent Platform. Google's tool is intended for building and managing agents at scale. This is Google's answer to Amazon's Bedrock AgentCore and to Microsoft Foundry. Given that AI, and agents in particular, are furthest along for technical tasks like coding, and that the tech is so new to the enterprise that security remains a real concern, Google has made an interesting choice with this tool. Agent Platform is particularly geared at IT and technical teams. The business folks, meanwhile, are directed toward what Google calls its Gemini Enterprise app, introduced in the fall. They can work with agents built by IT or build their own for tasks like scheduling meetings, performing trigger-based processes, creating shortcuts for repetitive tasks or creating and editing files without needing to switch apps, Google says. Google also underscored that the underlying models these tools tap into include Google's own Gemini LLM and Nano Banana 2 image generator, as well as Anthropic's Claude. The company announced support for Claude Opus, Sonnet and Haiku -- in other words, flagship, reasoning, and lower-cost models, including the new Opus 4.7 that launched last week.

[2]

How Google just revamped Gemini Enterprise for the agentic era - here's what's new

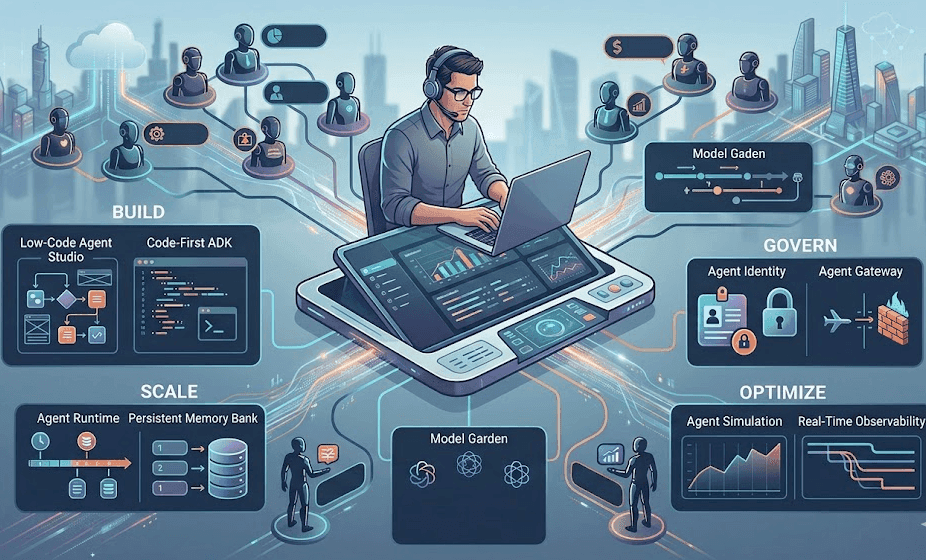

A new Agent Platform streamlines automated work and security. As companies use more agents in their workflows, managing them securely and efficiently becomes a primary challenge. Google just created a possible solution, wrapped in the same accessible interface that many teams are used to. On Wednesday at Google Cloud Next, the company's annual enterprise conference, Google released its new Gemini Enterprise Agent Platform for developers. Evolved from Vertex AI, Agent Platform "brings together the model selection, model building, and tuning services of Vertex AI that customers love, along with new features for agent integration, security, DevOps, orchestration, and more," Google Cloud CEO Thomas Kurian said in the announcement. The platform revamps the current Gemini Enterprise experience and offers over 200 models, including Gemini 3.1 Pro, Nano Banana 2, Gemma open models, and competitive models from Anthropic, such as its just-released Opus 4.7. Since Agent Platform is built on Vertex, Google noted that those services will now flow through Agent Platform exclusively. In the platform, according to Google, developers can design an agent's life cycle start to finish, from building the agents themselves to scaling and governing them. MCP support and an upgraded Agent Development Kit help developers maximize reasoning capabilities by structuring agents into sub-networks. That tiered approach should set agents up to handle complex tasks, Google said, adding that other features like faster runtime and Memory Bank help agents delegate to each other more efficiently and operate with more context for longer. "Gemini Enterprise is now an end-to-end system for the agentic era, built for agents that can execute complex, multi-step work processes," Google said in the announcement. 
The company also emphasized that it has baked security into the new platform through tools such as Agent Identity, which assigns each agent a cryptographic ID. If you'd rather not take any risks, however, you can use Google's new Agent Simulation tool to "stress-test your agents against real-world scenarios before they ship," the company said. Once developers are done building and testing, they can publish agents from the platform to the Gemini Enterprise app, where employees can run those agents or build their own with no-code or lower-code options like Google's Agent Studio and Agent Designer. A Google employee demonstrated how users can deploy multiple agents in the enterprise app at once to tackle an inventory or marketing challenge, as if they were a team of workers. In the demo, each individual agent handled a specific element of a multi-step project for a furniture company, using the organization's Workspace contents to pull relevant data and strategy points. Running multiple autonomous agents can pose a host of privacy and security risks for any organization, especially when non-developer employees use them. Google emphasized that its revamped Gemini Enterprise addresses this by simplifying guardrails and permissions before users can access agents. The company said it "provides the same level of oversight and auditability found in essential business applications like payroll or quarterly financial reporting." The Gemini Enterprise app sits atop Agent Platform, which Google said standardizes governance and security. "We provide a single control plane for governance in Agent Platform, so every employee can use and share agents with full IT visibility," the company added. "Both no-code and pro-code agents are managed through a consistent model for identity, security, and auditing." 
Google also announced Agentic Data Cloud, a new data architecture intended to help scale AI agents. Several new features let developers instantly query data without moving it out of AWS or Azure, leverage new data science tools across multiple surfaces, and enrich files with metadata to give agents more semantic context, among other capabilities. At the Workspace level, Google launched Workspace Intelligence, which uses Gemini reasoning to understand "complex semantic relationships within your Workspace apps (such as Docs, Slides, or Gmail) content, your active projects, your collaborators, and your organization's domain knowledge," the company wrote. While that may sound like what Gemini already does, Google framed Workspace Intelligence as an additional tool that Gemini will leverage when automating tasks such as slide generation and project prep. Google noted a few upgrades in the new feature, including proprietary infographics in Docs and advanced personalization tailored to a user's style. "Workspace Intelligence retrieves your relevant emails, chats, files, and information from the web to transform ideas into professionally formatted drafts that mimic your exact voice, brand, style, and company templates," Google said.

[3]

Google on why its all-in-one AI stack embraces competitors

Google Cloud's Andi Gutmans said that the company holds a structural advantage over its largest rivals in the race to win value from AI agents in the enterprise, arguing that no competitor currently combines cloud computing infrastructure, frontier AI models, and a data platform under one roof. "We're really the only provider that has the AI infrastructure, the model and the data platform," he said in response to a question from The Register during a briefing with reporters on the sidelines of Google Cloud Next. Gutmans, who runs Google Cloud's data business, including its analytics, transactional databases, storage and business intelligence products, said the integrated stack is critical to achieving value from AI. "If you think about AWS and Azure, they've got the infrastructure, they don't have the model," Gutmans said. "You look at the data providers, they have the data platform, but they've got to get the infrastructure and model from others. The AI model providers just do the AI model." Gutmans said as enterprises shift from AI tools that respond to human queries toward agents that act autonomously on behalf of employees, the significance of those gaps becomes more pronounced. He said that the transition puts pressure on the underlying data platform in ways that earlier architectures were not designed to handle, and that the economics of running agents at scale reward providers that control more of the stack. "If you ask 'How is this agentic data cloud really different because everyone is saying the same thing?' The answer is we are uniquely positioned to integrate these things very tightly which is now more important than ever as you go from human scale to agent scale because you're going to have to bend the price-performance curve or it's going to be too expensive." Gutmans said Google spent the past year and a half rethinking its data platform for the shift to agent scale. 
He said roughly 90 percent of enterprise data remains unstructured and has historically gone unused. He said the Knowledge Catalog announced at the show is designed to make that data available to agents without requiring armies of data engineers to prepare it manually. The moment that made the change possible was not a product decision but a model one. He said that, when Gemini 2.5 arrived, there was a tipping point in reasoning capability that forced Google to re-engineer every agent in its data portfolio. "We've completely re-engineered every single one of our agents in the last year. So even the conversation analytics agent, the data science agent, the data engineering agent -- we've had to be less prescriptive with the models. That's where the Knowledge Catalog and the MCPs help because they're so much better than reasoning around them. That is the big tipping point," he said. "If you ask a customer how conversation analytics was last year versus now, they'll tell you they couldn't use it last year. It worked for simple stuff." He said the company has roughly 80 data-related announcements at the conference this week, and that nearly every agent product in his portfolio has been rebuilt in the past year. "The models have gone so far," he said. "It's night and day." He said approaches that required months of manual ontology-building are no longer necessary. "A year ago, people would be like, 'Let me get Palantir and get 20 people and work for six months and build an ontology.' That's not how you would approach it anymore," he said. "If you really want to activate your whole data estate you can't do it with people." The Register asked Gutmans how Google navigates a market where it simultaneously competes with, and also partners with, many of the software providers. Google makes its own TPU AI accelerators, but partners with Nvidia on chips. It has a data analytics platform in BigQuery but also works with Databricks, Snowflake, and Informatica. 
GCP users can create, deploy and govern AI agents to carry out tasks across their digital estates, but GCP can also host those same capabilities from its partners at Salesforce and ServiceNow. "Our view, and I don't think it's different than any other hyperscaler, is we want to build the best platform," he said. Gutmans said that the integrated stack is a real and durable competitive advantage, particularly as security, governance and cost efficiency become harder to manage across fragmented systems. He said the same principle applies to the cross-cloud lakehouse Google announced this week, which he said allows customers to query data sitting in Amazon Web Services or Microsoft Azure with low latency. "Differentiated, but open," is how he described Google's approach. ®

[4]

Google says it has all the answers for AI agent sprawl

As biz agentic bot-wrangling intensifies, company says AI orchestration, security and infrastructure tools on the way. Google has overhauled its enterprise AI strategy in the wake of the agentic push across the biz landscape, rebranding and expanding its Vertex AI developer platform into what it now calls the Gemini Enterprise Agent Platform. It comes as the challenge facing businesses has shifted from building individual AI agents to managing hundreds or thousands of them at once - something Workday and others are trying to tackle too. "The early versions of AI models were really focused on answering questions that people had and assisting them with creative tasks. Now we're seeing as the models evolve people wanting to delegate tasks and sequences of tasks to agents," Google Cloud CEO Thomas Kurian told reporters during a press briefing. "And these agents then being able to turn around and use a computer, use all of GCP and Workspace as a tool." To meet the moment, Google rolled out infrastructure in the form of its eighth generation of TPU chips and security updates through its purchase of Wiz. Those announcements as well as the Gemini Enterprise Agent Platform are designed to give companies a single system for developing, deploying, governing, and monitoring AI agents across their organizations. Google says it can act as the connective layer between a company's data, its employees, and the growing fleet of autonomous agents that enterprises are beginning to rely on. "All the pieces are designed to do this," Kurian said in the briefing. "The security to protect these agents. Our data cloud to feed the agents context from within the system. Our AI infrastructure to optimize performance, scale and cost of how agents run. This year is the next evolution of where we see this AI technology going." 
He said organizations are choosing Google Cloud because of its ability to deliver "a comprehensive backbone for innovation" rather than "individual services that can be cobbled together." Gemini Enterprise Agent Platform is organized around four pillars: build, scale, govern, and optimize. On the build side, Google introduced Agent Studio, a low-code interface for creating agents using natural language, alongside an upgraded Agent Development Kit with a new graph-based framework for orchestrating multiple agents working together, the company said during a media prebriefing. It also provides an agent registry that gives organizations a central catalog of each internal agent and tool, the company said. Also inside the new platform is an agent marketplace that offers pre-built agents from partners including Atlassian, Oracle, ServiceNow, and Workday. The platform includes Agent Runtime, a feature that Google says delivers sub-second cold starts and gives users the ability to provision new agents in seconds. It also supports long-running agents -- autonomous processes that can operate for hours or days on complex business workflows like financial reconciliation or sales prospecting. A new Memory Bank feature gives agents persistent, long-term memory across sessions rather than starting from scratch each time, the company said. But it is the governance capabilities that may matter most to enterprise buyers who fear that AI tools may proliferate across their organizations with limited oversight. Agent Identity assigns every agent a unique cryptographic ID with defined authorization policies, creating an auditable trail of every action, Google said. Agent Gateway, meanwhile, acts as the police for agent ecosystems, enforcing security policies and protecting against prompt injection, tool poisoning, and data leakage. 
An Agent Anomaly Detection system flags suspicious behavior by analyzing the intent behind agent actions, and gives users the chance to stop an agent before it goes rogue. Then there are the ways Google has said its tools can be used to fine-tune agents, such as Agent Simulation for stress-testing them against synthetic interactions before deployment. Agent Evaluation scores live performance, while Agent Observability dashboards trace execution paths and diagnose problems in real time for rapid debugging, the cloud giant told reporters. Google said the Gemini Enterprise app -- the consumer-facing side of the platform -- is a place where non-technical employees can build and manage their own agents using Agent Designer. Users can create schedule- or trigger-based agents to automate multi-step processes, while an Inbox in Gemini Enterprise gives those users a central hub for monitoring agent activity with notifications sorted into categories like "Needs your input," "Errors," and "Completed." Google CEO Sundar Pichai said, based on internal adoption statistics, there is evidence of a shift toward agentic workflows. He said 75 percent of all code at Google is now AI-generated and approved by engineers, up from 50 percent last (northern hemisphere) fall. In a blog, he described a recent internal code migration completed by agents and engineers working together that "was completed six times faster than was possible a year ago with engineers alone." Its tools are surging in popularity, Google claims, with nearly 75 percent of its Cloud customers using AI products, while Gemini Enterprise saw 40 percent growth in paid monthly active users quarter over quarter in Q1, and Google's first-party models now process more than 16 billion tokens per minute via direct API use, up from 10 billion the prior quarter. There also appears to be a lot of token-maxxing among customers. 
Google said 330 Google Cloud customers each processed more than one trillion tokens, while 35 reached the 10-trillion-token milestone with its models. Within the press material for the show, several large customers provided testimonials about their own Gemini deployments. GE Appliances said it has more than 800 of Google's AI agents running across manufacturing, logistics, and supply chain operations. KPMG reported 90 percent Gemini Enterprise adoption among employees with more than 100 agents deployed in the first month. Tata Steel said it deployed over 300 specialized agents in nine months. Merck announced a partnership valued at up to $1 billion to build an agentic platform across its R&D, manufacturing, and commercial functions. The announcements land in an increasingly competitive market for enterprise AI platforms. Microsoft, Amazon Web Services, and Salesforce have all made a push into agent orchestration and management in recent months. Google's approach leans heavily on vertical integration, with the hope that designing chips, models, infrastructure, and application layers together produces better results than assembling components from different vendors. Google also announced a $750 million fund to support its partner ecosystem in building and deploying agentic AI, along with agreements with McKinsey, Deloitte, and other consulting firms that will receive early access to upcoming models from Google DeepMind. ®
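
The article describes Agent Identity as a unique cryptographic ID per agent that produces an auditable trail of every action. The sketch below illustrates only that general idea; it is not Google's implementation. The `AgentIdentity` class, the per-agent HMAC secret, and the record layout are all assumptions made for illustration, and a production system would more likely use asymmetric keys managed by an identity service.

```python
import hashlib
import hmac
import json
import secrets


class AgentIdentity:
    """Hypothetical per-agent identity that signs audit records (illustrative only)."""

    def __init__(self, agent_id: str) -> None:
        self.agent_id = agent_id
        # Assumption: a random symmetric secret stands in for the agent's key.
        self._key = secrets.token_bytes(32)

    def _digest(self, agent_id: str, action: dict) -> str:
        # Canonical JSON so the same record always hashes to the same value.
        body = json.dumps({"agent_id": agent_id, "action": action}, sort_keys=True)
        return hmac.new(self._key, body.encode(), hashlib.sha256).hexdigest()

    def sign(self, action: dict) -> dict:
        """Return an audit record binding this agent's ID to the action."""
        return {
            "agent_id": self.agent_id,
            "action": action,
            "signature": self._digest(self.agent_id, action),
        }

    def verify(self, record: dict) -> bool:
        """Check that a record was signed by this agent and not altered."""
        expected = self._digest(record["agent_id"], record["action"])
        return hmac.compare_digest(expected, record["signature"])


audit_log = []
agent = AgentIdentity("reconciliation-agent")
audit_log.append(agent.sign({"tool": "ledger.read", "rows": 120}))

# A record whose action was changed after signing should fail verification.
tampered = dict(audit_log[0], action={"tool": "ledger.write", "rows": 120})
print(agent.verify(audit_log[0]), agent.verify(tampered))
```

Here the first check succeeds and the second fails, which is the property an audit trail depends on: an action cannot be rewritten after the fact without invalidating its signature.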

[5]

Pichai opens Cloud Next 2026 with $240B backlog, 750M Gemini users, and a plan to turn Search into an agent manager

Summary: Sundar Pichai opened Cloud Next 2026 with Google Cloud at $70 billion in annual revenue, 48% growth, a $240 billion backlog that doubled in a year, and $175-185 billion in planned capital expenditure. The Gemini app has 750 million monthly users, AI Overviews reach two billion, and the Gemini API processed 85 billion requests in January alone. Pichai framed the conference around Search evolving from a retrieval engine into an "agent manager" and announced the Universal Commerce Protocol with Shopify, Target, and Walmart, while positioning Google's full-stack integration from custom silicon to consumer distribution as the advantage competitors cannot replicate. Sundar Pichai opened Google Cloud Next 2026 on Tuesday with a set of numbers that reframe the competitive dynamics of enterprise AI. Google Cloud is now generating more than $70 billion in annual revenue, growing at 48% year on year, with a backlog of $240 billion, up 55% from roughly $155 billion in the prior quarter and more than double the level of a year ago. The number of billion-dollar deals Google Cloud signed in 2025 exceeded the combined total of the three previous years. Existing customers are outpacing their own commitments by 30%, spending faster than they contracted. Google has committed $175 billion to $185 billion in capital expenditure for 2026, nearly doubling the $91.4 billion it spent last year. Pichai described the moment as "a fundamental rewiring of technology and an accelerant of human ingenuity." The money suggests he may not be exaggerating. The keynote, titled "The Agentic Cloud," was less a product launch than a thesis statement. Google is positioning itself not as a cloud provider that offers AI but as the operating system for what it calls the agentic enterprise: a model in which AI agents handle routine business operations autonomously, communicate with each other across platforms, and interact with the physical world through commerce, search, and real-time data. 
The pitch is that Google is the only company that controls every layer of that stack, from the custom silicon that runs inference, to the frontier models that power reasoning, to the cloud platform that hosts the agents, to the productivity suite and search engine through which three billion users interact with them. The Gemini app has reached 750 million monthly active users as of the fourth quarter of 2025, up 100 million from the previous quarter. AI Overviews, Google's AI-generated search summaries, reach two billion monthly users across more than 200 countries and drive 10% more search queries globally. AI Overviews now trigger on approximately 48% of all tracked queries, up from 31% in February 2025, a 58% increase in a year. The Gemini API processed 85 billion requests in January 2026, a 142% increase from 35 billion in March 2025. Eight million paid Gemini Enterprise seats are deployed across 2,800 companies. Thirteen million developers are building with Google's generative models. Gemini 3 Pro has had, in Pichai's words, "the fastest adoption of any model in our history." These are not cloud metrics. They are platform metrics. Google is arguing that its advantage over AWS, Azure, OpenAI, and Anthropic lies not in any single product but in the fact that it reaches more users, processes more queries, and touches more surfaces than any competitor. Search alone handles more than a billion shopping interactions per day. Workspace has more than three billion users. Android runs on billions of devices. The thesis is that when AI agents become the primary interface for work and commerce, the company with the largest existing surface area wins, because the agents need somewhere to run, something to connect to, and someone to serve. Pichai's most consequential framing may have come in a podcast appearance earlier this month: "A lot of what are just information-seeking queries will be agentic in Search. You'll be completing tasks. You'll have many threads running." 
He described Search evolving from a retrieval engine into an "agent manager," an orchestration layer that dispatches AI agents to complete tasks on a user's behalf rather than returning a list of links. The infrastructure for this is already being built. Google announced the Universal Commerce Protocol at NRF in January, an open-source standard for agentic commerce co-developed with Shopify, Etsy, Wayfair, Target, and Walmart. More than 20 partners have endorsed it, including Adyen, American Express, Best Buy, Flipkart, Macy's, Mastercard, Stripe, The Home Depot, Visa, and Zalando. UCP is built on REST and JSON-RPC transports with the Agent2Agent protocol, Model Context Protocol, and a new Agent Payments Protocol built in. It lets AI agents treat any participating store as a programmable service, with the merchant remaining the merchant of record. Pichai, who described himself as "an indecisive shopper," said he is "looking forward to the day when agents can help me get from discovery to purchase." The implications for the advertising industry are significant. If Search shifts from showing links that users click to dispatching agents that complete purchases, the entire cost-per-click model that funds Google's advertising business, and by extension the businesses of every company that advertises on Google, changes. Retailers are already deploying AI-powered shopping through Gemini, ChatGPT, and Copilot. The question is whether agentic commerce cannibalises Google's own advertising revenue or whether Google can capture a larger share of the transaction itself. UCP suggests Google is betting on the latter. The competitive positioning at Cloud Next was unusually direct. Thomas Kurian said competitors are "handing you the pieces, not the platform," leaving enterprise teams to integrate components themselves. 
The claim rests on Google's vertical integration: Ironwood TPUs and the forthcoming eighth-generation split into Broadcom-designed training chips and MediaTek-designed inference chips provide the silicon. Gemini 3 Pro, 3 Flash, and 3.1 Pro provide the models. The Gemini Enterprise Agent Platform, formerly Vertex AI, provides the developer tools and runtime. Workspace Studio provides the no-code agent builder. Search and Android provide the consumer distribution. No other company assembles all of these under one roof. The argument has a specific target: Microsoft Copilot, which despite being embedded in virtually every Fortune 500 company has struggled with adoption. Only 3.3% of Microsoft 365 users with Copilot access actually pay for it, and its accuracy net promoter score deteriorated to negative 24.1 by September 2025. Google's eight million paid Gemini Enterprise seats in roughly four months represents a faster trajectory, though from a much smaller base. GitHub has frozen new Copilot sign-ups because agentic coding sessions consume more compute than users pay for, illustrating why owning the silicon layer, as Google does, is not just a technical advantage but an economic one. The $175 billion to $185 billion in planned capital expenditure is the number that makes the rest of the strategy credible or alarming, depending on how the next two years unfold. Roughly 60% goes to servers and 40% to data centres and networking equipment. Combined with Microsoft, Meta, and Amazon, total big tech AI infrastructure spending is approaching $700 billion this year, a figure large enough to reshape energy markets and strain power grids. Pichai acknowledged on the fourth-quarter earnings call that the "top question is definitely around compute capacity and all the constraints, be it power, land, supply chain," and expects Google to remain supply-constrained through 2026. The backlog provides the justification. 
At $240 billion, it represents more than three years of current revenue contracted but not yet delivered. Thirteen product lines each generate more than $1 billion in annual revenue. The ServiceNow deal alone was worth $1.2 billion over five years. If the demand is real, and the backlog suggests it is, then the capital expenditure is not a gamble but an obligation: the cost of building the infrastructure to fulfil commitments already made. Google Cloud holds roughly 11% of the cloud infrastructure market, behind AWS at 31% and Azure at 25%. The gap has narrowed: Google grew at 48% in the fourth quarter of 2025, the fastest of the three, and achieved sustained profitability for the first time. But the gap remains. What Pichai presented at Cloud Next is not a plan to close that gap through incremental cloud sales. It is a plan to redefine what the cloud is, from a place where companies store data and run workloads to a platform where AI agents perform work, make decisions, complete purchases, and coordinate with each other across organisational boundaries. If that transition happens, the company that built the agents, the models, the chips, the protocols, and the distribution channels stands to capture a share of the value that the current market share numbers do not reflect. That is the bet. Cloud Next 2026 is the moment Google made it explicit.
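
Several of the article's claims are simple ratios of figures it quotes, and they can be checked directly. The snippet below is a quick sanity check using only numbers stated in the piece; the variable names and the `pct_change` helper are illustrative.

```python
# Figures quoted in the article, in billions of US dollars unless noted.
annual_revenue = 70.0
backlog = 240.0
capex_2025 = 91.4
capex_2026_low, capex_2026_high = 175.0, 185.0
api_requests_mar_2025 = 35.0  # billions of requests
api_requests_jan_2026 = 85.0  # billions of requests


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


backlog_years = backlog / annual_revenue
capex_multiple_low = capex_2026_low / capex_2025
capex_multiple_high = capex_2026_high / capex_2025
api_growth = pct_change(api_requests_mar_2025, api_requests_jan_2026)

print(f"Backlog covers {backlog_years:.1f} years of revenue")
print(f"2026 capex is {capex_multiple_low:.2f}x to {capex_multiple_high:.2f}x 2025 capex")
print(f"Gemini API requests grew {api_growth:.0f}%")
```

The backlog works out to about 3.4 years of revenue, matching "more than three years", and planned capex is roughly 1.9 to 2.0 times last year's, matching "nearly doubling". The API growth computes to about 143% from the rounded inputs, close to the article's 142%, which was presumably derived from unrounded request counts.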

[6]

Google Cloud Next 2026: AI agents, A2A protocol, Workspace Studio, and the full-stack bet against OpenAI and Anthropic

Summary: Google rebranded and consolidated its AI platform at Cloud Next 2026, renaming Vertex AI to the Gemini Enterprise Agent Platform and absorbing Agentspace into a unified Gemini Enterprise product. The announcements include Workspace Studio (no-code agent builder), 200+ models in the Model Garden including Anthropic Claude, partner agents from Box, Workday, Salesforce, and ServiceNow, ADK v1.0 stable releases across four languages, Project Mariner (web-browsing agent), managed MCP servers with Apigee as an API-to-agent bridge, and A2A protocol v1.0 in production at 150 organisations. Kurian framed the strategy as owning the full stack from chip to inbox while competitors "hand you the pieces, not the platform." Google used the opening keynote of Cloud Next 2026 on Tuesday to unveil what amounts to a full rebranding and consolidation of its AI platform around agents. Vertex AI is now the Gemini Enterprise Agent Platform. Google Agentspace, the employee-facing AI assistant, has been absorbed into a unified product called Gemini Enterprise. The announcements span a no-code agent builder for Google Workspace, a redesigned developer platform with more than 200 models including third-party options such as Anthropic's Claude, a web-browsing agent called Project Mariner, managed MCP servers across Google Cloud services, and the production-grade Agent2Agent protocol for cross-platform agent communication. Thomas Kurian, Google Cloud's chief executive, titled the keynote "The Agentic Cloud" and drew a deliberate contrast with competitors: other vendors, he said, are "handing you the pieces, not the platform," leaving teams to integrate components themselves. The timing is deliberate. OpenAI's Operator is scoring 87% on complex browser task benchmarks and the company has recruited Cognizant and CGI to push its Codex coding agent into enterprise software shops, with enterprise revenue now accounting for 40% of OpenAI's total. 
Anthropic has launched a marketplace for Claude-powered enterprise tools and its Model Context Protocol has reached 10,000 servers and 97 million monthly SDK downloads. Google is fighting from third position in cloud market share, behind AWS and Microsoft Azure, but exited the fourth quarter of 2025 with the fastest growth rate of the three at 50% year on year, and is betting that vertical integration, owning the model, the runtime, the silicon, and the distribution channel through Workspace, gives it an advantage neither competitor can replicate. Google Workspace Studio is the most consumer-facing announcement. It is a no-code platform that lets business users build and deploy AI agents across Gmail, Docs, Sheets, Drive, Meet, and Chat by describing automations in plain language. A user can type "every Friday, ping me to update my tracker" and Gemini creates the automation. Workspace Studio connects to third-party applications including Asana, Jira, Mailchimp, and Salesforce, and can call external APIs via webhooks or run custom logic through Apps Script. It is rolling out to Google Workspace business, enterprise, and education customers. The developer-facing platform, now called the Gemini Enterprise Agent Platform, received deeper upgrades. Agent Designer, a visual flow canvas for building agent workflows, is in preview. Agent Engine Sessions and Memory Bank, which give agents persistent context across interactions, are generally available. A new Agent Garden provides prebuilt agent solutions for customer service, data analysis, and creative tasks. A free tier via Express mode lowers the entry barrier. The Model Garden now hosts more than 200 models spanning Google's own Gemini and Gemma families, third-party models including Anthropic Claude, and open models such as Llama. 
Google also announced six new agents for data engineering and coding in BigQuery, including a data engineering agent that automates pipeline creation from natural language prompts and a code interpreter that translates queries into executable Python with visualisations. Partner agents from Box, Workday, Salesforce, ServiceNow, Dun and Bradstreet, and S&P Global are integrated into the platform, giving enterprise customers prebuilt capabilities for document intelligence, HR self-service, IT operations, and financial data. Project Mariner, Google DeepMind's web-browsing agent powered by Gemini 2.0, scores 83.5% on the WebVoyager benchmark and handles ten concurrent tasks on cloud-based virtual machines. It automates shopping, information retrieval, and form-filling, and is available to Google AI Ultra subscribers in the United States. The roadmap includes a visual builder called Mariner Studio in the second quarter, cross-device synchronisation in the third quarter, and an agent marketplace in the fourth quarter. The most strategically significant announcement may be the least visible to end users. Google's Agent2Agent (A2A) protocol, originally launched with more than 50 technology partners, has reached 150 organisations in production, not pilot, routing real tasks between agents built on different platforms. The protocol is now governed by the Linux Foundation's Agentic AI Foundation and has reached version 1.2, with signed agent cards using cryptographic signatures for domain verification. Microsoft, AWS, Salesforce, SAP, and ServiceNow are running A2A in production environments. A2A is designed to complement rather than compete with Anthropic's Model Context Protocol (MCP). MCP handles how an agent connects to tools and data sources. A2A handles how agents communicate with each other across organisational and platform boundaries. 
Google adopted MCP across its own services in December 2025, launching fully managed remote MCP servers for Google Maps, BigQuery, Compute Engine, and Kubernetes Engine, with Cloud Run, Cloud Storage, AlloyDB, Cloud SQL, Spanner, Looker, and Pub/Sub on the roadmap. Apigee, Google's API management platform, now functions as an MCP bridge, translating any standard API into a discoverable agent tool with existing security and governance controls. Google is simultaneously positioning A2A as the standard for the layer above: the orchestration of multiple agents from multiple vendors working together on a single task. The practical implication is that a Salesforce agent built on Agentforce can hand off a task to a Google agent running on Vertex AI, which can query a ServiceNow agent for IT asset data, all through A2A without any of the three systems needing to understand each other's internal architecture. Native A2A support is now built into Google's Agent Development Kit, LangGraph, CrewAI, LlamaIndex Agents, Semantic Kernel, and AutoGen. Google's open-source Agent Development Kit reached stable v1.0 releases across Python, Go, and Java, with TypeScript support also available. It is a code-first framework optimised for Gemini but model-agnostic and deployable to any container or Kubernetes environment. The security layer includes Model Armor for defence against indirect prompt injection, zero-trust architecture applied to decentralised agent systems, and access management through Google Cloud IAM with audit logging. OpenAI's own enterprise agent push through Codex and systems integrator partnerships has reached three million weekly users. Anthropic's enterprise marketplace for Claude-powered tools is building an ecosystem through partners including Snowflake. Microsoft's Copilot is embedded in virtually every Fortune 500 company. AWS has Bedrock with its own agents framework maturing rapidly. The enterprise AI agent market is not a two-horse race. 
It is a five-way contest in which each competitor has a structural advantage the others lack. OpenAI has the strongest consumer brand and the most advanced reasoning models. Anthropic has the most trusted safety positioning and the fastest-growing enterprise revenue. Microsoft has the deepest enterprise distribution through Office and Azure. AWS has the largest cloud infrastructure base and the strongest developer gravity. Google's argument is that it is the only company that owns all four layers of the stack: the custom silicon (Ironwood TPUs), the frontier models (Gemini), the cloud platform (now unified as the Gemini Enterprise Agent Platform), and the enterprise distribution channel (Workspace with more than three billion users across Google's productivity tools). Kurian framed the strategy explicitly: "If you want to adopt a technology successfully, you need to pick a few important projects and do them well, rather than spraying on a lot of little projects." No other competitor controls the full vertical from chip to application. Google's own AI Agent Trends report, published ahead of the conference, found that 89% of business teams are already using AI agents and the average organisation runs 12. The most common enterprise use cases are customer service at 49%, marketing at 46%, security operations at 46%, and IT support at 45%. Early customer deployments suggest the productivity claims are not purely theoretical: Danfoss, the Danish industrial manufacturer, automated 80% of transactional decisions in email-based order processing using Google's agents, reducing response times from 42 hours to near real-time. Suzano, a Brazilian pulp and paper company, built an agent with Gemini Pro that translates natural language into SQL queries, cutting query time by 95% for 50,000 employees. The agents run on Google's Gemini model family, with the Gemini 2.5 generation being retired in October in favour of the 3.x line. 
Gemini 3 Pro and Gemini 3 Flash, released in late 2025 and iterated through early 2026, provide the reasoning backbone. Gemini 3 Flash delivers a 15% improvement in overall accuracy over Gemini 2.5 Flash and is optimised for high-frequency agentic workflows and real-time processing. Gemini 3.1 Pro, the most advanced reasoning variant, is available in preview. A new experimental model, GLM 5, targets complex systems engineering and long-horizon agentic tasks through the Model Garden. Gemini 3.2 is expected to be formally announced during the conference, with an expanded context window beyond one million tokens and optimised parameter counts for reduced inference latency. Demis Hassabis, DeepMind's chief executive, stated in January that his team is "focusing on Gemini 4 this year." Google also recently launched Gemma 4 open models under Apache 2.0 licensing, built from the same research as Gemini 3 and providing an open-weight alternative for enterprise customers who need to run models on their own infrastructure. The infrastructure beneath the models is equally central to the pitch. Ironwood, Google's seventh-generation TPU announced the same day, delivers 4.6 petaFLOPS per chip and scales to 9,216-chip superpods producing 42.5 exaFLOPS. Anthropic has committed to up to one million Ironwood units. The custom silicon means Google can offer inference at costs that customers buying Nvidia GPUs at retail cannot match, which, in a market where inference is the dominant and growing expense, translates directly into pricing power for the agent services that run on top. Google Cloud holds roughly 11% of the cloud infrastructure market. AWS holds 31%. Azure holds 25%. The gap is significant and Cloud Next will not close it. But the agentic era, if it materialises at the scale Google is projecting, reshuffles the competitive dynamics in ways that favour a company with a vertically integrated stack over companies that assemble their AI capabilities from multiple vendors. 
Google is betting that the enterprise customer who adopts AI agents at scale will choose the platform where the model, the runtime, the silicon, the governance, and the productivity suite are all built by the same company and optimised to work together. It is a large bet. Cloud Next 2026 is where Google is asking enterprises to take it.
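As a quick sanity check on the Ironwood figures quoted earlier in this piece (4.6 petaFLOPS per chip, 9,216 chips per superpod, 42.5 exaFLOPS per superpod), the arithmetic can be sketched as follows. It assumes simple linear scaling with no interconnect overhead, and that 1 exaFLOPS equals 1,000 petaFLOPS; the variable names are illustrative.

```python
CHIPS_PER_SUPERPOD = 9_216
PETAFLOPS_PER_CHIP = 4.6   # rounded per-chip figure quoted in the article
QUOTED_EXAFLOPS = 42.5     # superpod figure quoted in the article

# Linear scaling: chips x per-chip throughput, converted from peta to exa.
computed_exaflops = CHIPS_PER_SUPERPOD * PETAFLOPS_PER_CHIP / 1_000

# Per-chip throughput implied by the quoted superpod total.
implied_petaflops_per_chip = QUOTED_EXAFLOPS * 1_000 / CHIPS_PER_SUPERPOD

# Relative difference between the computed and quoted superpod totals.
relative_gap = abs(computed_exaflops - QUOTED_EXAFLOPS) / QUOTED_EXAFLOPS
```

The product comes to roughly 42.4 exaFLOPS, within about a quarter of a percent of the quoted 42.5, so the two figures agree once the per-chip number is treated as rounded.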

[7]

Google and AWS split the AI agent stack between control and execution

The era of enterprises stitching together prompt chains and shadow agents is nearing its end as more options for orchestrating complex multi-agent systems emerge. As organizations move AI agents into production, the question remains: "how will we manage them?" Google and Amazon Web Services offer fundamentally different answers, illustrating a split in the AI stack. Google's approach is to run agentic management on the system layer, while AWS's harness method sets up in the execution layer. The debate over how to manage and control agents gained new energy this past month as competing companies released or updated their agent builder platforms -- Anthropic with the new Claude Managed Agents and OpenAI with enhancements to the Agents SDK -- giving developer teams options for managing agents. AWS, with new capabilities added to Bedrock AgentCore, is optimizing for velocity -- relying on harnesses to bring agents to production faster -- while still offering identity and tool management. Meanwhile, Google's Gemini Enterprise adopts a governance-focused approach using a Kubernetes-style control plane. Each method offers a glimpse into how agents move from short-burst task helpers to longer-running entities within a workflow. To understand where each company stands, here's what's actually new. Google released a new version of Gemini Enterprise, bringing its enterprise AI agent offerings -- Gemini Enterprise Platform and Gemini Enterprise Application -- under one umbrella. The company has rebranded Vertex AI as Gemini Enterprise Platform, though it insists that, aside from the name change and new features, it's still fundamentally the same interface. "We want to provide a platform and a front door for companies to have access to all the AI systems and tools that Google provides," Maryam Gholami, senior director, product management for Gemini Enterprise, told VentureBeat in an interview. 
"The way you can think about it is that the Gemini Enterprise Application is built on top of the Gemini Enterprise Agent Platform, and the security and governance tools are all provided for free as part of Gemini Enterprise Application subscription." On the other hand, AWS added a new managed agent harness to Bedrock Agentcore. The company said in a press release shared with VentureBeat that the harness "replaces upfront build with a config-based starting point powered by Strands Agents, AWS's open source agent framework." Users define what the agent does, the model it uses and the tools it calls, and AgentCore does the work to stitch all of that together to run the agent. The shift toward stateful, long-running autonomous agents has forced a rethink of how AI systems behave. As agents move from short-lived tasks to long-running workflows, a new class of failure is emerging: state drift. As agents continue operating, they accumulate state -- memory, too, responses and evolving context. Over time, that state becomes outdated. Data sources change, or tools can return conflicting responses. But the agent becomes more vulnerable to inconsistencies and becomes less truthful. Agent reliability becomes a systems problem, and managing that drift may need more than faster execution; it may require visibility and control. It's this failure point that platforms like Gemini Enterprise and AgentCore try to prevent. Though this shift is already happening, Gholami admitted that customers will dictate how they want to run and control any long-running agent. "We are going to learn a lot from customers where they would be using long-running agents, where they just assign a task to these autonomous agents to just go ahead and do," Gholami said. "Of course, there are tricks and balances to get right and the agent may come back and ask for more input." What's becoming increasingly clear is that the AI stack is separating into distinct layers, solving different problems. 
AWS and, to a certain extent, Anthropic and OpenAI, optimize for faster deployment. Claude Managed Agents abstracts much of the backend work for standing up an agent, while the Agents SDK now includes support for sandboxes and a ready-made harness. These approaches aim to lower the barrier to getting agents up and running. Google offers a centralized control panel to manage identity, enforce policies and monitor long-running behaviors. Enterprises likely need both. As some practitioners see it, their businesses have to have a serious conversation on how much risk they are willing to take. "The main takeaway for enterprise technology leaders considering these technologies at the moment may be formulated this way: while the agent harness vs. runtime question is often perceived as build vs. buy, this is primarily a matter of risk management. If you can afford to run your agents through a third-party runtime because they do not affect your revenue streams, that is okay. On the contrary, in the context of more critical processes, the latter option will be the only one to consider from a business perspective," Rafael Sarim Oezdemir, head of growth at EZContacts, told VentureBeat in an email. Iterating quickly lets teams experiment and discover what agents can do, while centralized control adds a layer of trust. What enterprises need is to ensure they are not locked into systems designed purely for a single way of executing agents.
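The state-drift failure mode described earlier in this piece, where an agent's accumulated memory and tool responses go out of date, can be made concrete with a small staleness check. This is a minimal sketch under stated assumptions: `StateEntry`, `stale_keys`, and the age threshold are hypothetical names chosen for illustration, and neither Gemini Enterprise nor AgentCore is known to work this way.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class StateEntry:
    """One remembered value plus the time it was fetched (hypothetical)."""
    value: str
    fetched_at: float  # seconds on any consistent clock


def stale_keys(state: Dict[str, StateEntry], now: float, max_age: float) -> List[str]:
    """Return, in sorted order, the keys whose values are older than max_age seconds."""
    return sorted(k for k, e in state.items() if now - e.fetched_at > max_age)


# A long-running agent's accumulated state: one old entry, one fresh entry.
state = {
    "inventory": StateEntry("42 units", fetched_at=0.0),
    "price": StateEntry("9.99", fetched_at=90.0),
}

# At t=100s with a 60s freshness budget, only "inventory" needs a refresh.
to_refresh = stale_keys(state, now=100.0, max_age=60.0)
```

A check like this only surfaces drift. Deciding whether to re-fetch, pause, or escalate to a human is the policy question that the control-plane and harness approaches answer differently.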

[8]

Gemini Enterprise Agent Platform lets you build, govern, and optimize your agents.

Gemini Enterprise Agent Platform is our new developer platform that has everything your technical teams need to build, scale, govern and optimize agents. Think of it as a one-stop-shop for all of your autonomous agents, built on top of our leading infrastructure and integrated with our data and security capabilities. This new platform, announced at Google Cloud Next '26, brings the model building and tuning services of Vertex AI together with new features for agent integration, security, DevOps and more. Agent Platform is designed to flex to your team's unique needs and provides access to Gemini 3.1 Pro, Gemini 3.1 Flash Image (Nano Banana 2) and Lyria 3. It also supports Anthropic's Claude Opus, Sonnet and Haiku. Plus, Agent Platform integrates with the Gemini Enterprise app, which acts as the front door for AI for every employee. Learn more about Gemini Enterprise Agent Platform on the Cloud blog.

[9]

Agentic AI blueprint key focus for Genpact and Google - SiliconANGLE

You can't prompt your way out of complexity: Why enterprise AI is turning to process intelligence

As enterprises navigate the complexities of scaling AI initiatives, the agentic AI blueprint for success lies in combining deep process intelligence with powerful cloud platforms, enabling organizations such as Genpact Ltd. to transform finance and operations workflows through reimagined processes and effective partner ecosystems. That convergence defines the moment the industry finds itself in as a wave of agentic AI announcements proliferates. The technology is maturing quickly; the partner relationships that determine who actually captures value are maturing too, according to Nidhi Srivastava, senior vice president and head of digital and cloud at Genpact. "The partner ecosystem is now the new village wherein we work with the vision of the client," Srivastava said. "We've been working with business processes for our clients for many, many years. We have a very deep understanding of what the last mile looks like for our clients. When you combine that with the power of a platform like Google Cloud, it becomes a motif for success." Srivastava and Pallab Deb, managing director of global partner go-to-market practice and product engagement at Google Cloud LLC, spoke with theCUBE's John Furrier and co-host Alison Kosik at Google Cloud Next, during an exclusive broadcast on theCUBE, SiliconANGLE Media's livestreaming studio. They discussed the agentic AI blueprint for success and why process intelligence is surging as the decisive enterprise advantage. One of the clearest signals of agentic AI's maturity is where early enterprise momentum is concentrating. Finance transformation -- long considered a back-office function -- has emerged as a primary beachhead for agentic deployments, driven by its deterministic nature and clear return on investment. 
Genpact moved early on this signal, launching its agentic AP Suite to automate accounts payable workflows end to end as part of its broader Service-as-Agentic-Solutions portfolio, Srivastava noted. "I think we are clearly at the point where we should solve the real problems," Srivastava said. "Playing at the edge and getting used to it and feeling comfortable -- we are past that point. Finance transformation has kind of bubbled up to the top." The return of process expertise as a first-class discipline echoes a pattern traceable back to the ERP era, according to Deb. AI is bringing functional depth back to the foreground after two decades in which pure technology focus -- mobile, data lakes, cloud -- dominated enterprise investment, he explained. The engineering gap that separates a polished demo from a production deployment on a mission-critical SAP environment is where that expertise now earns its keep. "You think you can prompt your way out of complexity? Don't even try," Deb said. "The demos are going to show you that you can prompt and get wonderful things [to] happen. Try doing that on top of a fairly custom SAP installation that manages your supply chain, where things are mission-critical. You're probably going to need hard engineering work. I think that's the gap between what's promised versus what it takes to get through the journey." Google Cloud's $750 million investment in its partner ecosystem underlines the strategic weight both companies are placing on the agentic AI blueprint for success. But throwing AI at an unreformed process yields only marginal gains -- the prerequisite is reimagining workflows for AI from the ground up, including value stream mapping and building agents that mirror the personas of the people they are designed to support, according to Srivastava. "It's super important to reimagine the process for AI, because throwing AI on an old process only gives you marginal improvement," she said. 
"That's also where the business case of AI sometimes suffers, because people are just instrumenting AI into an existing process which was not designed for AI. Spending enough time reimagining the process is critical to the scale when you're looking for success at scale." Here's the complete video interview, part of SiliconANGLE's and theCUBE's coverage of Google Cloud Next:

[10]

Next '26 - when you don't have enterprise legacy, you build it. Google Cloud's playbook for winning agentic AI trust.

On the first day of Next '26, I asked whether Google Cloud's push to own the enterprise AI governance layer was a realistic competitive proposition - or whether enterprise buyer legacy and a competitive landscape that's seeing ServiceNow, Salesforce, Workday and Microsoft all making the same claim (amongst others) - would make this difficult. Having spent two days here in Las Vegas with the company - sitting in on the keynotes, product demos, interviewing customers, speaking with CEO Thomas Kurian and other Google Cloud executives - I think I've got a better understanding of Google Cloud's playbook for winning agentic AI trust in the enterprise. The competitive questions I raised on Tuesday haven't disappeared, but I think Google Cloud's official position of 'being Switzerland' in the enterprise AI value capture is a little more nuanced than it's fully letting on. Google Cloud's strategy, I think, is to build the enterprise trust it sometimes lacks - by embedding with customers, co-investing in outcomes, and proving value on the ground. Google Cloud took to the main stage this week to talk up the benefits of its full stack - the 'Android of the agentic era', as Kurian put it. The core argument - that delivering serious agentic AI by stitching together fragmented models, disconnected silicon and separate governance tools is much harder than doing so via an integrated stack - has a real logic to it. The Gemini Enterprise Agent Platform, with Agent Identity, Agent Gateway and OTel-based observability that can aggregate traces from third-party agents, is a coherent governance architecture. Combine this with the Knowledge Catalog, the Cross-Cloud Lakehouse, and Bring Your Own MCP support - these represent a serious attempt to build a platform that is genuinely open at the edges while being meaningfully differentiated at the center. The real test of that argument is whether it holds up in practice. 
I spoke with Matt Renner, Google Cloud's Chief Revenue Officer, where he framed Google's competitive positioning as being a neutral partner. He said: Our approach is to be the platform - the Switzerland - that orchestrates across all of that, and creates interoperability so you don't have to throw away the agents you've already built. The reality is, if you're going to have one place to orchestrate your agent strategy, it should probably be independent of your application strategy. That's where we're seeing a lot of traction on Gemini Enterprise. His assessment of how the major SaaS vendors are faring with the same ambition was: They've tried this with data. They're trying it now with agents. Mixed success at best. Whether you find Google's claim to Switzerland more credible than a ServiceNow or Salesforce making the equivalent claim is a reasonable debate. But the structural argument - that the orchestration layer should probably sit independent of any individual application vendor - has logic behind it, and 1,500 enterprise Gemini Enterprise customers suggest it is resonating with buyers. Two customers I interviewed this week put some meat on the bones of how this is playing out in reality for enterprise buyers. They illustrate what the full stack proposition looks like when it is actually working - and at a level of ambition well beyond the efficiency plays that dominate most enterprise AI conversations. At Merck, the use cases span drug discovery, clinical development, manufacturing and commercial operations (I'll be writing up this full case study in the coming days). Dave Williams, Chief Information and Digital Officer at the company, described what agentic AI looks like in the context of in-silico drug development: When you start to think about how agents and robotics can play a role - where the scientists, rather than manually running all those filters, are more guiding the process - you start to see really significant productivity improvements. 
At Citi Wealth, the Sky conversational avatar is an external-facing customer engagement platform positioned explicitly as a revenue play, not a cost reduction exercise. Joseph Bonanno, Head of Wealth Intelligence at the organization, said that Citi is looking to agentic to drive revenue, not just drive out cost: Our clients actually have about five trillion dollars away from us. There lies the opportunity. If I can upsell, cross-sell and retain clients, that is far more important than finding efficiencies here and there. Everybody's doing efficiencies. This is about playing offence. Both cases involve genuinely cross-organizational AI deployments that span functions and workflows in ways earlier technology waves could not. Rohit Bhat, GM and Managing Director of Financial Services at Google Cloud, outlined why Citi was able to pursue such a valuable client-facing use case: One of the big advantages we've had with Citi has been the full-stack advantage on our platform. That concept gets a bit lost in the noise, but it matters: if part of your company is devoted to understanding how to build models and capabilities, informing the team thinking about how data systems need to interact within those models, informing the team building the software layer on which you build client experiences -- and you have those design and engineering teams under one roof -- you can have a much more defined and informed strategy on governance, controls, policy and risk systems. Neither Merck nor Citi arrived at these use cases by accident. Both had spent years doing the foundational data work before any of this was possible. And they're now looking at Google Cloud's full stack to provide them a platform for future agentic work. I'll come back to this - it's important. 
In a private press roundtable on the second day, I put the governance contest directly to Kurian: given that multiple vendors are making essentially the same pitch, how are enterprise buyers deciding which governance platform to trust - and do you think we end up with one layer or multiple competing ones? His answer covered the technical architecture carefully - agent identity, the zero trust principle applied to agents, the OTel logging standard enabling cross-platform visibility. On that last point, he said: We're trying to provide customers with a central place they can monitor, manage, and govern all their agents, whether built by us or built on another platform and exposed to us. What he did not address was the enterprise political dimension of that, nor would he be drawn on whether one platform would be chosen to govern across all areas of the enterprise. ServiceNow would describe its agents as the ones governing others. Salesforce built Agentforce specifically to own the customer-facing governance layer. Other vendors are making similar arguments and they're not going to quietly accept Google's Agent Gateway as the authoritative monitoring surface. The skirting around the question was itself informative...Switzerland. Renner was, in this respect, a bit more candid. He acknowledged that the realistic near-term outcome is not one platform replacing another but customers using multiple - with Google competing hard for the cross-functional orchestration layer while ISVs retain their domain-specific positions. He said: My honest view is that customers aren't going to do without their ISVs -- they're just going to use both us and the ISVs, rather than choosing one or the other. However, despite all of this, how do you get from where most enterprises actually are - messy legacy estates, siloed data, change-averse workforces - to the deployments Merck and Citi are describing? 
Simply put, even before Google Cloud entered with agentic AI, huge amounts of work had been done by the organizations already. Merck's Williams offered a grounded CIO-level answer - when asked what advice he would give peers in regulated industries, he came back to three things: The foundation - the data. Without that, this isn't going to work. Second, the human side - change management. And third, focus on the right things. On change management specifically, he was unsparing - calling it "probably the longest pole in the tent." And the emotional component of that challenge came through elsewhere in our conversation: Everybody in my seat is excited about agentic AI on one hand, and also a bit terrified on the other. If you don't think about this proactively with the right tools, you could end up with thousands of people building agents that you don't know are properly governed - from a cyber standpoint, a quality standpoint, a risk standpoint. Again, this is a data readiness, change management and organizational capability problem. And both Merck and Citi have been building towards agents for a while. Merck has been running a cloud acceleration programme for five years, has consolidated its commercial estate into a common data model, and has a single manufacturing data model across all its shop floors. Citi spent years consolidating infrastructure that Bonanno described as looking like "seven different companies" before building the One Wealth platform that makes the agentic avatar Sky possible. Google Cloud met both organizations at a point of readiness that most enterprises have not yet reached - and this is important. This context on where customers are is important, as it frames what Google Cloud is attempting to do going forward. What is genuinely interesting about Google Cloud's approach - and what I did not fully appreciate earlier this week - is how deliberate the strategy is for supporting customers towards agentic AI (and consequently, governance). 
It is not waiting for enterprises to become ready. It is actively supporting them to get from A to B. Williams described the Merck partnership in terms that go well beyond a technology purchase. Google is, he said, co-investing "to solve the data, process, and people upskilling challenge - not just selling the software." The speed at which the deal came together is also telling: It was only two and a half months ago that I emailed [Google Cloud] and said we want to take this to the next level. We got together, set ambitious goals, said we want to get this done before the end of the first quarter - not actually thinking that was ever going to happen. But it did. And not only that, the teams already have a roadmap and deployment plan for Gemini Enterprise across all our employees. We've been impressed with how quickly everyone is moving. The $750 million partner fund announced this week supports this logic too - embedding forward-deployed engineers alongside Accenture, Deloitte, PwC and the major GSIs to work directly inside customer environments, specifically tasked with resolving data readiness issues and integration complexities. The McKinsey Google Transformation Group, also announced at the event, takes this further: joint teams, co-funded value assessments, outcome-based commercial models, with McKinsey QuantumBlack technologists working alongside Google's FDEs on client use cases. Google Cloud's Renner outlined the underlying philosophy: Our strategy is not to bundle. Our strategy is to have successful projects. Show up with the right technical resources, work together, and make it work. He also noted that the POC-to-production success rate for enterprise AI projects three years ago was around ten per cent. It is significantly higher now, he said, driven by better upfront qualification, clearer governance structures and more experienced GSI partners. That trajectory is the argument. 
This is a company that, without the decades of installed base that ServiceNow or Salesforce can draw on, is essentially earning strategic relevance by co-investing in the most ambitious use cases customers have - demonstrating value at a depth the incumbent SaaS vendors are not currently positioned to match, and betting that the relationships built in that process compound into the strategic partnerships of the next decade. What I am taking away from Las Vegas is that Google Cloud is not simply selling a platform and hoping enterprises find their way to it. It is walking into customer environments and doing the hard organizational and data work alongside them. That is resource-intensive, and the $750 million partner fund and the FDE model are clearly the answer to scaling it beyond the Mercks and the Citis of the world. The question that this week did not answer - and that the next few years will - is whether Google Cloud can extend that model to the enterprises that do not have Merck's data foundations or Citi's transformation appetite, and do it before the incumbents find their footing in the governance layer Google is working hard to claim as its own. On the evidence of this week, it is a serious contender. Whether it is the winner is a different question. Google Cloud may be positioning itself as Switzerland, but if you take its strategy as a whole - its governance claim, the full stack offering, and its heavy customer co-investment - the company's behaviour is not neutral in its entirety. It's strategic, it's aimed at winning enterprise trust in the agentic AI world, and it's smart.

[11]

Real-time marketing now reality with data and agentic AI - SiliconANGLE

Agentic AI gives CPG brands a real-time edge on marketing spend and product testing

Consumer packaged goods companies face mounting pressure to grow profitably while operating on razor-thin margins -- and agentic AI is emerging as the tool that can close the gap between fragmented data and real-time marketing outcomes. The sector has long relied on weeks-long campaign measurement cycles, disconnected consumer data and costly physical test markets to guide brand decisions. Google Cloud LLC's Agentic Data Cloud, unveiled at Google Cloud Next 2026, signals a shift toward giving agents the contextual intelligence needed to act on enterprise data without hindrance -- and its effects are already being felt in CPG, according to Sonia Fife, global leader of consumer packaged goods, strategic industries, at Google Cloud. "On the revenue side we're seeing agents really be able to drive that transformation, looking around corners to understand what trends are coming," Fife said. "Then marketing is helping to take those trends -- transform them into resonant concepts and campaigns." Fife and Jeff Follestad, senior manager of GCP partner sales at EPAM Systems Inc., spoke with theCUBE's John Furrier and co-host Alison Kosik at Google Cloud Next, during an exclusive broadcast on theCUBE, SiliconANGLE Media's livestreaming studio. They discussed how agentic AI is transforming CPG real-time marketing workflows, enabling media optimization and accelerating time to market through synthetic consumer data. One of the most concrete gains from agentic-powered real-time marketing in CPG is the collapse of traditional campaign measurement timelines. Historically, CPG organizations would run a campaign, then wait four to eight weeks to analyze results, adjust media mix and reallocate spend -- a process that allowed markets to shift well before brands could respond, Follestad noted. 
"In this new world, in literally near real time, that dial or that adjustment of my marketing spend assortment can be [updated]," Follestad said. "If we see customer sentiment shift [or] there's some event that triggers more effectiveness on one channel than another, you can optimize that to great efficiency." Agentic AI is also compressing the cost and time required to validate new product ideas. Rather than organizing physical consumer panels that can take months and significant budget to execute, brands can now deploy synthetic consumer audiences in minutes, Follestad explained. That velocity allows companies to fail fast, redirect resources and prioritize concepts with stronger market signals before committing to production. "If you've developed something in a vacuum and you test it quickly, you can retreat from that product and focus on something else, whereas you may have taken six months to get to that point in the past," Follestad said. "It's in an industry very sensitive to margins and running very lean." The frontier for CPG extends further into the transition from the physical shelf to the invisible shelf -- where agentic commerce protocols enable AI agents to discover, evaluate and purchase products on behalf of consumers, according to Fife. Brands competing in this environment must treat their product information management and digital asset management data as agent-ready infrastructure from day one. "The objective of agentic commerce is to make the experience frictionless for the customer, for the consumer," Fife said. "Whether that's shopping agents that will become a part of the repertoire for many D2C brands, or even brand agents that are driving a whole level of experience -- this is really a time of transformation, but ... it begins, first of all, as always with the data." Here's the complete video interview, part of SiliconANGLE's and theCUBE's coverage of Google Cloud Next:

[12]

Next '26 - control the agents, control the enterprise? Google Cloud enters the battle for enterprise AI governance