Tesla completes AI5 chip design with 40X performance leap, but deployment still years away

6 Sources

[1]

Elon Musk demonstrates first sample of Tesla AI5 processor, accidentally thanks TSC rather than TSMC -- claims 40X performance boost over the predecessor

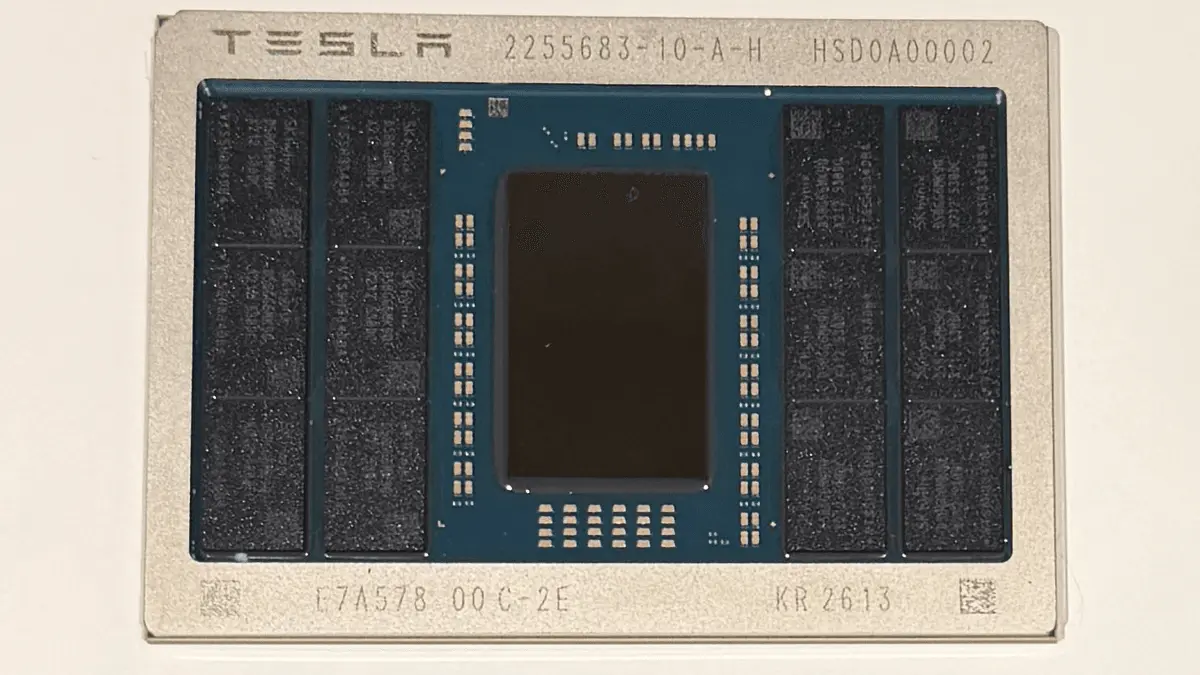

Elon Musk on Wednesday showcased an image of one of the first samples of Tesla's AI5 hardware that will be used to drive AI applications in Tesla's cars, Optimus robots, and potentially xAI data centers. The AI5 processor is about half of the reticle size, uses industry-standard memory, and yet can be up to 40X faster than AI4 in certain scenarios, according to Elon Musk.

"Congrats to the Tesla_AI chip design team on taping out AI5," Elon Musk wrote in an X post. "AI6, Dojo 3 and other exciting chips in [the works]. [...] And thank you to @TaiwanSemi_TSC and Samsung for your support in bringing this chip to production! It will be one of most produced AI chips ever."

The Tesla AI5 processor module features a fairly small ASIC die (about half the reticle size, according to Musk's previous comments) surrounded by 12 memory packages from SK hynix (most likely GDDR6/7). The module uses an organic substrate, and the memory packages are marked like industry-standard DRAM products. While we do not know how wide AI5's memory interface is, 12 memory packages clearly indicate that we are dealing with a fairly wide memory I/O. If we are indeed dealing with 12 GDDR6/7 memory ICs, then the Tesla AI5 ASIC has a 384-bit memory interface. Depending on the memory type used, the Tesla AI5 can offer memory bandwidth between 768 GB/s and 1.536 TB/s.

Exact performance of the AI5 has not been disclosed, though Musk claims significant -- up to 40X -- improvements over AI4 in select cases. "I think the Tesla chip team is really designing an incredible chip here: by some metrics the AI5 chip will be 40 times better than the AI4 chip," Elon Musk said during Tesla's Q3 2025 earnings call. "As a result of [outdated hardware] deletions, we can actually fit AI5 [on a] half [of a] reticle with good margin for the traces from the memory to the Tesla TRIP accelerators, the Arm CPU cores, and PCIe blocks."

Although Musk claims that the AI5 has just been 'taped out' (which means that the final chip design has been sent to a photomask house), he actually shows an already fabricated processor with a 'KR 2613' marking on it, which suggests that the ASIC was packaged in the 13th week of 2026. Musk also thanks Taiwan Semiconductor (TSC) and Samsung for bringing the chip to production, though we are not sure that the producer of passive components has anything to do with bringing the AI5 processor to production. More likely, Musk meant Taiwan Semiconductor Manufacturing Co., better known as TSMC.

Previously, the head of Tesla, SpaceX, and xAI said that the AI5 would be made by both TSMC and Samsung Foundry, though we do not know which contract chipmaker fabbed the current sample. Assuming that Tesla got the chip in March or early April and no re-spin is required, it is reasonable to expect the company to deploy the processor sometime in 2027.

Perhaps the most intriguing part of the announcement is that Tesla has apparently not given up on the Dojo system-on-wafer (SoW) processor for AI training, and the Dojo 3 processor is in the works. It was reported last August that the Dojo wafer-level processor initiative had been abandoned and the team behind it dismantled. Indeed, Peter Bannon, the head of the Dojo project at Tesla, retired last August, according to his LinkedIn page.

Elon Musk said in July that the AI6 and Dojo 3 could feature a converged architecture (a converged ISA, we would speculate), which would enable the company to unify its software stack and could potentially allow the company to unify its hardware stack as well.

"I think about Dojo 3 and the AI6 as the first [converged architecture designs]," Musk said in a July 23 earnings call (via Investing.com). "It seems like intuitively, we want to try to find convergence there where it is basically the same chip that is used where we use, say, two of them in a car or an Optimus and maybe a larger number on a [server] board, a kind of 5 - 12 on a board or something like that. [...] That sort of seems like intuitively the sensible way to go."

[2]

Tesla taped out AI5 chip, Musk says -- nearly 2 years behind schedule

Tesla has taped out its next-generation AI5 self-driving chip, CEO Elon Musk said overnight, a key milestone that sends the final design to the foundry for fabrication. The tape-out comes nearly two years after Tesla originally promised AI5 would be in vehicles, and it still leaves volume production more than a year away.

In a post on X at 3:21 AM today, Musk wrote: "Congrats to the @Tesla_AI chip design team on taping out AI5! AI6, Dojo3 & other exciting chips in work." He also shared a picture of the chip.

A "tape-out" is the point at which a chip's design is locked and sent to a semiconductor foundry to begin fabrication. It is a meaningful milestone -- but it is not the finish line. After tape-out, the chip still has to be manufactured, tested in silicon, validated, and ramped to volume production. For an automotive-grade AI accelerator, that process typically takes 12 to 18 months.

Tesla is reportedly using TSMC for AI5 production, while Samsung's 2nm line is tied to the follow-on AI6 chip. We reported last month that AI6 has already slipped roughly six months because of Samsung 2nm yield issues, pushing mass production to Q4 2027 at the earliest.

Today's tape-out is worth putting next to Tesla's own earlier promises on this chip: Musk has previously described AI5 as up to 10x more powerful than AI4 and floated a 9-month design cycle for subsequent generations -- a cadence that, as we've noted, is unheard of for a major architectural overhaul in the semiconductor industry.

Tesla's own "AI4.5" stopgap computer, quietly introduced in 2026 Model Y vehicles late last year, exists precisely because of this delay. The company needed more compute to keep feeding larger FSD neural networks while AI5 slipped.

The practical implication of today's milestone is straightforward: AI5 is real and moving, but it will not be in a meaningful number of vehicles this year. Tesla has already confirmed the Cybercab -- scheduled for production in Q2 2026 -- will launch on AI4, the same hardware currently sold in Model Y, Model 3, Model S, Model X, and Cybertruck. Tesla has said it needs "several hundred thousand completed AI5 boards line side" before it can switch production lines, and that volume isn't expected until mid-2027. AI6, Dojo3, and whatever other chips Musk is alluding to remain on paper.

Tesla's AI hardware progress is, honestly, bittersweet. On one hand, we want to see Tesla's self-driving compute get better. FSD is clearly compute-bound on bigger neural nets, and more powerful silicon is part of the path forward. A taped-out AI5 is real progress after years of slipping timelines, and it's good to see the chip finally heading to the fab.

On the other hand, every new Tesla chip generation carries the same problem: it makes the last one obsolete before Tesla has delivered what it sold on that hardware. Tesla sold millions of cars with HW3 and HW4 on the promise of unsupervised self-driving. HW3, by Musk's own admission last year, can't do it. HW4 is running V14, and it still isn't unsupervised. AI4.5 is already in new Model Ys. Now AI5 is taped out, and AI6 and Dojo3 are coming.

The pattern is hard to miss: Tesla keeps moving the goalpost to the next chip instead of delivering what was promised and sold on the current one. Every tape-out announcement is, in that sense, also a quiet confirmation that a lot of existing customers are not getting the product they paid for on the hardware they own. That's the part Musk never congratulates the team for.

[3]

Tesla AI5 AI chip taped out in partnership with Samsung and TSMC

TL;DR: Tesla has finalized the design of its AI5 chip for Full Self-Driving, matching NVIDIA Hopper's performance and potentially rivaling Blackwell with dual setups. Produced at Samsung and TSMC facilities, AI5 features up to 192GB LPDDR5X memory and aims for mass production by late 2026 or early 2027. Tesla CEO Elon Musk took to X (formerly Twitter) to announce that Tesla has completed the design of its AI5 chip for Full Self-Driving (FSD). Musk congratulated the Tesla AI team on the successful tape-out of AI5 and also shared an image of the full chip. We covered the rumors surrounding AI5 production earlier this year, and now it seems the chip is on track for mass production. The AI5 chip is an AI-focused processor that follows HW4, but Tesla has changed the nomenclature to adopt the "AI" naming scheme going forward. This chip will be employed in Tesla's next-gen Full Self-Driving (FSD) suite. Musk claims that AI5 will be a very capable solution, with performance equivalent to NVIDIA's Hopper architecture. He further adds that a dual AI5 SoC setup can rival NVIDIA Blackwell in performance. In the image of the chip Musk posted, we can see the DRAM chips sourced from SK Hynix. There are two rows of SK Hynix LPDDR5X memory on each side of the chip, with each row having three modules for a total of 12. If these are 16GB modules, that translates to 192GB of LPDDR5X memory for each AI5 SoC. Musk also thanked Samsung and TSMC for their support in production, which is apt, since the chips will be produced at Samsung's plant in Taylor, Texas, and at TSMC's plant in Arizona. This two-pronged production strategy is put in place to diversify the supply chain. Official information on the production timeline remains unknown, but industry experts are eyeing high-volume production in late 2026 or early 2027. As confirmed by Tesla's CEO, work is already underway on the next-generation AI6 chip and Dojo3. 
For Tesla, the AI5 tape-out was the main milestone, as Musk claimed it was almost "existential" for the company. Now, it can be sent to Samsung and TSMC for production. While full specifications of the chip are not yet known, Elon's previous discussions point towards a 40x improvement over the HW4 chip, along with new features.
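The capacity estimate above is simple multiplication, and it can be checked against the 144 GB figure that another report in this roundup cites. The sketch below is illustrative arithmetic only: the package count comes from the photo, while the per-package capacities are assumptions rather than confirmed Tesla specifications, and the function names are hypothetical.

```python
# Illustrative arithmetic only. The 12-package count comes from the photo;
# per-package capacities are assumptions, not confirmed specifications.
PACKAGES = 12


def total_capacity_gb(per_package_gb: int, packages: int = PACKAGES) -> int:
    """Total memory if every package holds per_package_gb gigabytes."""
    return per_package_gb * packages


def implied_per_package_gb(total_gb: int, packages: int = PACKAGES) -> float:
    """Per-package capacity implied by a reported total."""
    return total_gb / packages


if __name__ == "__main__":
    # 16 GB packages give the 192 GB figure quoted above.
    print(total_capacity_gb(16))
    # A 144 GB total would instead imply 12 GB per package.
    print(implied_per_package_gb(144))
```

Seen this way, the 192 GB and 144 GB figures differ only in the assumed size of each package, which none of the reports confirms.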

[4]

Tesla Pulls 2nm AI Chip Production Onto US Soil, Splitting AI6 and AI6.5 Between Samsung Texas and TSMC Arizona

Elon Musk lays out the plans for Tesla's future AI ecosystem, utilizing both Samsung & TSMC 2nm tech for AI6 & AI6.5 chips.

A few days ago, Tesla announced the successful tape out of its AI5 chip, made at Samsung. The chip is just one of the many custom silicon designs that Elon Musk and his enterprises are making to fulfill their in-house AI demand. Musk also talked about the next-generation chips, such as AI6 and the Dojo3 supercomputer project. The company is aiming to move the production of its custom AI chips to Terafab once the project is completed, but will remain a key customer for TSMC and other semiconductor giants. While Terafab is still years away from completion, Tesla's next-gen chips, namely AI6 and AI6.5, will be made at Samsung and TSMC, respectively.

In a post on X, Musk shared some more details on the AI chip roadmap. While he is relieved that the AI5 design is complete and taped out, he did state that several design concessions had to be made for faster delivery of the chip, which is why the team was able to achieve tape out 45 days ahead of schedule. And while some of the original design goals were missed, the upcoming Tesla AI6 chip will address these.

AI6 will be manufactured on Samsung's 2nm process node technology in Texas, and will deliver double the performance of AI5. While AI5 leverages LPDDR5X memory, AI6 will be using the newer LPDDR6 memory standard, which addresses those design misses mentioned above. After AI6, Tesla plans to make a further optimized version of the chip called AI6.5, which will further improve the performance, and this chip will be made using TSMC's 2nm technology in Arizona.

One of the coolest features coming to AI6 and AI6.5 is the halving of the TRIP AI computation accelerators dedicated to SRAM. This should make effective memory bandwidth an "order of magnitude" greater than the DRAM bandwidth for any calculations made within the SRAM cache.

Tesla's AI HW and Inference software lead also said he was satisfied with deleting legacy blocks from previous Tesla IPs, leading to a more efficient and optimized chip for Tesla, SpaceX, and xAI's future AI endeavors. While AI5 is being made at both Samsung & TSMC with volume production slated between 2026 and 2027, the AI6 and AI6.5 chips should target a 2027-2029 timeframe.

[5]

Tesla AI5 AI Chip Taped Out, Elon Musk Shares First Pictures & Confirms AI6 & Dojo3 In Work

Elon Musk has shared the very first update on the Tesla AI5 chip, confirming its successful tape out & plans for AI6 and Dojo3.

Elon Musk Shares First Pictures of Tesla AI5 After Successful Tape Out, The Road Ahead Includes AI6 & Dojo3

In the latest update on X, Elon Musk has congratulated the Tesla AI team on the successful tape out of its AI5 AI chip. Elon has also shared pictures of the chip, featuring a large primary die in the middle that will handle all of the compute and 12 DRAM modules on the outskirts, offering high capacity and efficiency-optimized bandwidth. AI5 is the follow-up to HW4 and will arrive as a next-gen FSD solution for Tesla.

In a previous talk, Elon Musk stated that AI5 will offer a monumental 40x improvement over the HW4 chip, with 8x raw compute capabilities, 9x memory, and will deliver brand new features. The chip itself is expected to offer close to 2500 TOPS of AI compute, 144 GB of memory per chip, and is designed with the latest transformer engine in mind. The chip is expected to be manufactured at TSMC and Samsung, with high-volume production slated for late 2026 or early 2027. Elon has shared plans to move next-gen chip production to the upcoming TeraFab, but its announcement is still pending.

Meanwhile, Elon also confirms that work is already underway on the next-generation Tesla AI6 chip and Dojo3. Since Tesla announced a return to form in the chipmaking business, the plans for Dojo3 are back on track. Tesla resumed the plans for the Supercomputer project back in January 2026, and once Terafab is operational and developing DRAM, packaging, and chips all under one roof, this goal should not be far away.

[6]

Tesla Shares Rise on Completion of AI5 Chip Design Process

Shares of Tesla rose after Chief Executive Elon Musk said the company had completed the final stage of the design process for its AI5 chip. Shares were up 6.8% to $388.92 in Wednesday afternoon trading. The stock is down 14% this year. Tesla's chip design team reached the tapeout stage for AI5, referring to the transition from design to fabrication, Musk wrote in a post on his social media platform X. The AI5 chip will be manufactured by TSMC and Samsung, whom Musk thanked on X. "It will be one of the most produced AI chips ever," Musk wrote. Tesla is currently designing its next-generation AI6 chip, which Samsung agreed to produce last year in a $16.5 billion deal. The company is also working on its Dojo3 chip for artificial-intelligence supercomputing, Musk said. Tesla and SpaceX, Musk's rockets and AI company, are also collaborating on a project to build a massive chip facility in Texas, which is designed to bypass the constraints of third-party chip suppliers.

Elon Musk announced Tesla's successful tape-out of the AI5 chip, designed to power Full Self-Driving systems in vehicles and Optimus robots. Musk claims up to 40x better performance than its predecessor in some scenarios, and the sample module carries 12 memory packages, which suggests a 384-bit interface. However, the milestone comes nearly two years behind schedule, with volume production not expected until mid-2027.

Tesla AI5 Chip Reaches Critical Design Milestone

Elon Musk revealed that Tesla has completed the tape-out of its AI5 chip, marking a significant milestone in the company's custom silicon roadmap [1]. In a post on X, Musk congratulated the Tesla AI team and shared the first images of the processor, which will power Full Self-Driving (FSD) systems in Tesla vehicles, Optimus robots, and potentially xAI data centers [3]. The announcement also confirmed that the AI6 chip and Dojo3 supercomputer projects are already in development, signaling Tesla's aggressive push into custom AI hardware [2].

Source: Electrek

The tape-out process sends the final chip design to semiconductor foundries for fabrication, but this achievement arrives nearly two years behind Tesla's original timeline. The company initially promised AI5 would be in vehicles much earlier, and volume production remains more than a year away [2].

40X Performance Improvement Over Previous Generation

Elon Musk claims the Tesla AI5 chip is up to 40x faster than the AI4 processor in select scenarios, though exact performance figures have not been disclosed [1]. During Tesla's Q3 2025 earnings call, Musk explained that by removing outdated hardware blocks, engineers could fit the AI5 design on half a reticle while keeping adequate margin for the traces from the memory to the TRIP accelerators, Arm CPU cores, and PCIe blocks [1].

The processor module features a compact ASIC die surrounded by 12 memory packages from SK hynix, likely GDDR6 or GDDR7 [1]. If so, the configuration suggests a 384-bit memory interface delivering between 768 GB/s and 1.536 TB/s of memory bandwidth, depending on the memory type deployed [1]. Another report identifies the packages as LPDDR5X, which would total 192GB per chip if each package holds 16GB [3]. Musk has previously compared AI5's performance to NVIDIA's Hopper architecture, suggesting that a dual AI5 setup could rival NVIDIA Blackwell [3].
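The bus width and bandwidth range quoted above follow from a short calculation. The sketch below assumes each package exposes a 32-bit interface, and it assumes per-pin data rates of 16 Gbps (a common GDDR6 speed) and 32 Gbps (a common GDDR7 speed). Those rates are chosen because they reproduce the reported figures, not because Tesla has confirmed them, and the function names are hypothetical.

```python
# Illustrative arithmetic only. The 32-bit-per-package width and the per-pin
# data rates are assumptions; Tesla has not published AI5 memory specifications.
BITS_PER_PACKAGE = 32


def bus_width_bits(packages: int, bits_per_package: int = BITS_PER_PACKAGE) -> int:
    """Aggregate memory bus width across all packages."""
    return packages * bits_per_package


def bandwidth_gb_per_s(bus_bits: int, gbps_per_pin: float) -> float:
    """Peak bandwidth in GB/s: bus width times per-pin rate, divided by 8 bits per byte."""
    return bus_bits * gbps_per_pin / 8


if __name__ == "__main__":
    bus = bus_width_bits(12)
    print(bus)                          # 384-bit interface
    print(bandwidth_gb_per_s(bus, 16))  # lower bound, assumed 16 Gbps per pin
    print(bandwidth_gb_per_s(bus, 32))  # upper bound, assumed 32 Gbps per pin
```

Under these assumptions the two ends of the range work out to 768 GB/s and 1,536 GB/s (1.536 TB/s). If the packages are LPDDR5X, as another source reports, both the interface width and the bandwidth would differ.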

Source: Tom's Hardware

Samsung and TSMC Partnership for Dual-Source Production

Tesla is using both Samsung and TSMC for AI5 production, a dual-source manufacturing strategy intended to diversify its supply chain [3]. The chips will be manufactured at Samsung's facility in Taylor, Texas, and TSMC's plant in Arizona [3]. In his announcement, Musk thanked "@TaiwanSemi_TSC" (Taiwan Semiconductor, a different company) rather than TSMC, though the intended reference was clear [1].

The fabricated sample shown by Musk bears a "KR 2613" marking, which suggests the chip was packaged during the 13th week of 2026 [1]. Industry experts estimate high-volume production will commence in late 2026 or early 2027 [3]. Tesla has said it needs "several hundred thousand completed AI5 boards line side" before switching production lines, and that volume is not expected until mid-2027 [2].
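The "KR 2613" reading can be reproduced with a few lines of code. This is a minimal sketch that assumes the trailing four digits are a YYWW date code (two-digit year, then week number) and that weeks follow ISO 8601 numbering. Neither assumption is confirmed for this part, and the function names are hypothetical.

```python
from datetime import date, timedelta


def decode_date_code(marking: str) -> tuple[int, int]:
    """Split a marking like 'KR 2613' into (year, week), assuming a YYWW code."""
    code = marking.split()[-1]
    if len(code) != 4 or not code.isdigit():
        raise ValueError(f"expected a four-digit YYWW code, got {code!r}")
    return 2000 + int(code[:2]), int(code[2:])


def week_range(year: int, week: int) -> tuple[date, date]:
    """Monday and Sunday of the given week, assuming ISO 8601 week numbering."""
    monday = date.fromisocalendar(year, week, 1)
    return monday, monday + timedelta(days=6)


if __name__ == "__main__":
    year, week = decode_date_code("KR 2613")
    start, end = week_range(year, week)
    print(year, week)    # 2026 13
    print(start, end)    # 2026-03-23 2026-03-29
```

Under those assumptions the packaging date falls in the last full week of March 2026, consistent with the estimate that Tesla received the sample in March or early April.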

Implications for Full Self-Driving and Hardware Obsolescence

The AI5 development carries significant implications for Tesla's Full Self-Driving roadmap, but also highlights ongoing challenges with hardware obsolescence. Tesla's Cybercab, scheduled for production in Q2 2026, will launch on AI4 hardware, the same processor currently used in Model Y, Model 3, Model S, Model X, and Cybertruck [2]. The company introduced an AI4.5 stopgap computer in 2026 Model Y vehicles precisely because AI5 delays left existing hardware unable to handle larger FSD neural networks [2].

This pattern raises questions about Tesla's promises to existing customers. The company sold millions of vehicles with HW3 and HW4 on the promise of unsupervised self-driving, yet HW3 cannot deliver that capability by Musk's own admission, and HW4 running V14 still requires supervision [2]. Each new chip generation effectively confirms that previous hardware may not achieve the autonomous driving features originally promised [2].

AI6 Chip and Dojo3 Development Already Underway

Tesla is already developing next-generation processors, with the AI6 chip targeting Samsung's 2nm process technology in Texas and double the performance of AI5 [4]. Musk revealed that the AI5 tape-out came 45 days ahead of schedule but required design concessions for faster delivery, which AI6 will address [4]. AI6 will use LPDDR6 memory instead of AI5's LPDDR5X. Wccftech also reports "the halving of the TRIP AI computation accelerators dedicated to SRAM", which it says will make effective memory bandwidth an "order of magnitude" greater than DRAM bandwidth for calculations within the SRAM cache [4].

Source: Wccftech

Tesla also plans an AI6.5 variant manufactured using TSMC's 2nm process in Arizona, with the AI6 and AI6.5 chips targeting a 2027-2029 production timeframe [4]. Perhaps most notably, Musk confirmed that Dojo3 development continues, suggesting Tesla has not abandoned its system-on-wafer processor for AI training despite reports last August that the initiative had been dismantled [1]. Musk previously indicated that AI6 and Dojo3 could feature a converged architecture, potentially unifying Tesla's software and hardware stacks across vehicles, Optimus robots, and data center applications [1]. The company aims to eventually move production to TeraFab once that facility is operational [4].

Summarized by Navi
