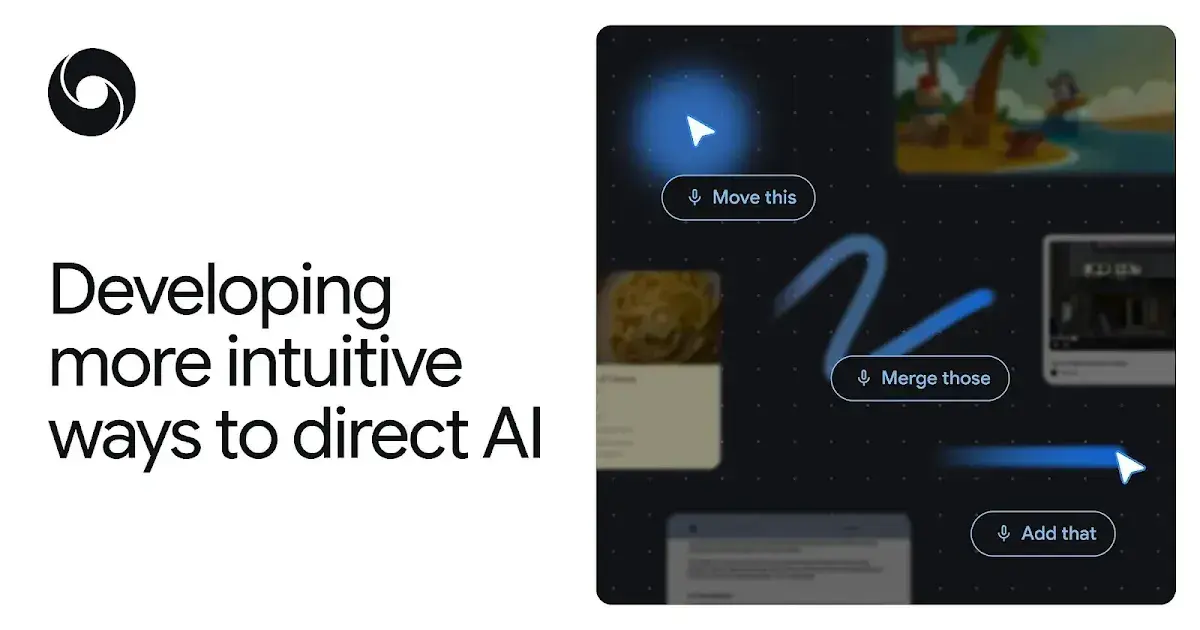

Google DeepMind unveils Magic Pointer, an AI-powered cursor that understands context and voice

7 Sources

[1]

Google Details the New Magic Pointer Features Coming to Googlebooks

Shortly after its Android Show announcements on Tuesday, Google's DeepMind, the company's artificial intelligence lab, detailed the upcoming Magic Pointer, a feature that reimagines the mouse pointer with AI capabilities. The feature will be available in Googlebooks, Google's new AI-focused laptops, later this year.

Google's ambitious attempt to reinvent the computer mouse introduces several interesting features. By bringing contextual understanding and other smart tools with just a simple wiggle, it could change the way we interact with computers today. DeepMind says it wants to push the boundaries of AI tools that live in dedicated windows.

The DeepMind blog post showcased some of the ways you'll be able to interact with Magic Pointer when it arrives. The feature lets you select text and easily adjust it without having to type a highly specific prompt. The demo also shows someone hovering over two spreadsheet columns and saying, "merge these," which instantly combines them into one.

If you want to try some of these new features out, you don't have to wait until the Googlebooks laptops arrive later this year. The AI-enabled pointer experience is now available in Google AI Studio and lets you edit an image or find places on a map. Google also says you can use your cursor to ask Gemini, the AI assistant built into the Chrome browser, about specific parts of a web page. For example, you could select multiple products on a page and have Gemini automatically compare them.

[2]

Google's AI-enabled mouse pointer understands 'this' and 'that'

Right-clicking could go the way of the 3.5-inch floppy at the Chocolate Factory

Google doesn't design mouse traps, so it's trying to design a better mouse. Google DeepMind announced a research effort to transform the standard computer mouse cursor into a context-aware, AI-powered tool, marking what the company described as the first major rethinking of the cursor in more than 50 years.

The project by researchers Adrien Baranes and Rob Marchant integrated Google's Gemini AI model with an experimental context-aware mouse pointer. In this way, the company said, the system can understand where a user clicks, what they are clicking on, and the likely intent behind the interaction.

Researchers said there is a persistent friction in how people currently interact with AI tools. Most AI assistants today live in a separate window, requiring users to copy, paste, or drag content into a chat interface before receiving help. The new approach aims to reverse that dynamic. "We want the opposite: intuitive AI that meets users across all the tools they use, without interrupting their flow," the researchers stated in the blog post.

The mouse pointer works alongside the computer's microphone, allowing Gemini to listen as the user points. This lets users refer to features on the screen with demonstrative pronouns like "this" and "that." In a demonstration website, a user can hover a cursor over a crab and say "move this here," and the system understands enough context to grab the crab and move it to where the cursor indicates.

The first computer mouse, a one-button prototype with metal wheels for the x- and y-axis, was built out of wood in 1964 and was patented in 1970 by its inventors Doug Engelbart and Bill English, who worked at the Stanford Research Institute. Engelbart foresaw a day when humans and computers would interact more easily and naturally, which he talked about during his 1997 acceptance speech for the Lemelson-MIT Prize.

"The computer technology, the digital capabilities, it's affecting communications, displays, storage, computer processing. It's affecting the way you can interface to things a lot more flexibly," he said. "That's going to be so pervasively high-impact in our society and our organizations that it's more than anything we've had to cope with evolutionary wise."

At Google, the team said it laid out four design principles guiding the project. The first, which the researchers called "Maintain the flow," stated that AI capabilities should work across all applications rather than forcing users into separate AI-specific environments. Under this principle, a user could point at a PDF and request a summary, or hover over a statistics table and ask for a chart, all without leaving the current application.

The next, "Show and tell," addressed the burden of prompt writing. The researchers stated that an AI-enabled pointer could capture visual and semantic context from the screen, reducing the need for users to write detailed text instructions to the model.

They also developed the AI cursor based on how humans naturally communicate using short phrases and gestures like "this" and "that." The researchers stated that the system would allow users to issue commands like "Fix this" or "Move that here" while the AI fills in the contextual gaps.

The fourth principle, "Turn pixels into actionable entities," lets the pointer recognize structured objects within on-screen content. The researchers stated that this capability could turn a photo of a handwritten note into an interactive to-do list, or convert a paused video frame showing a restaurant into a booking link.

In the blog, the researchers said that Google DeepMind has already begun integrating the lessons learned into products. A feature called Magic Pointer will soon roll out on the forthcoming Googlebook laptop platform, which The Chocolate Factory introduced earlier this week.

The company said the technology will also allow users of Gemini in Chrome to point at specific parts of a webpage and ask questions, rather than composing a full text prompt. Experimental demos of the AI-enabled pointer are currently available through Google AI Studio, where users can test image-editing and map-based interactions using the point-and-speak approach. The company said it plans to continue testing the concept across additional platforms, including Google Labs' Disco. ®

[3]

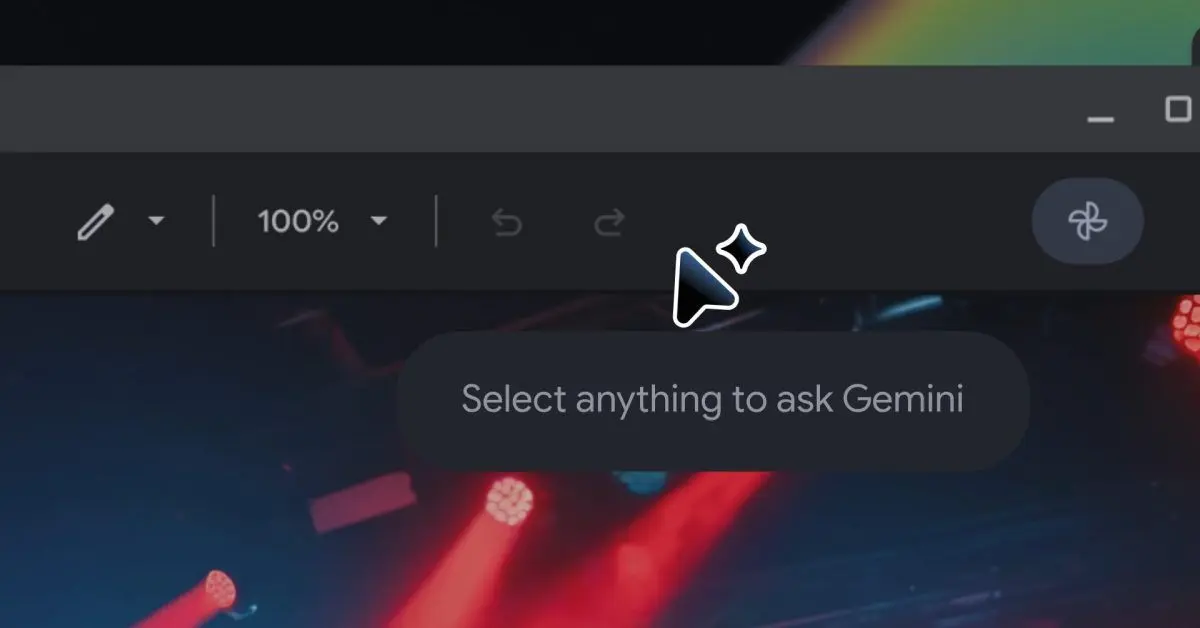

Google's Magic Pointer turns your cursor into an AI assistant, and you can test it now

Karandeep Singh Oberoi is a Durham College Journalism and Mass Media graduate who joined the Android Police team in April 2024, after serving as a full-time News Writer at Canadian publication MobileSyrup. Prior to joining Android Police, Oberoi worked on feature stories, reviews, evergreen articles, and focused on 'how-to' resources. Additionally, he informed readers about the latest deals and discounts with quick hit pieces and buyer's guides for all occasions. Oberoi lives in Toronto, Canada. When not working on a new story, he likes to hit the gym, play soccer (although he keeps calling it football for some reason🤔) and try out new restaurants in the Greater Toronto Area.

Google's Android Show is over, and it might go down as one of the biggest yet. Not only did we get an all-new Android Auto experience at the event, complete with the introduction of Gemini Intelligence, we also got our first look at the Googlebook. It's essentially a combination of Chrome OS and Android within new machines, crafted to give you direct access to Gemini.

The tech giant doesn't want to leave it down to chance that you'll use Gemini on your Googlebook, and that's precisely why it's baking the AI assistant directly into the Googlebook's cursor. Officially dubbed the Magic Pointer, the cursor is built to understand what you're pointing at. For example, if you're pointing at a date, the cursor will be able to help you quickly set up an event, a reminder, or a calendar entry for the date. Similarly, you'd be able to point at an image of a building and ask the Googlebook where said building is located. The goal here is to move away from text-heavy prompting and towards a model where the AI simply understands context without being spoon-fed information.

You can try out the Magic Pointer now

It's worth noting that the first few Googlebooks from the likes of Acer, ASUS, Dell, and more won't be out until this fall. However, that doesn't mean you can't try out the Magic Pointer, as pointed out by 9to5Google. Google has two Magic Pointer demos available to try out in AI Studio:

- Point and Speak: Point and speak to get directions and find things.
- Show and Tell: Move or edit objects in an image by simply pointing and speaking.

Elsewhere, users will soon also be able to use their cursor to 'Ask Gemini' about certain things on Chrome. This won't replicate the Magic Pointer experience, but it'll be close and should give you some practice before the first Googlebooks officially land.

[4]

DeepMind details Googlebook 'Magic Pointer' with demos you can try, also coming to Gemini in Chrome

The Magic Pointer on Googlebook was built with Google DeepMind. The research team behind this underlying capability shared more about the premise of AI-enabled pointers. DeepMind wants to use AI "to help the pointer not only understand what it's pointing at, but also why it matters to the user."

Our goal is to address a common frustration: because a typical AI tool lives in its own window, users need to drag their world into it. We want the opposite: intuitive AI that meets users across all the tools they use, without interrupting their flow. For example, imagine pointing to an image of a building, and requesting "Show me directions". Nothing more is needed when the AI system already understands the context.

The idea is to replace "text-heavy prompts with simpler, more intuitive interactions."

An AI-enabled pointer would streamline this process by smoothly capturing the visual and semantic context around the pointer, letting the computer "see" and understand what's important to the user.

Similarly, an "AI system that understands this combination of context, pointing and speech would allow users to make complex requests in natural shorthand." In one example use case, a "paused frame in a travel video becomes a booking link for that cool-looking restaurant."

Google has two AI-enabled pointer demos in AI Studio. Additionally, you will soon have the ability to "use your pointer to ask Gemini in Chrome about the part of the webpage you care about." This is in the process of rolling out.

[5]

Shaping the future of AI interaction by reimagining the mouse pointer

We are developing more seamless, intuitive ways to collaborate with AI The mouse pointer has been a constant companion on computer screens, across every website, document and workflow. Despite how technologies have changed, the pointer has barely evolved in more than half a century. We've been exploring new AI-powered capabilities to help the pointer not only understand what it's pointing at, but also why it matters to the user. Our goal is to address a common frustration: because a typical AI tool lives in its own window, users need to drag their world into it. We want the opposite: intuitive AI that meets users across all the tools they use, without interrupting their flow. For example, imagine pointing to an image of a building, and requesting "Show me directions". Nothing more is needed when the AI system already understands the context. Today, we're outlining the underlying principles guiding our thinking on future user interfaces, and sharing experimental demos of an AI-enabled pointer, powered by Gemini. For example, you could visit Google AI Studio to edit an image or find places on the map, just by pointing and speaking.

[6]

Google is redefining the cursor for computers, and it's AI-charged future looks ridiculous

Google's Magic Pointer could be the next evolution of AI on laptops

The humble mouse pointer has barely changed in decades. It moves, clicks, selects, drags, and occasionally turns into a spinning wheel of frustration. Google now wants to turn that tiny arrow into one of the most powerful AI tools on your laptop, which sounds ridiculous until you think about how often you use it.

The company has announced Magic Pointer for Googlebook, its new category of Gemini-powered laptops. The feature gives the cursor AI abilities, allowing it to understand what you are pointing at and help you act on it without needing a long prompt or a separate chatbot window.

Can the cursor become the new AI button?

In a new DeepMind post, the company explained how it is rethinking the pointer for the AI era. The idea is to make Gemini understand the exact part of a webpage, image, table, document, or video frame the user is referring to. That turns the cursor from a basic navigation tool into a kind of AI remote control for the entire screen.

This is where the whole thing starts to sound wonderfully absurd. A pointer could turn a table into a chart, compare products you select on a webpage, summarize a PDF into bullets for an email, or identify a building in a photo and pull up directions. The cursor, once used mainly to click tiny buttons, is suddenly being asked to understand context, intent, and action.

Why does this matter for Googlebooks?

Google has taken inspiration from the way people already communicate offline. You usually do not describe every object in a room before asking someone to move it. You point and say, "move this" or "fix that." Magic Pointer brings that same idea to the screen. The cursor tells Gemini what you are referring to, while short commands such as "add this," "merge those," or "what does this mean?" tell it what action to take.

This new feature will be deeply integrated into Googlebook laptops, as Magic Pointer is being announced as part of that platform. That means Googlebook users should be able to use it more freely across the laptop experience, instead of being limited to a single app or browser window.

For everyone else, this AI pointer will be limited to Gemini in Chrome for now. Google says users can point to specific parts of a webpage and ask questions, such as comparing multiple selected products, summarizing technical specs from a product listing, or instantly converting prices into a different currency. If Magic Pointer works well, everyday AI tasks may no longer need a prompt box at all.

[7]

'What if behind the pointer, there was an AI model': Google DeepMind wants to reinvent the humble mouse cursor

I don't doubt there's some worthwhile application of at least one technology lumped under the monolithic 'AI' banner. Unfortunately, big tech's major players seem preoccupied with reinventing the wheel and introducing an unnecessary agentic twist. Case in point, Google DeepMind's latest experimental demo attempts to give your humble mouse cursor an AI enhancement. "The mouse cursor is something that has been forgotten," argues Adrien Baranes, a staff researcher prototyping Human-AI Interactions at Google DeepMind, "What if behind the pointer, there was an AI model, like Gemini, trying to interpret whatever we are saying, like another person would." To be fair to the demo, barking commands at Gemini does reduce the standard, laborious process of copy and pasting recipe ingredients into a shopping list by a considerable number of clicks. I've got plenty more sass where that came from -- but this cursor demo is also interesting in how it attempts to navigate contextual challenges that might otherwise stump many AI models. Essentially, rather than relying on an AI model to consistently tell the difference between a shopping list, a recipe, a food fight, and a hamburger costume, a combination of cursor gestures and naturalistic commands like 'move this here' are leveraged to point the AI in the right direction. "Current models require precise instructions, but our AI-enabled pointer removes that burden," Google DeepMind shares, "By 'seeing' what's under your cursor, it instantly understands the specific word, image, or code block you need help with." Another example explored by these early tech demos involves watching a video of 'top 10 places to eat in Tokyo,' dragging your cursor across an eatery's signage, and then Gemini agentic-ly taking you through booking a table for the following evening. 
Setting aside the well-covered security concerns of letting an AI agent at your emails or other important data, I do also wonder how this tech might handle misclicks -- at least with the restaurant example, there appear to be multiple steps that a user can easily backpedal away from. Otherwise, I'm not convinced this "50-year-old interface" really needed the AI reinvigoration. Besides the obvious 'if it ain't broke...' argument, I don't think I'd be comfortable with allowing Gemini to get an eyeful of my desktop. To be clear, if you switch Smart Features on in Gmail and let Gemini organise your inbox, Google won't then scan your emails to train its AI. Instead, official support documentation for Gemini apps says that "summaries, excerpts, generated media, and inferences" resulting from your prompts to the AI are what is used as training data. As such, if the cursor demo was to become more widely available, odds are Gemini wouldn't be tattling to Google about the contents of your SSD -- though it could potentially tell Google what you do all day at your desk. Personally, I'd rather no one knew how often I fail to write 'embarassment' or 'occassionally' correctly, let alone anything else.


Google DeepMind introduced Magic Pointer, an AI-powered mouse pointer that understands screen context and voice commands. Launching on Googlebook laptops this fall, the feature lets users interact naturally by pointing and speaking instead of typing detailed prompts. Early demos are already available in Google AI Studio, and the technology will soon integrate with Gemini in Chrome.

Google DeepMind Transforms the Mouse Pointer with AI Interaction

Google DeepMind has announced Magic Pointer, an ambitious project that transforms the traditional mouse cursor into an AI-powered mouse pointer with contextual understanding [5]. The feature, set to launch on Googlebook laptops this fall, marks what the company describes as the first major reimagining of the mouse pointer in more than 50 years [2]. Developed by researchers Adrien Baranes and Rob Marchant, the system integrates Google's Gemini AI model to understand where users click, what they're clicking on, and the likely intent behind each interaction.

Source: DeepMind

The context-aware cursor addresses a fundamental friction in how people currently work with AI assistant tools. Rather than forcing users to copy, paste, or drag content into separate chat windows, Magic Pointer brings intuitive AI assistance directly into the user's workflow [4]. "We want the opposite: intuitive AI that meets users across all the tools they use, without interrupting their flow," the researchers stated in their blog post [5].

How Magic Pointer Enables Natural Human Communication

The technology works by combining the mouse pointer with the computer's microphone, allowing Gemini to listen as users point at on-screen elements [2]. This enables natural interactions using pronouns like "this" and "that." In demonstrations, users can hover over a crab image and say "move this here," and the system understands enough context to execute the command. Similarly, pointing at a date allows quick creation of calendar entries or reminders without typing detailed text-based prompts [3].
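The point-and-speak idea described above can be illustrated with a toy sketch. This is not Google's implementation, which the sources do not describe and which relies on Gemini rather than fixed rules. Everything here is an assumption made for illustration: the `Element`, `PointerSample`, and `SpokenWord` types, the timestamped inputs, and the rule that "this"/"that" bind to whatever sits under the pointer at the moment the word is spoken, while "here"/"there" bind to the pointer position.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Element:
    """Hypothetical on-screen object with an axis-aligned bounding box."""
    name: str
    x: float
    y: float
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)


@dataclass(frozen=True)
class PointerSample:
    t: float  # seconds since the interaction started
    x: float
    y: float


@dataclass(frozen=True)
class SpokenWord:
    t: float  # time the word was spoken, same clock as PointerSample
    text: str


def pointer_at(samples: list[PointerSample], t: float) -> PointerSample:
    """Latest pointer sample at or before t (earliest sample if none)."""
    earlier = [s for s in samples if s.t <= t]
    if earlier:
        return max(earlier, key=lambda s: s.t)
    return min(samples, key=lambda s: s.t)


def element_under(elements: list[Element], x: float, y: float) -> Optional[Element]:
    """Topmost element under the point; later list entries are drawn on top."""
    for element in reversed(elements):
        if element.contains(x, y):
            return element
    return None


def resolve_command(words: list[SpokenWord],
                    samples: list[PointerSample],
                    elements: list[Element]) -> dict:
    """Bind 'this'/'that' to an element and 'here'/'there' to a position."""
    if not words or not samples:
        raise ValueError("need at least one spoken word and one pointer sample")
    target: Optional[Element] = None
    destination = None
    for word in words:
        token = word.text.lower()
        sample = pointer_at(samples, word.t)
        if token in ("this", "that") and target is None:
            target = element_under(elements, sample.x, sample.y)
        elif token in ("here", "there"):
            destination = (sample.x, sample.y)
    return {
        "action": words[0].text.lower(),  # assumes the verb comes first
        "target": target.name if target else None,
        "destination": destination,
    }


# Toy scene: a crab drawn on top of a sand background.
elements = [Element("sand", 0, 0, 800, 600), Element("crab", 100, 100, 50, 50)]
samples = [PointerSample(0.0, 120, 120), PointerSample(1.0, 400, 300)]
words = [SpokenWord(0.1, "move"), SpokenWord(0.3, "this"), SpokenWord(1.2, "here")]
result = resolve_command(words, samples, elements)
print(result)
```

In this sketch, pairing each spoken word with the pointer position at that instant is what lets a three-word command carry a full instruction; a real system would presumably pass the captured screen context to a model instead of matching keywords.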

Source: The Register

Google DeepMind outlined four design principles guiding the future of AI interaction. First, "Maintain the flow" ensures AI capabilities work across all applications rather than forcing users into separate AI-specific environments. Second, "Show and tell" reduces the burden of prompt writing by capturing visual and semantic context from the screen. Third, the system mimics how humans naturally communicate using short phrases and gestures. Fourth, "Turn pixels into actionable entities" lets the pointer recognize structured objects within on-screen content, such as converting a photo of a handwritten note into an interactive to-do list [2].

Magic Pointer Features and Current Availability

The Magic Pointer feature demonstrates several practical applications. Users can select text and adjust it without typing specific prompts, hover over spreadsheet columns and say "merge these" to combine them instantly, or point at an image of a building and request directions [1]. The system can also turn a paused video frame showing a restaurant into a booking link [4].

While Googlebooks from manufacturers like Acer, ASUS, and Dell won't arrive until this fall, users can already test Magic Pointer through Google AI Studio [3]. Two demos are currently available: "Point and Speak" for getting directions and finding things, and "Show and Tell" for moving or editing objects in images by pointing and speaking [4].

Integration with Gemini in Chrome and Broader Implications

Beyond Googlebooks, users will soon be able to use their cursor with the Ask Gemini feature in Chrome [1]. This feature allows users to point at specific webpage elements and ask questions, such as selecting multiple products and having Gemini automatically compare them, without composing full text prompts. Google stated it plans to continue testing the concept across additional platforms, including Google Labs' Disco [2].

Source: 9to5Google

The initiative reflects a broader vision articulated by mouse co-inventor Doug Engelbart, who foresaw more flexible human-computer interfaces during his 1997 Lemelson-MIT Prize acceptance speech [2]. By pushing the boundaries of smart tools that live beyond dedicated windows, Google DeepMind aims to change how we interact with computers, making AI assistance feel less like a separate application and more like an integrated part of every digital task [1].

Summarized by

Navi
