Meta locks Broadcom for custom AI chips through 2029 as Hock Tan exits board

16 Sources

[1]

Broadcom to supply Meta with custom silicon through 2029 -- Broadcom CEO Hock Tan departs Meta's board

Tan assumes a different role that will allow him to guide Meta's custom silicon plans.

Broadcom and Meta this week announced the extension of their relationship with a long-term agreement under which Broadcom will supply Meta with multiple generations of custom-designed Meta Training and Inference Accelerator (MTIA) hardware through 2029. The package includes hundreds of thousands of AI processors. The deal is significant enough that Hock Tan, chief executive of Broadcom, is leaving Meta's board of directors, ostensibly to avoid a conflict of interest.

Under the terms of the deal, the custom accelerators for training and inference will be built around Broadcom's foundational XPU platform. The platform makes it possible to combine custom differentiating silicon with standard logic, memory, and high-speed I/O, greatly improving efficiency and lowering the cost of such bespoke processors. The companies are tight-lipped about the exact volumes of hardware to be supplied, though they say that it will consume multiple gigawatts of power, with initial commitments exceeding 1 GW of compute capacity.

In addition, Broadcom will supply Meta with Ethernet networking solutions for scale-up, scale-out, and scale-across requirements. Notably, Broadcom participates in the Microsoft-, Meta-, and OpenAI-led multi-source agreements on unified optical compute interconnects for scale-up networks. As a result, Broadcom will likely supply Meta with custom AI accelerators featuring optical scale-up interconnects over time.

"Meta is partnering with Broadcom across chip design, packaging, and networking to build out the massive computing foundation we need to deliver personal superintelligence to billions of people," said Meta founder and CEO Mark Zuckerberg.
"As we roll out more than 1GW of our custom silicon to start and then multiple gigawatts over time, this partnership will give us greater performance and efficiency for everything we are building."

One of the interesting implications of the deal involves RISC-V. MTIA accelerators use modified RISC-V-based cores from Andes Technology for scheduling and orchestration, as well as for some basic processing tasks. The compute blocks (tensor engines, vector engines, systolic arrays, etc.) are not RISC-V-based. Even so, the scale of the deal will likely benefit both Andes Technology and the RISC-V ISA ecosystem in general.

Hock Tan will step down from Meta's board of directors and move into an advisory role, likely in a bid to avoid a conflict of interest as he remains chief executive of Broadcom. Nonetheless, in his new role, he will guide Meta's custom silicon roadmap and influence its future infrastructure investments.

"We are pleased to expand our strategic collaboration with Meta as they pioneer the next frontier of artificial intelligence," said Hock Tan, President and CEO, Broadcom. "This initial MTIA deployment is just the beginning of a sustained, multi-generation roadmap to serve the trajectory of massive growth over the next few years highlighting Broadcom's unmatched leadership in AI networking and the power of our foundational XPU custom accelerator platform."

[2]

Meta, Broadcom Deepen Ties on Chips; Tan Departs Meta's Board

Meta Platforms Inc. announced an expanded partnership with Broadcom Inc. to design and build custom chips to power the social media giant's artificial intelligence efforts. The companies also said that Broadcom Chief Executive Officer Hock Tan will leave Meta's board of directors. The partnership includes a commitment to develop chips that will provide more than 1 gigawatt of computing capacity, Meta said Tuesday in a statement. The arrangement is expected to be the first part of a sustained rollout. Tan, who joined Meta's board in early 2024, will continue to advise the company and CEO Mark Zuckerberg, according to a company statement. Tracey Travis, a former top executive at the Estee Lauder Companies Inc., is also stepping away from Meta's board.

Nvidia Corp. denied a report from website SemiAccurate that it was seeking an acquisition of a large company that would "reshape the PC landscape." The website said Nvidia had been negotiating a deal for more than a year. The report sparked a rally Monday in the shares of PC makers Dell Technologies Inc. and HP Inc. "The media report is false; Nvidia is not engaged in discussions to acquire any PC maker," a company spokesperson told Bloomberg News. Dell and HP are among the top PC vendors in the world. HP, based in Palo Alto, California, had 19% of the global market in the first quarter, trailing only Lenovo Group Ltd., which had a share of almost 27%, according to Gartner Inc., an industry research firm. Dell, based in Round Rock, Texas, had about 17% market share, the firm said. Nvidia, the world's most valuable company, is the biggest maker of chips to power artificial intelligence work. Chief Executive Officer Jensen Huang has been a leading advocate for the use of AI across the economy, urging companies to experiment with how the emerging technology can help their businesses. The company invested $70 billion in partners and customers in the fiscal year that ended in January to help further AI.
Dell also manufactures AI servers that use Nvidia chips, and predicted it will generate about $50 billion in revenue from that business in the current fiscal year, which ends in January 2027. Dell shares fell 3.4% in extended trading after Nvidia's comments. Earlier, the stock jumped 6.7% to close in New York at a record high of $189.79. HP stock also declined more than 3% in extended trading after gaining 5.3% during the day to close at $19.23. Dell and HP didn't respond to requests for comment.

[3]

Meta inks deal with Broadcom for custom AI chips

April 14 (Reuters) - Meta (META.O) and Broadcom (AVGO.O) on Tuesday announced a multi-year partnership under which the chip designer will provide technology supporting Meta's custom AI accelerators, as the social media giant rapidly expands its data centers. Broadcom's shares rose 3.4% in extended trading. The companies said the initial 1-gigawatt commitment represents only the first phase of a "sustained, multi-gigawatt rollout," with a shared roadmap to co-design and scale hardware aimed at delivering real-time generative AI features and what Meta calls "personal superintelligence" to billions of users across its platforms. Meta last month unveiled a roadmap of four new chips that it is making in-house as part of its Meta Training and Inference Accelerator program. Reporting by Juby Babu in Mexico City; Editing by Maju Samuel

[4]

Broadcom lands big chip deal with Meta. What it means for the stock

Broadcom jumped nearly 4% in trading Wednesday after the chipmaker announced a deal with Meta to design the hyperscaler's custom artificial intelligence accelerators. Analysts on Wall Street in their first take broadly saw the announcement as a positive, but disagreed over whether it materially changed the outlook for Broadcom shares. The partnership will lead to an initial deployment of 1 gigawatt of power, which is expected to scale to multiple gigawatts through 2029. As part of the expanded partnership between the two companies, Broadcom CEO Hock Tan announced he won't stand for reelection to Meta's board, which he joined in 2024. It comes on the heels of Broadcom announcing expanded deals with both Google and Anthropic last week. "Strategically, we see this as further evidence of AVGO's leading position in the AI/XPU/Networking sectors, and while not overtly announced as a [long-term agreement] like the recent collaboration with Google/Anthropic, we view the multi-generational aspect of this press release as a positive," wrote Deutsche Bank analyst Ross Seymore in a Tuesday note. Wolfe Research analyst Chris Caso said Tan's decision to step down from Meta's board is an important development, and he believes it implies the partnership between the two companies may last longer than explicitly stated. But Caso also thinks the information delivered in the announcement wasn't new. "On the most recent earnings call, AVGO noted an expectation to ship META multiple GW in FY27 and beyond," he wrote in a Wednesday note. "Therefore, this disclosure doesn't appear to be materially different from what the company discussed on the earnings call." Bernstein analyst Stacy Rasgon made a similar point, with the caveat that the 2029 detail did appear to be a new disclosure.
Harlan Sur, a JPMorgan analyst, wrote in a Wednesday note that this deal and others show Broadcom stands to benefit as hyperscalers look more to develop custom chips. Goldman Sachs analyst James Schneider agreed, naming Broadcom as home to the "industry-leading" XPU platform. "This announcement further demonstrates Broadcom's increasing traction with its XPU platform and networking solutions across major U.S. hyperscalers, and provides a broader and more diverse customer base with high exposure for Broadcom to both enterprise (through Google and Anthropic), and consumer (through Google and Meta) AI," Schneider wrote.

[5]

Meta doubles down on custom AI chips with Broadcom deal through 2029

What just happened? Meta is expanding its use of in-house AI silicon and has agreed to keep Broadcom as a long-term partner for the design and manufacturing of its custom Meta Training and Inference Accelerators, or MTIA chips. The companies announced an expanded agreement that runs through 2029 and centers on the accelerators, which will power future large-scale compute deployments. Under the deal, Meta has committed to an initial deployment of 1 gigawatt of MTIA capacity and plans to ramp to multiple gigawatts of Broadcom-based accelerators as its AI footprint grows. Broadcom said that the chips will be the first AI silicon manufactured on a 2-nanometer process. Meta is working with its partner on chip design, advanced packaging, and networking for MTIA, giving Broadcom a broad role in its AI compute infrastructure. Broadcom, which has been under scrutiny over how much it benefits from Meta's custom chip plans, used the announcement to stress that Meta's MTIA program is still on track. "Now, contrary to recent analyst reports, Meta's custom accelerator, MTIA roadmap is alive and well. We're shipping now and, in fact, for the next generation XPUs, we will scale to multiple gigawatts in 2027 and beyond," Broadcom CEO Hock Tan said on the company's March earnings call. The deal lands as large cloud providers look for ways to rely less on pricey, scarce GPUs from Nvidia and AMD by designing their own ASICs tailored to specific AI workloads. Those custom chips offer less flexibility than general-purpose GPUs, but they can be cheaper and more efficient when running a fixed set of AI tasks. Google launched the first major hyperscaler ASIC effort with its Tensor Processing Units in 2015, followed by Amazon's custom chips in 2018. Unlike those companies, which expose their accelerators through cloud platforms, Meta uses its MTIA silicon entirely for internal workloads.
Meta introduced MTIA in 2023 and added four new versions in March, a quick turnaround between chip iterations. The Broadcom agreement follows Meta's multi-gigawatt GPU deals with AMD and Nvidia and a new custom-chip partnership with Arm Holdings. Meta plans to host this mix of GPUs and accelerators across 31 data centers, including 27 in the US. The silicon strategy sits inside a much larger capital plan. In January, Meta said it could spend up to $135 billion on AI this year as it tries to keep pace with Google, Amazon, Anthropic and OpenAI. Broadcom has a separate long-term agreement with Google to develop and supply future TPUs, and beginning in 2027, Anthropic is slated to access about 3.5 gigawatts of that TPU capacity. Board changes are unfolding alongside the technical and financial commitments. According to a securities filing, Tan told Meta last week that he will not stand for reelection to Meta's board, which he joined in 2024.

[6]

Meta and Broadcom extend their AI chip deal to 2029

Broadcom's CEO stepping off Meta's board to become its chip strategy adviser

The expanded partnership covers several generations of Meta's custom MTIA processors, starts with over a gigawatt of computing capacity, and is described as the 'first phase of a sustained, multi-gigawatt rollout.' The new chips will be the first custom AI silicon to use a 2-nanometer process.

Meta has expanded its partnership with chip designer Broadcom to build several generations of custom artificial intelligence processors, extending the deal through 2029 with an initial commitment of more than one gigawatt of computing capacity, sufficient to power roughly 750,000 US homes. The companies also announced that Broadcom CEO Hock Tan would leave Meta's board of directors when his term expires at the company's next annual meeting and move into an advisory role focused specifically on Meta's custom chip strategy. Meta described the one-gigawatt commitment as "the first phase of a sustained, multi-gigawatt rollout." The deal covers Meta's Training and Inference Accelerator programme, known as MTIA, in which Broadcom provides chip design, packaging, and networking technology. The first chip in the programme, the MTIA 300, already runs Meta's ranking and recommendation systems across Facebook, Instagram, and other apps; three further chip generations are planned through 2027, designed primarily for inference, the process by which AI models respond to user queries in real time. Broadcom confirmed separately that the new MTIA silicon will be the first custom AI chips in the industry to use a 2-nanometer manufacturing process. Broadcom's Ethernet networking technology will also be used to connect Meta's expanding clusters of AI computers at scale. Mark Zuckerberg said Meta was partnering with Broadcom "across chip design, packaging, and networking to build out the massive computing foundation we need to deliver personal superintelligence to billions of people."
The framing is consistent with Meta's stated ambition, articulated by Zuckerberg in January, to spend up to $135 billion on capital expenditure in 2026 as it races to build AI infrastructure to compete with OpenAI and Google. The Broadcom deal is the latest in a series of large-scale chip commitments Meta has announced this year, which already include six gigawatts of AMD GPUs, millions of Nvidia chips, custom processors designed with Arm Holdings, and capacity rented from neocloud providers including CoreWeave and Nebius. Unlike Google's TPUs or Amazon's Trainium, which are offered to external cloud customers as a revenue stream, Meta's MTIA chips are exclusively for internal use, powering the AI features and recommendation systems that underpin its advertising business. The MTIA programme follows the path set by Google, which began producing its first custom accelerators in 2015, and represents Meta's long-term bet that purpose-built silicon optimised for its specific workloads will outperform general-purpose GPUs from Nvidia in cost efficiency at the scale Meta operates.

[7]

Meta extends custom AI chip deal with Broadcom through 2029

Meta $META and Broadcom $AVGO have agreed to expand their partnership on custom AI chips through 2029, covering multiple generations of Meta's Training and Inference Accelerator, known as MTIA, the silicon that powers AI across Meta's apps and services. A commitment to deploy more than 1 gigawatt of computing capacity anchors the agreement, with Meta framing that figure as an opening installment in what it expects to become a multi-gigawatt buildout. Under the arrangement, Broadcom's XPU platform will be used to develop custom AI accelerators, with collaboration spanning the design, packaging, and networking layers of the hardware stack. Broadcom's Ethernet technology will also connect Meta's expanding AI compute clusters. As part of the expanded deal, Broadcom CEO Hock Tan will leave Meta's board of directors and move to an advisory role focused on Meta's custom silicon roadmap. Tan joined Meta's board in 2024, according to CNBC. According to a regulatory filing, Tan informed the company last week of his intention to step down from the board rather than seek another term. "Meta is partnering with Broadcom across chip design, packaging, and networking to build out the massive computing foundation we need to deliver personal superintelligence to billions of people," Meta founder and CEO Mark Zuckerberg said in a statement. Hock Tan, Broadcom's president and CEO, called the initial MTIA deployment "just the beginning of a sustained, multi-generation roadmap." The MTIA chips will be the first AI silicon to use a 2-nanometer process, according to Reuters. Of the four new versions of MTIA that Meta unveiled in March, the MTIA 300 is already running Meta's ranking and recommendation systems, and the remaining three are slated to arrive by 2027. Broadcom stock rose about 3% in extended trading after the announcement. Meta stock was little changed. The Broadcom deal is one piece of a broader hardware push.
Meta has struck a multiyear agreement with AMD $AMD to deploy up to 6 gigawatts of AMD Instinct GPUs, and has also committed to millions of Nvidia $NVDA chips and custom chips made by chip architecture firm Arm Holdings. The company announced in January that it expects to spend up to $135 billion on AI infrastructure in 2026, and it is developing 31 data centers to support that buildout, 27 of which are in the US. In a separate disclosure, Meta announced that Tracey Travis, a board member since 2020, will step down and not seek another term at the company's annual shareholder meeting.

[8]

Meta doubles down on partnership with Broadcom, committing to 1 gigawatt of custom AI processors - SiliconANGLE

Meta Platforms Inc. has announced a new deal with Broadcom Inc., extending a partnership that already sees the two companies work together on the design of the social media giant's in-house artificial intelligence accelerators. The company said in an announcement that it is committing to an initial deployment of one gigawatt of its Meta Training and Inference Accelerators, a custom-designed chip for AI workloads that Meta runs in its own data centers. Ultimately though, the extended partnership envisions Meta deploying multiple gigawatts of the chips, which are based on Broadcom's technology. Broadcom stressed in a separate announcement that the new MTIA chips will be the first custom silicon for the AI industry that uses a 2-nanometer process. The chipmaker's stock rose more than 3% in late trading today after the news was published. It's now up more than 10% in the year to date, outpacing the broader S&P 500 Index, which has gained just 2% over the same timeframe. Meta co-founder and Chief Executive Mark Zuckerberg was quoted as saying that the MTIA chips will use Broadcom's chip design, packaging and networking technologies to "build out the massive computing foundation we need to deliver personal superintelligence to billions of people." Reports earlier this year had suggested that Meta was struggling to bring the latest generation of its MTIA chips to market, but Broadcom CEO Hock Tan said in an earnings call last month that that's not the case. "Contrary to recent analyst reports, Meta's custom accelerator, MTIA roadmap is alive and well," he said. "We're shipping now and, in fact, for the next generation of XPUs, we will scale to multiple gigawatts in 2027 and beyond." Last month, Meta announced it was developing four new versions of the MTIA chips.
It debuted the original MTIA chip back in 2023, following in the footsteps of rivals such as Google LLC and Amazon Web Services Inc., which have also developed their own AI processors. The MTIA chips give Meta an alternative to the costly and hard-to-buy graphics processing units made by companies such as Nvidia Corp. and Advanced Micro Devices Inc. as it scrambles to build new AI data centers. Like Google's and Amazon's chips, they're a kind of application-specific integrated circuit, or ASIC, which are smaller and cheaper to manufacture than GPUs because their design limits them to a narrow range of computing tasks. In contrast, GPUs are general-purpose processors that can run almost any kind of task. Google delivered its first custom ASICs well before the start of the AI boom, back in 2015. Its tensor processing units were originally designed to accelerate machine learning workloads in Google's own data centers, and were followed by Amazon's first custom chips in 2018. Both companies relied on Broadcom to help develop their silicon. Broadcom has announced a string of deals for its XPUs, or custom processors, in recent months. The most recent came just eight days earlier, when Anthropic PBC said it had penciled in an agreement with the chipmaker and Google to secure 3.5 gigawatts of TPUs starting next year. As part of today's announcement, Meta revealed that Tan, who has sat on its board of directors since 2024, has opted not to stand for reelection. Instead, he will move to an advisory role at the company, offering input only on its future custom chip strategy. Meta's board is also facing a second departure, with Reuters reporting that Tracey Travis, the former Chief Financial Officer of Estée Lauder, will give up the seat she has held since 2020. The social media giant has announced a string of multibillion-dollar chip deals this year as part of its plan to spend up to $135 billion on capital expenditures in fiscal 2026.
Previously, it committed to deploying six gigawatts of AMD's GPUs, millions of chips from Nvidia, and also new custom processors designed by the chip architecture designer Arm Holdings Ltd. It has further committed to spending billions of dollars on renting chips from suppliers such as CoreWeave Inc. and Nebius N.V.

[9]

Meta Partners With Broadcom to Develop Next Generation of Its AI Chipsets

Meta wants to release 4 generations of MTIA chips in the next 2 years

Meta announced a new partnership with semiconductor giant Broadcom on Tuesday. The collaboration is focused on developing multiple generations of custom silicon for Meta's artificial intelligence (AI) apps and services. Currently, the company powers its AI compute via the Meta Training and Inference Accelerator (MTIA) chips. The Menlo Park-based tech giant recently shared that in the next two years, it plans to release four new generations of its MTIA chips. It is likely that all of them will be developed in partnership with Broadcom.

Meta, Broadcom Partner to Build New AI Chips

In a newsroom post, the tech giant announced its partnership with Broadcom to develop multiple generations of its MTIA chips. For the unaware, MTIA is Meta's family of specialised accelerators built for inference and for managing AI operations at scale. It powers not only the generative AI features in Meta AI but also the recommendation engines running on Meta's social media platforms. Meta released its latest MTIA processor in 2024, highlighting improvements in both architecture and performance. It is the same infrastructure that powers the tech giant's recently released Muse Spark AI model. But as the Mark Zuckerberg-led firm aims to scale out its AI services and offerings, it needs to upgrade the foundational layer of the infrastructure. This is where Broadcom comes in. Meta highlighted that the partnership will leverage the chipmaker's XPU platform, which is designed to build custom AI accelerators. As part of the deal, Broadcom will be involved in the chip design, advanced packaging, and networking processes. "Broadcom's advanced Ethernet technologies will also enable seamless, high-bandwidth networking across Meta's rapidly expanding AI compute clusters," the announcement post stated. The two companies have committed to developing custom silicon that provides more than 1 GW of compute capacity in the first phase.
Overall, the partnership aims for a multi-gigawatt rollout to help Meta scale its offerings and pursue its long-term ambition of personal superintelligence. Notably, Meta also announced that, due to the scale of the partnership, Broadcom President and CEO Hock Tan will step down from Meta's Board of Directors and shift to an advisory role for the company. Tan has been on Meta's board for the last two years.

[10]

The Meta-Broadcom AI Chip Deal: A Shift From Nvidia Dependence, Not Displacement - Advanced Micro Devices

However, the obvious conclusion from the partnership announcement on April 14, 2026, is that this move is targeted at reducing NVIDIA dependency. Beyond that, it represents a fundamental change in how AI technology is being developed, financed, and made efficient.

The Rationale for the Deal

Meta's objective is to optimize the most expensive part of its AI operations. AI infrastructure is expensive, and relying solely on third-party GPUs is not sustainable at hyperscale. The Broadcom partnership allows Meta to design application-specific integrated circuits optimized for its own workloads, especially inference tasks like ranking, feeds, and chatbot responses. This matters because inference is quickly becoming the dominant workload in AI. Training large models is still critical, but once deployed, those models must serve billions of users in real time. Custom chips can perform these repetitive tasks more efficiently and at lower cost than general-purpose GPUs.

What the Deal Actually Covers

This deal involves an initial deployment exceeding 1 gigawatt of computing capacity, part of Meta's broader, massive AI hardware push. The company has projected capital expenditure of $115 billion to $135 billion on AI infrastructure in 2026 alone. The partnership is built on Broadcom's XPU platform, which is designed for creating custom AI accelerators. Broadcom will work with Meta across chip design, advanced packaging, and networking to help build out a massive computing foundation for real-time AI experiences at scale. Specifically, the Meta Training and Inference Accelerator (MTIA) is optimized for inference and recommendation at scale, powering AI across all of Meta's apps and services. It is customized for ranking content, recommending posts and ads, and running its growing family of generative AI models across Facebook, Instagram, and WhatsApp.
Why This Is Not a Direct Threat to NVIDIA

Meta's custom chip efforts aim to improve long-term scalability and avoid the volatile pricing premiums associated with external GPU supply constraints. Even so, Meta still needs to keep investing in NVIDIA hardware. For instance, Meta is committing to deploy six gigawatts of AMD's GPUs and millions of chips from NVIDIA, because NVIDIA's GPUs remain the industry standard thanks to their performance, software ecosystem, and developer adoption. There are three reasons NVIDIA remains difficult to displace:

1. Training Remains a Task for GPUs. Specialized hardware performs best in focused and repetitive operations. Training next-generation AI models requires versatility, high memory bandwidth, and sophisticated software support, all strong suits of NVIDIA.
2. CUDA and Software Lock-in. NVIDIA's CUDA ecosystem is a major competitive advantage. Developers build AI systems around it, which raises switching costs.
3. Demands for Scale Are Skyrocketing. Investment in AI technology is not a fixed pie. Hyperscalers are predicted to invest more than $600 billion in AI infrastructure in 2026 alone.

The Widespread Industry Shift

The Meta-Broadcom deal shows that AI infrastructure is evolving to resemble cloud computing, where multiple specialized components work together. This trend is already visible across the industry. Google has long relied on tensor processing units, Amazon (AMZN:NASDAQ) uses Trainium, and even OpenAI has explored custom chips to reduce dependency on NVIDIA. Recent deals, including large-scale partnerships involving custom silicon, show that companies are diversifying rather than consolidating around a single vendor. It has now become a pattern for hyperscalers to go to NVIDIA for leading training capacity and to Broadcom for custom chips optimized for specific inference workloads at volume.
This positions Broadcom as a key beneficiary of the next phase of AI infrastructure buildout, which is less about training frontier models and more about deploying AI to billions of users as cheaply and efficiently as possible.

What This Means for Investors

In frontier model training, NVIDIA's dominance is unlikely to be contested anytime soon. But the rise of custom silicon will reduce its total addressable market in the long run, especially for inference. Each gigawatt of MTIA chips that Meta consumes is one gigawatt less for NVIDIA. Current geopolitical and market volatility has weighed on both Broadcom and Meta, which are currently trading at 34 and 22 times forward earnings, respectively. Both companies have come off their highs, which presents an opportunity for investors looking to gain exposure to the custom silicon trend without paying a premium price.

Bottom Line

The Meta-Broadcom partnership indicates that AI infrastructure is maturing into a multi-layered ecosystem. Broadcom is emerging as the leading custom chip partner for hyperscalers, having also struck deals with Google and Anthropic, while Meta continues buying NVIDIA and AMD GPUs for model training. Although this partnership reduces Meta's dependence on third-party hardware for high-volume, repetitive workloads, NVIDIA continues to power the most demanding workloads and innovation cycles.

Benzinga Disclaimer: This article is from an unpaid external contributor. It does not represent Benzinga's reporting and has not been edited for content or accuracy.

[11]

Broadcom Jumps, Meta Deal Signals Multi-Gigawatt AI Buildout - Broadcom (NASDAQ:AVGO)

The latest deal "further reinforces Broadcom's technology advantage in custom silicon and AI networking," according to Goldman Sachs.

The Broadcom Analyst: Analyst James Schneider maintained a Buy rating and price target of $480.

The Broadcom Thesis: The partnership with Meta exceeds 1 GW (gigawatt). It is also "the first phase of a sustained, multi-gigawatt rollout between the two companies," Schneider said. The Meta deal is not limited to hardware supply. It involves "collaboration on system-level optimization and forward-looking R&D efforts," the analyst stated. Broadcom CEO Hock Tan will take on an advisory role to provide guidance on Meta's custom silicon roadmap, he added.

The deal with Meta comes on the heels of Broadcom's agreement with Alphabet Inc's (NASDAQ:GOOG) Google and AI safety and research startup Anthropic, announced last week. The Meta deal further highlights Broadcom's "increasing traction with its XPU platform and networking solutions across major US hyperscalers," the analyst wrote. He added that the recent deals broaden and diversify the company's customer base, as Google and Anthropic provide exposure to enterprise customers, while Google and Meta provide exposure to consumer AI applications.

According to Schneider, Broadcom's stock over the next 12 months hinges on multi-year partnerships driving the XPU platform's traction across major US hyperscalers, the company's industry-leading networking capabilities, and upside to estimates. He noted that Goldman's estimates for fiscal 2027 and 2028 are currently around 14% higher than Street expectations.

AVGO Price Action: Shares of Broadcom had risen by 3.06% to $392.36 at the time of publication on Wednesday.

[12]

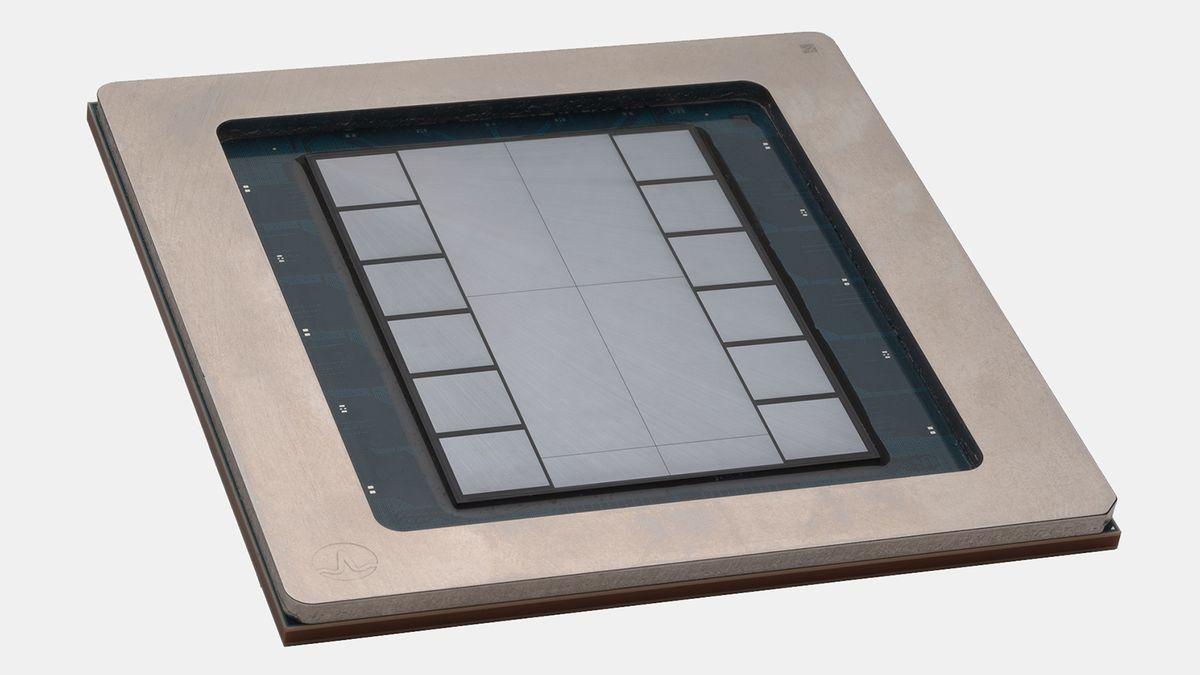

Broadcom Wins Big, But AI Infrastructure Is 'Real Winner' Of Meta Deal, Says Daniel Newman - Broadcom (NA

A Non-Zero-Sum Game

"This cycle is bigger, it's different and it's not zero sum," Newman added, noting that the immense demand for compute capacity means multiple suppliers can thrive simultaneously over the next three to four years.

Building The Foundation For 'Superintelligence'

The extended agreement marks a deeper strategic commitment, focusing on co-developing the tech industry's first 2nm AI compute accelerator. These specialized chips will power the next generations of Meta's Training and Inference Accelerator (MTIA) program. Combined with Broadcom's advanced networking solutions, this hardware will anchor a massive multi-gigawatt data center expansion stretching through 2029.

"Meta is partnering with Broadcom across chip design, packaging, and networking to build out the massive computing foundation we need to deliver personal superintelligence to billions of people," stated Meta CEO Mark Zuckerberg regarding the expanded rollout.

Strategic Leadership Shifts

To facilitate this technological alignment, Broadcom CEO Hock Tan is officially stepping down from Meta's board of directors to act as a direct advisor on the company's custom silicon roadmap. This multi-billion-dollar deal highlights how tech giants are moving to reduce their reliance on any single supplier while still scaling aggressively. Ultimately, as hyperscalers pour billions into new facilities, the definitive winner remains the vast ecosystem of semiconductors, memory, and energy powering the global AI boom.

AVGO Stock Performance In 2026

AVGO stock is up 10.02% year-to-date, while the Nasdaq 100 index is 2.52% higher over the same period. The stock has gained 10.65% over the last six months and 113.49% over the past year. It closed Tuesday 0.27% higher at $380.78 per share. Benzinga's Edge Stock Rankings indicate that AVGO maintains a strong price trend in the short, medium, and long terms, with a solid quality score.
Disclaimer: This content was partially produced with the help of AI tools and was reviewed and published by Benzinga editors.

[13]

Broadcom shares gain on multi-year Meta AI chip deal By Investing.com

Investing.com -- Broadcom Inc. shares rose 2.6% in after-hours trading on Tuesday following the announcement of a multi-year strategic partnership with Meta to support the social media company's artificial intelligence infrastructure. Under the agreement, Broadcom will deliver technology supporting Meta's Training and Inference Accelerator chips, with plans extending through 2029. The partnership builds on an existing collaboration between the two companies. The initial commitment exceeds 1GW and represents the first phase of a multi-gigawatt rollout. The infrastructure will support Meta's deployment of AI data centers designed to bring generative AI features to billions of users across WhatsApp, Instagram, and Threads. The deployment centers on Meta's MTIA silicon, enabled through Broadcom's XPU platform. This platform allows the companies to integrate logic, memory, and high-speed I/O for current deployments while establishing a framework for future MTIA iterations. "We are pleased to expand our strategic collaboration with Meta as they pioneer the next frontier of artificial intelligence," said Hock Tan, President and CEO of Broadcom. "This initial MTIA deployment is just the beginning of a sustained, multi-generation roadmap." "Meta is partnering with Broadcom across chip design, packaging, and networking to build out the massive computing foundation we need to deliver personal superintelligence to billions of people," said Meta founder and CEO Mark Zuckerberg. This article was generated with the support of AI and reviewed by an editor.

[14]

Meta, Broadcom Extend AI Chip Partnership -- Stocks Climb - Broadcom (NASDAQ:AVGO)

The deal lays the foundation for a multi-gigawatt infrastructure rollout through 2029, supporting Meta's next-generation MTIA (Meta Training and Inference Accelerator) chips. The companies said Broadcom's XPU custom accelerator and networking solutions will form the backbone of Meta's AI data centers, designed to handle massive training and inference workloads for real-time generative AI features. "Meta is partnering with Broadcom across chip design, packaging, and networking to build out the massive computing foundation we need to deliver personal superintelligence to billions of people," said Meta CEO Mark Zuckerberg. As part of the expansion, Broadcom CEO Hock Tan will step down from Meta's board to serve as an advisor on its custom silicon roadmap. AVGO Price Action: According to Benzinga Pro data, Broadcom shares were up 3.02% to $392.26 in after-hours trading on Tuesday. Meta shares were mostly flat after gaining 4.41% in Tuesday's regular trading session.

[15]

Meta extends custom chips deal with Broadcom to power AI ambitions

April 14 (Reuters) - Meta will work with chip designer Broadcom to produce several generations of custom artificial intelligence processors under an expanded deal as the social media giant races to build out the computing capacity needed to power AI features across its apps. Tuesday's announcement extends the tie-up until 2029 and includes an initial commitment of over one gigawatt of computing capacity, enough to power roughly 750,000 U.S. homes on average. As part of the deal, Broadcom CEO Hock Tan would leave Meta's board and move to an advisory role on its custom chip strategy, the companies said in a joint statement. As AI drives a surge in computing demand, big technology companies such as Meta, Google and Amazon are designing their own chips to reduce reliance on Nvidia's costly processors. That custom chip boom has made Broadcom one of the biggest winners of generative AI. The company works with clients to develop custom processors and supplies infrastructure software. Its shares were up 3.5% in extended trading, while those of Meta were little changed. The tie-up helps "build out the massive computing foundation we need to deliver personal superintelligence to billions of people," Meta CEO Mark Zuckerberg said. Meta, which last month unveiled a roadmap of four new chips, said the initial capacity commitment with Broadcom was "the first phase of a sustained, multi-gigawatt rollout." Broadcom's Ethernet networking technology will also be used to connect Meta's rapidly growing clusters of AI computers. The first chip from the Meta Training and Inference Accelerator (MTIA) program, called the MTIA 300, already powers Meta's ranking and recommendation systems, with three more due through 2027. The later generations are designed for inference, the process by which AI models respond to user queries. Separately, Meta said on Tuesday that Tracey Travis, who has been on its board since 2020, would not stand for re-election at the company's annual shareholder meeting.
(Reporting by Juby Babu in Mexico City; Editing by Maju Samuel)

[16]

Meta extends Broadcom deal to power AI across its apps

Meta is expanding its deal with chip designer Broadcom to build several generations of custom processors to power AI features across its apps. The deal announced Tuesday extends their partnership through 2029. It includes an initial commitment of more than one gigawatt of computing capacity - enough to power roughly 750,000 U.S. homes. The agreement also brings a boardroom change. Broadcom CEO Hock Tan will step down from Meta's board and take on an advisory role focused on custom chip strategy, according to a joint statement. Meta chief Mark Zuckerberg said the deal helps build the computing backbone needed to supply "personal superintelligence to billions of people." As AI drives soaring demand for computing, tech giants such as Meta, Google and Amazon are designing their own silicon to reduce reliance on Nvidia's expensive processors. That shift has been a major boost for Broadcom, which develops custom chips and supplies infrastructure software. Its shares were up 3.5% in extended trading, while those of Meta were little changed.


Meta and Broadcom announced a multi-year partnership extending through 2029 for custom AI accelerators, with initial deployment exceeding 1 gigawatt of computing capacity. Broadcom CEO Hock Tan is stepping down from Meta's board to avoid a conflict of interest but will continue advising on Meta's custom silicon roadmap as the social media giant pursues its AI infrastructure goals.

Meta and Broadcom Forge Long-Term AI Chips Partnership

Meta and Broadcom this week unveiled an expanded partnership that extends through 2029, positioning the chipmaker as a critical supplier for the social media giant's AI infrastructure goals [1]. Under the multi-year agreement, Broadcom will supply Meta multiple generations of custom AI accelerators designed specifically for the Meta Training and Inference Accelerator program, commonly known as MTIA [3]. The partnership includes an initial commitment exceeding 1 gigawatt of computing capacity, with plans to scale to multiple gigawatts as Meta rapidly expands its data centers [2].

The deal represents a significant step in Meta's strategy to reduce reliance on expensive GPUs from Nvidia and AMD by developing in-house AI silicon tailored to specific workloads [5]. Mark Zuckerberg emphasized the scale of this commitment, stating that Meta is "partnering with Broadcom across chip design, packaging, and networking to build out the massive computing foundation we need to deliver personal superintelligence to billions of people" [1].

Hock Tan Departs Meta's Board Amid Expanded Deal

In a notable development tied to the partnership, Hock Tan announced he will step down from Meta's board of directors, which he joined in early 2024, to avoid a conflict of interest as he remains chief executive of Broadcom [2]. However, Tan will transition into an advisory role where he will continue to guide Meta's custom silicon roadmap and influence its future infrastructure investments [1]. Wolfe Research analyst Chris Caso noted that Tan's decision to step down is significant and suggests the duration of the partnership may extend beyond what was explicitly stated [4].

Custom Silicon Strategy Accelerates

The custom AI accelerators will be built around Broadcom's foundational XPU platform, which combines custom differentiating silicon with standard logic, memory, and high-speed I/O to improve efficiency and lower costs [1]. Broadcom will also provide Ethernet networking solutions for scale-up, scale-out, and scale-across requirements, addressing Meta's comprehensive AI networking needs [1]. The chips will be the first AI silicon manufactured on a 2-nanometer process, according to Broadcom [5].

Meta introduced MTIA in 2023 and unveiled four new versions in March, demonstrating rapid iteration between chip generations [5]. The MTIA accelerators use modified RISC-V-based cores from Andes Technology for scheduling and orchestration, and the scale of this deal will likely benefit both Andes Technology and the broader RISC-V ISA ecosystem [1].

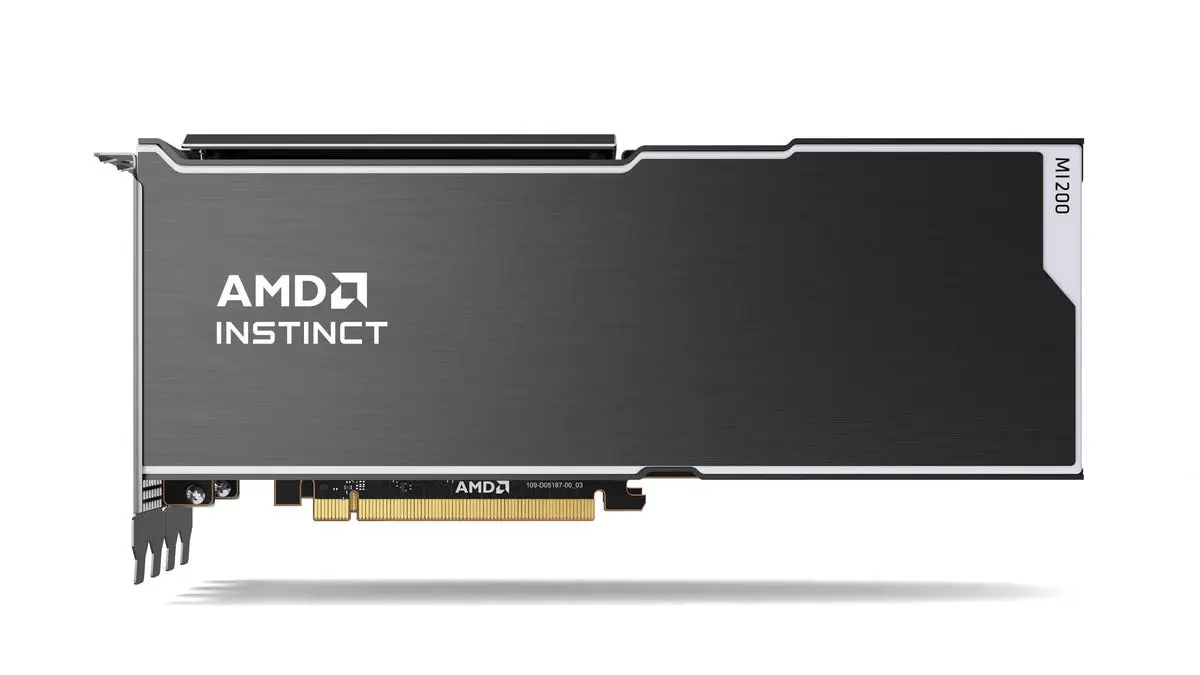

Source: Tom's Hardware

Wall Street Reacts to Strategic Positioning

Broadcom shares jumped nearly 4% in extended trading following the announcement, with analysts viewing it as further evidence of Broadcom's leading position in AI networking and custom accelerator platforms [4]. Goldman Sachs analyst James Schneider described Broadcom's networking capabilities as "industry-leading" and noted that the recent deals give the company a broader and more diverse customer base, with exposure to both enterprise and consumer AI [4]. The partnership follows Broadcom's recent expanded deals with Google and Anthropic, positioning the company as a key enabler for hyperscalers pursuing custom chip strategies [4].

Implications for AI Infrastructure Competition

This silicon strategy sits within Meta's much larger capital plan: the company has said it could spend up to $135 billion on AI this year as it competes with Google, Amazon, Anthropic, and OpenAI [5]. Meta plans to deploy this mix of custom accelerators alongside GPUs from Nvidia and AMD across 31 data centers, including 27 in the US [5]. Unlike Google and Amazon, which expose their custom accelerators through cloud platforms, Meta uses its MTIA silicon entirely for internal workloads to power features across its platforms [5]. The shared roadmap to co-design and scale hardware aims to deliver real-time generative AI features to billions of users [3](https://www.reuters.com/business/meta-inks-deal-with-broadcom-custom-ai-chips-2026-04-14/).

Summarized by Navi
