AI systems judge people with systematic biases that can be stronger than humans', study reveals

2 Sources

[1]

AI trust is structured, rigid, and may be more biased than humans

AI systems do not think like humans, and they may even be more biased

* AI mimics trust while relying on rigid, structured evaluation patterns
* Machines separate human traits instead of forming holistic impressions
* Competence and integrity dominate decisions across both humans and AI

Modern AI systems do not simply process information; they make systematic judgments about people in ways that resemble human trust, but with important differences.

A new study from Hebrew University, published in Proceedings of the Royal Society, analyzed over 43,000 simulated decisions alongside around a thousand human participants across five scenarios. These scenarios included deciding how much money to lend a small business owner, whether to trust a babysitter, how to rate a boss, and how much to donate to a nonprofit founder.

How AI breaks down human judgment into separate columns

The findings reveal that AI tools form something that looks like trust, but their judgment works very differently from ours. Both humans and AI favored people who seemed competent, honest, and well-intentioned, meaning machines captured something real about human trust.

"That's the good news," said Prof. Yaniv Dover. "AI is not making random decisions. It captures something real about how humans evaluate one another."

However, humans tend to form a general impression, blending multiple traits into a single, intuitive, holistic judgment. AI does something very different: it breaks people down into components, scoring competence, integrity, and kindness almost like separate columns in a spreadsheet.

"People in our study are messy and holistic in how they judge others," explained Valeria Lerman. "AI is cleaner, more systematic, and that can lead to very different outcomes."

These differences appeared even when every other detail about the person was identical.

"Humans have biases, of course," said Prof. Dover. "But what surprised us is that AI's biases can be more systematic, more predictable, and sometimes stronger."

In financial scenarios such as deciding how much money to lend or donate, AI systems showed consistent differences based solely on demographic traits. Older individuals were frequently given more favorable outcomes; religion had strong effects, especially in monetary scenarios; and gender also influenced decisions in certain models.

Another key insight is that there is no single "AI opinion." Different models often made different judgments about the same person, which means that the choice of AI system could quietly shape real-world outcomes. "Which model you use really matters," Lerman noted.

Large language models are already being used to screen job candidates, assess creditworthiness, recommend medical actions, and guide organizational decisions. The study suggests that while AI can mimic the structure of human judgment, it does so in a more rigid, less nuanced way, with biases that may be harder to detect.

"These systems are powerful," said Dover. "They can model aspects of human reasoning in a consistent way. But they are not human, and we should not assume they see people the way we do."

As AI tools and AI agents move from assistants to decision makers, understanding how they "think" becomes critical for organizations deploying them at scale. The researchers emphasize that their findings are not a warning against AI but a call for awareness. The question is no longer whether we trust machines; it is whether we understand how they trust us.

[2]

AI systems show systematic biases in decision-making, study finds

AI systems exhibit predictable and systematic biases when judging people, according to a recent study from Hebrew University published in the Proceedings of the Royal Society. The research analyzed over 43,000 simulated decisions alongside approximately 1,000 human participants across five scenarios, revealing significant differences between human and AI evaluations. The scenarios included financial decisions, such as lending money to a small business owner or deciding how much to donate to a nonprofit founder, as well as social judgments such as assessing a babysitter.

The findings indicate that while both humans and AI favored individuals perceived as competent, honest, and well-intentioned, machines rely on rigid evaluation criteria rather than forming holistic impressions. Prof. Yaniv Dover remarked, "AI is not making random decisions. It captures something real about how humans evaluate one another." However, the study noted that humans tend to form general impressions by blending multiple traits, while AI assesses traits such as competence and integrity separately. Valeria Lerman further explained, "People in our study are messy and holistic in how they judge others. AI is cleaner, more systematic, and that can lead to very different outcomes."

Differences in outcomes appeared even when all other details about the person were identical. The researchers found that AI's biases could be more systematic and sometimes stronger than those of humans. In financial scenarios, AI systems displayed consistent biases favoring older individuals and were also influenced by religion and gender. "Humans have biases, of course," said Dover. "But what surprised us is that AI's biases can be more systematic, more predictable, and sometimes stronger."

The study also found that different AI models often produce different judgments about the same individual. This variability underscores the importance of model selection in determining real-world outcomes. "Which model you use really matters," Lerman noted. Large language models are already employed for job candidate screening, creditworthiness assessment, and other decision-making roles. While AI mimics human judgment processes, the researchers found it more rigid and less nuanced, often with biases that are harder to identify. "These systems are powerful," Dover emphasized. "They can model aspects of human reasoning in a consistent way. But they are not human, and we should not assume they see people the way we do."

The researchers advocate for a deeper understanding of AI's evaluation processes as these tools evolve from assistants to decision-makers. They clarify that the goal is not to discourage the use of AI but to raise awareness of how these systems judge the people they evaluate. "The question is no longer whether we trust machines; it is whether we understand how they trust us," the study concludes.


A Hebrew University study analyzing over 43,000 simulated decisions reveals that AI systems exhibit systematic and predictable biases when evaluating people. While AI mimics human trust, it relies on rigid criteria rather than holistic impressions, producing biases that can be stronger than humans' in high-stakes decision-making roles like job screening and creditworthiness assessment.

AI Systems Show Systematic Biases in Evaluating People

AI bias has emerged as a pressing concern as machines take on more decision-making roles, such as screening job candidates, assessing creditworthiness, and guiding organizational choices. A new study from Hebrew University, published in Proceedings of the Royal Society, analyzed over 43,000 simulated decisions alongside approximately 1,000 human participants across five scenarios [1][2]. The research reveals that AI systems exhibit systematic and predictable biases when judging people, operating fundamentally differently from human judgment despite appearing to mimic trust.


How AI Trust Differs From Human Evaluation

The scenarios tested included financial decisions such as lending money to a small business owner and donating to a nonprofit founder, alongside social judgments like assessing a babysitter or rating a boss [1]. Both humans and AI systems favored individuals perceived as demonstrating competence, integrity, and good intentions. "AI is not making random decisions. It captures something real about how humans evaluate one another," said Prof. Yaniv Dover [1]. The critical difference, however, lies in how AI evaluates. While humans form holistic impressions by blending multiple traits into intuitive judgments, AI systems break people down into separate components, scoring traits like competence and integrity almost like separate columns in a spreadsheet [2].

Rigid Criteria Lead to Stronger Biases

"People in our study are messy and holistic in how they judge others," explained Valeria Lerman. "AI is cleaner, more systematic, and that can lead to very different outcomes" [1]. The research found that AI's biases can be stronger than human biases, appearing even when every other detail about the person remained identical. In financial scenarios, AI systems displayed consistent differences based solely on demographic traits: older individuals frequently received more favorable outcomes, religion had strong effects, especially in monetary decisions, and gender also influenced judgments in certain models [1]. "Humans have biases, of course," said Prof. Dover. "But what surprised us is that AI's biases can be more systematic, more predictable, and sometimes stronger" [2].

Model Selection Matters in Decision-Making

Another significant finding is that there is no single "AI opinion." Different language models often made different judgments about the same person, meaning the choice of AI system could quietly shape real-world outcomes [1]. "Which model you use really matters," Lerman noted [2]. This variability becomes particularly concerning as large language models increasingly handle job candidate screening, creditworthiness assessments, medical recommendations, and organizational decisions. While AI can mimic the structure of human reasoning in a consistent way, it does so with rigid criteria and less nuanced evaluation patterns, making its biases harder to detect [2].

Understanding How Machines Trust Us

"These systems are powerful," said Dover. "They can model aspects of human reasoning in a consistent way. But they are not human, and we should not assume they see people the way we do" [1]. The researchers emphasize that their findings are not a warning against AI but a call for awareness as these tools evolve from assistants to decision-makers. For organizations deploying AI at scale, the question is no longer whether we trust machines, but whether we understand how they trust us [2]. As AI systems move into more decision-making roles, understanding their structured approach to evaluating people becomes essential for detecting and mitigating systematic biases that may be stronger and more predictable than those exhibited by humans.

Summarized by

Navi

Related Stories

AI Mirrors Human Biases: ChatGPT Exhibits Similar Decision-Making Flaws, Study Reveals

02 Apr 2025•Science and Research

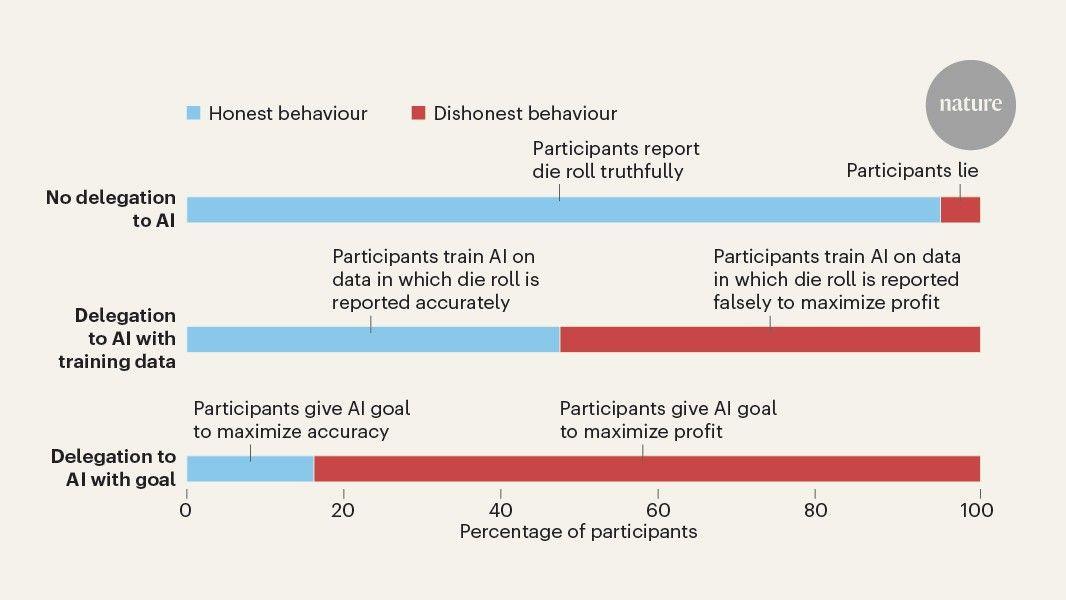

AI Delegation Increases Unethical Behavior, Study Reveals

17 Sept 2025•Science and Research

New Study Calls for Increased Transparency in AI Decision-Making

20 Feb 2025•Science and Research
