Anthropic explores UK startup's AI inference chips promising 100x speed boost at fraction of cost

2 Sources

[1]

Anthropic in early talks to buy DRAM-less AI inference chips from UK startup -- Fractile's SRAM architecture reduces need for pricey memory during extreme pricing and shortage crunch

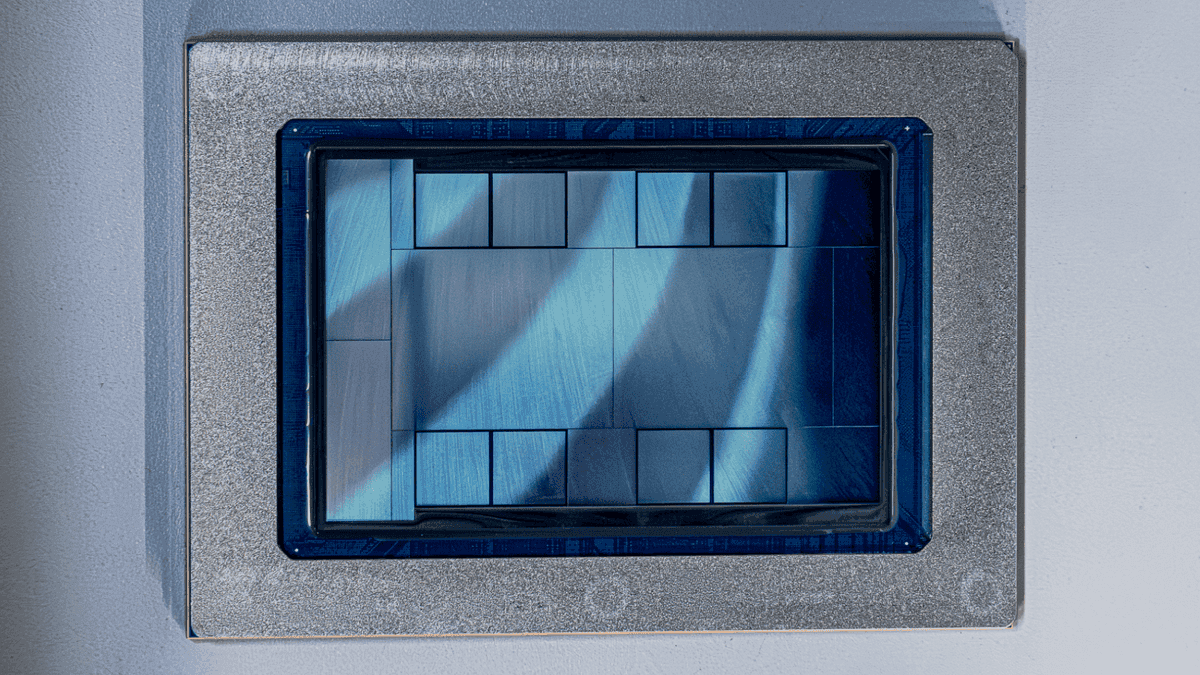

The Claude developer is exploring a fourth chip supplier alongside Nvidia, Google, and Amazon.

Anthropic has held early discussions with London-based chip startup Fractile about purchasing the company's inference accelerators, The Information reported on Friday, citing people familiar with the matter. The talks would add Fractile as a fourth source of AI server silicon for Anthropic, which already uses chips from Nvidia, Google, and Amazon. Fractile's chips aren't expected to reach commercial readiness until around 2027, placing any deployment well outside Anthropic's near-term procurement plans and roughly within the same window as its Google-Broadcom TPU partnership.

Founded in 2022 by Oxford PhD Walter Goodwin, Fractile is developing an inference chip that co-locates memory and compute on the same die, using SRAM rather than shuttling data to and from separate DRAM chips. Moving data between a GPU and off-chip DRAM is one of the main bottlenecks in running large AI models at speed. Goodwin told Fortune in July 2024 that Fractile's design stores the data needed for computations directly next to the transistors that perform the arithmetic, rather than relying on off-chip DRAM. Based on simulations at the time, Goodwin said Fractile could run a large language model 100 times faster and 10 times cheaper than Nvidia's GPUs, though the company had not yet manufactured test chips.

The company raised $15 million in seed funding, co-led by Kindred Capital, the NATO Innovation Fund, and Oxford Science Enterprises. Fractile is now in talks to raise $200 million at a valuation of more than $1 billion, with Founders Fund, 8VC, and Accel among the potential investors. The Fractile team reportedly includes engineers from Graphcore, Nvidia, and Imagination Technologies, and the company is building its own software stack alongside the hardware.
Anthropic has deliberately avoided dependence on any single chip vendor, running Claude on Nvidia GPUs, Amazon's Trainium processors through Project Rainier, and Google's TPUs under a deal announced in October that provided over 1GW of compute capacity. In early April, that TPU commitment expanded to 3.5GW of capacity from 2027 through 2031.

The interest in Fractile coincides with surging demand on Anthropic's existing infrastructure. The company's annualized revenue run rate passed $30 billion in March, up from around $9 billion at the end of 2025, and its inference costs have been a drag on gross margins. Unlike OpenAI and xAI, which are building or expanding their own massive data center footprints, Anthropic has opted to rent capacity from multiple providers and gain negotiating leverage through a diversified chip supply.

Fractile is one of several inference-focused startups pursuing SRAM-based or near-memory architectures, including Groq and Cerebras. Nvidia struck a $20 billion acquisition deal with Groq in December and subsequently launched its own dedicated inference accelerator, Groq 3 LPX, acknowledging the growing commercial pressure to optimize cost-per-token at scale.
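To make the data-movement bottleneck concrete, here is a simplified back-of-envelope sketch. It assumes a common rule of thumb rather than anything Fractile or Anthropic has stated: when generating tokens for a single user, every model weight must be read from memory once per token, so memory bandwidth caps decode speed. The function name, the 8-billion-parameter model, and both bandwidth figures are hypothetical and chosen only for round numbers.

```python
def max_decode_tokens_per_s(params: float, bytes_per_param: float,
                            bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode speed if each generated token
    must stream every weight from memory once.

    Simplifying assumption: compute, KV-cache reads, batching, and
    chip-to-chip interconnect are all ignored.
    """
    weight_bytes = params * bytes_per_param
    return bandwidth_bytes_per_s / weight_bytes


if __name__ == "__main__":
    # Hypothetical model: 8B parameters stored in 16-bit (2-byte) precision.
    PARAMS = 8e9
    BYTES_PER_PARAM = 2

    # Hypothetical bandwidths in bytes/s; neither is a vendor figure.
    DRAM_BW = 4e12    # off-chip DRAM feeding a processor
    SRAM_BW = 100e12  # aggregate on-chip SRAM across the chips holding the weights

    dram_ceiling = max_decode_tokens_per_s(PARAMS, BYTES_PER_PARAM, DRAM_BW)
    sram_ceiling = max_decode_tokens_per_s(PARAMS, BYTES_PER_PARAM, SRAM_BW)
    print(f"DRAM-bound ceiling: {dram_ceiling:,.0f} tokens/s")
    print(f"SRAM-bound ceiling: {sram_ceiling:,.0f} tokens/s")
    print(f"Ratio: {sram_ceiling / dram_ceiling:.0f}x")
```

Under this simplified model the speed ceiling scales linearly with bandwidth, so a 25x bandwidth advantage yields at most a 25x speed advantage. Real systems are also limited by compute, interconnect, and on-chip memory capacity, so the sketch says nothing about whether Fractile's simulated 100x figure will hold up in silicon.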

[2]

Anthropic Eyes UK Startup's Fusion Tech Promising 100x Faster AI Inference at One-Tenth the Cost of NVIDIA's Groq

Anthropic, the creator of Claude AI, is reportedly in early talks with a UK startup that claims its SRAM-based technology can speed up AI inference by 100x and cut costs by 10x. Anthropic currently sources its chips from several companies, including NVIDIA, Google, and Amazon. Using multiple suppliers lets the company run its AI infrastructure without the risks that often come with relying on a single chipmaker. But as compute demand intensifies across the AI industry, many AI firms are now looking to invest in in-house chips tailored to their requirements.

According to recent reporting by The Information, Anthropic is in early talks with a UK-based startup called Fractile. Fractile has been drawing attention for what it calls its Memory Compute Fusion Architecture. The architecture reduces how much data has to move to and from DRAM, lowering reliance on off-chip memory and keeping most data movement on the chip itself. To do this, the company relies on its own SRAM-based design, an approach similar to NVIDIA's Groq LPUs and the Groq 3 LPX.

NVIDIA's acquisition of Groq allowed it to integrate the latest LPU into its upcoming Vera Rubin ecosystem. These chips act as AI inference boosters by combining large amounts of SRAM with very high on-chip and scale-up bandwidth. NVIDIA describes the Groq 3 LPU as an inference accelerator packing 500 MB of SRAM, 150 TB/s of SRAM bandwidth, and 2.5 TB/s of scale-up bandwidth. These are packaged in the Groq 3 LPX rack, which houses 256 LPUs and a combined 128 GB of SRAM for low-latency processing.

Fractile's solution is similar, though the company claims its architecture targets a 100x speedup in AI inference at one-tenth the cost. Those figures came from simulations the company described in 2024 and were compared against NVIDIA's GPUs rather than Groq hardware. The team working on the project includes engineers from firms such as NVIDIA, Graphcore, and Imagination Technologies.
These are big numbers, but they come from simulations: the company had not yet manufactured test chips when it first made the claims, so any deal with Anthropic would be an early bet rather than an immediate supply arrangement. Anthropic still relies heavily on external chipmakers; it signed a multi-gigawatt deal with Broadcom, and reports suggest it may soon add AMD to its compute portfolio as well.
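The Groq 3 rack figures quoted above can be checked with simple arithmetic, and the same arithmetic shows why SRAM-based designs spread a model across many chips: each LPU holds only 500 MB. This sketch assumes decimal units (1 GB = 1,000 MB) and uses a hypothetical 70-billion-parameter model stored at one byte per parameter. The helper functions are illustrative names, and the calculation counts weight storage only; it does not reflect anything Groq, NVIDIA, or Fractile has said about how models are actually partitioned.

```python
import math

SRAM_PER_LPU_MB = 500  # per-LPU SRAM capacity, as reported
LPUS_PER_RACK = 256    # LPUs per Groq 3 LPX rack, as reported


def rack_sram_gb(sram_per_chip_mb: int, chips: int) -> float:
    """Total on-chip SRAM across a rack, in decimal GB (1 GB = 1,000 MB)."""
    return sram_per_chip_mb * chips / 1000


def chips_to_hold_weights(weight_mb: int, sram_per_chip_mb: int) -> int:
    """Minimum number of chips whose combined SRAM can hold the weights.

    Assumption: weights only; KV cache, activations, and any SRAM
    reserved for other purposes are ignored.
    """
    return math.ceil(weight_mb / sram_per_chip_mb)


if __name__ == "__main__":
    print(f"Rack SRAM: {rack_sram_gb(SRAM_PER_LPU_MB, LPUS_PER_RACK):.0f} GB")

    # Hypothetical model: 70B parameters at 1 byte each = 70,000 MB of weights.
    needed = chips_to_hold_weights(70_000, SRAM_PER_LPU_MB)
    print(f"LPUs needed just to hold the weights: {needed} of {LPUS_PER_RACK}")
```

Under these assumptions, 256 × 500 MB reproduces the reported 128 GB, and the hypothetical model's weights alone would occupy 140 LPUs, more than half a rack before any working memory is counted.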


Anthropic has entered early talks with London-based Fractile about purchasing the startup's AI inference chips. Based on simulations, the UK startup says its SRAM-based architecture could run large language models 100 times faster and 10 times cheaper than NVIDIA's GPUs by reducing the memory bottleneck. The move would add a fourth chip supplier alongside NVIDIA, Google, and Amazon.

Anthropic Explores Partnership with UK Startup Fractile

Anthropic has entered early discussions with UK startup Fractile about purchasing the company's AI inference chips, according to reports from The Information [1]. The Claude AI developer would add Fractile as a fourth source of AI server silicon, joining its existing suppliers NVIDIA, Google, and Amazon. Founded in 2022 by Oxford PhD Walter Goodwin, Fractile has attracted attention for its approach to one of the most persistent challenges in AI computing: the memory bottleneck that limits how quickly models can process information.

Source: Wccftech

The timing aligns with Anthropic's rapid growth. The company's annualized revenue run rate surpassed $30 billion in March, up from around $9 billion at the end of 2025 [1]. This surge in demand has intensified pressure on existing infrastructure, and inference costs have been a drag on gross margins. Unlike competitors such as OpenAI and xAI, which are building or expanding their own massive data centers, Anthropic has opted to rent capacity from multiple providers and gain negotiating leverage through a diversified chip supply.

DRAM-less AI Inference Chips Target Memory Bottleneck

Fractile's approach centers on what it calls its Memory Compute Fusion Architecture, a design that rethinks how AI chips handle data [2]. The architecture co-locates memory and compute on the same die, using SRAM rather than shuttling data to and from separate DRAM chips. This is meant to avoid the data movement between processors and off-chip DRAM that is one of the main bottlenecks in running large AI models at speed [1].

Goodwin explained to Fortune in July 2024 that Fractile's design stores the data needed for computations directly next to the transistors that perform the arithmetic. Based on simulations at that time, the company projected it could run a large language model 100 times faster and 10 times cheaper than NVIDIA's GPUs, though it had not yet manufactured test chips [1]. Fractile is one of several inference-focused startups pursuing SRAM-based or near-memory architectures, including Groq and Cerebras.

Investment Momentum and Timeline Considerations

Fractile raised $15 million in seed funding co-led by Kindred Capital, the NATO Innovation Fund, and Oxford Science Enterprises [1]. The startup is now in talks to raise $200 million at a valuation exceeding $1 billion, with Founders Fund, 8VC, and Accel among potential investors. The Fractile team reportedly includes engineers from Graphcore, NVIDIA, and Imagination Technologies, and the company is building its own software stack alongside the hardware [1].

However, Fractile's chips aren't expected to reach commercial readiness until around 2027, placing any deployment well outside Anthropic's near-term procurement plans [1]. This timeline roughly aligns with Anthropic's Google-Broadcom TPU partnership, which in early April expanded to 3.5GW of compute capacity from 2027 through 2031.

Competitive Landscape Shifts as NVIDIA Responds

The market for faster AI inference has heated up since NVIDIA's $20 billion acquisition deal with Groq in December [1]. NVIDIA subsequently launched its own dedicated inference accelerator, Groq 3 LPX, acknowledging the growing commercial pressure to optimize cost-per-token at scale. The Groq 3 LPU packs 500 MB of SRAM, 150 TB/s of SRAM bandwidth, and 2.5 TB/s of scale-up bandwidth, and the LPX rack houses 256 LPUs with a combined 128 GB of SRAM for low-latency processing [2].

Anthropic's effort to reduce inference expenses through diversified sourcing reflects a broader industry trend. The company has deliberately avoided dependence on any single chip vendor, running Claude on NVIDIA GPUs, Amazon's Trainium processors through Project Rainier, and Google's TPUs under a deal announced in October that provided over 1GW of compute capacity [1]. Separately, reports suggest Anthropic may soon add AMD to its compute portfolio [2]. Whether Fractile's technology can deliver on its performance promises remains to be seen, particularly since its 100x and 10x figures came from simulations made before the company had manufactured test chips.

Source: Tom's Hardware

References

Summarized by

Navi
