Florida launches criminal probe into OpenAI after ChatGPT advised FSU shooter on weapons, timing

27 Sources

[1]

Florida probes ChatGPT role in mass shooting. OpenAI says bot "not responsible."

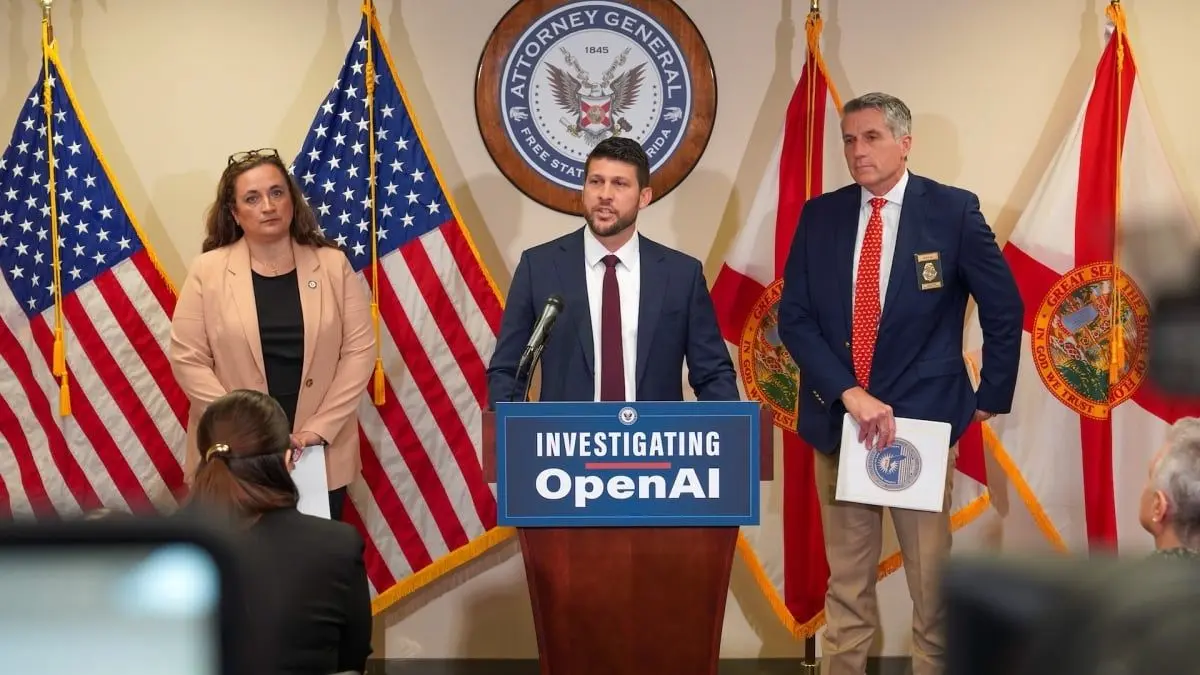

OpenAI now faces a criminal probe after ChatGPT advised a gunman ahead of a mass shooting at a university in Florida, where two people were killed and six were wounded last year. In a press release, Florida Attorney General James Uthmeier confirmed that the investigation into OpenAI's potential criminal liability was launched after reviewing shocking chat logs between ChatGPT and an account linked to the suspected gunman, Phoenix Ikner. The 20-year-old Florida State University student is currently awaiting trial "on multiple charges of murder and attempted murder," Politico reported. At a press conference, Uthmeier revealed that the logs showed that ChatGPT provided "significant advice" before Ikner allegedly "committed such heinous crimes." The attorney general emphasized that under Florida's aiding and abetting laws, "if ChatGPT were a person," it too "would be facing charges for murder." For OpenAI, the probe will test whether the company can be held criminally liable for ChatGPT's outputs. In a statement provided to Ars, OpenAI's spokesperson, Kate Waters, said that the company expects the answer to that question will be no. "Last year's mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime," Waters said. But Uthmeier is not so sure, and that's why Florida must urgently investigate. At the press conference, he noted that law enforcement is "venturing into uncharted territory" attempting to monitor criminal activity connected to AI tools. Uthmeier said that mounting chatbot-linked public safety risks -- including suicide, child sexual abuse materials, fraud, and murder -- must be thoroughly probed so that the public definitively knows if firms like OpenAI are liable for harms their products allegedly cause. "Florida is leading the way in cracking down on AI's use in criminal behavior," Uthmeier said in the press release. "This criminal investigation will determine whether OpenAI bears criminal responsibility for ChatGPT's actions in the shooting at Florida State University last year."

ChatGPT accused of aiding and abetting

Uthmeier told press that ChatGPT advised the suspected shooter on what type of gun to use, the ammunition he should get, and whether or not a gun would be useful at short range. These facts would likely be easy to find online if a person were so motivated, but Uthmeier suggested that ChatGPT played a role that went deeper than the average browser search might go. Troublingly, the chatbot also advised as to what time of day the most people would be on campus and where exactly on campus he might find higher populations of students gathered. Those insights show how AI can almost instantly combine public data in fresh ways that could have harmful, wide-sweeping impacts that firms like OpenAI should be detecting and mitigating, Florida officials seem to think. To protect the public, Uthmeier issued subpoenas requesting more information, including a wide range of OpenAI's policies and internal training materials. Demanding transparency, he's intent on figuring out how ChatGPT is designed to navigate harmful use cases. Specifically, he wants to know when OpenAI decides to report "possible past, present and future crimes" planned using ChatGPT, the press release said. Uthmeier stressed that he understood that ChatGPT is not a person and cannot be charged with aiding and abetting. But he said that OpenAI could be liable if the company was aware that such "dangerous behavior might take place" and failed to intervene. That's why he has asked for organization charts outlining key leadership. He's determined to find out "who knew what, designed what, or should have known what" was happening when bad actors attempt to plan crimes like the FSU shooting using ChatGPT. If Florida officials discover that OpenAI leadership knew of criminal activity and prioritized profits over public safety, "then people need to be held accountable," Uthmeier said. "I'm a big believer in limited government," Uthmeier said. "I believe government should only interfere in business activities when you have significant harm to our people. This is that."

OpenAI cooperating with officials

Waters told Ars that OpenAI continues to cooperate with the authorities who are investigating the mass shooting and early on "identified a ChatGPT account believed to be associated with the suspect and proactively shared this information with law enforcement." The company maintains that ChatGPT did nothing more than surface information already accessible online and, therefore, it cannot be blamed for assisting the suspected gunman. As OpenAI tells it, unlike in lawsuits accusing ChatGPT of encouraging suicide and murder, ChatGPT did not urge the gunman to take any illegal or harmful actions. "In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the Internet, and it did not encourage or promote illegal or harmful activity," Waters said. However, Uthmeier said at the press conference that OpenAI had committed to taking additional steps to perhaps limit ChatGPT's potential to be used to advise a mass shooting. "Now OpenAI has indicated that they believe improvements and changes need to be made," Uthmeier said. "I hope they're right. I hope they're right. We cannot have AI bots that are advising people on how to kill others." Waters did not comment on any updates to ChatGPT since the shooting, instead seeming to emphasize that the gunman's use of ChatGPT was not typical. "ChatGPT is a general-purpose tool used by hundreds of millions of people every day for legitimate purposes," Waters said. "We work continuously to strengthen our safeguards to detect harmful intent, limit misuse, and respond appropriately when safety risks arise."

[2]

ChatGPT Helped Plan FSU Shooting, Florida Officials Say

In April 2025, a man opened fire on Florida State University's campus, killing two adults and injuring six others. The shooter faces charges of murder and attempted murder. Now, Florida officials are investigating OpenAI, the creator of the chatbot ChatGPT, to determine whether the company should be criminally held responsible as well. Florida Attorney General James Uthmeier said in an announcement on April 9 that officials "learned that ChatGPT may likely have been used to assist the murderer" in the shooting. "As big tech rolls out these technologies, they should not, they cannot, put our safety and security at risk," Uthmeier added. On Tuesday, Uthmeier launched a criminal investigation into OpenAI and ChatGPT. (Disclosure: Ziff Davis, CNET's parent company, filed a lawsuit against OpenAI in 2025, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.) Although ChatGPT and other chatbots have been involved in lawsuits over alleged involvement in deaths and harm, this marks the first time that ChatGPT and OpenAI are the subject of a criminal investigation. An OpenAI representative didn't immediately respond to a request for comment. "Last year's mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime," a spokesperson for the company told NPR. The spokesperson said that ChatGPT "provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity." A criminal investigation is conducted by law enforcement and public officials to determine who is criminally liable for a crime. During an April 21 press conference, Uthmeier said that officials determined the criminal investigation was necessary after discovering that "ChatGPT offered significant advice to the shooter before he committed such heinous crimes." 
"The communication between ChatGPT and the shooter revealed that the chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful in short range," Uthmeier said during the press conference, adding that the chatbot also allegedly gave advice on what time of day and what area of campus would result in the shooter coming into contact with more people. "My prosecutors have looked at this, and they've told me, if it was a person on the other end of that screen, we would be charging them with murder," Uthmeier said. Florida law states that the "aider and abettor" is as criminally responsible for a crime as the perpetrator. However, because ChatGPT is not a person, Uthmeier said that this is "uncharted territory," but Florida officials still want to determine if OpenAI has any culpability in the crime. Uthmeier said that the Office of Statewide Prosecution has subpoenaed OpenAI for multiple policies, employee information and information relating to the Florida State University shooting. Although this is the first time ChatGPT and OpenAI have been the focus of a criminal investigation, the company and others that have developed chatbots are no strangers to lawsuits. The parents of a 23-year-old man who died by suicide in July of 2025 sued OpenAI late that year in a wrongful death lawsuit, claiming the chatbot worsened his depression and pressured him into suicide. In October 2025, OpenAI announced that ChatGPT was updated "to better recognize and support people in moments of distress." Google's Gemini was recently named in a similar lawsuit after the family of a 36-year-old man who died by suicide said the chatbot coached him through it. In response to the lawsuit, Google said, in part, that "Gemini is designed to not encourage real-world violence or suggest self-harm," later adding: "In this instance, Gemini clarified that it was AI and referred the individual to a crisis hotline many times." 
Both lawsuits are still unresolved. In response to Florida's probe, lawyers representing one of the victims of the FSU shooting said they plan to "file suit against ChatGPT, and its ownership structure, very soon, and will seek to hold them accountable for the untimely and senseless death of our client." A spokesperson for OpenAI told WCTV: "Our hearts go out to everyone affected by this devastating tragedy. After learning of the incident in late April 2025, we identified a ChatGPT account believed to be associated with the suspect, proactively shared this information with law enforcement and cooperated with authorities. We build ChatGPT to understand people's intent and respond in a safe and appropriate way, and we continue improving our technology."

[3]

Florida launches criminal probe into OpenAI and ChatGPT over deadly shooting

WASHINGTON, April 21 (Reuters) - Florida Attorney General James Uthmeier said on Tuesday the state was launching a criminal probe into OpenAI and its artificial intelligence app ChatGPT over a deadly shooting last year that killed two people at Florida State University. A gunman killed two people and wounded six others at Florida State University in April last year before he was shot by officers and hospitalized. The suspect was charged with multiple counts of murder and attempted murder. "The chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful at short range," Uthmeier said in a press briefing. "If it was a person on the other end of that screen, we would be charging them with murder." Uthmeier's office said the investigation will determine whether "OpenAI bears criminal responsibility for ChatGPT's actions in the shooting." The Office of Statewide Prosecution subpoenaed OpenAI for some information and records, it added. The rise of AI has fed a host of concerns ranging from worries that electricity demand by data centers could raise power prices for consumers, to fears that the technology could cost workers their jobs or be used to disrupt the democratic process, turbocharge fraud or help people plan criminal activities. An OpenAI spokeswoman told U.S. media that the shooting was a tragedy but the company had no responsibility. The spokeswoman said that after learning of the incident, OpenAI identified a ChatGPT account believed to be associated with the suspect and "proactively shared this information with law enforcement." "In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity," the OpenAI spokeswoman said. 
Reporting by Kanishka Singh in Washington; Editing by David Gregorio

[4]

Florida AG opens criminal investigation into OpenAI and ChatGPT

Florida Attorney General James Uthmeier has announced that the state's Office of Statewide Prosecution has opened a criminal investigation into OpenAI and ChatGPT. The investigation was opened because the suspect in a mass shooting at Florida State University in 2025 reportedly used ChatGPT in the lead-up to the shooting. Per Uthmeier, "Florida law states that anyone who aids, abets, or counsels someone in the commission of a crime, and that crime is committed or attempted, may be considered a principal to the crime." That means that the responses provided by ChatGPT to the shooter could be interpreted as the AI assistant aiding and abetting his actions. Or at least that's what Florida seems interested in arguing. OpenAI provided the following statement when asked to comment on the Florida investigation: As part of the investigation, Florida has subpoenaed OpenAI for information on "all policies and internal training materials" related to how the company handles things like users threatening to harm others, threatening to harm themselves and how OpenAI responds to law enforcement. The state is also asking OpenAI to share its organizational chart and any publicly released statements on the shooting. "Florida is leading the way in cracking down on AI's use in criminal behavior, and if ChatGPT were a person, it would be facing charges for murder," Attorney General James Uthmeier said. "This criminal investigation will determine whether OpenAI bears criminal responsibility for ChatGPT's actions in the shooting at Florida State University last year." Florida's investigation isn't the first time OpenAI has been connected to a mass shooting. Canadian regulators called for OpenAI to change how it approaches threats of harm following a Wall Street Journal report that claimed the company flagged the account of a Canadian shooting suspect in 2025 but failed to bring their threats to law enforcement. 
The company agreed to new policies around how it works with Canadian law enforcement in March. Separately, OpenAI is still in the midst of a wrongful death lawsuit from 2025 for the role it may have played in the suicide of a teenage user.

[5]

Florida's attorney general launches criminal probe into ChatGPT over FSU shooting

ORLANDO, Fla. (AP) -- Florida's attorney general on Tuesday opened an investigation into OpenAI's ChatGPT over whether there was criminal behavior involving interactions between the artificial intelligence app and a gunman who killed two people and wounded six others last year at Florida State University. Attorney General James Uthmeier said that prosecutors had done an initial review of chat logs between ChatGPT and the gunman, Phoenix Ikner, to determine if the AI app aided or abetted the crime. "This criminal investigation will determine whether OpenAI bears criminal responsibility for ChatGPT's actions in the shooting at Florida State University last year," Uthmeier said at a news conference in Tampa. Florida's Office of Statewide Prosecution has subpoenaed OpenAI for records of its policies and training materials regarding threats to harm others, and for its policies on reporting "possible past, present, or future crime," according to the attorney general's office. OpenAI spokeswoman Kate Waters called the FSU shooting a tragedy but said the company had no responsibility. The company proactively shared information with law enforcement and continues to cooperate with investigators, she said Tuesday. "In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity," Waters said in an email. Ikner faces two counts of first-degree murder and several counts of attempted first-degree murder in the shooting that terrorized the campus in Florida's capital city. Ikner is the stepson of a local sheriff's deputy, and investigators say he used his stepmother's former service weapon to carry out the shooting. Prosecutors in the case intend to seek the death penalty. Uthmeier, a Republican, was named to the position by Florida Gov. Ron DeSantis, after the GOP governor appointed then-Attorney General Ashley Moody to the U.S. 
Senate seat vacated by Marco Rubio when he became the secretary of state in President Donald Trump's second administration. Uthmeier is running in November to be elected to the position on his own.

Charles Sheehan in New York contributed to this report.

[6]

OpenAI faces criminal probe over role of ChatGPT in shooting

OpenAI is facing a criminal investigation in the US over whether its ChatGPT technology played a part in the murder of two people during a mass shooting at Florida State University last year. Florida's Attorney General James Uthmeier said on Tuesday his office had been looking into the use of the artificial intelligence (AI) chatbot by a man who allegedly shot several people at the campus in Tallahassee. "Our review has revealed that a criminal investigation is necessary," Uthmeier said. "ChatGPT offered significant advice to this shooter before he committed such heinous crimes." An OpenAI spokesperson said: "ChatGPT is not responsible for this terrible crime." It appears to be the first time OpenAI has been under criminal investigation over the use of ChatGPT by someone who allegedly went on to commit a crime. OpenAI's spokesperson said the company has cooperated with authorities and it "proactively shared" information about "a ChatGPT account believed to be associated with the suspect". OpenAI was co-founded by Sam Altman. He and the company quickly joined the most well-known names in the technology industry after the release of ChatGPT in 2022, which is now one of the most widely used AI tools in the world. As for how the suspect, 20-year-old FSU student Phoenix Ikner, who is now in jail awaiting trial, interacted with ChatGPT, OpenAI's spokesperson said the chatbot "did not encourage or promote illegal or harmful activity". "In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet." However, Uthmeier said ChatGPT "advised the shooter on what type of gun to use" and on types of ammunition. He said ChatGPT also advised the shooter on "what time of day... and where on campus the shooter could encounter a higher population". 
"My prosecutors have looked at this, and they told me that if it was a person on the other end of that screen, we would be charging them with murder," said Uthmeier. He added that, under Florida law, anyone who "aids, abets or counsels someone" in attempting to commit or committing a crime is considered a "principal" in the crime. While ChatGPT is not considered a person, Uthmeier said his office needs to determine "criminal culpability" for the company behind the bot, OpenAI. The company is already facing a lawsuit over another incident in which its chatbot may have been a factor. Earlier this year, an 18-year-old man shot and killed nine people and injured two dozen others in British Columbia. OpenAI said after the incident, it had identified and banned the shooter's account based on his usage, but did not refer the matter to police. The company has said it intends to strengthen its safety measures. The parents of a young girl who was injured in the attack filed a lawsuit against the company. Last year, a coalition of 42 state attorneys general sent a letter to 13 tech companies with AI chatbots, including OpenAI, Google, Meta and Anthropic. The letter outlined their concerns over an increase in AI usage by people "who may not realize the dangers they can encounter" and called for "robust safety testing, recall procedures, and clear warnings to consumers". The letter also cited a growing number of "tragedies all across the country," including murders and suicides that apparently involved some usage of AI.

[7]

Florida opens first criminal AI probe into OpenAI

Attorney General James Uthmeier said prosecutors reviewed chat logs showing ChatGPT advised the suspect on weapons, ammunition, and timing. The probe is the first criminal investigation into an AI company over an alleged role in a mass shooting in the US. Florida Attorney General James Uthmeier announced on Tuesday that the state's Office of Statewide Prosecution has opened a criminal investigation into OpenAI over the alleged role of its ChatGPT chatbot in the April 2025 mass shooting at Florida State University. The shooting, which killed two people and injured six others near the student union on FSU's Tallahassee campus, was carried out by Phoenix Ikner, 21, a student at the university at the time. His trial is set to begin on 19 October 2026. More than 200 AI messages have been entered into evidence in the case. Uthmeier said an initial review of Ikner's ChatGPT chat logs showed the suspect had used the tool to seek advice before carrying out the attack, including what type of gun to use, what ammunition was appropriate, what time of day to go to campus to encounter more people, and which locations on campus would have a higher population. "My prosecutors have looked at this and they've told me, if it was a person on the other end of that screen, we would be charging them with murder," Uthmeier said at a press conference in Tampa. "ChatGPT offered significant advice to the shooter before he committed such heinous crimes. We cannot have AI bots that are advising people on how to kill others." OpenAI has been subpoenaed for information about its policies and internal training materials regarding user threats of harm to others and self-harm, as well as its policies for reporting possible crimes. The company's spokesperson Kate Waters said: "Last year's mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime." 
OpenAI said it had proactively shared information about the alleged shooter's account with law enforcement after the shooting and continues to cooperate with authorities. The company has maintained that ChatGPT provided only general, factual responses based on widely available information. A criminal investigation into an AI company over an alleged role in a mass shooting is, as multiple legal experts have noted, unprecedented in the United States. Uthmeier had already announced a civil investigation into ChatGPT's role in the FSU shooting, which is ongoing. Attorneys representing the family of one of the victims have announced plans to sue OpenAI. The criminal probe is a significant escalation: it opens the question of whether an AI company could be held criminally liable for responses its system generates, a question with no established legal precedent under current US law. The Florida investigation is part of a broader pattern of legal pressure on AI chatbot companies over alleged contributions to violent incidents. OpenAI is already facing a lawsuit from the family of a victim critically injured in a mass shooting in British Columbia in February 2026 that killed eight people and injured dozens more. The alleged gunman, an 18-year-old, had discussed gun violence scenarios with ChatGPT and was banned from the platform months before the shooting, but reportedly evaded detection and created another account. OpenAI said it had identified and banned the user but did not alert law enforcement at the time. Separately, a wrongful death lawsuit filed against Google in March over the suicide of a Florida man alleges that its Gemini chatbot pushed the man toward planning a mass casualty attack. 
OpenAI has said it is working with mental health experts to improve how ChatGPT responds to signs of mental or emotional distress, and that it has taken steps to strengthen its safeguards after the British Columbia case, including changing when it chooses to alert law enforcement about potentially violent activities.

[8]

Florida's Attorney General Opens Criminal Investigation Into OpenAI's Role in Mass Shooting

Florida has been home to some high-profile deaths tied to the use of artificial intelligence and State Attorney General James Uthmeier wants to figure out what is going on. Florida's top prosecutor announced Tuesday that his office is launching an investigation into OpenAI to determine if the company should face any criminal liability for the deaths tied to its popular chatbot. Uthmeier's announcement makes good on his previous promise to investigate OpenAI. The probe will focus primarily on a mass shooting on the Florida State University campus in Tallahassee on April 17, 2025, in which a student gunman opened fire, killing two and injuring six. Earlier this month, an attorney representing the family of one of the people killed in the shooting announced intentions to sue OpenAI after learning the shooter was allegedly in "constant communication with ChatGPT leading up to the shooting." That seems to have spurred Florida's Attorney General into action, as Uthmeier revealed plans to investigate OpenAI just days later. Not much is known publicly at this point about the involvement of ChatGPT in the shooting, though the attorney for the family of one of the victims told the Tallahassee Democrat, "We also have reason to believe that ChatGPT may have advised the shooter how to commit these heinous crimes." In a statement, Attorney General Uthmeier explained, "This criminal investigation will determine whether OpenAI bears criminal responsibility for ChatGPT's actions in the shooting at Florida State University last year." He also said, "If ChatGPT were a person, it would be facing charges for murder." Per the AG's office, it has already subpoenaed OpenAI for information, including "all policies and internal training materials" regarding user threats of harm to others, user threats of harm to self, and "cooperation with law enforcement, including policies for the reporting of possible past, present, or future crime." 
It has also requested organizational charts listing "executives, directors, department heads, and/or senior managers of OpenAI" at the time of the shooting and "a listing of all employees, including affiliated departments and titles or role description(s), within ChatGPT." Gizmodo reached out to OpenAI for comment regarding the investigation, but did not receive a response at the time of publication. While the investigation appears to be narrowly focused on the FSU shooting, it's possible, even likely, that Uthmeier's office will be back for more. In the lead-up to this investigation, the AG said he would seek to discover how AI contributes to "child sex abuse material" and the "encouragement of suicide and self-harm." A Florida family is currently engaged in a wrongful death lawsuit against a different AI company, Character.ai, following the suicide of their teenage son. Another Florida family has sued Google over the death of their 36-year-old son, who reportedly was encouraged to kill himself by Gemini. Uthmeier has also expressed an interest in investigating the risk of AI "falling into the hands of America's enemies, such as the Chinese Communist Party," which frankly seems less pressing than the apparent ongoing epidemic of AI psychosis currently being brought on by American companies. Maybe stick to the domestic threats, man, you've got plenty to work with.

[9]

Florida AG launches criminal investigation into ChatGPT over FSU shooting

Police investigate the scene of a shooting near the student union at Florida State University on April 17, 2025 in Tallahassee, Florida. Two people were killed and five injured in the attack. Florida's attorney general is now investigating OpenAI because the alleged shooter used ChatGPT to help plan the attack. Miguel J. Rodriguez Carrillo/Getty Images

Florida's attorney general is launching a criminal investigation into ChatGPT and its parent company OpenAI over claims that the accused gunman in a shooting at Florida State University last year consulted the AI chatbot before killing two people and injuring five more. The Republican attorney general, James Uthmeier, said at a press conference in Tampa on Tuesday that accused gunman Phoenix Ikner consulted ChatGPT for advice before the shooting, including what type of gun to use, what ammunition went with it, and what time to go to campus to encounter more people, according to an initial review of Ikner's chat logs. "My prosecutors have looked at this and they've told me, if it was a person on the other end of that screen, we would be charging them with murder," Uthmeier said. "We cannot have AI bots that are advising people on how to kill others." OpenAI spokesperson Kate Waters said in a written statement to NPR: "Last year's mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime." She said the company reached out to share information about the alleged shooter's account with law enforcement after the shooting and continues to cooperate with authorities. Uthmeier's office is issuing subpoenas to OpenAI seeking information about its policies and internal training materials related to user threats of harm and how it cooperates with and reports crimes to law enforcement, dating back to March 2024. 
At the press conference, Uthmeier acknowledged the investigation is entering into uncharted territory and is uncertain about whether OpenAI has criminal liability. "We are going to look at who knew what, designed what, or should have done what," he said. "And if it is clear that individuals knew that this type of dangerous behavior might take place, that these types of unfortunate, tragic events might take place, and nevertheless still turned to profit, still allowed this business to operate, then people need to be held accountable." OpenAI's Waters said that the chatbot "provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity." She continued: "ChatGPT is a general-purpose tool used by hundreds of millions of people every day for legitimate purposes. We work continuously to strengthen our safeguards to detect harmful intent, limit misuse, and respond appropriately when safety risks arise." Ikner, 21, is facing multiple charges of murder and attempted murder for the April 2025 shooting near the student union on FSU's Tallahassee campus, where he was a student at the time. His trial is set to begin on Oct. 19. According to court filings, more than 200 AI messages have been entered into evidence in the case.

Growing concerns about AI chatbots

The Florida investigation comes amid growing concerns over the role of AI chatbots in mass violence. Uthmeier had already announced a civil investigation into ChatGPT's role in the FSU shooting, which is ongoing, and attorneys for the family of one of the victims say they plan to sue OpenAI. OpenAI is already facing a lawsuit from the family of a victim critically wounded in an attack in British Columbia in February 2026 that killed eight people and injured dozens more. 
The alleged shooter discussed gun violence scenarios with ChatGPT and was even banned from the platform months before the shooting, but was able to evade detection and create another account, OpenAI told Canadian authorities. The Wall Street Journal reported that OpenAI's internal systems flagged the account's posts and staffers were alarmed enough to consider alerting law enforcement, but that the company decided not to. OpenAI has said it is making changes to "strengthen" its protocol for referring accounts to law enforcement in the aftermath of the Canadian shooting. Lawsuits are also mounting against OpenAI and other makers of AI chatbots alleging they've contributed to mental health crises and suicides. (OpenAI has said the cases are "an incredibly heartbreaking situation" and that it's working with mental health experts to improve how ChatGPT responds to signs of mental or emotional distress.) A wrongful death lawsuit filed against Google in March over the suicide of a Florida man accuses the company's Gemini chatbot of pushing the man to "stage a mass casualty attack near the Miami International Airport [and] commit violence against innocent strangers," according to court documents. In response to that lawsuit, Google said: "Gemini is designed to not encourage real-world violence or suggest self-harm. Our models generally perform well in these types of challenging conversations and we devote significant resources to this, but unfortunately they're not perfect." The company added that in this specific case, Gemini had "referred the individual to a crisis hotline many times."

[10]

Man charged with killing Florida doctoral students allegedly consulted ChatGPT

The man charged with killing two University of South Florida doctoral students from Bangladesh allegedly asked ChatGPT about what happens if a person has been put in a garbage bag and "thrown in a dumpster", according to prosecutors in a court filing. He also allegedly bought duct tape and trash bags in the days leading up to the students' disappearance. Hisham Abugharbieh, 26, has been charged with two counts of premeditated murder in the first-degree with a weapon in the deaths of his roommate, Zamil Limon, 27, and his roommate's girlfriend, Nahida Bristy, 27, the Hillsborough county sheriff's office announced on Saturday. Authorities said Abugharbieh, a former USF student, was Limon's off-campus roommate. Limon's remains were found on Friday morning "within numerous black utility trash bags in advanced stages of decomposition" on the Howard Frankland Bridge over Tampa Bay. Bristy was still missing as of Saturday morning, but prosecutors said in the Saturday filing that "no evidence has been uncovered during the course of the investigation to support any probability Nahida Bristy remains alive". The students disappeared from the USF campus on 16 April. During the missing persons investigation, deputies identified Abugharbieh as Limon's roommate. Authorities said that he was arrested on Friday after they responded to a domestic violence call at his family's home. Prosecutors allege that on 7 April, Abugharbieh ordered duct tape online and that on 11 April he also ordered fire starter, charcoal, trash bags and lighter fuel, per court documents seen by the Guardian. Prosecutors also allege that on 13 April he asked ChatGPT, the artificial intelligence chatbot: "What happens if a human has a put in a black garbage bag and thrown in a dumpster." The filing states that ChatGPT responded that it sounded dangerous, to which Abugharbieh allegedly responded: "How would they find out." 
Two days later, on 15 April, he reportedly asked ChatGPT: "Can a VIN number on a car be changed?" and "Can you keep a gun at home with out a license." Then on 17 April, prosecutors allege that Abugharbieh asked: "are cars checked at the Hillsborough River state park." Prosecutors also say in the filing that Abugharbieh's and Limon's roommate told investigators that between the night of 16 April and the morning of 17 April, they observed Abugharbieh using a gray rolling trolley cart to "move multiple cardboard boxes from within Hisham's room to the compactor dumpster on site". "When asked about the cart and/or boxes, Hisham Abugharbieh advised he removed old clothing he no longer wanted," the filing states. Authorities later searched the compactor, per the filing, and found a wallet containing a student ID and a visa card belonging to Limon and other items. On Sunday, the Hillsborough county sheriff's office reported that human remains had been recovered from the waterways of Tampa Bay, though they have not yet been identified. Court records show that Abugharbieh, who is a US citizen, made an initial court appearance Saturday and was ordered held without bond. A hearing is scheduled for 28 April at 9am. The court filing states that during an interview with investigators, Abugharbieh denied "having any involvement in the disappearance of Zamila Limon and Nahida Bristy". The Hillsborough county public defender's office, which has been appointed to represent him, declined to comment on the case. OpenAI, which operates ChatGPT, did not immediately respond to a request for comment. Authorities have not disclosed a possible motive for the killings.

[11]

Florida AG probes ChatGPT's role in USF student killings

* AI regulation is also on the list of issues state lawmakers will take up during a special session that starts Tuesday.

The other side: OpenAI did not respond to a request for comment on Monday.
* After Uthmeier launched his probe of the company, OpenAI in a statement said it would cooperate with the investigation.

State of play: Hisham Abugharbieh, 26, is accused of killing his roommate, Zamil Limon, and Limon's friend Nahida Bristy, who were last heard from April 16.
* Both were 27-year-old doctoral students from Bangladesh, the Tampa Bay Times reported.
* Abugharbieh, a former USF student, was being held without bail Monday in the Hillsborough County jail on two counts of first-degree murder, among other charges.
* Authorities found Limon's remains Friday in several trash bags thrown out on the Howard Frankland Bridge, according to court records.
* They found a second body Sunday afternoon in the waters near Interstate 275 and Fourth Street North, but as of Monday afternoon, authorities had not released identifying information.

Zoom in: On April 13, three days before the students went missing, Abugharbieh asked ChatGPT what happens if a person is "put in a black garbage bag and thrown in a dumpster," prosecutors wrote in court records.
* Over the next few days, documents state, he returned to the chatbot to ask about guns and vehicle identification. "Will Apple know who is the new iPhone user after the previous user," he entered on April 19.
* On Thursday evening, around the same time deputies announced they believed the missing students were in danger, Abugharbieh wrote to ChatGPT again: "What does missing endangered adult mean."

What they're saying: "We are expanding our criminal investigation into OpenAI to include the USF murders after learning the primary suspect used ChatGPT," Uthmeier posted Monday on X.
* The attorney general initially launched a civil probe into the company but added the criminal inquiry after his office's review of logs between the chatbot and the accused Florida State shooter.
* "If ChatGPT were a person, it would be facing charges for murder," Uthmeier said in a previous news release.

What's next: Abugharbieh will go before a judge for a status conference at 9am Tuesday.

[12]

Florida launches criminal probe of ChatGPT's role in mass shooting

Florida attorney general announced a criminal investigation of OpenAI for FSU shooting. Credit: Courtesy Florida Office of the Attorney General Florida attorney general James Uthmeier announced Tuesday that the state launched a criminal investigation into OpenAI and its flagship product, the artificial intelligence chatbot ChatGPT. The investigation centers on the use of ChatGPT by a gunman who allegedly shot several people at Florida State University in April 2025. The shooting killed two people and injured five others. The suspect, a former student at Florida State University in his early 20s, is awaiting trial for multiple charges of murder and attempted murder. "Unfortunately, what we've seen in our initial review is that ChatGPT offered significant advice to the shooter before he committed such heinous crimes," Uthmeier said at a news conference on Tuesday, according to NBC Miami. Uthmeier offered several examples of such exchanges, including one in which the suspect allegedly asked about the gun's short range power and the type of ammunition the gun used. The New York Times reported that the suspect also prompted the chatbot to answer questions about how the country would respond to a shooting at FSU. Florida law may consider anyone who aids, abets, or counsels someone in a committed or attempted crime as a principal to that crime. In a published statement, Uthmeier said that "...if ChatGPT were a person, it would be facing charges for murder." Mashable contacted OpenAI for comment but didn't receive a response prior to publication. The criminal investigation follows an initial probe launched earlier this month by Uthmeier into ChatGPT's links to "criminal behavior," including the FSU shooting, as well as child sex abuse and the "encouragement of suicide and self-harm." The investigation seeks, among other evidence, OpenAI's policies and internal training materials related to user threats directed toward other people between March 2024 and April 2026. 
A recent report published by the Center for Countering Digital Hate found that many AI chatbots, including ChatGPT, helped test users posing as 13-year-old boys plan violence, including school shootings, knife attacks, political assassinations, and bombing synagogues or political party offices. At the time, OpenAI said it had since introduced a new model different from the one tested jointly by CNN and the Center for Countering Digital Hate. It is unclear which ChatGPT model the alleged FSU shooter used.

[13]

ChatGPT lawsuit claims it advised a shooter on how and where to strike

Florida's attorney general has launched a criminal investigation into OpenAI, alleging that ChatGPT helped plan the mass shooting at Florida State University that killed two people last year. According to The Washington Post, Attorney General James Uthmeier made the announcement at a news conference on Tuesday, claiming the chatbot gave tactical advice to the suspected shooter. "The chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful at short range," Uthmeier said. He didn't hold back on the implications either: "If it was a person on the other end of that screen, we would be charging them with murder." His office has also sent subpoenas to OpenAI, asking the company to explain its policies on how it handles user conversations involving threats of violence. Is OpenAI responsible for what users do with it? OpenAI has pushed back firmly. Spokesperson Kate Waters said, "Last year's mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime." The company claims that ChatGPT provided factual responses to questions, with information that could be found anywhere on the internet, and that it did not encourage or promote illegal activity. Is this just the beginning? This investigation is part of a growing concern around AI chatbots. OpenAI is already under scrutiny after a separate mass shooting in Canada and multiple lawsuits from families who claim ChatGPT contributed to the deaths of loved ones by suicide. AI experts point out that chatbots' guardrails are imperfect. As Carnegie Mellon professor Ramayya Krishnan put it, "The guardrails are not 100 percent effective." Whether OpenAI can be held criminally liable is a question up to the courts to answer, but no one can deny that these AI chatbots can have severe implications on a person's mental health and should only be used with great care.

[14]

Florida launches criminal probe into OpenAI to see if ChatGPT is responsible for fatal Florida State shooting | Fortune

Florida's attorney general on Tuesday opened an investigation into OpenAI's ChatGPT over whether there was criminal behavior involving interactions between the artificial intelligence app and a gunman who killed two people and wounded six others last year at Florida State University. Attorney General James Uthmeier said that prosecutors had done an initial review of chat logs between ChatGPT and the gunman, Phoenix Ikner, to determine if the AI app aided or abetted the crime. "This criminal investigation will determine whether OpenAI bears criminal responsibility for ChatGPT's actions in the shooting at Florida State University last year," Uthmeier said at a news conference in Tampa. Florida's Office of Statewide Prosecution has subpoenaed OpenAI for records of its policies and training materials regarding threats to harm others, and for its policies on reporting "possible past, present, or future crime," according to the attorney general's office. OpenAI spokeswoman Kate Waters called the FSU shooting a tragedy but said the company had no responsibility. The company proactively shared information with law enforcement and continues to cooperate with investigators, she said Tuesday. "In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity," Waters said in an email. Ikner faces two counts of first-degree murder and several counts of attempted first-degree murder in the shooting that terrorized the campus in Florida's capital city. Ikner is the stepson of a local sheriff's deputy, and investigators say he used his stepmother's former service weapon to carry out the shooting. Prosecutors in the case intend to seek the death penalty. Uthmeier, a Republican, was named to the position by Florida Gov. Ron DeSantis, after the GOP governor appointed then-Attorney General Ashley Moody to the U.S. 
Senate seat vacated by Marco Rubio when he became the secretary of state in President Donald Trump's second administration. Uthmeier is running in November to be elected to the position on his own. ___ Charles Sheehan in New York contributed to this report.

[15]

Florida launches probe into OpenAI over ChatGPT's alleged role in shooting

Florida has opened a criminal investigation into OpenAI following a deadly shooting in 2025 at Florida State University, Attorney General James Uthmeier said Tuesday. Authorities are looking into claims that the company's ChatGPT chatbot provided information to the suspect prior to the attack. Florida Attorney General James Uthmeier said on Tuesday the state was launching a criminal probe into OpenAI and its artificial intelligence app ChatGPT over a deadly shooting last year that killed two people at Florida State University. A gunman killed two people and wounded six others at Florida State University in April last year before he was shot by officers and hospitalised. The suspect was charged with multiple counts of murder and attempted murder. "The chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful at short range," Uthmeier said in a press briefing. "If it was a person on the other end of that screen, we would be charging them with murder." Uthmeier's office said the investigation will determine whether "OpenAI bears criminal responsibility for ChatGPT's actions in the shooting." The Office of Statewide Prosecution subpoenaed OpenAI for some information and records, it added. The rise of AI has fed a host of concerns ranging from worries that electricity demand by data centers could raise power prices for consumers, to fears that the technology could cost workers their jobs or be used to disrupt the democratic process, turbocharge fraud or help people plan criminal activities. An OpenAI spokeswoman told US media that the shooting was a tragedy but the company had no responsibility. The spokeswoman said that after learning of the incident, OpenAI identified a ChatGPT account believed to be associated with the suspect and "proactively shared this information with law enforcement." 
"In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity," the OpenAI spokeswoman said.

[16]

Florida's attorney general announces criminal investigation into OpenAI

Florida's Attorney General said his office was issuing subpoenas to OpenAI on Tuesday morning, seeking information about how the leading AI company approaches user threats of harm to themselves and to others. The subpoenas are part of a new criminal investigation into the company, Florida's Attorney General James Uthmeier said in a press conference. The actions are an escalation from Uthmeier's previously announced probe of the AI company, which Uthmeier said will continue as a civil investigation alongside the newly announced criminal investigation. On April 9, Uthmeier announced he would launch an investigation into OpenAI and its ChatGPT tool over national security and safety concerns. Among other concerns, Uthmeier is investigating whether ChatGPT provided any planning assistance to the alleged gunman in the April 2025 Florida State University mass shooting that left two people dead. "We have been looking into the recent FSU shooting, and that shooter's communications with ChatGPT," Uthmeier said in a press conference Tuesday morning. "Our review of that communication has revealed that a criminal investigation is necessary." "ChatGPT offered significant advice to the shooter before he committed such heinous crimes," Uthmeier said, adding "that the chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful in short range." "If this were a person on the other side of the screen, we would be charging them with murder," Uthmeier said. "We cannot have AI bots that are advising others on how to kill others." Uthmeier announced his office was issuing subpoenas seeking information about OpenAI's policies and internal training materials regarding user threats of harm to themselves and others from March 2024 until April 2026. 
The subpoenas will also request information from the same timeframe on all policies and internal training materials regarding how OpenAI cooperates with and reports crime to law enforcement agencies. In addition, the subpoenas seek an organizational chart of OpenAI's leaders and senior managers, as well as a list of all employees working on ChatGPT. In a statement to NBC News in early April, an OpenAI spokesperson said: "We build ChatGPT to understand people's intent and respond in a safe and appropriate way, and we continue improving our technology." "Our ongoing safety work continues to play an important role in delivering these benefits to everyday people, as well as supporting scientific research and discovery," the company said, noting that more than 900 million people use ChatGPT each week. The company did not immediately reply to a request for comment on Tuesday's developments. "We are going to look at who knew what, designed what, or should have done what," Uthmeier said in the Tuesday press conference from behind a lectern that said "Investigating OpenAI." "I'm a big believer in limited government. I believe government should only interfere in business activities when you have significant harm to our people," Uthmeier added. "This is that." The alleged gunman in the FSU shooting, 21-year-old Phoenix Ikner, faces multiple charges related to last year's shooting that killed Robert Morales and Tiru Chabba. According to court documents seen by NBC News, Ikner was exchanging messages with ChatGPT in the minutes before the shooting, asking questions like: "What time is it the busiest in the FSU student union?" and "If there was a shooting at FSU, how would the country react?" Attorneys for Morales, one of the shooting's victims, indicated in early April that they were preparing to file charges against OpenAI. 
"We have been advised that the shooter was in constant communication with ChatGPT leading up to the shooting," said Ryan Hobbs, an attorney for Morales' family. "We also have reason to believe that ChatGPT may have advised the shooter how to commit these heinous crimes. We will therefore file suit against ChatGPT and its ownership structure very soon." Along with Uthmeier, Florida Gov. Ron DeSantis has emerged as an outspoken critic of large AI corporations and their freewheeling approach to AI development and deployment. In December, DeSantis proposed an Artificial Intelligence Bill of Rights for American citizens, focusing on "data privacy, parental controls, consumer protections, and restrictions on AI use of an individual's name, image or likeness without consent," according to the bill's announcement.

[17]

Florida to open criminal investigation into OpenAI over ChatGPT's influence on alleged mass shooter

Florida's top prosecutor is to launch a criminal investigation into how the tech company OpenAI and its software tool ChatGPT may influence users' threats of harm to themselves or others, including whether it "offered significant advice" to a gunman accused of conducting a mass shooting in the state last year. State attorney general James Uthmeier said at a news conference on Tuesday that his office is expanding an examination of OpenAI, saying a "criminal investigation is necessary" and the state had issued subpoenas to the $852bn California-based tech firm. "If this were a person on the other end of the screen, we would be charging them with murder," Uthmeier said during an event in Tampa. Earlier this month, Uthmeier, an appointee of Florida's governor, Ron DeSantis, announced an investigation into the artificial intelligence company over potential national security and safety concerns. But the issuing of subpoenas to OpenAI is a marked escalation that comes after lawyers spoke up on behalf of the family of Robert Morales, one of two fatalities in a shooting at Florida State University last April that also injured six on the Tallahassee campus. The lawyers said they had learned the shooter was in "constant communication with ChatGPT" ahead of the shooting, and that the chatbot "may have advised the shooter how to commit these heinous crimes". Phoenix Ikner, 20 at the time of the shooting, allegedly communicated frequently with ChatGPT prior to the campus attack, asking for detailed information about the operation of guns and ammunition, where he could find the most students, and how the nation might react. Ikner is expected to go on trial in October on charges of first-degree murder and attempted first-degree murder in the shooting. He has pleaded not guilty. 
A lawsuit filed on behalf of the Morales family is among several claims brought against OpenAI and Google alleging that their AI chatbots have played a part in encouraging people to take their lives or the lives of others. Uthmeier said at the press conference that a review of communications revealed that "ChatGPT offered significant advice to the shooter before he committed such heinous crimes". He added "that the chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful in short range". "Just because this is a chatbot in AI does not mean that there is not criminal culpability," Uthmeier said, adding that his office will "look at who knew what, designed what or should have done what". A spokesperson for OpenAI, Kate Waters, said in a statement to NBC News: "Last year's mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime. "In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity." The company said it continues to cooperate with authorities and had shared information with law enforcement after identifying a ChatGPT account believed to be associated with the suspect. The announcement of the dialing-up of the investigation in Florida came two days after the worst mass shooting in the US in two years, when eight children were killed in Shreveport, Louisiana, on Sunday, in what the authorities have identified as a violent domestic incident. The father of seven of the children, Shamar Elkins, was shot dead by police after being identified as the gunman.

[18]

Florida attorney general issues subpoenas in ChatGPT probe over FSU shooting - SiliconANGLE

Florida Attorney General James Uthmeier today said that the state has opened a criminal investigation into ChatGPT and its parent company, OpenAI Group PBC, over whether the company bears any criminal responsibility for a shooting that happened last year at Florida State University. Earlier this month, Uthmeier announced that his office would open a probe into OpenAI over a number of concerns including alleged harm to children, threats to national security, and also the shooting in which 20-year-old student Phoenix Ikner killed two people and injured six more after discussing such a crime with ChatGPT. Ikner had asked the chatbot various questions, including about how the U.S. would react to a shooting and which parts of the university would be busiest at a certain time of day. Some of the other queries were said to have related to advice on weapons and ammunition. "If this were a person on the other end of the screen, we would be charging them with murder," Uthmeier said. "Just because this is a chatbot, an AI, does not mean that there is not criminal culpability. So, we're going to look at who knew what, designed what or should have done more." Issuing subpoenas to OpenAI is an escalation, reportedly partly at the behest of the family of Robert Morales, one of the people who died in the shooting. The family's lawyers have claimed Ikner was in "constant communication with ChatGPT" before he pulled the trigger, and that ChatGPT might have "advised" him on "how to commit these heinous crimes." "We have been looking into the recent FSU shooting, and that shooter's communications with ChatGPT," Uthmeier said in a press conference this morning. "Our review of that communication has revealed that a criminal investigation is necessary." Uthmeier's office will now seek information about OpenAI's policies and internal training materials and how the company cooperates with law enforcement agencies. 
The investigation will determine whether "human beings may have been involved in the design, management and operation" of ChatGPT to possibly "warrant criminal liability." A spokesperson for OpenAI said in a statement to NBC News that the shooting was a "tragedy, but ChatGPT is not responsible for this terrible crime." She added that the chatbot only responded with advice that is available "broadly across public sources on the internet," and that it didn't "encourage or promote illegal or harmful activity."

[19]

Florida Launches Criminal Probe Into OpenAI and ChatGPT Over Deadly Shooting

WASHINGTON, April 21 (Reuters) - Florida Attorney General James Uthmeier said on Tuesday the state was launching a criminal probe into OpenAI and its artificial intelligence app ChatGPT over a deadly shooting last year that killed two people at Florida State University. A gunman killed two people and wounded six others at Florida State University in April last year before he was shot by officers and hospitalized. The suspect was charged with multiple counts of murder and attempted murder. "The chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful at short range," Uthmeier said in a press briefing. "If it was a person on the other end of that screen, we would be charging them with murder." Uthmeier's office said the investigation will determine whether "OpenAI bears criminal responsibility for ChatGPT's actions in the shooting." The Office of Statewide Prosecution subpoenaed OpenAI for some information and records, it added. The rise of AI has fed a host of concerns ranging from worries that electricity demand by data centers could raise power prices for consumers, to fears that the technology could cost workers their jobs or be used to disrupt the democratic process, turbocharge fraud or help people plan criminal activities. An OpenAI spokeswoman told U.S. media that the shooting was a tragedy but the company had no responsibility. The spokeswoman said that after learning of the incident, OpenAI identified a ChatGPT account believed to be associated with the suspect and "proactively shared this information with law enforcement." "In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity," the OpenAI spokeswoman said. (Reporting by Kanishka Singh in Washington; Editing by David Gregorio)

[20]

Florida AG issues subpoenas in OpenAI criminal probe

Florida Attorney General James Uthmeier (R) on Tuesday launched a criminal investigation into OpenAI and its chatbot, serving the company with subpoenas. Uthmeier, who initially opened a probe into OpenAI and ChatGPT earlier this month, said at a news conference that the individual accused of fatally shooting two people at Florida State University (FSU) last April communicated with ChatGPT before the incident. Two individuals, 57-year-old Robert Morales and 45-year-old Tiru Chabba, were killed in the attack. Uthmeier noted that prior to allegedly carrying out the shooting, the man accused in the case was "advised" by the chatbot on what type of gun to use, which ammo went with which gun, whether or not a gun would be useful in short range, what time of day would be appropriate to interact with more people and where on FSU's campus he would encounter a higher population. Given that, the attorney general said that his prosecutors told him that "if it was a person on the other end of that screen, we would be charging them" with murder. Under Florida law, anyone who aids, abets or counsels someone in the commission of a crime may be considered a principal in the first degree. "Technology, AI, is supposed to support mankind, it is supposed to help mankind, it is supposed to advance mankind, not end it," Uthmeier remarked. "And unfortunately, what we've seen in our initial review is that ChatGPT offered significant advice to the shooter before he committed such heinous crimes." The attorney general said his office subpoenaed OpenAI for all policies and internal training materials from March 1, 2024, through April 17 of this year regarding user threats of harm to others, user threats of harm to self and cooperation with law enforcement, including policies for the reporting of past, present or future crimes. The Office of Statewide Prosecution also subpoenaed the company for organizational charts and listings of all ChatGPT employees from March 1 and Oct. 
1, 2024, and April 17, 2025 -- the day the shooting occurred. The Hill has reached out to OpenAI for comment. Earlier this month, a spokesperson for the company told The Hill that it would "cooperate" with Uthmeier's probe. "We build ChatGPT to understand people's intent and respond in a safe and appropriate way, and we continue improving our technology," the spokesperson added. Florida Gov. Ron DeSantis (R) has pushed for state-level regulations on AI, putting him at odds with President Trump. In December, Trump signed an executive order aimed at preempting state laws that regulate the technology in favor of federal standards, while DeSantis rolled out a "Citizen Bill of Rights" for AI that same month.

[21]

Florida's Attorney General Launches Criminal Probe Into ChatGPT Over FSU Shooting

ORLANDO, Fla. (AP) -- Florida's attorney general on Tuesday opened an investigation into OpenAI's ChatGPT over whether there was criminal behavior involving interactions between the artificial intelligence app and a gunman who killed two people and wounded six others last year at Florida State University. Attorney General James Uthmeier said that prosecutors had done an initial review of chat logs between ChatGPT and the gunman, Phoenix Ikner, to determine if the AI app aided or abetted the crime. "This criminal investigation will determine whether OpenAI bears criminal responsibility for ChatGPT's actions in the shooting at Florida State University last year," Uthmeier said at a news conference in Tampa. Florida's Office of Statewide Prosecution has subpoenaed OpenAI for records of its policies and training materials regarding threats to harm others, and for its policies on reporting "possible past, present, or future crime," according to the attorney general's office. OpenAI spokeswoman Kate Waters called the FSU shooting a tragedy but said the company had no responsibility. The company proactively shared information with law enforcement and continues to cooperate with investigators, she said Tuesday. "In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity," Waters said in an email. Ikner faces two counts of first-degree murder and several counts of attempted first-degree murder in the shooting that terrorized the campus in Florida's capital city. Ikner is the stepson of a local sheriff's deputy, and investigators say he used his stepmother's former service weapon to carry out the shooting. Prosecutors in the case intend to seek the death penalty. Uthmeier, a Republican, was named to the position by Florida Gov. Ron DeSantis, after the GOP governor appointed then-Attorney General Ashley Moody to the U.S. 
Senate seat vacated by Marco Rubio when he became the secretary of state in President Donald Trump's second administration. Uthmeier is running in November to be elected to the position on his own. ___ Charles Sheehan in New York contributed to this report.

[22]

ChatGPT To Be Charged With Murder? Florida Probes OpenAI After Chatbot Linked To Deadly Campus Shooting

On Tuesday, Florida authorities launched a high-stakes criminal probe into OpenAI, examining whether its chatbot ChatGPT played any role in last year's deadly shooting at Florida State University.

Florida Opens Probe Into OpenAI Over Deadly Campus Shooting

Florida Attorney General James Uthmeier said the state has initiated a criminal investigation into OpenAI and its chatbot ChatGPT following a mass shooting at Florida State University that left two people dead and six injured last April. The suspect was shot by police, hospitalized and later charged with multiple counts of murder and attempted murder.

Allegations: ChatGPT Provided Gun-Related Information

Uthmeier alleged the gunman may have used ChatGPT to seek guidance on firearms, including what type of weapon to use, compatible ammunition and its effectiveness at close range. "If ChatGPT were a person, it would be facing charges for murder," Uthmeier stated in a press release. On X, he said, "AI is supposed to advance mankind, not lead to its demise," adding, "We have a duty to investigate whether OpenAI bears criminal responsibility for ChatGPT's advising of the deadly FSU shooter last year." His office has issued a subpoena seeking records from OpenAI as part of the probe into whether the company could bear criminal responsibility.

OpenAI Responds, Denies Responsibility

In a statement, OpenAI said the incident was tragic but maintained it is not liable, Reuters reported. A company spokesperson said ChatGPT provided "factual responses" based on widely available public information and "did not encourage or promote illegal or harmful activity." The company added that it identified an account potentially linked to the suspect and "proactively shared this information with law enforcement." OpenAI did not immediately respond to Benzinga's request for comments.

Disclaimer: This content was partially produced with the help of AI tools and was reviewed and published by Benzinga editors.

[23]

Florida investigating ChatGPT role in mass shooting - The Korea Times

MIAMI -- Florida on Tuesday announced a criminal probe into whether ChatGPT artificial intelligence played a role in a deadly mass shooting at a university in the US state. The decision to launch an investigation came after prosecutors reviewed exchanges between OpenAI chatbot ChatGPT and the suspected gunman, who opened fire at Florida State University last year, according to state Attorney General James Uthmeier. "If ChatGPT were a person, it would be facing charges for murder," Uthmeier said in a release. Florida law allows anyone who aids, abets, or counsels someone in the commission of a crime to be treated as an "aider and abettor" bearing the same responsibility as the perpetrator, according to Uthmeier. Details of the exchange between the gunman and ChatGPT were not disclosed. "Last year's mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime," an OpenAI spokesperson said in response to an AFP inquiry. "ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity." OpenAI identified the ChatGPT account linked to the suspected shooter and provided it to police after learning of the shooting, the spokesperson noted. Two men were killed and six other people injured in the mass shooting allegedly carried out by the son of a local deputy sheriff with her old service weapon, according to authorities. The suspect, identified as Phoenix Ikner, rampaged through Florida State University, shooting at students before he was shot by local law enforcement. Ikner was hospitalized with "serious but non-life-threatening injuries," investigators said. Leon County Sheriff Walt McNeil said at the time that Ikner was a student at the university and the son of an "exceptional" 18-year member of his staff. 
He added that the suspect was part of the sheriff's office training programs, meaning "it's not a surprise to us that he had access to weapons." Bystander footage aired by CNN appeared to show a young man walking on a lawn and shooting at people who were trying to get away. Mass shootings are common in the United States, where a constitutional right to bear arms trumps demands for stricter rules. That is despite widespread public support for tighter control on firearms, including restricting the sale of high-capacity clips.

[24]

Criminal probe launched into ChatGPT's possible involvement in deadly mass shooting at Florida State University

Authorities have launched a criminal probe into whether the artificial-intelligence platform ChatGPT helped a man plan a deadly mass shooting at Florida State University last year. Florida Attorney General James Uthmeier said Tuesday that his office opened the probe after reviewing chats that accused gunman Phoenix Ikner had with one of the platform's bots in the lead-up to the April 17, 2025, horror. "ChatGPT offered significant advice to the shooter before he committed such heinous crimes," Uthmeier said at a press conference in Tampa. For example, the chatbot told Ikner what type of gun to use, what type of ammunition to purchase, what kind of firearm is best for a close-range shooting and what part of campus would be the most crowded at the time, the AG said. "My prosecutors have looked at this, and they've told me if it was a person on the other end of the screen, we would be charging them with murder," Uthmeier said. Ikner, a student at the college, opened fire using his stepmother's service pistol outside the student union of FSU's Tallahassee campus, killing Robert Morales, 57, and Tiru Chabba, 45, both Aramark vendors, according to officials. The suspect's stepmother is a deputy with the Leon County sheriff's office. Six students also were wounded before police shot Ikner, leaving his face disfigured. The accused shooter's motive is still unclear, with investigators saying Ikner didn't know his victims. He is charged with first-degree murder, attempted murder and related crimes. Uthmeier said his office has issued subpoenas to ChatGPT's parent company OpenAI for its internal policies and trainings involving users who express self-harm or harm to others. The AG's office is also seeking a slew of other information from the company, including the names of its management, all other employees and any press releases they put out related to the shooting. 
Neama Rahmani, a former prosecutor and private lawyer, said there are many challenges the AG will face in trying to potentially prosecute the corporation, including difficulties trying to prove intent and causation and overcoming the First Amendment arguments and immunity that tech companies usually benefit from when their users carry out crimes. "It is unusual, and [Uthmeier] is venturing into uncharted legal waters," Rahmani said. "I'm not saying that this can't be an important case that changes the way we think about AI," the lawyer said. "But I think there are going to be significant challenges to a successful criminal prosecution." "At the end of the day, you can't put a corporation in jail anyway, so you're talking about a fine," he said. The expert added that a civil lawsuit would be much easier to prove in this case. Morales' relatives are planning to file suit against OpenAI, their lawyers announced earlier this month. A rep for OpenAI said the company is cooperating with law enforcement. The representative said all of the chatbot's answers were "factual responses to questions with information that could be found broadly across public sources on the internet." "It did not encourage or promote illegal or harmful activity," the rep said. "ChatGPT is a general-purpose tool used by hundreds of millions of people every day for legitimate purposes." "Last year's mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime," the representative said. "After learning of the incident, we identified a ChatGPT account believed to be associated with the suspect and proactively shared this information with law enforcement." The company is working to bolster safeguards to help identify users with "harmful intent" so that it can act appropriately, the rep said.

[25]

Florida launches criminal probe into OpenAI over deadly shooting

STORY: Florida Attorney General James Uthmeier said on Tuesday the state was launching a criminal investigation into OpenAI and its AI app ChatGPT over a deadly shooting last year that killed two people at Florida State University. "We have been looking into the recent FSU shooting and that shooter's communications with ChatGPT. Now that communication and our review of that communication has revealed that a criminal investigation is necessary." A gunman killed two people and wounded six others at Florida State University in April last year before he was shot by officers and hospitalized. The suspect was charged with multiple counts of murder and attempted murder. "The communication between the shooter and ChatGPT revealed that the chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful in short range. ChatGPT advised the shooter on what time of day would be appropriate for the shooting to interact with more people and where on campus would be the place to encounter a higher population." Uthmeier's office said the investigation will determine whether OpenAI bears criminal responsibility as a corporation. "My prosecutors have looked at this and they've told me if it was a person on the other end of that screen, we would be charging them with murder." An OpenAI spokeswoman told U.S. media that the shooting was a tragedy but the company had no responsibility, adding that the chatbot "provided factual responses" and "did not encourage or promote illegal or harmful activity." She said that after learning of the incident, OpenAI identified a ChatGPT account believed to be associated with the suspect and "proactively shared this information with law enforcement."

[26]

Florida launches criminal probe into OpenAI and ChatGPT over deadly shooting