Google Gemini now creates interactive 3D simulations to explain complex topics

4 Sources

[1]

Gemini Can Now Generate Interactive Images Directly in the Chat

Gemini has always been good at generating images thanks to Nano Banana, but Google recently launched a new way for you to understand a topic when chatting with the AI tool. Now, when you ask Gemini to visualize something, you won't just get a static image, but an interactive one instead. The new feature will generate a dynamic visualization when an image isn't enough. Google says you can ask Gemini to "show me" or "help me visualize" a specific topic, and the visual will be created when you click the "show me the visualization" button that appears. I tested the feature out by asking Gemini how the moon orbits the Earth, and then to show me how a car engine works. Both times, an interactive visual was created that explained a lot more than a single image could. For the moon orbit visualization, there was a slider to adjust the orbit speed, along with other tweaks to adjust the view. Similar characteristics appeared in the car engine visualization as well. I was able to change whether the animated car engine played or manually adjust the view so it illustrated each step of the process. Anthropic announced a very similar feature for Claude in March, and the results were impressive. However, there isn't a way to save the visuals in Gemini, as you can do with Claude. Whether and how these interactive simulations will improve over time remains to be seen. Google did not immediately respond to a request for comment. The new Gemini feature is rolling out globally to all users, but is currently not available for Education or Workspace accounts. Visualizations will only be generated when using the Pro model.

[2]

Google's Gemini AI can answer your questions with 3D models and simulations

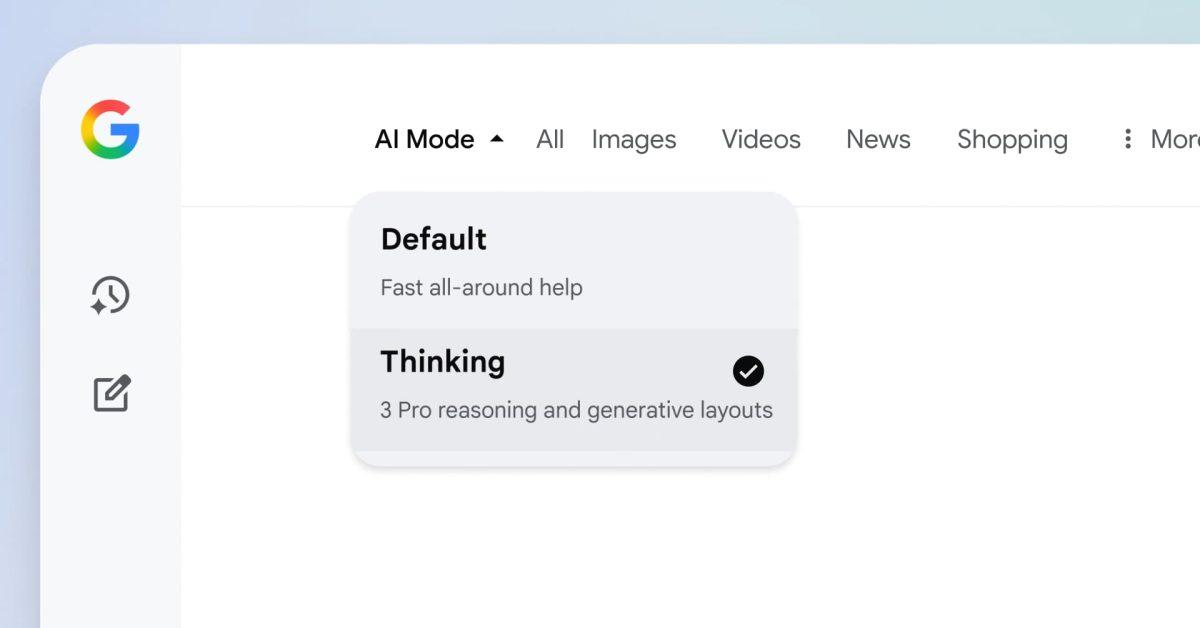

Google's latest upgrade for Gemini will allow the chatbot to generate interactive 3D models and simulations in response to your questions. With the new feature, you may see options to rotate the AI-generated model, manually adjust sliders on it, or input different values to change the simulation in real-time. When trying out the feature for myself, I asked Gemini to make a simulation of the Moon orbiting the Earth, and it created a 3D model with a few different ways to interact with it. Along with a slider to adjust the speed of the Moon's orbit, there's also a toggle to hide the line representing its orbital path and a button to pause the simulation. You can also zoom in on and rotate the 3D model. This update comes just weeks after Anthropic gave Claude the ability to automatically respond with charts, diagrams, and other interactive visuals, while OpenAI added a feature to ChatGPT that allows it to generate visualizations of math and science concepts. Previously, Gemini could only generate interactive images in response to prompts. All Gemini app users can access this feature by selecting the "Pro" model in the prompt bar. From there, ask Gemini something like, "show me a double pendulum," or "help me visualize the Doppler effect," and then select the "Show me the visualization" button beneath Gemini's response.

[3]

Google gives Gemini a powerful new way to help you understand complex subjects

The chatbot can now generate interactive simulations and models. If you're like me, an explanation isn't always enough. It's often easier to understand a concept when you can visualize it. Since many people turn to Gemini to understand complex topics, it only makes sense that Google is rolling out this new feature. In a blog post, Google announced that Gemini is gaining a new ability. The chatbot can now take your questions and transform them into interactive simulations and models. This goes beyond the simple text and static diagrams that the AI served in the past. You can see an example of what this new feature is capable of in the images above. On the left, the user asks for an example of a double slit experiment. Over on the right, there's an animated simulation with controls that let you change separate variables. Google says this new feature is rolling out today for all Gemini app users. You'll need to head over to gemini.google.com and select the Pro model to gain access. After you enter your prompt, ask Gemini to "show me" or "help me visualize" to create your simulation.

[4]

Google Gemini gets new feature to turn complex ideas into interactive visuals, here is how

Interestingly, OpenAI and Anthropic announced similar features for their AI platforms last month. Google has announced that the Gemini app can now create interactive simulations and models directly within chats, making it easier to understand complex topics. This will allow users to explore complicated concepts visually instead of relying only on text explanations. Earlier, most responses from Gemini included written explanations and sometimes static diagrams. While those diagrams helped explain certain topics, they did not allow users to experiment or interact with the concepts. 'Now, we're delivering functional simulations that can help you better understand the topic you're asking Gemini about,' Google said. For example, if someone wants to understand how the Moon orbits the Earth, Gemini will no longer show a fixed diagram. Instead, users can interact with the simulation and change certain parameters to see how they affect the system. This kind of interactive learning could be particularly useful for students, educators and anyone trying to understand complex ideas more easily. Google says the feature is rolling out globally to all Gemini app users (except Education and Workspace accounts). To try the feature, just head to gemini.google.com and select the Pro model in the prompt bar, then ask Gemini to 'show me' or 'help me visualise' a complex concept. Interestingly, OpenAI last month announced a similar interactive learning feature for ChatGPT to make learning math and science concepts easier. Even Anthropic also introduced a similar feature for Claude that generates charts, diagrams and other visual elements to help users better understand topics.

Google Gemini can now generate interactive simulations and models directly in chat, allowing users to visualize complex concepts with adjustable parameters. The feature, available globally on the Gemini Pro model, follows similar launches from OpenAI and Anthropic, marking a shift from static diagrams to dynamic, explorable visualizations.

Google Gemini Launches Interactive Simulations Feature

Google has rolled out a new AI feature for its Gemini chatbot that transforms how users understand complex topics through interactive visuals [1]. Instead of relying solely on text explanations or static diagrams, Google Gemini now creates interactive simulations and models that users can manipulate in real time [3]. The AI chatbot generates these dynamic visualizations when users ask it to "show me" or "help me visualize" specific topics, with a "show me the visualization" button appearing beneath the response [1].

How the New Feature Works

Users can generate interactive 3D models by selecting the Pro model at gemini.google.com and entering prompts about topics they want to explore [2]. When asked to demonstrate how the Moon orbits the Earth, for instance, Gemini creates a 3D model with adjustable parameters: a slider to control orbit speed, a toggle to hide the orbital path, and a button to pause the simulation [2]. Similarly, when visualizing a car engine, users can manually adjust the view to illustrate each step of the process or let the animation play automatically [1]. These 3D models can be rotated and zoomed, offering multiple ways to interact with the visualizations [2].

Competing with OpenAI and Anthropic

This launch positions Google alongside competitors who recently introduced similar capabilities. Anthropic announced a comparable feature for Claude in March that generates charts, diagrams, and interactive visuals, with the added ability to save these visualizations [1][2]. OpenAI also added functionality to ChatGPT last month that creates visualizations of math and science concepts [4]. The timing suggests a broader industry shift toward interactive learning tools that move beyond text-based responses.

Availability and Limitations

The feature is rolling out globally to all Gemini app users, though it remains unavailable for Education or Workspace accounts [1]. Users must select the Pro model in the prompt bar to access the visualization capabilities [3]. Unlike Claude's implementation, there is currently no way to save the interactive visuals generated by Google Gemini [1]. This interactive learning approach could prove particularly valuable for students and educators seeking to visualize complex concepts like the double slit experiment or the Doppler effect [3][2]. Whether these simulations will improve over time and gain additional features like save functionality remains to be seen [1].

Summarized by Navi
