Google in talks with Marvell Technology to develop two AI chips, diversifying chip suppliers

15 Sources

[1]

Marvell shares gain on report of deal talks with Google to develop two AI chips

April 20 (Reuters) - Marvell Technology's (MRVL.O), opens new tab shares jumped 7% in premarket trading on Monday following a report that Alphabet's Google is in talks with the chip designer to develop two new chips aimed at running AI models more efficiently. The potential deal could involve two distinct chips: a memory processing unit to complement Google's tensor processing unit and a new TPU built for running AI models, The Information reported, opens new tab on Sunday, citing two people with direct knowledge of the discussions. Big Tech such as Google and Facebook-parent Meta (META.O), opens new tab are moving fast to reduce dependence on external chip suppliers by expanding their custom chip efforts. Google deploys TPUs for training AI models and to respond to user queries, a process known as inferencing, and works with Broadcom to design its chips. The report signals that Google might be looking to diversify from Broadcom amid surging demand for its chips as businesses seek alternatives to Nvidia's pricey chips. Google and Marvell did not immediately respond to Reuters requests for comment. AI lab Anthropic uses a range of chips, including TPUs designed by Google, to develop and run its AI software and chatbot Claude. Last week, Meta extended its deal with Broadcom to produce several generations of custom AI processors. The social media giant paid Broadcom $2.3 billion last year for AI chip design and related services. Both Marvell and its larger rival Broadcom help clients with designing chips, as growing adoption of AI tools boost demand for specialized processors used in advanced data centers powering AI workloads. Marvell trades at 33.35 times the estimates of its earnings for the next 12 months, compared with 27.84 for Broadcom. Average stock rating of 44 analysts covering Marvell is "buy" and their median price target is $125, according to data compiled by LSEG. Marvell is set to add more than $9 billion in its market value of $122.15 billion, if the premarket gains hold. Reporting by Jaspreet Singh in Bengaluru; Editing by Arun Koyyur Our Standards: The Thomson Reuters Trust Principles., opens new tab

[2]

Marvell pops on report it will help Google with custom AI chips. Broadcom shares sink

Shares of Marvell Technology gained nearly 6% on Monday amid reports that Google will use the chip design firm for two new chips to power artificial intelligence workloads. Until now, Google has relied on Marvell rival Broadcom for the design of its in-house Tensor Processing Units, or TPUs. Broadcom shares fell nearly 2% Monday following the report by The Information. The potential deal between Google and Marvell could include a TPU as well as a memory processing unit, The Information reported on Sunday. Google and Marvell did not immediately reply to requests for comment. Both Marvell and Broadcom help their customers translate chip designs into silicon, providing back-end support before the processors are sent off to be manufactured at huge fabrication plants by companies like Taiwan Semiconductor Manufacturing Company. It's a role that's fueled the growth of both Marvell and Broadcom as more tech giants design in-house accelerators for AI. Amid that hustle to make enough silicon to power AI, it's no surprise to see Google diversify its chip deals beyond Broadcom. The Google-Broadcom partnership is alive and well, having just been extended through 2031 in an expanded deal announced earlier this month. Meta last week also made a big deal with Broadcom, committing to deploy 1 gigawatt of its own custom MTIA chips using Broadcom technology.

[3]

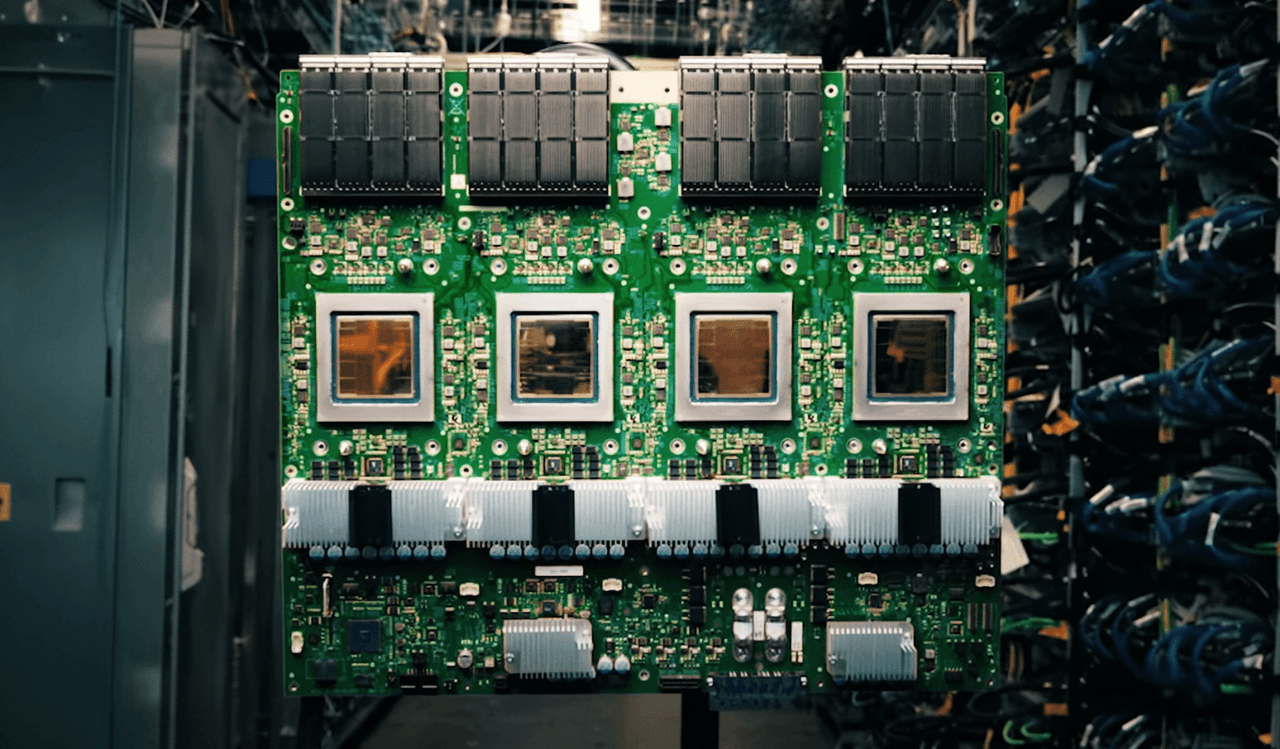

Google in talks with Marvell Technology to build new AI inference chips alongside Broadcom TPU programme

Summary: Google is in talks with Marvell Technology to develop two new AI chips - a memory processing unit and an inference-optimised TPU - adding a third design partner alongside Broadcom and MediaTek in its custom silicon supply chain. The discussions, which have not yet produced a signed contract, came days after Broadcom locked in a through-2031 TPU agreement and reflect Google's shift toward inference as the dominant compute cost, as the custom ASIC market is projected to grow 45% in 2026 and reach $118 billion by 2033. Google is in talks with Marvell Technology to develop two new chips for running AI models, according to The Information. One is a memory processing unit designed to work alongside Google's existing Tensor Processing Units. The other is a new TPU built specifically for inference, the phase of AI where models serve users rather than learn from data. Marvell would act in a design-services role, similar to MediaTek's involvement on Google's latest Ironwood TPU. The discussions have not yet produced a signed contract. The talks came days after Broadcom, Google's primary custom chip partner, announced a long-term agreement to design and supply TPUs and networking components through 2031. The timing suggests Google is not replacing Broadcom but adding a third design partner to a supply chain that already includes Broadcom for high-performance chip variants, MediaTek for cost-optimised "e" variants at 20 to 30% lower cost, and TSMC for fabrication. The strategy is diversification, not substitution. Google's seventh-generation TPU, Ironwood, debuted this month as what the company calls "the first Google TPU for the age of inference." It delivers ten times the peak performance of the TPU v5p and scales to 9,216 liquid-cooled chips in a superpod spanning roughly 10 megawatts, producing 42.5 FP8 exaflops. Google plans to build millions of Ironwood units this year. The Marvell-designed chips would supplement rather than replace Ironwood, potentially targeting different workload profiles or cost points for the growing share of Google's compute that goes to serving AI models rather than training them. The shift from training to inference as the primary demand driver is reshaping the chip market. Training a frontier model is a one-time event that requires enormous compute for weeks or months. Inference runs continuously, serving every query from every user, and its costs scale with demand rather than capability. As AI products reach hundreds of millions of users, inference becomes the dominant expense, and purpose-built inference silicon becomes a competitive advantage that general-purpose GPUs cannot match on cost or efficiency. The Google-Marvell relationship has a longer history than this week's report suggests. The Information reported in 2023 that Google had been working since 2022 on a chip codenamed "Granite Redux" that would use Marvell instead of Broadcom, with Google expecting to save billions of dollars annually. At the time, Google's spokesperson called Broadcom "an excellent partner" and said the company was "productively engaged with Broadcom and multiple other suppliers for the long term." What changed between 2023 and now is that Google appears to have abandoned the idea of dropping Broadcom entirely. The through-2031 agreement locked in that relationship. Instead, Google is building a multi-supplier architecture in which Broadcom, MediaTek, and potentially Marvell each handle different parts of the TPU programme, competing on specific segments rather than for the entire contract. The approach mirrors how automotive companies manage component suppliers: no single vendor gets enough leverage to dictate terms. Marvell's data centre revenue reached a record $6.1 billion in its fiscal year ending February 2026, with total revenue of $8.2 billion, up 42% year over year. The company runs a custom silicon business with a $1.5 billion annual run rate across 18 cloud-provider design wins, building chips for Amazon (Trainium processors), Microsoft (Maia AI accelerator), and Meta (a new data processing unit), in addition to its existing work with Google on the Axion ARM CPU. Nvidia invested $2 billion in Marvell at the end of March, partnering through NVLink Fusion to integrate Marvell's custom chips and networking with Nvidia's interconnect fabric. The deal positions Marvell at the intersection of both the GPU and ASIC ecosystems. In December 2025, Marvell acquired Celestial AI for up to $5.5 billion, gaining photonic interconnect technology that CEO Matt Murphy said would deliver "the industry's most complete connectivity platform for AI and cloud customers." Murphy is targeting 20% market share in custom AI chips and expects roughly 30% year-over-year revenue growth in fiscal 2027. Marvell's stock has rallied approximately 50% year to date, with a 30% gain in April alone following the Nvidia partnership and the Google talks. Barclays analyst Tom O'Malley upgraded the stock to overweight and raised his price target from $105 to $150. The Marvell talks do not appear to have weakened Broadcom's position. Broadcom commands more than 70% market share in custom AI accelerators. Its AI revenue hit $8.4 billion in its most recent quarter, up 106% year over year, with guidance of $10.7 billion for the following quarter. The company is targeting $100 billion in AI chip revenue by 2027. Broadcom's shares rose more than 6% on the day it announced the Google extension, and Mizuho analysts estimated the company would record $21 billion in AI revenue attributable to its Google and Anthropic relationships in 2026, rising to $42 billion in 2027. Anthropic will access approximately 3.5 gigawatts of next-generation TPU-based compute starting in 2027. The broader ASIC market is growing faster than the GPU market. TrendForce projects custom chip sales will increase 45% in 2026, compared with 16% growth in GPU shipments. Counterpoint Research projects Broadcom will hold roughly 60% of the custom AI accelerator market by 2027, with Marvell at approximately 25%. The market itself is expected to reach $118 billion by 2033. Google's chip strategy now involves four partners (Broadcom, MediaTek, Marvell, and TSMC), its own in-house design team, and a product line that spans training, inference, and general-purpose cloud compute. The complexity is deliberate. Every hyperscaler that depends on a single chip supplier, whether Nvidia or anyone else, faces pricing risk, supply risk, and the strategic vulnerability of building a business on someone else's silicon. The inference focus of the Marvell discussions reflects a shift in where the money goes. Training Nvidia's latest chips remain dominant in training workloads, but inference is where the volume is, and volume is where custom silicon's cost advantages compound. Google serves billions of AI-augmented search queries, Gemini conversations, and Cloud AI API calls every day. Shaving even a small percentage off the cost per inference across that scale translates into billions of dollars annually, which is precisely what the 2023 "Granite Redux" discussions were about. The talks with Marvell are not yet a deal, and chip development timelines mean any resulting product is likely years from production. But the direction is clear. Google is building a chip supply chain designed to support the most demanding AI inference workloads in the world, and it intends to have more than one partner capable of building the silicon that runs them. For Marvell, a Google inference TPU contract would validate its position as the second-most important custom AI chip designer in the world. For Google, it would mean one more supplier in a market where no company can afford to depend on just one.

[4]

Google in talks with Marvell to build new AI chips, The Information reports

April 19 (Reuters) - Alphabet's Google (GOOGL.O), opens new tab is in talks with Marvell Technology (MRVL.O), opens new tab to develop two new chips aimed at running AI models more efficiently, The Information reported on Sunday citing two people with knowledge of the discussions. One of the chips is a memory processing unit designed to work with Google's tensor processing unit (TPU) and the other chip is a new TPU built specifically for running AI models, the report said. Google has been pushing to make its TPUs a viable alternative to Nvidia's dominant GPUs. TPU sales have become a key driver of growth in Google's cloud revenue as it aims to show investors that its AI investments are generating returns. Reuters could not immediately verify the report. Google and Marvell did not immediately respond to a request for a comment. The companies aim to finalize the design of the memory processing unit as soon as next year before handing it off for test production, according to the report. Reporting by Angela Christy in Bengaluru Editing by Tomasz Janowski Our Standards: The Thomson Reuters Trust Principles., opens new tab

[5]

Marvell Shares Gain on Report of Deal Talks With Google to Develop Two AI Chips

April 20 (Reuters) - Marvell Technology's shares jumped 7% in premarket trading on Monday following a report that Alphabet's Google is in talks with the chip designer to develop two new chips aimed at running AI models more efficiently. The potential deal could involve two distinct chips: a memory processing unit to complement Google's tensor processing unit and a new TPU built for running AI models, The Information reported on Sunday, citing two people with direct knowledge of the discussions. Big Tech such as Google and Facebook-parent Meta are moving fast to reduce dependence on external chip suppliers by expanding their custom chip efforts. Google deploys TPUs for training AI models and to respond to user queries, a process known as inferencing, and works with Broadcom to design its chips. The report signals that Google might be looking to diversify from Broadcom amid surging demand for its chips as businesses seek alternatives to Nvidia's pricey chips. Google and Marvell did not immediately respond to Reuters requests for comment. AI lab Anthropic uses a range of chips, including TPUs designed by Google, to develop and run its AI software and chatbot Claude. Last week, Meta extended its deal with Broadcom to produce several generations of custom AI processors. The social media giant paid Broadcom $2.3 billion last year for AI chip design and related services. Both Marvell and its larger rival Broadcom help clients with designing chips, as growing adoption of AI tools boost demand for specialized processors used in advanced data centers powering AI workloads. Marvell trades at 33.35 times the estimates of its earnings for the next 12 months, compared with 27.84 for Broadcom. Average stock rating of 44 analysts covering Marvell is "buy" and their median price target is $125, according to data compiled by LSEG. Marvell is set to add more than $9 billion in its market value of $122.15 billion, if the premarket gains hold. (Reporting by Jaspreet Singh in Bengaluru; Editing by Arun Koyyur)

[6]

Google Could Team Up With Marvell to Develop New AI Chips for Inference

Google's existing partnership with Broadcom is said not to be affected Google is reportedly exploring a partnership with semiconductor company Marvell Technology. As per the report, the Mountain View-based tech giant is in talks with the company to develop two new artificial Intelligence (AI) chipsets for its workload requirements. There is reportedly no deal in place, but the companies have initiated formal discussions around the topic. The deal is said not to impact Google's existing partnerships with Broadcom, MediaTek, and TSMC. Instead, it aims to diversify the company's supply chain. Google Could Soon Partner With Marvell According to The Information, the Gemini maker is in talks with Marvell to develop two new AI chips. Citing two unnamed people familiar with the plans, the publication claimed that the potential deal will be centred around Google's rising AI inference requirements, and the new processors will not cater to large language model (LLM) training-related tasks. It is said that one of the planned chips is a memory processing unit, which will work alongside Google's tensor processing unit (TPU). In contrast, the second chip will be a new TPU that will be built specifically to run AI inference. For the unaware, "inference" in AI refers to the processing power or "compute" required to generate outputs based on user queries. It is separate from the compute that is used to train AI models before they are released. The reported plans of forging a new partnership come just weeks after the tech giant introduced Ironwood, its seventh-generation TPU designed for inference. The system scales up to 9,216 liquid-cooled chips that are linked via a new Inter-Chip Interconnect (ICI) networking spanning nearly 10 megawatts. However, the Marvell deal is said not to be a replacement for Ironwood, and instead, it will supplement the system to help Google scale its AI offerings. Earlier this month, the tech giant also signed a multi-year deal with Broadcom, which remains its major partner when it comes to chip development. Additionally, Google has also forged partnerships with MediaTek and TSMC, making its supply chain infrastructure diverse. The planned partnership appears to be a key step in that direction, allowing the hyperscaler to cater to the demand.

[7]

US Stocks: Marvell shares jump 5% on report of deal talks with Google to develop two AI chips

Marvell Technology's shares jumped nearly 5% on Monday following a report that Alphabet's Google is in talks with the chip designer to develop two new chips aimed at running AI models more efficiently. Marvell Technology's shares jumped nearly 5% on Monday following a report that Alphabet's Google is in talks with the chip designer to develop two new chips aimed at running AI models more efficiently. The potential deal could involve two distinct chips: a memory processing unit to complement Google's tensor processing unit and a new TPU built for running AI models, The Information reported on Sunday, citing two people with direct knowledge of the discussions. Big Tech companies such as Google and Facebook-parent Meta are investing heavily to reduce dependence on external chip suppliers by expanding their custom chip efforts amid Silicon Valley's intensifying AI rivalry. Google deploys TPUs for training AI models and to respond to user queries, a process known as inferencing, and works with Broadcom to design its chips. The report signals that Google might be looking to diversify from Broadcom amid surging demand for its chips as businesses seek alternatives to Nvidia's pricey chips. AI lab Anthropic uses a range of chips, including TPUs designed by Google, to develop and run its AI software and chatbot Claude. Google and Marvell did not immediately respond to Reuters requests for comment. "It should be no surprise that rivals (of Nvidia) will want to grab a piece of the market and the apparent growth on offer by developing their own product," said Russ Mould, investment director at AJ Bell. "It also makes sense for customers to diversify their sources of supply, if they can, so they can spread technological and supply chain risk." Last week, Meta extended its deal with Broadcom to produce several generations of custom AI processors. The social media giant paid Broadcom $2.3 billion last year for AI chip design and related services. Both Marvell and its larger rival Broadcom help clients with designing chips, as growing adoption of AI tools boost demand for specialized processors used in advanced data centers. Marvell shares have gained about 64% so far this year, after declining roughly 23% in 2025. Last month, Nvidia invested $2 billion in Marvell in an effort to make it easier for customers to use the custom AI chips that Marvell designs with Nvidia's networking gear and central processors. Marvell, which expects its revenue to approach $15 billion in fiscal 2028, is set to add more than $6 billion to its market value of $122.15 billion, if the gains hold through the session. Marvell trades at 33.35 times the estimates of its earnings for the next 12 months, compared with 27.84 for Broadcom.

[8]

Marvell Rides Google TPU Wave As AI Chip Race Heats Up - Marvell Technology (NASDAQ:MRVL)

Marvell Rides Google TPU Wave As AI Chip Race With Nvidia Heats UpGoogle-Marvell Partnership Expands AI Chip Push The effort targets data movement bottlenecks and supports Google's broader strategy to reduce reliance on Nvidia Corp's (NASDAQ:NVDA) Graphics Processing Units (GPUs). Analysts See Strong Growth From Cloud and AI Demand RBC Capital raised its price forecast on Marvell to $170 from $115 while maintaining an Outperform rating, citing strength in optical connectivity and visibility into Trainium chip production. RBC also projected continued double-digit growth driven by AI demand, with Marvell capturing a significant share of expanding data center capacity. Valuation and Supply Constraints Remain Key Watch Points RBC said Marvell's growth outlook remains strong, supported by revenue expansion of 42% over the past year and forecasts of continued gains through fiscal 2027. However, the firm flagged near-term constraints from the limited 3-nanometer wafer supply. Technical Analysis Marvell is pressing into fresh 52-week highs, consistent with a strong, persistent uptrend rather than a range-bound market. The stock is trading 34.5% above its 20-day simple moving average (SMA) and 69.5% above its 100-day SMA, a spread that points to aggressive short- and intermediate-term upside momentum. The relative strength index (RSI), a momentum gauge, is 85.40, which is deep in overbought territory and often lines up with "crowded" upside positioning. RSI at 85.40 shows buyers have been in control lately, but it also raises the odds of sharper pullbacks if momentum cools. Key Resistance: $152.00 -- a round-number area where upside follow-through can get tested. Key Support: $118.50 -- near the 20-day EMA zone where dip-buyers have recently shown up. Earnings & Analyst Outlook Looking further out, the next major catalyst for the stock arrives with the May 28, 2026 (estimated) earnings report. EPS Estimate: 72 cents (Up from 62 cents YoY) Revenue Estimate: $2.40 billion (Up from $1.90 billion YoY) Valuation: P/E of 48.2x (Indicates premium valuation relative to peers) Top ETF Exposure Significance: Because MRVL carries significant weight in these funds, any significant inflows or outflows will likely trigger automatic buying or selling of the stock. Price Action MRVL Stock Price Activity: Marvell Technology shares were up 2.79% at $151.97 at the time of publication on Tuesday, according to Benzinga Pro data. Image via Shutterstock Market News and Data brought to you by Benzinga APIs To add Benzinga News as your preferred source on Google, click here.

[9]

Google Reportedly Pulls Marvell Into a Two-Chip TPU Plan That Could Reshape AI Inference For ASICs

Google is reportedly working with Marvell on the development of two chips, one of which optimizes existing TPUs, & the other one is a next-gen TPU design. Talks between Google & Marvell have commenced on the development of two brand new chips for AI inference, reports The Information. While the exact nature of what stage the talks are currently in remains a mystery, based on the initial assessment that two chips have been proposed by Google, one aiming to boost existing TPUs, & the second chip being a brand new TPU design, it looks like a baseline has been set. The two chips that have been discussed are very different in their purpose. The first one is related to the TPU, but rather than being a custom TPU silicon, it is going to be a memory processing unit that pairs with a TPU. We can think of in-memory processing being one of the aspects where this specific accelerator or IP block will offset some of the memory requirements from the chip or system and send it over to the dedicated MPU. The second chip that has been discussed is a next-gen TPU, which will specifically be optimized for AI inference models. Currently, Google's flagship AI accelerator is its TPU v7 or Ironwood series. TPU v7 offers 192 GB HBM memory, 4614 TFLOPs of peak performance, and is packaged into the Superpod, which is made up of 9216 chips. Based on the reports, we can expect next-generation Google TPUs coupled with the aforementioned MPUs to further accelerate the memory subsystem for faster AI model performance, especially in the inferencing segment.

[10]

Marvell shares gain on report of deal talks with Google to develop two AI chips - The Economic Times

The potential deal could involve two distinct chips: a memory processing unit to complement Google's tensor processing unit and a new TPU built for running AI models, The Information reported on Sunday, citing two people with direct knowledge of the discussions.Marvell Technology's shares jumped 7% in premarket trading on Monday following a report that Alphabet's Google is in talks with the chip designer to develop two new chips aimed at running AI models more efficiently. The potential deal could involve two distinct chips: a memory processing unit to complement Google's tensor processing unit and a new TPU built for running AI models, The Information reported on Sunday, citing two people with direct knowledge of the discussions. Big Tech such as Google and Facebook-parent Meta are moving fast to reduce dependence on external chip suppliers by expanding their custom chip efforts. Google deploys TPUs for training AI models and to respond to user queries, a process known as inferencing, and works with Broadcom to design its chips. The report signals that Google might be looking to diversify from Broadcom amid surging demand for its chips as businesses seek alternatives to Nvidia's pricey chips. Google and Marvell did not immediately respond to Reuters requests for comment. AI lab Anthropic uses a range of chips, including TPUs designed by Google, to develop and run its AI software and chatbot Claude. Last week, Meta extended its deal with Broadcom to produce several generations of custom AI processors. The social media giant paid Broadcom $2.3 billion last year for AI chip design and related services. Both Marvell and its larger rival Broadcom help clients with designing chips, as growing adoption of AI tools boost demand for specialized processors used in advanced data centers powering AI workloads. Marvell trades at 33.35 times the estimates of its earnings for the next 12 months, compared with 27.84 for Broadcom. Average stock rating of 44 analysts covering Marvell is "buy" and their median price target is $125, according to data compiled by LSEG. Marvell is set to add more than $9 billion in its market value of $122.15 billion, if the premarket gains hold.

[11]

MRVL Stock Jumps on Google AI Chip Partnership Talks - Broadcom (NASDAQ:AVGO), Marvell Technology (NASDAQ

The timing stands out as a cluster of memory and storage stocks has been repriced by investors, with MRVL jumping 51.88% over 12 sessions as the market rotated back toward AI infrastructure. MRVL stock is testing its 52-week high. Track it now here. Google's Move Signals A Shift In AI Hardware Google is discussing a memory processing unit with Marvell that would pair with the TPU to speed up data movement during AI workloads, which can throttle performance as models scale. Companies are also exploring a next-generation TPU tuned for AI model runs, with a memory-chip design potentially reaching final form as early as next year before test production. The push fits Google's broader effort to lean harder on in-house silicon rather than relying as much on Nvidia's GPU ecosystem. The report described TPUs as an increasingly meaningful driver behind Google's cloud revenue expansion. That hardware direction has also been showing up in industry deal chatter tied to TPUs. In February 2026, META was reported to have signed a multi-year arrangement to lease Google's TPUs to support advanced model development. Is Marvell Poised For Unprecedented Growth? Marvell's rally has been part of a wider move where investors revisited AI plumbing after a risk-off stretch pressured high-multiple tech valuations. In that 12-session window, MRVL gained 51.88% and logged five straight weekly advances as the market rewarded companies linked to hyperscaler buildouts. That same rotation included large moves across memory and storage names, with Micron up 42.09% in the span and Western Digital up 43.72%, while Seagate climbed 46.73%. Several of those moves were tied to supply and demand signals inside AI data centers, including high-bandwidth memory demand and constrained capacity. Micron's management said HBM capacity for the rest of 2026 was fully spoken for, and the company pointed to AI data centers consuming about 70% of high-end DRAM supply. Unlocking AI Potential Through Strategic Partnerships For Marvell, the Google discussions spotlight where the company plays in the stack: custom silicon and high-speed networking pieces that matter when hyperscalers try to keep compute fed with data. Bank of America has recently put Marvell in the conversation with Advanced Micro Devices as a top AI compute idea. Google and Marvell did not immediately respond to a request for comment on the talks. MRVL Price Action: Marvell Technology shares were up 4.57% at $146.07 at the time of publication on Monday, according to Benzinga Pro. Image: Shutterstock This content was partially produced with the help of AI tools and was reviewed and published by Benzinga editors. Market News and Data brought to you by Benzinga APIs To add Benzinga News as your preferred source on Google, click here.

[12]

Google in talks with Marvell to build new AI chips: The Information

Alphabet's Google is reportedly in discussions with Marvell Technology to co-develop two new chips designed for more efficient AI model execution. One chip will be a memory processing unit to complement Google's TPUs, while the other is a new TPU specifically engineered for AI workloads. Alphabet's Google is in talks with Marvell Technology to develop two new chips aimed at running AI models more efficiently, The Information reported on Sunday citing two people with knowledge of the discussions. One of the chips is a memory processing unit designed to work with Google's tensor processing unit (TPU) and the other chip is a new TPU built specifically for running AI models, the report said. Google has been pushing to make its TPUs a viable alternative to Nvidia's dominant GPUs. TPU sales have become a key driver of growth in Google's cloud revenue as it aims to show investors that its AI investments are generating returns. Reuters could not immediately verify the report. Google and Marvell did not immediately respond to a request for a comment. The companies aim to finalise the design of the memory processing unit as soon as next year before handing it off for test production, according to the report.

[13]

Google Teams Up With Marvell Technology To Build New AI Chips, Invests Heavily In TPU Strategy Amid Nvidi

Custom Chips To Boost AI Performance Google is in discussions with Marvell to develop a memory processing unit designed to work alongside its Tensor Processing Unit and a new TPU specifically optimized for running AI models, Reuters reported on Sunday (via The Information). The chip is aimed at improving how data moves during AI workloads, a key bottleneck in large-scale model performance. The companies are also exploring a next-generation TPU tailored specifically for running AI models more efficiently. The report said the memory chip design could be finalized as early as next year before moving into test production Google and Marvell did not immediately respond to Benzinga's request for comments. TPU Strategy Targets Nvidia's Grip Google has been investing heavily in its in-house chips to reduce reliance on Nvidia's GPUs, which dominate the AI computing market. Sales of TPUs have emerged as a major contributor to Google's cloud revenue growth. Price Action: On Friday, Alphabet Inc. shares rose, with GOOGL closing at $341.68, up 1.68% and GOOG at $339.40, up 1.99%, while both slipped slightly in after-hours trading, according to Benzinga Pro. According to Benzinga Edge Stock Rankings, GOOG is demonstrating strength across short, medium and long-term timeframes, with its Quality score ranking in the 95th percentile. Disclaimer: This content was partially produced with the help of AI tools and was reviewed and published by Benzinga editors. Photo Courtesy: Ascannio on Shutterstock.com Market News and Data brought to you by Benzinga APIs To add Benzinga News as your preferred source on Google, click here.

[14]

Marvell shares gain on report of deal talks with Google to develop 2 AI chips

Marvell Technology's shares jumped six per cent in premarket trading on Monday following a report that Alphabet's Google is in talks with the chip designer to develop two new chips aimed at running AI models more efficiently. The potential deal could involve two distinct chips: a memory processing unit to complement Google's tensor processing unit and a new TPU built for running AI models, The Information reported on Sunday, citing two people with direct knowledge of the discussions. Big Tech companies such as Google and Facebook-parent Meta are investing heavily to reduce dependence on external chip suppliers by expanding their custom chip efforts amid Silicon Valley's intensifying AI rivalry. Google deploys TPUs for training AI models and to respond to user queries, a process known as inferencing, and works with Broadcom to design its chips. The report signals that Google might be looking to diversify from Broadcom amid surging demand for its chips as businesses seek alternatives to Nvidia's pricey chips. AI lab Anthropic uses a range of chips, including TPUs designed by Google, to develop and run its AI software and chatbot Claude. Google and Marvell did not immediately respond to Reuters requests for comment. Last week, Meta extended its deal with Broadcom to produce several generations of custom AI processors. The social media giant paid Broadcom US$2.3 billion last year for AI chip design and related services. Both Marvell and its larger rival Broadcom help clients with designing chips, as growing adoption of AI tools boost demand for specialized processors used in advanced data centers. Last month, Nvidia invested $2 billion in Marvell in an effort to make it easier for customers to use the custom AI chips that Marvell designs with Nvidia's networking gear and central processors. Marvell, which expects its revenue to approach $15 billion in fiscal 2028, is set to add more than $7 billion to its market value of $122.15 billion, if the premarket gains hold. Marvell trades at 33.35 times the estimates of its earnings for the next 12 months, compared with 27.84 for Broadcom.

[15]

JPMorgan says Marvell stock TPU business reports are false By Investing.com

Investing.com - JPMorgan dismissed reports that Marvell Technology (NASDAQ:MRVL) has secured TPU business from Google, calling the claims false in a sector specialist note issued Sunday. The semiconductor stock has surged 171% over the past year to $146.56, trading near its 52-week high, though InvestingPro analysis suggests the shares are currently overvalued relative to its Fair Value estimate. The firm said Google is in discussions with multiple companies but no deal has materialized. JPMorgan noted Marvell is engaged in more substantive talks about an LPU project with Google and another unnamed company, and is also providing assistance on networking. Marvell previously helped bring Grok's LPU to market and is recognized for its custom silicon capabilities. InvestingPro data confirms the company's status as a prominent player in the Semiconductors & Semiconductor Equipment industry, with revenue growth of 42% over the last twelve months. The company's CEO discussed these efforts during a March Madness presentation two weeks ago, though no contracts have been awarded yet. JPMorgan said Marvell's implicit guidance projects 50% growth in optical products this year and 40% next year, below industry expectations for pluggables to double. The firm learned at the OFC conference that transceivers are expected to double this year and again next year. Some investors project Marvell could reach $8 in earnings per share for fiscal year 2028. JPMorgan said sentiment among bulls has improved, with expectations now set appropriately for the company to beat estimates each quarter this year. For deeper insights, InvestingPro offers 23 additional ProTips for MRVL, along with comprehensive Pro Research Reports available for this and 1,400+ other US equities. In other recent news, Marvell Technology has seen a series of positive developments. The company has doubled its net profit over the last five quarters, with its return on equity recently reaching 19%, according to Erste Group. This strong financial performance coincides with a strategic partnership with Nvidia, which has also led to a $2 billion investment from Nvidia, further strengthening Marvell's position in the optical connectivity markets. RBC Capital reiterated an Outperform rating, viewing this investment as a validation of Marvell's capabilities. Additionally, BofA Securities raised its price target on Marvell to $125, maintaining a Buy rating, following the announcement of the strategic partnership with Nvidia aimed at enabling AI infrastructure. GF Securities upgraded Marvell to a Buy rating, expecting growth from optics demand and potential participation in cloud service provider programs. Barclays also upgraded Marvell to an Overweight rating, citing a strong growth outlook in optical technology, with expectations of significant growth despite some market share shifts. These upgrades and strategic developments underline a positive outlook for Marvell Technology in the near term. This article was generated with the support of AI and reviewed by an editor. For more information see our T&C.

Share

Copy Link

Google is negotiating with Marvell Technology to design two new AI chips—a memory processing unit and an inference-optimized TPU—adding a third partner to its custom silicon supply chain alongside Broadcom. The move signals Google's strategy to diversify chip suppliers as inference workloads become the dominant compute cost in AI.

Google Expands Custom AI Chip Strategy with Marvell Technology

Google is in deal talks with Marvell Technology to develop two AI chips designed to run AI models more efficiently, according to a report by The Information citing two people with direct knowledge of the discussions

1

. The potential partnership would involve creating a memory processing unit to complement Google's existing Tensor Processing Unit (TPU) infrastructure and a new TPU built specifically for running AI models during inference2

. The companies aim to finalize the design of the memory processing unit as soon as next year before handing it off for test production4

.

Source: Wccftech

Marvell Technology shares jumped 7% in premarket trading on Monday following the news, positioning the company to add more than $9 billion to its market value of $122.15 billion if gains held

5

. The stock has rallied approximately 50% year to date, with a 30% gain in April alone3

. Meanwhile, Broadcom shares fell nearly 2% Monday as investors assessed the implications of Google potentially diversifying its chip design partnerships2

.Diversifying Beyond Broadcom Without Replacement

The discussions came days after Broadcom, Google's primary custom chip partner, announced a long-term agreement to design and supply TPUs and networking components through 2031

3

. The timing suggests Google is not replacing Broadcom but adding a third design partner to a chip supply chain that already includes Broadcom for high-performance chip variants, MediaTek for cost-optimized variants at 20% to 30% lower cost, and TSMC for fabrication3

. This strategy represents diversification rather than substitution, mirroring how automotive companies manage component suppliers to prevent any single vendor from gaining excessive leverage.Google deploys TPUs for training AI models and to respond to user queries, a process known as inferencing, and has historically worked with Broadcom to design its chips

1

. The move to diversify chip suppliers comes amid surging demand for custom AI chips as businesses seek alternatives to Nvidia's pricey processors5

. Big Tech companies such as Google and Facebook-parent Meta are moving fast to reduce dependence on external chip suppliers by expanding their custom chip efforts1

.

Source: ET

Inference Emerges as Dominant AI Workload Driver

Google's seventh-generation TPU, Ironwood, debuted this month as what the company calls "the first Google TPU for the age of inference," delivering ten times the peak performance of the TPU v5p and scaling to 9,216 liquid-cooled chips in a superpod producing 42.5 FP8 exaflops

3

. Google plans to build millions of Ironwood units this year, and the Marvell-designed AI inference chips would supplement rather than replace Ironwood, potentially targeting different workload profiles or cost points3

.The shift from training to inference as the primary demand driver is reshaping the chip market. Training a frontier AI model is a one-time event requiring enormous compute for weeks or months, while inference runs continuously, serving every query from every user

3

. As AI products reach hundreds of millions of users, inference becomes the dominant expense, making purpose-built inference silicon a competitive advantage that general-purpose GPUs cannot match on cost or efficiency. TPU sales have become a key driver of growth in Google's cloud revenue as it aims to show investors that its AI investments are generating returns4

.Related Stories

Marvell's Growing Custom Silicon Business

Marvell's data center revenue reached a record $6.1 billion in its fiscal year ending February 2026, with total revenue of $8.2 billion, up 42% year over year

3

. The chip designer runs a custom silicon business with a $1.5 billion annual run rate across 18 cloud-provider design wins, building custom AI chips for Amazon's Trainium processors, Microsoft's Maia AI accelerator, and Meta's data processing unit, in addition to existing work with Google on the Axion ARM CPU3

.

Source: Benzinga

Both Marvell and its larger rival Broadcom help clients with designing chips, as growing adoption of AI tools boosts demand for specialized processors used in advanced data centers powering AI workloads

5

. They act in a design-services role, helping customers translate chip designs into silicon and providing back-end support before processors are sent to be manufactured at fabrication plants by companies like Taiwan Semiconductor Manufacturing Company2

.Nvidia invested $2 billion in Marvell at the end of March, partnering through NVLink Fusion to integrate Marvell's custom chips and networking with Nvidia's interconnect fabric, positioning the company at the intersection of both GPU and ASIC ecosystems

3

. In December 2025, Marvell acquired Celestial AI for up to $5.5 billion, gaining photonic interconnect technology for what CEO Matt Murphy called "the industry's most complete connectivity platform for AI and cloud customers"3

. Murphy is targeting 20% market share in custom AI chips and expects roughly 30% year-over-year revenue growth in fiscal 2027.Market Implications and Competitive Landscape

The custom ASIC market is projected to grow 45% in 2026 and reach $118 billion by 2033 as AI hardware development accelerates

3

. Last week, Meta extended its deal with Broadcom to produce several generations of custom AI processors, with the social media giant having paid Broadcom $2.3 billion last year for AI chip design and related services . Meta also committed to deploy 1 gigawatt of its own custom MTIA chips using Broadcom technology2

.Marvell trades at 33.35 times the estimates of its earnings for the next 12 months, compared with 27.84 for Broadcom . The average stock rating of 44 analysts covering Marvell is "buy" with a median price target of $125

5

. AI lab Anthropic uses a range of chips, including in-house TPUs designed by Google, to develop and run its AI software and chatbot Claude, demonstrating the broader ecosystem reliance on these custom AI accelerators5

.References

Summarized by

Navi

[3]

Related Stories

Marvell Technology stock soars 16% as AI chips drive revenue forecast to nearly $11 billion

06 Mar 2026•Business and Economy

Marvell Technology's Stock Plummets Amid Disappointing Data Center Forecast and AI Chip Concerns

29 Aug 2025•Technology

Marvell Technology's AI Ambitions Boost Stock and Analyst Optimism

19 Jun 2025•Business and Economy

Recent Highlights

1

Anthropic overtakes OpenAI as most valuable AI startup with $965 billion valuation

Business and Economy

2

Pope Leo XIV releases major AI encyclical calling for 'disarmament' of artificial intelligence

Policy and Regulation

3

Apple's Siri overhaul for iOS 27 brings Gemini integration and standalone app to compete with ChatGPT

Technology