Graphon AI raises $8.3M to build pre-model intelligence layer solving enterprise data limits

2 Sources

[1]

Graphon AI raises $8.3M seed to build a pre-model intelligence layer for enterprise AI

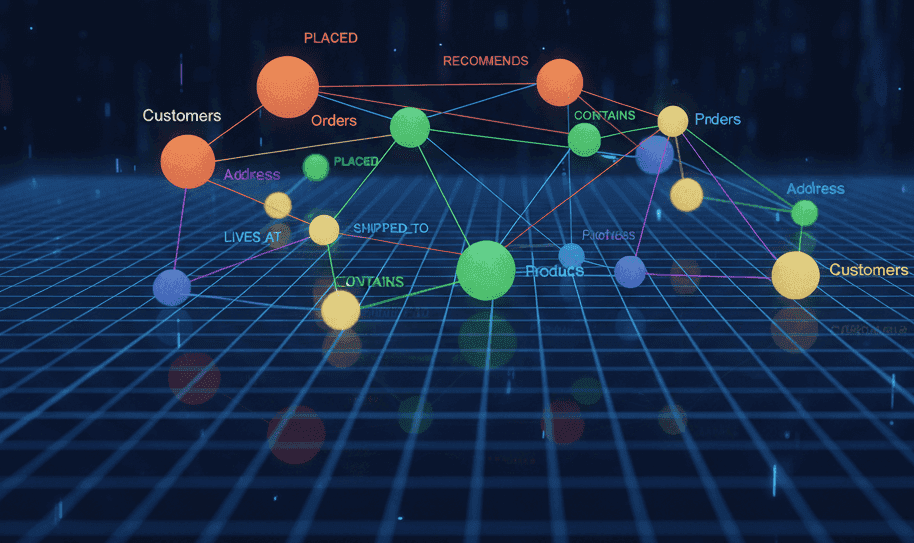

The name is the tell. Graphon AI, which emerged from stealth on Wednesday with $8.3 million in seed funding, is named after a mathematical object that most people in AI have never heard of and that its two most prominent advisors helped invent. A graphon is the limit of a sequence of dense graphs: a continuous function that captures the structure of relationships as networks grow infinitely large. It is the kind of concept that exists at the boundary between pure mathematics and theoretical computer science, and it is now the foundation of a startup that claims to have built the missing layer between enterprise data and the models that are supposed to make sense of it.

The company's thesis is straightforward, even if the mathematics behind it are not. Today's large language models can process roughly one million tokens at a time. Enterprises hold trillions of tokens across documents, video, audio, images, logs, and databases. Retrieval-augmented generation, the current standard approach, can surface relevant content from that mass, but it cannot discover relationships between pieces of data that were never stored together. An LLM using RAG can answer a question about a specific document. It cannot reason about how that document connects to a surveillance video, a compliance log, and a customer database, at least not without someone having already mapped those connections.

Graphon's product sits before the model, not inside it. Using graphon functions, a mathematical framework that extends the academic concept into a software layer, the system ingests multimodal data and automatically discovers relational structure across it, producing what the company calls persistent relational memory. The result, in theory, is a representation of an organisation's data that any foundation model or agent framework can query without being constrained by its context window.
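The graphon definition above can be made concrete with a short sketch. In the academic literature a graphon is usually written as a symmetric function W from the unit square to [0, 1], and a finite graph is sampled from it by giving each node a random position in [0, 1] and connecting each pair with probability W. The code below is a textbook illustration of that sampling procedure, not Graphon's product; the function names and the example graphon W(u, v) = u * v are assumptions chosen for illustration.

```python
import random


def sample_graph(w, n, seed=0):
    """Sample an n-node random graph from a graphon w: [0,1]^2 -> [0,1]."""
    rng = random.Random(seed)
    # Each node gets a latent position drawn uniformly from [0, 1].
    positions = [rng.random() for _ in range(n)]
    edges = set()
    for i in range(n):
        for j in range(i + 1, n):
            # Connect i and j with probability w(x_i, x_j).
            if rng.random() < w(positions[i], positions[j]):
                edges.add((i, j))
    return positions, edges


def edge_density(n, edges):
    """Fraction of the n*(n-1)/2 possible edges that are present."""
    return len(edges) / (n * (n - 1) / 2)


def w_product(u, v):
    # Hypothetical example graphon; its integral over the unit square is 1/4.
    return u * v


if __name__ == "__main__":
    for n in (50, 200, 800):
        _, edges = sample_graph(w_product, n)
        print(n, round(edge_density(n, edges), 3))
```

As the number of nodes grows, the edge density of the sampled graphs should settle near the integral of W over the unit square, which is 1/4 for this example. That is the sense in which a single continuous function summarises the structure of ever-larger graphs.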
The founding team comprises Arbaaz Khan as chief executive, Deepak Mishra as chief operating officer, and Clark Zhang as chief technology officer. The company says its broader team includes former researchers and engineers from Amazon, Meta, Google, Apple, NVIDIA, Samsung AI Center, MIT, Rivian, and NASA.

More notable, perhaps, are the technical advisors. Jennifer Chayes, dean of the College of Computing, Data Science, and Society at UC Berkeley, and Christian Borgs, a UC Berkeley computer science professor, are both listed as advisors. Borgs was among the group of researchers, alongside Chayes, László Lovász, Vera Sós, and Katalin Vesztergombi, who formalised the graphon as a mathematical concept in 2008. The company is, in effect, commercialising a framework that its advisors co-invented.

Chayes and Borgs described the approach in a joint statement as one that treats relational structure as a first-class element of the AI stack rather than something to be inferred after the fact. The distinction matters because most current AI systems treat data as collections of individual items to be retrieved, not as networks of relationships to be preserved.

The seed round was led by Arvind Gupta of Novera Ventures, who made Graphon his fund's first investment from its flagship vehicle. Gupta is better known as the founder of IndieBio, the life-sciences accelerator, and his pivot toward an AI infrastructure company suggests he sees structural overlap between the problems Graphon addresses and the complex, multimodal data challenges that define scientific computing.

The rest of the cap table reads like a deliberate exercise in strategic diversity. Perplexity Fund, the $50 million venture arm of the AI search company, participated alongside Samsung Next, Hitachi Ventures, GS Futures (the venture arm of South Korean conglomerate GS Group), Gaia Ventures, B37 Ventures, and Aurum Partners, the investment fund affiliated with the ownership group of the San Francisco 49ers.
The mix is telling. A search-AI company, a consumer electronics giant, a Japanese industrial conglomerate, and a Korean chaebol all investing in the same pre-model data layer suggests that the context-window problem Graphon claims to solve is felt across industries that otherwise have little in common.

GS Group, which ranks among South Korea's largest conglomerates with interests spanning energy, retail, and construction, is also an early customer. Ally Kim, a vice president at GS, said the company's multimodal AI solutions have been applied to analysing customer movement in convenience stores and enhancing safety through CCTV analysis at construction sites.

Graphon's positioning reflects a broader shift in the AI infrastructure market. The past three years have been dominated by a race to build larger models with longer context windows. But even the most capable models still hit a ceiling: they can process more tokens, but they cannot maintain relational awareness across the volumes of data that large organisations generate. The question Graphon is betting on is whether the solution lies not in extending the context window further, but in structuring data before it enters the window at all.

The company says it has already deployed its platform for enterprise content management, industrial intelligence, agentic workflows, and on-device applications across phones, cameras, wearables, and smart glasses. The breadth of claimed use cases is ambitious for a company at the seed stage, and the absence of independent benchmarks or detailed customer case studies beyond GS Group makes it difficult to assess how far the technology has progressed from concept to production.

What is clear is that the problem Graphon describes is real. The gap between what LLMs can theoretically do and what they can actually do with enterprise data remains one of the most significant constraints on AI deployment.
Retrieval-augmented generation has been the industry's primary answer, and its limitations (flat retrieval that misses cross-dataset relationships, and context windows that force artificial boundaries on what the model can see) are well documented. Whether graphon functions offer a fundamentally better approach or merely a more theoretically elegant version of graph-based data structuring is the question the company will need to answer as it moves from stealth-mode mathematics to production-grade infrastructure.

The $8.3 million gives it runway to try. The advisors who co-invented the underlying mathematics give it credibility. But in an AI market that has seen no shortage of startups claiming to have found the missing layer, Graphon's challenge will be proving that the mathematics it is named after translates into a measurable improvement in how foundation models handle the messy, multimodal reality of enterprise data, not just in theory, but at the scale where theory stops being sufficient.

[2]

Graphon reels in $8.3M for its persistent relational memory platform - SiliconANGLE

Graphon Inc., a startup with technology that makes artificial intelligence models better at processing large datasets, launched today with $8.3 million in funding. Novera Ventures led the seed round. It was joined by more than a half dozen others including the venture capital arms of Perplexity AI Inc., Samsung Electronics Co. and Hitachi Ltd.

Today's most advanced large language models have a context window of 1 million tokens. That means a single prompt can only contain up to 1 million tokens worth of data, which corresponds to a few thousand pages of text. LLMs' context window limits their ability to process larger datasets.

AI developers get around the context window limit using RAG, or retrieval-augmented generation, tools. Those are software modules that can analyze a dataset with more than 1 million tokens, extract key records and make them available to an LLM. RAG tools can also prioritize the records they extract based on their relevance to a given prompt. However, they struggle to identify connections between records, which limits their usefulness. For example, a RAG system that extracts malware signals from a large cybersecurity dataset may not be capable of determining whether those signals describe different cyberattacks or a single hacking campaign.

Graphon has developed a software platform that addresses the challenge. It can analyze a dataset with more than 1 million tokens, identify key patterns and save them to a so-called persistent relational memory. LLMs can then extract the patterns from the persistent memory without hitting their context limits.

Graphon's platform reportedly identifies patterns in datasets using small AI models with about 200 million parameters. Those models carry out processing with the help of graphs. A graph is a data structure that contains information about relationships between objects. Such data structures lend themselves well to, among other tasks, representing useful patterns in business datasets processed by LLMs.

Graphon's platform also makes use of mathematical objects called graphon functions. They can be used to scan a business dataset stored as a graph for records that are connected to one another. Christian Borgs, a computer scientist who helped invent graphons, is a technical advisor to Graphon.

"AI has spent the last decade learning to mimic language," said Graphon founder and Chief Executive Officer Arbaaz Khan. "But the world isn't made of tokens, it's made of relationships. By preserving that structure, we make foundation models more accurate and more useful at enterprise scale."

Graphon is one of several venture-backed startups working to increase the amount of data that LLMs can ingest.
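The cybersecurity example in this article, where a retriever returns individual malware signals but cannot say whether they belong to one campaign, can be sketched as a graph problem. The snippet below treats each signal as a node, links signals that share an indicator, and groups them into connected components with a union-find structure. It is a generic illustration of relationship discovery over records, not a description of how Graphon's platform works; the records, field names, and `group_campaigns` function are all hypothetical.

```python
from collections import defaultdict

# Hypothetical malware signals; fields and values are invented for illustration.
signals = [
    {"id": "s1", "ip": "10.0.0.5", "hash": "abc"},
    {"id": "s2", "ip": "10.0.0.5", "hash": "def"},
    {"id": "s3", "ip": "10.0.0.9", "hash": "def"},
    {"id": "s4", "ip": "172.16.1.1", "hash": "xyz"},
]


def group_campaigns(records, keys=("ip", "hash")):
    """Group records that share any indicator value, directly or transitively."""
    parent = {r["id"]: r["id"] for r in records}

    def find(a):
        # Follow parent links to the group representative, compressing the path.
        while parent[a] != a:
            parent[a] = parent[parent[a]]
            a = parent[a]
        return a

    def union(a, b):
        parent[find(a)] = find(b)

    first_seen = {}  # (field, value) -> id of the first record carrying it
    for r in records:
        for k in keys:
            indicator = (k, r[k])
            if indicator in first_seen:
                union(r["id"], first_seen[indicator])
            else:
                first_seen[indicator] = r["id"]

    groups = defaultdict(list)
    for r in records:
        groups[find(r["id"])].append(r["id"])
    return sorted(sorted(g) for g in groups.values())


if __name__ == "__main__":
    print(group_campaigns(signals))
```

In this toy data, s1 and s3 share no indicator directly, yet they land in the same group because s2 links them through a shared IP address and a shared file hash. A retriever that ranks each record independently against a prompt has no built-in way to surface that kind of transitive link.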


Graphon AI emerged from stealth with $8.3 million in seed funding led by Novera Ventures to commercialize a mathematical framework that addresses how large language models struggle with enterprise-scale data. The startup's platform uses graphon functions to create persistent relational memory, allowing AI models to understand relationships across trillions of tokens without hitting context window limits.

Graphon AI Secures $8.3M Seed Funding for Novel Data Architecture

Graphon AI emerged from stealth on Wednesday with $8.3 million in seed funding to build what it describes as the missing layer between enterprise data and AI models [1]. Novera Ventures led the round, with participation from Perplexity Fund, Samsung Next, Hitachi Ventures, GS Futures, Gaia Ventures, B37 Ventures, and Aurum Partners [1][2]. The investment marks Novera Ventures' first from its flagship vehicle, with founder Arvind Gupta, previously known for launching IndieBio, signaling a strategic shift toward AI infrastructure [1].

The founding team comprises Arbaaz Khan as chief executive, Deepak Mishra as chief operating officer, and Clark Zhang as chief technology officer, supported by former researchers and engineers from Amazon, Meta, Google, Apple, NVIDIA, Samsung AI Center, MIT, Rivian, and NASA [1].

Addressing the Context-Window Problem in Enterprise AI

Today's most advanced large language models can process roughly one million tokens at a time, yet enterprises hold trillions of tokens across documents, video, audio, images, logs, and databases [1][2]. Current approaches using retrieval-augmented generation (RAG) can surface relevant content from that mass but struggle to discover relationships between pieces of data that were never stored together [1]. A RAG system that extracts malware signals from a large cybersecurity dataset, for instance, may not determine whether those signals describe different cyberattacks or a single hacking campaign [2].

Graphon's pre-model intelligence layer sits before the model rather than inside it, using graphon functions to ingest multimodal data and automatically discover relational structures across it [1]. The system produces what the company calls persistent relational memory, creating a representation of an organization's data that any foundation model or agent framework can query without being constrained by its context window [1].
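The scale gap described in this section can be put into rough numbers. The sketch below uses the one-million-token window cited above and, as an assumption, takes "trillions of tokens" at its lower bound of one trillion; the constant and function names are illustrative only.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000      # window size cited in the article
CORPUS_TOKENS = 1_000_000_000_000      # assumption: lower bound for "trillions"


def windows_needed(corpus_tokens, window_tokens):
    """Number of full context windows needed to read the corpus once."""
    # Integer ceiling division avoids floating-point rounding on large values.
    return -(-corpus_tokens // window_tokens)


def fraction_visible(corpus_tokens, window_tokens):
    """Share of the corpus a single prompt can hold, capped at 1.0."""
    return min(1.0, window_tokens / corpus_tokens)


if __name__ == "__main__":
    print(windows_needed(CORPUS_TOKENS, CONTEXT_WINDOW_TOKENS))
    print(fraction_visible(CORPUS_TOKENS, CONTEXT_WINDOW_TOKENS))
```

Under these assumptions a single prompt sees about one millionth of the corpus, and reading everything once would take a million separate windows, none of which can see the contents of the others.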


Commercializing Mathematical Concepts Through Graphons

The company takes its name from a mathematical object that exists at the boundary between pure mathematics and theoretical computer science. A graphon is the limit of a sequence of dense graphs: a continuous function that captures the structure of relationships as networks grow infinitely large [1]. The concept was formalized in 2008 by researchers including Jennifer Chayes and Christian Borgs, both now technical advisors to Graphon AI [1][2].

Graphon's platform identifies patterns in datasets using small AI models with about 200 million parameters, according to SiliconANGLE [2]. These AI models carry out processing with the help of graphs, which are data structures that contain information about relationships between objects. Graphon functions can scan a business dataset stored as a graph for records that are connected to one another [2].

Strategic Investor Mix Signals Cross-Industry Demand

The investor roster reflects deliberate strategic diversity spanning search AI, consumer electronics, industrial conglomerates, and Korean chaebols [1]. GS Group, among South Korea's largest conglomerates with interests spanning energy, retail, and construction, is also an early customer. Ally Kim, a vice president at GS, said the company's multimodal AI solutions have been applied to analyzing customer movement in convenience stores and enhancing safety through CCTV analysis at construction sites [1]. This cross-industry participation suggests the context-window problem Graphon addresses affects organizations with little in common beyond their struggle to apply AI models to complex, relational datasets.

"AI has spent the last decade learning to mimic language," said Khan. "But the world isn't made of tokens, it's made of relationships. By preserving that structure, we make foundation models more accurate and more useful at enterprise scale" [2]. Chayes and Borgs described the approach as one that treats relational structure as a first-class element of the AI stack rather than something to be inferred after the fact [1].

Summarized by Navi
