Meta AI introduces parental supervision feature showing topics teens discuss with chatbots

8 Sources

[1]

Meta will now allow parents to see the topics their child discussed with Meta AI | TechCrunch

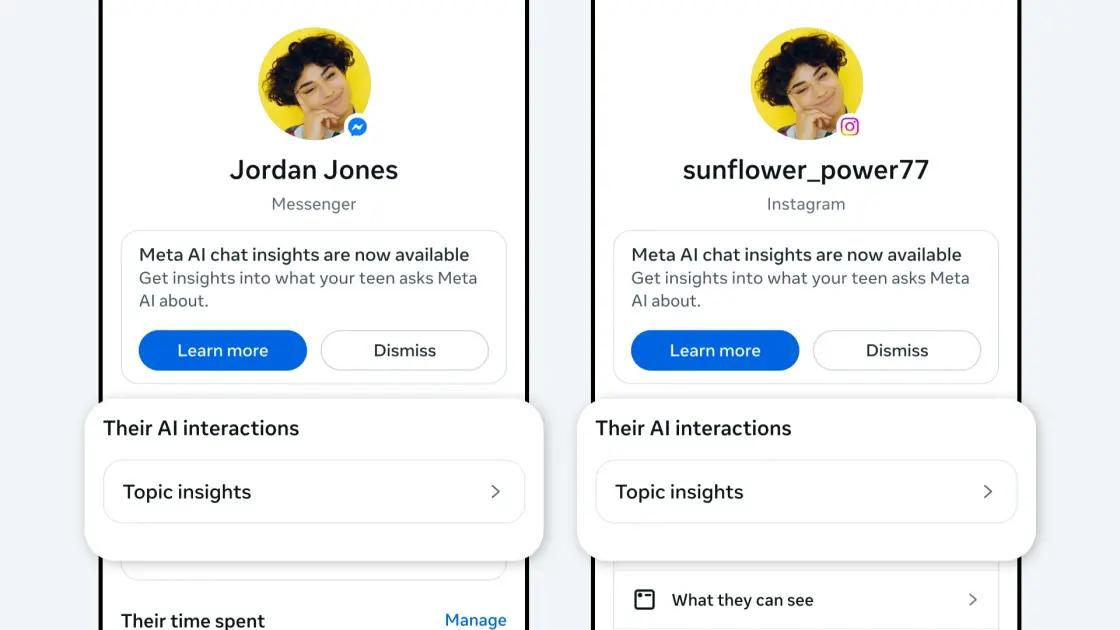

Meta announced on Thursday that parents using its supervision tools can now see the topics their teen has asked Meta AI about in the past week on Facebook, Messenger, or Instagram. Parents will see a new "Insights" tab within the supervision hub showing the topics their teen has been discussing with the AI chatbot. Topics can range from "School," "Entertainment," and "Lifestyle" to "Travel," "Writing," and "Health and Wellbeing," among others, Meta says. Parents can select a topic to see the subcategories that fall within each one. For example, "Lifestyle" breaks down into fashion, food, and holidays, while "Health and Wellbeing" covers fitness, physical health, and mental health. The update is now available in the U.S., U.K., Australia, Canada, and Brazil, and will roll out globally in the coming weeks. Meta first previewed these insights back in October when it said it was developing new tools to help parents guide their teens through AI. Other previewed tools would have allowed parents to block access to specific AI characters or disable them entirely. However, Meta suspended teens' access to its AI characters globally across all of its apps in January, saying it planned to develop an updated version specifically for teens. For those unfamiliar, Meta AI characters are interactive AI personas with distinct personalities, designed for users to engage with as if they were real people filling specific roles -- like a chef -- or as recognizable celebrities, such as Snoop Dogg and Paris Hilton. Meta suspended teens' access to these characters just days before a lawsuit against it was set to go to trial in New Mexico, in which the social media giant was accused of failing to protect minors on its platforms. Meta ultimately lost the case, marking the first time a court has held the company legally liable for endangering child safety. That case is one of many lawsuits that Meta and other Big Tech companies are facing over child safety. 
Given the timing, it's not surprising that Meta halted access to the AI characters or that it's now looking to inform parents about what their child is discussing with Meta AI. Meta also announced on Wednesday that it is giving parents suggested conversation starters intended to help them talk openly and without judgment about their teens' experiences with AI. Additionally, the company says it is launching a new AI Wellbeing Expert Council to help shape the development of its AI products for teens.

[2]

Parents Can Now See What Their Kids Are Asking Meta AI About

Meta is giving parents more insight into how their teens use AI on its platforms. The company said Thursday that parents can learn what topics their children are asking AI about over the previous week on Facebook, Messenger or Instagram -- apps owned by the Mark Zuckerberg-run company. While the move is intended to support the safety of children on popular social platforms, experts said it's no substitute for good content moderation and safe design, and they warn it could have unintended consequences by reducing teens' privacy. The new feature is called AI Insights and is now available for parents who are supervising Teen Accounts in the US, UK, Australia, Canada and Brazil. Meta will roll out AI Insights globally in the coming weeks. Teen Accounts are specially designed experiences for teens on the platforms with stricter default privacy and content settings. Insights follows on the heels of other safeguards Meta introduced for parents and kids regarding their use of AI. In October, the company said parents could stop kids from interacting with chatbot characters or block specific characters. A character is a fictional being created by AI. Zuckerberg and his company have been raked over the coals over the past few years when it comes to the mental health of children. Last month, Meta was ordered to pay $375 million after being found liable in a child exploitation case and was also found liable in a California case in which a woman alleged Instagram and YouTube were designed to be addictive to children. A representative for Meta didn't immediately respond to a request for comment. 
More than 40 US states filed lawsuits against Meta in 2023, alleging that the company is trying to addict children to its apps and thus contributing to a youth mental health crisis. If parents are using supervision for their children on Facebook, Messenger or Instagram, they will now see a tab labeled "Insights," both in the apps themselves and on the web. (Parents can enable supervision in Meta's Family Center for their kids ages 13 to 17 who are using Teen Accounts.) After clicking on the Insights tab, parents can see which topics their kids have asked Meta AI about over the past seven days. The company said those topics could include school, entertainment, lifestyle, travel, writing, health and others. There are also categories within each topic: fashion, food and holidays in lifestyle, for example, and fitness, physical health and mental health in health and wellbeing. When parents click on topics, they can see the categories that their kids have asked Meta AI about. If teens ask AI about suicide or self-harm on Instagram, parents will be alerted, a feature the company added in February. Meta also said that, in collaboration with the Cyberbullying Research Center, it has developed 11 conversation starters for parents to speak to their kids about AI. Parents can access them via a link on the Insights tab. In its news announcement on Thursday, Meta said it is trying to "make parental supervision even more valuable for parents." The company said that the number of US teens enrolled in supervision has doubled over the past year. The feature shifts responsibility for content moderation to parents, but it could also be harmful to children in potentially abusive family environments by giving parents a surveillance tool, said Ardath Whynacht, an associate professor in sociology at Mount Allison University in New Brunswick, Canada, who specializes in mental health and family violence. 
"Parental surveillance is not content moderation," Whynacht told CNET. "As companies like Meta do less content moderation, they expose children and youth to harm more frequently. It shouldn't be the parent's job to make the product less harmful." Whynacht, who has worked in prisons and with youth with mood disorders and psychosis, said queer and trans youth could suffer the most from parent surveillance. "Many turn to digital spaces to find support," Whynacht said. "Fear of parental surveillance might force children into even more unsafe corners of the web." "It's a sad fact that kids often need protection from their parents as much as they need protection from harms online," she added. Meta's new feature is a "step in the right direction," but it's not nearly enough of a safeguard, Donna Rice Hughes, CEO of the online child safety organization Enough is Enough, told CNET. "Meta cannot be trusted when it comes to teen safety and continues to put profits over safety," Hughes said, pointing to the company's lobbying efforts to kill the Kids Online Safety Act in the US House in 2024. Hughes said parents should use whatever online parental controls are available, such as Meta's new Insights feature, but also should have frequent conversations with their children about online safety. And control tools should be more robust and effective and implemented by all of Big Tech, not just Meta. "Parents simply can't continue to shoulder this burden alone," Hughes said.

[3]

Meta Will Let Parents See What Their Kids Are Discussing With Its AI

An Insights tab will display the topics teens have talked about with Meta AI in the last week. Meta already notifies parents when their teens discuss self-harm or suicide on Instagram. It is now taking an additional step to ensure parents know what their teens discuss with Meta AI. Starting today, parents using supervision on Facebook, Messenger, or Instagram will see a new Insights tab. It includes an option called "Their AI interactions," which lists out the topics their teens discussed with Meta's chatbot over the last seven days. Topics include school, entertainment, lifestyle, travel, writing, health and wellbeing, and others, with sub-topics in each, Meta says. For example, lifestyle could include fashion, food, and holidays, while health and well-being could include physical health and mental health. Parents can tap on the main topic to learn more. At launch, Insights will be available to parents supervising Teen Accounts in the US, UK, Australia, Canada, and Brazil. A global rollout will follow in the coming weeks. By default, Meta assigns Teen Accounts to all users under 16. Meta is facing several lawsuits accusing it of designing addictive experiences; CEO Mark Zuckerberg recently testified in one of them. Last month, the company was ordered to pay $375 million for failing to block child exploitation on its apps. To ensure its AI experience is safer for kids, Meta has established an AI Wellbeing Expert Council, "a group of experts who will provide ongoing input on our AI experiences for teens, to help make sure they continue to be safe and age-appropriate," Meta says. Employees will meet with the council regularly to share updates on their AI features and gather feedback.

[4]

Meta will show parents the topics of their teens' AI conversations

With countries banning social media for kids left and right, Meta is trying different things to convince parents that its platforms are safe for teens. In its latest effort, the company will start showing parents the topics their teens have discussed with Meta AI over the previous seven days. "Parents will be able to see the topics their teen has been asking Meta AI about in [Facebook, Messenger or Instagram] over the past week," Meta explained in a blog post. "Topics can range from School, Entertainment, and Lifestyle to Travel, Writing, and Health and Wellbeing, among others." For parents overseeing Meta's teen accounts, the feature will appear in a new Insights tab within supervision, both in-app and on web. Parents can tap on a topic to see the different categories within each: for instance, sub-categories within Lifestyle include fashion, food and holidays, while fitness, physical health and mental health are part of the Health and Wellbeing topic. Meta also worked with the Cyberbullying Research Center to develop what it calls "conversation starters," or open-ended questions parents can ask teens about their experience with AI. Meta provides detail about what the questions are designed to address, and they can be found on the Family Center website or through a link in the new Insights tab. Finally, Meta revealed more detail about its AI Wellbeing Expert Council, which will provide "ongoing input on our AI experience for teens." It will be made up of three existing advisory groups as well as new members with special expertise in responsible and ethical AI, who are affiliated with the National Council for Suicide Prevention and multiple universities. It's worth noting that Meta has a separate oversight board that deals with subjects ranging from AI to moderation. Offloading moderation chores to busy parents appears to be par for the course for Meta these days. 
The company has recently cut back on the use of third-party vendors that help with content moderation, shifting responsibility instead to advanced AI systems, according to recent reports. The dangers of AI for teens have been one of multiple reasons countries like Spain have banned social media platforms for kids. One of the most recent and tragic cases was in Canada, where a teen was provided specific details by OpenAI's ChatGPT about how to carry out a school shooting. Another such case is under investigation in Florida, and AI systems have been implicated in multiple teen suicides as well.

[5]

Meta AI parental supervision now includes reviewing kids' AI topics

Parents worried about what their teen is discussing with Meta's AI Assistant will now be able to view topics of conversation through a Teen Account parental supervision tool. Meta announced the feature Thursday in a blog post. The information will be available via an Insights tab in the supervision tool for the platforms Instagram, Facebook, and Messenger, all of which are owned by Meta. The feature lists broader topics, such as school, entertainment, writing, health, and wellbeing. Parents can click on the topic for additional but limited detail. The health and wellbeing categories, for example, can include fitness, physical health, and mental health. The information only covers the past seven days of exchanges. The feature is the latest safety measure Meta has implemented under intense legal and media scrutiny. Meta recently lost two separate landmark trials related to child safety protections and the allegedly addictive design of its products. The company said it will appeal both verdicts. The child safety lawsuit, which took place this year in New Mexico, yielded internal Meta documents demonstrating that the company's leadership knew its persona-driven AI companions, or "characters," could engage in inappropriate and sexual interactions and still launched them without stronger controls. Last August, Meta locked down its AI characters for teen users amid reports that they were inappropriately engaging with minors, including in discussions about self-harm, suicide, and romantic interactions. In October, the company provided parents with the ability to turn off one-to-one AI character conversations and block specific characters. In January, though, Meta again restricted teen access to characters as its AI assistant remained available. A Meta spokesperson confirmed to Mashable that AI characters are paused for teens globally as the company continues to build parental controls. 
In addition to the latest parental supervision feature, Meta partnered with the Cyberbullying Research Center to create a list of "conversation starters" about AI chatbot use. The company also announced the formation of a new AI Wellbeing Expert Council assembled to provide "ongoing input" on AI teen experiences. Meta said the experts are affiliated with the National Council for Suicide Prevention, the University of Michigan, and Northeastern University, among other institutions.

[6]

Meta will let parents see children's chats with AI and intervene before risks spiral

Meta's new teen AI supervision tools don't hand parents a transcript; they hand them just enough context to have the right conversation before something goes wrong.

Meta has been in hot water over teen safety and AI for a while now. A Wall Street Journal investigation, a lost lawsuit in New Mexico, and an FTC inquiry later, the company is finally putting in place some meaningful parental supervision tools. Today, Meta is introducing a new Insights tab in its supervision hub across three of its most popular platforms: Instagram, Facebook, and Messenger. While the name doesn't make it clear right away, the feature gives parents a window into what their teenagers are actually discussing with Meta AI on all these apps.

What can parents actually see?

While parents won't get a word-for-word transcript of their children's conversation with Meta AI, the new Insights tab provides weekly topic summaries. It covers broad categories like School, Entertainment, Lifestyle, Travel, Writing, and Health, each with its own subcategories. Health and Wellbeing, for instance, covers topics like fitness, physical health, and mental health. The hope here is that the categories will provide enough context for parents to identify a concerning pattern among the topics without actually reading all the messages. Beyond topic visibility, parents can also decide which AI characters their child can access, and even shut down character chats entirely while keeping the AI assistant available for general use cases, such as doing homework or answering everyday queries. Meta is also developing dedicated alerts for sensitive topics, which, I believe, is the most effective way to notify parents about any concerning chats. If a teen's AI conversation is about self-harm, or worse, veers into suicide, parents will be informed directly.

Who is this available to?

For now, the Insights tab is available in the United States, the United Kingdom, Australia, Brazil, and Canada. A global rollout is expected in a few weeks. Meta has also collaborated with the Cyberbullying Research Center to develop conversation starters: prompts that can help parents open non-judgmental discussions about AI with their teens. It's important to highlight that the company's move comes under significant legal and regulatory pressure, not noble instinct, and that difference matters. If the sensitive topic alert system performs well, it could set an industry standard for how AI or social media platforms handle young, vulnerable users.

[7]

Meta's new AI feature lets parents track teen conversations | Stuff

A new Insights feature reveals weekly AI conversation topics across Instagram, Messenger and Facebook

The best smartphones are probably running at least one of Meta's apps. Now, the company is trying to get ahead of growing concerns around teens and Meta AI by giving parents a clearer window into what's actually being asked behind the scenes. In a newly announced update, Meta will let parents see the topics their teens have discussed with its AI assistant over the past seven days - for teens using supervised accounts. The feature is available now in regions including the UK, US and several others, and sits inside a new Insights tab within parental supervision tools on Facebook, Instagram and Messenger. The key detail lies in what parents can actually see. Rather than full chat logs, Meta is surfacing broad categories like School, Entertainment, Health and Wellbeing, or Lifestyle. Tap one, and you'll get more granular sub-topics like fitness, fashion, or travel. That means parents won't be reading conversations word-for-word, but they will get a reasonably clear sense of what their teen is using AI for. Topics are shown within each individual app, rather than as one combined feed. Meta says this is intentional. The company frames it as a balance between visibility and privacy - giving parents insight without exposing every message. Even if the AI refuses to answer something (for example, if it's deemed inappropriate), the topic itself can still show up in the dashboard. There's also a more serious layer being worked on. Meta says it's developing alerts for conversations related to suicide or self-harm, which would notify parents more directly if a teen tries to engage with those topics. This builds on a wider push to make its AI tools feel safer for younger users. Meta says its assistant is inspired by 13+ film rating guidelines, meaning it may refuse certain queries or redirect users to support resources instead. 
Alongside the tracking tools, Meta is also trying to shape how parents respond. It's partnered with the Cyberbullying Research Center to create "conversation starters" - essentially prompts designed to help parents talk to teens about their AI use without turning it into an interrogation. Whether this all feels like reassurance or overreach will depend on where you sit. For some, a weekly snapshot of AI topics might sound like a useful safety net. For others, it edges uncomfortably close to surveillance - even if the actual conversations stay hidden.

[8]

Parents can now see what their children ask Meta AI, here is how

The feature is available in a few countries for now, with a wider rollout coming soon. Meta has recently rolled out a new feature that helps parents keep an eye on how their teenagers use the company's AI on apps like Facebook, Messenger, and Instagram. The goal is to help families understand online behaviour and make sure teens use AI safely. Right now, this feature is only available in the United States, United Kingdom, Australia, Canada, and Brazil, with a global rollout expected in the coming weeks. Meta first previewed this initiative in October last year, stating its goal to build tools that support parents in guiding their teens through rapidly evolving AI experiences online. The new feature is located in the Insights tab within the supervision hub. It groups teen interactions under general categories such as school, entertainment, lifestyle, travel, writing, and health and wellbeing. Each category can be expanded into subtopics. For example, lifestyle can be further subcategorised into fashion, food, and holidays, while health and wellbeing can include fitness, physical health, and mental health. This setup gives parents a general idea of what their teen is asking about without letting them read actual conversations. This development follows a period of scrutiny for the company. Earlier this year, Meta suspended teen access to its AI characters, which included role-based personas and celebrity-style figures. The decision came just before a legal case in New Mexico, where the company was found liable for failing to protect minors.

Follow the simple steps below to check what your teenager has discussed with Meta AI:

1. Open the supervision hub on Facebook, Messenger, or Instagram.
2. Navigate to the new Insights tab.
3. View the list of topic categories your teen has interacted with.
4. Tap on a category to explore related subtopics.
5. Use the information as a starting point for discussion with your teen.

Meta has also created a group of experts called the AI Wellbeing Expert Council. This group will help make AI safer for young users.

Meta now allows parents to view topics their teens discuss with Meta AI through a new Insights tab. The feature covers conversations from the past seven days across Facebook, Messenger, and Instagram. This move follows child safety lawsuits, including a $375 million penalty for failing to protect minors on its platforms.

Meta AI Expands Parental Supervision Tools with New Insights Tab

Meta announced Thursday that parents using parental supervision tools can now view topics their teenagers discussed with Meta AI over the past week on Facebook, Messenger, or Instagram [1]. The new AI Insights tab appears within the supervision hub for parents supervising teens' accounts, displaying broad categories ranging from school and entertainment to lifestyle, travel, writing, and health and wellbeing [2].

Parents can select a topic to see subcategories that fall within each one. For example, lifestyle breaks down into fashion, food, and holidays, while health and wellbeing covers fitness, physical health, and mental health [3]. The update is now available in the U.S., U.K., Australia, Canada, and Brazil, with a global rollout planned for the coming weeks [1].

Legal Pressure Drives Focus on Teen Safety in AI Interactions

The timing of this feature reflects mounting legal pressure on Meta regarding children's online safety. Last month, Meta was ordered to pay $375 million after being found liable in a child exploitation case, marking the first time a court held the company legally responsible for endangering child safety [1]. The company also lost a California case alleging Instagram was designed to be addictive to children [2].

More than 40 U.S. states filed child safety lawsuits against Meta in 2023, alleging the company is trying to addict children to its apps and contributing to a youth mental health crisis [2]. Meta first previewed these insights back in October when it said it was developing new tools to help parents guide their teens through AI [1].

AI Characters Suspended Amid Safety Concerns

Meta suspended teens' access to AI characters globally across all of its apps in January, saying it planned to develop an updated version specifically for teens [1]. These AI characters are interactive personas with distinct personalities, designed for users to engage with as if they were real people filling specific roles or as recognizable celebrities like Snoop Dogg and Paris Hilton [1].

Internal Meta documents from the New Mexico trial demonstrated that company leadership knew its persona-driven AI companions could engage in inappropriate and sexual interactions and still launched them without stronger controls [5]. Meta confirmed that AI characters remain paused for teens globally as the company continues to build parental controls [5].

Expert Concerns About Content Moderation and Teen Privacy

While Meta positions this as a step toward safer AI chatbot use, experts warn the feature shifts responsibility from Big Tech to parents. "Parental surveillance is not content moderation," said Ardath Whynacht, an associate professor in sociology at Mount Allison University who specializes in mental health and family violence. "As companies like Meta do less content moderation, they expose children and youth to harm more frequently" [2].

Whynacht also raised concerns about teen privacy, particularly for vulnerable youth, saying queer and trans youth could suffer the most from parental surveillance. "Many turn to digital spaces to find support," she said. "Fear of parental surveillance might force children into even more unsafe corners of the web" [2].

Additional Safety Measures and Expert Guidance

Meta partnered with the Cyberbullying Research Center to develop 11 conversation starters for parents to speak with their kids about AI [2]. Parents can access these through a link on the Insights tab [4]. If teens ask AI about suicide or self-harm on Instagram, parents will be alerted, a feature the company added in February [2].

The company also announced the formation of an AI Wellbeing Expert Council to provide ongoing input on AI experiences for teens [3]. The council comprises experts affiliated with the National Council for Suicide Prevention, the University of Michigan, and Northeastern University, among other institutions [5]. Meta said the number of U.S. teens enrolled in supervision has doubled over the past year [2].
