SK hynix begins mass production of 192GB SOCAMM2 memory for NVIDIA Vera Rubin AI platform

4 Sources

[1]

SK hynix Ships 192GB SOCAMM2 Memory for NVIDIA Rubin AI Platform

SK hynix has started mass production of its 192GB SOCAMM2 memory module, targeting AI server deployments built around the NVIDIA Vera Rubin architecture. The new module is based on LPDDR5X memory and manufactured using a 1c-class DRAM process, focusing on improving bandwidth and efficiency for large-scale AI workloads. SOCAMM2, short for Small Outline Compression Attached Memory Module 2, represents a different approach compared to conventional server memory. Instead of traditional RDIMM designs, it uses a compact module layout combined with a compression-based connector that enhances signal stability. This allows higher memory density within a smaller footprint, which is increasingly important as data centers aim to maximize compute density. According to SK hynix, the 192GB SOCAMM2 module delivers more than double the bandwidth of standard RDIMM configurations while improving power efficiency by over 75 percent. These gains are particularly relevant for AI workloads such as large language model training and inference, where memory throughput can become a limiting factor. Higher bandwidth reduces latency in data movement, while improved efficiency helps control overall system power consumption. The use of LPDDR5X technology is notable because it originates from mobile memory designs, traditionally focused on low power usage. Bringing this architecture into server environments reflects a broader industry shift toward optimizing performance per watt rather than relying solely on higher clock speeds. This approach is becoming critical as AI infrastructure scales and power constraints become more pronounced. Integration with NVIDIA's Rubin platform highlights a trend toward tightly coupled hardware ecosystems. Memory, compute, and interconnect components are increasingly designed to work together as a unified system, rather than as separate elements. 
In this context, SOCAMM2 is positioned as a key enabler for improving system-level efficiency and throughput in AI-focused data centers. As demand for AI processing continues to grow, solutions like SOCAMM2 aim to address both performance bottlenecks and energy efficiency challenges. By increasing bandwidth while reducing power draw, SK hynix is targeting one of the core limitations in modern AI systems, helping enable higher-density deployments without proportionally increasing power and cooling requirements.

[2]

SK hynix begins mass production of 192GB SOCAMM2 memory for NVIDIA Vera Rubin

TL;DR: SK hynix has started mass production of 192GB SOCAMM2 LPDDR5X memory modules using sixth-gen 10nm technology, offering over double the bandwidth and 75% better power efficiency than RDIMM. Designed for NVIDIA's Vera Rubin AI platform, these modules enhance performance, reduce bottlenecks, and improve thermal and space efficiency in AI servers. As per the headline, SK hynix has announced that it has begun mass production of 192GB SOCAMM2 memory modules, the company's next-gen standard based on the 1cnm process, or sixth-gen 10nm technology. This LPDDR5X low-power DRAM, a technology typically associated with low-power mobile devices, is poised to become a primary memory solution for next-gen AI servers. The reason for the move to SOCAMM2 modules is clear when you look at the benefits: more than double the bandwidth and a 75% improvement to power efficiency when compared to conventional RDIMM memory modules. SK hynix notes that this delivers an "optimized solution for high-performance AI operations." And when it comes to cutting-edge AI operations, SK hynix confirms that these 192GB SOCAMM2 memory modules and other SOCAMM2 products are designed for NVIDIA's upcoming Vera Rubin platform, where they will help mitigate memory bottlenecks during training and inference. SK hynix has been closely collaborating with NVIDIA on the development of its next-gen SOCAMM2 memory modules for this very purpose. And based on the form factor and size, there are also benefits to space utilization in large AI systems and servers, with improved thermal performance. According to the announcement, the company was able to stabilize mass production ahead of schedule, and will start supplying modules to NVIDIA at the end of the month. "By supplying the 192 GB SOCAMM2, SK hynix has established a new standard for AI memory performance," Justin Kim, President & Head of AI Infra (CMO, Chief Marketing Officer) at SK hynix said. 
"We will solidify our position as the most trusted AI memory solution provider, through close collaboration with our global AI customers."

[3]

SK Hynix Begins Mass Production of 192 GB SOCAMM2 Memory With 2x Bandwidth, A Vital Piece For NVIDIA's Vera Rubin

SK Hynix is now mass-producing SOCAMM2 memory with up to 192 GB capacities for NVIDIA and next-gen AI data centers. At CES 2026, SK Hynix announced that it had delivered its next-gen memory solutions, such as SOCAMM2, to NVIDIA for its upcoming AI data center solutions. Now, four months later, SK Hynix is announcing that it has begun mass production of the memory, and it will be utilized by NVIDIA's next-gen platform for AI. NVIDIA will be utilizing SOCAMM2 from all three memory manufacturers, SK Hynix, Samsung and Micron, for a diversified supply chain to keep up with demand as Vera Rubin takes center stage in the Agentic AI era. Press Release: SK hynix announced today that it has begun mass production of the 192GB SOCAMM2, a next-generation memory module standard based on the 1cnm process (sixth-generation of the 10-nanometer technology) LPDDR5X low-power DRAM. SK hynix emphasized that the 1cnm-based SOCAMM2 product that is now in mass production delivers more than double the bandwidth with over 75% improved power efficiency compared to conventional RDIMM, providing an optimized solution for high-performance AI operations. In particular, the company noted that its SOCAMM2 products are designed for the NVIDIA Vera Rubin platform. "By supplying the 192GB SOCAMM2, SK hynix has established a new standard for AI memory performance," Justin Kim, President & Head of AI Infra (CMO, Chief Marketing Officer) at SK hynix said. "We will solidify our position as the most trusted AI memory solution provider, through close collaboration with our global AI customers."
SK hynix expects the new SOCAMM2 product to fundamentally resolve the memory bottlenecks encountered during the training and inference of large language models (LLM) with hundreds of billions of parameters, thereby playing a pivotal role in dramatically accelerating the processing speed of the overall system. The company stated that with the AI market shifting focus from inference to training, SOCAMM2 is gaining significant attention as a next-generation memory solution capable of operating LLMs with low power consumption. To meet the demands of its global Cloud Service Provider (CSP) customers, SK hynix has not only been providing a supply portfolio, but also stabilized its mass production system early on. SOCAMM2 is a module that adapts low-power memory, which was previously used mainly in mobile products like smartphones, for server environments. It is designed to be a primary memory solution for next-generation AI servers. SOCAMM2 (Small Outline Compression Attached Memory Module 2): An AI server-optimized memory module based on LPDDR. It offers a slim form factor and high scalability, while its compression connector enhances signal integrity and allows for easy module replacement.

[4]

SK hynix begins mass production of 1c SOCAMM2 AI server chips - The Korea Times

SK hynix's small outline compression attached memory module 2 (SOCAMM2) 192GB / Courtesy of SK hynix. SK hynix said Monday it has begun mass production of its small outline compression attached memory module 2 (SOCAMM2) 192GB, a next‑generation chip for artificial intelligence (AI) servers produced with its sixth-generation 10‑nanometer‑class (1c) process. SOCAMM2 is a server memory module that leverages low-power double data rate (LPDDR) memory chips commonly used for smartphones, aimed at cutting power consumption to roughly one-third of conventional server modules. Designed specifically for AI servers, it uses a thin, high-density form factor, improving signal integrity and making it easier to swap or upgrade. SOCAMM2 is gaining attention among AI data center operators as power efficiency becomes increasingly important for managing total cost of ownership. While commonly used AI memory such as high-bandwidth memory (HBM) is mounted within the package of logic chips such as graphics processing units (GPUs) or central processing units (CPUs), SOCAMM2 is typically placed next to the logic chips on the system board. In this setup, HBM supports computing acceleration, while SOCAMM2 improves overall system-level power efficiency by complementing conventional DDR-based memory modules. Of note is that SK hynix uses the 1c process for manufacturing LPDDR5X memory for the SOCAMM2. In the road map spanning the 1a, 1b and 1c process generations, 1c is considered one of the most advanced nodes currently available, delivering both performance gains and improved power efficiency. Industry officials said DDR5 built on the 1c process is known to offer about 11 percent faster speeds and more than 9 percent better power efficiency compared with 1b-based DDR5. 
"With its 1c process, the SOCAMM2 delivers more than twice the bandwidth of conventional RDIMMs while improving energy efficiency by over 75 percent, making it a solution tuned for high-performance AI workloads," the company said. The company noted that the product has been optimized for Nvidia's Vera Rubin, a next-generation AI computing platform. SK hynix expects the new module to significantly ease memory bottlenecks, wherein data delivery falls behind GPU processing speeds, during the training and inference of large-scale AI models with hundreds of billions of parameters. Parameters are learned values that a model uses to analyze data and are widely regarded as an indicator of the model's scale or size. When SOCAMM2 is introduced, the memory hierarchy in AI servers will become a multi-tier structure consisting of HBM, SOCAMM, DDR5 memory module and CXL memory serving as expanded memory. "As the AI market shifts from training to inference, SOCAMM2 is gaining traction as a next-generation memory solution capable of running large language models with high energy efficiency," the company said, adding that it has stabilized mass production quickly to meet demand from global cloud service providers. "The company has set a new standard for AI memory performance with the launch of the 192GB SOCAMM2," said Kim Ju-seon, chief marketing officer of SK hynix. "Through close collaboration with global AI customers, we will strengthen our position as a trusted AI memory solutions provider."

SK hynix has started mass production of its 192GB SOCAMM2 memory module, which targets NVIDIA's Vera Rubin AI platform. Built on LPDDR5X technology and a 1c-class DRAM process, the module delivers more than double the bandwidth and over 75% better power efficiency than conventional RDIMM modules, addressing memory bottlenecks in AI server deployments.

SK hynix Launches Advanced Memory Solution for AI Infrastructure

SK hynix has begun mass production of its 192GB SOCAMM2 memory module, a significant development for AI server deployments as the industry shifts toward more efficient hardware architectures [1]. The new module is designed specifically for Vera Rubin, NVIDIA's next-generation AI computing platform, and reflects a shift in how memory is optimized for artificial intelligence workloads [2].

Source: Wccftech

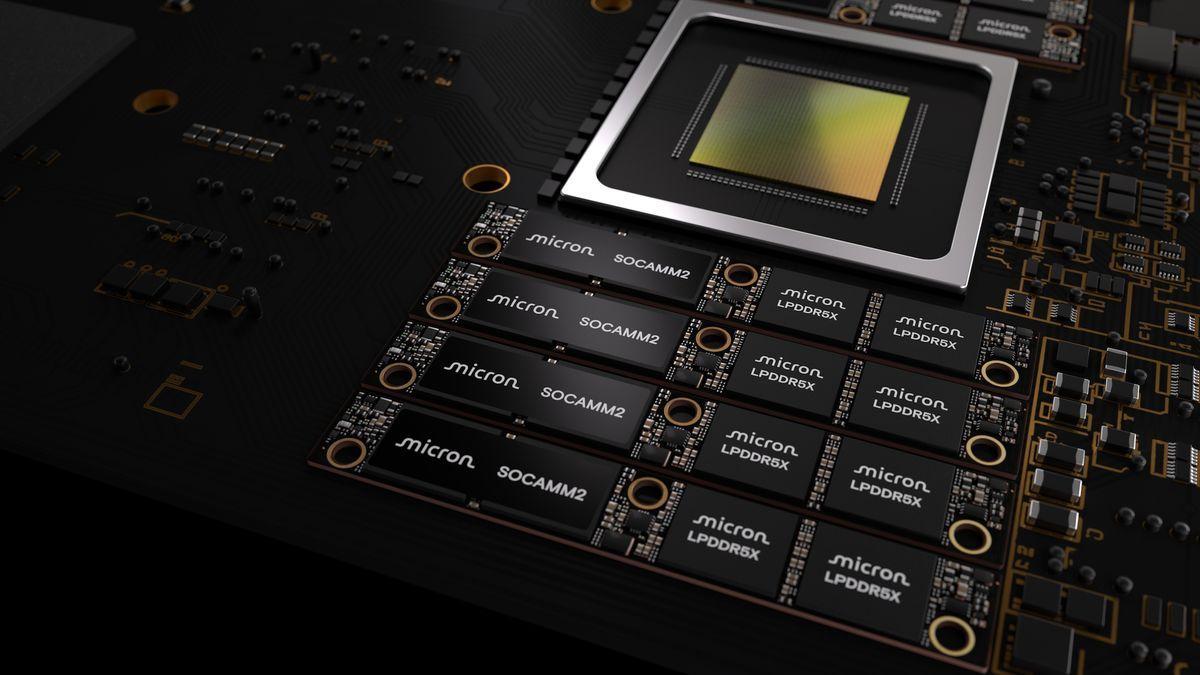

Built using LPDDR5X memory and manufactured with a 1c-class DRAM process (the sixth generation of 10nm-class technology), the module delivers performance gains that address critical bottlenecks in AI systems. According to SK hynix, the 192GB SOCAMM2 memory module provides more than double the bandwidth of conventional RDIMM modules while achieving over 75% improved power efficiency [3]. These improvements are particularly important for large language model training and inference involving hundreds of billions of parameters, where memory throughput often becomes a limiting factor.

Understanding the SOCAMM2 Architecture

The Small Outline Compression Attached Memory Module 2 represents a departure from traditional server memory designs. Unlike standard RDIMM configurations, SOCAMM2 employs a compact form factor combined with a compression-attached design that enhances signal integrity [1]. This compression connector not only stabilizes signal transmission but also enables easier module replacement and upgrades in data center environments [4].

The use of LPDDR technology, traditionally associated with low-power mobile DRAM in smartphones, reflects a broader industry trend toward optimizing performance per watt rather than pursuing raw speed alone. By adapting LPDDR for server environments, SK hynix addresses the thermal and space efficiency challenges facing next-generation AI data centers [2]. The slim profile and high-density design enable increased compute density without proportionally escalating power and cooling requirements.

Source: TweakTown

Addressing Memory Bottlenecks in AI Workloads

Memory bottlenecks, in which data delivery falls behind GPU processing speed, have emerged as a critical constraint in AI infrastructure as models scale up. SK hynix expects the new module to fundamentally resolve these bottlenecks during both training and inference of large language models [3]. The improved bandwidth speeds data movement between memory and processing units, while the improved energy efficiency helps control overall system power consumption, a growing concern as AI operations expand globally.

In typical AI server configurations, SOCAMM2 is positioned on the system board next to logic chips such as GPUs or CPUs, complementing high-bandwidth memory (HBM) that sits within the processor package. While HBM accelerates computing operations, SOCAMM2 improves system-level power efficiency by complementing conventional DDR-based memory modules [4]. This creates a multi-tier memory hierarchy consisting of HBM, SOCAMM2, DDR5 modules, and CXL memory serving as expanded memory.
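To give a rough sense of scale for "hundreds of billions of parameters" against a 192GB module, the following is a minimal back-of-the-envelope sketch, not a sizing method from SK hynix or NVIDIA. It assumes decimal gigabytes, counts model weights only (no activations, caches, or optimizer state), and uses illustrative parameter counts and precisions; the function names are hypothetical.

```python
import math

GB = 10**9  # assumption: decimal gigabytes


def weight_footprint_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed to hold the model weights only, in decimal GB."""
    return num_params * bytes_per_param / GB


def modules_needed(footprint_gb: float, module_gb: int = 192) -> int:
    """Smallest number of modules whose combined capacity covers the footprint."""
    return math.ceil(footprint_gb / module_gb)


if __name__ == "__main__":
    # Illustrative model sizes and weight precisions (assumptions, not vendor data).
    for params in (200e9, 400e9):
        for label, width in (("16-bit", 2), ("8-bit", 1)):
            size = weight_footprint_gb(params, width)
            count = modules_needed(size)
            print(f"{params / 1e9:.0f}B params, {label}: {size:.0f} GB, {count} module(s)")
```

Under these assumptions, a 400-billion-parameter model stored at 16 bits per weight needs about 800 GB for weights alone, which would span five 192GB modules. Real deployments split data across HBM, SOCAMM2, and other tiers, so this only illustrates the capacity arithmetic.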

Source: Korea Times

Strategic Partnership and Market Positioning

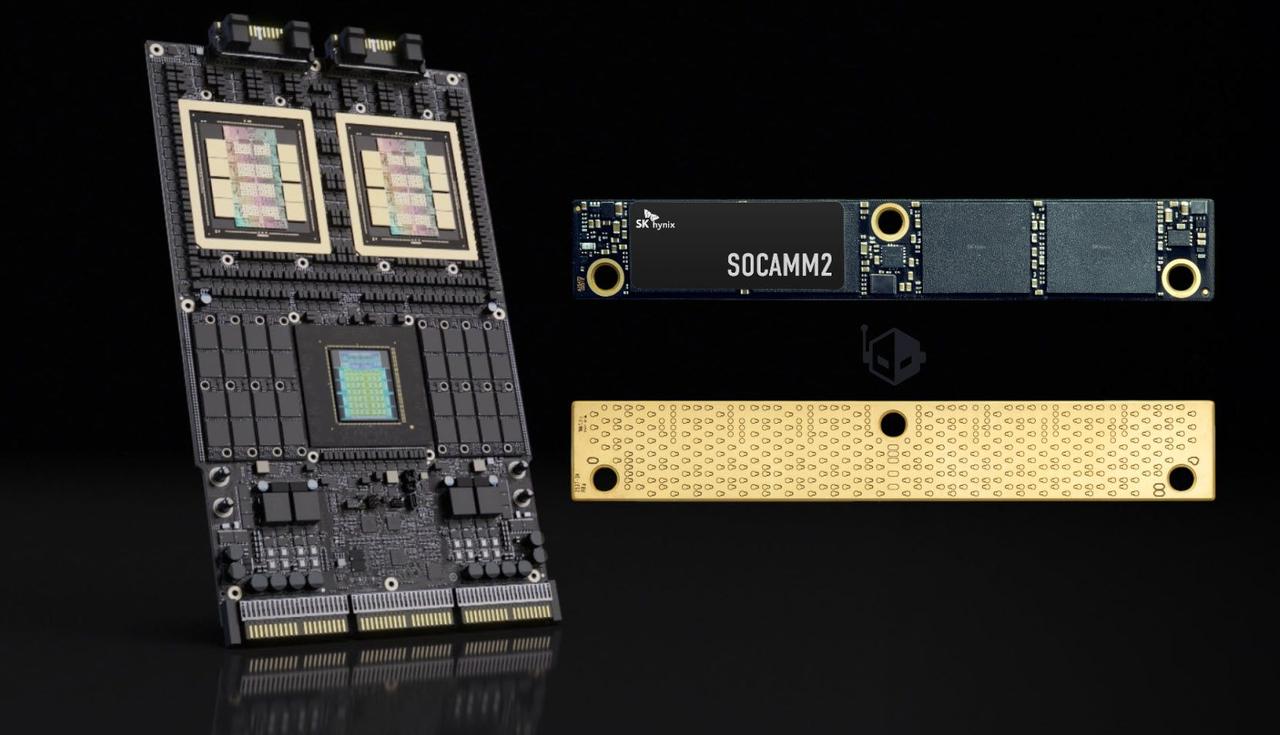

The collaboration between SK hynix and NVIDIA highlights the trend toward tightly integrated hardware ecosystems where memory, compute, and interconnect components work as unified systems. NVIDIA will source SOCAMM2 from all three major memory manufacturers (SK hynix, Samsung, and Micron) to maintain a diversified supply chain capable of meeting demand as Vera Rubin takes center stage in the agentic AI era [3].

Justin Kim, President and Head of AI Infra at SK hynix, stated: "By supplying the 192GB SOCAMM2, SK hynix has established a new standard for AI memory performance. We will solidify our position as the most trusted AI memory solution provider, through close collaboration with our global AI customers" [2]. The company has stabilized mass production ahead of schedule and will begin supplying modules to NVIDIA at the end of the month, a move it ties to demand from its cloud service provider customers.

As the AI market shifts from training to inference, SOCAMM2 is gaining attention as a solution capable of running large language models with substantially reduced power consumption. This positions SK hynix to compete for a larger share of the expanding AI memory market while addressing the dual challenges of performance and sustainability in data center operations.

Summarized by

Navi

Related Stories

Micron ships 256GB SOCAMM2 modules to customers, bringing 2TB AI memory capacity per CPU

03 Mar 2026•Technology

Nvidia Collaborates with Major Memory Makers on New SOCAMM Format for AI Servers

24 Mar 2025•Technology

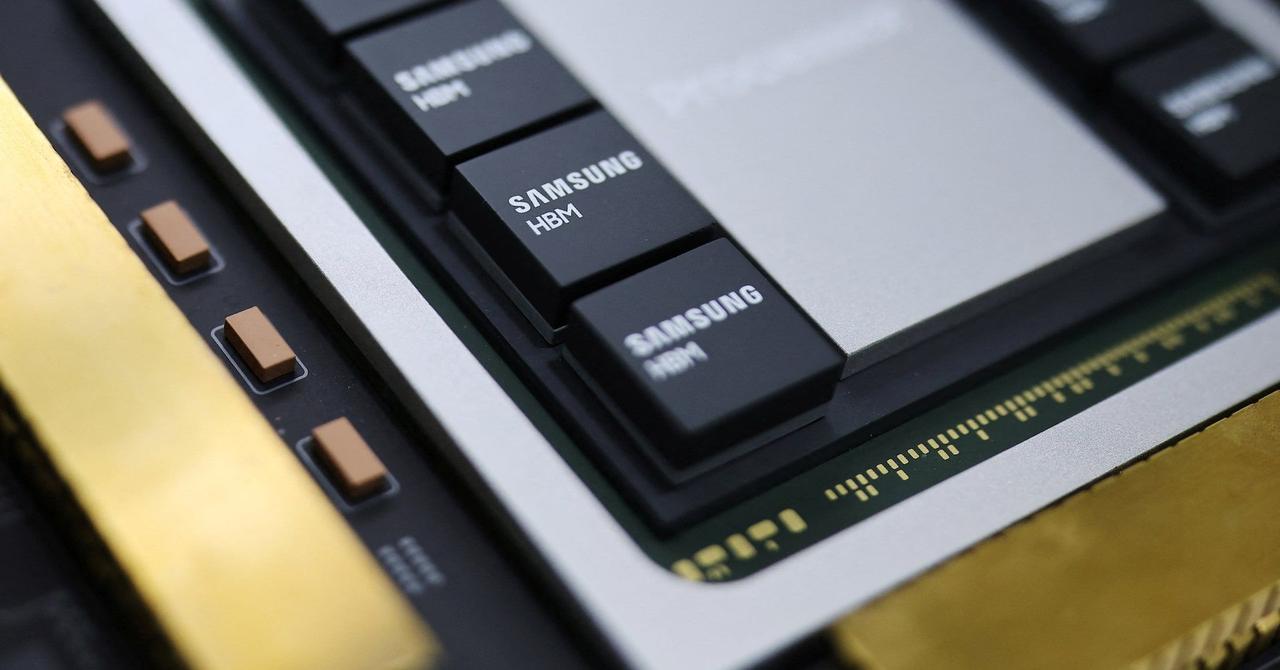

Samsung gains ground in HBM4 race as Nvidia production ignites AI memory battle with SK Hynix, Micron

02 Jan 2026•Technology

Recent Highlights

1

Anthropic overtakes OpenAI as most valuable AI startup with $965 billion valuation

Business and Economy

2

Pope Leo XIV releases major AI encyclical calling for 'disarmament' of artificial intelligence

Policy and Regulation

3

Apple's Siri overhaul for iOS 27 brings Gemini integration and standalone app to compete with ChatGPT

Technology