Sony AI's Ace robot defeats elite table tennis players and targets world championship

28 Sources

[1]

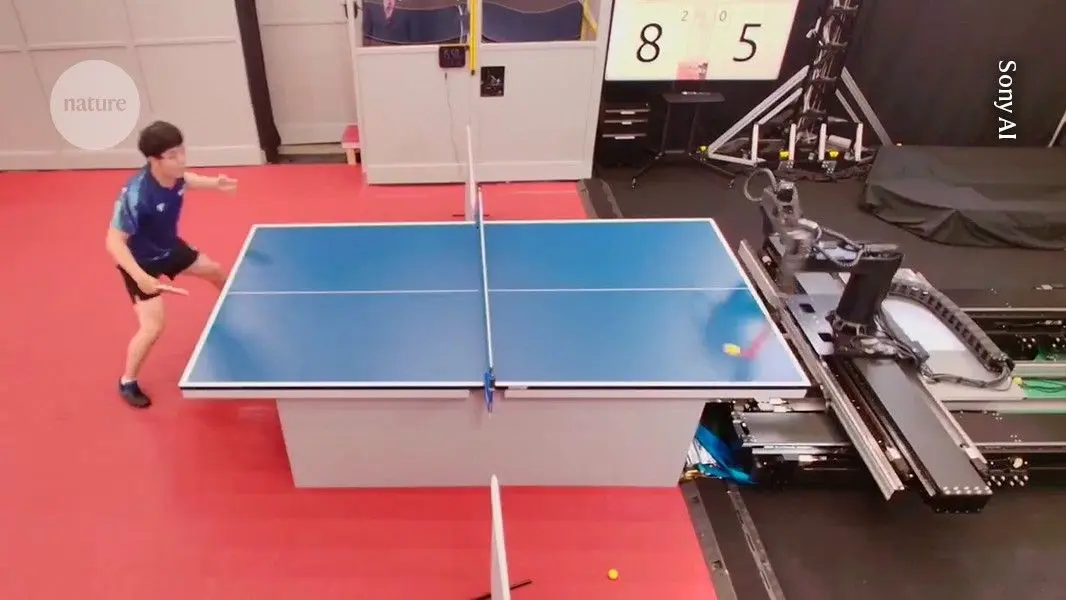

Robot can beat elite players at table tennis

Few sports require a more finely honed combination of speed, perception and skill than table tennis. Watching a professional game is a jaw-dropping experience that shows how a player can, through training and physical prowess, estimate ball speed and spin with astounding accuracy, and, by combining this with fast reflexes and agility, achieve the tactical gameplay and incredible pace the sport is known for. In a paper in Nature by Dürr et al., a new player comes to the fore: an artificial-intelligence agent that combines a robotic arm with an AI-based control system. The system, called Ace, can not only challenge professional players, but also provide valuable insights on human strategy and movement. Designing and making a robot that can play professional-standard table tennis is no simple endeavour: building artificial entities that can detect an environmental change, decide how to react and then implement that reaction at speeds that enable them to compete with humans is a challenge across many fields of engineering. One relevant example is car-racing simulations, a contest in which other agents perform the same or competing actions. In 2022, researchers at the multinational firm Sony AI reported an AI system called GT Sophy that could beat championship players at the racing simulation game Gran Turismo. But in the work by Dürr and colleagues, the battlefield for assessment wasn't a simulation but a real table-tennis table, complete with real rackets and ball. Ace comprised three modules: a high-speed perception system, a control system and a robotic arm. The perception system used conventional cameras to locate the ball and three 'gaze control systems' that estimated the rate at which it was spinning, known as its angular velocity. The direction and rate of a table-tennis ball's spin determines its trajectory -- a skilled player can give the ball a desired spin to deliver shots that are difficult for their opponent to return.
The perception system's cameras, which were all located outside the court, covered the entire playing area. This information was used by the AI-based control system to direct serves and returns during gameplay. The control system's decisions were enacted by the hardware of the robotic arm: a custom platform with eight independently controlled joints, designed to deliver shots in a manner comparable to those of professional players. Every device and capability that must be integrated into Ace adds complexity, but two aspects of the system deserve particular attention. First, there is the use of AI. The angular velocity of the ball was estimated using a type of algorithm called a convolutional neural network (CNN), which is typically used to classify images. Information on the position and speed of the ball was then passed to the control system, which was trained to play rallies using simulated table-tennis shots. In Dürr and colleagues' approach, which is an example of a process called 'deep reinforcement learning', the decision-making part of the algorithm, called the actor, was scored by another programme, called the critic. Through this process, the system learnt actions that enabled it not only to rally but to give its returning shots desired characteristics such as topspin. Finally, the authors used a genetic algorithm -- which finds the best solutions to a problem by mimicking biological evolution -- to develop a library of serves for the system to use. The second noteworthy aspect of the AI-based system was the role of humans in its development. In table tennis, players serve by tossing the ball up before striking it with the racket. Ace's tosses were based on human demonstrations, adapted to the robot's motional features so that the final serve adhered to the official rules of the game. Expert players informed the genetic algorithm, determining which of the possible serve strategies were challenging enough to be used. 
If, during training with a human coach, a particular serve succeeded at least 95% of the time across 20 attempts, it became part of the robot's serve set. Ace played against five elite and two professional players (Fig. 1), beating three of the elite players. It lost to both professional players, winning one game out of seven in the matches played against them. Ace's performance was mainly due to its ability to generate different kinds of spin and its consistency in returning the ball, rather than the use of faster-than-human shots. This is noteworthy, because it might have been expected that specialized machines capable of generating extremely high speeds would rely predominantly on power. The authors report that Kinjiro Nakamura, a table-tennis player who competed in the 1992 Barcelona Olympics, commented as he watched Ace perform a particular shot: "No one else would have been able to do that. I didn't think it was possible. But the fact that it was possible ... means that there is a possibility that a human could do it too." Overall, the authors report a successful implementation of a fast-acting AI-based system that operates in a real environment. It must be stressed, however, that Ace relied on guidance and assessment from humans who understood the situations and interactions involved in table tennis. Nevertheless, it is remarkable that human specialists such as Nakamura might learn new skills just by playing against and observing Ace, suggesting that AI-controlled robotic systems could be an arena for human development beyond table tennis. In 1997, the chess-playing system Deep Blue defeated world champion Garry Kasparov in a six-game match. Ace has yet to reach the equivalent level of performance, and even if it were capable of beating a world-champion table-tennis player, the system is far from being humanoid. For example, unlike human players, it observes the game from multiple points at once. 
AI-based chess engines can now be run using a mobile phone rather than the specialized computer required for Deep Blue -- as autonomous systems become more advanced, Ace might also one day become outdated. Nevertheless, like Deep Blue, Ace is an important milestone, showcasing the potential of the next generation of high-quality, competitive agents that interact with physical environments.
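The serve-selection rule described in this article (a serve joins the robot's set if it succeeds at least 95% of the time across 20 attempts) works out to at least 19 successes out of 20. The short Python sketch below only illustrates that arithmetic. The function names and the integer-percentage interface are assumptions made for this example and are not taken from the paper.

```python
def min_successes(attempts: int, required_percent: int) -> int:
    """Smallest success count that meets the required rate (ceiling division)."""
    return -(-attempts * required_percent // 100)


def serve_accepted(successes: int, attempts: int = 20, required_percent: int = 95) -> bool:
    """Return True if a serve meets the acceptance threshold.

    Defaults mirror the figures quoted in the article: 95% across 20 attempts.
    """
    if not 0 <= successes <= attempts:
        raise ValueError("successes must be between 0 and attempts")
    return successes >= min_successes(attempts, required_percent)


if __name__ == "__main__":
    for successes in (18, 19, 20):
        print(successes, serve_accepted(successes))
```

Using integer percentages avoids floating-point rounding at the threshold, which matters when a single missed serve decides the outcome.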

[2]

Ace the Ping-Pong Robot Can Whup Your Ass

Ace is a robot that aims high: It wants to become the world champion of table tennis. It was developed by Sony AI researchers who, in a new study published in Nature, have shown how this robot, equipped with artificial intelligence, has faced some high-level athletes, holding its own in matches played according to the official rules of table tennis. This feat represents a milestone for the world of robotics, a field that has long regarded this sport, among the most technical in the world, as one of the most difficult tests of technological advances. We have already seen artificial intelligence systems win virtual competitions in games such as chess, Go, and even StarCraft II, but physical games are much more difficult to master. A robot needs to sense unpredictable changes in the external environment, interpret their meaning, decide how to react, and then perform the necessary action, all in a very short time. That is precisely what Ace, a very complex robot composed of three main parts, has managed to do. It is equipped with a perception system that allows it to detect the rotation of the ball, which can change its bounce and trajectory in the air, and an artificial intelligence system that can make decisions in real time. Finally, it has high-speed robotic hardware: an eight-jointed, extremely agile robotic arm that can make precise and quick decisions about where and how to place the racket. To put Ace to the test, the researchers had the robot compete with five high-level amateur players, resulting in three wins out of five matches. Against two professional players from the Japanese league, Minami Ando and Kakeru Sone, however, Ace's skills weren't as effective, winning only one out of seven games. Subsequent analysis of Ace's matches showed that the robot gained points not so much by hitting harder, but by its control ability, successfully returning 75 percent of the balls.
"This research has shown that an autonomous robot can actually win in a sports competition, equaling or exceeding the reaction time and decisionmaking ability of humans in a physical space," says Peter Dürr, director of Sony AI and project leader on Ace, in a press release. Ace's performance represents a breakthrough in the field of robotics. It is a system that combines high-speed sensing, artificial intelligence for decisionmaking, and robotic control to compete with human players in real-world conditions, making extremely fast reactions in real time. "Table tennis is a game of enormous complexity that requires split-second decisions as well as speed and power," Dürr adds. Robots like Ace could offer a way to learn new techniques and skills to improve player performance in many other fields.

[3]

Table tennis-playing robot on track to becoming world champion

A robot built by Sony AI is rapidly learning how to beat the world's very best table tennis players

Ace, an autonomous robot powered by AI, cutting-edge sensors and an extremely dexterous arm with eight joints, has played competition-rule table tennis and beaten elite human competitors. The robot is the first machine to excel at the sport. It was the cerebral game of chess that was first disrupted by computers, but Ace's success suggests physical sports may be about to have their "Deep Blue" moment - the day, in 1997, that a machine of that name beat world chess champion Garry Kasparov. "Games have long served as benchmarks for AI, including chess for Deep Blue, but also other games in more recent breakthroughs, like [the Go-playing AI] AlphaGo," says Peter Dürr at Sony AI in Zurich, Switzerland, who led the team that built Ace. But he says those earlier AI milestones were played out online. Ace represents an important advance because it has taken on real-world, professional table tennis champions and held its own. "Ace offers something that has simply never been captured before: a robot and a human in genuine athletic competition," says Dürr. Ace boasts three main advancements in autonomous robotics, he says. Firstly, it uses "event-based sensors", which means that the robot focuses on certain regions of the images its cameras capture - those indicating changes in motion or brightness, which are critical to tracking the path of the table tennis ball. Next, the robot's table tennis skills are built using "model-free reinforcement learning", which means, says Dürr, the robot "learns through experience in simulation rather than adopting a model of how table tennis should be played". This process was similar to having the robot play a table tennis computer game, and the robot notched up several thousand hours of training during the process. And finally, the team has deployed high-speed robot hardware that allows Ace to play with "human-like agility", says Dürr.
In some ways, it is even more agile than a human, because athletes require around 230 milliseconds to react, he says, whereas the total latency of Ace is only around 20 milliseconds. Currently, the robot looks like a robot from a factory floor, and relies on a network of cameras and sensors surrounding the table tennis arena. But as the technology advances, the researchers expect Ace will eventually be embodied in a humanoid form. For the matches played as part of a study published today, Japanese professional table tennis league rules applied as Ace competed against five elite but non-professional players, each of whom had competed for at least a decade and trained 20 hours per week. The robot also took on two professionals. Ace lost only two of its five matches against elite players, but both of its matches against professional players. It did, however, achieve a win in one game within one of the professional matches. Another advantage that Ace has over humans is that it does not give away any tells of its next move. On the other hand, it lacks the capacity to read any signs of the body language of humans. "Some of the athletes involved in our experiments commented that they are usually watching their opponent's face - which Ace does not have," says Dürr. Others were surprised by Ace's ability to read the spin of their serves, despite their attempts to hide it with different motions. The robot also confounded its inventors - especially when it was able to hit balls that bounced off the net, which was not a skill it had trained for. This was a skill that just "emerged", says Dürr. Over the past year, since the study was completed, the team has continued to improve Ace's abilities. 
In December 2025, Ace beat a professional player for the first time, and in March 2026, Ace won matches against three more professional players: a female professional, Miyuu Kihara, who is ranked in the top 25 in the World Table Tennis ranking, as well as two male professionals, Tonin Ryuzaki and Fumiya Igarashi. "With further improvements, it should be possible to outperform even the world champion," says Dürr. And improvements go both ways, he says. "Former Olympian Kinjiro Nakamura noted that before watching Ace, he thought a certain shot was impossible, but having seen it, he believes human athletes could replicate this technique."
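To put the latency figures quoted in this article in perspective (around 230 milliseconds for athletes and around 20 milliseconds for Ace), the sketch below computes how far a ball travels while each player is still reacting. The ball speed of 20 m/s is an illustrative assumption and does not come from this article. The 2.74 m figure is the regulation length of a table tennis table. All names are hypothetical.

```python
HUMAN_REACTION_MS = 230   # approximate athlete reaction time quoted in the article
ACE_LATENCY_MS = 20       # approximate total latency of Ace quoted in the article
TABLE_LENGTH_M = 2.74     # regulation table length
ASSUMED_BALL_SPEED_M_S = 20.0  # assumption for illustration only


def distance_during_latency(speed_m_s: float, latency_ms: float) -> float:
    """Distance in metres a ball covers at constant speed during a latency."""
    return speed_m_s * latency_ms / 1000.0


def table_lengths(distance_m: float) -> float:
    """Express a distance as a multiple of the table length."""
    return distance_m / TABLE_LENGTH_M


if __name__ == "__main__":
    for label, latency in (("human", HUMAN_REACTION_MS), ("Ace", ACE_LATENCY_MS)):
        d = distance_during_latency(ASSUMED_BALL_SPEED_M_S, latency)
        print(f"{label}: {d:.2f} m, {table_lengths(d):.2f} table lengths")
```

Under that assumed speed, the ball covers roughly 4.6 m during a 230 ms reaction and roughly 0.4 m during a 20 ms one. The calculation ignores drag, spin and the bounce, so it is only a rough comparison.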

[4]

Sony's New AI Robot Can Probably Beat You in Table Tennis

On Wednesday, Sony announced its project Ace, an autonomous robot capable of competing with professional table tennis players. Sony says this breakthrough demonstrates that AI systems have achieved human-like, expert-level performance in a competitive sport in the physical world. While the world is currently focused on the agentic AI craze -- allowing AI agents to perform tasks on your behalf -- physical AI is right on its heels. Having a humanoid robot fold your laundry is just the beginning, and Sony's announcement shows that physical AI could outperform humans in some instances. "Table tennis is a game of enormous complexity that requires split-second decisions as well as speed and power," said Peter Dürr, director of Sony AI and project lead for Ace, in the press release. Ace uses advanced sensory technology, reinforcement learning and precision hardware to play table tennis. The robot has nine active pixel-sensor cameras, so it can determine the ball's position in 3D space. There are also additional cameras and systems to help it measure the ball's velocity and spin. Outside of the optics portion, Ace also has a control system based on model-free reinforcement learning that enables it to adapt and make decisions without a preprogrammed model. Combine this with high-speed robotic hardware to play the game, and the robot is as much a piece of art as it is hardware. Ace was tested against five elite and two professional-level table tennis players, and it won three of the five matches against the elite players overall. It also scored sixteen direct points while serving versus the elite players' eight. Ace is more than a proof of concept: it is a glimpse into the future of physical AI.
"Once AI can operate at an expert human level under these conditions, it opens the door to an entirely new class of real-world applications that were previously out of reach," said Peter Stone, chief scientist at Sony AI, in the company's press release. Sony did not immediately respond to a request for further comment.

[5]

Watch Sony's elite ping-pong robot beat top-ranked players

Humans have been building ping-pong playing robots for decades, such as Omron's FORPHEUS that challenged amateur competitors at CES 2017. What sets Ace apart from the rest is that the robot, which was developed by Sony's AI division, is the first that can hold its own against top-ranked human players and occasionally even beat them in matches that follow the official rules of the International Table Tennis Federation (ITTF). AI is already capable of besting humans at games like chess and Go, but physical games pose a much greater challenge as robots have to be engineered to match the speed and responsiveness of the human mind and body. To be competitive at table tennis, a particularly difficult game with a ball moving at a high speed and spin that can alter its trajectory, Sony's researchers developed a robotic system with eight joints. Three joints control the paddle's position, two adjust its overall orientation, and the other three enable the robot to deliver powerful shots.

[6]

Table tennis robot defeats some of world's best players - why this has major implications for robotics

A table tennis robot has outperformed elite players in recent evaluations. The robot, called Ace, marks a significant step toward artificial intelligence (AI) systems that can operate in fast, uncertain, real-world environments. In the tests, the autonomous robot won three out of five matches against elite players - competitive athletes with over ten years' experience and an average of 20 hours weekly training. The robot, developed by Sony AI, lost both matches against players in professional Japanese leagues, but did win a game against one of them. The system is described in detail in a recent paper published in Nature. AI has spent decades mastering games. It has repeatedly outperformed the best humans in everything from complex video games like StarCraft II to chess - where modern programs now far exceed human ratings. Landmark systems such as Deep Blue and AlphaGo have confirmed that, given clear rules and enough data, AI can achieve superhuman performance. But these victories all shared one key feature: they happened in controlled, digital environments. At first glance, table tennis might seem like an unusual benchmark for artificial intelligence. In reality, it is one of the most demanding imaginable. The ball can travel faster than 20 metres per second, giving players less than half a second to react. On top of that, spin introduces enormous complexity. A ball rotating at extreme speeds can curve mid-air and rebound unpredictably off the table. For humans, interpreting spin is largely intuitive. For robots, it has been a longstanding obstacle. Earlier table tennis robotic systems such as Forpheus, developed by Japanese company Omron, addressed this by simplifying the game - using controlled ball launchers, limiting movement, or ignoring spin altogether. More recent iterations have aimed for interaction, but still operate under constrained conditions. Ace does none of this. 
It plays with standard equipment, on a regulation table, against human opponents who are free to use the full range of shots.

How Ace works

Ace's performance relies on three key innovations: how it sees the world, how it decides what to do, and how it carries out those actions. First, let's deal with how Ace sees the world. Traditional cameras struggle with fast motion, often producing a blur or missing critical details. Ace instead uses three "event-based" vision sensors, which detect changes in light rather than capturing full images at fixed intervals. These are complemented by nine high-speed cameras that track the environment, including the opponent and their racket. Together, these systems enable high-speed gaze control (the technology that enables a robot to direct its sensors to focus on specific things) and allow the robot to follow the ball with exceptional real-time precision. Professional players can generate spin approaching 9,000 revolutions per minute (rpm). By tracking markings on the ball, the system can estimate spin in real time, something that has long challenged robotic systems. The second important innovation is how Ace decides what to do. Knowing where the ball is going is only half the problem; the robot must also respond instantly. Ace uses deep reinforcement learning, trained in simulation over millions of virtual rallies, including self-play. It continuously generates movement commands for its multi-jointed robotic arm, recalculating trajectories every few tens of milliseconds while avoiding collisions with the table or itself. The third innovation is how Ace carries out its actions. To match the speed of human elite players, the robot is built around a high-performance arm combining two prismatic (sliding) and six revolute (rotational) joints. This enables rapid sideways motion and precise striking. There is both a table tennis racket and a mechanism for ball handling, allowing one-armed serves.
Crucially, the system is engineered for high-speed interaction: lightweight structures and optimised actuation (the mechanisms in a robot that convert energy into mechanical force) allow Ace to return balls at speeds approaching 20 metres per second. This enables sustained, competitive rallies with skilled human players. What makes this particularly notable is the transition from simulation to reality. Many AI systems perform well in virtual environments but fail when exposed to real-world noise and uncertainty. Ace demonstrates that this "sim-to-real" gap can be meaningfully reduced. One moment during a rally with an elite player illustrates the way that Ace has leapt over this gap. When a predicted ball trajectory suddenly changed after clipping the net, Ace reacted almost instantly, returning the shot and winning the point. What makes Ace particularly significant is therefore not just its performance, but its ability to operate reliably under real-world uncertainty.

Why this matters beyond sport

A robot returning high-speed topspin shots may be entertaining, but the implications go far beyond table tennis. In manufacturing, for example, robots are typically confined to highly structured tasks. The real challenge is adaptability: handling irregular objects and responding to variation. This is particularly relevant for next-generation robots operating in unstructured environments. To function effectively in homes, hospitals or construction sites, robots must be able to predict, adapt and respond to constantly changing conditions. The same predictive and control capabilities that allow Ace to respond to unpredictable shots could enable more flexible, responsive automation. There are also implications for human-robot interaction. Most industrial robots are kept behind safety barriers because they cannot react quickly or reliably enough to unexpected human behaviour.
Ace operates at the edge of human reaction time, suggesting a future where robots can safely collaborate with people in shared spaces. More broadly, this work represents a shift in what AI is expected to do. The next frontier is not just intelligence in abstract problem-solving, but intelligence embedded in the physical world. The gap between simulations and reality needs filling, and this is a big step forward.

What humans still do better

Professional players were still able to exploit Ace's limitations - particularly in reach, speed, and the ability to handle extreme or highly deceptive shots. This highlights that intelligence is not just about prediction and control, but also about physical embodiment. Humans combine perception, movement and strategy in ways that remain difficult to replicate. Interestingly, systems like Ace may end up enhancing human performance rather than replacing it. As one former Olympic player observed after facing the robot, seeing it return seemingly impossible shots suggests humans might be capable of more than previously thought.
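The idea behind the "event-based" vision sensors described in this article, which report changes in light rather than full images, can be illustrated with a toy Python sketch. It compares two small brightness grids and emits an event only where the log-brightness change crosses a threshold. This is a simplified frame-differencing illustration and not Ace's actual pipeline: real event sensors respond per pixel and asynchronously, and the function name, threshold value and data layout here are all assumptions.

```python
import math
from typing import List, Sequence, Tuple

Event = Tuple[int, int, int]  # (row, column, polarity), polarity is +1 or -1


def events_between(
    prev: Sequence[Sequence[float]],
    curr: Sequence[Sequence[float]],
    threshold: float = 0.2,
) -> List[Event]:
    """Emit an event for each pixel whose log-brightness changed enough.

    Unchanged pixels produce nothing, so a static scene yields no data.
    """
    events: List[Event] = []
    for r, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for c, (old, new) in enumerate(zip(prev_row, curr_row)):
            # Offset by 1.0 so a brightness of zero does not break the log.
            delta = math.log(new + 1.0) - math.log(old + 1.0)
            if delta >= threshold:
                events.append((r, c, 1))    # pixel became brighter
            elif delta <= -threshold:
                events.append((r, c, -1))   # pixel became darker
    return events


if __name__ == "__main__":
    before = [[10, 10, 10], [10, 10, 10]]
    after = [[10, 200, 10], [10, 10, 0]]
    print(events_between(before, after))
```

In this toy example only the two changed pixels generate output, which hints at why such sensors suit a small, fast-moving ball against a mostly static background.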

[7]

Robotic arm powered by AI bats away ping-pong challenge

Sony project claims a significant breakthrough with applications in tasks requiring speed and accuracy

The ancient games of chess and Go are now mere staging posts in the journey toward robots demonstrating their superior performance to humans - the machines can now beat us fleshbags at ping-pong. Capering about with a small bat smacking a tiny air-filled plastic ball to-and-fro across a six-inch net requires a bit of athletic skill, but table tennis fans can't bank on being able to beat the machine anymore. A paper in Nature this week shows an AI-based robotic system can outperform elite table tennis players. Developed by Sony AI, the system it calls Ace shows the capacity for robots and AI to achieve complex, real-time interactive tasks which might have broader applications. "The system can not only challenge professional players, but also provide valuable insights on human strategy and movement," according to an accompanying article describing the work. During amateur play, a table tennis ball might travel at about 96 kph (60 mph) across the table. With professional players, that can rise to as high as 150 kph (93 mph) during a smash. When players apply spin, it changes the ball's trajectory as the Magnus effect distributes airflow asymmetrically over the ball, and also as it bounces off the table. The authors of the accompanying article, AI and engineering professors based in Brazil, point out that designing and building a system able to play such a fast-moving sport requires engineers to design-in features which can detect an environmental change, decide how to react and then implement that reaction at speeds that enable them to compete with humans. The challenge cuts across many fields of engineering, they said. Ace is built from three modules: a high-speed perception system, a control system and a robotic arm.
"The perception system used conventional cameras to locate the ball and three 'gaze control systems' that estimated the rate at which it was spinning, known as its angular velocity. The direction and rate of a table-tennis ball's spin determines its trajectory -- a skilled player can give the ball a desired spin to deliver shots that are difficult for their opponent to return," the accompanying news article explained. The work was led by Peter Dürr, director of Sony AI in Zürich. Also involved was his colleague Peter Stone, chief scientist at Sony AI, who said the research represented "a landmark moment in AI research." It demonstrated that an AI system can perceive, reason, and act effectively in complex, rapidly changing real-world environments that demand precision and speed. "Once AI can operate at an expert human level under these conditions, it opens the door to an entirely new class of real-world applications that were previously out of reach," Stone said.

[8]

Ping Pong Robot Uses Agentic AI to Beat Expert Human Players

Scientists at Sony AI have developed a table tennis robot with enough speed and precision to beat even some expert ping pong players in the latest matchup between biological and artificial intelligence. The robot, dubbed "Ace," combines vision sensors, model-free reinforcement learning and high-speed robotic hardware in a crane-like lever with a ping pong bat attached. The resulting system can autonomously locate a ping pong ball in space, determine the correct technique needed to return it to an opponent's side and repeat the process until a play is over. Robots like Ace are known as AI agents, systems that can reason and take action to solve multi-step problems with limited human supervision. Researchers at Sony AI, a subsidiary of Sony Group Corp., tested Ace in a series of games against highly ranked table tennis players. Without any prior knowledge of the opponents' style of play, the robot won seven out of 13 games against five different elite athletes, defined as those with more than 10 years of intensive training. Against two professional players in officially recognized leagues, Ace won one of seven games. Table tennis requires precise movements, split-second decisions and powerful reactions, making it a case study for how AI systems interact in complex physical situations. While previous robots have been able to hit a ping pong ball back and forth, none have surpassed an amateur level.
The new research, published in Nature, marks the first time a robot has been competitive with experienced players. Scientists have long tried to design robots that can compete against humans in sports, with the aim of developing one with the physical skills required to interact in real-time with humans. On April 20, a Chinese humanoid robot completed a half marathon against human racers, winning the competition with a time about seven minutes faster than the men's world record. Electric vehicle maker Tesla Inc. has also pivoted to developing humanoid robots that can perform repetitive or dangerous tasks for humans. The research shows "that an AI system can perceive, reason, and act effectively in complex, rapidly changing real-world environments that demand precision and speed," said Peter Stone, chief scientist at Sony AI, in a statement. "Once AI can operate at an expert human level under these conditions, it opens the door to an entirely new class of real-world applications that were previously out of reach."

[9]

AI robot outplays humans in table tennis milestone

An AI-powered robot has beaten expert table tennis players in a landmark machine-over-human triumph in a major competitive sport. The mechanical maestro, known as Ace, uses a network of cameras and AI to achieve the rapid planning and reaction times needed to compete. The invention made by Japanese tech group Sony highlights how researchers are using AI to improve robots' ability to adapt to physical tasks they have struggled with, particularly those involving people. It follows a race in China at the weekend in which some automated robots outperformed runners, a dramatic improvement on last year. "Table tennis is a game of enormous complexity that requires split-second decisions as well as speed and power," said Peter Dürr, director of Sony AI in Zurich, who led the Ace project. "This research breakthrough highlights the potential of physical AI agents to perform real-time interactive tasks, and represents a significant step toward creating robots with broader applications in fast, precise and real-time human interactions." Ace beat three out of five elite table tennis players who had more than ten years of training, and scored 48 points versus 70 in two defeats to professionals, according to a paper published in Nature on Wednesday. The robot had improved further, Sony said: since the paper's submission, it had played four further matches against humans, beating two elite players and winning one out of two matches against professionals. The robot handled spin and unexpected changes in trajectory caused by the ball clipping the net on its way over, the researchers said. It outscored the elite players in aces -- points won directly from serve -- by 16 to eight. The results show how robots are starting to challenge human sporting capabilities after demonstrating superiority in cognitive and screen-based pursuits such as chess, Go and video games. 
In a half-marathon in Beijing on Sunday, a robot developed by the smartphone company Honor finished in 50 minutes and 26 seconds -- almost seven minutes faster than the men's world record. Researchers not involved in the Ace project hailed it as a milestone but noted the complexity of the visual inputs needed to track the ball's location and velocity. The system included nine cameras and three so-called gaze-control systems, positioned around the table. A big hurdle that remained for robot interactions with humans was how machines behaved when "information is incomplete, situations are ambiguous, and mistakes have serious consequences", said Johannes Köhler, an assistant professor in Imperial College London's Department of Mechanical Engineering. "Those challenges are largely not present here: the robot can see everything it needs to see, the task is highly structured, physical interaction is minimal, and there is little inherent danger," he said. "As a result, while the work is technically impressive, I am not convinced it addresses the core safety and uncertainty issues that currently limit autonomous robots operating around people."

[10]

Ping-pong robot Ace makes history by beating top-level human players

April 22 (Reuters) - An autonomous robot ping-pong player dubbed Ace has achieved a milestone for AI and robotics in Tokyo by competing against and sometimes defeating top-level human players at table tennis, a feat that could presage an array of other applications for similarly adept robots. Ace, created by the Japanese company Sony's (6758.T) AI research division, is the first robot to attain expert-level performance in a competitive physical sport, one that requires rapid decisions and precision execution, the project's leader said. Ace did so by employing high-speed perception, AI-based control and a state-of-the-art robotic system. There have been various ping-pong-playing robots since 1983, but until now they were unable to rival highly skilled human competitors. Ace changed that with its performances against human elite-level and professional players in matches following the rules of the International Table Tennis Federation, the sport's governing body, and officiated by licensed umpires. "Unlike computer games, where prior AI systems surpass human experts, physical and real-time sports such as table tennis remain a major open challenge due to their requirements for fast, precise and adversarial interactions near obstacles and at the edge of human reaction time," said Peter Dürr, director of Sony AI Zurich and leader for Sony AI's project Ace. The project's goal was not only to compete at table tennis but to develop insights into how robots can perceive, plan and act with human-like speed and precision in dynamic environments, Dürr said.
"The success of Ace, with its perception system and learning-based control algorithm, suggests that similar techniques could be applied to other areas requiring fast, real-time control and human interaction - such as manufacturing and service robotics, as well as applications across sports, entertainment and safety-critical physical domains," said Dürr, lead author of a study describing Ace's achievements published on Wednesday in the journal Nature. In matches detailed in the study, Ace in April 2025 won three out of five versus elite players and lost two matches against professional players, the top skill level in the sport. Sony AI said that since then Ace beat professional players in December 2025 and last month. Companies worldwide are making advances with robots. On Sunday, for instance, robots outran human runners in a half-marathon race in Beijing.

'A BLUR TO THE HUMAN EYE'

AI systems already have excelled in digital domains in strategy games such as chess and Go and at complex video games. While video games take place in simulated environments, table tennis requires rapid decision-making, precise physical execution and continuous adaptation to an unpredictable opponent, Dürr said. The ball moves at high speeds with complex spins and trajectories, pushing humans and robots to operate at the limits of sensing, prediction and motor control, Dürr said. Ace's architecture integrates nine synchronized cameras and three vision systems to track a spinning ball with exceptional accuracy and speedy processing time. "This is fast enough to capture motion that would be a blur to the human eye," Dürr said. The researchers developed a custom robot platform featuring eight joints. This was, Dürr said, the minimum number necessary to execute competitive shots: three for the racket's position, two for its orientation and three for the shot's speed and strength.
Mayuka Taira, a professional table tennis player who lost a match to Ace last December, said in comments provided by Sony AI that the robot's strengths "are that it is very hard to predict, and it shows no emotion." "Because you can't read its reactions, it's impossible to sense what kind of shots it dislikes or struggles with, and that makes it even more difficult to play against," Taira said. Rui Takenaka, an elite-level player who has won and lost matches against Ace, said in comments provided by Sony AI: "When it came to my serve, if I used a serve with complex spin, Ace also returned the ball with complex spin, which made it difficult for me. But when I used a simple serve - what we call a knuckle serve - Ace returned a simpler ball. That made it easier for me to attack on the third shot, and I think that was the key reason why I was able to win." Ace has room for improvement, Dürr said. "Ace has a superhuman ability to read the spin of incoming balls, and superhuman reaction time. As it learns to play not from watching humans play, but is trained by itself in simulation, it also reacts differently from human players and creates surprising situations," Dürr said. "At the same time, professional human athletes are very good at adapting to their opponent and finding weaknesses, which is an area that we are working on."

Reporting by Will Dunham in Washington; Editing by Daniel Wallis

[11]

A robot is beating human pros at table tennis. Its maker calls it a milestone for machines

A paddle-wielding robot is so adept at playing table tennis that it is posing a tough challenge to elite human players and sometimes defeating them, according to a new study that shows how advances in artificial intelligence are making robots more agile. Japanese electronics giant Sony built the robotic arm it calls Ace and pitted it against professional athletes. Ace proved a worthy adversary, though one with some non-human attributes: nine camera eyes positioned around the court and an uncanny ability to follow the ball's logo to measure its spin. The robot learned how to play the sport using the AI method known as reinforcement learning. "There's no way to program a robot by hand to play table tennis. You have to learn how to play from experience," said Sony AI researcher Peter Dürr, co-author of the study published Wednesday in the science journal Nature. To conduct the experiments, Sony built an Olympic-sized table tennis court at its headquarters in Tokyo to give professional and other highly skilled athletes a "level playing field" with the robot, Dürr said in an interview with The Associated Press. Some of the athletes said they were surprised by Ace's prowess. Sony says it is the "first time a robot has achieved human, expert-level play in a commonly played competitive sport in the physical world -- a longstanding milestone for AI and robotics research." The custom-built robot has eight joints that direct its movements, or degrees of freedom, enabling it to position the racket, execute shots and swiftly respond to its opponent's rallies. "Speed is really one of the fundamental issues in robotics today, especially in scenarios or environments that are not fixed," said Michael Spranger, president of Sony AI, in an interview. "We see a lot of robots that are in factories that are very, very fast," Spranger said. "But they're doing the same trajectory over and over again. 
With this technology, we show that it's actually possible to train robots to be very adaptive and competitive and fast in uncertain environments that constantly change." Spranger said such technology could play a role in manufacturing and other industries. It's also not hard to imagine how such high-speed and highly perceptive hardware could be used in war. A humanoid robot ran faster than the human world record in a half-marathon race for robots in Beijing on Sunday, but getting a machine to interact and compete at split-second speeds with skilled human athletes is in some ways a more difficult challenge. Spranger said it was important for researchers to not give the robot too unfair of an advantage and make its speed, arm's reach and performance comparable to a skilled athlete who trains at least 20 hours a week. It plays by official table tennis rules on a typically sized court. "It's very easy to build a superhuman table tennis robot," Spranger said. "You build a machine that sucks in the ball and shoots it out much faster than a human can return it. But that's not the goal here. The goal is to have some level of comparability, some level of fairness to the human, and win really at the level of AI and the level of decision-making and tactics and, to some extent, skill." That means, he said, that "the robot cannot just win by hitting the ball faster than any human ever could, but it has to win by actually playing the game." AI researchers have long used board games like chess as benchmarks for a computer's capabilities. They later moved into more open-ended video game worlds. But moving AI from simulated environments to the physical world has long been the gold standard for robot makers. The past year has marked a "kind of ChatGPT moment for robotics," Spranger said, with new, AI-driven approaches to teach robots about their real-world environments and task them with physically demanding activities, like backflips.
Sony is hardly the first to tackle robots in table tennis. John Billingsley helped pioneer such contests in 1983 in a paper titled "Robot Ping-Pong." More recently, Google's AI research division DeepMind has also tackled the sport. And while impressive, Billingsley said Sony's all-seeing computer vision and motion detection capabilities make it hard for a two-eyed human to stand a chance. "I would not want to belittle the achievement, but they have gone at the task mob-handed, and used sledgehammer techniques," Billingsley, a retired mechatronics professor at the University of Southern Queensland in Australia, said in an email to the AP. He added, however, that it adds to the lesson that "true progress comes out of contests, whether they involve hitting a ball or setting foot on Mars." Japanese professional players Minami Ando and Kakeru Sone were among those who competed against Sony's robot. Two umpires from the Japanese Table Tennis Association judged the games. After submitting the paper to peer review ahead of its publication in Nature, Sony researchers kept experimenting and said Ace accelerated its shot speeds and rallies and played even more aggressively and closer to the table edge. Competing against four high-skill players, Sony said Ace defeated all but one of them in December. Another expert player, Kinjiro Nakamura, who competed in the 1992 Barcelona Olympics, told researchers after observing Ace play a shot that "no one else would have been able to do that. I didn't think it was possible." But the robot now having done it "means that there is a possibility that a human could do it too," he said, in remarks published in the Nature paper. ___ AP journalists Yuri Kageyama and Javier Arciga contributed to this report.

[12]

Watch an AI-powered table tennis robot beat elite players

Using high-precision cameras and an AI system, Sony AI's Ace is revealing the advancements in robotics.

The world of table tennis may be in for a shake-up after Sony's AI division unveiled Ace -- an autonomous robot that can compete with expert table tennis players. Using a combination of high-speed cameras and proprietary state-of-the-art hardware, Ace scored 16 unchallenged points, or "aces," after serving against multiple elite players. This is the first time a robot has achieved "expert-level play in a commonly played competitive sport in the physical world," Sony AI representatives said in a statement. "This breakthrough is much bigger than table tennis," Peter Stone, chief scientist at Sony AI, said in the statement. "It represents a landmark moment in AI research, showing, for the first time, that an AI system can perceive, reason, and act effectively in complex, rapidly changing real-world environments that demand precision and speed." A study detailing how the robot works was published April 22 in the journal Nature.

Where hardware meets software

AI systems have already shown prowess in strategy games, such as Go, chess and role-playing games. However, moving AI into a robotic body, where quick reflexes are paired with physical movements, can be more challenging. Here, the software and hardware components have to work seamlessly -- and in table tennis, where speed and hand-eye coordination are vital, this pairing has to work to win. "Table tennis is a game of enormous complexity that requires split-second decisions as well as speed and power," Peter Dürr, director of Sony AI in Zurich and project lead for Ace, said in the statement. "This research breakthrough highlights the potential of physical AI agents to perform real-time interactive tasks, and represents a significant step toward creating robots with broader applications in fast, precise, and real-time human interactions."
Ace's strategy builds on Sony AI's previous research on its AI agent Gran Turismo Sophy, with Ace using advanced sensors and high-speed software to perceive its environment. These sensors include nine active pixel sensor cameras that help Ace identify the ball's exact position in 3D space, along with three gaze systems that use mirrors and event-based vision cameras to measure the ball's spin and angular velocity as it moves through the air. Running these cameras is Sony AI's proprietary AI control system, which is based on model-free reinforcement learning, where an AI agent learns directly from interactions in its environment without making a predictive model first. This technology allows Ace to adapt and make decisions faster, without relying on a preprogrammed model. Lastly, Ace's robotic body, which includes a swiveling arm with a paddle-like appendage at its end, was created with the company's robotic hardware.

Beating the pros

In April 2025, scientists had Ace play against five elite players (each with over 10 years of experience and around 20 hours of weekly training) and two professional table tennis players (Minami Ando and Kakeru Sone, both in the Japanese professional league). While players in both tiers are skilled at table tennis, professional athletes make their living by playing the sport, whereas elite players may not be of the caliber needed to make it their livelihood. Ace won three out of five matches with the elite players and boasted a 75% serve return rate. Its autonomous system also allowed the robot to return unusual shots, such as balls bouncing off the net. However, it lost both matches against the pros. Then, in December 2025, Sony AI had Ace play a series of separate matches in which it competed against two professional and two elite players. This time, Ace beat both elite players and one of the professionals.
Company representatives said the robot moved closer to the table edge, had higher shot speeds and launched faster-paced volleys against its opponents. Given that Google DeepMind's table tennis robot was defeated by elite players less than two years ago, Ace's victories show how quickly this field of robotics is advancing. "Once AI can operate at an expert human level under these conditions, it opens the door to an entirely new class of real-world applications that were previously out of reach," Stone said in the statement.
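
To make the "model-free" idea mentioned above concrete, here is a minimal sketch of tabular Q-learning on a toy task. It is an illustration only and not Sony AI's method: the corridor environment, the function names (`step`, `train`, `greedy_policy`) and all hyperparameters are assumptions invented for this example. The point it shows is that the learner never builds a predictive model of the environment; it only updates value estimates from the transitions it experiences.

```python
import random

# Hypothetical toy task (not from the article): a corridor of 5 cells.
# The agent starts in cell 0 and earns reward 1.0 on reaching cell 4.
N_STATES = 5
ACTIONS = (-1, +1)  # index 0 = step left, index 1 = step right


def step(state, action_index):
    """Environment dynamics. The learner treats this as a black box and
    only sees the (next_state, reward, done) tuple it returns."""
    next_state = min(max(state + ACTIONS[action_index], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Model-free Q-learning: learn action values from experience alone."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _episode in range(episodes):
        state = 0
        for _t in range(200):  # cap episode length for safety
            if rng.random() < epsilon:
                action = rng.randrange(2)  # explore
            else:
                best = max(q[state])       # exploit, breaking ties at random
                action = rng.choice([a for a in range(2) if q[state][a] == best])
            next_state, reward, done = step(state, action)
            # Bootstrapped target built from the observed transition only.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q):
    """Best action index for each non-terminal cell."""
    return [max(range(2), key=lambda a: q[s][a]) for s in range(N_STATES - 1)]


if __name__ == "__main__":
    print(greedy_policy(train()))  # action index 1 means "step right"
```

A real system such as the one described would use neural networks and continuous actions rather than a lookup table; the table is used here only because it keeps the update rule visible.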

[13]

Watch Sony’s AI Robot Compete With -- and Beat -- Elite Table Tennis Players

Ace is the first robot that can match serves with some of the best pro players in the world, a new study shows.

Watch out Marty Supreme, there's a new contender for the throne of table tennis champ -- and it's not human. Research out today showcases a robot that can match and even best elite human players. Scientists at Sony's AI division developed the autonomous robotic system, dubbed Ace. Their study details how Ace won a majority of its matches against table tennis players with extensive experience, though it came up short against professional athletes. Novelty aside, the software and hardware that make the robot possible could have many other uses, its creators say. "The results of our work on Ace highlight the potential of physical AI agents to perform complex, real-time interactive tasks, suggesting broader applications in domains requiring fast, precise human-robot interaction," lead author Peter Dürr told Gizmodo. Systems based on artificial intelligence can now regularly beat people at all sorts of tasks, including various games. Historically, though, it's been a challenge to design robots smart and nimble enough to surpass humans at physical sports. Table tennis in particular requires fast reaction times and the ability to generate accurate, yet difficult-to-return, high-spin balls to opponents. Scientists have been tinkering with the possibility of table tennis robots since the 1980s, but Ace represents an important step forward for both artificial intelligence and robotics, according to Dürr. "Sony AI conducted this research to study how AI could operate safely and effectively in the physical world, where perception, control, and agility must come together in real time," he said. "Unlike simulated environments where AI can rely on perfect information, real-world sports like table tennis demand rapid decision-making based on state estimation from noisy sensors and adversarial human interactions."
Unlike past experiments, the researchers judged Ace's performance against humans using the actual rules of the International Table Tennis Federation (ITTF); they also recruited licensed umpires to oversee the games. In the present study, conducted in April 2025, the researchers paired Ace against five players deemed elite, defined as people who had at least 10 years of playing experience and regularly trained 20 hours a week on average. It also faced off against Minami Ando and Kakeru Sone, two players active in Japan's professional table tennis league. Ace won three of the five matches against elite players. It won one game against a pro, though it ultimately lost both matches to Ando and Sone. And throughout the matches, the robot displayed agile moves and could consistently serve and return high-speed and high-spin balls. The team's findings were published Wednesday in the journal Nature. The team's experiments didn't stop there. Ace had another set of matches in December 2025, where it was able to beat both elite and professional players (it won one of the two pro matches). In March 2026, it won three matches against professionals, including Miyuu Kihara, currently a top 25 player in the World Table Tennis rankings for women's singles. During these matches, Ace displayed improved performance at shooting balls faster and more aggressively closer to the table edge, according to Dürr. Still, Ace probably isn't going to take over the world of table tennis. The project was devised as a way for the researchers to push the individual technologies driving Ace as far as they could, rather than any specific goal. But the lessons learned from Ace might allow scientists to create better robotic systems for various "applications across sports, entertainment, and other safety-critical physical domains," Dürr said. Thankfully, I've always been complete trash at table tennis/ping pong, so I'm already happy to accept Ace as our new robotic overlord just in case.

[14]

Sony's autonomous robot becomes first to beat pro table tennis players

For years, AI excelled in virtual environments like chess and racing simulations. Physical interaction posed a harder problem. It requires split-second sensing, planning, and movement. Ace builds on earlier work like Gran Turismo Sophy. That system mastered high-speed racing in simulation. Ace brings similar intelligence into the physical world. Sony AI combined advanced sensors, reinforcement learning, and precision robotics. The system tracks fast-moving objects and reacts in milliseconds. Table tennis pushes these limits. The sport demands speed, spin control, and rapid adaptation. "This research has shown that an autonomous robot can, in fact, win at a competitive sport, matching or exceeding the reaction time and decision making of humans in a physical space," said Peter Dürr, Director of Sony AI in Zürich, and project lead for Ace. "Table tennis is a game of enormous complexity that requires split-second decisions as well as speed and power. This research breakthrough highlights the potential of physical AI agents to perform real-time interactive tasks, and represents a significant step toward creating robots with broader applications in fast, precise, and real-time human interactions."

[15]

'It totally blew my mind': Sony's Project Ace robot plays ping pong better than the pros and could mark a major robotics turning point

* A robot just beat some elite table tennis players
* Sony AI's Project Ace is good at competing against unpredictable human players
* Success here could mean it'll be easier to use AI to train future robots to handle the real world

In competitive table tennis, the ball can travel at speeds of up to 70mph, and it can go anywhere. Sure, there's some predictability based on the strike, spin, and how the ball hits the table, but there are also infinite possibilities that now, it appears, a robot has mastered. Sony AI's Project Ace is the first robot to beat multiple elite-level table tennis players in an International Table Tennis Federation-style arena and under the watchful eyes of licensed referees. In a new Nature article, "Outplaying elite table tennis players with an autonomous robot," Sony AI scientists describe their work and how they built and used AI to train a robot, "Project Ace", to not just play table tennis, but do so at a pro level. "Ace achieved three victories in five matches against elite players, along with competitive performances in the remaining matches. These results demonstrate the potential of physical AI agents to outperform human experts in interactive, real-time tasks," wrote the scientists. Project Ace is a canny combination of "high-speed perception," a control system based on reinforcement learning (rewarding good behavior), and "high-speed robot hardware."

No feet, but a wicked backhand

Ace doesn't look like any human ping pong player you've ever seen. Instead, it glides in four directions on a custom track system, while its trunk rotates 360 degrees, and the fully articulated arm and wrist adjust on the fly to both serve and return the ball. You may have seen table-tennis-playing robots before (I recall seeing a lumbering one at CES 2026), but not like this. The speed alone is astonishing. Still, it's the AI-based reinforcement learning and training simulation that makes Project Ace special and successful.
In training, it was able to game out all sorts of play scenarios. It even practiced against a virtualized version of itself. But it's the "model-free" reinforcement learning that's, at least in part, allowing Project Ace to adapt to unpredictable, elite, human competitors. Equipped with on-board sensors and an array of nine cameras positioned around the robot, Project Ace can see things that most human competitors, even elite players, might miss. The ball spin, for example, is a determinant of where the ball will go next. As the researchers explain in the project video, perception is one of the key innovations, "So it's the only system in the world that can measure spin of an unaltered table tennis ball at this speed." Perhaps the secret sauce here, though, is a technique called "privileged critic", which Sony AI developers used within the training simulations. The privileged critic accessed perfect-match information, which is married to live sensor data. It might be said that it's comparing what should happen with what does. That learning is how the robot prepares for the unexpected. There's a moment in the video where you can see this at work. The elite human player hits a ball that catches the net, sending it careening in a different, perhaps unanticipated direction. Project Ace clearly already had a return planned, but it managed to adjust to the ball's new trajectory and hit a return. It all happens within milliseconds, and one might argue that a human player would've failed to make that same, rapid adjustment. "It totally blew my mind," said Sony AI Director and Lead Engineer Peter Dürr in a release on Project Ace. Like Sony AI's previous project, which taught an AI to beat expert human players in a Gran Turismo simulation, Project Ace is not about beating pro players and leveling up to Olympic-class table tennis players. This is about helping robots operate in an unpredictable world.
Most people who watch humanoid robots operate in home environments comment on their speed, or lack thereof. The robots move deliberately for safety and to manage the unexpected. Project Ace, though, proves that robots can be trained and train themselves to manage an unpredictable world, and at speed. Also, future table tennis competition is not totally off the table. After all, the Sony AI team is constantly working on improving Project Ace's game. They note, for instance, that the robot has a tendency to move in and hit earlier than human opponents. Sometimes, stepping back and waiting a beat can provide for a more strategic return. If they solve for that (or maybe Project Ace solves it on its own), why should the Olympics be off the table?
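
For a rough sense of the time budget implied by the 70mph figure quoted above, the back-of-the-envelope sketch below converts that speed to metres per second and divides it into the length of a regulation table. The 2.74 m table length is the standard ITTF dimension rather than a number from the article, the helper name `flight_time_ms` is made up for this example, and the estimate ignores drag, spin, the ball's arc and the fact that real shots rarely hold peak speed, so treat it as a lower bound, not a measurement.

```python
MPH_TO_MPS = 0.44704     # exact conversion: 1 mph = 0.44704 m/s
TABLE_LENGTH_M = 2.74    # regulation table length (assumption: ITTF standard)


def flight_time_ms(speed_mph, distance_m=TABLE_LENGTH_M):
    """Time in milliseconds to cover distance_m at a constant speed_mph."""
    speed_mps = speed_mph * MPH_TO_MPS
    return distance_m / speed_mps * 1000.0


if __name__ == "__main__":
    # At a constant 70 mph the ball crosses the table in under 90 ms.
    print(f"{flight_time_ms(70):.1f} ms")
```

Under these simplifying assumptions, a player or robot has well under a tenth of a second between the opponent's strike and the ball reaching the far end of the table.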

[16]

AI ping pong robot beats top human players, but don't freak out yet

Chris is a veteran tech, entertainment and culture journalist, author of 'How Star Wars Conquered the Universe,' and co-host of the Doctor Who podcast 'Pull to Open.' Hailing from the U.K., Chris got his start as a sub editor on national newspapers. He moved to the U.S. in 1996, and became senior news writer for Time.com a year later. In 2000, he was named San Francisco bureau chief for Time magazine. He has served as senior editor for Business 2.0, and West Coast editor for Fortune Small Business and Fast Company. Chris is a graduate of Merton College, Oxford and the Columbia University Graduate School of Journalism. He is also a long-time volunteer at 826 Valencia, the nationwide after-school program co-founded by author Dave Eggers. His book on the history of Star Wars is an international bestseller and has been translated into 11 languages. If you're primed to fear AI-driven robots replacing human workers at complex physical tasks, consider this your trigger warning. A robot arm built by Sony, and named Ace, has just been dubbed "the first autonomous system to be competitive with elite human table tennis players." That's a quote from the study splashed across the front page of Nature, the world's most venerable peer-reviewed science journal. The Ace researchers brought receipts. As you can see in the video above, the eight-jointed robot arm is able to make split-second decisions via an AI that's being fed real-time data from nine cameras. It scored a lot of points and won a few games against some of the world's top ping-pong players at Sony HQ in Tokyo. But here's the good news buried in all the data. Yes, within the confines of this study, Ace was competitive. That doesn't mean Ace could figure out how to win every time; it's nothing like the half marathon-running robot that simply has to master one speed. And, crucially, the human players started to spot flaws in Ace's ping-pong strategy. Ace isn't the first ping-pong playing robot. 
Researchers have long been interested in the sport because of its speed and real-time decision-making, which is a major frontier in robotics. In this respect, Ace marks a milestone for the AI system and for the highly reliable arm. That arm was able to track a ping pong ball with 10 milliseconds of latency -- more than 10 times faster than the human brain can manage. "Ace's striking skills are trained entirely in simulation using reinforcement learning, then transferred directly to the real robot," Sony explained in a blog post. "This is analogous to a player who practices endlessly in a virtual training hall and then walks onto a real court without needing to relearn anything." But that's just the thing -- ping-pong players learn on the go, and they're looking at more than just the ball. Mayuka Taira, who lost a match to Ace last December, told Sony the robot effectively intimidated her at first. "Because you can't read its reactions, it's impossible to sense what kind of shots it dislikes or struggles with, and that makes it even more difficult to play against," she said. But then Rui Takenaka, who has both lost and won against Ace, went that crucial human step further. Here's what he told the company, emphasis ours: If I used a serve with complex spin, Ace also returned the ball with complex spin, which made it difficult for me. But when I used a simple serve, what we call a knuckle serve, Ace returned a simpler ball. That made it easier for me to attack on the third shot, and I think that was the key reason why I was able to win. Got that? Ace, a profoundly smart system, was suckered by a knuckle serve. "Professional human athletes are very good at adapting to their opponent and finding weaknesses, which is an area that we are working on," Ace project leader Peter Dürr told Reuters. So we shouldn't exactly hang up our ping pong rackets just yet. 
But we should certainly be very concerned about the mentions of security applications through the various reports and blogs about Ace. Because the most lucrative real-world application of speedy systems like this isn't at the Olympics. It's on the battlefield -- where being faster than the human eye may mean game over for human soldiers.

[17]

AI-powered robot beats elite table tennis players

In feat hailed as milestone in robotics, Sony AI's Ace wins three out of five matches played under official rules

An AI-powered robot has beaten elite players at table tennis in a landmark achievement for a machine faced with a human athlete in a real-world competitive sport. Named Ace, the robotic system developed by Sony AI won three out of five matches against elite players, but lost the two it played against professionals, clawing back only one game in the seven contests. The feat has been hailed as a milestone for robotics, a field that has long seen table tennis - and the lightning-fast reactions, perception and skill it demands - as one of the toughest tests of how far the technology has advanced. In the matches, played under official competition rules, Ace displayed a mastery of spin, handled difficult shots, such as balls catching on the net, and pulled off one rapid backspin shot that a professional had thought impossible. A research paper on the robot was published in Nature on Wednesday, but scientists working on the project said Ace had improved since the report was submitted. "We played stronger and stronger players and we beat stronger and stronger players," said Peter Dürr, the director of Sony AI in Zurich and project lead for Ace. AI researchers use games from chess and Go to poker and Breakout to teach programs how to make decisions in complex situations. Building an intelligent robot takes the challenge to the next level by requiring the machine to enact decisions effectively. Ace sidesteps some tricky aspects of table tennis by having an eight-jointed arm on a moveable base that does not have to stand on two legs. And instead of seeing the ball with two eyes, it draws on images from multiple cameras that view the entire court from different angles and track the position and spin of the ball.
By zooming in on the ball's logo, the camera system can estimate the ball's spin and axis of rotation in the milliseconds it takes to reach Ace's end of the table. How to deal with spin, and which shots to play, were honed during 3,000 hours of games played in a computer simulation. Other skills, such as serves, were drawn from those used by expert players. Ace was not a table tennis ace from the start. Early on, it had problems facing slow balls with minimal spin, returning them weakly and being punished for the slip. But it was impressive at tricky shots, such as when the ball catches on the net, with Ace responding extremely fast to the altered trajectory. "If I used a serve with complex spin, Ace also returned the ball with complex spin, which made it difficult for me," said Rui Takenaka, an elite player. "But when I used a simple serve - what we call a knuckle serve - Ace returned a simpler ball. That made it easier for me to attack on the third shot, and I think that was the key reason why I was able to win." When Ace played an unusual shot, intercepting the ball early and imparting backspin, the former Olympic table tennis player Kinjiro Nakamura said he had not thought it possible, but now believed that humans could learn the shot. One difficulty in playing Ace is that the robot has no eyes to look into, no body language to read, and does not succumb to pressure when a game is tied 10-10. Dürr said: "The players want to see the eyes of their opponent. And the eyes of Ace are all around the court and they don't show any intention or feeling." Jan Peters, a professor of intelligent autonomous systems at the Technical University of Darmstadt in Germany, has worked on table tennis robots. He called the project "truly impressive", but said research on table tennis would not solve some of the significant challenges in robotics, such as manipulating objects. To be "useful for the general public, a lot of good old-fashioned engineering is needed", Peters added.
"There will be a moment in the next decade which will change the world as much as ChatGPT did in 2022. That moment may be closer to now than to 2036."

[18]

New AI-Powered Robot Can Destroy Human Champions at Ping Pong

First, they learned how to play tennis on two feet. Then, they came for our half-marathon world record. Now, robots are set to overtake us in the sport of table tennis as well. In a recent paper, published this week in the journal Nature, researchers detailed how they're leveraging AI to teach a robot arm built by Sony, dubbed Ace, to repeatedly beat "elite and professional players under official competition rules." Sony claims it's the first robot to achieve expert-level performance in any competitive physical sport, following decades of table tennis robot development. A promotional video put together by Sony's AI division shows the paddle-wielding robot bounding back and forth at staggering speeds to counter aggressive strikes. The human experts don't appear to be holding back, either, smacking challenging shots at it with full strength. It's an impressive technological feat and a major milestone for the application of AI in robotics, a confluence of two areas of research that has been at the core of the ongoing boom in humanoid robotics over the last few years. While teaching a robot arm to play ping pong may not sound like the kind of thing that will lead to the next industrial revolution, many of Ace's achievements could eventually trickle down to other areas of research. "The success of Ace, with its perception system and learning-based control algorithm, suggests that similar techniques could be applied to other areas requiring fast, real-time control and human interaction," lead author and project lead at Sony AI Peter Dürr told Reuters, "such as manufacturing and service robotics, as well as applications across sports, entertainment and safety-critical physical domains." According to their paper, Ace won three out of five games against elite players with more than ten years of experience, but lost two games against top-level pros as of April 2025. However, Sony claims that Ace went on to beat more professional players in December, as well as last month.
The level of complexity is staggering. The robot needs to have extremely fast reaction times, not only to track the ball, but also to determine its trajectory in real time using nine cameras and three vision systems. The overall system can "track a ball at 200 Hz with millimeter accuracy and around ten millisecond latency while measuring the spin at up to 700 Hz," explains an accompanying Sony AI blog post. "This is fast enough to capture motion that would be a blur to the human eye." Deep reinforcement learning allows it to seamlessly predict ball behavior and choose how to counter its opponent. Meanwhile, many high-level players were baffled by the apparatus. It's essentially "impossible to sense what kind of shots it dislikes or struggles with, and that makes it even more difficult to play against," table tennis pro Mayuka Taira told Reuters. Nonetheless, Dürr noted that pro players will still have an edge -- at least for now -- since they remain "very good at adapting to their opponent and finding weaknesses, which is an area that we are working on."
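
To put the quoted sensing figures in perspective, here is a rough back-of-the-envelope sketch. The 200 Hz, 700 Hz and ten-millisecond values are the ones quoted from the Sony AI blog post; the ball speed is an illustrative assumption, not a number from the article or the paper, and the helper names are hypothetical.

```python
# Rough timing arithmetic for the sensing rates quoted above.
# The rates and latency are the quoted figures; BALL_SPEED_M_S is an
# illustrative assumption and does not come from the article.

TRACK_RATE_HZ = 200.0    # ball position updates per second (quoted)
SPIN_RATE_HZ = 700.0     # maximum spin measurements per second (quoted)
LATENCY_S = 0.010        # "around ten millisecond" latency (quoted)
BALL_SPEED_M_S = 20.0    # assumed ball speed, for illustration only


def period_ms(rate_hz: float) -> float:
    """Time between consecutive samples, in milliseconds."""
    return 1000.0 / rate_hz


def travel_mm(speed_m_s: float, seconds: float) -> float:
    """Distance covered at constant speed, in millimetres."""
    return speed_m_s * seconds * 1000.0


print(f"position sample every {period_ms(TRACK_RATE_HZ):.2f} ms")
print(f"spin sample every {period_ms(SPIN_RATE_HZ):.2f} ms")
print(f"ball travel during latency: {travel_mm(BALL_SPEED_M_S, LATENCY_S):.0f} mm")
```

Under that assumed speed, the ball moves a noticeable fraction of the table's length during the quoted latency alone, which is one way to see why the system has to predict where the ball will be and not merely react to where it was.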

[19]

Sony's table tennis robot made me think about what happens when AI gets a body

Ace starts as a flashy sports demo and quickly turns into a preview of AI moving from screens into factories, hospitals, farms, and homes

I wanted to dismiss Sony's table tennis robot as another expensive lab flex. A machine that can rally against elite players is impressive, sure, but it also sounds like the kind of demo built to make executives clap in a room where everyone already agreed to be impressed. But table tennis is a nastier test than it looks. The ball is small, fast, spinning, and rude enough to change direction the moment it hits the table. Sony's system faces something less forgiving than calculation. It has to see, predict, and act before the point is gone. Sony tested Ace against five elite players and two professionals under official competition rules, and the robot came away with several wins. The more useful detail is what it had to handle during those matches: fast, high-spin shots that change direction after the bounce and punish even small delays. In plain English, Ace wasn't just hitting the ball back. It was reading motion, making a prediction, and moving before the rally escaped it.

AI is leaving the board

The usual "AI beats human" headline undersells what Ace is actually testing. We've already seen that story in cleaner arenas. IBM's Deep Blue beat Garry Kasparov in 1997, and the symbolism still hangs over every old contest between human skill and machine calculation. But chess, for all its strategic depth, is polite to computers. The board doesn't wobble. The pieces don't spin. A knight never comes screaming back at 60 miles per hour because someone clipped it at a nasty angle. Sony's robot points to a different shift. When AI has to move, intelligence becomes a timing problem. The system has to read the world quickly enough to act inside it. That's more useful, and much harder to keep neatly boxed in.

The body changes the problem

This is where the table tennis demo starts doing more work.
A robot that can track spin, predict motion, and adjust its response in real time isn't automatically a factory worker, warehouse picker, nurse assistant, farmhand, or disaster-response machine. That leap would be too neat, which usually means it's wrong. The broader robotics market is already well past the cute-demo stage. The International Federation of Robotics says 542,000 industrial robots were installed in 2024, more than double the figure from a decade earlier. It expects installations to reach 575,000 in 2025 and pass 700,000 by 2028. That doesn't make Ace a factory product, but it does make it part of a bigger automation story that's already showing up on production floors. On controlled industrial floors, robots need to handle variation instead of repeating one perfect motion forever. In logistics, they face crushed boxes, bad angles, missing labels, and people walking through the wrong lane at the worst possible time. Outdoors, mud, weather, uneven ground, and produce shaped by nature aren't known for respecting software requirements. The labor side is where the story gets less cute. McKinsey estimates that today's technology could theoretically automate activities accounting for about 57% of current US work hours. That isn't a clean jobs-lost number, and McKinsey is careful about that point. The pressure is subtler and probably messier: tasks get split apart, roles get redesigned, and some workers discover that "efficiency" has a habit of arriving with a spreadsheet and a forced smile. Some settings raise the penalty for being wrong. A chatbot that gets something wrong can waste an afternoon. A robot that misreads a patient's balance, a wheelchair, or a hospital hallway can do real damage. The more embodied AI becomes, the less forgiving its mistakes get.

The bill comes with the body

The infrastructure doesn't disappear when AI gets legs, wheels, or a robot arm.
It still depends on chips, data centers, cooling systems, electricity, water, and a grid that wasn't built around every company suddenly discovering it needs more compute. The International Energy Agency expects global data center electricity consumption to double to around 945 TWh by 2030, representing just under 3% of global electricity consumption. That share may sound small until a local grid, a water system, or a community near a new data center has to absorb the concentration. It's not all grim though. Smarter robots could reduce factory waste, help inspect dangerous sites, improve precision agriculture, and take on work that breaks human bodies for a living. The upside is real, but so is the cost. Deep Blue made AI feel powerful inside a board game. Ace makes it feel like the board is gone, and the pieces are now factories, hospitals, farms, grids, and workers trying to guess what happens next. Asimov imagined robots bound by rules. The version we're actually building may be bound first by economics.

[20]

Meet 'Ace,' the paddle-wielding robot who just beat humans at ping pong in AI breakthrough | Fortune