TikTok algorithm systematically skewed to the right during 2024 US elections, new study reveals

2 Sources

[1]

TikTok's algorithm systematically skewed to the right during the 2024 US elections

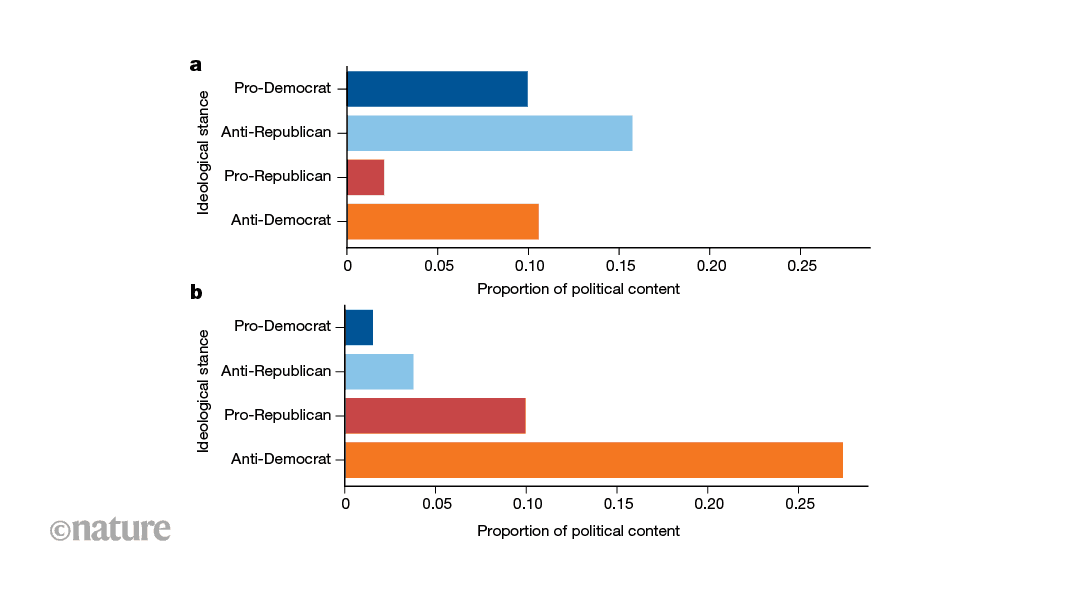

Social-media platforms have become an important channel through which people encounter political information, raising the question of whether the algorithms that control what content is shown might favour one side over another. Research on Facebook has previously shown that users tend to be exposed to like-minded sources, and that this pattern is strongest for users on the political right. Other research indicates that algorithmic curation of content has limited effects on political attitudes. Audits of YouTube using automated 'bot' accounts have found that its recommendation algorithm can amplify partisan content. However, disentangling algorithmic influence from user choice is difficult on platforms in which users retain control over what they see. TikTok offers an unusual opportunity because its main interface -- the 'For You' feed -- is driven almost entirely by the platform's recommendation algorithm, with users having little ability to customize what appears. Despite the rapid growth of TikTok as a news source among young voters, systematic studies of political bias in its recommendations have been lacking.

To measure whether TikTok's algorithm treats the two main US political parties differently, we created 323 automated accounts, known as bots, that were designed to behave in the same way as real users. Each bot was 'trained' by exposing it to a set of videos aligned with either the Democratic Party or the Republican Party, or containing no political material at all, thereby signalling a political preference to TikTok's algorithm. We then recorded which videos TikTok recommended to each bot on its For You feed. The bots were artificially located, through 'mock' GPS and virtual private network (VPN) routing, in three US states -- New York, Texas and Georgia -- representing strongly Democratic, strongly Republican and competitive states, respectively.

Over 27 weeks during the 2024 presidential campaign, we collected more than 280,000 recommended videos and classified their political leanings using a combination of artificial intelligence and human review. We found a consistent imbalance. Bots trained on Republican-aligned content received about 11.5% more politically reinforcing material than did bots trained on Democrat-aligned content. Bots trained on Democrat-aligned content were shown about 7.5% more content from the opposing side, predominantly videos critical of the Democratic Party (Fig. 1). These patterns were the same across all three states and could not be explained by differences in how popular or widely shared the videos were. The imbalance was concentrated in specific policy areas, such as immigration and crime for anti-Democrat content and abortion for pro-Republican content. A survey of 1,008 US-based TikTok users supported these findings: Republican-leaning respondents reported seeing more content aligned with their views than did Democrat-leaning respondents.

Our findings matter because TikTok has become a principal source of political information, especially for young adults -- a group that shifted towards the Republican Donald Trump by 10 percentage points between the 2020 and 2024 presidential elections. Because users have so little control over what TikTok shows them, imbalances in how the algorithm distributes political content could shape the information environment of millions of voters. Our results also have implications for platform regulation: the European Union's Digital Services Act, for example, already requires large online platforms to assess and mitigate risks to electoral processes, whereas US law grants platforms broader editorial discretion.

Our study cannot determine exactly why TikTok's algorithm produces this imbalance: whether it stems from the algorithm's internal rules, the availability of content or other factors not visible to outside researchers. Our bots also captured only the early stages of a user's experience on the platform, and we analysed only English-language video transcripts, meaning that political cues conveyed through visuals, audio or other languages were not captured. Future work could pair automated audits with data from real users; extend analyses beyond the election period to test whether these patterns persist; and compare TikTok's behaviour with that of other platforms, to determine whether the imbalance we observed is specific to TikTok or more widespread.

-- Talal Rahwan and Yasir Zaki are at New York University Abu Dhabi, Abu Dhabi, United Arab Emirates.

In early 2024, the US Congress debated banning TikTok, but almost no one had systematically tested whether its algorithm treated both political sides equally. With a presidential election approaching, the opportunity felt too important to miss. The idea emerged over breakfast in the university's dining hall. We had just formed the AI and Society Lab, and this became the lab's first project. The stakes and expectations felt unusually high. The experiment was conceptually simple but logistically intense: every week for 27 weeks, we used a small phone farm to create 21 fresh accounts mock-located across three US states. Engineering questions kept bumping up against conceptual ones, and our experimental design emerged from this back-and-forth. We were all constantly teaching and learning. In asking whether different users are shown the same kind of information, we revealed consistent, systematic differences in how TikTok's algorithm distributes political content across partisan lines. -- T.R. and Y.Z.

[2]

TikTok's algorithm favored Republican content in 2024 US elections, study finds

Researchers found that TikTok's For You pages prioritized pro-Republican content in New York, Texas and Georgia

A study published Wednesday in the journal Nature finds that TikTok's algorithm systematically prioritized pro-Republican content in three states leading up to the 2024 US elections. Researchers created hundreds of dummy accounts and conditioned them to mimic real users' behavior by watching a set of videos aligned with either the US Democratic or the Republican party. Then, they tracked the videos TikTok recommended on these accounts' For You pages, TikTok's main feed. "We found a consistent imbalance," they wrote in Nature.

About 42% of US social media users say that these platforms are important for getting involved with political and social issues, according to Pew Research, but it's not often clear how recommendation algorithms shape what appears in feeds. Professors Talal Rahwan and Yasir Zaki at New York University's Abu Dhabi campus set out to study how partisan politics shows up on TikTok - a platform that has become a key source of political information, especially for some young adults. Their study notes that this demographic - ages 18 to 29 - shifted by 10 percentage points towards Trump between the 2020 and 2024 elections. TikTok did not offer comment by press time.

Bots that were trained on pro-Republican content viewed about 11.5% more content that agreed with their views compared to their pro-Democrat counterparts. That imbalance held for exposure to opposing views, too. Bots trained on pro-Democratic content were about 7.5% more likely to be viewing pro-Republican content on their For You page, the study found. The researchers used 323 dummy accounts in the study, setting their locations to New York, Texas and Georgia. For 27 weeks of the 2024 presidential campaign, the researchers sifted through more than 280,000 recommended videos using a combination of human and AI review.

"Our finding isn't just about reinforcement; Democratic accounts were shown significantly more anti-Democratic content than Republican accounts were shown anti-Republican content," said Rahwan, one of the study's authors. "The algorithm wasn't just giving people what they want; it was giving one side more of what the other side says about them."

The types of issues that surfaced in videos differed, too - as pro-Democrat accounts in the study were fed disproportionately more cross-partisan content on immigration and crime, and pro-Republican accounts saw more cross-partisan content on abortion. "This suggests the algorithm may amplify content designed to attack the opposing side on its weakest ground, which is a more targeted and arguably more concerning pattern than a uniform ideological drift," adds Hazem Ibrahim, a PhD student at NYU Abu Dhabi who worked on the study.

The bots in the study were located through "mock" GPS and virtual private network (VPN) routing in strongly Democratic New York, strongly Republican Texas and Georgia, a battleground state. The researchers caution that their findings shouldn't be generalized beyond these states.

The study authors acknowledge that many users self-select and curate the content they see on various social media platforms. However, they say that TikTok's For You page gives users less control than the main interfaces of other social media platforms, being "almost entirely driven by the platform's algorithm", the paper notes. On TikTok, "users don't need to follow anyone; the system decides based on behavioral signals like watch time. That makes it a uniquely clean setting for studying algorithmic influence, because user self-selection is minimized," Ibrahim says. "Skews here are harder to attribute to users' choices."

The authors note that while their study unpacked the kind of political content users are exposed to, it doesn't analyze the influence of these videos on political beliefs and behavior, or the reason why this imbalance exists. They also note that the bots only captured the early stages of a user's experience on the platform, and analyzed only English-language video transcripts, which wouldn't capture political cues conveyed through visuals or other languages.

Still, they stress that studying the extent to which political content can be skewed on TikTok feeds is relevant to ongoing debates about platform transparency and algorithmic accountability. The Nature article pointed out: "The European Union's Digital Services Act, for example, already requires large online platforms to assess and mitigate risks to electoral processes, whereas US law grants platforms broader editorial discretion." Zaki adds: "In an environment where margins are thin, systematic differences in the kind of political information recommended to tens of millions of young voters are worth taking seriously."

A groundbreaking study published in Nature found that TikTok's recommendation algorithm consistently favored pro-Republican content during the 2024 US presidential campaign. Researchers from New York University Abu Dhabi analyzed over 280,000 videos using 323 bot accounts across three states, discovering that Republican-aligned bots received 11.5% more reinforcing content than Democratic counterparts. The findings raise critical questions about algorithmic accountability and platform transparency as TikTok becomes a primary news source for young voters.

TikTok Algorithm Shows Systematic Imbalance During 2024 US Elections

Researchers at New York University Abu Dhabi have uncovered evidence that the TikTok algorithm systematically skewed to the right during the 2024 US elections, according to a study published in Nature [1][2]. Professors Talal Rahwan and Yasir Zaki led the investigation, which analyzed how TikTok's 'For You' recommendation algorithm distributed political content across different user profiles. The study matters because TikTok has emerged as a principal source of political information, particularly for young voters who shifted toward Republican candidate Donald Trump by 10 percentage points between the 2020 and 2024 presidential elections [1].

The research team created 323 bot accounts designed to mimic real user behavior, training each to signal political preferences by watching videos aligned with either the Democratic Party or the Republican Party [1]. These automated accounts were artificially located through mock GPS and VPN routing in New York, Texas, and Georgia, representing strongly Democratic, strongly Republican, and competitive states respectively. Over 27 weeks during the 2024 presidential campaign, researchers collected more than 280,000 recommended videos and classified their political leanings using a combination of artificial intelligence and human review [1].

Political Bias Revealed Through Systematic Analysis

The findings revealed a consistent imbalance in how the platform distributed content. Bot accounts trained on Republican-aligned content received approximately 11.5% more politically reinforcing material compared to bots trained on Democrat-aligned content [2]. Even more striking, bots trained on Democrat-aligned content were shown about 7.5% more content from the opposing side, predominantly videos critical of the Democratic Party [1]. These patterns remained consistent across all three states and could not be explained by differences in video popularity or sharing metrics.

Source: Nature
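To make the two comparisons above concrete, here is a minimal Python sketch of how "reinforcing" and "opposing" exposure shares could be tallied from labeled recommendations. It is illustrative only: the label names, the `exposure_shares` helper, and the toy data are assumptions invented for this example, and the sources do not say exactly how the study computed its 11.5% and 7.5% figures (for instance, whether they are relative differences or percentage-point gaps).

```python
from collections import Counter

# Hypothetical label scheme (an assumption, not the study's codebook).
REINFORCING = {
    "democrat": {"pro_democrat", "anti_republican"},
    "republican": {"pro_republican", "anti_democrat"},
}
OPPOSING = {
    "democrat": REINFORCING["republican"],
    "republican": REINFORCING["democrat"],
}


def exposure_shares(records):
    """Return {bot_alignment: (reinforcing_share, opposing_share)}.

    records: iterable of (bot_alignment, video_label) pairs, one per
    recommended video. Shares are fractions of all videos shown to bots
    with that alignment, including non-political videos.
    """
    totals, reinforcing, opposing = Counter(), Counter(), Counter()
    for alignment, label in records:
        totals[alignment] += 1
        if label in REINFORCING[alignment]:
            reinforcing[alignment] += 1
        elif label in OPPOSING[alignment]:
            opposing[alignment] += 1
    return {
        a: (reinforcing[a] / totals[a], opposing[a] / totals[a])
        for a in totals
    }


# Toy data, invented purely for illustration (not the study's data).
toy = (
    [("republican", "pro_republican")] * 5
    + [("republican", "anti_democrat")] * 1
    + [("republican", "pro_democrat")] * 1
    + [("republican", "nonpolitical")] * 3
    + [("democrat", "pro_democrat")] * 4
    + [("democrat", "anti_democrat")] * 2
    + [("democrat", "nonpolitical")] * 4
)

shares = exposure_shares(toy)
# Gap in reinforcing exposure, Republican-trained minus Democrat-trained bots.
reinforcing_gap = shares["republican"][0] - shares["democrat"][0]
# Gap in opposing exposure, Democrat-trained minus Republican-trained bots.
opposing_gap = shares["democrat"][1] - shares["republican"][1]
print(shares, reinforcing_gap, opposing_gap)
```

A positive `reinforcing_gap` together with a positive `opposing_gap` corresponds to the pattern reported here: one side sees more content that agrees with it, while the other side sees more content that attacks it.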

"Our finding isn't just about reinforcement; Democratic accounts were shown significantly more anti-Democratic content than Republican accounts were shown anti-Republican content," Rahwan explained [2]. The algorithm wasn't simply giving people what they wanted; in Rahwan's words, it was "giving one side more of what the other side says about them" [2]. The imbalance was concentrated in specific policy areas: immigration and crime for anti-Democrat content, and abortion for pro-Republican content [1].

Shaping Political Information for Young Voters

The study gains urgency from TikTok's growing influence as a news source. According to Pew Research, about 42% of US social media users say these platforms are important for getting involved with political and social issues [2]. TikTok's structure makes it particularly susceptible to algorithmic influence. Unlike other platforms where users retain significant control over their feeds, TikTok's For You page is driven almost entirely by the platform's recommendation algorithm, with users having minimal ability to customize what appears [1].

PhD student Hazem Ibrahim, who worked on the study, noted that the amplification of content designed to attack the opposing side on its weakest ground represents "a more targeted and arguably more concerning pattern than a uniform ideological drift" [2]. Ibrahim added that on TikTok, users don't need to follow anyone; the system decides based on behavioral signals like watch time, making it a uniquely clean setting for studying algorithmic influence because user self-selection is minimized [2].

Implications for Platform Transparency and Regulation

The findings carry significant weight for ongoing debates about algorithmic accountability and platform regulation. The European Union's Digital Services Act already requires large online platforms to assess and mitigate risks to electoral processes, whereas US law grants platforms broader editorial discretion [1][2]. Yasir Zaki stressed that "in an environment where margins are thin, systematic differences in the kind of political information recommended to tens of millions of young voters are worth taking seriously" [2].

The study cannot determine exactly why the algorithm produces this imbalance: whether it stems from internal rules, content availability, or other factors not visible to outside researchers [1]. The researchers acknowledge limitations: their bots captured only early stages of user experience, they analyzed only English-language video transcripts, and the findings shouldn't be generalized beyond the three states studied. Future work could pair automated audits with data from real users, extend analyses beyond election periods, and compare TikTok's behavior with other platforms to determine whether this imbalance is specific to TikTok or more widespread [1].

A survey of 1,008 US-based TikTok users supported the bot findings, with Republican-leaning respondents reporting seeing more content aligned with their views than Democrat-leaning respondents [1].

Summarized by

Navi
