AI Fakes of Cole Tomas Allen Flood Facebook With Fabricated Celebrity Ties and Sports Team Links

2 Sources

[1]

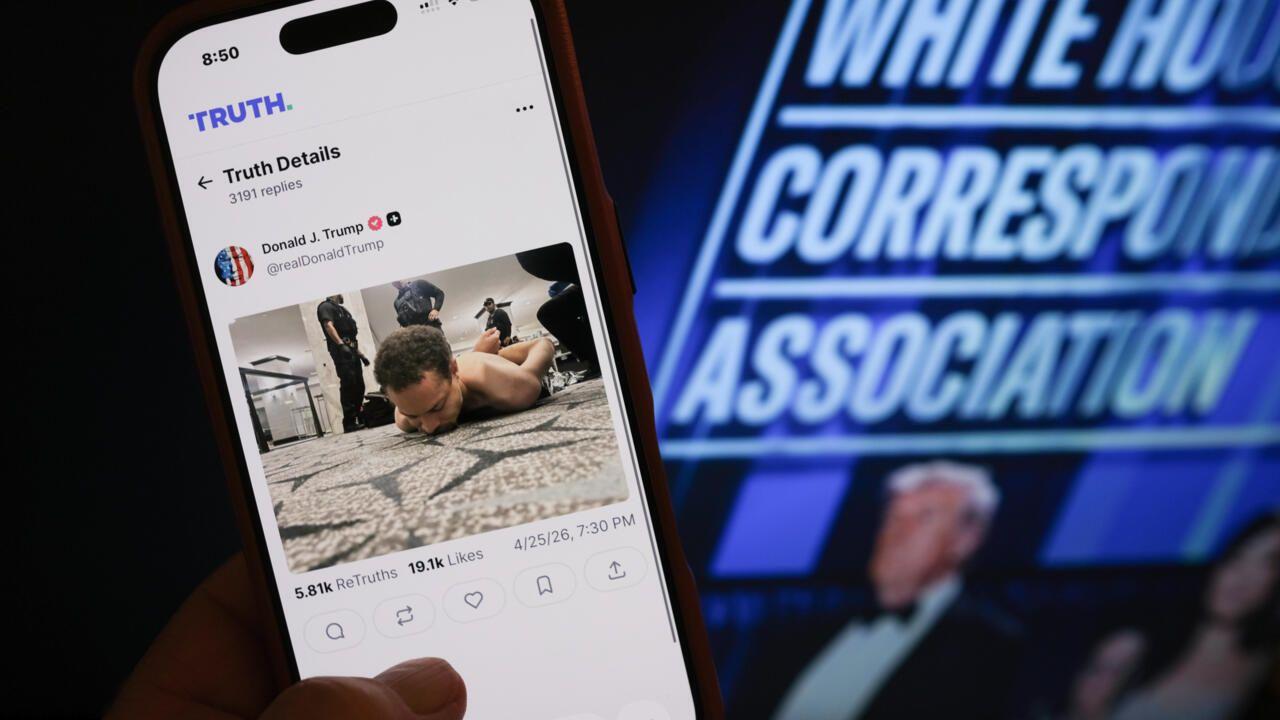

Facebook Flooded With Bizarre Deepfaked Photos of Alleged White House Correspondents' Dinner Gunman

Cole Tomas Allen, a 31-year-old from Torrance, California, has been charged with trying to assassinate President Donald Trump during the White House Correspondents' Dinner on Saturday, though plenty of questions remain about his intended target and whether he even got a shot off. But we can say one thing for certain: All those images you're seeing that depict Allen in the attire of various sports teams are 100% fake. Allen was first identified by the Associated Press late on April 25. The following day, AI-generated images purporting to show Allen in the clothes of different sports teams were popping up on Facebook. The sports attire was associated with different sports and levels of play, including baseball teams like the L.A. Dodgers, hockey teams like the Montreal Canadiens, and colleges like the Oregon Ducks and the Michigan State Spartans. The fact-checking site Lead Stories notes that many of the fake images were posted by a Facebook page called West Coast Sluggers with versions of the caption: "BREAKING: The shooter at the White House Correspondents' Dinner has been identified as 30-year-old Cole Allen from Torrance, California. Prior to the incident, he worked as a security staff member for the Los Angeles Dodgers and had appeared multiple times at their games." That's not true, of course. Allen is reportedly a teacher and engineer, and there's no evidence that he ever worked as a security staff member for any sports team. It appears that the West Coast Sluggers account was using AI to take a photo of Allen and make him wear the attire of various sports teams, seemingly in an effort to grab the attention of people who are fans of those teams. Other slop accounts on Facebook also spread the lies, like one focused on Ohio called The Ohio Spirit.
Clicking on the link provided on that Facebook post takes users to a slop article that features ads from something called Capital One Shopping, which prompts users to install an extension that's almost certainly going to do horrendous things to your computer. Reporter Ellyn Briggs posted a video showing the examples she's seen. Plenty of other nonsense was spreading after the revelation of Allen's identity, including a 2017 news report that featured him. The video really did show Allen, but claims that it also showed Usha Vance, the wife of Vice President JD Vance, were untrue. The woman looked nothing like Usha Vance. Allen has been charged with transportation of a firearm and ammunition through interstate commerce with intent to commit a felony, discharge of a firearm during a crime of violence, and the big one, attempting to assassinate the president.

[2]

AI fakes of accused US press gala gunman flood social media

Washington (United States) (AFP) - Facebook has been overrun with low-effort AI fakes inventing biographical details and celebrity connections for the man charged with trying to assassinate Donald Trump at a Washington press gala Saturday. Trump and senior administration officials were evacuated from the White House Correspondents' Association dinner as sounds of gunfire rang from a floor above the ballroom, where the suspect had attempted to sprint past security. Within hours of authorities identifying the suspect as Cole Tomas Allen, 31, of California, AI-generated images depicting him beside numerous celebrities pinballed across Facebook in posts saying he was their "former driver," "assistant" or "production crew member." An AFP investigation found more than 50 public figures falsely associated with Allen, from actors Tom Hanks and Sydney Sweeney to musicians Chris Brown and Taylor Swift. Politicians including former US president Barack Obama and Canada's Pierre Poilievre were also falsely implicated, as well as Pope Leo XIV and NBC News anchor Savannah Guthrie. Meta did not immediately respond to AFP's request for comment. The fakes reflect an online ecosystem saturated with content known as "AI slop." Once largely focused on celebrities, generative content has quickly scaled to portray individuals like Allen, whose online presence was limited. "Two years ago, you probably wouldn't have been able to make those images of him, because we could only really make compelling fakes of celebrities who had a large digital footprint from which the AI systems had been trained," said the University of California, Berkeley's Hany Farid, who is also chief science officer at GetReal Security. "Now, all I need is a single image of you." Aaron Parnas, an independent journalist whose likeness appeared in AI-enabled posts claiming Allen worked for him, pleaded on Facebook for people to report the "completely fake" images. "This is extremely dangerous," Parnas told his followers. 
'Designed for virality'

A separate rush of posts falsely claimed Allen had been on staff for over 40 different professional and collegiate sports teams, with AI-generated visuals dressing him in gear for teams across the NFL, NHL, NBA, WNBA and NASCAR. Many of the renderings appear based on the picture from a tutoring company's post recognizing Allen as "teacher of the month" in December 2024. The template-driven format resembles the output of content mills that mass-produce made-up clickbait stories, said digital literacy expert Mike Caulfield. "This looks a lot like the same content farm behavior, just with AI," Caulfield told AFP. Recent improvements in AI technologies have made visual fakes easier to create and more convincing, with once-telltale mishaps such as six-fingered hands increasingly less common. "AI makes it trivially easy to take existing photos and change their clothes, environment, or to swap out someone else's face," said Jen Golbeck, a professor at the University of Maryland's College of Information. "As soon as someone gets an idea, they can make it a visual reality." "Five years ago, it would not have been unusual to see people manually photoshopping pictures like the ones we are seeing, but it would never have been at this volume." Researchers expressed fears about the quantity wearing on social media users, who could tire of determining what is real. AFP documented similar bursts of fakes after other major events, including the US capture of Venezuelan leader Nicolas Maduro in January and Charlie Kirk's assassination last year. "These things are being designed for virality, and then of course the algorithms pick up on them," said Farid, from GetReal Security. "It's super profitable." "Every time there's a world event, we are just flooded with this kind of nonsense. I don't think that's going away."

Facebook became overrun with AI-generated deepfake images of Cole Tomas Allen within hours of his identification as the White House Correspondents' Dinner gunman. The fabricated images falsely linked the 31-year-old to more than 50 celebrities and public figures and to dozens of sports teams, illustrating how AI slop spreads rapidly during major news events and makes it harder for users to distinguish reality from fabrication.

AI Fakes Saturate Facebook After White House Incident

Within hours of authorities identifying Cole Tomas Allen as the suspect in the White House Correspondents' Dinner incident on April 25, Facebook was flooded with a wave of AI-generated deepfake images [1][2]. The 31-year-old from Torrance, California, was charged with attempting to assassinate President Donald Trump during the Saturday event, but the real story was quickly obscured by a deluge of fabricated biographical details and celebrity connections.

Source: France 24

An AFP investigation found more than 50 public figures falsely associated with the accused US press gala gunman, including actors Tom Hanks and Sydney Sweeney, musicians Chris Brown and Taylor Swift, politicians such as former President Barack Obama and Canada's Pierre Poilievre, Pope Leo XIV, and NBC News anchor Savannah Guthrie [2]. The images showed Allen beside these figures in posts claiming he had been their "former driver," "assistant," or "production crew member," and they pinballed across Facebook with alarming speed.

Sports Teams Become Another Vector for AI Slop

A separate category of AI fakes emerged at the same time, falsely claiming Allen had been on staff for over 40 different professional and collegiate sports teams. The visuals dressed him in gear for teams across the NFL, NHL, NBA, WNBA, and NASCAR [2], seemingly to grab the attention of fans of those teams [1]. The fact-checking site Lead Stories noted that many of the fakes, which showed Allen in the attire of teams such as the L.A. Dodgers, the Montreal Canadiens, the Oregon Ducks, and the Michigan State Spartans, were posted by a Facebook page called West Coast Sluggers [1].

Source: Gizmodo

West Coast Sluggers' posts carried versions of the caption: "BREAKING: The shooter at the White House Correspondents' Dinner has been identified as 30-year-old Cole Allen from Torrance, California. Prior to the incident, he worked as a security staff member for the Los Angeles Dodgers and had appeared multiple times at their games." None of this was true. Allen is reportedly a teacher and engineer, and there is no evidence that he ever worked as security staff for any sports team [1]. Many of the renderings appear to be based on a picture from a tutoring company's post recognizing him as "teacher of the month" in December 2024 [2].

Content Farms Exploit AI Technologies for Profit

The template-driven format of these fabricated images resembles the output of content mills that mass-produce made-up clickbait stories. Digital literacy expert Mike Caulfield told AFP, "This looks a lot like the same content farm behavior, just with AI" [2]. Other slop accounts spread the same lies, including an Ohio-focused page called The Ohio Spirit. According to Gizmodo, the link in one such post led to a slop article carrying ads from Capital One Shopping, which prompts users to install a browser extension that the outlet warned is "almost certainly going to do horrendous things to your computer" [1].

Hany Farid, a University of California, Berkeley professor who is also chief science officer at GetReal Security, explained the technological shift: "Two years ago, you probably wouldn't have been able to make those images of him, because we could only really make compelling fakes of celebrities who had a large digital footprint from which the AI systems had been trained. Now, all I need is a single image of you" [2]. Once largely focused on celebrities, generative fakes can now portray people like Allen, whose online presence was limited [2].

Distinguishing Reality From Fabricated Content Grows Harder

Recent improvements in AI technologies have made visual fakes easier to create and more convincing, with once-telltale mishaps such as six-fingered hands increasingly less common. Jen Golbeck, a professor at the University of Maryland's College of Information, noted: "AI makes it trivially easy to take existing photos and change their clothes, environment, or to swap out someone else's face. As soon as someone gets an idea, they can make it a visual reality. Five years ago, it would not have been unusual to see people manually photoshopping pictures like the ones we are seeing, but it would never have been at this volume" [2].

Independent journalist Aaron Parnas, whose likeness appeared in AI-enabled posts claiming Allen worked for him, pleaded on Facebook for people to report the "completely fake" images, warning, "This is extremely dangerous" [2]. Meta did not immediately respond to AFP's request for comment about the AI slop flooding its platform [2].

Pattern Emerges Across Major News Events

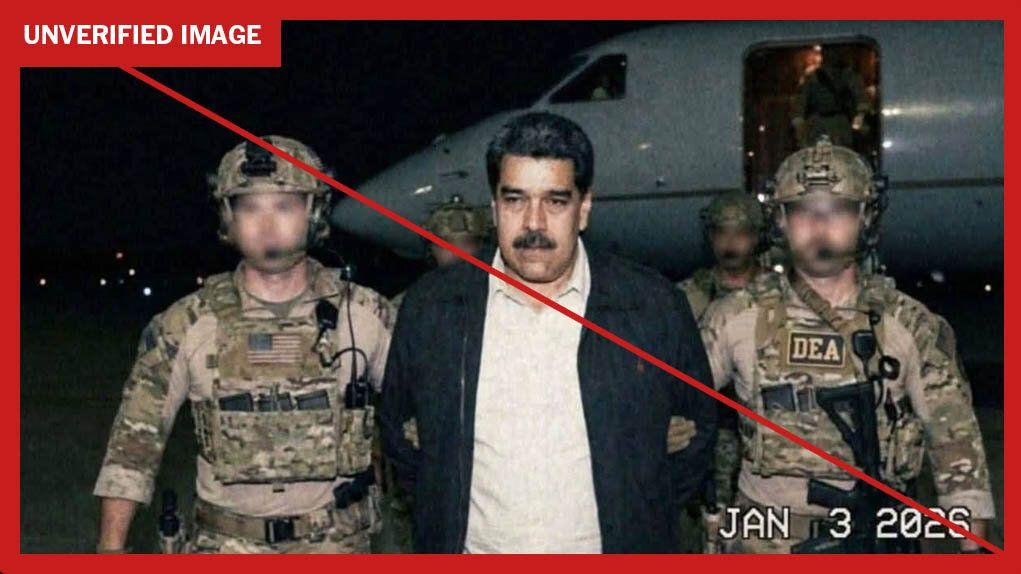

Researchers expressed concern that the sheer quantity of fakes could wear on social media users, who may tire of constantly determining what is real. AFP documented similar bursts of fakes after other major events, including the US capture of Venezuelan leader Nicolas Maduro in January and Charlie Kirk's assassination last year [2]. Farid warned: "These things are being designed for virality, and then of course the algorithms pick up on them. It's super profitable. Every time there's a world event, we are just flooded with this kind of nonsense. I don't think that's going away" [2].

References

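Summarized by Navi

[1] "Facebook Flooded With Bizarre Deepfaked Photos of Alleged White House Correspondents' Dinner Gunman" (Gizmodo)

[2] "AI fakes of accused US press gala gunman flood social media" (AFP, via France 24)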
