AI delivers jobs and wage growth in exposed industries, even as public backlash intensifies

19 Sources

[1]

Industries most exposed to AI are not only seeing productivity gains but jobs and wage growth too

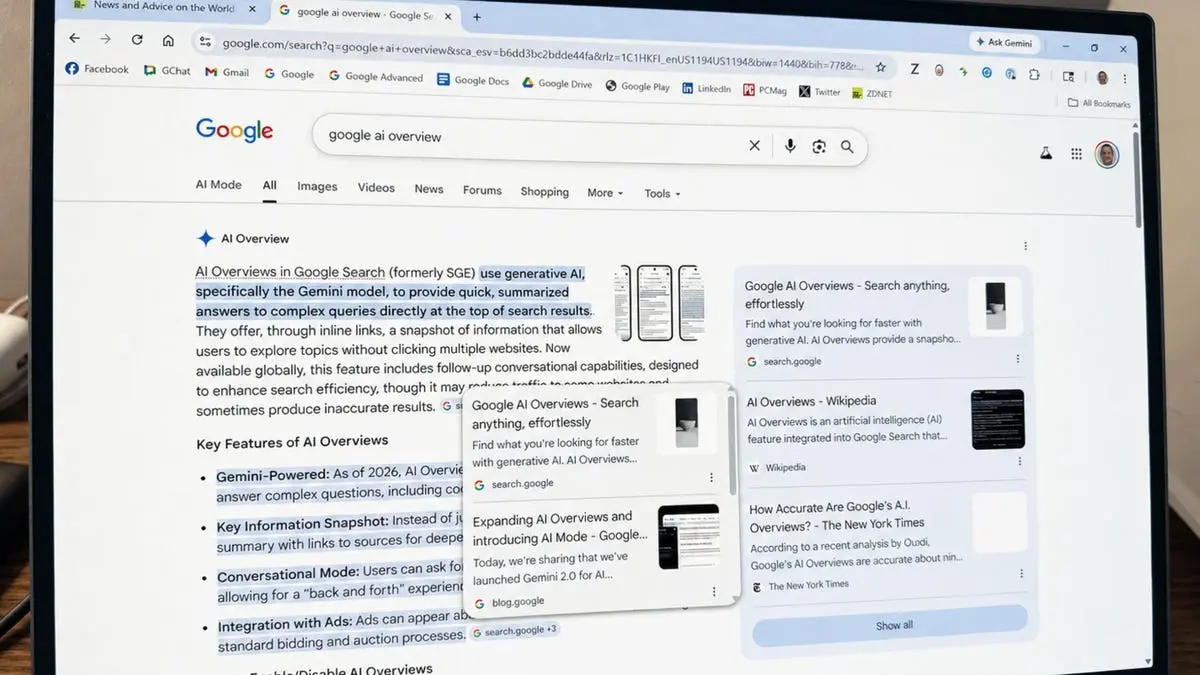

Forecasts of the impact of artificial intelligence range from the apocalyptic to the utopian. An October 2025 report from Senate Democrats, for example, predicted AI will destroy millions of U.S. jobs. A couple of years earlier, consulting firm McKinsey forecast AI will add trillions to the global economy, while emphasizing job losses can be mitigated by training workers to do new things. The problem is that many of these claims are based on projections, overly simplified surveys or thought experiments rather than observed changes in the economy. That makes it hard for the public, and often policymakers, to know what to trust. As a labor economist who studies how technology and organizational change affect productivity and well-being, I believe a better place to start is with actual data on output, employment and wages - which are all looking relatively more hopeful.

AI and jobs

In one of my new research papers with economist Andrew Johnston, we studied how exposure to generative AI affected industries across America between 2017 and 2024, using administrative data that covers nearly all employers. Our analysis covered a crucial period when generative AI use exploded, allowing us to analyze the effect within businesses and industries. We measured AI exposure using occupation-level task data matched to each industry and state's occupational workforce mix prior to the pandemic. A state and industry with more workers in roles requiring language processing, coding or data tasks scored higher on exposure, for example, compared with one with more plumbers and electricians. We then took that exposure ranking by occupation and looked at changes in the standard deviation in occupational exposure, comparing that with labor market and GDP outcomes across states and industries from 2017 to 2024. 
Think of a standard deviation as roughly the gap between a paramedic - whose work centers on physical assessment, emergency response and hands-on care that AI cannot easily replicate - and a public relations manager, whose work involves drafting communications, analyzing sentiment and synthesizing information that AI tools handle well. That gap in AI exposure is roughly what we're measuring when we ask: Does being on the higher-exposure side of that divide change your industry's trajectory? These data allowed us to answer two questions: When AI tools became widely available following the public release of ChatGPT in late 2022, did states and industries that were more exposed to generative AI become more productive, and what happened to workers? Our answers are more encouraging, and more nuanced, than much of the public debate suggests. We found that industries in states that were more exposed to AI experienced faster productivity growth beginning in 2021 - before ChatGPT reached the public - driven by enterprise tools already embedded in professional workflows, including GitHub Copilot for software development, Jasper for marketing and content writing, and Microsoft's GPT-3-powered business applications. In 2024, for example, industries whose AI exposure was one standard deviation higher saw gains of 10% in productivity, 3.9% in jobs and 4.8% in wages relative to comparable industries in the same state. Those patterns suggest that, at least so far, AI has acted as a productivity-enhancing tool that boosts employment and wages rather than a simple substitute for labor.

Augmentation versus displacement

A crucial distinction in the data is between tasks where AI works with people and tasks where AI can act more independently. In sectors where AI mainly complements workers - think marketing, writing or financial analysis - our data show that employment rose by about 3.6% per standard deviation increase in exposure. 
In sectors where AI can execute tasks more autonomously - including basic data processing, generating boilerplate code, or handling standardized customer interactions - we found no significant employment change, though workers in those roles saw slower wage growth. What these findings suggest is that when AI lowers the cost of completing a task and raises worker productivity, companies expand output enough to increase their demand for labor overall -- the same logic that explains why power tools didn't eliminate construction workers. The economic question is not whether any given task disappears. It is whether businesses and workers can reorganize fast enough to create new productive combinations. And so far, in most sectors, our evidence suggests they can. But state policies also matter: These benefits were concentrated in the states with more efficient labor markets, meaning that the impact of generative AI on workers and the economy also depends on the types of policies and institutions of the local economy. Importantly, these findings hold beyond occupational exposure. In additional work with co-authors at the Bureau of Economic Analysis, we found a similar effect on GDP and employment when looking at actual AI utilization -- that is how often workers use AI. Drawing on the Gallup Workforce Panel, we measured workers actively using AI daily or multiple times a week. We found that each percentage-point increase in the share of frequent AI users in a state and industry is associated with roughly 0.1% to 0.2% higher real output and 0.2% to 0.4% higher employment. To put that in context: The share of frequent AI users across all occupations rose from about 12% in mid-2024 to 26% by late 2025, a shift our estimates suggest corresponds to roughly 1.4% to 2.8% higher real output - or about 1 to 2 percentage points of annualized growth over that period. New technologies rarely leave work untouched. 
But they also rarely eliminate the need for human contribution altogether. Instead, they change the composition of work, as our research shows. Some tasks shrink. Others expand. New ones emerge that were previously too costly or too hard to perform at scale. Put simply, some occupations might go away, but most of them just change. If anything, the trends documented here are likely to strengthen rather than fade. Not only are generative AI tools rapidly improving, but the experimentation and research and development that many workers and companies are engaging in are also likely to pay large dividends. These investments - often referred to as intangible capital - tend to get unlocked a few years after a technology comes onto the scene, once complementary investments have been made.

The role of companies and managers

Whether AI leads to anxiety or adaptation for workers depends in part on what happens inside organizations. Using additional data collected over many years in the Gallup Workforce Panel covering more than 30,000 U.S. employees from 2023 to 2026, I found in a 2026 paper that workplace adoption of generative AI rose quickly over the period, with the share of workers who use AI frequently increasing from 9% to 26%. But the more important finding is that adoption was far more common where workers believed their organization had communicated a clear AI strategy and where employees said they trust leadership. This suggests that growing adoption and effective use of AI depend not only on the availability of the technology but on whether managers make its use clear, credible and safe. Where that clarity exists, frequent AI use is associated with higher engagement and job satisfaction, and it even reverses the burnout penalties that appear elsewhere. 
In other words, the broader economic effects of AI depend not only on how sophisticated the tools are but on whether companies and managers create environments where workers can experiment, reorganize tasks and integrate new tools into productive routines. That is, if employees do not feel the psychological safety to experiment, they are less likely to use AI, and they are especially less likely to use it for higher-value work. That is precisely the kind of adaptation that I believe makes labor markets more resilient than the most alarmist forecasts suggest.
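
As a rough check on the usage-based estimate above (a rise in frequent AI users from about 12% to 26%, at roughly 0.1% to 0.2% higher real output per percentage point), the arithmetic can be sketched in a few lines of Python. This is only an illustration: the function names, the simple non-compounding annualization and the 1.4-year window for "mid-2024 to late 2025" are assumptions, not details from the underlying research.

```python
def implied_output_gain(share_start_pct, share_end_pct, effect_low, effect_high):
    """Return the (low, high) implied rise in real output, in percent.

    effect_low / effect_high are the estimated percent gains in output
    per one-percentage-point rise in the share of frequent AI users.
    """
    delta_pp = share_end_pct - share_start_pct  # change in share, in percentage points
    return delta_pp * effect_low, delta_pp * effect_high


def annualize(total_pct, years):
    """Spread a cumulative percentage gain evenly over the period (no compounding)."""
    return total_pct / years


# Figures quoted in the article: 12% -> 26% frequent users, 0.1% to 0.2% per point.
low, high = implied_output_gain(12, 26, 0.1, 0.2)

# Assumption: mid-2024 to late 2025 is treated as roughly 1.4 years.
YEARS = 1.4

print(f"Implied output gain: {low:.1f}% to {high:.1f}%")
print(f"Annualized: about {annualize(low, YEARS):.1f} to {annualize(high, YEARS):.1f} points per year")
```

Under those assumptions, the 14-point rise in frequent users reproduces the 1.4% to 2.8% range, and dividing by the assumed window gives roughly 1 to 2 points a year. A longer or shorter window would shift the annualized figure accordingly.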

[2]

Make bad moves on AI and face voter backlash, govts warned

Britain's government faces a public backlash against AI unless it can show ordinary people that they stand to benefit from its push to inject the technology into every area of the UK in the name of growth. However, the same lessons will likely apply to other governments including the US, where increasing opposition to AI is already apparent. The Institute for Public Policy Research (IPPR), an independent think tank based in London, warns that the great unwashed are increasingly worried about AI, now perceived as one of the biggest global risks to humanity, alongside climate change and the threat of war. This is hardly surprising, given forecasts that glibly talk of millions of workers losing their jobs to AI-based automation of their roles. Forrester recently forecast that 6.1 percent of jobs in the US could be wiped out by 2030, equating to 10.4 million people being laid off. AI biz Anthropic recently boasted that its latest model, Mythos, is so effective at finding security flaws in systems that it would wreak havoc on the internet if it was made publicly available. These claims are disputed, but they add to the perception that AI is like a ticking bomb that will impact many people's lives when it goes off. At the same time, the UK government has gone all-in on AI, announcing its AI Opportunities Action Plan last year that will see datacenters peppered across the land, especially in dedicated "AI Growth Zones," declaring its intention to put AI to work across public services, and unveiling Barnsley as the country's first "Tech Town", shoehorning AI in every aspect of local life. Governments must stand ready to both protect people from the risks of AI and deliberately steer any transformation towards delivering public value, the IPPR says, but there has been little sign of this so far. 
Efforts to show the public what AI is for do not go far enough, considering the current pace of change, while efforts to rein in potential abuses by the big technology companies have been modest, and any attempts to redistribute the benefits, by giving the public a stake in AI's economic upside, for example, are non-existent. Or so the IPPR says in its newly published report, "Acceleration is Not a Strategy," which it presents as "a framework for directing AI towards public value before it's too late." The signs of a growing backlash are there, from opposition to AI datacenters being built and arguments over copyright theft to train models, plus increasingly heated debates about children's safety, the report states. It claims there is now a growing coalition of people with strong anti-AI sentiment, and a real risk that justified concerns will harden into blanket opposition to anything AI-related before long. Governments, especially in the UK and EU, are caught between two modes: pushing on the AI accelerator, or stressing AI safety and governance. Neither approach concerns itself with addressing AI's societal impact. Instead, governments need policies that steer AI development towards specific public outcomes so that people can clearly see what AI is for, the report says, and market forces alone will not do this. The UK's Department for Science, Innovation and Technology (DSIT) found that AI adoption is concentrated on the low-hanging fruit rather than on transformative, high-impact challenges, according to the IPPR. It also recommends that priority sectors are assisted in adopting AI systems properly. What often hinders adoption is not technology readiness but infrastructure readiness. If this is not addressed, even well-designed AI tools will fail to deliver public benefit at scale. One barrier to AI in health services is the lack of underlying community health infrastructure, the report cites as an example. 
Perhaps more contentiously, the IPPR states there is a need to shift the balance of power in the AI economy, as this currently rests with a handful of massive tech corporations, and the big three cloud operators in particular. These are increasingly shaping the application layer and determining which AI products reach consumers. Without intervention, these megacorps will continue to be the ones that shape AI adoption, risking a market with fewer choices, higher prices, less privacy and ever-larger models with more severe environmental impacts, the report claims. Sadly, the UK's regulator, the Competition and Markets Authority (CMA) doesn't seem in any rush to do much about it. The IPPR says if governments want AI to deliver public benefit, politicians must act now to ensure the benefits of AI are broadly distributed. As a first step, governments should begin to rebalance tax and subsidy schemes so businesses are rewarded for raising worker productivity rather than automating away jobs. Currently, tax breaks are a fiscal incentive to automate rather than augment workers, it claims. The report argues 2026 should be the year when governments adjust their policy approach, in recognition that simply growing the AI sector and hoping for spillover benefits is not a winning strategy. Governments need to become more interventionist in steering AI towards delivering clear public value, and confronting the extreme concentration of power that currently exists in the AI economy to ensure any benefits from AI are broadly felt. ®

[3]

AI has an Awful Image problem

The techies of Silicon Valley are currently buzzing with happy talk about how AI is changing everything. They may well be right. But whereas most of them assume this will be for the better, the US public appears to think it will be for the worse. AI dread stalks the heartlands amid worries over job losses, child safety and the environmental impact of giant data centres. The chasm of perception between industry and society in the US is highlighted in Stanford University's latest AI Index report. Whereas 73 per cent of AI experts think the technology will have a positive impact on people's jobs, only 23 per cent of the public think the same. It's a similar story with the economy and medical care. Most alarmingly, those likely to live longest in the AI-defined future appear the most alarmed by it. Among US voters aged 18 to 34, AI's net favourability rating is minus 44, according to an NBC poll. In one instance, distrust of AI has even spilled over into violence. A 20-year-old man was arrested on Friday after throwing a firebomb at the San Francisco home of Sam Altman, OpenAI's chief executive. Federal officials said the man was in possession of an "anti-AI document" warning of the dangers of humanity's extinction. The mixed messaging of the industry has not helped. On the one hand, AI executives promise to cure disease, tackle climate change and usher in an era of radical abundance. On the other, they warn of job disruption, cyber and bio threats and existential risk. As with previous economic upheavals, such as the industrial revolution and globalisation, AI's economic gains are likely to be generalised while the pains are localised. It is also easier to identify the jobs upended today than to imagine those created tomorrow. The internet created demand for software coders, for example, but AI may lead to their replacement by prompt engineers. The Luddites get a bad rap for resisting the industrial revolution. 
But they were rational economic actors: it took decades before ordinary workers benefited from the revolution's productivity gains. Still, we are in the early days of the AI transformation. Three factors may yet reshape the public debate. First, AI is unusual in that it drastically cuts the cost of intelligence; it is an invention for inventing. Not only can the technology boost productivity, it can also transform creativity and scientific discovery. As James Manyika, Google's senior vice-president for research, technology and society, tells me: "AI is the Industrial Revolution plus The Enlightenment." Much of the talk at the moment is about AI's "denominator" effects, reducing the costs of producing goods and services. But it will increasingly focus on its "numerator" effects, enabling innovation and new types of business to be created, driving additional revenue. Second, users are increasingly realising the benefits of AI for themselves. The adoption of AI has been faster than previous technologies. Within three years, generative AI has reached 53 per cent of the population in the countries surveyed, according to the Stanford study. Thousands of start-ups are applying that technology in beneficial ways, to explore new ways of developing drugs and personalising education, for example. The increasing use of AI agents is also stimulating a burst of individual creativity and economic opportunity. That might yet lead to the emergence of "political superintelligence", in the phrase of Andrew Hall, a political economy professor at the Stanford Graduate School of Business. AI can itself be used to better inform the debate, test solutions and improve governance. Improbable as it may seem today, a more responsive regulatory regime is likely to develop in the US at the federal level, too. The Stanford report found that Americans had lower trust in their own government to regulate AI effectively than in any of the other 29 countries surveyed. 
There is clearly strong demand for more protective legislation, reflected by the 145 AI-related bills passed in US states by 2025. Finally, if AI really can boost productivity then that will itself change the terms of the debate. Dario Amodei, Anthropic's chief executive, suggests that over the past few decades economic growth has been difficult but creating methods of redistribution has been (relatively) easy. But, he tells me, AI may yet flip that calculation. Existing tax systems have been designed to underpin generous welfare states in many countries. But in a world of abundant growth, and increasing concentration of economic wealth, the mechanisms of redistribution will have to be rethought. The logical answer would be a radical shift in taxation from income to capital. We are not there yet but we may be one day soon.

[4]

CEOs are betting AI will augment work rather than displace all workers

Jack Clark, co-founder of Anthropic, at the Semafor World Economy Summit during the International Monetary Fund (IMF) and World Bank Spring meetings in Washington, DC, US, on Monday, April 13, 2026. The effect artificial intelligence will have on the labor market has left workers and job seekers alike worried about their future. Top executives, however, are optimistic that the technology can continue to augment workloads rather than entirely displace human employees. The debate over the future of work even extends inside the corridors of a major AI provider. Speaking Monday at the Semafor World Economy conference in Washington, D.C., Anthropic co-founder Jack Clark dismissed Anthropic CEO Dario Amodei's argument that AI could drive the unemployment rate as high as 20% in the next five years. Clark has previously said that accepting such high joblessness is almost a policy "choice," given that any potential collapse in the job market would take time to play out and is a challenge that can be met by society. "I think that the aspect of this, which is a choice is, if we're correct, this technology really is going to change the world in a vast way," Clark said on stage at the conference. "It will change how business is done, ... aspects of national security, how we even relate to one another as people. And it's impossible to reconcile that with a world where the economy doesn't change in substantial ways as well." Anthropic has been at the center of AI disruption fears in the stock market, resulting in a bloodbath for software companies, which investors suddenly see as vulnerable to technological obsolescence in a world moving toward agentic systems that take actions with minimal human oversight. The iShares Expanded Tech-Software Sector ETF (IGV) is in a bear market, after plunging more than 30% from its high last September. 
Those changes will force a reworking in how employees meet the labor market, with Clark noting that he sees some weakness in early graduate employment in some industries. Clark leads The Anthropic Institute, a 30-person think tank studying AI's effects on the workplace. Clark said college students entering the job market today have to understand how to analyze and connect information across many disparate disciplines. He is less enthusiastic about students building what he called rote programming skills. "What AI allows us to do is it allows you to have access to sort of an arbitrary amount of subject matter experts in different domains," Clark said. "But the really important thing is knowing the right questions to ask and having intuitions about what would be interesting if you collided different insights from many different disciplines." Here is how some of the other Semafor panelists are thinking through the implications of AI in business: Jon Clifton, CEO of Gallup, said the countries most likely to have an edge in the future are the ones with a larger portion of the workforce using AI. "We can see that 50% of all of American employees are using AI. But one of the challenges was ... are you seeing the productivity gains? It's not being used a lot. So interestingly enough, only 13% of employees are actually using it on a daily basis," he said. Daniel Herscovici, president and CEO of Plume, outlined the importance of having a dedicated leader outlining a company's AI strategy: "We have an AI czar ... she's amazing, and she's dictated our strategy moving forward. So I think that assigning somebody whose job is to wake up every day and [address] how to implement the infrastructure is quite important." Asked if he is working less after implementing AI more into his day, Herscovici said, "absolutely not," adding that, "I am getting more done in my eight or nine or 12 hour day, that's for sure." 
Salil Parekh, managing director and CEO of Infosys, said he's focused on ensuring his workers learn new skills using AI: "The approach we've chosen is to re-skill all our 300,000 employees on AI tools," he said. "So first we do a lot of work, where, in the first few months of training, we encourage the sort of recent graduate to not use any AI tools and learn how software development is done. And then bring in, after two or three months, the usage of tools and see how things are enhanced."

[5]

The AI Doomers Who Are Playing With Fire

After OpenAI's ChatGPT burst onto the scene in late 2022, it wasn't long before mainstream America started hearing about the warnings. Executives at the top AI companies told us that they were building a radical new technology that posed imminent risks to society. And it wasn't just about digital security. AI had the power to destroy the entire world. From the jump, it was clear that these warnings were as much a sales tactic as they were an earnest prediction of how AI would behave and the ripple effects it would create. AI execs even testified in Congress to tell us how scary it all was, practically begging for regulation, all while hawking their wares to the government. Now, those execs are the ones telling everyone to calm down. Chris Lehane, OpenAI's global policy chief, sat down for an interview with the San Francisco Standard this week in the wake of at least one attack on CEO Sam Altman's home. "Some of the conversation out there is not necessarily responsible," Lehane told the Standard. "And when you put some of those thoughts and ideas out there, they do have consequences." Lehane was referring to the person who allegedly threw a Molotov cocktail at Altman's house a week ago. Twenty-year-old Daniel Moreno-Gama of Texas was charged with throwing an incendiary device at Altman's home before going to OpenAI's headquarters, where he hit the glass doors with a chair. Moreno-Gama was carrying an anti-AI "document," according to police, suggesting his motivations were related to concerns over artificial intelligence and existential threats. The Wall Street Journal reports that he had called for "Luigi'ing some tech CEOs," a reference to Luigi Mangione, who's been charged with murder for killing UnitedHealth's CEO. A second incident, just two days later, in which two people supposedly shot a gun near Altman's home, is still under investigation, though the initial suspects have been released from jail. 
Lehane divides the world into two groups of people: those who think AI is the greatest thing ever, and will inevitably lead to a world of abundance and leisure; and those whom he calls doomers, who "have a very, very negative and dark view of humanity." The so-called AI doomers simply aren't being sold properly on the benefits of this new tech, Lehane argues. "Our job at OpenAI and in the AI space -- and we need to do a much better job -- is to explain to people why ... this is going to be really good for them, for their families and for society writ large," Lehane told the Standard. But it's hard to take that argument seriously after everything guys like Altman have been saying. It didn't even start as late as 2022, either. Back in 2015, Altman said, "I think that AI will probably, most likely, sort of lead to the end of the world. But in the meantime, there will be great companies created with serious machine learning." How do you hear something like that from a powerful person and just accept it? You have two options: You can dismiss Altman as unserious and assume humanity should do nothing. Or you can take the tech CEOs at their word that the tech they're building could end the world. Which leaves you with the question of what you can do about that. We know what happens in dystopian fiction. In Terminator 2: Judgment Day, Sarah Connor decides they need to kill the researcher most responsible for starting Skynet and the rise of the machines. She can't bring herself to do it, but after explaining what will happen in the future, the researcher helps gain access to the technology so that it can be destroyed. Altman has also warned that AI could be used to "design novel biological pathogens" and signed onto a letter about the "risk of extinction," if AI isn't tamed. But he's also tried to claim that the U.S. needs to be the one developing these potentially catastrophic technologies because leaving that to geopolitical adversaries carries risks in itself. 
"A misaligned superintelligent AGI could cause grievous harm to the world; an autocratic regime with a decisive superintelligence lead could do that too," Altman wrote in 2023. I turned to Altman's product, ChatGPT, to ask about his comments on existential threats to humanity. Specifically, I asked if Altman had talked about rogue AI or the end of the world on the Joe Rogan podcast. Hilariously, ChatGPT said he hadn't appeared on Rogan. Altman did, in fact, appear on Episode 2044 of the Joe Rogan Experience, first released on October 6, 2023. I corrected ChatGPT, and it did the now-cliche, 'you're right etc, etc.' The quotes it gave me: That last quote isn't accurate, as far as I can tell. It's not in YouTube's transcript for the episode. But Altman did say something very close to that in an interview with the StrictlyVC podcast. "The bad case -- and I think this is important to say -- is, like, lights-out for all of us," Altman explained to a room full of people. Close, but not exact, which perhaps demonstrates how AI systems are failing people in their lived experience. Anthropic CEO Dario Amodei has made similar statements, telling Axios earlier this year that, "Humanity is about to be handed almost unimaginable power, and it is deeply unclear whether our social, political, and technological systems possess the maturity to wield it." Amodei claims that "AI-enabled authoritarianism terrifies me." Amodei has also warned that anyone with a STEM degree could make a bioweapon with the help of AI models, and he has called for guardrails. Some of those guardrails have gotten Anthropic into trouble, since the Pentagon blacklisted the company and is in the process of purging Claude from its systems. Amodei had refused to drop protections that prohibited the use of Claude for mass domestic surveillance and autonomous weapons systems. 
If someone testifies that they've made a tool that could potentially end the world, you'd expect that person to be immediately marched out in handcuffs. That's an idea that was floated to me third-hand a couple of years ago, and I wish I knew who originally said it. But it's spot-on. Think about it in any other context. Someone says that they've built a weapon that could go rogue and literally end life on planet Earth. Does the federal government just act like the only fix is light regulations that tinker around the edges? Or do the executives at that company get rounded up and tossed in jail for making terrorist threats? Aside from the rise of Skynet, there's obviously the pressing matter of job displacement. Many companies have cited AI as a reason for layoffs in the past year, even if they sometimes have an incentive to use that as a convenient excuse. But there's no denying that AI is now good enough at writing and other white-collar work to cause some kind of disruption in the labor market. The AI CEOs are keen to tell everyone that these disruptions are coming, insisting that the government should do something about it, while also lobbying that same government to keep it out of their hair. Perhaps no one exemplifies this attitude better than Elon Musk, whose company xAI makes the Grok AI chatbot. "Universal HIGH INCOME via checks issued by the Federal government is the best way to deal with unemployment caused by AI," Musk wrote on Friday. "AI/robotics will produce goods & services far in excess of the increase in the money supply, so there will not be inflation." I've argued before that it's ridiculous for Musk to insist we'll have a world of utopian abundance provided by the government. During Musk's time as President Trump's henchman last year, the billionaire helped with the complete destruction of USAID, cut funding for vital programs, and railed against people he claimed were milking the system. 
His so-called Department of Government Efficiency (DOGE) helped purge roughly 300,000 federal employees, and he made it his mission to say that undeserving people didn't deserve government handouts. Now this is the guy who says you shouldn't worry about AI because the government is going to hand out free money? Absurd. Why would anyone try to sell the public a product on the idea that it's going to take their job? Because the pitch is meant for investors, the government, and the people who purchase enterprise software for companies. You should focus on making your avatar look like a Studio Ghibli movie. The AI elites are all selling their products as inevitable. Part of their sales pitch is that there's nothing you can do to stop any of it. And the public just needs to accept it while finding ways to work within a system where AI causes job losses. These oligarchs -- and they are very much oligarchs, vying to be the favored members of the ruling class -- were not elected. But they will nonetheless dictate what your life looks like in the next year, five years, or 20 years, if you're lucky enough to survive the robot uprising. Altman himself wrote a blog post a week ago after the attack on his home. He shared a photo of his husband and child, "in the hopes that it might dissuade the next person from throwing a Molotov cocktail at our house, no matter what they think about me." And it seems Altman is doing his best to humanize himself to dissuade more potential attacks. Whatever happens, it feels like the AI executives have painted themselves into a corner. They've told everyone their product has the potential to destroy everything. They were the doomers, if we want to call it that, at least when it was convenient. And now we seem to be entering a different era where the same people who told us about the dangers of AI try to get us to look exclusively at what they claim are enormous benefits for society; so far, with little to show. 
It's unclear how you put that doomer genie back in the bottle.

[6]

'This is not fun and games...': OpenAI exec says AI 'doomers' are holding back the 'incredible economic opportunities' the tech can provide for 'families and for society writ large'

* OpenAI's Chris Lehane says negative opinions on AI 'do have consequences'
* AI can 'create incredible economic opportunities,' he says
* Public opinion on AI as a whole is not very positive

OpenAI's Vice President of Global Policy Chris Lehane wants to reframe the conversations around AI and its benefits for humanity, telling the San Francisco Standard, "This is not fun and games...This is really serious shit." "Our job at OpenAI and in the AI space -- and we need to do a much better job -- is to explain to people why ... this is going to be really good for them, for their families and for society writ large," Lehane said. Trust in AI is continuing to decline amongst the US population, with a recent Pew poll finding just 17% believe AI will have a positive impact on the United States over the next 20 years.

Public opinion is not on AI's side

Commenting on the two sides of the AI debate, Lehane said, "You have one group that effectively says, 'This is going to be the greatest thing ever, everyone's going to be living in beachside homes, painting in watercolors as they while away their days.' And then you have another extreme, which I would call the Doomers, who have a very, very negative and dark view of humanity." He added that AI companies haven't helped these perspectives on AI by making announcements and comments about how AI could impact the future. "You've had a series of things that have been put out there -- but haven't come to fruition -- about extreme things that are going to happen," he said. Lehane said he understands people are worried about the effects AI could have on society, specifically on the job market, bills, and the potential harm it could cause to children, but likened these worries to the concerns people had before other technological leaps. Slightly counter to Lehane's remarks, the negative effects of AI are already being felt by everyday people with almost none of the promised benefits. 
Block has cut almost 40% of its workforce in favor of AI alternatives. Pinterest is set to fire 15% of its headcount in 2026 and replace them with AI. Numerous other companies including HP, IBM, Salesforce, and more have announced AI-related job cuts. Call me a 'doomer', but job cuts, data centers pushing up energy prices, and a growing number of AI psychosis cases may be a reasonable basis for distrusting the promised benefits of AI. So what is on the table? A recently published OpenAI whitepaper has explored all the ways AI can "create incredible economic opportunities," Lehane says. These include "Adaptive safety nets that work for everyone," funded "by increasing reliance on capital-based revenues -- such as higher taxes on capital gains at the top, corporate income, or targeted measures on sustained AI-driven returns." The whitepaper also claims that AI can "help solve scientific challenges that still elude human effort: curing or preventing diseases, alleviating food scarcity, strengthening agriculture under climate stress, and speeding up breakthroughs in clean, reliable energy." The paper concludes, "We offer these ideas not as fixed answers but as a starting point for a broader conversation about how to ensure that AI benefits everyone." Convincing the wider public that OpenAI still holds the interests of humanity at heart is a tough sell, especially given the upheaval and corporate evolution of a company that started as a non-profit dedicated to ensuring AI "benefits all of humanity." Countering the growing opposition to data center construction and AI development will be another battle entirely.

[7]

Gen Z is terrified of the AI revolution. Nobody's preparing them for it

Why it matters: This is a growing problem for just about everyone -- kids, educators, employers and politicians. * The youngest, most technologically native age group should be among the biggest cheerleaders and beneficiaries of AI. They aren't. If anything, their feelings are growing more sour. By the numbers: Gen Z's excitement about AI dropped 14 points over the last year to just 22%, according to Gallup polling released last week. Hopefulness about the technology fell nine points to 18%, while anger rose nine points to 31%. * You can't blame that trend on the AI-averse. Daily AI users among the cohort saw even bigger drops in sentiment, with excitement falling 18 points and hopefulness tumbling 11 points. The school failure is real. More than half of college students surveyed in another Gallup poll this month say their school either discourages (42%) or outright bans (11%) AI use. * Educators know this is happening. 63% of faculty think their schools' 2025 graduates were not very or not at all prepared to use AI at work, per an American Association of Colleges and Universities survey. * Some students are trying to outsmart the times. 16% of currently enrolled college students changed their major due to AI, Gallup found. * There are efforts to address the issue. Khan Academy, the TED conference and testing giant ETS announced this week that they're spinning up a $10,000 interactive online program that aims to train students for AI-era jobs. The job market isn't helping. The most recent data from the Fed pegged the unemployment rate for recent grads at 5.7% in Q4 2025. That's above the national rate, which almost never happens. Underemployment for recent grads is at 42.5%, the highest since 2020. * AI is at play here, too. At companies that adopted AI, junior hiring fell nearly 8% within six quarters -- not via layoffs, but through a quiet freeze on new positions, per a Harvard working paper tracking 62 million workers. 
Between the lines: The cruelest part of this shift is structural. Entry-level jobs are likely the ones AI will automate first -- and they're what teach young workers to think, build judgment and ultimately move up. * If a company's bottom rungs are empty, it's hollowing out its own management pipeline years down the road. * A bright spot: IBM announced earlier this year that it's tripling entry-level hiring, predicting that the rush toward AI will fundamentally expand what the newest workers do. The other side: There's a counterargument that the tepid job market for the youngest workers isn't solely due to AI. Some prominent economists see it as an overcorrection from the post-COVID hiring binge in 2021. * Almost 60% of hiring managers use AI as an excuse for layoffs or hiring freezes because it plays better with stakeholders, per a Resume.org survey. * Marc Andreessen called AI a "silver-bullet excuse" for layoffs last month. Salesforce CEO Marc Benioff said it was "the lazy way out." OpenAI CEO Sam Altman branded it "AI-washing." * The honest, less sexy answer is that it's probably both: Layoffs and hiring freezes are real and targeting younger workers, while AI solidifies changes in the workforce that are already underway. Here are three things you can do to help young people in your life tackle this shift in the most clear-eyed way: The bottom line: The generation best positioned to thrive in an AI world is the most frightened of it -- because the people who should be teaching them aren't, and the companies that should be hiring them won't. * Axios' Shane Savitsky contributed.

[8]

Bosses say AI boosts productivity - workers say they're drowning in 'workslop'

Workslop refers to AI-generated work that seems polished but is flawed and in need of heavy corrections Ken, a copywriter for a large, Miami-based cybersecurity firm, used to enjoy his job. But then the "workslop" started piling up. Workslop is an unintended consequence of the AI boom. It's what happens when employees use AI to quickly generate work that seems polished - at least superficially - but is in fact so flawed or inaccurate that it needs to be heavily corrected, cleaned up or even completely redone after it's passed on to colleagues. For Ken, the problem started after his company's CEO laid off several of his colleagues and mandated that remaining workers use AI chatbots, saying it would boost their productivity. While initial drafts were a breeze to create, Ken and his co-workers had to spend more time rewriting, correcting errors and resolving disagreements between each other's chatbots than if they had never used AI at all. "Quality decreased significantly, time to produce a piece of content increased significantly and, most importantly, morale decreased," said the copywriter, who spoke under a pseudonym for fear of losing his job. "Everything got a whole lot worse once they rolled out AI." Ken said the company's executives shifted the blame to staff when they pushed back about AI-fueled productivity decreases. Ken's experience reflects an emerging divide between employees and their leaders when it comes to AI: a recent survey of 5,000 white-collar US workers found that 40% of non-managers say AI saves them no time at all at work, while 92% of high-level executives say it makes them more productive. So what's causing this workslop deluge? The answer is more complex than being simply a case of workers cutting corners. The real driving force connects back to the C-suite. Companies have spent billions on enterprise investment in generative AI. 
Some of them, like Block, Amazon, Dow, UPS, Pinterest and Target, have laid off human workers at the same time, attributing the cuts to AI's potential productivity. Workers who remain feel pressured by their employers to use AI to produce more work, often with little guidance or training. A disconnect separates executives giddy about generative AI from workers - who are finding that AI merely makes their jobs harder. "People are being told to use AI, often without direction or support," said Jeff Hancock, a co-author of the study that coined the term "workslop", and a Stanford researcher and BetterUp scientific adviser. While Hancock believes that generative AI could eventually power tools that help workers improve efficiency, in many cases, the incorporation of AI is having the opposite effect. Hancock's study, which is not yet peer-reviewed, surveyed 1,150 US desk workers, a subset within the total 5,000. The researchers found that 40% of workers had encountered workslop within a month, and then spent an average of 3.4 hours a month dealing with it - which the study estimates adds up to $8.1m in lost productivity for a 10,000-person organization. Kelly Cashin, a freelance product designer, told the Guardian she encounters workslop often. "It seems to be common to just copy and paste a bot's message directly into chats or emails," she said. At times, when she's confused by work a colleague sent her, they'll respond, saying: "Yeah, I'm not sure what AI meant by that" - meaning they're effectively outsourcing judgment to the chatbot. "Although it is personally frustrating, I understand why people do this. There's a lot of pressure to increase productivity compounded by serious uncertainty in the job market," Cashin said. 
Philip Barrison, a University of Michigan MD-PhD student who surveyed staff while embedded in primary care clinics, found a similar workslop issue cropping up for medical staff who had been encouraged to use AI to generate email replies to patient questions. That approach was meant to save clinicians' time. "Based on reporting and my own observations, it doesn't," Barrison said. Instead, many of the workers he spoke to described a lot of editing labor, frustration and concerns about data security and patients receiving AI-assisted emails with errors. Because the AI tools are optional, "once they get past the novelty [of the AI], they start ignoring it", Barrison said. One reason employers are pushing generative AI in workplaces is because many companies are aiming to reduce their labor costs after investing in the tech, says Aiha Nguyen, who leads the Labor Futures program at the Data & Society non-profit research institute. But those investments haven't paid off, or at least not yet. One of the conclusions of an often-cited MIT report found that 95% of firms aren't seeing returns on their investments in AI. Other recent assessments from the software giant SAP and the professional services and consultant firm Deloitte report a larger fraction of businesses generating returns on investment, but they are still the minority. Businesses expect - or hope - better returns will materialize after two to four years, which is rather slow for technology investments, according to the Deloitte report. "The problem is, generative AI is often being presented as a general-use tool that can do anything, but the reality doesn't work that way. So what could be creating part of the workslop is [AI's] unclear mandate or use case," Nguyen said. AI has become a sticking point as unionized workers negotiate the terms of new contracts, Dan Reynolds, research economist of the Communications Workers of America, said. 
Unions are demanding clearer mandates for the tech, and more worker input and control over how it's used. "Firms are pretty open about using AI to streamline operations, and so a natural response is to interrogate what those tools can actually do and the power dynamics that surround their use," said Sarah Fox, director of Tech Solidarity Lab at Carnegie Mellon University. Fox said she was skeptical when firms say they are deploying AI within their companies to improve productivity and efficiency and to help workers be better at their jobs. "Actually that obscures larger changes to labor dynamics," and reduces workers' autonomy rather than empowering them, she said.

[9]

There Are Signs of a Massive AI Backlash

The public outrage over the tech industry's obsession with AI is starting to boil over -- and the pitchforks are coming out. Most recently, a man allegedly lobbed a Molotov cocktail at OpenAI CEO Sam Altman's house. Days earlier, a councilman in Indianapolis said that somebody had fired a dozen bullets at his house, with a handwritten note reading "No Data Centers" left on his doorstep. A similar story is playing out across swathes of rural America, with small towns continuing a years-long effort to keep environmentally damaging data centers that put a huge strain on water availability and the power grid out of their communities. Earlier this week, voters in a small town in Missouri led a revolt, firing half of their city council over a recently-approved $6 billion data center deal. In short, public backlash over AI has long broken the confines of snarky online commentary. Residents are starting to stand up to what tech leaders continue to claim is a technological revolution, while workers are actively rebelling after being forced to train their AI replacements. The public tone is notably starting to shift, as journalist Brian Merchant noted in a recent blog post, with some politicians even publicly throwing their weight behind moratoriums on data center development. Whether the public will eventually reap the benefits of the industry's enormous investments remains as dubious as ever. As Axios points out, the industry is struggling to agree on a cohesive narrative, with OpenAI arguing in a controversial industrial policy paper published earlier this month that we could soon live in a society where the tax burden shifts from human labor to capital, while workers benefit from a four-day workweek. Anthropic CEO Dario Amodei, on the other hand, continues to emphasize that AI poses a massive risk to society and needs to be controlled at all costs. 
The widening schism between optimism and disillusionment is forcing AI companies into damage control mode. Their attempts to regain control over the narrative are hard to overlook. Just days before the New Yorker published an unflattering exposé about Altman, which painted the billionaire as a liar and skilled manipulator, OpenAI announced it had bought the Technology Business Programming Network (TBPN), a business and tech podcast company that's been referred to as "SportsCenter for Silicon Valley." Meanwhile, Altman shared a photo last week of his one-year-old son "in the hopes that it might dissuade the next person from throwing a Molotov cocktail at our house, no matter what they think about me." The billionaire called the New Yorker exposé an "incendiary article about me," which he claimed to have initially "brushed" aside. Nonetheless, despite a brewing revolt across the country, Altman doubled down, arguing that he's "extremely proud that we are delivering on our mission." But considering the sheer amount of goodwill the industry has lost in a matter of months, the public is increasingly refusing to subscribe to OpenAI's new world order.

[10]

OpenAI's policy chief says AI companies 'need to do a much better job' talking about AI as industry leaders face personal attacks | Fortune

Dario Amodei warned last May that AI could wipe out half of entry-level white collar jobs. Microsoft AI chief Mustafa Suleyman predicted the technology would automate most tasks of the entire white-collar workforce in a year to 18 months. And recently, a report from Anthropic mapped out exactly what Suleyman warned about, and what Elon Musk thinks will make work optional. The successive statements about AI's transformative impact on labor may help justify the booming valuation of some AI companies. But this rhetoric in part is also behind some backlash to the technology. A recent NBC News poll found just 26% of U.S. voters have a positive view of the technology, while 46% have a negative view. Now, OpenAI chief global affairs officer Chris Lehane is warning people to relent on messaging around AI. "Some of the conversation out there is not necessarily responsible," he told The San Francisco Standard. "And when you put some of those thoughts and ideas out there, they do have consequences." "This is not fun and games," he added. "This is really serious s-t." The constant drumbeat of promises of AI's labor market impact, as well as the threat of raising electricity bills and the danger it poses to kids, has a growing number of Americans rejecting the technology. And in recent weeks, backlash to the technology has turned violent. Last week, a 20-year-old man named Daniel Moreno-Gama traveled from his home in Texas to San Francisco and allegedly lobbed a Molotov cocktail at the gate of OpenAI CEO Sam Altman's home. Authorities then found a manifesto from Moreno-Gama warning of humanity's extinction at the hands of AI, which included a threat of murder. Reactions to the attack across social platforms like Instagram and TikTok suggest the sentiment runs deep. Comments like "He's not scared enough" and "FREE THAT MAN HE DID NOTHING WRONG" or "Finally some good news on my feed" reveal a widespread fear of the technology, at least across the internet. 
The incident follows a separate shooting at an Indiana city councilman's house after the councilman expressed support for a data center project in his district. The councilman said the perpetrator shot 13 bullets into the home and left a "no data centers" sign at the doorstep. For Lehane, it's about emphasizing the positives of the technology. "Our job at OpenAI and in the AI space -- and we need to do a much better job -- is to explain to people why...this is going to be really good for them, for their families and for society writ large," he said. Of course, the AI optimists are already singing the praises of the technology. Some have predicted that in just a few years, we'll be working a three-day work week and lounging at the beach as AI agents do our work for us. "You have one group that effectively says, 'This is going to be the greatest thing ever, everyone's going to be living in beachside homes, painting in watercolors as they while away their days,'" Lehane said. "And then you have another extreme, which I would call the Doomers, who have a very, very negative and dark view of humanity." The data so far supports some of Lehane's skepticism about the extreme predictions. A study published in February by the National Bureau of Economic Research found that out of 6,000 CEOs and other executives, the vast majority have seen little impact from AI on their operations. That's even as about two-thirds of executives reported using AI. And while some tech companies have initiated mass layoffs due to AI automation, including Jack Dorsey's Block, and most recently, Snap, the technology's impact on the labor market has yet to appear in macro data. In March, employers posted 178,000 job gains and the unemployment rate ticked down to 4.3%, suggesting job gains, at least in the short term, have outweighed AI-related layoffs. "You've had a series of things that have been put out there -- but haven't come to fruition -- about extreme things that are going to happen," Lehane said.

[11]

'Stop hiring humans'? Silicon Valley confronts AI job panic

San Francisco (United States) (AFP) - AI industry insiders want workers to code smarter, think harder and lean into their humanity -- but still dodge the question of how many jobs artificial intelligence will destroy. The reassurance rang out across HumanX, a four-day conference drawing some 6,500 investors, entrepreneurs and tech executives, even as a blunt advertisement at the entrance set the tone: "Stop hiring humans." On the main stage, May Habib, chief executive of an AI platform called Writer, told the audience that Fortune 500 bosses are having a "collective panic attack" on the subject. The anxiety is well-founded. More and more companies are directly citing AI in announcing job cuts. High-profile examples are on the rise: Salesforce laid off 4,000 customer support workers, saying AI now handles 50 percent of its work. Block chief Jack Dorsey announced plans to cut the company's headcount nearly in half, citing "intelligence tools" that have fundamentally changed how companies operate. Not all claims have gone uncontested -- some economists say firms are pointing to AI to rationalize layoffs that are really about past overhiring or cost-cutting ahead of massive infrastructure investments. OpenAI's Sam Altman has spoken of "AI-washing," and most speakers at the San Francisco event similarly dismissed the invocation of AI as a false pretext for job cuts -- even as they freely predicted disruption was just around the corner. AI is going to "transform every single company, every single job, every single way that we do work," said Matt Garman, chief executive of cloud computing giant Amazon Web Services. 'Pretty unsettling' The debate remains heated. Two years ago, Nvidia chief Jensen Huang declared that the ultimate goal was to make it so "nobody has to program" or code. "We will look back on that as some of the worst career advice ever given," Andrew Ng, founder of training platform DeepLearning.AI, shot back on Tuesday. 
In his view, coding is not an obsolete skill -- AI has simply made it available to more people. Another argument has taken hold in Silicon Valley: interpersonal skills will become more valuable than ever, with some voices going so far as to tout a humanities education as sound tech career preparation. "As AI can do more of a job, the things that will distinguish and differentiate a given employee are going to be the human skills -- critical thinking, communication, teamwork," said Greg Hart, chief executive of training platform Coursera, which has seen enrollment in its critical thinking courses triple over the past year. Florian Douetteau, chief executive of Dataiku, a French company specializing in enterprise AI, agreed. The real human added value, he told AFP, is the "capacity for judgment." He described a world in which an AI agent works through the night, its human counterpart reviews the results in the morning, and then the agent resumes working autonomously during the lunch break. But the entrepreneur nevertheless expressed unease. "We are going to have a generation of people who will never have written anything from start to finish in their entire lives," he said. "That's pretty unsettling." 'Mistake was not preparing' All of this advice risks ringing hollow for a generation already struggling to land a first job. AI has automated entry-level tasks that once served as on-the-job training. Hiring of candidates with less than one year of experience fell 50 percent between 2019 and 2024 among America's major tech companies, according to a study by investment fund SignalFire. "We should be preparing for the loss of knowledge work jobs in a number of categories," warned former US vice president Al Gore. As the week's lone genuinely dissenting voice, Gore called for a real action plan to map threatened jobs and prepare workers for career transitions, so as not to repeat the mistakes of the globalization era. "The mistake was not globalization. 
The mistake was in not preparing for the consequences of globalization," he said, drawing a parallel with the deindustrialization that followed the offshoring wave of the 2000s. "Maybe we don't want to talk about it," he added, "because it may slow down the enthusiasm for the technology."

[12]

'You're effed': Palantir CEO says AI 'will destroy humanities jobs' - but Gen Z workers are apparently deliberately sabotaging AI rollouts in an effort to fight back

* AI CEOs warn entry level jobs are being taken by AI
* Executives don't want to promote employees who can't use AI
* Gen Z workers are sabotaging AI rollouts in protest against the technology

At the World Economic Forum in January 2026, Palantir CEO and co-founder Alex Karp declared AI "will destroy humanities jobs," but will benefit the market for vocationally trained, creative people. Anthropic CEO Dario Amodei has also warned about the future effects of AI on the job market, stating that the technology could destroy half of all entry level, white-collar jobs. But a recent report has now found Gen Z workers aren't going down without a fight, with many actively sabotaging their companies' AI rollouts.

Gen Z versus AI

The report, conducted by enterprise AI agent firm Writer and research firm Workplace Intelligence, found 29% of employees are actively sabotaging their companies' AI rollout, with the figure jumping to 44% among Gen Z workers. Many employees who feel threatened by AI technology are simply refusing to use AI tools mandated by their company, with others feeding proprietary company information into public AI systems in an effort to sabotage rollouts. But those who refuse to submit to their AI overlords may actually be sabotaging their own careers, with 77% of executives saying they would be less likely to offer promotions or leadership roles to AI abstainers. Younger workers are increasingly recognizing (and feeling the effects of) the growing disconnect between productivity and compensation, with AI likely exacerbating the problem. A 2016 study by the National Association of Colleges and Employers (NACE) found that the average starting salary for a bachelor's graduate has risen by only 5% (adjusted for inflation) since 1960. The negative opinion on AI isn't held by Gen Z workers alone. An NBC News poll recently found that 46% of registered US voters hold a negative view of AI, compared to just 26% with a positive view. 
Many of these views are likely the result of the threat posed by AI to white-collar jobs across the labor market. An Anthropic study published in March found that its Claude model is capable of completing most of the tasks associated with jobs in the fields of computer science, law, business, and finance. In an interview with Axios, the Palantir CEO said, "If you are the kind of person that would've gone to Yale, classically high IQ, and you have generalized knowledge but it's not specific, you're effed." Gen Z workers entering the workforce are finding themselves at odds with the modern world, having spent their formative years pursuing an education for jobs that might not exist within a decade - and the message from AI CEOs and executives is clear; evolve or die.

[13]

AI Is Turning Workplaces Into Hopeless Gridlock

There's a problem, though: the workers who remain often say they now have to fix a flood of error-ridden AI-generated "workslop" that's burdening them, paradoxically, with more work than ever. All this pointless busywork to correct AI-generated output results in hidden costs for companies that embrace the tech, according to The Guardian. One recent survey of 1,150 desk jockeys found that 40 percent had encountered workslop -- defined as "AI-generated content that looks good, but lacks substance" -- in the course of their duties, forcing them to waste 3.4 hours per month dealing with it. At scale, that's significant: all those hours wasted tally up to an estimated $8.1 million of lost productivity for a workplace with 10,000 workers. The hypothesis is supported by previous research that found that computer programmers become slower when using AI. A widely-cited MIT study found that 95 percent of companies that deployed AI don't see any added revenue from its adoption, despite massive enthusiasm among CEOs. One stark example of AI's drag on the workplace, per The Guardian: a copywriter at a Miami cybersecurity firm told the newspaper that his employer let go several of his colleagues while pushing everybody left to use AI -- but he and his remaining colleagues found that while AI could effortlessly spit out seemingly polished content, they had to spend significant extra time rewriting or correcting errors. "Quality decreased significantly, time to produce a piece of content increased significantly and, most importantly, morale decreased," the copywriter told the paper. "Everything got a whole lot worse once they rolled out AI." Workslop problems are also dragging down medical staff. Philip Barrison, a sixth-year MD-PhD student at the University of Michigan Medical School, told The Guardian that a survey he conducted found that many medical workers had to waste time fixing errors, while patients received incorrect or flawed AI-generated emails. 
All these anecdotes also illustrate the difference of opinion between workers in the trenches and CEOs in their glass-walled offices; in a survey of 5,000 office workers, 40 percent said using AI didn't save them time, while 92 percent of executives said AI made them more productive. With this dissonance of opinion, something has to give. Employees' direct experience with AI shows that detailed work that requires accuracy still needs trained human discernment, which can't be easily replaced by a bot, hence the spotty adoption and mixed views of people directly involved in production work. That's a tell that should give pause to any CEO eager to replace workers with AI. This issue leads to a logical question that anybody with sense should start asking: if employees find that AI can't easily reproduce their work at the same level as a trained human being, and CEOs who heavily use AI find that the technology makes them more productive, doesn't that suggest that workers can't be replaced while CEOs could be replaced by a bot? That's a question some AI experts are starting to ask, because it's becoming clear that regular office workers -- the lifeblood of any company -- can't be easily traded out.

[14]

Gen Z turning its back on AI isn't irrational -- it's a verdict on everyone who failed them | Fortune

America has a problem with young people and AI. Gen Z has looked clearly at what the AI revolution is doing to their lives and rendered a verdict: the institutions that were supposed to prepare them for this moment have failed, the employers that were supposed to hire them have vanished, and the government that was supposed to manage the transition has been absent without leave. That verdict is arriving in numbers that are hard to dismiss: The more young people engage with the technology, the worse they feel about it. Gen Z's excitement about artificial intelligence dropped 14 points over the past year to just 22%, according to Gallup polling released this week. Hopefulness fell nine points to 18%. Anger rose nine points to 31%. And here's the data point that deserves the most attention: even daily AI users saw bigger drops in sentiment than non-users -- excitement among that group fell by 18 points, and hopefulness tumbled by 11. Separate polling aligns with this: Gen Z rates AI satisfaction at just 69 on the American Customer Satisfaction Index -- below airlines, social media, and mortgage lenders. The paradox is telling: 62% of Gen Z and millennials believe that AI will unlock financial opportunities they don't currently possess. Something is going wrong here, on the cusp of a supposed Fifth Industrial Revolution, and, as with so many things in the wider AI discourse, this seems to be a sort of Rorschach test, reflecting back on humanity's own foibles. They believe in the technology's potential. They don't trust the system surrounding it to let them benefit from it. The first institution to stand in the dock is higher education. At the exact moment AI literacy became a foundational workplace skill, most colleges went in the opposite direction. More than half of college students say their school either discourages (42%) or outright bans (11%) the use of AI, according to Gallup. 
Faculty are aware of the damage: 63% believe their schools' 2025 graduates were not very or not at all prepared to use AI in the workplace, per the American Association of Colleges and Universities. But what is the first thing employers are asking for from any qualified candidate? AI literacy. This editor has personally visited the KPMG Lakehouse, where new consulting interns are training up in how to prompt, and talked to thought leaders in human resources and economics who fear the mismatch between what employers want and what workers have to offer. AI skills are the missing link in the stagnant labor market, and Gen Z knows it -- and they know they've been underprepared for the revolutionary moment. A Fortune investigation last fall found the same fault line from a different angle: nine in 10 educators told researchers their graduates were workforce-ready, while nearly half of those graduates said they didn't feel prepared even to apply for an entry-level job in their field. Rather than adapt, some students are engineering their own workarounds: double-majoring has surged as a hedge against AI disruption, Fortune reported in November, and graduates who steered toward so-called "AI-proof" fields -- psychology, education, social work -- are now finding those degrees carry negative financial returns as AI moves into white-collar work faster than anticipated. This lands inside a broader legitimacy collapse that elite universities have spent years engineering for themselves. A Yale faculty committee released a sweeping, self-critical report this week documenting the ruin -- runaway tuition, an opaque admissions process that systematically advantages the wealthy, and campuses increasingly hostile to free inquiry. A decade ago, 57% of Americans said they had a great deal or quite a lot of confidence in higher education; by 2024, that figure had cratered to a historic low of 36%. 
The institutions most responsible for equipping the next generation to navigate a turbulent economy have spent years losing the public's trust -- and then they turned their backs on AI, the one thing Gen Z most needed to master to get a good job, maybe any job, in this market. Whatever deficiencies young people bring out of school, they have expected the job market to eventually sort things out. It hasn't. The unemployment rate for recent college graduates hit 5.7% in the fourth quarter of 2025, above the national rate -- a reversal that almost never happens. Underemployment for recent grads sits at 42.5%, the highest since 2020. The mechanism matters here. This isn't primarily a story of mass AI-driven layoffs, as layoffs remain relatively low across the economy, with big exceptions in the tech industry. The story is more one of quiet erasure. At companies that have adopted AI, junior hiring fell nearly 8% within six quarters -- not through firings, but through a freeze on new positions, according to a Harvard working paper tracking 62 million workers. Gen Zers are paying a compounding price: without accumulating early-career experience, their wages are falling further behind older workers than any comparable cohort in decades. Entry-level jobs are the ones AI automates first. They are also the jobs that teach young workers how to think, build judgment, and eventually move up. Eliminate the bottom rung, and you don't just harm one generation -- you hollow out the management pipeline for the next decade. The anxiety is producing measurable behavioral responses. Forty-four percent of Gen Z workers admit to actively sabotaging their company's AI rollout -- compared to 29% of workers overall, Fortune reported earlier this month. It is a sign less of technophobia than of workers who feel unprotected and are acting accordingly. Some economists argue that the weak entry-level market is partly an overcorrection from the post-COVID hiring binge of 2021. 
And nearly 60% of hiring managers reportedly use AI as an excuse for layoffs and freezes because it plays better with stakeholders than the real reasons do. Marc Andreessen called it a "silver-bullet excuse." Sam Altman branded it "AI-washing". The honest answer is messier: AI and opportunism are compounding each other, and young workers are caught in the middle. The missing actor in all of this is the government. There is no serious federal workforce transition framework, no large-scale AI-skills retraining program, no mandate that schools treat AI literacy the way they treat reading or arithmetic. What there is instead: an administration that has spent its political capital on wielding education funding as a cudgel -- freezing $2.2 billion in federal grants to Harvard over campus activism disputes -- while the skills gap widens and a generation improvises its own future in real time. Sixteen percent of currently enrolled college students have already changed their major because of AI -- a sign of a generation trying to adapt in real time, without a map. Whether schools catch up, whether employers reverse the junior hiring freeze, and whether Washington produces anything resembling a workforce policy will determine whether the current anxiety hardens into something permanent. For now, the numbers suggest it already is. In April 2026, OpenAI released a 13-page policy paper, Industrial Policy for the Intelligence Age, warning that AI's rapid advance toward superintelligence threatens to hollow out wage and payroll tax revenue and unravel the social safety net, and calling for a sweeping overhaul comparable to the Progressive Era or the New Deal. 
The company's blueprint -- shifting the tax base away from labor income toward corporate profits and capital gains, floating a "robot tax" on automated labor, and creating a national public wealth fund that would distribute returns to American citizens -- closely mirrors proposals from billionaire venture capitalist and early OpenAI backer Vinod Khosla, who has argued for eliminating federal income tax for Americans earning under $100,000 and taxing capital gains at ordinary income rates. Both Khosla and OpenAI framed the urgency in stark terms. Goldman Sachs research indicates that AI is already cutting roughly 16,000 U.S. jobs per month, with younger workers hit hardest, and Khosla predicts that AI could automate 80% of current jobs by 2030. Critics, including Carnegie Endowment scholar Anton Leicht, dismiss the OpenAI paper as "comms work to provide cover for regulatory nihilism," underscoring how far Washington remains from any concrete legislative response.

[15]

AI CEOs warn of job loss for entry-level positions at WEF 2026

Palantir CEO Alex Karp stated that AI "will destroy humanities jobs" but benefit vocationally trained, creative individuals, during the World Economic Forum in January 2026. Anthropic CEO Dario Amodei echoed this sentiment, warning that AI could annihilate half of all entry-level, white-collar jobs. Research by Writer and Workplace Intelligence revealed that 29% of employees are sabotaging their companies' AI rollouts. Among Gen Z workers, this figure rises to 44%, as many employees feel threatened by AI technology. Some employees refuse to use AI tools or intentionally input proprietary information into public AI systems to hinder implementations. A significant 77% of executives reported they would be less likely to promote or offer leadership roles to employees who abstain from using AI. The growing disconnect between productivity and compensation affects younger workers, worsened by the emergence of AI. A 2016 study from the National Association of Colleges and Employers indicated that starting salaries for bachelor's graduates have increased by only 5% since 1960, adjusted for inflation. An NBC News poll found that 46% of registered US voters view AI negatively, contrasting with 26% who view it positively. This adverse perception likely stems from AI's potential impact on the job market, particularly for white-collar positions. An Anthropic study published in March revealed that its Claude model can perform tasks related to jobs in computer science, law, business, and finance. In an Axios interview, Karp emphasized that individuals with generalized knowledge but lacking specificity are "effed." As Gen Z workers prepare to enter the workforce, many find their training may not align with future job opportunities, leading to conflict with AI-driven market demands. The message from AI executives appears clear: adapt or risk obsolescence.

[16]

AI's Impact on Employment Clashes With C-suite Optimism