Google DeepMind unveils Gemini Robotics-ER 1.6 as robot dogs gain precision instrument reading

2 Sources

[1]

Robot dogs now read gauges and thermometers using Google Gemini

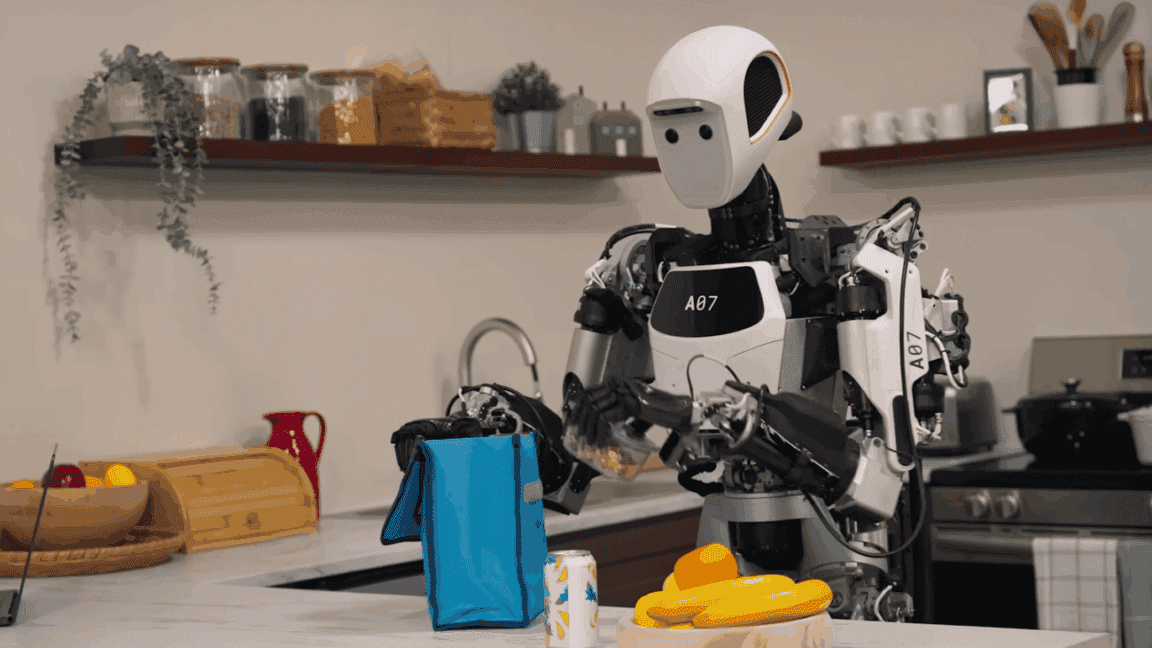

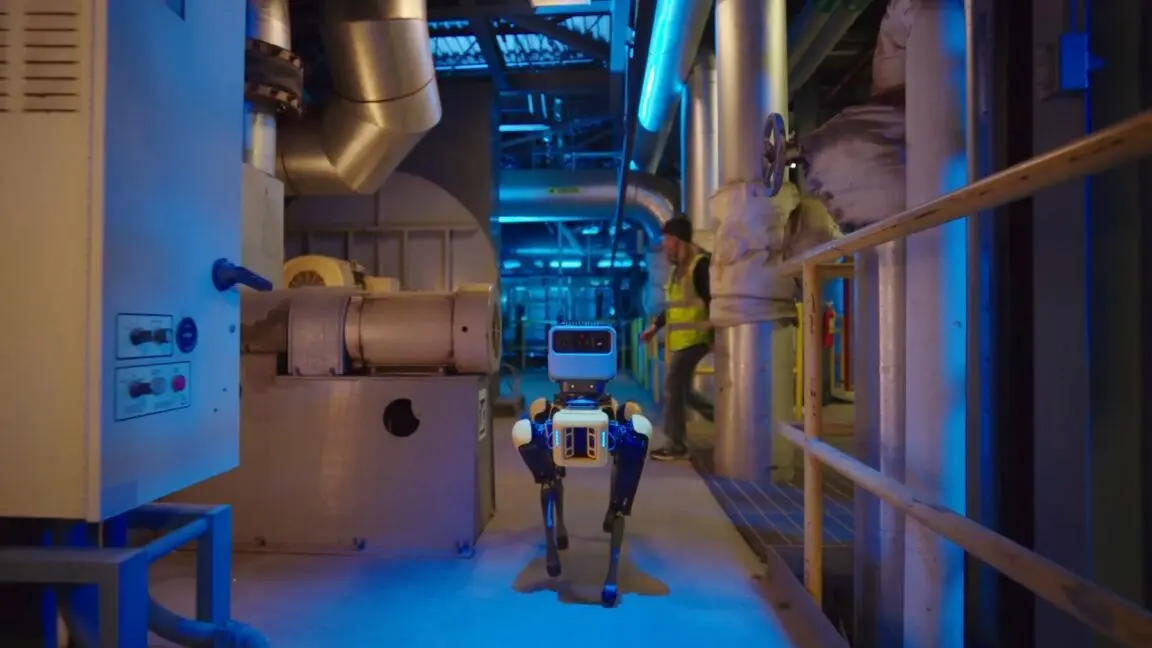

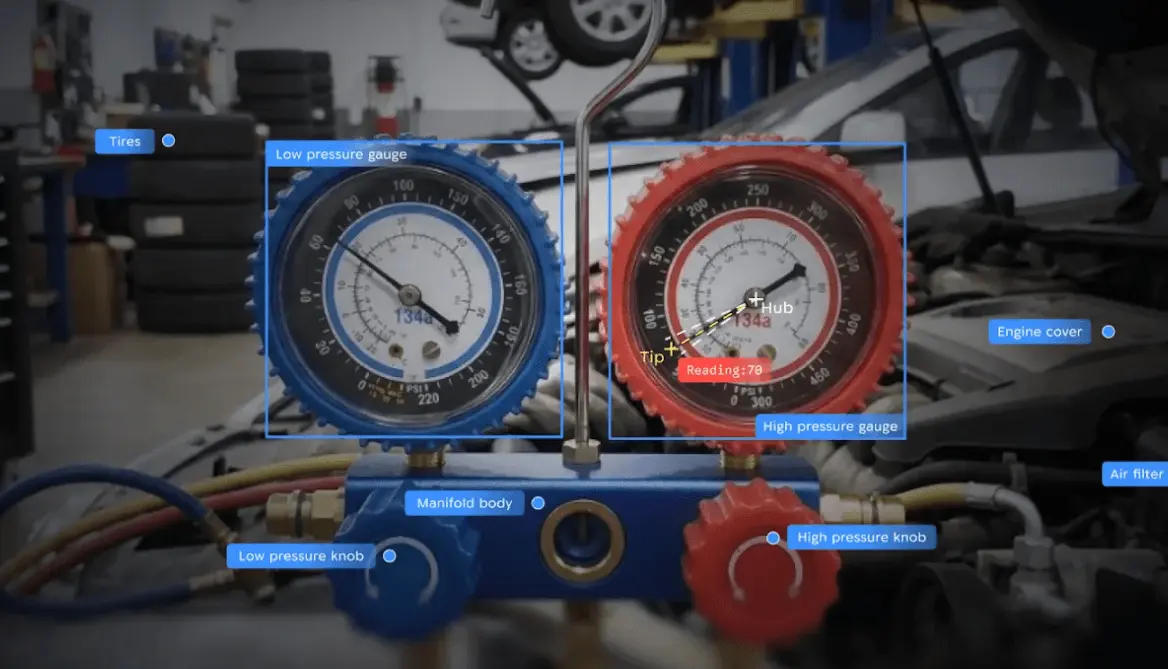

Robots such as Boston Dynamics' four-legged Spot can now accurately read analog thermometers and pressure gauges while roaming around factories and warehouses. Those improvements come courtesy of Google DeepMind's newest robotic AI model, which aims to enhance robots' capacity for "embodied reasoning" when interacting with physical environments. The new Gemini Robotics-ER 1.6 model, announced on April 14, serves as a "high-level reasoning model for a robot" that can plan and execute tasks, according to Google DeepMind. The model also unlocks the ability to accurately read instruments such as complex gauges and to perform visual inspections through sight glasses, which provide a transparent window into tanks and pipes. That performance upgrade came about through Google DeepMind's ongoing collaboration with robotics company Boston Dynamics.

Boston Dynamics has a keen interest in testing both quadruped and humanoid robotic workers in a wide range of industrial facilities, including the automotive factories of the robotics company's corporate owner, Hyundai Motor Group. The company's robot "dog," Spot, is being trialled as a robotic inspector that roams throughout industrial facilities to check up on everything. Such inspection duties require "complex visual reasoning" to interpret the multiple needles, liquid levels, container boundaries and tick marks, along with text, in various instruments.

The model driving it

To handle such tasks, the Gemini Robotics-ER 1.6 model provides robots with "agentic vision," which combines visual reasoning with the ability to execute code, creating a "visual scratchpad" for inspecting and manipulating images. Agentic vision was first introduced in Google's Gemini 3.0 Flash model in January 2026. The capability reportedly boosts robotic performance on instrument reading tasks from 23 percent accuracy in the older Gemini Robotics-ER 1.5 model to 98 percent in the new Gemini Robotics-ER 1.6 model.
For the sake of comparison, Gemini 3.0 Flash delivered just 67 percent accuracy. Even without agentic vision, the baseline Gemini Robotics-ER 1.6 model still achieves 86 percent accuracy in reading instruments. That is because the model points to different elements in an image to work through complex tasks, such as counting the number of items or identifying the most salient features. It also supposedly delivers improved "multi-view reasoning," which allows a robotic system to use multiple camera streams to better understand its environment.

One example given by Google DeepMind highlights how Gemini Robotics-ER 1.6 correctly identified the number of hammers, scissors, paintbrushes, pliers, and various gardening tools in a cluttered image. By comparison, the older Gemini Robotics-ER 1.5 model failed to count the hammers or paintbrushes accurately, overlooked the scissors entirely, and falsely identified a nonexistent wheelbarrow because a wheelbarrow was one of the requested items in the identification task. That implies the newer model has less of a "hallucination" problem than the older one, even if it is still far from human-level comprehension of its surroundings.

Google also describes Gemini Robotics-ER 1.6 as its "safest robotics model yet," with a "substantially improved capacity to adhere to physical safety constraints." It enables robots both to follow safety instructions and to make safer decisions when handling liquids or materials. The new model can also more accurately perceive the risk of injury to humans in different scenarios, such as a young child sticking something into an electrical socket.

Future application

The practical test of this model's value will come as robotics companies and researchers get more hands-on time with it.
So far, robots have proven most efficient and productive when performing as highly specialized machines doing the same specific tasks over and over again in factory assembly lines or performing highly coordinated and choreographed movements in warehouse aisles. Companies like Google are betting that the latest AI models can help robots become more free-range workers operating in complex and less controlled real-world environments -- a prospect that also carries the greater risk of robots doing damage or harm to humans if something goes wrong. At the very least, the latest model may nudge us one step closer to a future where a General Atomics International Mark 4 robot can scan the room and correctly exclaim, "There's no fudge here!"

[2]

DeepMind launches Gemini Robotics-ER 1.6 to meet precise physical AI demands - SiliconANGLE

Google DeepMind, Alphabet Inc.'s artificial intelligence research division, introduced a new foundation AI model for robotics on Tuesday, designed as a significant upgrade in understanding and precise spatial reasoning. The new model, Gemini Robotics-ER 1.6, part of the Gemini Robotics family, enhances spatial reasoning and multi-view understanding to bring greater autonomy to physical agents and robots of all kinds.

DeepMind said the model provides high-level reasoning capabilities for robotics, offering a layer for task planning and tool calling. The tools include native ones such as Google Search for finding information, vision-language-action models, and third-party user-defined functions that extend the model's capabilities.

Examples of improvements include precise object detection and categorization, a must for robots picking out and picking up items, especially when sorting parcels or cleaning up a messy room. The model also improves on relational logic, such as making comparisons (for example, identifying the smallest object in a set) or defining from-to relationships when moving object X to location Y. This is coupled with enhancements to trajectory mapping and to determining the best way to grab objects. The company also said the model works well within constraints and can reason through complex prompts like "point to every object small enough to fit inside the blue cup."

Beyond making robots move, DeepMind researchers also boosted the model's ability to read gauges and instruments, which requires complex visual reasoning. This ability is fundamental for operating in environments such as factories, warehouses and even homes. Gauges often include needles, tick marks, finely etched numbers and other indicators (and sometimes instructions) that must all be resolved to interpret a reading correctly.
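Once a needle and the labelled tick marks around it have been located, turning them into a number is a proportion-and-interval calculation. A minimal sketch of one standard approach, piecewise-linear interpolation between the two labelled ticks that bracket the needle, follows. It assumes the hard perception work is already done (the needle angle and tick angles have been measured from the image). The function name `read_dial` and the example 0-10 bar dial are hypothetical, and this is not DeepMind's code.

```python
from bisect import bisect_right
from typing import List, Tuple


def read_dial(needle_deg: float, ticks: List[Tuple[float, float]]) -> float:
    """Estimate a dial reading by linear interpolation between the two
    labelled ticks that bracket the needle.

    ticks: (angle_in_degrees, labelled_value) pairs with strictly increasing
    angles and at least two entries, measured the same way as needle_deg.
    """
    angles = [a for a, _ in ticks]
    if not angles[0] <= needle_deg <= angles[-1]:
        raise ValueError("needle is outside the labelled scale")
    # First tick strictly past the needle, clamped so a needle resting on
    # the last tick still has a bracketing pair.
    i = min(bisect_right(angles, needle_deg), len(ticks) - 1)
    (a0, v0), (a1, v1) = ticks[i - 1], ticks[i]
    fraction = (needle_deg - a0) / (a1 - a0)  # proportion of the interval covered
    return v0 + fraction * (v1 - v0)


# Hypothetical 0-10 bar gauge sweeping 270 degrees, labelled every 2 bar.
TICKS = [(-135.0, 0.0), (-81.0, 2.0), (-27.0, 4.0), (27.0, 6.0), (81.0, 8.0), (135.0, 10.0)]
print(read_dial(0.0, TICKS))     # 5.0
print(read_dial(-108.0, TICKS))  # 1.0
```

Interpolating within each interval, rather than across the whole dial, also copes with scales whose ticks are unevenly spaced.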
"Capabilities like instrument reading and more reliable task reasoning will enable Spot to see, understand, and react to real-world challenges completely autonomously," said Marco da Silva, vice president and general manager of Spot, the dog-like robot developed by Boston Dynamics Inc.

DeepMind said Gemini Robotics-ER 1.6 achieves this level of accuracy through agentic vision, which combines visual reasoning with code execution. The model takes a snapshot of the image and resolves the fine details, then uses carefully curated code to estimate proportions and intervals to get an accurate reading, and finally uses its reasoning engine to interpret that reading.


Google DeepMind launched Gemini Robotics-ER 1.6, a foundation AI model for robotics that enables robot dogs like Boston Dynamics' Spot to read analog instruments with 98% accuracy. The model introduces agentic vision combining visual reasoning with code execution, allowing robots to interpret complex gauges, thermometers, and pressure readings in industrial environments. This marks a significant leap from the 23% accuracy of its predecessor.

Google DeepMind Launches Foundation AI Model for Robotics

Google DeepMind announced Gemini Robotics-ER 1.6 on April 14, positioning it as a high-level reasoning model designed to enhance robotics capabilities in physical environments [1]. The foundation AI model for robotics represents a substantial upgrade in how robots interact with real-world settings, particularly in industrial environments where precision matters. Developed through ongoing collaboration with Boston Dynamics, the model enables robot dogs like Spot to perform complex visual reasoning tasks that were previously challenging for automated systems [2].
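Both reports describe Gemini Robotics-ER 1.6 as a high-level reasoning layer that plans tasks and calls tools: Google Search, vision-language-action models, and user-defined functions. To make that division of labour concrete, here is a minimal sketch of how a host program might execute a plan of tool calls emitted by such a planner. Everything in it is an assumption for illustration: the `ToolCall` format, the `ToolRegistry` class, the three tool functions and the pump ID `P-101` are hypothetical stand-ins, and none of it is the Gemini API.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ToolCall:
    """One planned step as a reasoning model might emit it (hypothetical format)."""
    name: str
    args: Dict[str, Any] = field(default_factory=dict)


class ToolRegistry:
    """Maps tool names to Python callables and executes a plan in order."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def run_plan(self, plan: List[ToolCall]) -> List[Any]:
        results = []
        for call in plan:
            if call.name not in self._tools:
                # Refuse unknown tools instead of guessing what the planner meant.
                raise KeyError(f"unknown tool: {call.name}")
            results.append(self._tools[call.name](**call.args))
        return results


# Hypothetical stand-ins for the tool kinds the reports list.
def web_search(query: str) -> str:
    return f"results for {query!r}"  # a real tool would call a search service


def vla_move(obj: str, target: str) -> str:
    return f"moved {obj} to {target}"  # a real tool would hand off to a VLA model


def read_gauge(gauge_id: str) -> float:
    return 4.2  # user-defined function; fixed demo value


registry = ToolRegistry()
registry.register("web_search", web_search)
registry.register("vla_move", vla_move)
registry.register("read_gauge", read_gauge)

plan = [
    ToolCall("web_search", {"query": "normal operating pressure for pump P-101"}),
    ToolCall("read_gauge", {"gauge_id": "P-101"}),
    ToolCall("vla_move", {"obj": "red cup", "target": "tray"}),
]
print(registry.run_plan(plan))
```

Rejecting unknown tool names, rather than guessing, keeps a planning error from turning into an unplanned physical action.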

Source: Ars Technica

The model provides native tools for task planning, including integration with Google Search to find information, vision-language-action models, and third-party user-defined functions to extend capabilities [2]. This architecture allows robots to plan and execute tasks with greater autonomy across factories, warehouses, and other demanding settings.

Dramatic Accuracy Improvements in Reading Analog Instruments

The most striking advancement in Gemini Robotics-ER 1.6 is its ability to read analog instruments. With agentic vision enabled, the model achieves 98% accuracy on instrument reading tasks, a massive jump from the 23% accuracy of the older Gemini Robotics-ER 1.5 model [1].

Source: SiliconANGLE

For context, even Gemini 3.0 Flash managed only 67% accuracy on similar tasks.

This capability is critical for robots performing visual inspections in industrial settings. Boston Dynamics' Spot is being trialed as a robotic inspector in industrial facilities, and the company is keen to test its robots across a wide range of sites, including the automotive factories of its owner, Hyundai Motor Group. As an inspector, Spot must interpret multiple needles, liquid levels, container boundaries, tick marks, and text across various instruments [1]. The model now enables these robot dogs to accurately read analog thermometers and pressure gauges while roaming autonomously through complex environments.

Agentic Vision Powers Embodied Reasoning Capabilities

The breakthrough in instrument reading comes from what Google DeepMind calls "agentic vision," a capability first introduced in Gemini 3.0 Flash in January 2026 [1]. This approach combines visual reasoning with code execution to create a "visual scratchpad" for inspecting and manipulating images. The model takes a snapshot, resolves fine details, uses curated code to estimate proportions and intervals for an accurate reading, then applies its reasoning engine to interpret the result [2].

Even without agentic vision, the baseline Gemini Robotics-ER 1.6 model achieves 86% accuracy in reading instruments by pointing to different elements in an image to work through complex tasks [1]. The same pointing approach helps with counting items and identifying salient features in cluttered scenes.

Enhanced Spatial Reasoning and Precision Object Detection

Beyond gauge reading, the model shows significant improvements in spatial reasoning and precise object detection. In one test, Gemini Robotics-ER 1.6 correctly identified the number of hammers, scissors, paintbrushes, pliers, and gardening tools in a cluttered image [1]. The older model failed to count the hammers or paintbrushes accurately, missed the scissors entirely, and hallucinated a nonexistent wheelbarrow simply because a wheelbarrow was among the requested items. This reduction in hallucination suggests the newer model is more reliable, though it remains far from human-level comprehension.

The model also improves on relational logic, such as identifying the smallest object in a set or defining from-to relationships when moving objects [2]. Enhanced trajectory mapping helps robots determine the best way to grab objects, while improved multi-view reasoning allows robotic systems to use multiple camera streams for better environmental understanding [1].
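The counting comparison in this section rests on pointing: the model marks each instance it sees, and a count follows from the marks. A minimal sketch, assuming the model's response has already been parsed into labelled points (the `Point` format and coordinates here are hypothetical), shows why a point-based count treats a requested-but-absent item as zero rather than inferring it from the request. It does not, of course, stop a model from placing a false point.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Point:
    """One labelled point from a (hypothetical) parsed model response,
    in normalised image coordinates."""
    label: str
    y: float
    x: float


def count_requested(points: Iterable[Point], requested: List[str]) -> Dict[str, int]:
    """Count points per requested label.

    A requested label with no supporting point gets a count of 0: being
    asked about an item is not treated as evidence that the item is present.
    """
    counts = Counter(p.label for p in points)
    return {label: counts[label] for label in requested}


points = [
    Point("hammer", 0.12, 0.20),
    Point("hammer", 0.40, 0.55),
    Point("scissors", 0.71, 0.33),
    Point("pliers", 0.25, 0.81),
]
print(count_requested(points, ["hammer", "scissors", "pliers", "wheelbarrow"]))
# {'hammer': 2, 'scissors': 1, 'pliers': 1, 'wheelbarrow': 0}
```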

Safer Decision-Making for Physical AI Systems

Google DeepMind describes Gemini Robotics-ER 1.6 as its "safest robotics model yet," with a substantially improved capacity to adhere to physical safety constraints [1]. The model enables robots to follow safety instructions and make safer decisions when handling liquids or materials. It can also more accurately perceive the risk of injury to humans in different scenarios, such as a young child sticking something into an electrical socket.

Marco da Silva, vice president and general manager of Spot at Boston Dynamics, emphasized that "capabilities like instrument reading and more reliable task reasoning will enable Spot to see, understand, and react to real-world challenges completely autonomously" [2]. This autonomy carries both promise and risk as robots move from highly specialized assembly-line roles to free-range work in less controlled environments.

What This Means for Industrial Robotics

The practical value of AI models like Gemini Robotics-ER 1.6 will emerge as robotics companies gain hands-on testing experience. Historically, robots have proven most efficient performing repetitive tasks in controlled settings. Google is betting that advanced models can help robots operate effectively in complex real-world environments, though this also increases the risk of damage or harm if systems fail [1].

For industries relying on regular inspections across large facilities, the ability of robot dogs to autonomously read gauges and thermometers could reduce labor costs and improve monitoring consistency. The model's improvements in reasoning within constraints, such as handling prompts like "point to every object small enough to fit inside the blue cup," suggest potential applications beyond industrial inspection, including warehouse sorting and domestic assistance [2]. Watch for deployment announcements from Boston Dynamics and other robotics firms as they integrate this technology into commercial products.
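A prompt such as "point to every object small enough to fit inside the blue cup" combines a size constraint with pointing, and "identify the smallest object" is a simple relational query. The model is described as reasoning about these itself; the sketch below only shows the explicit geometry a program might apply once object sizes have been estimated. The `SceneObject` type, the centimetre dimensions and the pixel coordinates are hypothetical, and the box-in-box test is a deliberately conservative simplification that allows only 90-degree rotations.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical units: centimetres for sizes, (row, col) pixels for pointing.
Dims = Tuple[float, float, float]


@dataclass
class SceneObject:
    name: str
    dims: Dims              # estimated bounding-box size of the object
    pixel: Tuple[int, int]  # image location the robot would point to


def fits_inside(obj: Dims, cavity: Dims) -> bool:
    """Box-in-box test allowing only 90-degree rotations: compare sorted sides.
    Conservative, because tilted or diagonal placements are ignored."""
    return all(o <= c for o, c in zip(sorted(obj), sorted(cavity)))


def point_to_fitting(objects: List[SceneObject], cavity: Dims) -> List[Tuple[str, Tuple[int, int]]]:
    """Return (name, pixel) for every object small enough to fit in the cavity."""
    return [(o.name, o.pixel) for o in objects if fits_inside(o.dims, cavity)]


def smallest(objects: List[SceneObject]) -> str:
    """Relational query: the object with the smallest bounding-box volume."""
    return min(objects, key=lambda o: o.dims[0] * o.dims[1] * o.dims[2]).name


blue_cup_cavity: Dims = (7.0, 7.0, 9.0)  # hypothetical inner size of the blue cup
scene = [
    SceneObject("eraser", (5.0, 2.0, 1.0), (120, 340)),
    SceneObject("marker", (1.5, 1.5, 13.0), (200, 410)),
    SceneObject("coin", (2.4, 2.4, 0.2), (90, 150)),
    SceneObject("stapler", (4.0, 13.0, 6.0), (260, 80)),
]
print(point_to_fitting(scene, blue_cup_cavity))  # [('eraser', (120, 340)), ('coin', (90, 150))]
print(smallest(scene))                           # coin
```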

Summarized by Navi
