AI memory shortage to drive 625x demand surge by 2028 as PC hardware prices soar

11 Sources

[1]

Is AI's High Bandwidth Memory Crunch About to Hit Your Wallet?

While browsing our website a few weeks ago, I stumbled upon "How and When the Memory Chip Shortage Will End" by Senior Editor Samuel K. Moore. His analysis focuses on the current DRAM shortage caused by AI hyperscalers' ravenous appetite for memory, a major constraint on the speed at which large language models run. Moore provides a clear explanation of the shortage, particularly for high bandwidth memory (HBM). As we and the rest of the tech media have documented, AI is a resource hog. AI electricity consumption could account for up to 12 percent of all U.S. power by 2028. Generative AI queries consumed 15 terawatt-hours in 2025 and are projected to consume 347 TWh by 2030. Water consumption for cooling AI data centers is predicted to double or even quadruple by 2028 compared to 2023. But Moore's reporting shines a light on an obscure corner of the AI boom. HBM is a particular type of memory product tailor-made to serve AI processors. Makers of those processors, notably Nvidia and AMD, are demanding more and more memory for each of their chips, driven by the needs and wants of firms like Google, Microsoft, OpenAI, and Anthropic, which are underwriting an unprecedented buildout of data centers. And some of these facilities are colossal: You can read about the engineering challenges of building Meta's mind-boggling 5-gigawatt Hyperion site in Louisiana, in "What Will It Take to Build the World's Largest Data Center?" We realized that Moore's HBM story was both important and unique, and so we decided to include it in this issue, with some updates since the original published on 10 February. We paired it with a recent story by Contributing Editor Matthew S. Smith exploring how the memory-chip shortage is driving up the price of low-cost computers like the Raspberry Pi. The result is "AI Is a Memory Hog." The big question now is, When will the shortage end? 
Price pressure caused by AI hyperscaler demand on all kinds of consumer electronics is being masked by stubborn inflation combined with a perpetually shifting tariff regime, at least here in the United States. So I asked Moore what indicators he's looking for that would signal an easing of the memory shortage. "On the supply side, I'd say that if any of the big three HBM companies -- Micron, Samsung, and SK Hynix -- say that they are adjusting the schedule of the arrival of new production, that'd be an important signal," Moore told me. "On the demand side, it will be interesting to see how tech companies adapt up and down the supply chain. Data centers might steer toward hardware that sacrifices some performance for less memory. Startups developing all sorts of products might pivot toward creative redesigns that use less memory. Constraints like shortages can lead to interesting technology solutions, so I'm looking forward to covering those."

[2]

Memory will consume 30% of hyperscaler data center spending this year, a 4X increase over 2023 -- Nvidia gets preferential supply terms well below standard market rates, says analyst firm

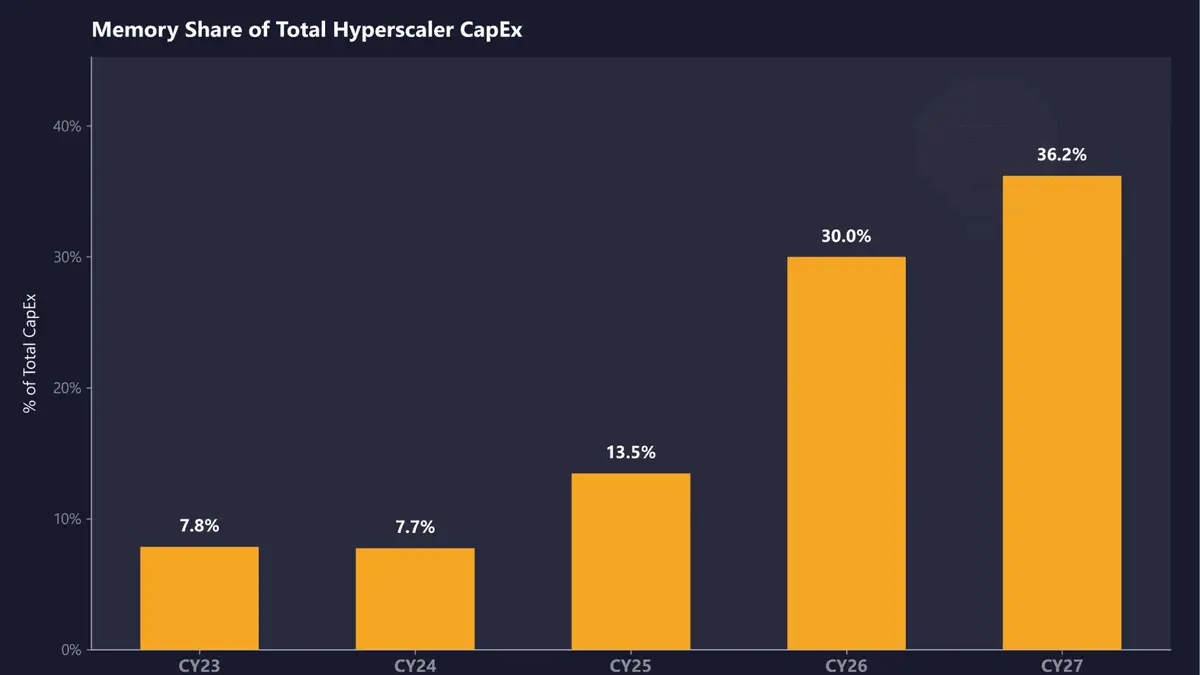

SemiAnalysis estimates that memory will account for roughly 30% of total hyperscaler capex in calendar year 2026, up from approximately 8% in CY23 and CY24. The firm projects that share will climb further in CY27, representing a near four-fold shift in just four years as DRAM prices surge beyond imagination and HBM remains massively undersupplied. SemiAnalysis expects DRAM prices to more than double in CY26, with another double-digit ASP increase in CY27. LPDDR5 contract pricing has already risen more than three times since Q1 2025, and the firm estimates open-market pricing will likely exceed $10/GB this quarter. HBM, the vertically stacked memory at the core of AI accelerators, remains undersupplied through CY27 according to SemiAnalysis's findings, with memory now constituting a massive share of the approximately $250 billion in incremental hyperscaler spend projected for this calendar year. This is already reflected in AI server pricing, with SemiAnalysis noting that B200 prices are set to rise by up to 20% by year-end, driven in large part by memory cost inflation. That aligns with the broader industry, with manufacturers having acknowledged steep component cost increases in recent earnings calls. Dell's COO, Jeff Clarke, described the rate of cost movement as "unprecedented" in its Q325 earnings call back in November. Counterpoint Research has separately projected that DDR5 64GB RDIMM modules could cost twice as much by the end of 2026 as they did in early 2025. AI servers built on Nvidia's LPDDR-based platforms are seeing some of the steepest increases because of the sheer volume of memory per system. An interesting dynamic SemiAnalysis noted is that Nvidia receives what the firm calls "VVP" (Very Very Preferred) DRAM pricing from suppliers, "well below [the rates paid by] both hyperscalers and the broader market." 
This, according to SemiAnalysis, compresses Nvidia's own server cost exposure and pushes down overall market pricing benchmarks, masking how severe the supply crunch actually is for everyone else. AMD sits on the other side of that dynamic, with its AI accelerator SKUs generally carrying higher memory content per unit, and the company doesn't benefit from the same preferential supplier pricing. That, according to SemiAnalysis, leaves AMD "structurally more exposed [to memory cost inflation] at a time when it operates at far lower AI accelerator scale." In other words, Nvidia's purchasing scale across HBM and conventional DRAM gives it leverage that smaller-volume buyers simply can't replicate. SemiAnalysis concluded that while memory inflation is already partially reflected in CY26 capex guidance from major cloud operators, CY27 repricing is not yet captured in Wall Street estimates. Samsung, SK hynix, and Micron have all diverted production capacity toward HBM and high-margin enterprise DRAM, leaving conventional DDR5 and LPDDR5 supply constrained, and new fab capacity from Micron's $9.6 billion Hiroshima HBM facility and SK hynix's Icheon and Cheongju expansions won't be delivering meaningful output until 2027 or 2028 at the earliest.

[3]

It's not just you -- MSI's confession proves it's the worst year ever for PC hardware

After a 7-year corporate stint, Tanveer found his love for writing and tech too much to resist. An MBA in Marketing and the owner of a PC building business, he writes on PC hardware, technology, and Windows. When not scouring the web for ideas, he can be found building PCs, watching anime, or playing Smash Karts on his RTX 3080 (sigh).

In case you have been living under a rock these last few months, I'd like to inform you that things are bad in the PC hardware space right now. What started six months ago with a DRAM shortage snowballed into higher-than-ever RAM and SSD prices. By the time the year was done, we saw GPU price hikes, too, as VRAM got more expensive and manufacturers stopped caring about consumer hardware. Unlike previous hardware shortages, this AI-induced crisis feels unprecedented, and all signs point to at least 2-3 more years of doom and gloom. In short, 2026 is one of the worst years for PC hardware in recent memory. And MSI confirmed as much when it called it the "most challenging year since the company was founded."

The last six months have turned the market upside down
"Winter has come for House Frey"

Let's do a brief recap of what has transpired in the PC hardware space since September 2025. After an unprecedented rise in data center demand for memory chips, the DRAM supply for consumer RAM naturally faced a shortfall. Manufacturers flocked to greener pastures, reducing or downright abandoning the consumer segment -- Micron shut down Crucial, its consumer arm, to focus on AI hardware. A 32GB kit of DDR5-6000 RAM, which used to cost around $100, shot up to over $400 over the following weeks and months as the supply shock fully revealed itself. PC builders dreaming of upgrading from DDR4 to DDR5 put their dreams on the back burner as RAM prices broke all ceilings. 
The limited wafer supply for consumer hardware also affected storage prices, as both SSDs and hard drives suffered price hikes. SSDs shot up to thrice their usual price, to the point where most 2TB consumer drives now cost $300-$350 (they used to cost around $120). Hard drives did not see as much of a price hike, but users still need to pay 30-40% more for HDDs. NAS users, home labbers, and creative professionals are the worst hit, since their storage demands are considerably more than those of gamers and regular users. Finally, graphics cards saw significant price hikes as Nvidia and AMD focused on data center products, jacked up VRAM prices, and stopped bundling memory altogether when sending GPUs to AIBs. The prices of Nvidia cards like the RTX 5070 and RTX 5070 Ti have increased by 20-25% since January this year. Meanwhile, AMD GPUs have been a mixed bag as the RX 9060 XT 16GB has jumped by up to 30%, whereas cards like the RX 9070 XT have stayed at mostly the same price since last year (albeit inflated above the $599 MSRP). DIY components weren't the only ones to feel the pinch; laptops, handhelds, and consoles received significant price hikes, too. Everyone knew this was coming, since everything from smartphones and televisions to laptops and consoles uses RAM and storage. Laptop makers decided to reduce RAM configurations in budget models and raise prices across the stack. The prices of the PS5 and PS5 Pro recently got hiked, and even PC gaming handhelds weren't spared, as the price of the top-end Lenovo Legion Go 2 model was jacked up by almost 50%.

MSI is calling it the most challenging year since the company's inception
Ouch, that definitely doesn't inspire hope

If all that has happened wasn't confirmation enough, MSI general manager Huang Jinqing, in a recent earnings call, lamented the current state of the PC hardware industry. 
He said, "This year is the most challenging year since the company was founded," (translated from Chinese). The RAM shortage and GPU supply shortfall continue to force the hands of component and device manufacturers. MSI plans to increase prices across its gaming products by 15-30% this year to cope with the pricing squeeze. When it comes to MSI laptops, the company is planning to cut back on the production of low-end models, and focus instead on mid-range and high-end variants. Selling fewer but pricier products to balance supply and revenue seems to be the strategy here. Even for DIY components like motherboards, MSI is switching things up by prioritizing DDR4 boards instead of DDR5 models. Where previously the company was producing four times as many DDR5 motherboards as DDR4 models, it will now focus heavily on the latter. DDR4 memory has also seen price hikes, but it remains the lesser of two evils for both manufacturers and consumers. DDR4 builds have suddenly become the smarter choice for PC builders in 2026, as they offer decent performance without costing as much as DDR5 systems.

RAM prices have shown a downward trend, but it's too soon to celebrate
Let's hope things continue to improve

In a welcome move for consumers, RAM prices showed a slight decline a few weeks ago. After a relentless upward spiral, which saw some 32GB DDR5-6000 kits reaching $500, the same kits have dropped to around $370. RAM prices in China dropped up to 30%, and despite it being a localized drop, the effects can be seen across the US and Europe. This correction isn't random, since a few developments have caused a bit of panic in the industry. First, OpenAI's intentions of buying 40% of all the global RAM supply haven't actually materialized, leaving a bit of extra inventory in the hands of manufacturers. 
The stocks of memory makers like Samsung, SK Hynix, and Micron tumbled by over 20%, reflecting investor panic. Second, Google's TurboQuant algorithm announcement, claiming a 6x compression in AI's working memory, suggests that Big Tech won't need as much memory as previously thought. Sure, some new announcements from AI companies could send prices soaring again, or manufacturers could slash production to keep prices high. It's too soon to declare the AI bubble popped, but at least we can celebrate the chink in the armor. Despite this silver lining, analysts predict a 10% decline in PC sales this year. MSI's prediction is a more dire 20%, as the company prepares for the "most challenging year" in its 40-year history. I'm cautiously optimistic about the near future of the PC hardware industry. I'm hoping oversupply and consumer dissatisfaction with sky-high prices will continue to chip at prices as the year goes on. More efficient AI models could also result in panic selling, further cooling RAM and storage prices. We are far from the end of this crisis, but the road to the end may finally be in sight.

The worst isn't behind us yet

MSI may have voiced what every manufacturer was already thinking. The RAM shortage and skyrocketing prices across the industry have created a colossal challenge for companies and consumers alike. 
Analysts have predicted a major decline in PC sales this year, consumers have forgotten about upgrades, and manufacturers are preparing for the worst year in ages. The recent drop in RAM prices offers a glimmer of hope, but it's too soon to say if this is the start of a pattern.

[4]

'The squeeze is real': I spoke to RAM crisis oracle, Carmen Li, about when this nightmare ends -- here's what she told me

For months, buying a PC or upgrading your RAM has felt like a losing battle against the AI industry. Prices skyrocketed, supply vanished and consumers are left footing the bill. There are many, many reasons why this is happening, with a whole lot of data behind it -- so I turned to Carmen Li, Founder and CEO of Silicon Data, to get to the bottom of it. Silicon Data has been tracking the price of GPUs and providing plenty of super accurate market intelligence around them. But as of today, the company is officially launching its RAM pricing indices too -- giving us a raw look at the economics of the compute shortage. So far, the GDDR6 price index has jumped around 21% in the last month alone. And as we're in the midst of a potential crack in the AI boom that triggered this hardware squeeze in the first place, I got the chance to speak to Li about how we got here, how a closer look at the pricing of AI infrastructure build-out can help us read the tea leaves of where things are going, and how we may be slowly (but surely) getting out of this mess.

The HBM ripple effect

It all seems to have started when AI companies started getting super hungry for High Bandwidth Memory (HBM) to feed this beast they were creating. OpenAI set itself up to consume up to 40% of the global DRAM output, and in turn, memory companies like SK Hynix, Micron and Samsung pivoted to where the lion's share of the money was. "Everyone has shortages, obviously HBMs had the highest margin, so I don't blame them," Li commented. "So they start saying 'hey I want to maximize my profit for my shareholders, let's switch a lot of production capacity to HBMs.'" And looking closer at the machinations of this huge AI driver (particularly around Nvidia's GPUs that are fueling this datacenter boom), the B200 rental prices surged by 24% in March alone. 
All this is happening while the older H100 GPU has stabilized in price, but with a roughly 3x spread, as the hyperscalers (the giants like AWS and Microsoft Azure) keep costs lower, while the Neoclouds (specialist operations like CoreWeave) charge a premium. This price hike to companies in the space was driven by four key things:

* The price of HBM being revised upwards by Samsung and SK Hynix
* Nvidia's GTC building hype and generating a new wave of enterprise commitments
* New major frontier model launches like Claude Opus 4.6 and GPT 5.4
* The exponential growth in token generation needed for agentic AI (the biggest talking point of GTC)

This created a huge shift away from demand for batch training to consumers looking for persistent, low-latency compute in their AI bots. And in the companies' mission to do this, we ended up paying quite a penalty for it.

The victims of the RAM crisis

It's a big old corporate pass-down, folks! But you knew that already... Companies like Asus had to raise computing gear prices by 25-30% to cope with the doubled cost of RAM -- most recently hiking the price of the new Zephyrus G14 and G16 to absurd levels. But Carmen issued a more stark warning about budget tech. While chances are the premiums on more expensive gear may help certain pricier options weather the storm (given they're costly), the lower end of the market could very well be devastated. Not in the way that lower-end tech will be priced out of reach, but rather that companies may just stop making it altogether. "With the price increasing so much, the manufacturer can't really pass down the cost increase, because consumers are very, very price sensitive," Li explained. "[Companies] might have to just decrease production or eliminate certain products." Larger companies like Apple have been able to withstand this in the value space with the likes of the MacBook Neo, but others may not be so lucky. 
The cracks in the crisis (why prices are dropping)

However, there's been a twist in this story. Recently, we've seen a sudden 20% drop in some DDR5 retail prices. As Silicon Data has seen, server-grade DDR5 RAM has increased by 4.5%, while consumer chips fell by 7.8%. There's a divergence happening here. On top of that, we're seeing the cost of some consumer GPUs drop too -- with the MSI Ventus RTX 5060 Ti 16GB climbing back down from its $699 high in the midst of this crisis to $514. Don't get me wrong. This is nowhere near the MSRP, but it's the right direction of travel. There are several factors all coming together that impact this, which I've talked about before:

* Improved efficiency: Google's TurboQuant has shown AI companies can do more with less -- compressing AI's working memory by 6x and making it 8x faster at the same time and drastically easing the pressure on global DRAM supplies.
* OpenAI's backing out: Those massive non-binding orders for global RAM output were quietly dialed back.
* The Tariff refund and exemption: The U.S. Court of International Trade ruled that $130 billion in tariff refunds must be paid to companies like Dell and Asus. On top of that, a new loophole has been added for consumer computing goods under Section 232 that exempts "Non-Data Center Consumer Applications" (like gaming PCs) from the 25% AI hardware tax.

But there's one even more important factor to consider here.

The cavalry arrives (new supply chains)

Fun fact: I did this interview with Carmen Li a couple weeks ago, and in those couple of weeks, her most critical prediction came true. She said that relief would come from a newly certified Chinese foundry for making consumer RAM. "Obviously they will not do HBMs. They will probably do a lower end memory more for consumers, so I think that could potentially get better once they ramp up production," Li stated. 
This corroborates our original reporting that major PC manufacturers like Acer have been testing memory packages from Chinese companies like CXMT and YMTC for the past couple of months. Reaching out to other experts in the area, I've found that certification is close. Of course, as I've mentioned before, this could lead to a bit of a quality and reliability lottery depending on where the RAM has come from. But specialization is going to be the long-term key for bringing consumer computing prices down -- frontier labs (the big three) making HBM, while alternative fabs make the consumer DDR.

The verdict on your next PC

"Memory always goes through these cycles," Li added. "However, this cycle is a little different where it's driven by many other factors." This lines up nicely with what Scan UK's CEO told me, and it's a good expectation to set on the big question: when will things go back to normal? One thing we all agree on is that the recovery will not be quick. "Right away, there's no sort of expectation [of consumer prices] to come down in the near future. That's the problem," Li commented. But going back to what I've been telling you, dear reader, for a while now: hold until August 2026. By that point, we'll see whether these upcoming events will make for a pivotal moment of change for the price of computing. Will the Chinese memory supply enter the retail stream? Will the tariff refunds trickle down to consumers? It's going to be an interesting summer for sure.

[5]

Dell's CEO reckons that the total memory demand from the entire AI market in 2028 will be 625x bigger than it was in 2022

Who needs DRAM in their PC anyway? Mine works on wishes and positive thoughts.

Today is a Wednesday. I mention that because when it comes to news about the global memory market, you might just think that every day is now a Woesday instead. Enter Dell's CEO into the fray with an insight as to how things are going to fare over the next few years, and it's going to be worse: 625 times worse. That's according to IT Home and Jukan on X, which claim that at a Bank of America event, Michael Dell said that "As memory per accelerator and system scale expand simultaneously in AI infrastructure, a structure is forming where total memory demand increases by approximately 625 times" (machine translation). Dell arrives at this figure by noting that the most popular AI accelerator in 2022, Nvidia's H100, sported 80 GB of HBM3 (High Bandwidth Memory), but that this figure is estimated to rise to 2 TB by 2028: a fraction over 25 times more DRAM. He then apparently said that the rate at which AI accelerators are implemented in data centers will increase by a factor of 25 over the same period. Multiply the two increases together, and you arrive at the claimed 625. However, Dell is almost certainly using the maximum memory that Nvidia's Vera Rubin superduper chip can support, though most single racks will 'only' be rocking 576 GB of HBM4. That's 7.2 times more memory than a single H100 accelerator, and if we assume Dell is correct about the other growth rate, then the total memory demand will climb by a factor of 180. Is that good news? Hardly. There are only three companies in the whole world that manufacture HBM4 -- SK hynix, Samsung, and Micron -- and while other companies are trying to catch up with HBM3 offerings, none of them can keep up with current memory demands, let alone how things are going to be in a couple of years. 
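The multiplication behind both figures is simple enough to check directly. The sketch below is only an illustration of the arithmetic as reported; the function and variable names are made up for this example, and it assumes "2 TB" means 2,000 GB (reading it as 2,048 GB would give a per-accelerator factor of 25.6, which matches "a fraction over 25").

```python
# Illustrative check of the reported arithmetic; names are hypothetical.
H100_MEMORY_GB = 80            # HBM3 on a 2022 Nvidia H100, as reported
MAX_2028_MEMORY_GB = 2000      # "2 TB" by 2028, assumed here to mean 2,000 GB
TYPICAL_2028_MEMORY_GB = 576   # HBM4 figure the article treats as more typical
DEPLOYMENT_GROWTH = 25         # claimed growth in accelerator deployment


def total_demand_multiplier(memory_now_gb, memory_then_gb, deployment_growth):
    """Growth in total memory demand = per-accelerator growth x deployment growth."""
    per_accelerator_growth = memory_then_gb / memory_now_gb
    return per_accelerator_growth * deployment_growth


dell_estimate = total_demand_multiplier(
    H100_MEMORY_GB, MAX_2028_MEMORY_GB, DEPLOYMENT_GROWTH
)
tempered_estimate = total_demand_multiplier(
    H100_MEMORY_GB, TYPICAL_2028_MEMORY_GB, DEPLOYMENT_GROWTH
)

print(f"Dell's figure:     {dell_estimate:.0f}x")      # 25 * 25
print(f"Tempered estimate: {tempered_estimate:.0f}x")  # 7.2 * 25
```

Both results depend entirely on the claimed 25x growth in deployment, which is the part of the estimate that cannot be checked from the figures given.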
By 2028, the big three memory makers are expected to have more production facilities in operation, but whatever they're able to get up and running, it surely won't be enough to cope with a DRAM demand that's going to be many times larger than it already is. And it's not just HBM that's going to be in crushingly short supply; LPDDR5X (memory used in laptops and handhelds) and NAND flash storage will be too. A single rack/compute tray in an Nvidia GB200 NVL72 AI server, for example, requires 480 GB of LPDDR5X, and a full rack tower has up to 144 E1.S slots (server equivalent of M.2), each home to multiple terabytes of fast SSDs. A fully kitted-out NVL72 tower could have as much as 17 TB of DRAM and 547 TB of flash storage. That's just one tower, and big AI data centers use hundreds, if not thousands, of them. If we're lucky, the growth in DRAM and flash manufacturing will be able to maintain the memory status quo (i.e. it's all outrageously expensive but still 'affordable' compared to how much a top-end graphics card costs), and should Dell's prediction come to pass, we'll still be in with a chance of enjoying one of the best hobbies around. Perhaps it's best not to consider what will happen if the demand-supply ratio for memory gets considerably worse.

[6]

Samsung pushes DDR5 and HBM prices higher (30%) as AI demand persists

Samsung is reportedly increasing DRAM pricing once again, with new reports indicating an average 30 percent rise for the second quarter of 2026 across both DDR5 and HBM memory products. That follows a strong first quarter in which memory prices had already moved substantially higher compared to the same period a year ago. The latest adjustment is notable because it is not limited to one niche category. It affects commodity DDR5 used in mainstream PCs, notebooks, and servers, while also covering high-bandwidth memory deployed in AI accelerators and data center hardware. The timing is interesting because the memory market had only recently shown signs of a brief cooldown. DDR5 pricing softened for a short period, and some semiconductor shares also came under pressure. Part of that reaction appears to have been driven by speculation around Google's recently introduced TurboQuant technology. Google describes TurboQuant as a compression algorithm designed to reduce memory overhead in vector quantization workloads, which naturally triggered discussion around whether future AI systems could become less memory intensive. That said, the market reality in 2026 still looks heavily demand-driven. AI infrastructure remains one of the biggest consumers of advanced memory, especially in data centers deploying large training and inference clusters. HBM is a critical component in that stack, while server DDR5 demand continues to benefit from hyperscale expansion. In that context, a software-side efficiency improvement does not immediately translate into lower near-term orders for memory suppliers. Samsung's reported pricing move suggests the company still sees enough demand strength to push through higher contract terms without materially changing its outlook. For the broader hardware market, that is a relevant development. Rising DRAM prices can affect BOM costs across a wide range of products, from consumer desktops and gaming notebooks to enterprise servers and AI appliances. 
Memory had briefly looked like a category that might stabilize after the earlier surge, but the new pricing signals point in the opposite direction. If contract prices remain firm through the quarter, OEMs and system builders may face another round of cost pressure, particularly in configurations that already depend on premium-capacity or high-speed memory. The short version is that the recent DDR5 dip appears to have been more about market sentiment than a true shift in supply-demand balance. Google's TurboQuant may have influenced expectations, and it could become an important part of long-term AI efficiency work, but it has not yet changed the immediate procurement picture. Samsung's latest move makes that clear enough: despite a short-lived pause, the company is still pricing memory as if demand remains elevated, especially anywhere AI infrastructure is involved.

[7]

Microsoft and Google seek long term DRAM deals with SK Hynix

Microsoft and Google are negotiating three-year DRAM supply agreements with SK Hynix that include price floor guarantees and upfront deposits ranging from 10 to 30 percent of the total contract value. These agreements mark a significant change in the procurement strategy of major technology companies as they seek to treat DRAM as a strategic reserve in light of a global shortage exacerbated by rising AI infrastructure spending. SK Hynix and Microsoft are finalizing a long-term supply contract for DDR5 memory beginning in 2026, valued at tens of trillions of Korean won. Key provisions being discussed include a price floor to protect against declining DRAM prices and requirements for prepayments. In parallel, SK Hynix is also negotiating contracts with Google for high-bandwidth memory and general server DRAM. Samsung Electronics is reportedly seeking similar deals and is said to be in discussions with both Microsoft and Google, involving potential prepayments exceeding $10 billion from Microsoft. Micron Technology has already made strides in this direction, signing its first five-year Strategic Customer Agreement, which it disclosed during its fiscal second-quarter 2026 earnings call. This shift to long-term contracts contrasts with previous practices where major manufacturers like Samsung and SK Hynix sought to capitalize on rising prices by rejecting multi-year arrangements. The persistent shortage of DRAM has shifted the market dynamics, with prices having surged by 90 to 95 percent quarter-over-quarter in Q1 2026 and another 30 percent increase already secured for Q2. According to industry analysts, the escalating demand for AI infrastructure, projected to reach approximately $650 billion in spending for 2026 -- an 80 percent increase from the previous year -- is a key driver of this pricing trend. Memory manufacturers are increasingly focusing on high-margin AI products, leaving conventional DRAM in structurally undersupplied conditions. 
New fabrication capacity is not anticipated to become available until late 2027, with experts warning of a persistent global chip wafer shortage that may last through 2030. This transition from quarterly procurement to multi-year agreements may negatively impact smaller customers, who could experience longer lead times and higher costs as larger buyers secure supply through substantial upfront payments.
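To see what the two reported quarterly increases mean when stacked, a rough compounding sketch helps. It assumes, which the report does not state outright, that the Q2 increase of 30 percent applies on top of the same price base that rose 90 to 95 percent in Q1; the function name is hypothetical.

```python
# Rough compounding sketch; assumes both increases apply to the same price base.
def compound(increases_pct):
    """Return the cumulative price multiplier for successive percentage increases."""
    multiplier = 1.0
    for pct in increases_pct:
        multiplier *= 1 + pct / 100
    return multiplier


low = compound([90, 30])   # Q1 at the low end of the reported range, then Q2
high = compound([95, 30])  # Q1 at the high end of the reported range, then Q2

print(f"Cumulative multiplier: {low:.2f}x to {high:.2f}x")
```

Under that assumption, a contract price would sit at roughly two and a half times its level at the end of 2025 by the end of Q2 2026.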

[8]

Dell Says AI Demand For RAM Will Increase Dramatically In Future

If you were hoping for cheaper consoles and RAM sticks later this year, I got some terrible news

Folks hoping that the ongoing RAM-ageddon would wind down in the near future will likely be upset to hear that the CEO of a major PC company believes it's only going to get way, way worse. The head of Dell recently predicted that AI companies and datacenters will actually cannibalize even more of the RAM market in the future. Like a lot, lot more. As reported by Electronic Times, via PC Gamer, Dell Technologies CEO Michael Dell, speaking at a recent Bank of America-hosted event, explained the math behind the company's prediction that the demand for memory will increase 625x in the near future. "As both per-accelerator memory capacity and system scale expand simultaneously in AI infrastructure, total memory demand is forming a structure where it increases roughly 625x," Dell said. "Expanding memory supply takes years, but current AI infrastructure demand is showing no signs of slowing. We are still in the early stages of technology adoption." As pointed out by PC Gamer, Dell and his company are likely using numbers based on the most memory-intensive Nvidia hardware out there, despite many server rack designs using less demanding tech. So if you crunch those numbers alongside Dell's growth predictions for data centers, you end up with memory demand around 180 times greater than now. That is a smaller number, sure, but considering how bad things are already as datacenters and AI companies eat up nearly all the memory and storage out there, the idea of that demand increasing at all is scary. Folks looking to buy cheaper consoles, computers, or gaming handhelds might want to find the best deal now, buy it, and be very careful with it so it doesn't break anytime soon. The ongoing RAM crisis has made it harder and harder for companies to secure the parts needed to build game consoles, computers, and other tech devices. 
One PC parts maker called 2026 the "most challenging year" in its history due to the shortage. Meanwhile, Valve is struggling to get what it needs to build its upcoming Steam Machine, and Steam Decks are harder to buy these days. And console makers are increasing prices on consoles that launched back in 2020. Even the family-friendly Nex Playground isn't safe from the RAM crisis. This is all largely thanks to AI hyperscalers and tech giants gobbling up PC parts to build datacenters. It's become quite pricey and challenging for your average person to buy PC RAM, graphics cards, or even an SSD for a console. Even the prices on prebuilt PCs from companies like HP, Dell, and Asus will increase by 15 to 20 percent, according to PC World. And it seems like it won't get better. At least all those nervous RAM resellers will be happy.

[9]

Dell CEO Says AI Memory Demand Will Explode To 'Unimaginable Levels' By 2028, Leaving No Option For Buyers Other Than Paying Whatever's Demanded

Dell's CEO, Michael Dell, discussed his estimates of the AI memory supercycle at a recent event, claiming that the explosive demand will persist for several years. We have been tracking the memory supply chain for quite some time now, noting that the supply-demand gap has widened in the past few quarters; however, questions remain about how long such conditions will last in the memory industry. Following the recent TurboQuant fiasco and the wider selloff in memory companies, there was a perception that the industry would now see a decline in demand. Dell's CEO, however, expects the supercycle to persist up to 2028 and, more importantly, expects the need for DRAM to rise significantly from where it is today. Michael Dell put it this way: "As memory per accelerator and system scale expand simultaneously in AI infrastructure, a structure is forming where total memory demand increases by approximately 625 times. While it takes years to expand memory supply, current demand for AI infrastructure is not slowing down." There is a rather interesting metric discussed here: the memory demand for a particular AI accelerator and how it is growing steadily. When you look at the transition from Ampere to Vera Rubin, you'll realize that the need for memory has expanded significantly, and it isn't just limited to HBM advancements. NVIDIA and other manufacturers have introduced memory technologies like SOCAMM to support specific parts of AI workloads, which means that DRAM requirements have climbed exponentially when you narrow them down to a per-accelerator scope. Dell estimates that memory demand could grow up to 625 times by 2028, claiming that a single accelerator would see a 25x increase in memory capacity and that accelerator deployment will grow by 25x as well. Of course, these estimates aren't backed by factual data for now, but this is what we are here for.
Here's a comparison of raw memory capacity, scaling from Hopper all the way to Vera Rubin: Raw capacity increase probably isn't the only metric for judging demand growth, but it is a solid indicator of the 'macro' perspective on how the memory industry is growing. The requirements for memory are increasing with each chip generation, and they will be much higher as we move towards the world of inference. A huge portion of DRAM orders is driven by hyperscalers and their CXL memory pools, which ultimately means that demand prospects are strong enough right now that it would probably be wrong to say we are seeing a decline. Another important factor to consider is that suppliers are now entering into agreements with hyperscalers that span up to 5 years, and so far, the reception has been tremendous for such arrangements. This is also an indication that buyers are willing to spend 'anything' to secure memory supply and, for suppliers, that demand will remain persistent. It wouldn't be wrong to say we should expect shortages to last for a few years, at least through H2 2027, since that's when new capacity comes online.

[10]

Hyperscalers Are 'Scratching Their Heads' with Rising Memory Costs, But NVIDIA Might Be the Only One Smiling

The rising cost of memory has started to bite into the CapEx of major hyperscalers and their infrastructure buildouts, but NVIDIA enjoys an exclusive position in the supply chain. DRAM shortages have disrupted broader supply chains, affecting segments like AI and consumer markets, but, interestingly, hyperscalers have few options other than buying DRAM at outrageous prices, either on spot or contract terms. Based on information from SemiAnalysis and supply chain reports, memory prices have started to influence hyperscaler investments to a much greater extent, to the point that they now account for up to 30% of total spend, which is a shocking figure to say the least. Memory inflation is a serious concern for AI giants right now, yet there are no signs that spending is being affected. SemiAnalysis says that memory spending could rise significantly higher in CY2027 as well, which is an indirect indication that the ongoing DRAM shortages are here to stay. For hyperscalers in particular, the need for memory arises from memory pools connected via CXL switches to work alongside the rack-scale infrastructure. Technologies like DDR5 and LPDDR5 have seen massive adoption by hyperscalers in recent times, which is why shortages have been much more brutal for markets dependent on general-purpose DRAM. Another area where memory is important for hyperscalers is their custom silicon and rack efforts. In a follow-up post, SemiAnalysis also notes an interesting aspect of the memory shortage, claiming that NVIDIA received a VVP (Very Very Preferred) DRAM customer status within the supply chain, which gives the company both capacity and pricing leverage that allows it to beat the competition, at least in getting the best memory deal.
This is also in line with Jensen's past comments about memory shortages, in which he claimed that NVIDIA isn't affected at all because the firm saw the aggressive demand coming well ahead of others, which is why it entered into extensive supply contracts. We have talked about how NVIDIA's close relations with supply chain partners have helped the company gain the edge in the modern-day infrastructure buildout, and this isn't just limited to DRAM; this superior position extends to other key areas such as semiconductors, advanced packaging, and other elements within the broader AI supply chain. High-end infrastructure is one part of the story; being unfazed by the supply crunch is another, and it is one of the reasons competing with NVIDIA requires far more than the general perception suggests.

[11]

AI driven memory demand will surge by 625 times in next two years, says Dell CEO Michael Dell

Tech companies are expected to keep investing despite costs, as AI infrastructure becomes essential for future growth. Rising memory chip prices are beginning to impact everyday technology, including smartphones, laptops, and servers. The situation may worsen as demand driven by AI growth continues to increase. Higher demand has already pushed prices to uncomfortable levels for both companies and consumers. Dell CEO Michael Dell recently warned that the demand for chipsets could become so strong that companies may be forced to pay whatever manufacturers ask. Experts have long predicted that the chip shortage is not temporary but a lasting issue. The recent Dell warning highlights the growing pressure on the global technology supply chain and adds fuel to the fire. Speaking at the recent Bank of America event, Dell outlined how the demand for memory could grow at an extraordinary pace. He estimated that the total memory requirements for AI infrastructure might increase by as much as 625 times the current demand by the end of 2028. According to him, two parallel developments are driving this explosion in demand. The first is that the memory content per AI accelerator will increase dramatically, from around 80 GB in 2022 to nearly 2 TB over the next couple of years. The second is that the number of these accelerators will increase dramatically. As a result of these factors, explosive growth in the demand for DRAM and other comparable technology is expected. However, increasing the manufacturing capacity of these components does not happen overnight. It takes time to construct plants and ramp up production. In the meantime, the supply of memory could struggle to meet the demand in AI data centres.
Despite the rising costs, Dell believes that companies will continue to invest in the latest chipset technology. He argued that businesses cannot afford to rely on outdated or inefficient systems, especially when employee productivity is at stake. For organisations, the decision is less about whether to upgrade and more about when to do it. He added that the industry is also adapting, as some of the major manufacturers are shifting their focus toward supplying data centres instead of consumer markets, signalling a broader change in priorities as AI continues to expand.

Dell's CEO predicts total memory demand from AI will increase 625 times between 2022 and 2028, driven by expanding AI accelerator memory requirements and massive data center buildouts. The High Bandwidth Memory shortage is already forcing DRAM price increases exceeding 100%, with memory consuming 30% of hyperscaler spending in 2026—up from just 8% in 2023.

AI Accelerator Memory Demand Reshapes Global Supply Chain

The AI memory shortage has reached a critical inflection point, with Dell CEO Michael Dell projecting that total memory demand from AI will surge 625 times between 2022 and 2028 [5]. This staggering forecast stems from two parallel trends: Nvidia's flagship AI accelerators expanding from 80 GB of High Bandwidth Memory in the H100 to an estimated 2 TB by 2028, while data center deployment rates multiply by approximately 25 times over the same period. The calculation reveals an unprecedented shift in how memory resources are allocated across the technology sector, with hyperscaler demand fundamentally altering production priorities at the world's three major HBM manufacturers: SK Hynix, Samsung, and Micron [5].
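
The 625x figure is the product of the two growth factors cited above. A minimal Python sketch of that arithmetic follows, assuming decimal units (2 TB treated as 2,000 GB) and taking the roughly 25x deployment multiple at face value. The function and variable names are illustrative only and do not come from Dell.

```python
def memory_demand_multiple(gb_start, gb_end, deployment_multiple):
    """Total demand multiple = per-accelerator capacity growth x deployment growth."""
    capacity_multiple = gb_end / gb_start
    return capacity_multiple * deployment_multiple

# Figures quoted in the article: 80 GB (H100) to about 2 TB, deployments up about 25x.
# Assumption: 2 TB is treated as 2,000 GB (decimal units).
capacity_multiple = 2000 / 80
total_multiple = memory_demand_multiple(80, 2000, 25)
print(capacity_multiple, total_multiple)  # 25.0 625.0
```

With binary units (2 TB as 2,048 GB) the same calculation gives 25.6x per accelerator and 640x overall, so the headline number depends on rounding.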

Source: Wccftech

Memory Cost Inflation Consumes 30% of Hyperscaler Spending

SemiAnalysis estimates that memory will account for roughly 30% of total hyperscaler capital expenditure in calendar year 2026, representing a dramatic escalation from approximately 8% in both 2023 and 2024 [2]. This near four-fold shift reflects how High Bandwidth Memory remains heavily undersupplied through 2027, with memory making up a large share of the approximately $250 billion in incremental hyperscaler spend projected for this calendar year. The AI-induced supply crunch has created what Dell COO Jeff Clarke, speaking on the company's Q3 2025 earnings call, described as "unprecedented" cost movement [2]. DRAM prices are expected to more than double in 2026, with another double-digit increase projected for 2027, while LPDDR5 contract pricing has already more than tripled since Q1 2025 [2].
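
The "near four-fold" description can be checked directly from the two share estimates above. The short Python sketch below uses only those two percentages; the variable names are illustrative.

```python
# Memory as a percentage of hyperscaler capital expenditure (figures quoted above).
share_2023_pct = 8    # approximate share in 2023 and 2024
share_2026_pct = 30   # SemiAnalysis estimate for calendar year 2026

share_multiple = share_2026_pct / share_2023_pct
print(f"{share_multiple:.2f}x")  # 3.75x
```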

Source: Tom's Hardware

PC Hardware Price Hikes Spread Across Consumer Electronics

The RAM crisis has triggered widespread PC hardware price hikes, with MSI general manager Huang Jinqing calling 2026 "the most challenging year since the company was founded" [3]. MSI plans to increase prices across gaming products by 15-30% this year, while prioritizing DDR4 motherboard production over DDR5 models, a complete reversal from its previous strategy of producing four times as many DDR5 boards [3]. Consumer electronics prices have surged across categories: a 32GB kit of DDR5-6000 RAM that cost around $100 now exceeds $400, while 2TB consumer SSDs jumped from $120 to $300-$350 [3]. Graphics cards from Nvidia have seen 20-25% price increases for models like the RTX 5070 and RTX 5070 Ti, while AMD's RX 9060 XT 16GB jumped by up to 30% [3].
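
To put the RAM and SSD figures on the same footing as the percentage increases quoted for graphics cards, the before-and-after prices can be converted to multiples and percentage changes. This is a rough Python sketch: it treats $400 as the new RAM price even though the text says prices exceed that, and the helper name `price_change` is hypothetical.

```python
def price_change(old, new):
    """Return (multiple, percent increase) between an old and a new price."""
    multiple = new / old
    return multiple, (multiple - 1) * 100

# Prices in USD as quoted above; $400 is a lower bound for the RAM kit.
examples = {
    "32GB DDR5-6000 kit": (100, 400),
    "2TB SSD (low end)": (120, 300),
    "2TB SSD (high end)": (120, 350),
}
for name, (old, new) in examples.items():
    multiple, pct = price_change(old, new)
    print(f"{name}: {multiple:.2f}x, +{pct:.0f}%")
```

On these numbers the RAM kit costs at least four times what it did, and 2TB SSDs cost roughly 2.5 to 2.9 times as much, far steeper than the 20-30% rises quoted for graphics cards.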

Source: XDA-Developers

Nvidia Secures Preferential Supply Terms While AMD Faces Exposure

SemiAnalysis revealed that Nvidia receives "Very Very Preferred" DRAM pricing from suppliers, well below rates paid by both hyperscalers and the broader market [2]. This preferential treatment compresses Nvidia's server cost exposure and pushes down overall market pricing benchmarks, masking the severity of the supply crunch for other buyers. AMD sits on the opposite side of this dynamic, with its AI accelerator SKUs generally carrying higher memory content per unit while lacking the same supplier leverage. This leaves AMD "structurally more exposed at a time when it operates at far lower AI accelerator scale" [2]. B200 prices are set to rise by up to 20% by year-end, driven largely by memory cost inflation [2].

Data Centers Drive HBM Production Shift

The hyperscaler buildout has fundamentally reshaped manufacturing priorities, with firms like Google, Microsoft, OpenAI, and Anthropic underwriting an unprecedented expansion of data centers [1]. Meta's 5-gigawatt Hyperion site in Louisiana exemplifies the scale of these facilities, while a fully equipped Nvidia GB200 NVL72 AI server tower can house as much as 17 TB of DRAM and 547 TB of NAND flash storage [5]. Samsung, SK Hynix, and Micron have all diverted production capacity toward HBM and high-margin enterprise DRAM, leaving conventional DDR5 and LPDDR5 supply constrained [2]. New fab capacity from Micron's $9.6 billion Hiroshima HBM facility and SK Hynix's Icheon and Cheongju expansions won't deliver meaningful output until 2027 or 2028 at the earliest [2].

Signs of Relief Emerge as Market Dynamics Shift

Recent data from Silicon Data shows a divergence emerging in memory markets, with server-grade DDR5 RAM increasing by 4.5% while consumer chips fell by 7.8% [4]. Carmen Li, founder and CEO of Silicon Data, noted that while GDDR6 prices jumped around 21% last month alone, some consumer DDR5 retail prices have dropped 20% recently [4]. This shift reflects improved efficiency in AI operations, with technologies like Google's TurboQuant compressing AI's working memory by 6x while making it 8x faster, drastically easing pressure on global DRAM supplies [4]. Additionally, tariffs and trade policy changes, including a U.S. Court of International Trade ruling requiring $130 billion in tariff refunds to companies like Dell and Asus, may provide some pricing relief [4]. Industry observers are watching for signals from the big three HBM companies about production schedule adjustments, while data centers may steer toward hardware that sacrifices some performance for less memory [1].

References

[3]

[4]
