Samsung warns memory shortage will intensify through 2027 as AI demand outpaces chip supply

12 Sources

[1]

Samsung Chip Profits Soar Amid the Tech World's RAM Shortages

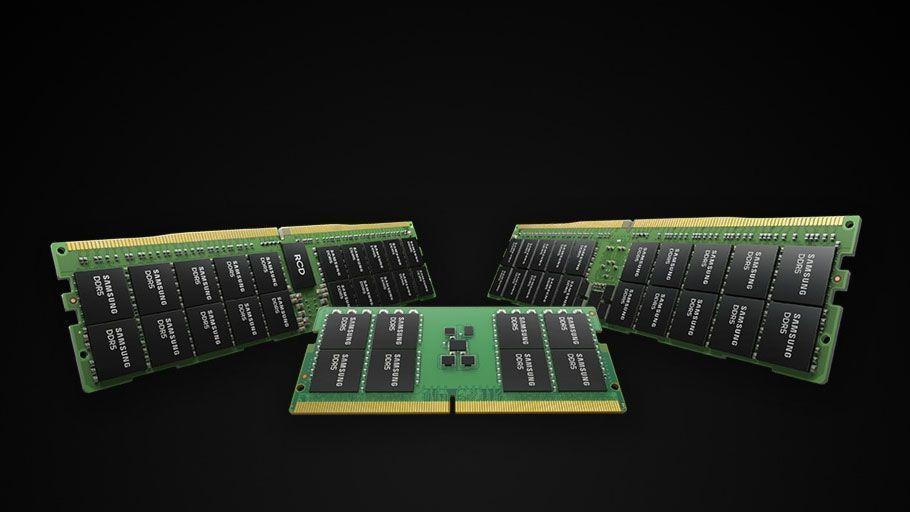

Demand for increasing AI compute is keeping chip supply short for the foreseeable future, and Samsung is coming out ahead. The world's largest semiconductor maker is reaping the rewards of a global memory chip shortage driven by AI demand so rapacious that it's making everyone else miserable. Samsung said in its latest earnings report that it saw record profits, up almost 500% from the same quarter last year, at $31.72 billion. The figure was boosted by a nearly 50-fold jump in operating profit for its chip business. The company has sold out all of its memory production capacity for the rest of this year and says that shortages, which are driving up prices of everything from laptops to smartphones to external storage devices to gaming consoles, are going to get worse in 2027. In an investor call for its earnings report, one of the company's memory chip executives, Kim Jaejune, said, "Based solely on the demand currently received for 2027, the supply-to-demand gap for 2027 is set to widen even further than in 2026." Memory prices began increasing significantly last year as a boom in data centers supporting AI companies gobbled up vast supplies of memory used to power the hardware that processes all their data. That, in turn, led to global shortages and price spikes that are still being felt and that, according to Samsung, won't ease up anytime soon. Samsung is trying to keep up with demand from companies including Nvidia, even as it competes with some of those same companies, which also design their own semiconductors. Apple, Microsoft and Alphabet design some of their own chips, but they're also customers of Samsung and can't produce the memory their growing needs require themselves.

[2]

Samsung says the RAM shortage could get even worse next year

There may be a long wait before the end of the RAM shortage that's driving up prices on everything from phones to gaming handhelds. During an earnings call on Thursday, Samsung predicted that the severe memory shortage, driven by demand from AI data centers, will not only continue next year, but likely get worse, as reported by Reuters. As Samsung memory chip business executive Kim Jaejune stated during the earnings call: "Our supply falls far short of customer demand. Based solely on the demand currently received for 2027, the supply-to-demand gap for 2027 is set to widen even further than in 2026." Samsung's prediction follows reports earlier this month that the world's biggest RAM manufacturers might not be able to catch up with demand until 2030. Shortages of Samsung's chips could get even worse if it can't come to an agreement with its labor union, which is planning an 18-day strike starting May 21st.

[3]

Samsung and SK hynix warn AI-driven memory shortages could last until 2027 and beyond, as HBM demand explodes -- customers already reserving supply years ahead, while the wider DRAM market begins to tighten

Supply may never match exploding demand, despite massive investments in supply infrastructure

In Samsung's full earnings report released on April 30, 2026, the company's memory chief Kim Jaejune warned that "significant shortages" across memory products are expected to continue through at least 2027. According to the company, demand fulfillment rates have fallen to record lows as customers rush to secure future supply, SCMP reports. The warning closely mirrors comments made by "rival" SK hynix during its earnings call just a week earlier. Together with US-based Micron Technology, Samsung and SK hynix control well over 90% of the global DRAM market. When two of the world's three biggest memory suppliers simultaneously warn of multi-year shortages, there is good reason to worry. The shortages are being driven largely by the need for artificial intelligence infrastructure. Modern AI systems require enormous amounts of high-speed memory to continuously feed data to GPUs and accelerators. At the center of this demand surge is HBM (high-bandwidth memory), a vertically stacked form of DRAM designed to deliver extremely high bandwidth while remaining physically close to processors. HBM has become critical for AI accelerators. However, the technology is difficult and expensive to manufacture, requiring advanced die stacking, precision bonding, and sophisticated packaging techniques. As a result, supply is limited, and demand is outpacing manufacturers' ability to build capacity. While the shortage is driven primarily by HBM demand, its effects are beginning to spill over into the broader memory market. Because HBM itself is a form of DRAM, manufacturers are increasingly reallocating manufacturing capacity, engineering resources, and investment toward high-margin AI memory products. That shift risks tightening supply for more conventional DRAM products used in servers, PCs, and mobile devices.
Enterprise SSD demand is also rising as AI data centers require massive storage infrastructure alongside compute hardware. Ironically, the industry is simultaneously searching for alternatives because current memory architectures consume enormous amounts of power. We recently reported on efforts to develop next-generation memory technologies such as 3D X-DRAM and ZAM (Z-Angle Memory), which aim to reduce power consumption and ease scaling limitations. Yet despite massive investment in future alternatives, demand for existing memory technologies remains overwhelming. Samsung reportedly stated that some customers have already secured supply allocations through 2027. Earlier this year, SK Group chairman Chey Tae-won suggested that AI-related memory demand pressure may persist even toward 2030. The shortages are not necessarily bad news for the companies themselves. Samsung's semiconductor division posted 53.7 trillion won ($36.1 billion) in operating profit during the first quarter of 2026, accounting for roughly 94% of the company's total quarterly profit as soaring AI memory demand drove record sales. Meanwhile, SK hynix reported record quarterly revenue of 52.6 trillion won ($35.5 billion), and operating profit of 37.6 trillion won ($27.8 billion), fueled largely by booming HBM sales for AI infrastructure. Part of the problem is cyclical. The memory industry has historically swung between oversupply and shortages. However, analysts increasingly believe this cycle is different, as growth in AI infrastructure is consuming hardware at unprecedented rates. To address the crisis, the companies are aggressively expanding production capacity and increasing investment in advanced packaging and memory fabrication. According to the Korea Times, recent regulatory filings show that Samsung Electronics invested 465.4 billion won in its Xi'an memory chip plant in 2025, a 67.5% year-over-year increase.
SK hynix also significantly increased spending, investing 581.1 billion won into its Wuxi facilities and 440.6 billion won into its Dalian operations. However, semiconductor fabrication plants and advanced memory packaging facilities take years to expand and ramp up, meaning supply growth cannot keep pace with AI-driven demand. The memory crunch is joining a growing list of resource shortages emerging from the AI explosion. GPU shortages have already become severe across parts of the industry. Earlier this month, we reported Intel's confirmation that demand had become so intense that customers were even buying chips that might previously have been discarded or treated as low-value products. Power is becoming another major bottleneck. AI data centers are consuming enormous amounts of electricity, forcing technology companies to seek increasingly unconventional energy solutions. Earlier this month, Meta Platforms backed plans involving space-based solar power systems that could theoretically beam solar energy back to Earth to help support future AI infrastructure demands.

[4]

Samsung is the latest tech player to bemoan memory chip crunch. That's good news for these stocks, analysts say

Memory chips and storage drives are becoming a major bottleneck in the artificial intelligence buildout, driving companies' capital expenditures higher. Chipmakers warn the supply crunch will get worse before it gets better, and Wall Street sees an opportunity. Quarterly earnings from hyperscalers such as Alphabet and Microsoft released this week showed solid cloud revenues underpinning capex that could top $1 trillion by the end of next year, and memory prices are poised to be a key driver of those costs. Samsung executive vice president of memory Jaejune Kim said Thursday that surging demand for memory is prompting pre-orders of chips that will extend the supply crunch into next year. "Our demand fulfillment rate is now at a record low," Kim said. "Unlike previous years, customers who are concerned about supply shortages are actually bringing forward their demand for 2027 already. So currently, just based on prebooked demand alone, the supply-demand gap is looking to widen further in 2027 versus this year."

Feeling the pain of higher memory costs

Tech CEOs are already feeling the sting of higher prices from chipmakers in their supply chains. "We believe memory costs will drive an increasing impact on our business," Apple CEO Tim Cook said during his company's earnings call on Thursday. Alphabet CEO Sundar Pichai described upstream factors facing his business as "complicated." "Obviously, we are working through a complicated supply chain environment," he said during earnings on Wednesday. Alphabet's capex was $35.7 billion in the first quarter, with the "overwhelming majority of this spend in technical infrastructure to support ... AI opportunities," CFO Anat Ashkenazi said on the call. Meta Platforms is reportedly extending the shelf life of some aging servers because it can't get new ones due to the memory chip and storage shortage.
"We did not anticipate the hardware demand growth that we are seeing in the industry," an internal company memo said, according to a Wednesday report from The Wall Street Journal. "Looking forward to 2027, the binding constraint includes critical server commodities, particularly DRAM and HDDs." DRAM is a type of fast, short-term computer memory, while hard disk drives (HDDs) are for higher-capacity, longer-term storage.

A chip shortage produces an opportunity

These and similar product segments are where Wall Street sees a play for investors. "With mega cap tech earnings coming in solid, adding more fuel to the AI theme, we believe that investors are likely to continue to chase the perceived tech winners in semis and memory," chief investment strategist Chris Senyek with Wolfe Research wrote in a Friday note to investors. Micron Technology, SK Hynix and Samsung Electronics are some of the largest producers of DRAM as well as of NAND, a type of non-volatile flash memory used for longer-term storage such as SSDs, which is also a focal point in supply chains for AI infrastructure. SK Hynix and Samsung Electronics are two of the largest holdings in the iShares MSCI South Korea ETF (EWY). The fund is up 67% in 2026. NAND-maker Sandisk sailed past earnings expectations on Thursday with a third-quarter adjusted EPS of $23.41, winning laurels from the Street. Analysts with Bernstein were blown away by "nosebleed" average selling prices (ASPs) that they said were "now locked in." "Revenue was up 97% to $5.95bn (vs. consensus $4.72bn), driven by ASP up a whopping 140% [quarter-over-quarter] while slightly offset by high teens bit shipment decline ... The EPS of $23.41 beat us, consensus and our bull case," Mark Newman and colleagues wrote for Bernstein. Ben Reitzes with Melius Research described price "pressures from DRAM and wafer shortages" as "massive" and component prices in NAND and DRAM as a "big headwind" for downstream hardware builders.
Seagate Technology and Western Digital are among the largest makers of hard disk drives. Seagate stock is up about 22% this week, up 68% over the last month, and up almost 180% over the last six months. Western Digital stock is up 43% in the past month. Analysts see capex within the memory sector itself driving further expansion as end-user demand from the hyperscalers undergirds the buildout. Memory equipment testing is a space that's poised to gain, JPMorgan analysts said Thursday. "Memory test represents the most underappreciated near-term upside vector, with upside from capacity buildout not yet fully reflected in estimates," Samik Chatterjee wrote for JPMorgan. "The market is significantly underestimating the memory test inflection as greenfield fab deployments (Samsung P4, SK hynix Yongin, Micron Idaho) begin deploying testers at scale."

[5]

Samsung's chip profit jumped nearly 50-fold in a single year, execs warn the shortage will get worse

Bottom line: Samsung Electronics' latest quarter makes one thing clear: the AI boom is no longer just lifting the semiconductor market; it's straining it. The company posted a record operating profit in the first quarter, almost entirely driven by its chip business. That division now dominates Samsung's earnings as AI hardware demand outpaces production capacity. Operating profit in semiconductors climbed to 53.7 trillion won ($36.15 billion) for the January-to-March period, up sharply from 1.1 trillion won a year earlier. The figure accounted for roughly 94% of Samsung's total operating profit of 57.2 trillion won. Revenue rose 69% year over year to 133.9 trillion won. Hyperscale companies are building AI data centers fast enough to absorb all available advanced memory. High-bandwidth memory is now essential for AI accelerators like those Nvidia makes. Chipmakers are shifting capacity to advanced nodes and specialized memory, squeezing supply of conventional chips. Samsung executives say the imbalance is already severe and getting worse. "Our supply falls far short of customer demand," said Kim Jaejune, an executive in Samsung's memory chip business, during the company's earnings call. "Based solely on the demand currently received for 2027, the supply-to-demand gap for 2027 is set to widen even further than in 2026." (via Reuters) Customers are adjusting accordingly. Samsung disclosed that it has signed multi-year binding contracts with clients seeking guaranteed supply, suggesting shortages won't ease soon. New fabrication capacity takes years to build, even with higher investment. Major US tech companies including Alphabet, Amazon, Meta, and Microsoft have all signaled continued AI spending, which keeps pressure on memory supply. Samsung is also working to strengthen its position in high-bandwidth memory, where it has trailed rival SK Hynix.
The company said it started mass-production sales of HBM4 chips for Nvidia's Vera Rubin platform in February and expects HBM revenue to more than triple this year. However, SK Hynix recently posted stronger gains in this segment. To keep up, Samsung plans to significantly ramp up capital expenditures. Scaling production isn't just about money, though. The company said conflict in the Middle East has not yet disrupted chip production, but warned that rising oil prices could push up transportation costs. Samsung said it has avoided supply disruptions by securing inventory and diversifying gas sources. Closer to home, labor tensions in South Korea present another risk. Unions representing a large share of Samsung's workforce in South Korea, particularly within the chip division, are weighing strike action over pay. Samsung said it "plans to respond to the fullest extent through a dedicated organization and response system to ensure that production is not disrupted." A union spokesperson said the company had warned in court that a strike could result in "astronomical damage" and reduced output. The semiconductor surge isn't helping Samsung's other divisions. Rising chip prices are pushing up component costs for Samsung's mobile and display divisions. Operating profit in the mobile and network segment fell 35% to 2.8 trillion won in the quarter, while the display business reported a 20% decline to 400 billion won. The quarter shows how much the semiconductor cycle now depends on AI demand. Samsung's challenge isn't finding buyers; it's building enough advanced capacity while managing the risks of scaling this fast.
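The headline ratios in the TechSpot figures can be cross-checked with a few lines of arithmetic. The sketch below, in Python, uses only the numbers quoted above, in trillions of won. The constant and function names are hypothetical, chosen for illustration, and the prior-year revenue figure is derived from the quoted 69% growth rate rather than reported by Samsung.

```python
"""Cross-check of Samsung's Q1 2026 figures as quoted by TechSpot.

All values are in trillions of won. Names are illustrative only.
"""

# Figures quoted in the TechSpot excerpt (trillions of won).
CHIP_OP_PROFIT_Q1_2026 = 53.7
CHIP_OP_PROFIT_Q1_2025 = 1.1
TOTAL_OP_PROFIT_Q1_2026 = 57.2
REVENUE_Q1_2026 = 133.9
REVENUE_GROWTH_YOY = 0.69  # "Revenue rose 69% year over year"


def growth_multiple(current: float, prior: float) -> float:
    """Return how many times larger `current` is than `prior`."""
    if prior <= 0:
        raise ValueError("prior must be positive")
    return current / prior


def share_of_total(part: float, total: float) -> float:
    """Return the fraction of `total` contributed by `part`."""
    if total <= 0:
        raise ValueError("total must be positive")
    return part / total


def implied_prior_value(current: float, growth_rate: float) -> float:
    """Back out last year's value from this year's value and a growth rate.

    A 69% rise means current = prior * (1 + 0.69).
    """
    return current / (1 + growth_rate)


if __name__ == "__main__":
    multiple = growth_multiple(CHIP_OP_PROFIT_Q1_2026, CHIP_OP_PROFIT_Q1_2025)
    share = share_of_total(CHIP_OP_PROFIT_Q1_2026, TOTAL_OP_PROFIT_Q1_2026)
    prior_revenue = implied_prior_value(REVENUE_Q1_2026, REVENUE_GROWTH_YOY)
    print(f"Chip operating profit multiple: {multiple:.1f}x")
    print(f"Chip share of total operating profit: {share:.1%}")
    print(f"Implied Q1 2025 revenue: {prior_revenue:.1f} trillion won")
```

Run as a script, it reports a multiple of about 48.8x, a chip share of about 93.9%, and an implied prior-year revenue of about 79.2 trillion won. That is consistent with the "nearly 50-fold" and "roughly 94%" descriptions, and it confirms the 50-fold figure refers to chip operating profit, not revenue.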

[6]

Micron CEO warns 'AI is in very early innings' and it will 'need more memory' -- another ominous sign the RAM crisis isn't going anywhere

Nvidia is also resurrecting a very old GPU to cope with VRAM supply woes

* Micron's CEO has been talking about the gravity of the RAM supply situation
* Sanjay Mehrotra said that 'AI is in very early innings' and that AI will need a lot more memory to 'scale up' going forward
* This follows similar warnings from the other two big memory chip makers

We've had another warning from a major memory chipmaker that the RAM crisis will only worsen, and rumors continue to circulate that Nvidia could bring back an old GPU, from two generations ago, to help deal with video RAM woes. Wccftech reports that Micron just posted record Q2 revenue, fuelled by AI demand, and the company's CEO, Sanjay Mehrotra, observed that this demand isn't going away and in fact will only get stronger. In an interview, Mehrotra told CNBC that: "AI is in very early innings; you just saw at GTC how much advances are being made in AI. And memory is a strategic asset; you need more memory, you need faster performance memory in order for AI to be able to deliver its full capabilities." "This is inference inflection. As inference broadens, it will scale up the need for tokens, and those tokens need to be fast, and guess what, you need more memory, you need faster memory in order to deliver the full potential of memory." "And memory today is very tight supply, and supply cannot be brought up that easily, and you are seeing that in our results." Meanwhile, as VideoCardz recently pointed out, there are continued rumors that the RTX 3060 is going to be resurrected in its 12GB incarnation. This is according to Board Channels, a source of supply chain rumors in China, and we're told production of the RTX 3060 could be fired up in June. Take this one with a pinch of salt, but assuming it's genuine, why might this happen? In theory, it's a move to provide some relief and additional choice in the form of more wallet-friendly Nvidia GPUs.
It's very much a reflection of the situation with video RAM, and while 12GB is a considerable loadout for a budget graphics card, it's GDDR6 memory rather than the current-generation GDDR7, so it won't eat into inventory of the latter. Even though the RTX 3060 is an old GPU, the 12GB configuration will be tempting for some gamers looking for a cheaper card with more video memory.

Analysis: a trio of ominous warnings

The key comment from the Micron CEO is that AI is in its "very early innings", and that we can expect AI to gobble up more memory, with the suggestion being that it might be a lot more. What's also worrying is that Micron isn't saying this in isolation. In fact, the other two major RAM manufacturers, Samsung and SK Hynix, have issued similar (or more dire) warnings of their own. Samsung recently said that it expects "significant shortages" across its memory products to last through to 2028 (at least), and SK Hynix previously warned that we could be dealing with the fallout from the RAM crisis until as late as 2030. With all three memory-making giants issuing these kinds of ominous statements, and the likes of Nvidia rumored to be resurrecting old GPUs to get around video RAM supply constraints, the RAM crisis seems unlikely to ease off any time soon.

[7]

'Our demand fulfillment rate is now at a record low' says Samsung as it makes its DRAM 90% more expensive than the previous quarter

There are two very different worlds out there. One is the world that you and I live in, the world of the consumer, where we scrape together whatever scraps of expensive memory we can find after the rest has been handed off to AI datacentres. The other is the world of chipmakers and shareholders, where supply that can't keep up with demand is a good thing because, well, more profits. Case in point comes from Samsung's latest earnings call. The world's largest memory maker is, it seems, doing very well despite what it says is "record low" demand fulfilment: "We also have very tight inventory and available supply is far short of customer demand. In fact, our demand fulfillment rate is now at a record low. And unlike previous years, customers who are concerned about supply shortages are actually bringing forward their demand for 2027 already. So currently, just based on prebooked demand alone, the supply-demand gap is looking to widen further in 2027 versus this year." In other words, Samsung simply doesn't have the capacity to make enough chips for even the customers it already has prebooked through 2027. That's just how vast the AI industry's appetite is for memory, which is the primary cause of the RAMpocalypse. When it comes to the most important thing of all, though, namely moolah, Samsung seems to be doing just fine. The company says, "Our blended ASP rose by low 90% range Q-on-Q for DRAM, high 80% Q-on-Q for NAND." Parsing that into non-investor lingo, Samsung is saying that, compared to the previous quarter, its average selling price rose by just over 90% for DRAM, the memory you find in RAM, and by nearly 90% (in the high 80s) for NAND, the memory you find in SSDs. To simplify even further: 'We're making a lot more money.' All this being said, Samsung isn't immune to supply issues, and it's not as if having less supply is itself a good thing.
The goal is to meet as much demand as possible with as much supply as you can muster, and on the supply front, war in the Middle East could cause a problem. Samsung says there are "no supply chain issues to date" on that front, but nothing is certain.

[8]

Micron's CEO says AI memory demand is just in its 'first innings' and memory supply is already insufficient

TL;DR: AI-driven demand for DRAM and NAND is rapidly increasing, causing a persistent memory supply shortage expected to last until at least 2028. Rising needs for higher-capacity, high-performance memory in AI GPUs and CPUs are straining production, leading to delayed launches, price hikes, and temporary hardware solutions. The demand for DRAM and NAND from AI has grown rapidly, and the memory supply crunch we're currently facing is unlikely to ease anytime soon. At least that's what Sanjay Mehrotra, CEO of memory and storage maker Micron, believes, and in his view, the demand isn't going away and will only get stronger. In an interview with CNBC, Mehrotra described the current phase of AI-driven demand as just the "first innings," suggesting that we are still early in the cycle. As AI companies scale up compute, faster and higher-density memory will become essential to keep up with growing workloads. He added that as inference and token demand rise, so will the need for both higher-capacity and higher-performance memory. AI GPUs rely heavily on HBM, while AI CPUs use DRAM, and both are currently in short supply. Mehrotra emphasized that the issue goes beyond pricing, as memory production cannot be ramped up quickly enough to meet demand. On the hardware side, upcoming platforms such as NVIDIA's Vera Rubin and AMD's Instinct MI400 are expected to adopt HBM4, pushing both bandwidth and capacity to new levels. According to the South Korean outlet Global Economic, as shipments of 12-layer HBM4 for next-generation AI GPUs ramp up in earnest, more cautious forecasts suggest the industry may meet only around 60% of DRAM demand by 2027. Numerous companies are already being hit by this situation, with the fallout including delayed launches, price hikes, and stopgap fixes such as reviving older GPUs with more VRAM or enabling mixed DDR5 setups without XMP.
On the latest trajectory, AI demand for DRAM and NAND is expected to exceed 50% of the total industry TAM (total addressable market) this year. In line with this trend, the Financial Times, citing analysts, adds that the supply crunch is unlikely to ease before 2028, given the time required to build new fabrication plants.

[9]

Micron CEO Warns AI Is Only in the 'First Innings' as Memory Supply Tightens, With DRAM and NAND Demand Set to Exceed 50% of Industry TAM

Micron posted a record Q2 as DRAM demand increases, but its CEO says this is just the beginning as more memory is required for AI to reach its full capabilities. Memory and storage maker Micron has seen exceptional growth across all of its businesses, which include DRAM, NAND, and HBM. The growth comes from skyrocketing demand for its products as the Agentic AI craze continues to lift every memory and storage firm. During a conversation with CNBC, Micron's CEO said that what we are seeing right now in the AI industry is just the beginning, calling it the "First Innings". As AI companies scale up their compute, faster and higher-density memory will become a vital component to keep the AI wheel running. "AI is in very early innings; you just saw at GTC how much advances are being made in AI. And memory is a strategic asset; you need more memory, you need faster performance memory in order for AI to be able to deliver its full capabilities. This is inference inflection. As inference broadens, it will scale up the need for tokens, and those tokens need to be fast, and guess what, you need more memory, you need faster memory in order to deliver the full potential of memory. And memory today is very tight supply, and supply cannot be brought up that easily, and you are seeing that in our results." (Sanjay Mehrotra, Micron Chairman, President and CEO) To make AI models run faster and to increase the token generation speed, more compute is required, and memory is essential to compute. AI GPUs demand HBM, AI CPUs demand DRAM, and guess what, all of that is in short supply. It's not a matter of demand or pricing; it's a matter of supply that major firms are not able to address, and given the future outlook, things aren't going to get any better. GPU makers are moving aggressively to adopt newer, denser HBM standards.
Upcoming lineups such as Vera Rubin and MI400 with HBM4 support will not just raise bandwidth; they are also set to max out capacities, setting the standard for next-gen HBM solutions. DRAM demand, on the other hand, is rising at an accelerated pace and outpacing supply, as Agentic AI workloads push CPUs to increase memory support to 400 GB. LPDDR has become the new favorite of the AI era, as its power-efficiency profile makes it well suited to massive scale-up deployments. "Micron set new records across revenue, gross margin, EPS, and free cash flow in fiscal Q2, driven by a strong demand environment, tight industry supply, and our strong execution, and we expect significant records again in fiscal Q3," said Sanjay Mehrotra, Chairman, President and CEO of Micron Technology. "In the AI era, memory has become a strategic asset for our customers, and we are investing in our global manufacturing footprint to support their growing demand." On the latest trajectory, AI demand for DRAM and NAND is expected to exceed 50% of the total industry TAM this year. Once again, Micron points out that traditional and AI server demand remains robust, but is constrained by a lack of DRAM and NAND supply. DRAM demand will continue to increase with the influx of refreshed and newer platforms. Micron is supplying HBM4 36GB (12-Hi) DRAM for NVIDIA's Vera Rubin platform, and is expecting to hit mature yields on existing HBM3 processes. The company is also developing its next-gen HBM4E memory, which is expected to ramp next year. On the LPDDR front, the company recently unveiled its 256 GB SOCAMM2 modules built on LPDDR5X, which enable capacities of up to 2 TB, and is also supplying the Groq 3 LPX from NVIDIA with DDR5 memory. The Groq LPU offers up to 12 TB capacity per chip. On the consumer front, Micron expects PC and mobile units to decline in the low double digits due to constrained supply and higher prices.
The company also highlights that 32 GB has become the preferred memory capacity for PCs running Agentic AI workflows locally.

[10]

Samsung Warns RAM Shortage Could Worsen as AI Demand Surges

Looking ahead, Samsung has acknowledged that 2026 is likely to remain a challenging year, shaped by geopolitical uncertainties, rising input costs, and persistent supply constraints. However, the company maintains that its focus on AI-driven differentiation and operational resilience will be key to sustaining growth, particularly in the premium segment. For the broader technology ecosystem, insights from industry reports and global media coverage point to a clear conclusion. The memory shortage is no longer a short-term disruption but a structural challenge tied to the rapid expansion of AI infrastructure. As demand continues to outpace supply, industries ranging from smartphones to data centers are expected to face prolonged pricing pressure and constrained availability in the years ahead.

[11]

Agentic AI Pushes CPUs to Pack 400 GB of Memory, 4x More Than Today, as DRAM Shortage Spirals Toward 2027

Whether CPUs or GPUs, AI chips require lots of memory for running Agentic AI, and this demand is spiraling to unprecedented levels as DRAM constraints persist. Memory makers are earning big profits but are also unable to meet the demand. We have seen reports on how major manufacturers are rapidly expanding their production facilities, but these are yet to become operational, and Samsung itself has stated that 2027 will be worse for the DRAM industry than 2026, so it's looking like a hopeless situation where DRAM will remain in short supply for as long as the AI boom continues. And with Agentic AI accelerating its pace, the main component that is being eyed is the CPU. While GPUs are still required and very much in demand, the ratio of GPUs to CPUs in datacenters has fallen from 8:1 to 4:1, and is likely to approach 1:1 in the near future as Agentic AI models require higher processing capabilities. At the same time, Agentic AI still requires lots of memory, and although algorithmic compression techniques help address KV cache concerns, demand keeps rising. As such, the CPU segment will witness massive growth in memory capacities. Citing industry sources, SE Daily reports that CPU makers are now planning to equip their AI CPUs with 300-400 GB of memory. This is a huge increase versus the existing 96-256 GB DRAM offered per chip. The report doesn't clarify what type of memory is being discussed here, as CPU platforms can currently support 4 to 8 TB of memory, but that's for the entire platform using DIMMs. The upcoming MRDIMM technology will boost memory capacity and bandwidth, but it still relies on physically separate DRAM. The competition for memory capacity is expanding beyond GPUs to CPUs, showing signs of snowballing. Nvidia's next-generation AI chip, 'Vera Rubin,' features 288GB via eight HBM chips, while AMD's next-generation GPU, the MI400, boasts an even larger 432GB.
Google's recently unveiled custom chip, the 8th-generation Tensor Processing Unit (TPU) TPU 8i, is also expected to feature 288GB of HBM capacity. Furthermore, as Intel's AI CPU 'Xeon' and AMD's 'Epyc' begin using high-capacity DDR5 of up to 400GB, the memory shortage is expected to persist. (Machine translated, via SE Daily) We could possibly see certain CPU SKUs packaged with HBM, or with the new HBF/ZAM memory standards that are being developed at the moment. AMD did release an EPYC SKU a while back with HBM memory, so it has a background of doing such chips. The simpler route is for memory capacities per DIMM to increase substantially. A single 400 GB DIMM would hold more DRAM than current GPUs such as NVIDIA's GB300 and AMD's MI350X, which carry 288 GB of HBM3E. Upcoming solutions will scale up DRAM with newer HBM4 standards and increased capacities. The increase in capacities can lead to further supply shortages, and as denser DRAM ICs become the focus, we can expect memory makers to back out of lower-end products. Samsung has already done this with LPDDR4 memory, where it ceased production and moved to the more profitable LPDDR5 solutions. But like LPDDR5, DDR5 comes in various shapes and sizes, and each has its own use. As DRAM demand for AI requires more of the high-end chips to be produced, fewer production lines would be allocated to lower-end products, leading to worse shortages and even higher prices for segments besides AI.

[12]

Samsung Officially Discontinues LPDDR4 Memory, But Still Sees ~50x Profit Jump & Expects Memory Shortages To Get Worse In 2027

Samsung has officially discontinued older LPDDR4 memory production while hinting at "worse" shortages throughout 2027.

Samsung Pulled Out Older Memory Production To Focus On Newer Technologies Required For Agentic AI, But Still Expects Worse Shortages In 2027

We have been hearing about Samsung pulling out of the production of older DRAM technologies such as LPDDR4X and LPDDR4. Now, the company has officially confirmed that both standards have been discontinued: on its official webpage, Samsung lists both LPDDR4X and LPDDR4 as "Discontinued". The company made this move to pool more capacity into more profitable and more relevant memory technologies such as LPDDR5, LPDDR5X, and HBM, all of which are being gobbled up by AI datacenters.

Despite the added capacity, the company anticipates that memory shortages will get worse in 2027. During its recent earnings call, Samsung said that, based on orders already received from AI firms for 2027, the supply-to-demand gap for 2027 is set to be worse than that of 2026, pointing to increased stress on DRAM markets.

"Our supply falls far short of customer demand," Kim Jaejune, a Samsung memory chip business executive, told analysts on its post-earnings call. "Based solely on the demand currently received for 2027, the supply-to-demand gap for 2027 is set to widen even further than in 2026." (Samsung via Reuters)

Even as demand for its memory swells, Samsung is posting record profits. In Q1 2026, its semiconductor business reported an operating profit jump of around 50x versus the previous year, which indicates just how much memory the Agentic AI boom is consuming. And it's not just Samsung: SK Hynix and Micron are also witnessing record revenue growth, with memory brands seeing big increases in margins too. Samsung plans to aggressively expand its DRAM manufacturing capabilities.
The company also recently ended production of older MLC-based NAND Flash for storage, prompting entry-level firms to step in and boost their profits by filling the gaps left by the Korean giant. Meanwhile, Samsung faces growing challenges from an imminent 19-day labor strike, which is expected to expand into a month-long strike if the company fails to address its workers' concerns. The strike is expected to disrupt about 4% of Samsung's DRAM and NAND production, and output will take several weeks to return to normal. News Source: Reuters

Samsung posted record semiconductor profits of $36.15 billion in Q1 2026, driven by surging AI demand that has created a severe global memory chip shortage. The company has sold out all memory production capacity for 2026 and warns the supply-demand gap will widen even further in 2027, affecting prices of laptops, smartphones, and gaming devices.

Samsung Reports Record Profits Amid Severe Memory Shortage

Samsung Electronics posted unprecedented operating profit of 53.7 trillion won ($36.15 billion) in its semiconductor business for the first quarter of 2026, representing a nearly 50-fold increase from 1.1 trillion won a year earlier [1][5]. The figure accounted for roughly 94% of Samsung's total quarterly operating profit, underscoring how AI demand has fundamentally reshaped the company's business model. Total revenue climbed 69% year-over-year to 133.9 trillion won, with the company selling out all of its memory production capacity for the remainder of 2026 [1].

AI-Driven Memory Shortages Expected to Intensify Through 2027

During Samsung's earnings call, memory chip business executive Kim Jaejune delivered a stark warning about the worsening chip supply shortage. "Our supply falls far short of customer demand," Kim stated. "Based solely on the demand currently received for 2027, the supply-to-demand gap for 2027 is set to widen even further than in 2026" [2][5]. This prediction aligns with warnings from SK hynix, which suggested during its earnings call that AI-related memory demand pressure may persist even toward 2030 [3]. Together with Micron Technology, Samsung and SK hynix control over 90% of the global DRAM market, making their simultaneous warnings particularly significant [3].

Rising Memory Prices Impact Tech Giants and AI Infrastructure

The global memory chip shortage is driving up prices across consumer electronics, from laptops and smartphones to external storage devices and gaming consoles [1]. Major tech companies are already feeling the impact of rising memory prices. Apple CEO Tim Cook said during Apple's earnings call that "we believe memory costs will drive an increasing impact on our business." Alphabet CEO Sundar Pichai described the supply chain environment as "complicated," with the company's capital expenditures reaching $35.7 billion in the first quarter, primarily for AI infrastructure. Meta Platforms is reportedly extending the shelf life of aging servers because it cannot acquire new ones amid the supply crunch, with an internal memo stating that the company "did not anticipate the hardware demand growth."

High-Bandwidth Memory Drives Supply-Demand Imbalance

At the center of this crisis is High-Bandwidth Memory (HBM), a vertically stacked form of DRAM designed to deliver extremely high bandwidth for AI accelerators [3]. Modern AI systems require enormous amounts of high-speed memory to continuously feed data to GPUs and accelerators, with HBM becoming critical for platforms like Nvidia's Vera Rubin [3]. Samsung started mass-production sales of HBM4 chips for Nvidia in February and expects HBM revenue to more than triple in 2026 [5]. However, the technology is difficult and expensive to manufacture, requiring advanced die stacking, precision bonding, and sophisticated packaging techniques, which limits supply [3].

Customers Securing Multi-Year Supply Contracts

Concerned about the worsening shortage, customers are bringing forward their demand and securing binding contracts years in advance. Samsung disclosed that some customers have already secured supply allocations through 2027 [3][5]. "Unlike previous years, customers who are concerned about supply shortages are actually bringing forward their demand for 2027 already," Kim Jaejune explained. This pre-booking behavior is driving demand fulfillment rates to record lows, intensifying the pressure on manufacturers [3].

Broader DRAM Market Faces Tightening Supply

While the shortage is driven primarily by HBM demand, its effects are spilling over into the broader memory market. Manufacturers are increasingly reallocating memory production capacity, engineering resources, and investment toward high-margin AI memory products, which risks tightening supply for conventional DRAM products used in servers, PCs, and mobile devices [3]. Enterprise SSD demand is also rising as data centers require massive storage infrastructure alongside compute hardware [3]. This shift is creating investment opportunities in related sectors, with analysts from Wolfe Research noting that "investors are likely to continue to chase the perceived tech winners in semis and memory."

Expansion Efforts Face Years-Long Timeline

To address the crisis, Samsung and its competitors are aggressively expanding production. According to regulatory filings reported by the Korea Times, Samsung invested 465.4 billion won in its Xi'an memory chip plant in 2025, a 67.5% year-over-year increase, while SK hynix invested 581.1 billion won in its Wuxi facilities and 440.6 billion won in its Dalian operations [3]. However, semiconductor fabrication plants and advanced memory packaging facilities take years to expand and ramp up, so supply growth cannot match the pace of AI infrastructure buildout [3]. Samsung plans to significantly increase capital expenditures, though the company faces potential disruption from labor tensions: unions representing a large share of its South Korean workforce are planning an 18-day strike starting May 21 [2][5].

What This Means for the AI Industry

The memory shortage joins a growing list of resource bottlenecks emerging from the AI explosion. Hyperscalers like Alphabet, Amazon, Meta, and Microsoft continue signaling sustained AI spending, with analysts predicting their combined capital expenditures could top $1 trillion by the end of 2027. Beyond memory, GPU shortages have become severe, with Intel confirming that extreme demand has led customers to purchase chips that might previously have been discarded [3]. Power consumption is another major constraint, with AI data centers consuming enormous amounts of electricity and forcing companies to explore unconventional solutions, including Meta's backing of space-based solar power systems [3]. Analysts increasingly believe this cycle differs from historical semiconductor market swings, as growth in AI infrastructure is consuming hardware at rates that outpace traditional supply chain responses.

Summarized by Navi
