Economists Shift Stance on AI and Jobs as New Data Shows Early Signs of Workforce Disruption

8 Sources

[1]

The one piece of data that could actually shed light on your job and AI

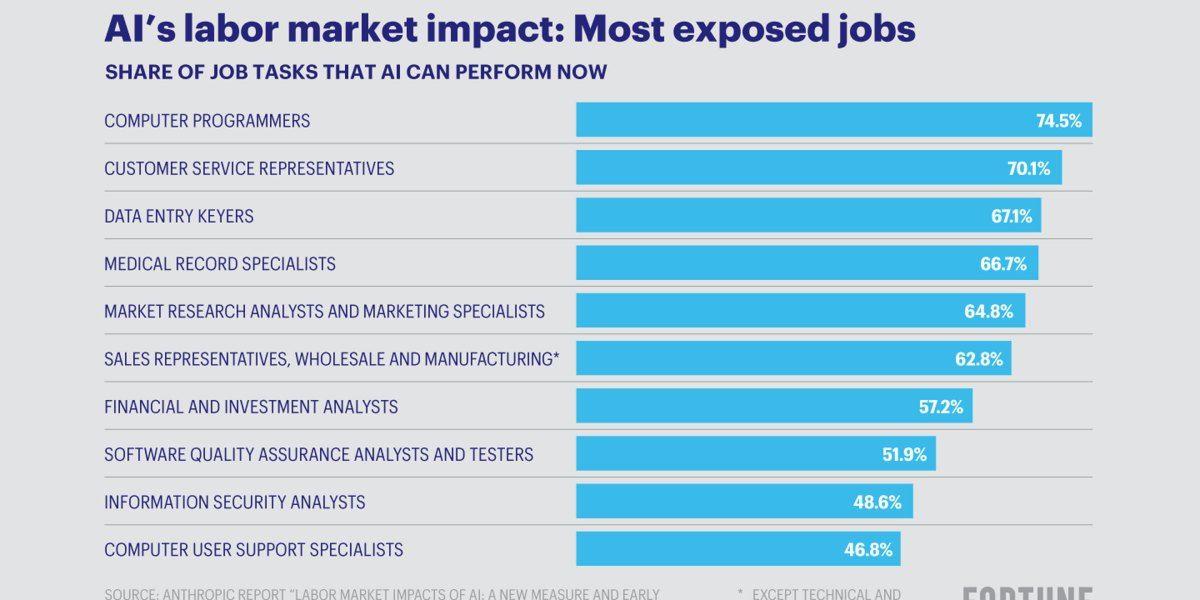

Within Silicon Valley's orbit, an AI-fueled jobs apocalypse is spoken about as a given. The mood is so grim that a societal impacts researcher at Anthropic, responding Wednesday to a call for more optimistic visions of AI's future, said there might be a recession in the near term and a "breakdown of the early-career ladder." Her less-measured colleague Dario Amodei, the company's CEO, has called AI "a general labor substitute for humans" that could do all jobs in less than five years. And those ideas are not just coming from Anthropic, of course. These conversations have unsurprisingly left many workers in a panic (and are probably contributing to support for efforts to entirely pause the construction of data centers, some of which gained steam last week). The panic isn't being helped by lawmakers, none of whom have articulated a coherent plan for what comes next. Even economists who have cautioned that AI has not yet cut jobs and may not result in a cliff ahead are coming around to the idea that it could have a unique and unprecedented impact on how we work. Alex Imas, based at the University of Chicago, is one of those economists. He shared two things with me when we spoke on Friday morning: a blunt assessment that our tools for predicting what this will look like are pretty abysmal, and a "call to arms" for economists to start collecting the one type of data that could make a plan to address AI in the workforce possible at all. On our abysmal tools: consider the fact that any job is made up of individual tasks. One part of a real estate agent's job, for example, is to ask clients what sort of property they want to buy. The US government chronicled thousands of these tasks in a massive catalogue first launched in 1998 and updated regularly since then. This was the data that researchers at OpenAI used in December to judge how "exposed" a job is to AI (they found a real estate agent to be 28% exposed, for example). 
Then in February, Anthropic used this data in its analysis of millions of Claude conversations to see which tasks people are actually using its AI to complete and where the two lists overlapped. But knowing the AI exposure of tasks leads to an illusory understanding of how much a given job is at risk, Imas says. "Exposure alone is a completely meaningless tool for predicting displacement," he told me. Sure, it is illustrative in the gloomiest case -- for a job in which literally every task could be done by AI with no human direction. If it costs less for an AI model to do all those tasks than what you're paid -- which is not a given, since reasoning models and agentic AI can rack up quite a bill -- and it can do them well, the job likely disappears, Imas says. This is the oft-mentioned case of the elevator operator from decades ago; maybe today's parallel is a customer service agent solely doing phone call triage. But for the vast majority of jobs, the case is not so simple. And the specifics matter, too: Some jobs are likely to have dark days ahead, but knowing how and when this will play out is hard to answer when only looking at exposure. Take writing code, for example. Someone who builds premium dating apps, let's say, might use AI coding tools to create in one day what used to take three days. That means the worker is more productive. The worker's employer, spending the same amount of money, can now get more output. So then will the employer want more employees or fewer?
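Imas's distinction between exposure and displacement can be made concrete with a small sketch. The Python below is illustrative only: the `Task` fields, the task lists, and every dollar figure are hypothetical, not taken from the OpenAI or Anthropic analyses. It treats exposure as the share of a job's tasks that AI could do, and flags a job as displaceable only in the extreme case described above: every task can be done well by AI with no human direction, at a total cost below the worker's pay.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    ai_can_do: bool   # hypothetical flag: AI does this well with no human direction
    ai_cost: float    # hypothetical yearly cost (USD) of having AI do it


def exposure(tasks: list[Task]) -> float:
    """Share of a job's tasks that AI could do (task counts only, no costs)."""
    return sum(t.ai_can_do for t in tasks) / len(tasks)


def fully_displaceable(tasks: list[Task], yearly_pay: float) -> bool:
    """The extreme case: every task is doable by AI, for less than the worker's pay."""
    all_doable = all(t.ai_can_do for t in tasks)
    total_ai_cost = sum(t.ai_cost for t in tasks)
    return all_doable and total_ai_cost < yearly_pay


# Hypothetical jobs; the numbers are made up for illustration.
phone_triage = [
    Task("answer call", True, 9_000),
    Task("route to department", True, 6_000),
]
real_estate_agent = [
    Task("ask clients what they want to buy", True, 2_000),
    Task("show properties in person", False, 0),
    Task("negotiate offers", False, 0),
    Task("build local relationships", False, 0),
]

print(exposure(phone_triage), fully_displaceable(phone_triage, 40_000))
print(exposure(real_estate_agent), fully_displaceable(real_estate_agent, 60_000))
```

In this toy setup the triage job is fully exposed and cheaper to automate than to staff, so it is flagged. The agent's job is partly exposed but not flagged, and the exposure figure alone says nothing about whether the employer ends up wanting more agents or fewer.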

[2]

New MIT jobs report: Why AI's work impact will roll in like a rising tide, not a crashing wave

Worried AI is coming for your job? New MIT research suggests a slower shift. AI is improving at work tasks, but its impact may take longer to fully reach the workforce. Rather than "crashing waves" that will shock workers, researchers describe a "rising tide" that gives them more time to adapt. "AI capabilities are already substantial and poised to expand broadly," the study said. "Most of the tasks that we study could reach AI success rates of 80%-95% by 2029 (at a minimally sufficient quality level), suggesting potentially substantial labor-market impacts as this tide continues to rise." AI-induced job anxiety has become an ever-present reality over the last year as AI agents have gotten more capable (though they come with just as many risks as they do benefits). Even a slightly longer horizon for lasting change could make a huge difference in whether -- and how many -- workers get the chance to upskill for a very different labor market of the future. For the study, MIT referred to 3,000 text-based work tasks from the US Department of Labor's Occupational Information Network (O*NET) database, which is used by many companies, including Anthropic, to map AI's impact on labor. To ensure real-world relevance, researchers focused on tasks where AI could help humans save at least 10% of their time. The study found that LLMs completed 60% of tasks without humans at a "minimally sufficient" level, as determined by a human manager, and only 26% at "superior quality." Still, researchers were impressed by what AI could take on. It's not that AI progress will be slower than anticipated, but that progress will manifest over a longer period of time, "such that individual workers are less likely to be blindsided by AI," they noted. "A rising tide could, however, still be quite disruptive if it happens quickly."
The paper noted that text-based work is especially vulnerable to rapidly evolving AI capabilities and could be automated by LLMs at that "minimally sufficient" level by 2029. But the researchers added that consistent, "near-perfect" performance -- meaning success rates closer to 100% -- could still be years off. "While progress is significant, widespread automation, particularly in domains with low tolerance for errors, may still be some distance away," the researchers wrote. 2029 may not feel very far off for a meaningful uptick in what AI can automate, but given how quickly AI is already evolving, it does mean some extra time for the workforce to adapt. That said, the paper authors also don't think the speediest timeline is a guarantee. AI's evolution could be stymied by limits in compute, which is notoriously expensive to scale, as well as algorithmic and hardware constraints. Maintaining that competitive speed will depend on every component of the AI efficiency machine operating at full tilt. Another MIT study from December 2025 found that current AI systems could automate nearly 12% of the country's workforce as it stands today -- not just tech-specific jobs like coding, which many see as particularly exposed (entry-level developer jobs are already dwindling). That also isn't limited to coastal sectors, and covers roles in finance, HR, office administration, and more. But whether that comes to fruition or not depends on how and where companies actually adopt AI, which is a whole different factor that puts projections all over the map. For example, in contrast to MIT's 12%, a January Forrester report estimated 6% of US jobs could be automated -- not now, but by 2030.
At the end of February, Block CEO Jack Dorsey announced the company's decision to lay off nearly half its workforce based on what he said AI tools could handle internally. While there's no way to verify that's the case (and not just some savvy stock juicing), it set a tone: Will companies chasing efficiency gains and wanting to appear cutting-edge follow suit with mass layoffs? There are two camps in this debate. One, occupied by figures like Elon Musk, is driven by the belief that AI can put all humans out of work. In the other, experts think AI will change or augment work (a view supported by findings from Gartner) rather than replace human workers themselves. Career development expert Keith Spencer said he's seeing more of the latter: less job replacement and more augmentation and "uneven, role-specific change" that isn't uniform across the job market. He added that AI is also creating new opportunities in freelance and gig work for some (which AI itself hasn't been great at thus far). "As certain tasks become faster and easier to complete, more work is being broken into smaller, project-based assignments that can be done independently," Spencer said. "That's opening the door for workers to take on additional income streams, even as they navigate uncertainty in their primary roles." Still, that augmentation has its costs. "When parts of your job are automated or reduced, it can feel like you're slowly being made obsolete, even if your role still exists," he said. "While the long-term trajectory may include both job creation and job displacement, the immediate experience for many workers is that the ground is shifting beneath them, and that's what's shaping behavior." Where AI isn't fully replacing human workers, it's extending the bounds of work itself.
A February report from Harvard Business Review found that AI tools in the workplace don't necessarily save time or reduce work, as so many hoped, but actually intensify it. Workers reported using AI tools on lunch breaks and experimenting with prompts after hours to get ahead on projects. That doesn't sound negative, but that creep can have cumulative impacts on workers. "Research from cognitive and organizational psychology has shown that restorative breaks are necessary; without them, cognitive performance and attention decline rapidly," said Tara Behrend, a professor of labor relations at Michigan State University. "This could be extremely dangerous depending on the kind of work being done." Mal Vivek, CEO at digital strategy company Zeb, thinks recent layoffs from Meta and Oracle are less about AI itself and more a response to a composite picture of the economy. "Many of these layoffs were more driven by AI applying market pressure rather than true enterprise AI adoption and automation driving the jobs away," she told ZDNET. "The jobs eliminated were jobs the company always believed it could live without -- with or without AI." Still, Vivek agreed that the layoffs are a growing trend and can be based on AI's capabilities. "We are seeing that AI is on average as good or better at many intellectual tasks, and the efficiency gains from it are just too promising for companies to ignore -- especially when their competitors are capitalizing," she said, speaking from experience at her own company. Spencer isn't seeing a decline in available jobs based on AI's impact yet, though. "We're seeing clearer changes in expectations than in job volume, at least for now," he said. "One of the biggest shifts is the growing importance of AI fluency.
Employers are increasingly expecting workers to understand how to use AI tools, not necessarily at an expert level, but as part of their everyday workflow." Either way, data shows AI-induced job anxiety is high. According to a Resume Now survey of 1,000 adults in the US in December 2025, 60% of workers think AI will axe more jobs than it creates in 2026, and over half are concerned they'll lose their jobs because of AI this year. Another Resume Now survey conducted during the same time found that 41% of respondents believe AI "is replacing, devaluing, or overlapping with parts of their job," while 29% think of AI as a competitor that "could effectively complete at least half of their daily work tasks," rather than act as a copilot. Despite many real accounts of workers learning more with AI in the passenger seat, that's not everyone's experience: more than half of workers polled said AI hasn't impacted the growth of their skills or how they apply them. At the same time, however, at least one survey suggests 92% of young workers are using AI for professional development and that it's giving them confidence at work. The split could be generational. Only the latter Resume Now survey mentioned respondent demographics, which were nearly evenly split between men and women, but were just 15% Gen Z, with the rest split evenly between millennials, Gen X, and baby boomers. Spencer's advice echoes similar sentiments across the industry: identify what only you can offer, and what parts of your work are most and least susceptible to automation. "Shift the focus from what AI might replace to where you add value that is harder to replicate," he said, citing skills like judgment, communication, and real-world context.
"This is less about reacting to fear and more about understanding where your strengths fit into a changing landscape."

[3]

The AI job loss story is all about bundles

Welcome back to The AI Shift, our weekly exploration of how AI is reshaping jobs and work. Sarah is away this week, so stepping into her shoes is the FT's AI editor, Madhumita Murgia. For this edition we are revisiting the big question of whether AI has already started to take white-collar jobs, in light of a flurry of new research and evidence.

John writes

Today's edition was sparked by a new paper from economists Leland Crane and Paul Soto of the US Federal Reserve, which represents the first time to my knowledge that official labour market statistics have corroborated the story from detailed private payroll data that AI is reducing employment in some pockets of the economy. We have previously reported on research showing a dip in employment for young software developers, based on fine-grained analysis of millions of payroll records, but the finding wasn't matched by labour force survey data. That gap has now closed. By using an expanded definition of coders -- crucially including the large contingent of contractor software developers as well as coders outside the tech industry -- Crane and Soto find a very similar result using the flagship US labour force survey as Stanford's Erik Brynjolfsson and co-authors did using payrolls. Both estimate that around half a million fewer coders are working today than would have been if pre-LLM-era employment trends had continued. It's worth noting that this is not an absolute decline in coder employment, but a marked slowdown in growth. Just as interesting as the headline findings is the fact that nuances in these results align neatly with several recent papers setting out frameworks for thinking about how AI job displacement is likely to play out and highlighting weaknesses with simple occupation-based or task-based models.
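The distinction between an absolute decline and a slowdown in growth is easy to lose, so here is a minimal arithmetic sketch in Python. All inputs (the starting head count, both growth rates, the three-year window) are hypothetical and are not drawn from the Crane and Soto or Brynjolfsson papers; the point is only that employment can keep rising while still falling well short of where the earlier trend would have put it.

```python
def employment_gap(start: float, trend_growth: float,
                   actual_growth: float, years: int) -> tuple[float, float, float]:
    """Compare compound growth at the old trend rate with the observed rate.

    Returns (counterfactual, actual, gap), all in the same units as `start`.
    """
    counterfactual = start * (1 + trend_growth) ** years
    actual = start * (1 + actual_growth) ** years
    return counterfactual, actual, counterfactual - actual


# Hypothetical inputs: 4.0 million coders, 5% trend growth, 1% actual growth, 3 years.
START_MILLIONS = 4.0
counterfactual, actual, gap = employment_gap(START_MILLIONS, 0.05, 0.01, 3)

print(f"counterfactual: {counterfactual:.2f}M")
print(f"actual:         {actual:.2f}M")
print(f"gap:            {gap:.2f}M")
```

With these made-up inputs, employment still ends above its starting level, yet the shortfall against the old trend is roughly half a million.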
A paper last month by LSE professor Luis Garicano and co-authors extends the idea that jobs are bundles of tasks, to consider whether the different activities in a job are a tightly bound bundle or something more akin to an itemised list of discrete activities. In the context of software, a contractor or junior hire generally falls into the latter group: these jobs are weak bundles, with daily work consisting mainly of writing code to spec -- tasks that could be given to someone else (or AI) without any disruption to the workflow or the quality of the final product. Here, AI breaks off a large chunk of the job and leaves a role with substantially diminished scope (or obviates the need for that hire or contract). But senior developers, or coders working outside the tech industry in roles where their programming skills are combined with domain-specific expertise, tend to have jobs comprising tightly enmeshed and cross-functional tasks. Here extracting the coding part of the job from all the rest is much harder, so the bundle of tasks remains intact. Instead of becoming a competitor AI becomes an assistant, enhancing rather than eroding the job. This fits with the findings from Brynjolfsson and our own analysis that hiring for senior software roles continues to hold up better than for junior ones. The bundling framework is also explored in recent papers by Lukas Freund and Lukas Mann, and by Joshua Gans and Avi Goldfarb, who move beyond the size and interconnectedness of a job's task bundle to consider the importance of the surviving tasks left after one is automated. When coding is done by AI, senior developers have more time to spend on the many other valuable parts of their job, like translating business needs into product specifications or making judgment calls based on years of accumulated expertise. AI automates a relatively lower-value part of their job and acts as a multiplier on all the rest. 
But take away coding from a junior developer or contractor and you're left with very little. In this way, the same technological capability shrinks one job while expanding another -- moreover it erodes the junior version of a job even as it enhances the senior version. Between the now-consistent picture on junior coding employment and the expanded framework of jobs as bundles of tasks, it feels to me like we're developing an increasingly coherent picture of AI job displacement.

Madhu writes

This new data comes at an interesting moment, John. OpenAI released a policy blueprint this week that proposes some radical changes to the social contract, in response to what it casts as inevitable job losses and disruption of entire sectors. Of course, it's in their interest to claim their product will be singularly revolutionary, but I've also spent the last couple of weeks speaking to investors, analysts and executives from a range of white-collar professions for a piece published today on which jobs are resilient -- and which aren't -- in an age of AI agents. The fact that AI is shrinking certain types of employment -- mainly early-career jobs -- is accepted in these circles, although it is being whispered. The recent research is really interesting for two reasons. First, it confirms what AI companies, white-collar professionals and pretty much any AI user I speak to are telling me: that AI automation is a double-edged sword. On one hand, AI allows you to supercharge your skills if you are already proficient at your job. One person from a frontier AI company described this as tackling a gnarly project by cloning yourself. On the other hand, if you are just starting out, and don't yet have the instincts and knowledge developed through hands-on experience, you are more likely to be replaceable. I find it fascinating that this effect seems to be profession-agnostic.
I've heard it repeated from software engineers, but also journalists, musicians, financial services professionals and lawyers. This is partly because of how the technology works: the errors it makes can often seem random, meaning those without the nous to doubt its outputs are caught out by mistakes more easily. It seems using AI effectively is a skill that only comes with mastery of your subject. The other thing the research points to is that AI is picking off clusters of tasks that make up a job, starting with the mundane and working its way up the chain to more cognitively demanding ones. The wave of AI agents that we see today, like Anthropic's Claude Cowork or even OpenAI's Codex, which use AI to write code, can complete multiple tasks simultaneously, extending the complexity of what the technology can accomplish independently. On these topics, I recently sat down with Mark Chen, head of research at OpenAI, who pointed me to the METR benchmark, a metric that measures AI performance in terms of the length of tasks AI agents can complete. This horizon has been expanding rapidly, with an exponential increase over the past months. He told me that a year ago, they were dealing with AI completing tasks that humans could do in minutes. "Now we're dealing with tasks in the hours. And if you extrapolate that forward, we're soon going to be having our models do tasks reliably that would take humans days," he said, referring to building a self-contained, functional piece of software, for example. It struck me that although job displacement currently seems to be impacting junior employees disproportionately, that won't be the case for much longer. The advent of AI agents that can handle higher-level tasks means it is a matter of time before even experienced workers get pushed into being AI project managers, rather than creative thinkers. Do you have a more hopeful outlook, John? 
John responds

Thanks Madhu, it's interesting to hear those industry whispers matching the hard data. There's certainly no sign that the forward march of AI's capabilities is slowing, and that will clearly mean more disruption of jobs. But I'm optimistic on two counts. The first is that I think there's a reason we're still seeing relatively little evidence of job displacement outside of coding (we'll have more on this soon), and I think that will hold for a while yet. The second is that as someone who has increasingly become a manager of agentic AI projects, I've found it more a multiplier of my creativity than a replacement for it.

[4]

Is AI Going to Turn Us All Into Middle Managers?

How is AI changing the way we work? This week on Galaxy Brain, Charlie Warzel is joined by Johnathan and Melissa Nightingale, two experts in management and leadership training. They discuss how chatbots and AI agents are winding their way through the workforce, offering a firsthand view of how companies are (and aren't) adopting AI tools. The conversation covers the gap between AI hype and what's actually happening in offices. It also touches on how overreliance on AI tools may be making bosses worse at their jobs, and how work may be one of the last bastions of sustained social connection in a period of cultural alienation and isolation. Charlie Warzel: I'm Charlie Warzel, and this is Galaxy Brain: a show where today we are going to talk about work. Trying to talk about jobs right now -- how we work, what that work means, what the future of white-collar work looks like -- it is just extremely difficult. We all seem to be situated in this very confusing moment, one that I think is captured very well by my Atlantic colleague Josh Tyrangiel's story. It ran with this rather ominous headline: "America Isn't Ready for What AI Will Do to Jobs." The piece is great, and you should totally seek it out. But the illustration of the piece I found quite apt. It's of this man; he's in a tree. His eyes are two dollar signs, and he's smiling while holding a chainsaw and cutting into the branch that's supporting his own weight. This in many ways is the vibe of 2026. This feeling that this certain subset of people motivated by profit and efficiency are conducting an experiment that, if it succeeds, is not gonna just rewire the economy forever but change the very nature and essence of labor. For the last few years, since the arrival of chatbots, the AI conversation around work has been some version of this. The tools are useful in automating busywork and drudgery. They're getting better. And so what does that mean for jobs? Well, AI executives have been issuing dire warnings. 
In 2025, for example, Dario Amodei -- the CEO of the AI company Anthropic -- told Axios that AI could drive unemployment up 10 to 20 percent in the next one to five years and wipe out half of all entry-level white-collar jobs. Meanwhile, those who are running companies seem quite eager to unleash this technology on knowledge work -- labor force be damned. They're driven by profit incentives and a good amount of FOMO. AI is the future. Get on board or be left behind. What's your AI strategy? In some cases, the fastest way to show results is to simply reduce head count. Workers, especially young workers, are concerned. According to the Federal Reserve Bank of New York, the unemployment rate for college graduates ages 22 to 27 ballooned to 5.6 percent at the end of last year. And as The New York Times notes: "For those who are employed, more than 40 percent held jobs that do not typically require college degrees, the highest level since 2020." You can feel the weirdness in the economy right now. This fear of a kind of job-market stagnation, but no exact sense of what is happening on the ground. How much of all of this is actually AI driven? Simply put, there are huge fears here that AI is not only changing the way we might do our jobs, but it might be changing how we get them, whether we can keep them. AI executives are out here arguing that most of us, no matter the job, are destined to become middle managers for a host of AI agents. And you can take that with a grain of salt as just another tech CEO prognostication. But if there's any truth to it, it would represent a massive change. And the concerns go well beyond the economy into something much more existential. What does it mean to be a human in a world that all of a sudden doesn't value human labor in the same way? So are we all destined to become middle managers? Is AI really ripping through the workforce? What is the value of work in this strange economy?
And how can people survive -- maintain dignity, human connection, all of that -- in a world where decision makers driven by dollar signs are pruning the trees for every possible efficiency? Even if we're all just sitting on the branches? Johnathan and Melissa Nightingale are here to help me answer some of these thorny questions. They're the founders of Raw Signal, a leadership and management training firm. Johnathan and Melissa have worked with thousands of executives and managers and companies across tech and other industries, and they're keen observers of the ways that modern white-collar work and workplace communication is broken. But they also offer this clear vision of how work can stay human and humane. They join me now to talk it all through. Warzel: So it is a weird time in the economy, especially in America. We're in this low-hire, low-fire labor market. There's this very amorphous and pressing fear right now about artificial intelligence taking or threatening jobs, especially entry-level jobs. And the labor market just looks increasingly grim and feels increasingly grim to younger workers. The vibes -- they're off, they're not good. I wanted to start just by asking you all: You're talking to businesses, to people. You're on the ground. What are people telling you about the vibes right now? Report on the vibes for me. Melissa Nightingale: I love "Report on the vibes." Johnathan Nightingale: "The vibes" is such a great place to start. Because I don't know if you remember, but it wasn't so many years ago that companies were appointing "chief vibes officers." Melissa Nightingale: Okay; there's a very weird future-of-work moment where everybody was, like, a future-of-work thought leader in 2022. And The New York Times reported they had a big story about chief vibes officers being like... Johnathan Nightingale: Because, like, in 2022, like everybody was fighting. 
I think junior engineers were getting, you know, 100, 200, 300,000-dollar offer packages because everybody was starved for this tech talent. And that was the story of the moment -- "Wow, how much worker empowerment there is." Melissa Nightingale: And so, we're not trying to make the vibe sad. But it is worth starting with: Where were the vibes as we head into ... like, where are the vibes now? So 2022: still relatively hot labor market; still a lot of competition for talent. Particularly junior talent; particularly junior engineering talent. Johnathan Nightingale: And you could tell that senior leaders were pissed off about them. It was too expensive. These people were too entitled, right? Like, "chief vibes officer" makes good buzz. But you could tell in early 2022 that this was about to get some pushback. Melissa Nightingale: Remember, this was the earliest version of return-to-office mandates. People saying: "We went home. We did amazing work at home. Why do we have to go back? And also, like, if you make me go back, I have another $300,000 job offer lined up tomorrow." Johnathan Nightingale: Just walk across the street. And so, it's funny because people talk about AI and all the layoffs. And there's been, you know, half a million layoffs in the last several years, and technology workers and stuff like that. Those layoffs started before the first ChatGPT release. Melissa Nightingale: What it did was reset the market, right? It recalibrated. Like, you had folks who had that sort of early engineering role. And they got a little bit more skittish about, Should I leave to go across the street, or should I stay put? Should I feel grateful that I have a job, that I have this opportunity? And you started to see people feeling a little bit more nervous, and a little bit more uncomfortable around the market overall. Johnathan Nightingale: These days, AI has sucked all the oxygen out of the room. And people are like: "That's all driven by AI." I'm like: no. 
First of all, November ChatGPT couldn't count how many Rs there were in strawberry. That's not a reason to turn your whole business upside down. But also, the layoffs happened six months before that too. It's really been part of a pattern of executives in some organizations reasserting power and making sure that, especially, junior workers lose that sense of entitlement. I think vibes-wise, that's happened. They succeeded in that. Warzel: It was such an interesting moment. I wrote back in -- I think it was 2021, but it might've been 2022 -- in that time period of a hot labor market. Also of, as you say, some real worker empowerment. I remember writing this piece that was like: "Do workers, do people even want a career?" Right? Like, do the young, Gen Z people coming up, do they even want a career? They're questioning the idea of the standard thing, because there's just so many different options. And maybe I don't want to work the way that my parents worked. And to compare that feeling to now, where it's like "Could I even get in the door to have a career, or am I going to have to figure out something else completely different?" I think that that's extremely stark in terms of that shift. What are people telling you on the ground now in terms of this moment? Obviously there is that sense of precarity with workers. But in terms of that force, of generative AI, how are people feeling about it? Melissa Nightingale: Johnathan and I both come from tech, right? We've both been working in tech since the first dot-com boom, all the way on through. And we work with a lot of organizations with tech leaders. And what we're hearing from folks is -- on the one hand, you sign up for tech as your industry and as your career, you like working on the cool new stuff, right? Like, we are an industry that loves our toys. We love innovation. We love sort of taking things out and experimenting. Sometimes they last; sometimes they don't last. 
But as an industry, if that doesn't get you lit up, you've picked the wrong job. But what we're hearing from a lot of folks is that the day-to-day of "We're playing with these tools" is no longer lining up to "What are we supposed to be doing here?" And that playing with the tools has become sort of an end into itself. And a lot of folks are finding: "We're using them, but I don't know -- what are we running toward?" Warzel: Do you get the sense that this is like, if we're going down the chain: This is executives high up on top, see a thing. They're reading a lot about it; there's a lot of hype. They feel, Okay, more than anything, I have to make sure that we do not get left behind here. And then the sort of middle-manager layer is feeling that it's forced on them. Or is there sort of, broadly speaking, a lot of enthusiasm -- but there's just not enough time to figure it out? Melissa Nightingale: All of the above. Like, we met a lady who's a really skilled executive, and she was working in an organization where she's like: "I came in as a fixer, right? My whole job was fixer. And I was really excited about it. Like, the opportunity was cool. Came in, doing the work. And starting to see the impact of that work, right?" And she's like, "And then my CEO got really excited about GPT, and I started sending things that were strategic plans for my division, for my department. And what started coming back from my CEO -- who I report directly into -- wasn't from him. It was clear he hadn't read any of the plans that I was putting forward. He just pushed them through and said, Generate an email in response to this." And she's like, "I went from being so excited about the turnaround potential for this business, for this organization, and for my department and team, to feeling really sad. Like fundamentally -- just having a very hard time figuring out, What am I doing if I'm putting in all this effort?" 
Johnathan Nightingale: When you look at a story like that -- that's a management failure. It can take one of a couple flavors. One thing you can say is, "That person's work is now useless because GPT replaces it well enough. And so that person should have just been given a firm handshake and a goodbye package and sent out the door." Or you can say "GPT can't replace human ingenuity at the senior levels." And so there's a real dereliction there, because you had a motivated, engaged senior employee, and you burnt them. In either case, that's a management failure. A thing that's coming up a fair bit is that people look at what code generation can do in LLMs today. And they sort of do the "well naturally": Well, naturally, in time, it will write good poems. Well, naturally, in time, it will be your accountant. And well, naturally, in time, it will manage as well or better than most people do. It's just this leap, this linear leap, that is not borne out by what we're seeing on the ground today. Warzel: Do you feel like that's a broader trend of, especially on the executive layer -- and I'm not trying to paint people as caricatures here -- but this idea that, especially with these higher, more like "vision strategy" jobs, where there is a lot of busywork involved in that sense of communication. That, you know, what I've heard is that AI is a perfect CEO, right? Like, it could be -- it's just sort of broad, broad pronouncements -- being able to speak with maybe more confidence than is earned or deserved, right? Johnathan Nightingale: Incredible executive presence. Warzel: Great presence in that sense. And so, naturally, people higher up on the end of the management chain might be enamored with it or the ability of it. Do you feel like CEOs are overly AI-pilled right now? Johnathan Nightingale: I think they're a vulnerable group. 
I think one of the challenges with being a CEO is that even an incredibly effective CEO shouldn't know more about every function in their business than the people working those functions do. Right? If you're an expert engineer and you become a CEO, you might know a lot about engineering. Melissa Nightingale: But over time, you'll actually know less than the people who are typing on keyboards all day. Johnathan Nightingale: And your head of sales should know more about sales than you do. Your head of marketing should know more about marketing than you do. Right? Like, that part of the job of building a senior team is to make sure those people are, one, lighting you on fire with great ideas, and two, are credible leaders for their own functions in a way that you couldn't be. Because a person can't know everything about everything. And so, it's tempting from that seat to flatten a lot of that work, and to say "If an AI can do that work 80 percent as well, maybe I have to spend some more compute over there in order to get the result I want. But, like, do I even need a marketing department? Do I even need an engineering department? Do I even need a finance department?" They're vulnerable to it if they don't hold on to a sort of core "go touch grass" reality. Which is that it takes a long time to learn some things, and human judgment is valuable. Warzel: Where do you all think we are in terms of adoption? Because again, for someone on the outside, who's not speaking to managers and the rank-and-file types of employees at all times, it's hard to get a good sense. But how much have these tools already changed what is happening day in, day out? Versus how much of it is that feeling of like, I need to be doing this. This is something that we need to have? There is the, you know, the FOMO element. Where do you see the balance there? 
Johnathan Nightingale: Certainly among the groups that we work with, people in engineering roles have been the most curious about it, and in some cases the most compelled to engage with it. You definitely have people who are very credulous -- "We're playing with it a lot" -- who feel like they're getting super productive about it. We're starting to see studies about how those people are burning themselves out. Right? Those people are really struggling, because they're orchestrating so many bots that they never want to close their laptop. Because they're getting this dopamine hit from productivity. They're not necessarily deepening their skills; they're just doing a bunch of stuff. But like, there you see a ton of adoption. Melissa Nightingale: We're starting to see the fingerprints of AI-adoption mandates in contexts where it makes no damn sense. So, like, we have a client organization that was building technology to respond to bots infiltrating contact forms on websites. Because so many people were having their agent basically go: "Reach out to this organization; we're trying to get this work done. Can you go figure out a quote for this thing? Like, go write them with a description of what we're trying to get done; have it come back." But the cycle times are considerably longer, in part because the context that you can anticipate a human responding to it needing just isn't there. And so you end up with, like -- it's meant to save a step, but it causes three more. And we've all had the experience of somebody like a junior person sending a shitty email and being like, Fuck, I gotta go unwind that. Right? Like, I gotta go unwind that you sent an email. And, it makes no damn sense. And now I've got 30 people in the organization going on, running and chasing a thing. Johnathan Nightingale: Or "You sent it to a client, and now I gotta go apologize for that." Melissa Nightingale: Right. But imagine that multiplied across many workforces right now. 
Where you've got a lot of communication and requests flowing that, like, just missed an important key step before they went out the door. And so there's a bunch of weird context and cleanup that's taking longer than the original initial task would have taken to just do the damn thing. Johnathan Nightingale: And there's this rigidity forming, too, which is worth fighting against. Which is that you'll have people farming everything out to GPT -- even really obvious ways, right? You get emails from a colleague, and you're like, "This is not what you sound like. This is obviously GPT. And what am I meant to do with the fact that you didn't bother to write this email?" Right? Warzel: Absolutely. Johnathan Nightingale: And then like the alternative is to say, "Well, screw it. I will never let GPT do that. It's an insult when somebody does it to me; I'm not gonna do that to other people." And from that place, this sort of entrench and say, I refuse to engage with these tools. And then you're putting your refusal up against management pressure. And, it's driving conflict that isn't very helpful. Warzel: When I hear, "Oh, these agents are filling up these contact forms; it's creating a whole bunch of extra work." The guy who's been listening to people in Silicon Valley talk about this technology for a very long time says, "Yes, well; their solution to that is you need agents on both ends, because having agents on just one end is, you know, not balanced." There's this way in which I can see that. People are creating -- it's a very classic tech thing to create a bunch of problems and then offer a technological solution to the problem that you created. Johnathan Nightingale: Yeah. Warzel: That all adds to more problems down the line. I also think, though, that what I wanna highlight there is the way in which this creates these asymmetries and builds these little cracks and pieces of distrust. 
That seems like something that feels really important in the context of all of this going forward, if you are somebody who cares a lot about what you do and puts a lot of effort into these things. And then you have a group of people who may also care, but they use these tools. And there's not this standardization of work output. And you see somebody respond to your email with something general. I think it's really interesting that it creates that fracture, based off of how AI-pilled you are or how excited you are about the technology. And that feels like a bigger problem than I think people are thinking about right now. Melissa Nightingale: We're seeing that play out across like technology right now. Where you've got folks who feel like maybe you're being incredibly efficient, and you've got like 12 agents working for you, and you're getting a ton of stuff done. And I think, Wow, what a go-getter. Or I think, You're really fucking rude, and you don't give a shit about your work or the impact to my organization on you sending garbage across as a transmission. Johnathan Nightingale: Isn't that interesting? Like, not from a culture war, "red team versus blue team" shit. But like: why? Like, why can so many people nod along to this idea of like, Oh yeah, when you get a GPT email, that feels rude. Like, That feels like the person didn't care. Right. I thought these agents were supposed to be solving all kinds of problems. I thought they were more talented. They're passing the LSATs. They're like, you know -- they're doctors, they're therapists, they're whatever. Like, why would we receive it as rude? It turns out that humans really care about doing work they believe in, with people they care about. And when you hollow those things out, people have these emotional responses to it that I don't see predicted by the marketing materials from the AI companies. Melissa Nightingale: And the hyper-rationalists will fall short on this one every time. 
Because, fundamentally, being more efficient should, like, it goes -- Johnathan Nightingale: You ought. Melissa Nightingale: You ought to be excited about being 10 times more productive than you were yesterday. You ought to be excited that your colleagues sent you an email that they didn't spend any time on, because you also don't have to spend any time on reading it or consuming it. Like, that should be exciting. Warzel: You're both management and leadership trainers. Which means your job is ostensibly to try to make people better bosses, right? And I wanna ground some of this in asking: What makes a good manager? Or what makes a good middle manager? What are those qualities there? Melissa Nightingale: We have bosses who show up in programs. And they're like, "I am a good manager because my team likes me." Wrong. "I am a good manager because, like ... Johnathan and Melissa Nightingale: "My team is happy." Melissa Nightingale: We're like, "Wrong." Happy is an impermanent state, right? Johnathan Nightingale: And you can't take custody for people's emotions. Melissa Nightingale: No. Fundamentally, you are a good manager if you are making your team more effective. Johnathan Nightingale: And effective feels really mercenary, but it isn't. Because it turns out as you get deeper into this, you learn that the best way to build an effective team is to see them as individuals: to align their personal motivations and aspirations and sense of mastery, the things they want to learn, their curiosity with the things your organization is trying to get done. It turns out that, like, that only happens if there's a high level of psychological safety. If they're able to take risks; if they're able to talk to you about their struggles. Melissa Nightingale: If you're able to give critical feedback on the work, where it is showing up exactly as it should and where it isn't showing up exactly as it should. Johnathan Nightingale: And if they see you engaging as an authentic leader. 
They don't need you to be perfect, but they need you to not be bullshit, right? It's an important part of how they cohere as a team, and how they find anything that you have to say remotely credible. But many people in management roles lack those skills. And if you're like, "Wouldn't that make work terrible for a lot of people?" Yes. Warzel: There we go. Well, part of the reason I ask is because it seems in this AI conversation, the term management is a skill set that AI companies seem to want to impose on all of us, right? Recently the co-founder of Anthropic, Jack Clark, went on a podcast, he's talking about the way that chatbots and increasingly these coding agents are, you know, going to be sent off to accomplish these tasks. And there's going to be all of what we're talking about, right? These things happening on behalf of us: goose chases, all kinds of stuff. And he had this quote that I thought was striking. It's one of the reasons I wanted to have this conversation with you all. Which is, he says, quote, "Everyone becomes a manager, and the thing that is increasingly limited -- or the thing that's going to be the slowest part -- is having good taste and intuitions about what to do next." What do you make of that line? "Everyone becomes a manager." Johnathan Nightingale: It's such a shallow read on management, isn't it? Like, if you think about it -- let's say he's right. Let's say he's right that when I fire up my Claude Code instance and I say, "Claude, create a marketing team for me. I want a content marketer. Want somebody on social media." Right. Have I done it now? Like, can I transfer those skills over to the humans that are still on my team? Is it the same thing? Can I just go to Tony and be, like, "Tony, get Alex and Sam in a room? You got new jobs. Now. Here's what you're doing." Is that going to work? Nobody thinks that's going to work. 
Melissa Nightingale: I also think any model for management that doesn't come with employees who have needs and, like, labor and rights -- there's a bunch of pieces that are missing from that mental model. And either that's accidental, or that's on purpose. But we should all be really concerned about a model of labor and a model of management that includes work happening without any capacity for what the people doing that work -- or what the folks who are supposed to be responsible for that output -- need. Johnathan Nightingale: Buddy seems to know a lot about AI. I know the podcast you're talking about. It's cool. He's built something that's very big and seems to be changing a lot of people's lives. And, I hope, some of them for the better. Like, that's super neat. But on management, I'm not convinced he has the range to give anybody advice on what constitutes good management. You can build a very wealthy company and still be cooking a bunch of people inside it. And saying something like that -- I don't know, you can call that an insult, you can call that a threat, but that's not what management is. Warzel: Well, what's interesting is, you know: As you're describing what makes a good manager, and you pause and say, "This could sound mercenary." Right? But what's actually involved in all of this is the very human work of recognition. Of getting to know people. Of caring. Of giving a shit, right? It seems like that idea of "everyone becomes a manager" -- that definition from an AI executive -- it pauses at the mercenary point. It says, "He's with you until that moment where you say, But then it requires all this." And part of the reason why I want to have this part of the conversation is also because my partner, Anne Helen Petersen, and I wrote this book about the rise of remote work in 2020. We spoke to you guys a lot about that book. And one of my broader takeaways from all the reporting is that -- despite being in the boss layer, right? 
-- the managers and especially middle managers were pretty miserable. Just in general. Just, like, a pretty miserable core of that thing. Like, maybe miserable in different and unique ways than, you know, like, oppressed rank-and-file workers. But pretty miserable. So when I hear that we're all gonna become managers? From that, I hear: Man, that's a lot of task switching and a lot of, "I'm getting incoming from two sides of this thing." Like, two groups of people are kind of converging on me. I've got these unhappy people on one side; this unhappy thing on the other side. I kind of have to figure out how to exist in this world. I'm being pulled in a hundred directions. Melissa Nightingale: Charlie, you're selling it. You're making it sound so compelling. Johnathan Nightingale: Strong pitch, strong pitch. Warzel: But that, to me, is like: I don't know. I mean, does it seem as dystopian to you? Or do you just recognize this as, I don't know, a guy pontificating? Without, as you said, the understanding? Melissa Nightingale: We have a working mental model; like, we have a real-world model for where automation takes more of the front seat on management. Amazon warehouses are a good example. Uber drivers are a good example. Johnathan Nightingale: Already being managed by algorithms. The common thread there is this idea that people are working in Amazon warehouses because they can't automate that piece yet. Right. And so they build all the automation around these little stations where the human stands, and the humans are reaching up and reaching down. That shouldn't be our vision for the future of work. And when you listen to a lot of the people who are like, "Oh, everyone's going to be a manager." You're going to have this swarm of agents. Who's doing stuff? Like, what's the end state there? That you're a team of one? That you're a company of one? 
And so, when you try and sell me this story about a billion-dollar company with one person, I'm like: Think about the best times you've had at work. Best times. You might have had a shit boss. I get it. There's a lot of them. We're working as hard as we can. But, like, the best times you've had at work -- and I guarantee that story involves colleagues. Right? And so when somebody tries to sell you on, "Don't worry; you don't need colleagues anymore," I'm like: What are you doing? Like, that's an anti-signal. I understand your technology is very impressive, but, like, that's a weird thing to sell. Melissa Nightingale: Unless you're annoyed at paying the junior engineers $300,000 a year straight out of school. And then it's a very compelling sell. Warzel: There's a lot here, right? Some of these people who are talking this ... some of them, yes, are people who are inside large companies right now that are building all this. That have this workforce. So I think that argument makes a lot of sense. There's another group, though, of, let's just say "venture capitalists" who are actually on that island, right? A little bit. I mean, yes; there are plenty of people who work in some of these venture firms, no doubt. But the idea is that sort of like, life of the mind. Like "I'm this tech soothsayer." And I think in some sense there is this, "How can I push this to the furthest possible extent?" Right? And there's this idea, always, with the efficiency, right? The reason why the AI bro-type person who's saying this thing about the excitement of the first one-person, you know, unicorn company, I think, speaks to the idea of ... like, this is "efficiency" pushed to its broadest level, right? This is like the cheat code for late-stage capitalism. And I think it ties though to this broader premise of how productive this stuff actually is, right? So there's this recent survey from this company called ActivTrak. And they analyzed over 10,000 workers across 376 companies. 
And they did it 180 days before and after AI adoption. And the thing that I think won't be surprising to a lot of people who follow this is: email, up 104 percent. Chat and messaging, up 145 percent. Collaboration time surged 34 percent to an hour a day. Multitasking rose 12 percent. It's that classic, you know, Parkinson's-law, "work expands to fill the time available for its completion"-type thing. What do you make of those types of numbers and the idea of productivity? Melissa Nightingale: We tell bosses all the time: Busy is not the same as effective. Like, you can run your team totally ragged in terms of having them chase every idea that seems like a good idea. But if that's not what we're here to do, then you're wasting their time and your own. Johnathan Nightingale: Yeah, the maximalist arguments are always so weird, right? Like, the thing you could do is say to your team: "This is a cool tool; we should figure out where we can apply it." Melissa Nightingale: Where is there an annoying problem within the organization that you wish someone would fix for you? Where is there an internal tooling thing that would be so cool to have and get a thing out of your way in your workflow? Like, great -- let's go build that. Warzel: Right. Software-size problems. Johnathan Nightingale: That could be the end of a sentence. It doesn't have to be. And then we fire everyone. Like, it doesn't. You can just end. They're like, "Well, but if all your competitors are cutting to the bone and outsourcing all their sort of, you know, friendships to ChatGPT or whatever, then you're going to have margin pressure. And you're going to have to do the same thing." And I'm like, man, "Maybe." But it turns out that creative, resourceful, adaptable humans are good at some shit. And that an LLM -- which however amazing you might find it to be -- is trained on yesterday. It's at some point going to run into problems inventing tomorrow, right? And like, that's a thing people can do. 
And then tomorrow it'll train on it. But I will bet on people. Again, not like a team thing. It's cool for the people to have tools. "We've got lots of tools." That's great. But it's so weird to bet your business on entirely outsourcing critical thought, creativity, collaboration, partnership to this thing that can generate grammatically correct paragraphs. Like, it just feels like such a weak-sauce version of leadership. Warzel: How hard has it been to give that message to this group of people? Have you been effective in conveying that? Is the siren song of all of this, you know, too difficult to resist? Like, what are you butting up against with this? Melissa Nightingale: Two things. Okay, so it's a great question. Like, on the one hand, you spend a third of your waking hours at work. If you're lucky and have like a normal work schedule, you spend a third of your waking hours at work. So I think for a large number of people, the idea that like one -- we just fully and outright reject the idea that that's how we're going to spend a third of our waking hours, as a collective. Like, absolutely not. Two, we've seen it, and that lends some credibility. In that we've seen inside a lot of organizations, and we talk to a lot of leaders about moments at work that really mattered to them. And it's like: I think the conventional wisdom is nobody would have any moments at work that mattered to them. Nobody would have any moments where a boss saw them, connected with them, brought them up, helped skill them up. Helped unlock the next stage of their career, because work is crappy. We have to spend a third of our life there, and it's always going to be crappy. But the fact of the matter is, you ask people: Do you have one of these moments? Do you have one of these things that happened where work really mattered to you, or was a support or a stability for you at a time where the rest of your life was, like, really rocky? People, by and large, do. 
And so our starting point, I think, for a lot of folks is that the idea that it could be good for a lot of folks is one, a radical concept, and two, a very welcome idea. Johnathan Nightingale: But when you ask about receptiveness, it's funny. One of the first companies we ever worked with was an AI company. And I remember meeting with the leaders that were going to be coming through a program with us. And this one guy, very sort of eccentric-professor vibes when he came in. Sort of scattered, came in a couple of minutes late. And he said, you know, "I'm coming to this management program. They're sending everybody to this management program. But I need you to know something right up front. I don't believe any human should manage any other human." Warzel: Yeah. Johnathan Nightingale: Cool, fair, yeah, great. He's a bit, "I've never done a program like this before; so you know, I'm open to it. I just want you to know that upfront." By like the third week of the program, he's sitting there reading High Output Management by Andy Grove. Which is sort of, former CEO of Intel; like a standard management text, particularly in tech circles. We're talking about hiring, and he's looking at a job description. And he's like, there's a bunch of gendered stuff in that job description. You're going to end up with a really tilted candidate pool if you keep doing it. Like, he's fully engaged with it, and is really thoughtful and conscientious about how to engage with it. He just didn't know. The receptiveness is a really easy sell. It's a surprisingly easy sell. Nobody likes to be in a job that they don't know how to do and feel like they're failing all the time. And if you can give them some stuff, you know, there's this moment where you give them some tools. And they're like, "I don't know if that's going to work." And then you get them back next week, and they're like, "That did work. What else do you have?" Right. And like, it's actually really easy to convince. 
Warzel: How worried, just hearing you say this, how worried are you guys that these tools, these AI tools, will effectively just act as a Band-Aid for any of these moments? Of "Instead of having to think about it hard, and have that conversation that's ultimately really fruitful and ultimately helps me and everyone else around, I'll press this button instead"? Melissa Nightingale: We come back around again, right? It's like, if I am your direct report and I fucked up in a meeting, like: I'm sorry, I just didn't know that thing, and I said that thing, and I thought it was a good idea at the time. But like, whatever, I screw up in a meeting. And what happens after that meeting is you send me a thing to tell me I screwed up in that meeting, and it is perfectly outlined. Johnathan Nightingale: Lots of em dashes. Melissa Nightingale: It is perfectly structured. And, you know, you've given the feedback to an LLM, but it spit out a thing. And then I get the thing; we're back to the part where I'm like, That's rude as shit. Like, if I screw up, tell me. I am a grown-up; I can absolutely handle it. But you're back to this weird moment in work culture right now. Well, either that's a well-intentioned manager trying to format a thing so that it lands correctly without having to, like, have the like social risk in that moment. Of, like, "If I send it to Melissa and she's upset about it ... well, if GPT wrote it, she's mad at GPT. She's not mad at me. But if I actually put time and effort and energy into it, I might get it wrong. It might not land well. And I might have to spend some time reflecting on, like, That isn't the way I wanted that to go. I want it to go differently next time." Johnathan Nightingale: There's this study. Even if it never touches the employee, even if it's just something that the manager does, you know, they go into their own chat window and say, "Here's what happened. What am I supposed to think about it?" 
Study came out a little while ago, talking about sycophancy in LLM chats and its effect on sort of attribution of fault during conflict. Right? So I get into a fight with someone, or my direct report is pissed off because they didn't get a promotion. Or I've got hard feedback to give to them, and I gave them the feedback, but it didn't go the way I wanted it to. And so I'm using Chat to help me debug it. And what the study found is that the more sycophantic the LLM is, the more likely I am to feel like I did nothing wrong. The more likely I am to bring that sense that I did nothing wrong into my future interactions with that person. And then you cross-multiply with the studies that are well established now, that LLM use suppresses critical thought and critical reflection. And that a major component for leadership development is critical thought and critical reflection. And you're in a bad spot. Even if the employee never receives that GPT message, just me using GPT as my leadership coach is likely to really impair my sense of my relationship with my people, and also my own reflection. Melissa Nightingale: Outside of management and leadership circles, it may not be obvious why that's such a core component. But basically: If you are in a modern organization today, one of the biggest problems that we have of getting bosses to show up differently in the role is that they are often running from meeting to meeting to meeting to meeting to meeting. So if something goes wrong in my first meeting of the day, I have no opportunity -- other than 2 a.m. when I'm staring at the ceiling -- to think about, like, How did that go, and what do I want to have happen next time? Learning like humans are really freaking good at like: "This went this way; like I touched the hot stove. I don't want to touch the hot stove again. And here's what I'm going to do differently." If they've got time to think about it. 
The hard part that we have, in our industry and in our sort of line of work, is that for many leaders there's not any of that baked in anymore. Warzel: I want to get here, near the end, to something bigger, though. That we're kind of circling around all this. Which is: this idea of a constant replacement in work, right? Replacing the expectations of how much work should take up someone's life. You know, the expectations of how much dignity someone should be able to derive from it; the expectations of the path of predictable progress in a given career. The technologies that we often put into these spaces, you know, as we said, they free up space that is then used to, you know, fill more in. You keep piling stuff on here, without the attention and as much of the focus on the nourishing qualities that we're talking about here. And the things that might give people some purchase and some connection. And you've all been writing a little bit around this idea lately of social atrophy, and the role that work plays. What is work's role right now in this broader loneliness epidemic? Third-space dwindling, broader-disconnection feeling that a lot of people are experiencing? Melissa Nightingale: We started to see weird glimmers about two years ago. Where a lot of organizations were saying, like: "I'm managing a team, but my team is geo-distributed, right. And so, I'm managing people all over the world. And sometimes I'm managing them, occasionally in office, but sometimes I'm managing them [remotely] ... and we just don't share a lot of overlapping daylight hours. And we work in sort of our own Zoom windows. And for my folks, where I'm managing them, and they're not interacting with a lot of people, things have gone a little bit weird. And what do I do about that?" Like as a management question, right? Would come into our programs and say, like, "It's not a performance problem, per se. They're showing up for meetings. Like, the camera's off, but they're showing up. 
Things have just gotten wobbly enough that I have an overarching wellness concern. What do I do with that?" Johnathan Nightingale: And the more we looked at it, the more we saw ... you know, Melissa uses this language of work as the last bastion. Robert Putnam wrote Bowling Alone, right, and talked about "People aren't in bowling leagues anymore, but they also aren't in rotary clubs, and they also aren't in churches." That whole sense of, like, our community glue is, you know, eroding. If you want to take the worst version of it. Or certainly evolving. But through all of it -- work is a place that you show up, and you're around other people. And, you know, they see you and appreciate you when you do good things. And give you interesting things to work on. Or at least give you interesting things to talk about while you're getting coffee. When that falls apart, there isn't another backstop. There isn't another place we spend eight hours a day, five days a week. Warzel: So you feel like these tools, as they're further embedded in the workforce -- especially if they're embedded, you know, not critically -- are a real threat to that. Melissa Nightingale: I think if we had community answers for human connection, I would be less worried. But we have eroded most of our social answers that are baked in. We don't live near our relatives anymore. Most of us sort of go away. And then we sort of set up new communities. And maybe we've got some chosen family. But we have a really different context. And our backstop -- for a lot of us, sort of last bastion -- was work. Where we had to go, and we had to socialize. And even when we didn't feel like it, we had to, like, put on our hard pants and get ourselves sorted. And, you know, do the thing. And humans are social creatures. Like, it is core to who we are, the whole way along. Warzel: I fully agree with that. 
And yet I wanna push back slightly, because I can imagine there's people listening here who are going to say: "Work is work. Work is not family; work should be transactional." Johnathan and Melissa Nightingale: Totally. Yeah. Warzel: And these AI tools depersonalizing work in some way -- in making it so that like, yeah, when Tony sends that email, and it's very clear that he doesn't care, because it's a chatbot -- ultimately, that may be even a better thing, right? Or these tools are going to free people up to not have that time. I think we've sort of debunked that part of it. But this idea that impersonalization is actually a feature, not a bug, right? That these companies ... we were talking earlier about Amazon warehouses and things like that. One of the rebuttals to that from the AI-evangelist guy on X.com is gonna be like, "Who cares, man? It's a business. We're supposed to wring out every inch of productivity, or whatever. And yeah, there'll be more bots in the chain, so that we don't have to hang up ibuprofen because people have repetitive-stress injuries." This is sort of the true mercenary level. But also the mercenary level, I think, on the sense of employees who are like, "I've had it. I've been exploited by the system for so long. I actually like the idea that this is going to feel depersonalized." How do you see that butting up against the last-bastion status? Melissa Nightingale: We meet a lot of those folks. We meet a lot of folks who are like, "I just don't care anymore. I have cared a lot. And I really feel like I'm ready to care less." It's hard. And the reality for those folks is ... it's tricky. You can put yourself into a role in an organization. That you think you'll be fine. And like, you will still manage to get yourself promoted. And they will still figure out that you've got ideas, and you will still find your way to ... like, they often find their way back to, "Okay, I do care about this. 
I don't necessarily need it to be like my entire waking hours, or my entire personality. I need some space from it, and some other elements in play." But it's hard. It's very hard. A lot of folks have work as a key component, and a core element, of their identity. And you can say, "Well, they shouldn't. Like, they're silly; they're foolish for feeling like that's a moment of profound identity shift." But again, we have to work with humans as they are, and not as we wish them to be. I think it's okay that people care about their work. Johnathan Nightingale: Yeah, when you hear that -- have boundaries. Get out of toxic workplaces, right? Because nobody's saying "Wherever you are, that's where you must be forever. Otherwise, you're ungrateful. Otherwise, you're not applying yourself." Like, that's foolish. Like, if you need to get out, get out. If you need to keep yourself safe, keep yourself safe. But, in a deeper sense, I don't believe you. Like, we do actually care about this shit, and so, like, if you don't care about your job, that's fair. Do whatever you need to do. Capitalism's mean, right? But, you will not sell me on "Nobody cares about their jobs" or that it's not worth caring about your job. Because so many of the people we find who draw so much meaning from it don't see themselves in that at all. Warzel: So to end then, with that in mind, how does that intersect with this idea that, you know, these AI tools are going to destroy knowledge work or white-collar work or whatever? Right? Like, is that a bulwark against it? The idea that there's a lot of people out there that fundamentally give a shit? Like, 'cause it feels to me, if that part is really true, you're going to have this technology come right up against people, like a major pillar of who they are, right? And I don't think that there is a sense that yes, capitalism can sort of just, you know, knock people over, bend people to their whims. 
But I also think that, it seems to me, like this is all geared to meet some really heavy resistance from people who work. Do you think that's true? Melissa Nightingale: Remember that protest looks a lot of different ways. But like, me feeling like, as an employee, I've got options in terms of where I work. And who my colleagues are, and whether I have colleagues at all. Means that organizations that sort of lend themselves to that -- or sort of specifically go out with a message that says, like: This is what we're doing. We're gonna have colleagues. It's gonna be great. You're gonna love it. Like you're gonna be in an occasional meeting that isn't useful. It'll be okay. Johnathan Nightingale: But there'll be small talk beforehand. And nobody likes small talk. But it is interesting to see, you know, how her vacation went and stuff. Melissa Nightingale: But like, you will see change, I think. Less through walkouts and more through people feeling like the pendulum swings back, and organizations are trying to hire again. And AI companies, in particular, are very skilled at what it looks like to have a very hot talent market and to have to compete on the merits of the organization. Johnathan Nightingale: Isn't that wild? That while they're telling everybody else to lay off your team, and pay the remaining ones as little as possible for doing 10 people's worth of work. Melissa Nightingale: They are having 2021's version of the labor market. Johnathan Nightingale: Like, just throw any amount of money at getting top talent. We need the right people in the door, otherwise we're not going to be able to build the future. Like, what? I thought Claude was doing that. Warzel: That's it for us here. Thank you again to my guests, Johnathan and Melissa Nightingale. If you liked what you saw, new episodes of Galaxy Brain drop every Friday. You can subscribe on the Atlantic YouTube channel, or on Apple or Spotify or wherever it is that you get your podcasts. 
And if you wanna support this work and the work of my colleagues, you can subscribe to the publication at TheAtlantic.com/Listener. That's TheAtlantic.com/Listener. Thanks so much, and I'll see you on the internet. This episode of Galaxy Brain was produced by Renee Klahr and engineered by Miguel Carrascal. Our theme is by Rob Smierciak. Claudine Ebeid is the executive producer of Atlantic audio, and Andrea Valdez is our managing editor.

[5]

Economists Are Drawing Stronger Connections Between A.I. and Jobs