OpenAI proposes robot taxes and four-day work weeks to prepare for superintelligence era

25 Sources

[1]

OpenAI's vision for the AI economy: public wealth funds, robot taxes, and a four-day work week | TechCrunch

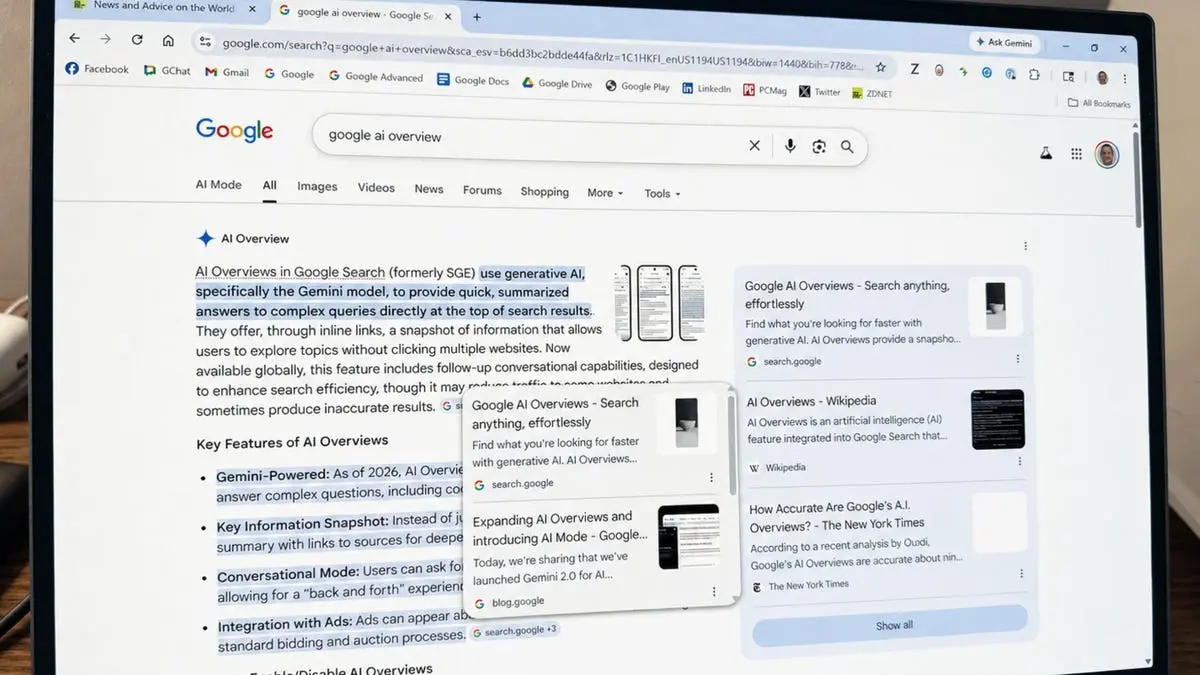

As governments grapple with how to manage the economic fallout of superintelligent machines, OpenAI has released a set of policy proposals outlining the ways wealth and work could be reshaped in an "intelligence age." The ideas blend traditionally left-leaning mechanisms like public wealth funds and expanded social safety nets with a fundamentally capitalist, market-driven economic framework. The proposals were released amid intensifying anxiety around AI, which has been colored by concerns over job displacement, wealth concentration, and data center buildouts across the country. They've also arrived as the Trump administration moves toward a national AI framework and in the run-up to the midterm elections, signaling an attempt at bipartisan positioning. That effort sits alongside a more direct political push: OpenAI President Greg Brockman -- who has donated millions to President Donald Trump -- and other tech billionaires have funneled hundreds of millions into super PACs supporting light-touch AI policies. OpenAI's proposed framework centers on three stated goals: distributing AI-driven prosperity more broadly, building safeguards to reduce systemic risks, and ensuring widespread access to AI capabilities so that economic power and opportunity don't become too concentrated. OpenAI has proposed shifting the tax burden from labor to capital. The company stops short of specifying a corporate tax rate -- which Trump dropped to 21% from 35% during his first term. But OpenAI warns that AI-driven growth could hollow out the tax base that funds Social Security, Medicaid, SNAP, and housing assistance as corporate profits expand and reliance on labor income shrinks. "As AI reshapes work and production, the composition of economic activity may shift -- expanding corporate profits and capital gains while potentially reducing reliance on labor income and payroll taxes," OpenAI wrote. 
The company suggests higher taxes on corporate income, AI-driven returns, or capital gains at the top -- a category of policy that pushed Marc Andreessen to back Trump after Biden proposed taxing unrealized capital gains in 2024. OpenAI also floats a potential robot tax, an idea Microsoft co-founder Bill Gates proposed in 2017, under which a robot would pay taxes at a level similar to the human worker it replaced. The document also includes a proposal to create a Public Wealth Fund to give Americans an automatic public stake in AI companies and AI infrastructure, even if they're not invested in the market. Any returns would be distributed directly to citizens. The prospect may appeal to Americans who have watched AI inflate the market without seeing any of those gains themselves. Several of OpenAI's proposals were also more labor-focused, including one to subsidize a four-day work week with no loss in pay -- a proposal that aligns with the tech industry's promises that AI will give humans better work-life balance. OpenAI also suggests that companies boost retirement matches or contributions, cover a larger share of healthcare costs, and subsidize child or eldercare. Notably, OpenAI frames these as corporate responsibilities rather than government ones, leaving out the people AI is most likely to displace. If automation eliminates your job, your employer-subsidized healthcare and retirement match may go with it. That said, OpenAI does separately propose portable benefit accounts that follow workers across jobs, but these still likely depend on employer or platform contributions and stop short of the government-backed universal coverage that would actually protect people AI displaces entirely. OpenAI acknowledges that the risks of AI go beyond job loss, including misuse by governments or bad actors and the possibility of systems operating beyond human control. 
To mitigate those threats, it proposes containment plans for dangerous AI, new oversight bodies, and targeted safeguards against high-risk uses like cyberattacks and biological threats. But with the safety nets and guardrails come the growth proposals, including expanding electricity infrastructure to support AI's power demands and accelerating AI infrastructure buildouts by offering subsidies, tax credits, or equity stakes. OpenAI says AI should be treated like a utility, and to that end, suggests industry and government work together to ensure AI remains affordable and widely available, rather than controlled by just a few firms. OpenAI's framework comes six months after rival Anthropic released its policy blueprint, which laid out a range of possible responses to AI-driven disruption. "We are entering a new phase of economic and social organization that will fundamentally reshape work, knowledge, and production," OpenAI wrote. This, the company says, requires a "new industrial policy agenda that ensures superintelligence benefits everyone." OpenAI was founded as a nonprofit premised on AI benefiting all of humanity. It became a for-profit company last year, a shift that has led critics to question whether its stated mission is compatible with its need to grow and fulfill its fiduciary duty to shareholders. The company cited previous ages of economic upheaval like the Industrial Age, pointing to how new economic and financial movements like the New Deal ensured "growth translated into broader opportunity and greater security" by "building new public institutions, protections, and expectations about what a fair economy should provide, including labor protections, safety standards, social safety nets, and expanded access to education." 
"The transition to superintelligence will require an even more ambitious form of industrial policy, one that reflects the ability of democratic societies to act collectively, at scale, to shape their economic future so that superintelligence benefits everyone," OpenAI wrote.

[2]

OpenAI Touts 4-Day Work Week, Wealth Fund to Sell Public on Next-Gen AI

To win over AI skeptics, OpenAI is pushing some big ideas to ensure the technology benefits society, including a four-day workweek, robot taxes, and even a "public wealth fund" meant to put cash in everyone's pocket. Amid growing concern that generative AI will lead to massive job losses for human workers, not to mention the memory shortage caused by the AI data center scramble, OpenAI published a 13-page document outlining various measures governments could take to rein in AI. But it also comes across as a sales pitch for why countries should continue to invest in artificial intelligence, despite the potential toll on consumers. OpenAI CEO Sam Altman discussed the blueprint with Axios, which notes that the most radical proposal is the creation of a "public wealth fund" that would give every member of society "a stake in AI-driven economic growth." Importantly, the fund would apply to "every citizen -- including those not invested in financial markets." The fund would invest seed capital in AI-related assets and return profits to citizens. The document also calls for incentivizing companies to experiment with "32-hour/four-day workweek pilots with no loss in pay that hold output and service levels constant," arguing that AI's efficiency gains should translate into benefits for workers. The other interesting proposal is to impose taxes on automation to ensure the US has enough tax revenue to support Social Security and Medicaid. OpenAI released the document in the hope that it will kick off some public discussion. Notably, the company is teasing that it's close to creating even more powerful AI models, which it calls superintelligence. "Now, we're beginning a transition toward superintelligence: AI systems capable of outperforming the smartest humans even when they are assisted by AI," the company wrote. "No one knows exactly how this transition will unfold." 
Still, OpenAI's policy document might be overshadowed by a New Yorker article published today that alleges Altman is a persistent liar, citing interviews with more than 100 people. Plus, the company's release of GPT-5 last year underwhelmed, raising concerns that OpenAI is hitting a wall on the technology's development. Altman, however, tells Axios that superintelligence is not only close, but so mind-bending and disruptive that it requires a new social contract in the US.

[3]

OpenAI Advocates Electric Grid, Safety Net Spending for New AI Era

The goal of the proposals is to serve as a "starting point" for a wider discussion "to ensure that AI benefits everyone," according to OpenAI. OpenAI has released a set of policy recommendations meant to help navigate an era of artificial intelligence-fueled upheaval -- including suggesting the creation of a public wealth fund, fast-response social safety net programs and speedier electrical grid development. In a document released Monday titled "Industrial Policy for the Intelligence Age: Ideas to Keep People First," OpenAI proposed a range of policies related to AI "superintelligence" -- often referred to as software that can outperform humans at all kinds of tasks, but which does not currently exist. Many of the proposals are tied to social change driven by AI, which some fear could lead to widespread job losses. The company advocates for a public wealth fund that will distribute cash to citizens, giving them "a stake in AI-driven economic growth." It proposes finding a way to let people share in efficiency gains driven by AI -- including by incentivizing employers to experiment with four-day work weeks, as long as workers' output doesn't fall. And it suggests actively measuring how AI affects wages and unemployment -- and then, once "these metrics exceed pre-defined thresholds," offering workers increased social assistance like unemployment benefits or job training. In an interview, OpenAI's chief global affairs officer Chris Lehane said the policy conversations around AI need to be "as transformative" as the technology itself. Founded in 2015, OpenAI kicked off the current boom in generative AI in late 2022 with the release of ChatGPT, which remains its most well-known product. 
Originally built as a nonprofit dedicated to advancing AI to benefit humanity, the startup has since restructured into a more traditional for-profit company. OpenAI has said for years that it's working to build what's often referred to as artificial general intelligence, or AGI -- essentially, computers that can do most tasks as well as people. More recently, the company and some of its rivals have discussed plans for more powerful software, or superintelligence. In its latest document, OpenAI defined superintelligence as "AI systems capable of outperforming the smartest humans even when they are assisted by AI." While OpenAI's ChatGPT is used by more than 900 million people globally each week, many in the US have negative feelings about AI generally, driven in large part by concerns about job displacement as well as power-hungry data centers. Companies like OpenAI and Anthropic, which are at the forefront of AI advancement, have sought to educate the public and policymakers about the potential changes wrought by AI. That's included a range of work on communication, including last week, when OpenAI bought the tech talk show TBPN. "It is simply not good enough to wave your hands and say, 'Here's all the things that are going to happen and then not actually come up with solutions,'" Lehane said.

[4]

OpenAI encourages firms to trial four-day weeks in AI era

ChatGPT-maker OpenAI says employers should consider trialling a four-day work week as AI use and demand grow in the workplace. Its "people-first" policy proposals set out a range of ideas to help society adjust to an AI era - something it says will bring benefits but also disruption to our lives and careers. Among its suggestions were creating more work opportunities in people-facing sectors such as childcare, education and healthcare. The company said its set of initial ideas - chiefly aimed at the US - were intended to prompt discussions about action needed as AI systems become more capable. Rapid reductions in the time taken by AI tools to complete some tasks mean a transition to advanced AI is in sight, OpenAI said in its Industrial Policy for the Intelligence Age report. "If progress continues, we can expect systems to be capable of carrying out projects that currently take people months," it added. "This shift will reshape how organisations run, how knowledge is created, and how people find meaning and opportunity." The company said to plan for this, firms should be incentivised to find "durable improvements in workers' benefits" - such as by piloting four-day work weeks with no loss in pay. Businesses could also increase retirement contributions, cover more healthcare costs and subsidise childcare, OpenAI said. It comes after warnings that the rise of increasingly capable AI tools could displace people from jobs. Bank of England governor Andrew Bailey said in December such displacement could mirror that seen during the Industrial Revolution. However, others have said the impact of AI may be felt much later than tech firms predict. This is not the first time a large AI company has set out its vision for social or economic changes needed to manage increased use and demands of the tech. 
Some of OpenAI's suggestions, such as creating a "public wealth fund" to give citizens a stake in AI-driven economic growth, mirror a set of policy ideas published by rival firm Anthropic in October. Anthropic's proposals said workers and students should be equipped with skills needed for emerging jobs, and planning processes should be revised to allow for more energy and computing infrastructure. More broadly, firms have ploughed ahead with AI development - including of "superintelligence" they believe could outsmart humans - while warning of its detrimental impact to some areas of society. But some also believe the tech's impact has been overstated, saying it may be years before any transformational effect on jobs, productivity and the economy is seen. Adam Slater, lead economist at Oxford Economics, wrote in a recent research note that many scenarios regarding AI's transformative growth "rely on optimistic modelling assumptions about micro-productivity gains and the pace of AI adoption, or on AI sharply raising the rate of generation of new ideas". He added that while past periods of technological change and advance showed potential for large productivity gains, these "can take decades to materialise and can also tail off surprisingly quickly".

[5]

OpenAI Releases Its Vague Vision for Reorganizing Society Around Superintelligence

OpenAI says the emergence of superintelligence, AI models that can outperform humans, will be as disruptive as the Industrial Revolution and will require a new social contract. In fact, like every other AI company, Sam Altman's startup has been saying that for years while offering very few ideas for what anyone is supposed to do about it. On Monday, the chatbot maker outlined a set of policy proposals aimed at rectifying that situation in a paper called “Industrial Policy for the Intelligence Age: Ideas to Keep People First.” The paper, which OpenAI says is meant to start a broader conversation about navigating AI’s impact on society, lays out a range of policy ideas, including a public wealth fund, four-day work weeks, investments in the electric grid, and a stronger social safety net. “No one knows exactly how this transition will unfold. At OpenAI, we believe we should navigate it through a democratic process that gives people real power to shape the AI future they want, and prepare for a range of possible outcomes while building the capacity to adapt,” the company said in the paper. The document comes as U.S. politicians remain split on how to regulate the lucrative industry. President Donald Trump, whom tech CEOs have spent the past year cozying up to, signed an executive order in December limiting what his administration described as overly burdensome state-level AI regulations in the name of national and economic security. More recently, progressive Sen. Bernie Sanders and Rep. Alexandria Ocasio-Cortez have been working on bills that would impose a national moratorium on the construction or expansion of new AI data centers until Congress passes legislation that “ensures the safety and prosperity of the American people.” OpenAI’s new paper vaguely attempts to address some of those concerns. 
It states that the company believes “AI’s benefits will far outweigh its challenges,” while acknowledging risks such as job losses, misuse of the technology, greater concentration of power and wealth, and even the possibility of AI systems becoming uncontrollable. Most of the paper focuses on the economic impact of AI. Among its proposals is a public wealth fund that would give every citizen a stake in “AI-driven economic growth.” The fund would invest in long-term assets tied to the AI economy, with returns distributed directly to the public. The company also suggests rethinking the tax system as AI changes how people work, shifting more toward taxing corporate profits and capital gains while reducing reliance on labor income and payroll taxes. It floats the idea of new taxes tied to automated labor. Additionally, the paper calls for expanding the social safety net, potentially on a temporary, automatic basis triggered by factors like rising unemployment and other economic indicators. It also proposes incentivizing employers to pilot 32-hour work weeks without reducing pay by tying those changes to productivity gains driven by AI. Beyond economic changes, the paper also outlines broader proposals, including strengthening the electric grid to support AI infrastructure and building new safety and oversight systems to reduce risks from advanced AI. OpenAI said it is accepting feedback on its proposals and plans to host discussions at its OpenAI Workshop, which is set to open in May in Washington, D.C. The company also announced plans to pilot fellowships and research grants of up to $100,000 and up to $1 million in API credits for projects that build on these policy ideas. It remains to be seen how serious OpenAI is about turning these proposals into reality, or whether they have any real shot at becoming policy at all. 
But there’s little doubt the company has an incentive to at least look like it cares, especially as it tries to reassure investors and politicians ahead of a potential IPO.

[6]

OpenAI calls for robot taxes, a public wealth fund, and a four-day week

Sam Altman's 13-page policy blueprint, 'Industrial Policy for the Intelligence Age,' proposes auto-triggering safety nets, containment playbooks for rogue AI, and direct citizen dividends from AI-driven growth. He told Axios it is a starting point, not a prescription. OpenAI has published a 13-page policy document calling for sweeping economic reforms to prepare for what it describes as approaching superintelligence, including taxes on automated labour, a national public wealth fund seeded partly by AI companies, and pilots of a 32-hour working week. The document, titled 'Industrial Policy for the Intelligence Age: Ideas to keep people first,' was released as Congress prepares to debate AI legislation. CEO Sam Altman told Axios in an exclusive interview that the scale of change coming from AI is comparable to the Progressive Era and the New Deal, and that the two most immediate dangers are cyberattacks and biological weapons enabled by advanced AI. The most radical proposal in the document is the public wealth fund. OpenAI suggests the government create a nationally managed fund, seeded in part by contributions from AI companies themselves, that would invest in AI firms and other businesses adopting the technology and distribute returns directly to American citizens. The model is comparable to Alaska's Permanent Fund, which pays annual dividends to state residents from oil revenues. On labour, the document floats taxes on automated labour and a shift in the tax base from payroll towards capital gains and corporate income, an acknowledgement that AI could hollow out the wage-and-payroll revenue that currently funds Social Security. The 32-hour workweek proposal is framed as an 'efficiency dividend' from AI-driven productivity gains. The document includes a section on what it calls 'containment playbooks' for scenarios in which dangerous AI systems become autonomous and capable of replicating themselves. 
OpenAI acknowledges scenarios where such systems 'cannot be easily recalled,' and proposes government co-ordination as the response. The blueprint also envisions automatic safety net triggers: when AI-driven displacement metrics hit preset thresholds, benefits including unemployment payments and wage insurance would increase automatically, then phase out when conditions stabilise. Altman told Axios that a major cyberattack enabled by near-future AI models is 'totally possible' within the next year, and that AI models being used to create novel pathogens is 'no longer theoretical.' Altman was candid with Axios about the dual nature of the document. OpenAI is the company racing to build the very technology it is warning about, and positioning itself as the responsible actor proposing solutions is plainly also a strategy to shape regulation before regulation shapes it. Anthropic has occupied a similar lane. The policy paper arrives at a moment when OpenAI is preparing for an IPO, has closed a $110 billion private funding round, and is simultaneously under scrutiny over its conversion from non-profit. Whether the altruism is genuine or strategic, Altman told Axios: 'Some will be good. Some will be bad. But we do feel a sense of urgency. And we want to see the debate of these issues really start to happen with seriousness.'

[7]

OpenAI proposes new AI doom scenario

The big picture: The 13-page document released Monday seeks to lay down a marker for the kinds of policy shifts that might help AI advances create broad prosperity, rather than a divided society with a handful of AI-turbocharged elites and a mass underclass.

* Many of the proposals in "Industrial Policy for the Intelligence Age" are outside the zone of political plausibility right now -- and some will seem familiar to readers of white papers from left-of-center think tanks.
* These ideas would become viable only if the disruption from AI is so severe as to profoundly scramble U.S. political coalitions.

Catch up quick: As Mike Allen and Jim VandeHei report, the proposals amount to a policy response to AI akin to the Progressive Era and New Deal policies that smoothed the rough edges off industrial capitalism.

* The paper explores steps to broaden AI's benefits, including creating a national wealth fund, shifting more of the tax burden onto capital instead of labor, and bolstering the social safety net.
* "We want to put these things into the conversation," OpenAI CEO Sam Altman told Mike and Jim. "Some will be good. Some will be bad. But ... we do feel a sense of urgency."

The intrigue: At a time when Sen. Bernie Sanders (I-Vt.) has become perhaps the leading congressional antagonist of the AI industry, many of the ideas are distinctly Sanders-coded.

* Conversely, many would be anathema to the Republican Party and even many centrists.

Zoom in: For example, the conservative movement's central economic policy goal since President Ronald Reagan has been to reduce taxes on capital, seeing it as the pathway to unleash more investment and thus higher growth.

* To that end, the top tax rate on capital gains is 20% (versus 37% for labor income), and a signature accomplishment of the 2017 Trump tax legislation was reducing the corporate income tax rate to 21%, from 35%.
* The OpenAI paper envisions a world where labor income is under stress as machines take over much of the work humans currently do, with the returns going to holders of capital.
* In effect, it would invert the tax code when the problem is not too little productivity growth, but too much of it for comfort.

What they're saying: "This could erode the tax base that funds programs like Social Security, Medicaid," and more, putting them at risk, the document says. "Tax policy should adapt to ensure these systems remain durable," it argues.

* "Policymakers could rebalance the tax base by increasing reliance on capital-based revenues -- such as higher taxes on capital gains at the top, corporate income, or targeted measures on sustained AI-driven returns," the paper says, adding that they could explore "taxes related to automated labor."

Yes, but: When extreme economic developments materialize, the rigid U.S. policymaking process has shown a surprising ability to unite and leap into action, overcoming partisan divides.

* The passage of the CARES Act and other pandemic relief measures in 2020 was the most recent example. The same could be said of the Troubled Asset Relief Program bank bailout legislation of 2008.

The bottom line: The ideas advanced by OpenAI might not be on the legislative radar today or tomorrow, but they represent an early effort to take policy implications of large-scale economic disruption seriously.

[8]

OpenAI says AI could mean a 4-day week 'with no loss in pay' -- but warns jobs and wealth are at risk

OpenAI is proposing a full economic reset -- taxes, wealth sharing, shorter work, and AI as infrastructure.

We tend to think of OpenAI and AI companies in general as pushing for minimal regulation so nothing slows their expansion. But in a new document, "Industrial Policy for the Intelligence Age," OpenAI argues the opposite: that government oversight isn't just necessary; we need it now. It suggests we need a new kind of industrial policy to deal with the upheaval AI will create: "The transition to superintelligence will require an even more ambitious form of industrial policy, one that reflects the ability of democratic societies to act collectively, at scale, to shape their economic future so that superintelligence benefits everyone." The risk to jobs is framed as the most immediate threat. "While we strongly believe that AI's benefits will far outweigh its challenges, we are clear-eyed about the risks -- of jobs and entire industries being disrupted," the document says. It also points to wider concerns, including threats to democracy, wealth concentrating in the hands of a few, and "bad actors" misusing the technology. So, what happens to people whose jobs are displaced? OpenAI suggests expanding what it calls the "care and connection economy" (roles in childcare, eldercare, education, healthcare, and community services) as a new pathway for workers. It also proposes turning AI-driven efficiency gains into tangible benefits for employees. That's where one of its most eye-catching ideas comes in. OpenAI suggests governments and employers should "incentivize employers and unions to run time-bound 32-hour/four-day workweek pilots with no loss in pay that hold output and service levels constant." If successful, those shorter weeks could become permanent or translate into more paid time off. It's an appealing vision: AI does more of the work, and humans get more time back. 
But it rests on a big assumption -- that those productivity gains actually translate into higher wages and not just higher profits.

Tax the rich

The document from OpenAI also hints at a more fundamental shift needed in how economies are taxed. If AI reduces the need for human labor, it argues, governments may need to rely less on taxing workers and more on taxing capital and the companies benefiting most from automation. In other words, if AI is doing more of the work, the businesses profiting from it may need to shoulder more of the burden. One of the more radical proposals contained in the document is the idea of a public wealth fund. It's effectively a way of redistributing the gains from AI across society. Instead of those profits sitting with a handful of companies, they could be pooled and returned to citizens, echoing models like sovereign wealth funds. What's most striking about this document, however, is how OpenAI frames AI itself. This is no longer just about better chatbots or smarter tools. The document positions AI as something closer to infrastructure -- a foundational layer that will underpin entire industries, economies, and public services.

The future is bright

OpenAI is arguing that AI could reshape the economy for the better, while also outlining how easily it could concentrate wealth and power if left unchecked. History has told us that humans are generally bad at long-term planning, and it's going to take a very different mentality from the one that our political leaders currently embody to implement these ideas. It's also an unusual position for OpenAI to put itself in: the company building the AI technology is now helping sketch out how AI should be taxed, regulated, and redistributed. Whether that's forward-thinking or self-serving will depend on who you ask, although the idea of a four-day work week would get my vote.

[9]

OpenAI Says Not to Worry About UBI, Because It Has Another Idea

As AI companies warn of mass automation and the end of work as we know it, ChatGPT maker OpenAI has published a sweeping policy paper outlining its vision of who should call the shots once "superintelligent AI" goes online. Beneath the paper's flowery rhetoric is something familiar: a powerful corporation arguing that the best way to provide for the poorest people in the world is to let the richest do whatever they want. The paper starts off by warning -- ironically -- that superintelligent AI could concentrate wealth among a small number of companies. Its solution to this problem, at a time when nearly 48 million Americans already struggle with hunger, is a "public wealth fund," which would be a program that provides "every citizen" with a "stake in AI-driven economic growth." Instead of expanding tested programs like a universal basic income or unemployment insurance, in other words, OpenAI's proposal is to hitch the public's wellbeing to the boom-and-bust cycles of the tech industry. "Policymakers and AI companies should work together to determine how to best seed the fund, which could invest in diversified, long-term assets that capture growth in both AI companies and the broader set of firms adopting and deploying AI," the paper suggests. "Returns from the fund could be distributed directly to citizens, allowing more people to participate directly in the upside of AI-driven growth, regardless of their starting wealth or access to capital." Of course, in a market economy constant growth isn't guaranteed, even if we do somehow achieve superintelligence. What happens if the tech companies suddenly post a bad quarter? What if our incredible AI system decides to take over Wall Street? What workers need isn't a wealth fund indexed to tech industry profits. They need universal healthcare, access to fresh food, and housing that isn't tied to the ebbs and flows of the stock market. 
Unlike AI superintelligence, this isn't a utopian fantasy. It's the baseline that most wealthy democracies already provide -- no AI required.

[10]

OpenAI's AI new deal: A Bernie Sanders moment?

A version of this article originally appeared in Quartz's Washington newsletter. Artificial intelligence may upend the relationship between labor, private enterprise, and American government in the years to come. Enter OpenAI, the AI giant that wants to supply ideas that could compose a new social contract akin to the New Deal. OpenAI, the company behind ChatGPT, on Monday released a new policy roadmap that mixes progressive and free-market proposals into a framework that casts the company as a responsible corporate actor -- even as it faces scrutiny over its long-term finances. In OpenAI's vision of the future, workers own a stake in AI's rewards should the staggering sums poured into the rapidly growing sector pay off. Notable ideas include a public wealth fund seeded by AI firms and later invested into long-term assets. Americans would receive that money as dividends down the road, even if they weren't shareholders in AI. "Unless policy keeps pace with technological change, the institutions and safety nets needed to navigate this transition could fall behind," the company said in the 13-page policy document. "Ensuring that AI expands access, agency, and opportunity is a central challenge ... we should aim for a future where superintelligence benefits everyone." The concepts in the document aren't new. Many of them have circulated in Congress and Washington policy circles for years -- usually among progressive lawmakers. The blueprint draws from ideas long endorsed by Sen. Bernie Sanders of Vermont, a prominent progressive. He's strongly criticized AI development as another path for Silicon Valley executives to grow their wealth and power at the expense of the middle class. Notably, Sanders supports a pause on data center construction. 
The overlap between what Sanders supports and OpenAI's platform includes: "The goal here is really to start a conversation," Altman told Axios. "That said, the receptiveness from all directions to the fact that we are going to have to try some different things and the sooner we can start talking through them, the better, has surprised me." Still, the company hasn't shaken off questions about its ability to turn a profit and justify its eye-popping valuation. In February, OpenAI reset expectations and said it planned $600 billion in spending commitments through 2030, cutting its initial forecast by over half. In 2025, OpenAI generated only $13 billion in revenue. "The idea that permeates this paper is that AI is going to create a ton of wealth when today it's spending a ton of wealth," Matt Calkins, CEO of enterprise software firm Appian, told Quartz. He favored some pieces of the OpenAI document, such as incentivizing an expansion of the energy supply that accommodates power-hungry data centers by reducing red tape. That part of the platform has steadily gained traction on Capitol Hill. Sens. Josh Hawley of Missouri and Richard Blumenthal of Connecticut are among those who put forward legislation obligating data centers to develop their own power sources. Still, most of OpenAI's platform isn't likely to become law under the Trump administration. That's far from the only attempt from the tech sector at steering the national debate around the AI it is developing. Tech executives are gearing up to spend big in this year's midterms as Republicans seek to hang onto control of the House and Senate. OpenAI President Greg Brockman is among those pouring at least $100 million into Leading the Future, a pro-AI super PAC that backs candidates in both parties favoring a one-size-fits-all approach to AI regulation. Last month, the group notched some successes across key primaries in North Carolina, Texas, and Illinois. 
Leading the Future's list of GOP endorsements hasn't escaped notice from progressives. "Now they just need to stop spending hundreds of millions of dollars to defeat candidates who run on these policies!" Jeremy Slevin, a senior advisor to Sanders, wrote on social media referring to the OpenAI platform. Sanders' Senate office didn't return a request for comment. AI's impact on the labor market is beginning to emerge in economic data. Analysts from Goldman Sachs and JPMorgan said in recent reports that AI has both destroyed and created new jobs in its wake. So far, employment disruption is limited: Goldman said AI substitution in certain sectors like phone operations elevated the unemployment rate by 0.16% over the past year. OpenAI has joined other tech firms like Anthropic in putting forward proposals to soften AI's blow on the labor market. Much of the blueprint is a starting point for debate, and it'll be up to policymakers to take it up or cast it aside as a PR play.

[11]

Sam Altman and Vinod Khosla agree: AI will break the economy. Their fix is no income tax for most Americans | Fortune

A month after venture capitalist Vinod Khosla floated his own tax overhaul, OpenAI has made it clear that his thinking may be the emerging consensus of Silicon Valley's most powerful voices on how to prevent artificial intelligence from tearing the social fabric apart. On Monday, OpenAI released a 13-page policy paper titled "Industrial Policy for the Intelligence Age: Ideas to Keep People First," in which Sam Altman's company laid out a sweeping blueprint for economic reform on a scale it compared to the Progressive Era of the early 1900s and Franklin Roosevelt's New Deal of the 1930s. The central thrust: as AI systems approach superintelligence -- defined as capabilities that surpass the smartest humans -- the existing tax code, labor market, and social safety net are all dangerously unprepared for what's coming. The overlap with Khosla's vision is hard to miss. Khosla's March proposal on Fortune's Titans and Disruptors of Industry podcast was elegant in its simplicity: eliminate the preferential tax rate on capital gains, tax all income -- whether earned from a paycheck or an investment portfolio -- at the same rate, and use the windfall to exempt everyone earning under $100,000 from federal income taxes entirely. He estimated that 40% of all capital gains taxes are paid by people earning more than $10 million annually, making the math work without increasing the overall tax burden. OpenAI's blueprint lands in the same territory, albeit through a slightly different door. The company's paper calls for shifting the tax base away from payroll and labor income -- the very revenue streams that AI threatens to hollow out -- and toward corporate income and capital gains. It also floats what many have termed a "robot tax," proposing levies on automated labor to capture a share of the productivity gains that would otherwise flow exclusively to capital owners. Both Khosla and OpenAI framed the need for a major policy overhaul around the sweeping changes they expect exponential improvement in AI tools to bring. 
OpenAI warns that as AI automates more work, the wage and payroll tax revenue that funds Social Security, Medicaid, SNAP, and housing assistance could collapse. Shifting to capital-based taxation isn't just equitable, it argues, it's fiscally necessary. Both visions converge on the same uncomfortable assertion: the American tax system was designed for an economy where most value was created by human labor. That economy is disappearing. Khosla is not a passive observer of OpenAI's trajectory -- he was an early investor in the company. His argument that AI could automate 80% of current jobs by 2030 provides the economic backdrop against which OpenAI's policy paper reads less like corporate positioning and more like an alarm bell. During his Fortune interview, Khosla situated the problem in terms that go beyond tax policy. In an AI-driven economy, he argued, the traditional balance of income between labor and capital will tilt dramatically. "Capitalism was about economic efficiency," he said, "but if the need for efficiency goes away because of extreme abundance, then why focus on efficiency?" OpenAI's paper echoes that logic almost beat for beat. Its most radical proposal is a nationally managed public wealth fund, seeded in part by AI companies themselves, that would invest in diversified assets across the AI economy and distribute returns directly to American citizens -- a mechanism designed to give every person a stake in the technology that might otherwise render their skills obsolete. Khosla himself has endorsed the idea of a national wealth fund, and the symmetry between his individual advocacy and OpenAI's institutional proposal suggests that a policy framework is crystallizing within the AI industry's upper echelons. Still, the fact that a major VC and the company he invested in are singing the same tune hasn't silenced the skeptics. 
Anton Leicht, a visiting scholar with the Carnegie Endowment for International Peace, called OpenAI's paper "comms work to provide cover for regulatory nihilism" -- big ideas floated to project responsibility while the company builds at full speed. The paper landed on the same day The New Yorker published a lengthy investigation raising questions about Altman's trustworthiness on safety issues, a timing that did not go unnoticed. And the political headwinds are fierce. Taxing capital gains at ordinary income rates is a proposal that pushed Marc Andreessen to back Donald Trump after President Biden floated a plan to tax unrealized gains in 2024. OpenAI's paper conspicuously avoids specifying a corporate tax rate, a diplomatic omission that suggests the company knows where the political landmines are buried. The irony of Khosla's position is that he's making a case for bold federal tax reform while fighting a rearguard action in his home state against what he considers a catastrophically misguided local experiment. California's proposed Billionaire Tax Act would levy a one-time 5% tax on residents worth more than $1 billion -- a measure Khosla has called the behavior of "a junkie" chasing a one-time fix while permanently damaging the state's tax base. By some estimates, more than $1 trillion in billionaire wealth has already left California in anticipation of the ballot measure. Google co-founders Larry Page and Sergey Brin have reportedly taken steps to sever ties with the state. Even Gov. Gavin Newsom has said the measure "makes no sense." Khosla's counter-vision -- federal reform that taxes capital more aggressively while relieving the burden on working Americans -- is designed to be a policy that billionaires can live with and workers can vote for. As he put it: "They will vote for a candidate who says no taxes if you make less than $100,000." Both Khosla and OpenAI agree on at least one thing: the window for action is narrowing. 
Khosla predicted that structural tax reform will arrive before 2040 and could become a defining issue in the next presidential campaign cycle. OpenAI's paper calls for automatic safety-net triggers that would expand benefits when AI displacement hits preset thresholds, an acknowledgment that the disruption may arrive faster than any legislative process can handle. Goldman Sachs research has estimated that AI is already cutting roughly 16,000 U.S. jobs per month, with younger workers bearing a disproportionate share. OpenAI itself warns of scenarios where advanced AI systems become autonomous and self-replicating -- systems that, in its own words, "cannot be easily recalled." Against that backdrop, the question is no longer whether the tax code needs to change but whether Washington can move fast enough. Khosla, for his part, is betting the real battle will be fought in Congress, not in Sacramento. And now, with OpenAI's 13-page document in hand, he has the most powerful company in AI essentially co-signing his thesis. Whether that amounts to genuine policy momentum or, as critics contend, an elaborate exercise in reputation management may be the defining question of the political economy of the AI age.

[12]

OpenAI backs robot taxes and shorter workweeks in AI push

The company believes that AIs will soon become smarter than humans, which will have a drastic effect on how people work, live and pay taxes. OpenAI is urging governments to rethink the foundations of the economy, including how people work, earn, and pay taxes, as the shift to artificial intelligence (AI) continues. The company outlined "initial ideas" in a policy document on Monday to mitigate the disruption that artificial intelligence is bringing to the United States and the global economy. One of the major policy suggestions is to create a public wealth fund that provides all citizens with a stake in AI-driven economic growth. It could invest in diversified, long-term assets that capture growth in both AI companies and broader companies that are adopting and deploying the technologies, with the returns going straight to the citizens, the document said. Governments should also incentivise companies to launch four-day workweek pilot programs with "no loss in pay," as a way to pass on to workers the productivity gained from using AI, the company said. Lawmakers should also consider modernising the American tax system to increase taxation on corporate income and capital gains, rather than labour income, which could be affected by a wave of AI-related job losses and impact social benefits. Governments could also consider a tax when a company uses automated instead of human labour, the report said. It also suggests that benefit systems like retirement pensions and healthcare access be built into "portable accounts" that will follow people across jobs, industries and entrepreneurial ventures. This is not the first time that OpenAI or the CEOs of other AI companies have offered suggestions on how to mitigate changes coming to the job market from AI growth. Tech leaders, including xAI's Elon Musk and OpenAI's Sam Altman, advocate for a universal basic income as a likely necessity in a world where traditional work is being replaced by AI. 
Others, such as Nvidia's Jensen Huang and Zoom's Eric Yuan, have supported a four- or three-day workweek given the gains from AI productivity. Anthropic CEO Dario Amodei wrote a sweeping essay in January that warned that superintelligent AI, which will be able to outpace humans and will be difficult to control, is a "recipe for existential danger." In that essay, he proposed controlling the export of key technologies, such as the semiconductor chips used to train large language models (LLMs), as one key solution. He also called for transparency laws that compel AI companies to disclose how they guide their models' behaviour.

[13]

OpenAI releases policy document focused on financial impacts and risks of AI - SiliconANGLE

OpenAI Group PBC today released a policy framework with suggestions on how to address the risks posed by artificial intelligence. The 13-page document is titled "Industrial Policy for the Intelligence Age: Ideas to Keep People First." It's at least the second such paper OpenAI has published in the past two years. The document's release follows reports that OpenAI insiders have expressed concerns about Chief Executive Sam Altman's leadership. According to the New Yorker, "several executives connected" to the company have floated the idea of replacing Altman with Fidji Simo. Chief Financial Officer Sarah Friar, meanwhile, is reportedly not convinced about the strength of OpenAI's plan to go public. The company's newly published framework includes more than two dozen policy suggestions. Some call for broad macroeconomic policy changes, while others discuss narrower topics such as power grid components. Mitigating malicious AI output is another major focus. One of OpenAI's fiscal suggestions is that policymakers mitigate the economic impact of AI by "increasing reliance on capital-based revenues - such as higher taxes on capital gains at the top, corporate income, or targeted measures on sustained AI-driven returns." The paper goes on to suggest a tax tied to automation initiatives. Another section of the paper recommends that the government create an AI-focused public wealth fund. The paper also includes several narrower economic policy suggestions that each focus on a specific type of market participant. OpenAI argues that workers should be given a bigger say in how their companies implement AI. Entrepreneurs, meanwhile, would be given access to shared back-office infrastructure and other forms of support if the document were to be implemented. 
OpenAI's paper also covers established companies, which the ChatGPT developer argues should be given "incentives that encourage firms to retain, retrain, and invest in workers, similar to existing R&D-style credits." One of the policy suggestions focuses on companies in the energy sector. OpenAI argues that the U.S. government should more actively support the development of power generation capacity. According to the paper, policymakers could go about the task by providing utilities with financial incentives and easing access to grid components such as advanced conductors. More than a half dozen of the remaining policy suggestions in the paper focus on AI safety. OpenAI says that the U.S. government should develop "coordinated playbooks to contain dangerous AI systems once they have been released into the world." Policymakers, adds the paper, should create a way for companies to report AI safety incidents. "These ideas are ambitious, but intentionally early and exploratory," OpenAI stated in a blog post that accompanied the paper. "We offer them not as a comprehensive or final set of recommendations, but as a starting point for discussion." The company plans to host discussions about its suggestions at a Washington, D.C. hub called the OpenAI Workshop that will open next month. Additionally, OpenAI will issue grants of up to $100,000 to AI policy researchers along with up to $1 million worth of API credits.

[14]

OpenAI on robot taxes, public wealth fund, AI jobs in policy

OpenAI has published a policy blueprint calling for robot taxes, a public wealth fund, and trials of a four-day workweek as part of a broad set of proposals designed to cushion the economic disruption expected from artificial intelligence. The 13-page document, titled "Industrial Policy for the Intelligence Age: Ideas to Keep People First," was released Monday. It frames the proposals as a starting point for public debate rather than a finished prescription, according to Axios, which published an interview with CEO Sam Altman alongside the document's release. Every American would receive an ownership interest in the gains produced by artificial intelligence under one of the document's more sweeping proposals -- a nationally managed public wealth fund that Axios characterized as the blueprint's most far-reaching element. Contributions from AI companies would help capitalize the fund, which is envisioned as holding stakes across both the AI sector and the wider range of industries adopting the technology. Tax policy proposals in the document include charges tied to the use of automated workers and a restructuring of the sources of government revenue -- moving the emphasis away from wages and toward investment returns and corporate profits. Underlying the tax proposals is the concern that widespread automation could erode the employment-based income streams that fund Social Security, Medicaid, and SNAP. Workers would see AI productivity improvements translate into shorter hours rather than higher output under another proposal, which calls for government-backed experiments with 32-hour schedules that maintain current pay levels. OpenAI's chief global affairs officer Chris Lehane told Bloomberg the policy conversation around AI needs to be "as transformative" as the technology itself. 
The document also envisions a data-driven mechanism that would expand government assistance without requiring new legislation each time -- once measurements of AI-related job displacement cross defined limits, programs covering income support, wage insurance, and direct cash payments would activate automatically. As labor market indicators recovered, the expanded benefits would wind down on their own. Rounding out the social proposals, the blueprint argues that access to AI tools should be treated as a basic public entitlement on par with reading ability or electrical service, and that pricing must not put those tools out of reach for hourly workers, community institutions, or economically marginalized groups. Perhaps the document's starkest moment comes when it confronts the possibility of AI systems that spread and operate beyond human control -- machines that, because they can copy themselves and act independently, could not be shut down through conventional means, making pre-arranged government-level response plans essential. Speaking to Axios, Altman framed the pace of superintelligence development as demanding a reimagining of American society's foundational agreements -- a transformation he compared in ambition to the Progressive Era reforms of the early twentieth century and the New Deal responses to the Depression. Of all the risks on the horizon, Altman singled out cyber and biological threats as the dangers that concern him most in the near term. "I think that's totally possible," he said of a significant cyberattack occurring within a year. "I suspect in the next year, we will see significant threats we have to mitigate from cyber." The backdrop to the proposals is a labor market already showing strain. White-collar payrolls have contracted for 29 consecutive months, a stretch economists describe as unprecedented outside a recession, and researchers have documented a decline in demand even for elite business school graduates. 
That research found that AI is reducing demand for white-collar workers, while the technology's positive job-creation effects remain years away. The document offers its own definition of superintelligence -- machines that surpass even the most capable humans at cognitive tasks, including situations in which those humans are working alongside AI tools. Bloomberg reported that ChatGPT's global weekly user base has grown to around 900 million people.

[15]

Sam Altman says AI superintelligence is so big that we need a 'New Deal' -- critics say OpenAI's policy ideas are a cover for 'regulatory nihilism' | Fortune

OpenAI says the world needs to rethink everything from the tax system to the length of the work day in order to prepare for the wrenching changes of superintelligence technology -- the point at which AI systems are capable of outperforming the smartest humans. On Monday, in a 13-page paper titled Industrial Policy for the Intelligence Age, OpenAI said it wanted to "kick start" the conversation with a "slate of people-first policy ideas." How much faith to put in OpenAI's words and motives, however, seems to be one of the key questions among many of the people reading the paper. The paper was released on the same day that the New Yorker published a lengthy investigation, a year and a half in the making, that raised questions about CEO Sam Altman's trustworthiness on various issues, including AI safety. Written by OpenAI's global affairs team, the paper outlines many of the expected economic impacts of superintelligence and floats various approaches for addressing them. "We offer them not as a comprehensive or final set of recommendations, but as a starting point for discussion that we invite others to build on, refine, challenge, or choose among through the democratic process," said the introductory blog post. The self-described "slate of ideas" in the document -- spanning everything from public wealth funds to shorter workweeks -- may not do much to reassure a public increasingly nervous and disenchanted with the pace and consequences of AI-driven change. And OpenAI, of course, is one of the least neutral parties in this ongoing discussion, which is the core tension of the document, said Lucia Velasco, a senior economist and AI policy leader at the DC-based Inter-American Development Bank and former head of AI Policy at the United Nations office for digital and emerging technologies. 
"OpenAI is the most interested party in how this conversation turns out, and the proposals it advances shape an environment in which OpenAI operates with significant freedom under constraints it has largely helped define," she said, adding that that wasn't a reason to dismiss the document, but "it is a reason to ensure that the conversation it is trying to start does not end with the same company that started it." Still, she emphasized that OpenAI is correct in saying that governments are behind in advancing policy solutions. "Most are still treating AI as a technology problem when it's actually a structural economic shift that needs proper industrial policy," she said. "That's a useful contribution, and the document deserves to be taken seriously as an agenda-setting exercise, even if it's a starting point." Soribel Feliz, an independent AI policy advisor who previously served as a senior AI and tech policy advisor in the U.S. Senate, agreed that OpenAI deserves credit for "putting this on paper." The acknowledgment that both U.S. institutions and safety nets are falling behind AI development and deployment is correct, she said, "and the conversation needs to happen at this level at this moment." However, she emphasized that most of what is being proposed is not new: "Some of these pillars ('share prosperity broadly, mitigate risks, democratize access') have been the framework for every major AI governance conversation since ChatGPT came out in November 2022." "I worked in the U.S. Senate in 2023/2024 and we had nine AI Policy Fora sessions where all of this was said. I have it in my handwritten notes! All of this was already said, all of it," she wrote to Fortune in a direct message. "The language around public-private partnerships, AI literacy and worker voice reads like it came out of a UNESCO or OECD AI policy framework report. The ideas are not wrong. The problem is the gap between naming the solutions and building real mechanisms to achieve them." 
Clearly, the paper's target audience is not OpenAI's hundreds of millions of weekly ChatGPT users. Instead, it is the Beltway policymakers who have been pushing for AI regulation (or kicking the can down the road) in various forms ever since ChatGPT was released in November 2022. In that sense, some said it represents an improvement over earlier efforts. "I found this document to genuinely be a real improvement from previous documents that were even more floaty and high level," said Nathan Calvin, vice president of state affairs and general counsel of Encode AI. "I think some of the concrete suggestions around things like auditing or incident reporting and government restrictions on certain uses of AI are good ideas." But he also pointed to lobbying efforts led by OpenAI leaders with the Leading the Future PAC, which lobbies for AI-industry-friendly policies. Global affairs head Chris Lehane is considered the PAC's architect, while president Greg Brockman has been the biggest donor. "I hope this document signals a move toward more constructive engagement, instead of attacking politicians pushing the very policies OpenAI is now endorsing," said Calvin, pointing specifically to Leading the Future's lobbying against New York congressional candidate Alex Bores, author and primary sponsor of the RAISE Act, the New York AI safety and transparency law recently signed by Governor Kathy Hochul. Calvin has also accused OpenAI of using intimidation tactics to undermine California's SB 53, the California Transparency in Frontier Artificial Intelligence Act, while it was still being debated. He also alleged that OpenAI used its ongoing legal battle with Elon Musk as a pretext to target and intimidate critics, including Encode, which the company implied was secretly funded by Musk. 
Still, while OpenAI CEO Sam Altman compared Monday's slate of policy ideas to the 'New Deal' in an interview with Axios, some say it reads less like FDR-era legislation and more like a Silicon Valley thought experiment that won't magically turn into action. For example, Anton Leicht, a visiting scholar with the Carnegie Endowment's technology & international affairs team, wrote on X that in reality, the ideas are fundamental societal changes and heavy political lifts. "They're not just going to emerge as an organic alternative," he wrote. "On that read, this is comms work to provide cover for regulatory nihilism." A better version of this, he said, would be to redirect the AI industry's political funding and lobbying skills to make progress on this kind of policy agenda. However, he said that the "vague nature and timing" of the document "doesn't make me too optimistic."

[16]

OpenAI's Altman releases blueprint for taxing, regulating artificial intelligence

OpenAI published a policy blueprint for artificial intelligence on Monday, recommending a revised social contract to prepare for the technology's likely impact on the economy, the workforce and human well-being more broadly. The 13-page document suggests superintelligence, an AI that will surpass the smartest humans, is on the horizon, and argues the governing of AI must "keep people first" once this transition unfolds. From a public wealth fund to four-day work weeks, OpenAI's sweeping recommendations appear to be an attempt to get ahead of the growing fears around AI. Recent polling suggests a growing number of voters are concerned about how the technology could prompt job losses, raise electricity bills and change military operations. OpenAI and its CEO and co-founder Sam Altman compared today's circumstances to the transition to the Industrial Age and the Progressive Era and New Deal that followed. "In normal times, the case for letting markets work on their own is strong. Historically, competition, entrepreneurship, and open economic participation have lifted living standards and expanded opportunity," the document stated, adding, "But industrial policy can play an important role when market forces alone aren't sufficient -- when new technologies create opportunities and risks that existing institutions aren't equipped to manage." The recommendations include the creation of a public wealth fund to give every citizen a "stake in AI-driven economic growth" regardless of their current investments in financial markets. The company also called for taxes "related to automated labor," given AI could reduce the tax base funding programs like SNAP, Social Security and Medicaid. Altman also suggested new ways for public input to make sure the technology isn't just developed with the perspectives of "engineers or executives behind closed doors." 
AI leaders have emphasized the technology's ability to increase efficiency, and Altman recommended employers and unions use these "efficiency dividends" to press for four-day workweeks with no loss in pay. The company also suggested expanded job opportunities in human-centered sectors like childcare, healthcare and community services, where AI may support workers rather than eliminate their jobs. "As AI reshapes the labor market, these sectors can absorb transitioning workers if supported with investments in training, wages and job quality," the blueprint stated, suggesting governments build training pipelines and incentivize employers to raise pay in these fields. Other recommendations included guardrails on how the government can use AI, the development of playbooks to "contain dangerous AI systems" and the expansion of AI to detect cyber and biological risks.

[17]

OpenAI Wants Superintelligence to Reduce Working Hours, Boost Benefits

OpenAI said firms with AI gains should increase retirement contributions.

On Monday, OpenAI shared a report on how government policies around the world should be shaped to avoid concentrating power and benefits in the hands of a select few. The San Francisco-based artificial intelligence (AI) firm's report focused on three broad areas: creating an open economy, sharing the prosperity generated by the technology, and building a resilient society to mitigate the risks of AI. The company also suggested policy changes to improve workers' benefits and reform taxation.

OpenAI's Age of Superintelligence Focuses on Workers' Benefits

In the report, titled "Industrial Policy for the Intelligence Age: Ideas to Keep People First," the AI giant said that as the age of superintelligence approaches, the world should focus on sharing its prosperity broadly, mitigating risks, and democratising access and agency. "OpenAI is offering these ideas to help start a broader conversation about the kinds of policies and institutions needed to navigate the transition[..]as a starting point for discussion that we invite others to build on, refine, challenge, or choose among through the democratic process. The transition to superintelligence is[..]already underway, and the choices we make in the near term will shape how its benefits and risks are distributed for decades to come," the report said. Alongside calling for an open economy in which governments work together to ensure that the benefits are not limited to a select few, the suggestions were also pro-worker. The ChatGPT maker suggested giving those affected by the AI transition a voice in how the technology is used in workplaces. The report added that the focus should be on creating better jobs and safer workplaces. OpenAI also discussed converting the efficiency and financial gains from AI into workers' benefits.
Some of the proposals include running a pilot of a 32-hour or four-day workweek with no loss in pay, more paid time off, higher company contributions to retirement and healthcare costs, and "benefit bonuses" tied to measured productivity improvements. The company also suggested subsidising child and eldercare. The report emphasises the need to create safety nets for workers and to help transitioning employees get quick access to unemployment insurance, social security, and healthcare. Additionally, it said governments should create opportunities in areas requiring care and connection, such as childcare, eldercare, education, healthcare, and community services, as pathways for workers displaced by AI. Another key focus area was modernising the tax base. OpenAI argued that AI will expand corporate profits and capital gains while reducing reliance on labour income, so taxation should depend less on labour income and payroll taxes. Instead, it suggested higher taxes on capital gains and corporate income, along with taxes on automated labour. The report also mentions making access to AI a fundamental right, creating public wealth funds that let individuals invest in and profit from AI advancement, building safety systems for emerging risks, and developing ways for people to build trust in AI systems.

[18]

Four-day week, taxes on robots, public wealth fund: OpenAI floats policy ideas for the intelligence age - The Economic Times

OpenAI has proposed a four-day, 32-hour workweek without pay cuts as part of sharing AI-driven gains. It suggests better worker benefits, wider AI access, and possible taxes on automated labour. It also called for stronger oversight as advanced AI systems become more powerful and risks increase.

OpenAI on Monday released a new policy paper on the "intelligence age", with a four-day workweek at the centre of its vision for how society should share the gains from artificial intelligence (AI). In the paper, the company says employers and unions should run time-bound pilots of a 32-hour workweek with no loss in pay, while keeping output and service levels unchanged. They could then consider converting reclaimed hours into a permanent shorter week or bankable paid time off. This is one of the many proposals on "efficiency dividends" in the paper. OpenAI said that AI should do more than lift productivity for companies; it should also translate into better worker benefits, including larger retirement matches, bigger healthcare contributions, and subsidies for child and elder care. The paper frames these ideas as ways to ensure that AI-driven efficiency is shared with employees rather than captured only as profits. The document also argues that AI's economic gains could arrive unevenly and concentrate among a small number of firms unless governments act. It called for a "right to AI," broader access to AI tools, a more durable tax base, and even a Public Wealth Fund so citizens can share in AI-driven growth. It also moots the idea of "taxes related to automated labor". On the safety side, the paper warns that stronger frontier AI systems will require tighter oversight, including auditing, incident reporting and model-containment plans.

[19]

Sam Altman Wants To Talk -- Six Takeaways From His Bold Proposal On AI, Wealth Distribution, Governance - Al

Sam Altman, CEO of OpenAI, released a 13-page document on Monday comparing the shift toward superintelligence to past major technological transitions such as electricity and the combustion engine. It is a comprehensive proposal for how governments should tax, regulate, and redistribute wealth from AI technology. Six major insights from Altman's plan:

1. Shared Benefits: Altman advocates a proactive policy approach similar to the Progressive Era or the New Deal to ensure AI breakthroughs translate into shared opportunities that benefit a broad spectrum of people, not just a few powerful entities. He proposes principles for an AI-centered industrial policy: sharing prosperity broadly, mitigating risk and building governance, and democratizing access and agency.

2. AI-Driven Tax, Wealth Fund: In the paper, Altman also outlines initial policy ideas, such as modernizing the tax system. He said policymakers could raise taxes on capital gains, corporate income, and AI-driven profits, or introduce taxes on automation, while offering wage-linked incentives to help firms retain and retrain workers. These measures aim to fund essential programs and support workforce shifts in an AI-driven economy. He also called for creating a Public Wealth Fund: policymakers and AI companies could collaborate on a fund that invests in AI-driven growth across companies, with returns distributed to citizens so that everyone benefits directly from AI's economic upside.

3. Four-Day Workweek: The paper proposes using AI efficiency gains to boost worker benefits, fund healthcare and retirement, and test shorter workweeks without reducing pay, turning saved hours into permanent time off or a four-day workweek.

4. Policy Pilots and Global AI: He suggests that policy experiments be piloted by non-government groups, with successful approaches reinforced by the state through regulation, procurement, and investment. The paper also emphasizes the need for global cooperation, since the transition to superintelligence is already underway worldwide.

5. Containing Dangerous AI: Societies should create and test plans to contain dangerous AI systems that can't easily be recalled, focusing on limiting their spread, reducing harm, and coordinating responses, similar to strategies used in cybersecurity and public health.

6. Strengthening Safety Nets: Altman urges authorities to ensure that safety nets such as unemployment insurance, SNAP, Social Security, Medicaid, and Medicare work effectively and at scale. He also proposes tracking AI's impact on jobs and wages in real time and automatically expanding temporary support, such as cash assistance, wage insurance, or training, when disruptions exceed set thresholds, then scaling it back as conditions stabilize.

OpenAI presents these ideas as a starting point for a global, inclusive conversation on shaping AI's benefits. Progress will rely on ongoing collaboration, experimentation, and feedback, supported by fellowships, research grants, and discussions at the new OpenAI Workshop.

Disclaimer: This content was partially produced with the help of AI tools and was reviewed and published by Benzinga editors.

[20]

OpenAI Calls for AI Taxes to Protect Safety Nets | PYMNTS.com

OpenAI warned that a chain reaction is already underway. The company released a 13-page policy paper calling on governments to tax automated labor and artificial intelligence (AI)-driven capital returns. Without intervention, OpenAI argued, payroll tax revenue will decline as AI replaces workers, and that erosion puts Social Security, Medicaid and SNAP at risk. Taxes on automation, OpenAI said, can help close that gap without slowing AI adoption. As OpenAI frames it, AI expands corporate profits while shrinking labor income, and programs built on payroll taxes don't adjust automatically. The Hill reported that OpenAI called for taxes "related to automated labor" to stabilize that funding base. OpenAI's paper also proposes higher levies on capital gains and corporate income. It pairs those with wage-linked incentives for firms that retain and retrain workers, modeled on existing R&D credits. However, the ChatGPT maker stopped short of specifying rates or a collection mechanism. The proposals aren't new to the industry. Anthropic's economic policy research, published last October, identified similar instruments. University of Virginia economists Lee Lockwood and Anton Korinek proposed studying taxes on token generation, robots and digital services. They argued that taxing compute and hardware could become the only remaining mechanism to capture windfall gains if labor's role in the economy declines. Anthropic noted that those taxes would directly affect its own revenue. The company has committed $10 million to scale up its Economic Futures research program, which funds empirical work on AI's labor market effects. OpenAI's paper acknowledged that productivity gains could concentrate inside a small number of firms, including OpenAI itself. Workers using AI may see output rise.
Their compensation may not follow. The paper called for a public wealth fund seeded by AI companies and government investment. Returns would go directly to citizens, including those with no exposure to financial markets. It also proposed converting efficiency gains into better retirement contributions and expanded healthcare cost-sharing. It called for time-bound pilots of a 32-hour workweek at no pay cut, with reclaimed hours converting to a permanent shorter week or bankable paid time off. AI systems have already progressed from tasks that take minutes to tasks that take hours, the company said. Systems capable of handling month-long projects are next, it added. Tech Policy Press called the document a "policymercial," a portmanteau of "policy" and "commercial." It noted that several sections read as product pitches rather than policy. The proposal asking policymakers to help workers launch AI-first businesses, for example, named no role for state or federal governments and cited no funding mechanism. The paper also linked subsidized AI access for underserved communities directly to a call for governments to accelerate energy infrastructure expansion and reduce regulation on advanced conductors. OpenAI is among the largest consumers of that infrastructure. TechCrunch reported that the proposal mixes traditionally left-leaning mechanisms like public wealth funds with a fundamentally market-driven economic framework. OpenAI was founded as a nonprofit premised on AI benefiting all of humanity. It became a for-profit company last year, a shift that has led critics to question whether its stated mission is compatible with its fiduciary duty to shareholders. Some analysts told Fortune the document correctly identifies AI as a structural economic shift rather than a technology problem. Carnegie Endowment visiting scholar Anton Leicht described the proposals as fundamental societal changes and heavy political lifts.
Anthropic's research states the stakes plainly: governments need to formulate responses before the scale of displacement is clear, because waiting means the tools won't exist when they're needed. OpenAI is offering research grants of up to $100,000 and up to $1 million in API credits for work that builds on its proposals. It will open a policy workshop in Washington in May.

[21]

OpenAI releases AI industrial policy for superintelligence transition