Princeton creates 3D neural network that computes with living brain cells to tackle AI energy crisis

4 Sources

[1]

A three-dimensional micro-instrumented neural network device - Nature Electronics

Three-dimensional (3D) cultured neural networks that emulate the structures and computational principles of the brain could be of use in the development of brain-inspired computing and artificial intelligence, as well as in the understanding of neural development and disease progression. However, creating such stable device-neural network interfaces remains challenging, limiting the potential of such 3D neural networks. Here we report a 3D micro-instrumented neural network device in which a 3D flexible electronic sensor and stimulator array is integrated with a 3D cultured neural network. Our device can be used to record action potentials from multiple planes over a period of 6 months, allowing the quantitative monitoring of the evolving connectivity maps and the pharmacological stimulation responses of the neural networks. This approach also supports chronic electrical stimulation, which we use to train neural networks by tuning the connectivity strengths between neurons, creating a reservoir neural network for biocomputing.

[2]

New 3D device computes using living brain cells -- bioelectronic device uses 3D electronic mesh design paired with living tissue

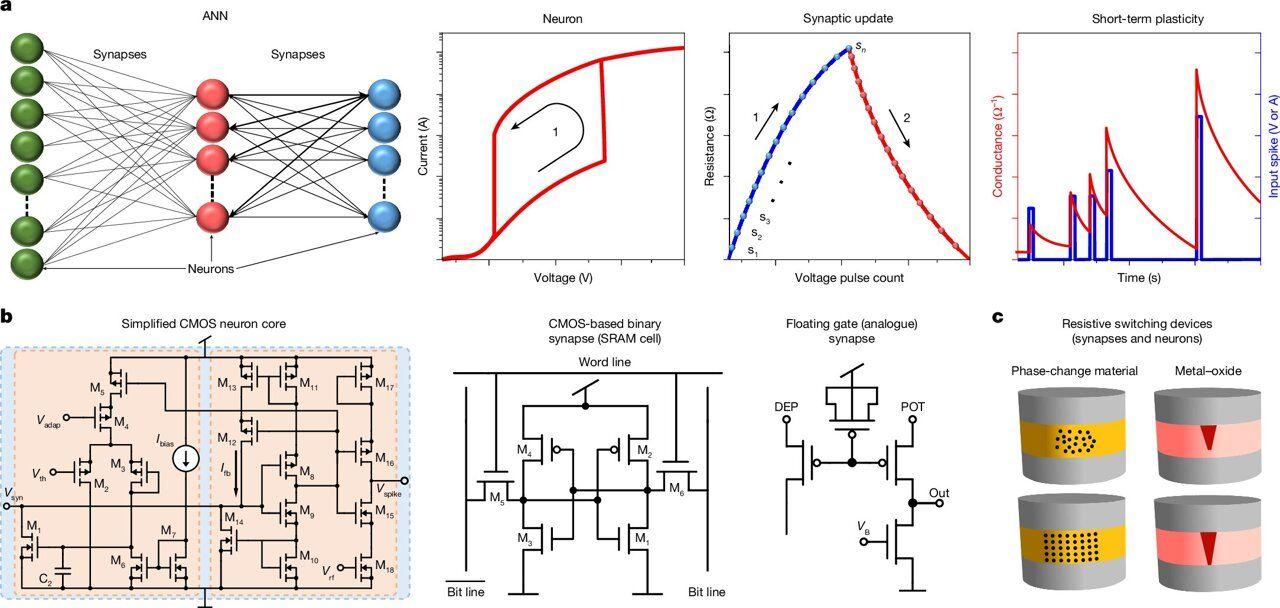

The device may unlock secrets of both neuroscience and the brain's energy efficiency. Researchers at Princeton University have created a three-dimensional neural network device that combines living brain cells with advanced embedded electronics. According to a recent press release, this 3D bioelectronic computer was programmed to differentiate patterns using computational techniques. Basically, we are looking at living brain cells performing computational tasks outside the brain, using embedded electronics. This is not the first time scientists have used brain cells to perform computation. In previous attempts, scientists grew 2D cultures in petri dishes or 3D clusters, probing and monitoring their activity from the outside. The Princeton research took a different approach. To build the device, the team created a 3D mesh of microscopic wires and electrodes supported by a thin layer of epoxy. Using the mesh as a scaffold, they then cultured tens of thousands of neurons into a vast 3D network that can perform computation. According to the researchers, this new approach "enabled them to record and stimulate the neurons' electrical activity at a much finer scale than past approaches". Over the course of six months, they observed how the network developed, tested techniques to strengthen or weaken links between key neurons, and eventually trained an algorithm to recognize patterns of electrical pulses. To test the system, the researchers presented pairs of distinct spatial patterns in one experiment and distinct temporal patterns in another, and the system successfully differentiated the patterns in both cases. The team aims to progressively scale the device to perform increasingly complex tasks. According to the paper's first author, Kumar Mritunjay, a postdoctoral researcher in electrical and computer engineering, the technology could "not only help uncover the computing secrets of the brain but can also assist in understanding and possibly treating neurological diseases."
The original aim of the research was to investigate fundamental problems in neuroscience by studying the activity of living brain cells. That aim remains. However, the researchers realized that the device could also help address one of AI's key bottlenecks: power consumption. "The real bottleneck for AI in the near future is energy," said Tian-Ming Fu, assistant professor of Electrical and Computer Engineering and a member of the research team. "Our brain consumes only a tiny fraction -- about one millionth -- of the power consumed by today's AI systems to perform similar tasks." The researchers hope the device may reveal some of the secrets behind this efficiency, making it possible to replicate those discoveries and help solve AI's power consumption problem. The paper was published in the journal Nature Electronics.

[3]

3D Bio-Hybrid Device Merges Neurons and Computing - Neuroscience News

Summary: Researchers have bridged the gap between biology and silicon by creating a 3D programmable device that merges living brain cells with advanced electronics. Unlike previous "brain-on-a-chip" attempts that grew cells on flat surfaces, this device uses a flexible, microscopic metal mesh as a scaffold, allowing tens of thousands of neurons to grow around and through the sensors. The study demonstrates that this "biological neural network" can be trained to recognize complex electrical patterns, offering a high-efficiency alternative to power-hungry AI. Princeton researchers have combined brain cells and advanced electronics into a single 3D device that can be programmed to recognize patterns using computational techniques. Past attempts at using brain cells to do computation have relied on 2D cultures grown in a petri dish or 3D clusters that are probed and monitored from outside. The Princeton device takes a different approach, working from the inside out. Using advanced fabrication techniques, the team created a 3D mesh made of microscopic metal wires and electrodes supported by a thin epoxy coating. Because the coating is so thin, it has just the right amount of flexibility to interface with the soft neurons that grow around it. The team used the mesh as a scaffold to culture tens of thousands of neurons into a vast 3D network that can be used to do computation. The study was published in Nature Electronics on Apr. 23. The researchers said the new integrated approach enabled them to record and stimulate the neurons' electrical activity at a much finer scale than past approaches. They tracked the evolution of the system over a period of more than six months, experimenting with ways to strengthen and weaken connections between key neurons, and ultimately trained an algorithm that could recognize patterns of electrical pulses. In one test, they used pairs of distinct spatial patterns. In another, they used distinct temporal patterns. 
The system correctly distinguished among the patterns in both tests. The researchers said they hope to scale the system to the point where it can do increasingly complex tasks. The work was led jointly by Tian-Ming Fu, assistant professor of Electrical and Computer Engineering and the Omenn-Darling Bioengineering Institute; James Sturm, Stephen R. Forrest Professor of Electrical and Computer Engineering; and Kumar Mritunjay, a postdoctoral researcher in electrical and computer engineering. Although the device was initially developed to study fundamental problems in neuroscience, the team realized it could shed light on a key bottleneck of modern AI technology: energy consumption. "The real bottleneck for AI in the near future is energy," said Fu. "Our brain consumes only a tiny fraction -- about one millionth -- of the power consumed by today's AI systems to perform similar tasks." Mritunjay, the paper's first author, said that systems like this, called 3D biological neural networks, "not only help uncover the computing secrets of the brain but can also assist in understanding and possibly treating neurological diseases."

[4]

Princeton builds bio-hybrid computer using living neurons

A team at Princeton University has developed a 3D device that combines tens of thousands of living neurons with an embedded electronic mesh, constituting a biological neural network capable of recognizing intricate electrical patterns. This study, published in Nature Electronics, introduces a novel bio-hybrid computing approach that could mitigate the escalating energy needs of artificial intelligence. The device employs an "Inside-Out Architecture," setting it apart from earlier brain-on-chip models that depended on flat, two-dimensional cell cultures or externally probed three-dimensional structures. Researchers created a three-dimensional mesh with microscopic metal wires and electrodes coated with a flexible epoxy to interface seamlessly with soft biological tissue. Neurons were cultivated on this scaffold, forming a dense and functional network over time. This integrated design enables researchers to stimulate and record neural activity more precisely than previous methodologies. Over six months, the team experimented with modifying neuronal connections and successfully trained an algorithm to differentiate between spatial and temporal electrical patterns. The project was led by Tian-Ming Fu, assistant professor of electrical and computer engineering, along with James Sturm, the Stephen R. Forrest Professor of Electrical and Computer Engineering, and postdoctoral researcher Kumar Mritunjay. The research initially focused on fundamental neuroscience but revealed significant implications for AI hardware, particularly concerning energy consumption. "The real bottleneck for AI in the near future is energy," Fu stated. "Our brain consumes only a tiny fraction -- about one millionth -- of the power consumed by today's AI systems to perform similar tasks." This device is part of a growing trend that seeks to blur the boundaries between biological and electronic systems. 
Recent demonstrations by Northwestern University researchers showcased printed artificial neurons triggering responses in living mouse brain cells, while the Princeton device advances this concept by embedding electronics within the living network itself, offering higher integration and enhanced control capabilities. Mritunjay commented that these systems "not only help uncover the computing secrets of the brain but can also assist in understanding and possibly treating neurological diseases." The research team plans to scale the platform for more complex computational tasks, with anticipated long-term applications in neuromorphic chip design, drug testing, and brain-machine interfaces.


Princeton University researchers have developed a bioelectronic device that merges tens of thousands of living brain cells with a 3D electronic mesh. Monitored over six months, the device was trained to differentiate spatial and temporal electrical patterns, demonstrating biocomputing capabilities that the team hopes could inform more energy-efficient AI and advance neuroscience research. The work is motivated in part by the brain's efficiency: according to the researchers, the brain uses about one millionth of the power that today's AI systems need for similar tasks.

Princeton's Bioelectronic Device Merges Living Neurons With Electronics

Researchers at Princeton University have developed a 3D neural network that combines living brain cells with advanced electronics to perform computational tasks, marking a significant step toward brain-inspired computing. Published in Nature Electronics, the bioelectronic device integrates tens of thousands of neurons with a microscopic electronic mesh, creating a biological neural network capable of recognizing complex electrical patterns [1][2]. The work was led jointly by Tian-Ming Fu, assistant professor of Electrical and Computer Engineering, James Sturm, and postdoctoral researcher Kumar Mritunjay; the team spent more than six months monitoring neural activity and training the system to differentiate patterns [3].

Source: Tom's Hardware

What sets this 3D bio-hybrid device apart is its "Inside-Out Architecture." Unlike previous brain-on-chip attempts, which relied on flat, two-dimensional cell cultures in petri dishes or on three-dimensional clusters probed from outside, the Princeton device works from the inside out [4]. Using advanced fabrication techniques, the team created a three-dimensional electronic mesh of microscopic metal wires and electrodes supported by a thin epoxy coating. Because the coating is so thin, the scaffold has just the right amount of flexibility to interface with the soft neurons that grow around and through the sensors [2].

Monitoring Neural Activity and Training Neural Networks Over Six Months

The integrated design lets researchers record action potentials from multiple planes and stimulate the neurons' electrical activity at a much finer scale than previous approaches. Over more than six months, the team tracked the evolution of the system, experimenting with techniques to strengthen and weaken connections between key neurons [1]. This chronic electrical stimulation allowed them to train the networks by tuning the connection strengths between neurons, ultimately creating a reservoir neural network for biocomputing [3].
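To make the "reservoir neural network" idea more concrete, here is a minimal software analogy, not the Princeton team's method. In classical reservoir computing, a recurrent network with fixed weights transforms input sequences into rich internal states, and only a simple linear readout is trained. In this sketch, a random network of tanh units stands in for the living neurons, and the readout learns to tell apart two hypothetical pulse sequences that use the same two channels in opposite temporal order. All sizes, pulse timings, the noise level, the nearest-centroid readout, and every function name here are illustrative assumptions. The sketch also does not model what the paper actually describes: tuning the connections of living neurons through chronic electrical stimulation.

```python
import math
import random

# Hypothetical, illustrative sizes; none of these values come from the paper.
N_UNITS = 50    # reservoir size (software stand-in for the cultured neurons)
N_INPUTS = 2    # two stimulation channels
T_STEPS = 10    # time steps per input sequence

# Two hypothetical temporal patterns: same channels, opposite firing order.
PATTERNS = {"A": (0, 1), "B": (1, 0)}


def make_reservoir(seed=0, radius=0.9):
    """Random recurrent and input weights that stay fixed (never trained)."""
    rng = random.Random(seed)
    # Circular-law scaling: i.i.d. uniform[-1, 1] entries have variance 1/3,
    # so this gives a spectral radius of roughly `radius`.
    scale = radius / math.sqrt(N_UNITS / 3.0)
    w = [[rng.uniform(-1, 1) * scale for _ in range(N_UNITS)] for _ in range(N_UNITS)]
    w_in = [[rng.uniform(-1, 1) for _ in range(N_INPUTS)] for _ in range(N_UNITS)]
    return w, w_in


def run_reservoir(w, w_in, sequence):
    """Drive the reservoir with a sequence of input vectors; return the final state."""
    x = [0.0] * N_UNITS
    for u in sequence:
        x = [
            math.tanh(
                sum(w[i][j] * x[j] for j in range(N_UNITS))
                + sum(w_in[i][k] * u[k] for k in range(N_INPUTS))
            )
            for i in range(N_UNITS)
        ]
    return x


def pulse_pattern(first, second, rng, noise):
    """Pulse channel `first` at t=2 and channel `second` at t=6, plus Gaussian noise."""
    seq = [[0.0] * N_INPUTS for _ in range(T_STEPS)]
    seq[2][first] = 1.0
    seq[6][second] = 1.0
    return [[v + rng.gauss(0.0, noise) for v in step] for step in seq]


def train_readout(states_a, states_b):
    """Linear readout from the class means (nearest-centroid rule); score > 0 means A."""
    mean_a = [sum(col) / len(states_a) for col in zip(*states_a)]
    mean_b = [sum(col) / len(states_b) for col in zip(*states_b)]
    weights = [a - b for a, b in zip(mean_a, mean_b)]
    bias = -0.5 * (sum(a * a for a in mean_a) - sum(b * b for b in mean_b))
    return weights, bias


def classify(readout, state):
    """Apply the linear readout to one reservoir state."""
    weights, bias = readout
    score = sum(wi * xi for wi, xi in zip(weights, state)) + bias
    return "A" if score > 0 else "B"


def demo(seed=1, noise=0.02, n_train=20, n_test=30):
    w, w_in = make_reservoir()
    rng = random.Random(seed)

    def states(label, n):
        first, second = PATTERNS[label]
        return [run_reservoir(w, w_in, pulse_pattern(first, second, rng, noise))
                for _ in range(n)]

    # Only the readout is fit; the reservoir weights never change.
    readout = train_readout(states("A", n_train), states("B", n_train))
    test = [(label, s) for label in "AB" for s in states(label, n_test)]
    accuracy = sum(classify(readout, s) == label for label, s in test) / len(test)
    return w, w_in, readout, accuracy


if __name__ == "__main__":
    *_, acc = demo()
    print(f"held-out accuracy: {acc:.2f}")
```

Running the script prints the held-out accuracy on fresh noisy samples. The design mirrors the appeal of reservoir computing: because the recurrent part is never trained, learning reduces to fitting a cheap linear readout on the reservoir's states.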

Source: Neuroscience News

In testing, researchers presented the system with pairs of distinct spatial patterns in one experiment and distinct temporal patterns in another. The trained algorithm correctly distinguished among the patterns in both tests, demonstrating the device's ability to recognize patterns of electrical pulses [3]. The device also allowed quantitative monitoring of the networks' evolving connectivity maps and of their responses to pharmacological stimulation [1].

Addressing AI Power Consumption Through Biological Computing

Although the device was initially developed to study fundamental problems in neuroscience, the team realized the technology could address one of artificial intelligence's most pressing challenges: energy efficiency. "The real bottleneck for AI in the near future is energy," said Fu. "Our brain consumes only a tiny fraction -- about one millionth -- of the power consumed by today's AI systems to perform similar tasks" [2][4].

This gap between the brain and today's AI is what makes biocomputing interesting as a possible answer to the escalating energy demands of modern AI systems. The researchers hope the device may reveal some of the secrets behind the brain's energy efficiency, making it possible to replicate those discoveries, for example in neuromorphic chip design [2][4].

Future Implications for Neuroscience and Brain-Machine Interfaces

Mritunjay noted that these 3D biological neural networks "not only help uncover the computing secrets of the brain but can also assist in understanding and possibly treating neurological diseases" [2][3]. The research team plans to progressively scale the device to perform increasingly complex tasks, with anticipated long-term applications extending beyond energy-efficient computing to drug testing and brain-machine interfaces [4].

This device is part of a growing trend that seeks to blur the boundaries between biological and electronic systems. The ability to create stable device-neural network interfaces over extended periods opens new possibilities for understanding neural development and disease progression [1]. As the technology matures, watch for how these systems scale to more complex computational tasks and whether their energy-efficiency advantages translate to practical AI applications.

Summarized by Navi
