Scientists reveal which phone swipes tire you out most with new AI simulation tool

2 Sources

[1]

We swipe our phones all day, and scientists just ranked which ones are the most tiring

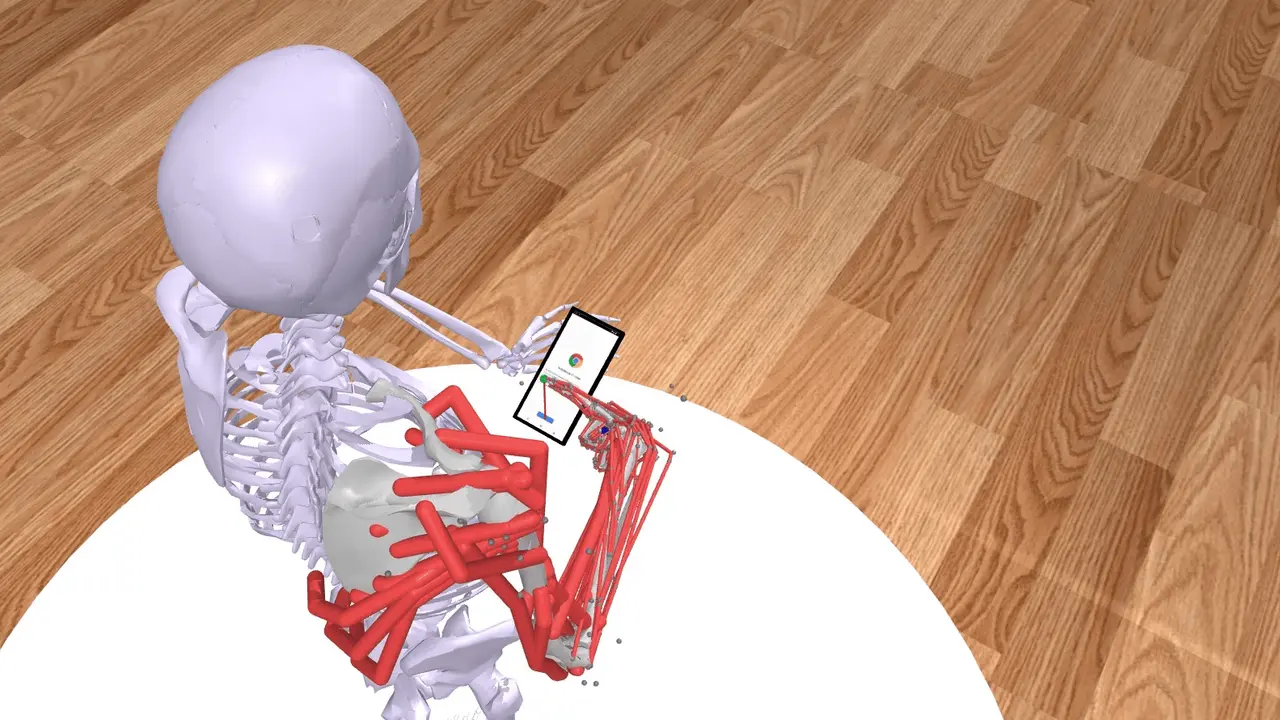

Turns out your thumb has been quietly working overtime this whole time. We all know staring at your phone for hours isn't great for mental health. But what about your fingers? Previously, researchers couldn't measure this. A new AI model, Log2Motion, from Aalto and Leipzig Universities, now changes that.

The model converts smartphone logs into simulated human movement, like a digital skeleton moving its finger across a phone screen, mirroring real users. Through a software emulator, it can even use real apps in real time, mimicking logged interactions to study what's physically happening during each swipe, tap, and scroll.

Is scrolling affecting our health?

Yes, according to researchers, scrolling adversely affects your health. They found that not all gestures are equally easy to perform. Up-down and down-up swipes require more effort than other movements. This is what most of us do in today's short-form content world, so if you needed any more confirmation to stop using apps like Instagram and TikTok, here it is.

Researchers also found that tapping small icons and reaching toward the corners of the screen demand additional physical exertion from your finger. These might sound like minor inconveniences, but multiply that effort across hundreds of interactions a day, and it starts to add up.

Why does this actually matter for you?

Right now, this research benefits designers more than it does regular users. Until Log2Motion came along, smartphone interaction logs only recorded where a finger touched the screen, with no insight into whether that interaction felt comfortable or physically demanding. Designers can now use this simulation early in the development process to build interfaces that are less tiring to use.

The implications for accessibility are also significant. The model can be adapted to simulate how users with tremors, reduced strength, or prosthetics interact with their phones, helping developers build experiences that work better for everyone. The researchers also say the model can be scaled to simulate other common scenarios, such as lying on a couch and scrolling with one hand. That one feels a little too relatable.

Your phone might not be as passive a device as you thought. Every swipe takes something out of you, even if it's just a little.

[2]

Tired of Swiping? Now an AI Simulation Helps Us Understand Why | Newswise

Newswise -- Prolonged scrolling is bad for your well-being, but is it also physically tiring? Until now, we haven't really been able to say. This is why researchers from Aalto and Leipzig Universities created a new AI model that makes it possible to simulate muscle activations and energy use to work out how physically effortful smartphone interactions are for users.

'It's the first time anyone has developed a tool that can help designers and developers quickly assess how physically tiring a real mobile user interface could be,' says Antti Oulasvirta, Professor at Aalto University and ELLIS Institute Finland. 'So far, smartphone logs have only told us where a finger has touched the screen - not whether or not it's felt comfortable.'

To bridge this gap, Oulasvirta and his colleagues at Leipzig University developed Log2Motion, an AI model that translates smartphone logs into simulated human motion. Movement of this musculoskeletal simulation is based on data from previous motion capture studies. In the simulation, a human model consisting of digital bones and muscles moves its index finger to interact with a smartphone laid out on a desk. Through a software emulator, the model can use real mobile apps in real time. It can re-enact logs collected on users to illuminate what happened during interaction. The Log2Motion model then estimates the motion, speed, accuracy and effort of these biomechanical movements.

The model provides entirely new horizons for smartphone use research - as well as design. 'We found that some gestures are harder to perform - in this case, up-down and down-up swipes,' explains Oulasvirta. 'Small icons and locations toward the corners of the display also require additional effort.'

Using such simulation early in the process could help designers create user-friendly interfaces. It can also provide insight into accessibility needs for users with tremors, reduced strength or prosthetics. 'It is possible to scale the Log2Motion model to simulate other scenarios, such as the more classic: laying on the couch, holding the phone in one hand and scrolling with the thumb,' Oulasvirta says.

The researchers hope that human simulations will be adopted to help design interactions that are more ergonomic and pleasant for users. In the future, these simulations could be combined with other AI methods to optimise user interfaces to a user's needs.

The paper, 'Log2Motion: Biomechanical Motion Synthesis from Touch Logs', will be presented on April 17 at CHI 2026, the leading conference on human-computer interaction. It is also available online through arxiv.org.

Researchers from Aalto and Leipzig Universities developed Log2Motion, an AI model that translates smartphone interaction logs into simulated human movement to measure physical fatigue. The tool reveals that up-down and down-up swipes require the most effort, while tapping small icons and reaching toward screen corners also demand additional physical exertion.

Log2Motion Transforms How We Understand Smartphone Interaction

Your thumb has been working overtime, and now scientists can prove it. Researchers from Aalto and Leipzig Universities have developed Log2Motion, an AI simulation that converts smartphone interaction logs into simulated human movement, revealing how much physical effort each swipe, tap, and scroll demands from your fingers [1][2].

Source: Newswise

The model creates a digital skeleton with bones and muscles that moves its index finger across a smartphone screen, mirroring real user interactions. Through a software emulator, it can use real mobile apps in real time, re-enacting touch logs collected from actual users to illuminate what physically happens during each interaction [2]. This marks the first time anyone has developed a tool that helps designers quickly assess how physically tiring a mobile user interface could be.

Some Gestures Demand More Physical Exertion

'We found that some gestures are harder to perform - in this case, up-down and down-up swipes,' explains Antti Oulasvirta, Professor at Aalto University and ELLIS Institute Finland [2]. These vertical swipes require more effort than other movements, which matters in today's short-form content world dominated by apps like Instagram and TikTok.

The research also found that tapping small icons and reaching toward screen corners demand additional effort. While these might seem like minor inconveniences, the energy expenditure adds up across hundreds of daily interactions [1].

Biomechanical Motion Synthesis Enables Less Fatiguing Smartphone Interfaces

Until Log2Motion arrived, smartphone interaction logs only recorded where a finger touched the screen, with no insight into whether that interaction felt comfortable or physically demanding. 'So far, smartphone logs have only told us where a finger has touched the screen - not whether or not it's felt comfortable,' says Oulasvirta [2].

The Log2Motion model estimates motion, speed, accuracy, and human muscle activation of these biomechanical movements based on data from previous motion capture studies. Designers can now use this biomechanical motion synthesis early in the development process to build ergonomic interfaces that reduce physical strain [2].

Accessibility and Future Applications in User Interface Design

The implications for accessibility are substantial. The model can be adapted to simulate how users with tremors, reduced strength, or prosthetics interact with their phones, helping developers build experiences that work better for everyone [1][2].

Researchers say the model can be scaled to simulate other common scenarios, such as lying on a couch and scrolling with one hand. Looking ahead, these simulations could be combined with other AI methods to optimize user interfaces to individual user needs [2]. The research, titled 'Log2Motion: Biomechanical Motion Synthesis from Touch Logs', will be presented at CHI 2026, the leading conference on human-computer interaction.

For users, this research signals a shift in how phones might be designed in the future. Every swipe takes something out of you, even if it's just a little, and designers now have the tools to measure and minimize that cost through less fatiguing smartphone interfaces that prioritize ergonomics alongside aesthetics.

Summarized by Navi
