Claude Code's future in Pro plan uncertain as cheaper and free alternatives emerge using DeepSeek and Ollama

3 Sources

[1]

If Claude Code is going away for Pro users, I can't recommend Claude anymore

For a lot of people, Claude Code is basically what ChatGPT was for everyone wondering what AI was all about (after all, ChatGPT was practically synonymous with the word AI for the longest time). Claude Code is Anthropic's terminal-based coding agent, and though the tool is primarily positioned around developers, the reality is that it has brought an entirely new audience into coding. Unfortunately, it looks like the tool that brought them in might not be included in the plan that got them here for much longer. If that happens, I'm not sure how much longer I can keep recommending Claude to people.

Hold up, what's even happening?
First, some context

Claude's been around since 2023, and for a long time, it was primarily used and talked about by the developer community because of its coding capabilities. It felt like a very niche product, like the one tool your tech friend wouldn't stop talking about but that never quite broke through to everyone else. Until it did. Anthropic kept launching features that naturally pull people in, but that isn't really what finally broke through. Instead, it was OpenAI signing the Pentagon deal (which Anthropic had refused) and the sudden wave of people ditching OpenAI on principle. Claude shot to the top of the App Store and overtook ChatGPT for the first time ever. While that's great for Anthropic, it also means the company has been dealing with a surge in demand its infrastructure clearly wasn't ready for, and it has had to make decisions that have started to chip away at the very thing that made people fall in love with Claude. If you've been using Claude for a while now, you've probably noticed things getting tighter.
For instance, you might've started getting those "you've reached your usage limit" messages way more frequently, even if your workflow hasn't changed at all. The limits are something Anthropic has already acknowledged, but something even more controversial has been going on lately. On April 21, the company quietly updated its pricing page to show Claude Code as no longer included in the Pro plan. There was no announcement, no post on X, no email, no changelog; just an X where a checkmark used to be. In fact, users spotted the change in the wild and posted about it on X and Reddit. Anthropic's Head of Growth then acknowledged it and called it a small A/B test on 2% of new prosumer signups.

Companies run A/B tests all the time; that part isn't new. But why go through the hassle of updating the entire pricing page, the support documentation, and every public-facing reference to Pro if the change was only meant for 2% of new signups? I've never run an A/B test myself, but I know that's not how these tests work: the variant is shown only to the users in the test group, while everyone else keeps seeing the original. After the massive backlash, the company reverted everything. The checkmark returned, the docs were restored, and everything went back to normal. The community certainly didn't let it go, but from Anthropic's end, it was business as usual.

Just six days later, another change appeared in Anthropic's support docs. This time, Pro users were told they'd only be able to use Opus models in Claude Code after enabling and purchasing extra usage, essentially turning what used to be an included feature into a paid add-on. To be fair, Anthropic later clarified that this was an outdated support article that hadn't been properly updated when Opus was added to the Pro plan.
When contacted directly, a representative confirmed it was a documentation issue and said that if anything affecting current subscribers does change, they'd get "plenty of notice." So this could very well just be an honest mistake. But I don't know about you; I'm very clearly seeing a pattern forming. Even when the individual explanations sound reasonable, patterns don't really lie. At what point do we stop giving the benefit of the doubt?

The Pro plan is living on borrowed time
Something's gotta give

Between all of the above and Claude's constant outages, it's no secret that Anthropic is struggling with capacity. Usage is through the roof, infrastructure is strained, workloads are heavier, and newer models burn through tokens faster than ever. That's a real problem, and it's becoming incredibly clear that Anthropic will need to find some way to address it. They've already tightened limits. They've blocked third-party tools from using Pro and Max subscriptions. And now they're floating the idea of removing Claude Code from the Pro plan entirely. I'm not sure how much longer they'll keep pretending the Pro plan can stay the way it is.

But here's the thing: the Pro plan is the entry tier. It's how most people first experience what Claude actually is (and no, the free tier just doesn't count). Since Claude Code is limited to paid tiers, I started with Claude Pro myself. If you're reading this, you probably did too! Of course, this doesn't just apply to Claude. When I realized I needed more than ChatGPT's free tier, I didn't automatically jump to the most powerful plan OpenAI offered. I got the Plus plan. Same with Google Gemini: I began with AI Pro. That's just how people try things. You pay for the lowest tier, see if it lives up to the hype, and upgrade if it's genuinely worth it. Nobody, and I mean nobody, would be willing to drop $100 or $200 a month on a Max plan just to see if a feature is worth it.
In this case, it's a tool whose name suggests it's aimed at developers. Would non-technical people even bother paying that much just to give it a try? I know countless people who picked up Claude Code without ever having written a line of code. They saw a demo, got curious, and the $20 Pro plan made it a no-brainer to try. Take that away, and you're telling those people (arguably Claude's best evangelists) that this isn't for them anymore. In fact, I didn't subscribe to Claude Pro for Claude Code. I tried Claude Code because I was already subscribed to the Pro tier and realized I was likely missing out on something incredible that was already included in my subscription. That's how discovery works!

There are some excellent Claude Code alternatives
A lot of them are free

Occasional complaints aside, I've only ever had good things to say about Claude Code. It's a genuinely excellent tool, and there's a reason Anthropic is struggling to keep up with the load. That said, if the floor for using it jumps to $100 a month, I can't in good conscience tell someone to just get Claude without mentioning what else they could do with that money.

At this point, there are some excellent Claude Code alternatives out there. The most obvious one is OpenAI's Codex, which, ironically, is available on the free tier too! OpenCode is a completely free and open-source Claude Code alternative, and it even lets you bring your own LLM instead of locking you into a single provider's ecosystem.
I understand where Anthropic is coming from

At $20, Claude Code is an easy recommendation. At $100+, it becomes a hard one, and at that price I'd be doing people a disservice if I didn't mention what else is out there. While I can see where Anthropic is coming from, they're about to lose the people who got them to where they are now if they decide to go through with this.

[2]

DeepClaude Lets You Run Claude Code With DeepSeek's Brain for 17x Cheaper - Decrypt

It launched weeks after Anthropic accidentally leaked Claude Code's full source code, fueling a wave of open clones and modifications.

A new open-source tool called DeepClaude lets developers run Claude Code, Anthropic's autonomous coding agent, with DeepSeek's model under the hood. Pushed to GitHub by a developer going by aattaran, the project bills itself as the same Claude Code experience at "17x cheaper." It's a simple bash and PowerShell script: nothing fancy, no fork, no rewrite. The tool can also run Claude Code against other backends such as OpenRouter or Fireworks AI, not just DeepSeek, though DeepSeek is apparently the main draw.

The trick is environment variables. Claude Code reads a handful of them to decide where to send API calls. DeepClaude rewrites those for the duration of a session, points them at a cheaper backend, then restores the originals when you exit. The command line tool itself doesn't change. File reading, editing, bash execution, multi-step tool loops, subagent spawning: all of it still works. Only the model doing the thinking is different.

And the "tool loop" is the whole point of Claude Code. When you ask Claude to fix a bug, it doesn't answer in one shot. It reads files, runs commands, sees the output, decides what to do next, edits something, runs your tests, reads the failures, and tries again. It's a cycle of thinking, acting, observing, and thinking again, repeated dozens of times until the job is done. That cycle is what people mean by "autonomous agent loop": you give one instruction, and the model takes thirty steps. It's also the part that burns through tokens, and through your Anthropic budget. DeepClaude keeps the loop intact and just swaps which model is making the decisions inside it.

The math is straightforward. Anthropic's Max 20x plan, which gives heavy users full Claude Code access, runs $200 a month.
DeepSeek V4 Pro through OpenRouter currently costs $0.435 per million input tokens and $0.87 per million output tokens, a promotional rate that expires May 31, 2026, after which it doubles. Even at full price, it's drastically cheaper than Anthropic's API. By default, DeepClaude routes traffic to DeepSeek V4 Pro, the 1.6 trillion-parameter open-weight behemoth that Hangzhou-based DeepSeek released on April 24. (Parameters, for the uninitiated, largely determine a model's capacity: the more parameters, the broader its knowledge tends to be.) DeepSeek V4 Pro scores 93.5% on LiveCodeBench, a benchmark that runs models on fresh competitive programming problems (meaning DeepSeek solved 93.5% of the tasks), placing it ahead of both Gemini 3.1 Pro and Claude Opus 4.6.

Some things are still a bit rough around the edges. Image input doesn't work, because DeepSeek's Anthropic-compatible endpoint doesn't support vision. Parallel tool calls are disabled. MCP server integrations don't pass through. And on hard reasoning tasks, the project's README itself concedes Claude Opus is still stronger. That said, for everyday coding and creative tasks, it's a powerful and cost-efficient option.

A bit of context for why this exists: on March 31, Anthropic accidentally shipped Claude Code's full source map to npm, exposing 512,000 lines of TypeScript. The leak triggered a wave of clones, Python rewrites, and tools that hook into Claude Code's now well-documented internals. DeepClaude is the natural next step. It doesn't fork the code; instead, it exploits the fact that Claude Code's backend is, by design, swappable. There's also a "remote control" mode that opens a Claude Code session in any browser via a claude.ai/code/session_... URL, with DeepSeek still doing the model work. That mode requires a Claude.ai subscription, since Anthropic's WebSocket bridge is hardcoded. Whether Anthropic will care is another question.
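Mechanically, the environment-variable swap described earlier (override a few variables for one session, point them at a different backend, restore the originals on exit) might look like the Python context manager below. It is a sketch, not DeepClaude's actual bash/PowerShell script. The article doesn't list which variables DeepClaude sets, so ANTHROPIC_MODEL, the backend URL, and the model name are illustrative assumptions; ANTHROPIC_BASE_URL is the variable named in the Ollama walkthrough elsewhere in this roundup.

```python
import os
from contextlib import contextmanager
from typing import Iterator, Mapping


@contextmanager
def swapped_env(overrides: Mapping[str, str]) -> Iterator[None]:
    """Temporarily override environment variables, restoring the originals on exit.

    Sketch of the "rewrite for one session, then restore" idea; the restore runs
    in a finally block, so it happens even if the session raises.
    """
    saved = {name: os.environ.get(name) for name in overrides}
    try:
        os.environ.update(overrides)
        yield
    finally:
        for name, old_value in saved.items():
            if old_value is None:
                os.environ.pop(name, None)  # variable did not exist before: remove it
            else:
                os.environ[name] = old_value  # put the original value back


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    overrides = {
        "ANTHROPIC_BASE_URL": "https://example-backend.invalid",
        "ANTHROPIC_MODEL": "some-cheaper-model",
    }
    with swapped_env(overrides):
        # A real wrapper would launch the CLI here (e.g. via subprocess.run),
        # so the child process inherits the overridden variables.
        print(os.environ["ANTHROPIC_BASE_URL"])
    print(os.environ.get("ANTHROPIC_BASE_URL"))  # back to whatever it was before
```

Variables that did not exist before the session are deleted rather than left behind, so the shell looks the same afterwards as it did before.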
Claude Code is a paid product, but the API endpoints are open by design; the company built them to be Anthropic-compatible for third-party adoption. DeepClaude turns that compatibility outward. The repo currently sits at almost a thousand stars and two forks. DeepSeek's promotional pricing ends May 31.
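To make the pricing comparison concrete, here is a small calculator using the OpenRouter rates quoted above for DeepSeek V4 Pro, at the promotional price and at the doubled post-promotion price. The monthly token counts are hypothetical. A flat $200 subscription and per-token billing can only be compared for a specific workload, and the sketch ignores prompt caching and any provider discounts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Per-million-token prices in US dollars."""

    input_per_million: float
    output_per_million: float

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Dollar cost for a given number of input and output tokens."""
        return (
            input_tokens / 1_000_000 * self.input_per_million
            + output_tokens / 1_000_000 * self.output_per_million
        )


# DeepSeek V4 Pro via OpenRouter, promotional rate quoted in the article (through May 31, 2026).
PROMO = TokenPricing(input_per_million=0.435, output_per_million=0.87)
# The article says the rate doubles once the promotion ends.
FULL = TokenPricing(input_per_million=0.435 * 2, output_per_million=0.87 * 2)
# Anthropic's Max 20x plan is a flat monthly fee, not per-token billing.
MAX_20X_MONTHLY_USD = 200.0

if __name__ == "__main__":
    # Hypothetical monthly workload: agent loops keep re-reading context,
    # so input tokens are assumed to far outnumber output tokens.
    input_tokens, output_tokens = 100_000_000, 10_000_000
    for label, pricing in (("promo", PROMO), ("full", FULL)):
        total = pricing.cost(input_tokens, output_tokens)
        print(f"{label}: ${total:.2f} vs ${MAX_20X_MONTHLY_USD:.2f}")
    # promo: $52.20 vs $200.00
    # full: $104.40 vs $200.00
```

Real usage can be far higher or lower than the assumed workload, so plug in your own token counts before drawing conclusions.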

[3]

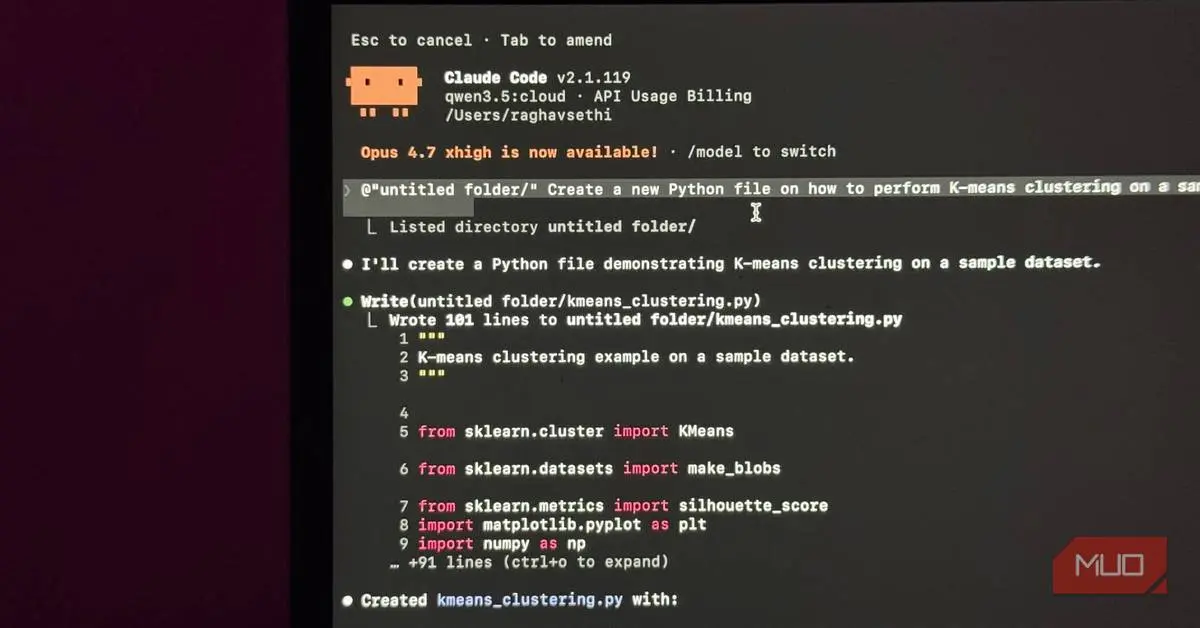

There's a free way to use Claude Code -- and it's surprisingly simple

Raghav Sethi began his tech writing journey in 2022, contributing to his college's open-source community blog. Later that year, he joined MakeUseOf, and since then has written extensively about Apple, Android, and AI. His work ranges from hands-on experiments to opinion pieces that explore the bigger picture behind emerging tech trends. Alongside his work at MUO, you can also find Raghav's articles at XDA Developers, where he mainly focuses on Linux and the world of open-source software. Outside of writing, Raghav enjoys working on coding projects, playing the guitar, and living life on the edge by installing the latest beta software on his daily devices.

Claude Code is a really good tool. If you have been using it, you already know that, and especially when you pair it with something like Obsidian for managing context and notes, it becomes a pretty serious part of any workflow. But I keep seeing the same complaint every time: Claude Code is locked behind Claude's Pro or Max plan, and at $20 a month, that is not exactly pocket change for a subscription you might only dip into occasionally. Still, there is a way to use Claude Code for free!

You are paying for the model, not the harness
LLMs can be expensive

Claude Code is not a standalone AI. It is closer to a very smart intermediary. You give it a task, and it works out which files to read, what changes to make, and what to run, handing all that context off to an actual language model to do the thinking. By default, that model is Claude Sonnet or Opus, depending on what you have configured. The model is what reads your code, understands what you are asking for, and generates the output.
Claude Code is the layer that takes that output and actually does something useful with it: editing files, running terminal commands, and managing context across your project. And here is the thing that changes the whole picture: Claude Code itself costs nothing. You can install it right now for free. What you are actually paying for every time you use it is the API call to the model that powers it. Every prompt you send, every file it reads, every response it generates goes through Anthropic's API, and that is what shows up on your bill or subscription usage. So when people say Claude Code is expensive, they are not really talking about Claude Code. They are talking about the cost of running a frontier model. That is a real concern, but it is also a solvable one, because nothing is forcing you to use a frontier model.

You can use it for free with local models through Ollama
Local LLMs to the rescue

Ollama is a tool that lets you run open-weight AI models locally on your own machine. No paid API, no subscription, no usage bill. You download it, pull a model, and it runs a local server on your machine that applications can talk to exactly the same way they would talk to a remote API. Claude Code has a flag called --model that lets you swap the underlying model. On top of that, you can set an environment variable called ANTHROPIC_BASE_URL to point Claude Code to a different endpoint entirely. Point it at your local Ollama instance instead of Anthropic's servers, and suddenly you have a fully functional agentic coding setup running entirely on your own hardware, completely free. To get started, you'll first need to download a model via Ollama. For example, once you've installed Ollama, you can download the Gemma 4 model by entering this command in your terminal:
ollama pull gemma4

I'll get more into which model you should choose later, but once you've downloaded it, you can integrate it with Claude Code by entering this command:

ollama launch claude

You'll now be prompted to choose a model via Ollama. Just select the one you downloaded earlier, and that's it. Now you're inside Claude Code! The interface is identical. The file editing, the terminal commands, the context management: all of it works exactly as you would expect. Is it as capable as Sonnet? No, but free and pretty good is a completely different value proposition, and for a lot of everyday coding tasks, it gets you further than you might think. But there are some caveats that you should be aware of.

There is a catch, like always
You'll need beefy hardware

Running a large language model locally is probably one of the most demanding things you can ask a consumer machine to do. This is especially true for coding-focused models, which tend to be bigger and more complex than general-purpose ones. So, before you get too excited about the free part, it is worth being honest about what you'll need. If you have a modern Apple Silicon Mac, you are in a good position. The unified memory architecture means your CPU and GPU share the same pool of RAM, which is brilliant for local models. Something like a 32GB M-series Mac will comfortably handle the current best-in-class options. On the GPU side, a card with more VRAM gets you to a similar place.

For models, the two I would point you toward right now are Qwen3.6 and Gemma 4. Qwen3.6 is built specifically for agentic coding, with particular improvements in frontend workflows and repository-level reasoning. It comes in 27B and 35B variants and takes up around 17-24GB depending on which you run. Gemma 4 is Google DeepMind's latest family, with a 26B MoE model that uses only 4B active parameters, and a denser 31B variant aimed at higher-end setups. Both are on Ollama, and both have solid coding benchmarks.
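The MoE detail is easy to misread: "only 4B active parameters" cuts the compute per token, but a local runner typically still has to hold every expert's weights in memory. The back-of-the-envelope sketch below assumes weights dominate memory use, 4-bit quantization, and a hypothetical fixed headroom for the OS, context cache, and runtime. Actual memory use and download sizes run higher, as the 17-24GB figures above suggest, so treat the result as a lower bound.

```python
def weight_memory_gb(total_params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes).

    For a mixture-of-experts model, pass the TOTAL parameter count: all experts
    are loaded, even though only a few are active for any given token.
    """
    return total_params * bits_per_weight / 8 / 1e9


def fits_in_memory(
    total_params: float,
    bits_per_weight: float,
    memory_gb: float,
    headroom_gb: float = 4.0,  # hypothetical allowance for OS, context cache, runtime
) -> bool:
    """Rough check: do the weights plus a fixed headroom fit in the available memory?"""
    return weight_memory_gb(total_params, bits_per_weight) + headroom_gb <= memory_gb


if __name__ == "__main__":
    # Assumes 4-bit quantization throughout.
    print(weight_memory_gb(26e9, 4))  # 26B MoE, all experts resident: 13.0
    print(weight_memory_gb(4e9, 4))  # what "4B active" alone would suggest: 2.0
    print(fits_in_memory(26e9, 4, memory_gb=32))  # True
    print(fits_in_memory(26e9, 4, memory_gb=16))  # False with 4 GB headroom
    print(weight_memory_gb(1.6e12, 4))  # a 1.6T-parameter model: 800.0
```

The last line shows why a 1.6 trillion-parameter model like DeepSeek V4 Pro is something you reach through an API rather than run on a laptop.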
If you are working with 16GB of unified memory, you are not locked out. The smaller Gemma 4 E4B is designed specifically for edge devices and runs on around 5GB in 4-bit mode. On model quality: none of this matches Opus. That is just reality. But in my experience, the gap has gotten surprisingly small for everyday coding tasks.

Try it, then judge it

I currently have a separate machine running purely as an inference server, with Ollama on it handling all the local model work. I just connect to it from my laptop when I am doing dev work. I am still subscribed to Claude, but for quick tasks where I do not want to burn through my tokens on something trivial, it has been a solid fallback. The models are not as good, but if you are on the fence about the Pro subscription, this is worth trying first, and if you are already subscribed, it is worth setting up anyway.
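With Ollama on a separate inference machine, as described above, you can query Ollama's HTTP API to confirm the server is reachable and has the model you pulled. The sketch below uses only the Python standard library. It assumes Ollama's default port (11434) and its /api/tags endpoint, which lists locally available models; the helper names are hypothetical. Ollama listens only on localhost by default, so a remote machine typically needs OLLAMA_HOST set (for example to 0.0.0.0) before other devices can reach it.

```python
import json
import urllib.request
from typing import Any


def model_names(tags_response: dict[str, Any]) -> list[str]:
    """Extract model names (e.g. "gemma4:latest") from an Ollama /api/tags response."""
    return [model["name"] for model in tags_response.get("models", [])]


def has_model(names: list[str], wanted: str) -> bool:
    """True if `wanted` matches a listed model exactly, or ignoring its ':tag' suffix."""
    return any(name == wanted or name.split(":", 1)[0] == wanted for name in names)


def list_models(base_url: str = "http://localhost:11434", timeout: float = 5.0) -> list[str]:
    """Ask an Ollama server (default port 11434) which models it has pulled."""
    url = f"{base_url.rstrip('/')}/api/tags"
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return model_names(json.load(resp))
```

For example, `has_model(list_models("http://<server-address>:11434"), "gemma4")` should return True once `ollama pull gemma4` has run on that machine. The same host and port are what you would point ANTHROPIC_BASE_URL at.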


Anthropic appears to be testing the removal of Claude Code from its $20-per-month Pro plan amid growing capacity issues. Meanwhile, developers have built cheaper and free workarounds: DeepClaude runs the coding agent on DeepSeek's models at a claimed 17x lower cost, and Ollama lets it run against local AI models. Both rely on Claude Code's swappable backend, whose internals became widely documented after an accidental source code leak.

Anthropic Tests Removing Claude Code from Pro Plan

Anthropic's terminal-based coding agent faces an uncertain future after the company quietly tested removing Claude Code from its Pro plan in late April. On April 21, Anthropic updated its pricing page to show Claude Code as no longer included in the $20-per-month Pro plan, putting an X where a checkmark used to be, and changed its support documentation and other public-facing references to match [1]. The company's Head of Growth later called it a small A/B test on 2% of new prosumer signups, though users questioned why such a limited test would require rewriting the entire pricing page [1]. After significant backlash, Anthropic reverted the changes, but the incident drew attention to the capacity problems the company has been facing.
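The objection to the "2% A/B test" explanation rests on how such tests are usually run: a small, fixed slice of users sees the variant while everyone else sees the original page. The sketch below illustrates that kind of deterministic, hash-based bucketing. It assumes a common approach and is not a description of anything Anthropic has disclosed; the experiment name and functions are hypothetical.

```python
import hashlib


def bucket(user_id: str, experiment: str) -> int:
    """Map a user to a stable bucket in [0, 10000) for one experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 10_000


def pricing_page_variant(user_id: str, is_new_signup: bool, percent: float = 2.0) -> str:
    """Return which version of the pricing page this user should see.

    Only new signups are eligible, and only about `percent`% of them land in the
    test group; everyone else keeps seeing the unchanged ("control") page.
    """
    if is_new_signup and bucket(user_id, "hypothetical-pro-plan-test") < percent * 100:
        return "variant"
    return "control"
```

Because assignment is keyed on the user ID, the same person sees the same page on every visit. The other 98% of new signups and all existing users never see the change, which is the contrast the XDA piece draws with a rewritten public pricing page.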


Capacity Issues Drive Pricing Pressure

Claude has experienced surging demand since OpenAI signed a Pentagon deal that Anthropic had refused, prompting many users to switch to Claude on principle. Claude shot to the top of the App Store, overtaking ChatGPT for the first time [1]. However, this growth exposed infrastructure limitations, with users reporting far more frequent "you've reached your usage limit" messages despite unchanged workflows [1]. Just six days after the pricing page incident, a support document suggested Pro users would need to enable and purchase extra usage to access Opus models in Claude Code, turning an included feature into a paid add-on [1]. Anthropic attributed this to outdated documentation, but the XDA author sees a pattern suggesting mounting pressure on the Pro plan's structure.


DeepClaude Offers 17x Cheaper Alternative

A developer going by aattaran has released DeepClaude, an open-source tool that lets users run Claude Code with DeepSeek's model at drastically reduced costs. The project uses simple bash and PowerShell scripts to redirect API calls to cheaper backends such as DeepSeek, OpenRouter, or Fireworks AI without modifying Claude Code itself [2]. DeepSeek V4 Pro through OpenRouter currently costs $0.435 per million input tokens and $0.87 per million output tokens, a promotional rate valid until May 31, 2026, after which it doubles; by comparison, Anthropic's Max 20x plan costs a flat $200 per month [2]. The tool preserves Claude Code's autonomous agent loop, in which the model reads files, executes commands, and iterates through dozens of steps to complete a task [2].
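That loop (read, act, observe, repeat) can be sketched with the model as an ordinary function argument. This is the sense in which the backend is swappable: the loop and tools stay the same while the decision-maker changes. It is a toy illustration, not Claude Code's implementation. Action, run_agent, the fake test and edit tools, and the scripted "model" are all hypothetical stand-ins for real tools and a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    """One decision by the model: which tool to call, with what argument."""

    tool: str  # a tool name, or "finish" to end the loop
    argument: str = ""


# Any function that reads the transcript and picks the next action can act as the
# "model"; swapping this function is the analogue of swapping backends.
Model = Callable[[list[str]], Action]
Tool = Callable[[str], str]


def run_agent(task: str, model: Model, tools: dict[str, Tool], max_steps: int = 30) -> list[str]:
    """Think, act, observe, repeat, until the model finishes or the step budget runs out."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        action = model(transcript)  # think: decide the next step
        if action.tool == "finish":
            transcript.append(f"done: {action.argument}")
            break
        observation = tools[action.tool](action.argument)  # act: run the tool
        transcript.append(f"{action.tool}({action.argument}) -> {observation}")  # observe
    return transcript


def make_toy_tools() -> dict[str, Tool]:
    """Fake 'run tests' and 'edit file' tools sharing a tiny bit of state."""
    state = {"fixed": False}

    def run_tests(_: str) -> str:
        return "passed" if state["fixed"] else "FAILED: 1 test"

    def edit_file(change: str) -> str:
        state["fixed"] = True
        return f"applied {change}"

    return {"run_tests": run_tests, "edit_file": edit_file}


def scripted_model(transcript: list[str]) -> Action:
    """Stand-in for an LLM: a fixed policy, for illustration only."""
    last = transcript[-1]
    if last.startswith("task:") or last.startswith("edit_file"):
        return Action("run_tests")
    if "FAILED" in last:
        return Action("edit_file", "fix off-by-one")
    return Action("finish", "tests pass")


if __name__ == "__main__":
    for line in run_agent("fix the failing test", scripted_model, make_toy_tools()):
        print(line)
```

Replacing `scripted_model` with a function that calls a different LLM leaves `run_agent` untouched, which mirrors how DeepClaude changes the model without changing the agent. The `max_steps` cap keeps a model that never says "finish" from looping forever.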


Source Code Leak Enables Alternative Implementations

The emergence of cost-effective backends followed Anthropic's accidental leak of Claude Code's full source map to npm on March 31, which exposed 512,000 lines of TypeScript [2]. The leak triggered a wave of clones and modifications. DeepClaude doesn't fork the leaked code; it exploits the fact that Claude Code's backend is swappable by design. DeepSeek V4 Pro, the 1.6 trillion-parameter open-weight model the tool uses by default, scores 93.5% on LiveCodeBench, a benchmark of fresh competitive programming problems, placing it ahead of both Gemini 3.1 Pro and Claude Opus 4.6 [2]. The GitHub repository has accumulated almost a thousand stars, suggesting strong developer interest in cheaper alternatives to subscriptions [2].

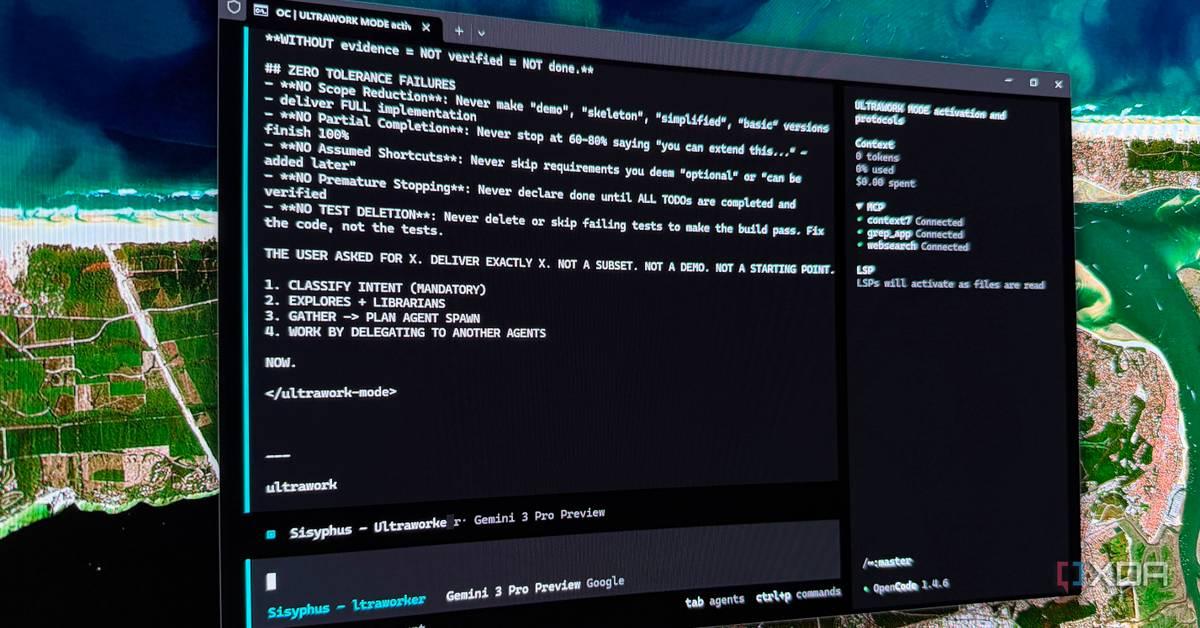

Free Local Alternative Through Ollama

Users can also run Claude Code entirely for free using local AI models through Ollama, which runs open-weight models on your own machine with no paid API calls [3]. Claude Code itself costs nothing to install; what users actually pay for is the API calls to the large language model that does the thinking behind its file edits and terminal commands [3]. By setting the ANTHROPIC_BASE_URL environment variable to point to a local Ollama instance, developers can run the coding agent entirely on local hardware [3]. This approach requires capable hardware, particularly for coding-focused models; modern Apple Silicon Macs, whose unified memory is shared between CPU and GPU, are well suited [3].

What This Means for AI Development

The situation highlights the tension between commercial AI services and open alternatives as capacity issues force pricing decisions. An Anthropic representative has said current subscribers will receive "plenty of notice" before any changes affecting them [1], but the emergence of DeepClaude and Ollama-based setups shows how quickly developers adapt when faced with potential service restrictions. DeepSeek's promotional pricing expires May 31, 2026, after which rates double, which may change long-term cost calculations for those relying on cheaper models [2]. The Pro plan remains under pressure as newer models burn through tokens faster, suggesting Anthropic may need to restructure its pricing tiers to balance infrastructure demands against the expectations of users, including the newcomers Claude Code brought into coding [1].

Summarized by Navi
