Bee-inspired navigation robot uses neural network to find home from 600 meters away

2 Sources

[1]

Bee-inspired navigation robot pinpoints its home using a neural network

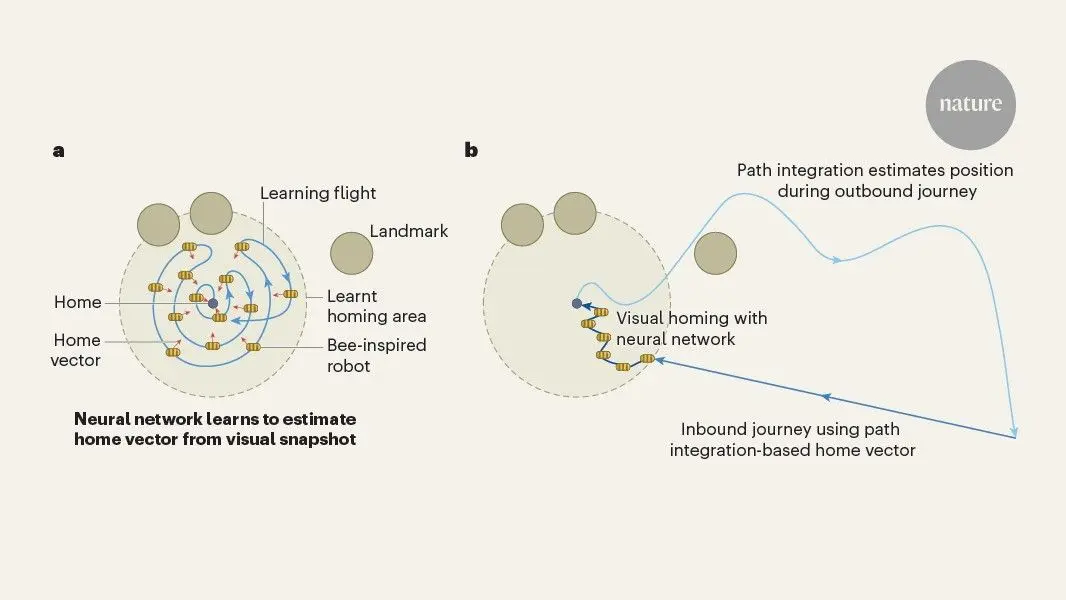

Conventional robots navigate much like humans do, relying on detailed maps of the area they are in to get around. This allows the robot to maintain an accurate estimate of its own location, but comes at a high computational cost. But now, writing in Nature, Ou et al. report a flying robot that uses a simple learning algorithm, inspired by the exploratory flights that honeybees (Apis spp.) take before starting a lengthy journey, to correct navigational errors that accumulate during long flights. The robot can return to within half a metre of its starting position after circuitous flights of up to 600 metres. This lightweight algorithm extends the navigational capabilities of small, low-power drones and helps scientists to understand how insects' brains work.

The study of bee and wasp navigation has a long history. Typically, when leaving their home and entering unexplored surroundings, bees and wasps face their nest and fly in arcs of gradually increasing radius. In initial accounts of these flights, scientists assumed that the animals were learning the locations of prominent landmarks around the nest, perhaps using changes in the relative visual positions of near and far objects to estimate those objects' locations. When the insect returns and sees the same landmarks, it can use its memory of their layout to pinpoint the nest entrance.

Alternatively, researchers have suggested that the insect might store in its memory a set of views (unprocessed, low-resolution, near-360-degree images) that it sees when looking towards the nest. The insect could then compare what it sees on its return path with the views in its memory, using familiarity to guide itself home.

However, this 'view memory' is not the only tool that bees, and some other insects, use to navigate.
They can use various cues, including the position of the Sun, to track the direction and speed of their flight manoeuvres, enabling them to maintain a running estimate of their location relative to their starting point, a process called path integration. This generates a 'home vector' that the insect uses to fly along a straight-line path back home, even if the view is unfamiliar. However, the longer the distance travelled, the more error the home vector accumulates, so path integration alone is not sufficient to pinpoint the location of the nest after extended journeys. It remains an open question how insects use the home vector and view memory together.

Ou and colleagues tested the idea that insects use the home vector and view memory in different ways, depending on how far they are along their journey (Fig. 1). When first departing the nest on a learning flight, the insect links what it sees to the short and accurate home vector to build a 'learnt homing area': a region in which the insect can generate an accurate estimate of the home vector purely on the basis of its current view. This means that, on long flights, the increasingly inaccurate home vector needs to guide the insect back only to somewhere in the safe learnt homing area.

How might an insect link views to a home vector? One possibility is a 'perfect memory' that stores each view and vector pair. Other teams have proposed models based on memory circuits identified in the insect brain. Ou and colleagues used artificial neural networks, which are algorithms that can identify patterns in data and which form the backbone of current artificial-intelligence systems. During training, the algorithm was presented with a view and, on the basis of that view, it estimated the home vector at the corresponding point in space. As well as real views from the learning flight, simulated rotated views were generated and used for training.
The estimate was scored on the basis of how close it was to the 'true' value calculated using path integration. Through a process called self-supervised learning, the algorithm progressively improved its predictions, until it could, when presented with a view inside the learnt homing area that it had not seen before, make a good prediction of the home vector. An advantage of this approach over a perfect memory of vector-view pairs is that the amount of time it takes the system to search through its memory remains constant, rather than increasing with the number of views, and it still performs well despite variations in the view. The authors showed, using simulations, that the neural network could navigate back home even when faced with unfamiliar views at distances of up to 2.5 times the radius of the learnt homing area. These neural networks were also very compact, requiring only a few thousand parameters; by contrast, billions are used in state-of-the-art image-recognition systems.

The effectiveness of this navigation scheme was thoroughly assessed first using realistic simulations, and then with a small flying robot. The robot had an omnidirectional camera, and various sensors that it used to perform path integration. The authors showed that, in indoor arenas, learning a homing area of only 5 × 5 metres around home could allow safe exploration and 100% successful returns across an area of up to 50 × 30 metres.

Testing the robot outdoors revealed some remaining challenges. Lighting variations made it more difficult for the algorithm to learn to associate views with vectors, and a larger, more sophisticated neural network was then needed for good performance. The flying robot was tilted by the wind, changing the camera angle and requiring further views to be learnt, which reduced the success rate.
In a large, empty field, the views did not change enough as the robot flew for the algorithm to consistently associate particular views with unique vectors; however, adding some distinctive objects around the home location fixed this. Somehow, bees seem to have solved these problems.

Clearly, there is more to learn about bees, but does this work advance what scientists already know about them? The possibility that insects might associate views with vectors has often been proposed, but the available evidence provided more support for simple models in which the home-vector and view-memory systems operated more independently. By demonstrating, using real-world tests, the effectiveness of closely integrating these systems, while maintaining a plausible simplicity in the algorithm, Ou and colleagues' work invites reconsideration of these assumptions. Advances in understanding some key neural circuits underlying insect navigation make this particularly intriguing to explore.

The work also provides an interesting counterpoint to prevailing approaches to robot navigation, which typically try to build accurate 3D maps by combining multiple sources of information, including vision, depth-sensing, self-motion and external signals, such as GPS and wireless transmitters. Building such maps is necessary for some robot tasks, but for many use cases, such as monitoring crops or inspecting disaster sites, bee-like capabilities could be sufficient, and the low-power, low-compute solutions they inspire could be highly advantageous.
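
The training scheme described in this article, in which each view is labelled with the home vector that path integration supplies, can be illustrated with a toy example. The sketch below is not the authors' implementation: the landmark layout, the eight-bin panoramic "view", the feature scaling and the linear read-out (standing in for the small neural network) are all assumptions made for illustration, and the rotated-view augmentation is left out.

```python
import math
import random

# Hypothetical landmark positions (metres); home is at the origin.
LANDMARKS = [(6.0, 1.0), (-4.0, 5.0), (-3.0, -6.0), (5.0, -4.0)]
BINS = 8  # azimuth bins in the toy panoramic view


def view_at(x, y):
    """Toy low-resolution panorama: nearer landmarks look brighter."""
    view = []
    for b in range(BINS):
        centre = 2.0 * math.pi * b / BINS
        view.append(sum(
            math.exp(2.0 * (math.cos(math.atan2(ly - y, lx - x) - centre) - 1.0))
            / math.hypot(lx - x, ly - y)
            for lx, ly in LANDMARKS))
    return view


def learning_flight(n, radius, rng):
    """Sample positions near home and label each view with its home vector."""
    data = []
    for _ in range(n):
        r, a = radius * math.sqrt(rng.random()), 2.0 * math.pi * rng.random()
        x, y = r * math.cos(a), r * math.sin(a)
        data.append((view_at(x, y), (-x, -y)))  # label stands in for path integration
    return data


def fit_scaler(data):
    """Per-bin mean and standard deviation of the training views."""
    n = len(data)
    means = [sum(v[j] for v, _ in data) / n for j in range(BINS)]
    stds = [math.sqrt(sum((v[j] - means[j]) ** 2 for v, _ in data) / n)
            for j in range(BINS)]
    return means, stds


def features(view, scaler):
    """Standardised view plus a constant bias input."""
    means, stds = scaler
    return [(v - m) / s for v, m, s in zip(view, means, stds)] + [1.0]


def predict(weights, view, scaler):
    """Linear read-out: one weight row per home-vector component."""
    x = features(view, scaler)
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def mse(weights, data, scaler):
    """Mean squared length of the home-vector error."""
    return sum(sum((p - t) ** 2 for p, t in zip(predict(weights, v, scaler), tgt))
               for v, tgt in data) / len(data)


def train(data, scaler, epochs=300, lr=0.05):
    """Full-batch gradient descent on the squared home-vector error."""
    weights = [[0.0] * (BINS + 1) for _ in range(2)]
    for _ in range(epochs):
        grads = [[0.0] * (BINS + 1) for _ in range(2)]
        for view, target in data:
            x = features(view, scaler)
            for k in range(2):
                err = sum(w * xi for w, xi in zip(weights[k], x)) - target[k]
                for j, xi in enumerate(x):
                    grads[k][j] += 2.0 * err * xi / len(data)
        for k in range(2):
            weights[k] = [w - lr * g for w, g in zip(weights[k], grads[k])]
    return weights


rng = random.Random(0)
train_set = learning_flight(200, 2.5, rng)   # views from the learning flight
held_out = learning_flight(50, 2.5, rng)     # views not seen during training
scaler = fit_scaler(train_set)
untrained = [[0.0] * (BINS + 1) for _ in range(2)]
trained = train(train_set, scaler)
print("training error before:", mse(untrained, train_set, scaler))
print("training error after: ", mse(trained, train_set, scaler))
print("held-out error after: ", mse(trained, held_out, scaler))
```

Once trained, a prediction costs the same small number of multiplications however many views were collected, which is the constant-time property the article contrasts with searching a perfect memory of view-vector pairs.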

[2]

Tiny robot drones learn to navigate the world like honeybees

Insect-size drones are too small to lug around complex navigation systems. To help tiny autonomous fliers find their way home, researchers are taking their cues from honeybees with the Bee-Nav, described today in Nature. A honeybee leaving the hive first takes a short learning flight to memorize nearby landmarks, explains the study's lead author Guido de Croon, an artificial intelligence and robotics researcher at the Delft University of Technology in the Netherlands. As a bee flies away, "it keeps track of the direction and speed of its movement," de Croon says, in a process called path integration. Because path integration is prone to accumulating tiny measurement errors over time, the insect relies on the memorized landmarks to correct its course as it gets back home. De Croon and his colleagues copied this workflow. First, a drone performs a beelike learning flight around its starting point using a minuscule omnidirectional camera to capture the surrounding scenery. Midflight, it trains a tiny onboard neural network to map these images to home vectors, basically invisible arrows pointing back to the launchpad. This establishes a safe zone called the Learned Homing Area. Once trained, the drone can be sent far away and come back using path integration first, backtracking based on measured speed and direction. If the drone winds up anywhere inside its starting safe zone, the visual neural network guides it the rest of the way home.

The Bee-Nav does this using an off-the-shelf Raspberry Pi 4 computer the size of a credit card that runs neural nets with between 3.4 and 42.3 kilobytes of memory -- thousands of times less than conventional mapping setups use.
The team's test bots homed in from a maximum of 600 meters (1,970 feet) away outdoors despite wind gusts and camera-blinding sun glares. "What I find especially exciting is how little computation is needed," says Sarah Bergbreiter, a mechanical engineer at Carnegie Mellon University, who was not involved in the study. "For the small-scale robots that my group and others work on, this is the kind of approach that makes serious outdoor deployments plausible." De Croon's team is still working out a few challenges for the platform, such as navigating between multiple memorized places and dealing with landmark-free starting points. "Platforms running Bee-Nav will also need local obstacle avoidance and planning capability if the environment is cluttered or dynamic," says Sean Humbert, a mechanical engineer at the University of Colorado Boulder, who was not involved in the study. But even now, de Croon says, the Bee-Nav can make autonomous, outdoor drones smaller and more power-efficient. "We could easily put it on a 50-gram, even 30-gram drone," de Croon claims. Scaling autonomous drones further down to the size of actual bees, he notes, would require solving other fundamental problems like miniaturizing batteries. "But we hope when these problems are solved in the long term, we will have the intelligence ready to match that," de Croon says.
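
The two-stage return described above (path integration first, then visual guidance inside the Learned Homing Area) can be sketched in a few lines. This is a simplified illustration rather than Bee-Nav's control code: the function name, the step size, the hard cut-off at the area's edge and the `visual_home_vector` callback, which stands in for the trained network, are all hypothetical.

```python
import math


def two_stage_return(pi_error, lha_radius, visual_home_vector,
                     step=0.5, tolerance=0.5):
    """Return (final_position, reached_home) for a toy two-stage homing run.

    pi_error: (ex, ey) drift accumulated by path integration, in metres.
    lha_radius: radius of the learned homing area, in metres.
    visual_home_vector: hypothetical stand-in for the trained network; maps
        the drone's position (i.e. the view seen there) to a home vector.
    """
    # Stage 1: the drone flies until its drifted home vector reads zero,
    # which leaves it displaced from the true home by the accumulated error.
    x, y = -pi_error[0], -pi_error[1]
    if math.hypot(x, y) > lha_radius:
        return (x, y), False  # ended up outside the learned homing area

    # Stage 2: inside the area, follow the view-based home-vector estimate.
    for _ in range(1000):
        vx, vy = visual_home_vector((x, y))
        dist = math.hypot(vx, vy)
        if dist <= tolerance:
            return (x, y), True
        scale = min(step, dist) / dist
        x, y = x + vx * scale, y + vy * scale
    return (x, y), False


# Usage with an idealised, error-free visual estimate (an assumption).
oracle = lambda p: (-p[0], -p[1])
print(two_stage_return((2.0, -1.0), 2.5, oracle))  # drift small enough to recover
print(two_stage_return((4.0, 0.0), 2.5, oracle))   # drift too large for this area
```

The design point is that path integration only has to be accurate enough to land the drone somewhere inside the safe zone; the final approach no longer depends on the drifting estimate.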

Researchers developed Bee-Nav, a system that lets tiny robot drones navigate like honeybees using lightweight neural networks. It allows drones to return to within half a meter of their starting position after flights of up to 600 meters, using neural networks that occupy just 3.4 to 42.3 kilobytes of memory, thousands of times less than conventional mapping setups use.

Bee-Inspired Navigation Robot Transforms Drone Capabilities

A bee-inspired navigation robot developed by researchers at Delft University of Technology demonstrates how nature's solutions can revolutionize AI-powered robotics. Published in Nature, the study by Ou et al. introduces Bee-Nav, a system that enables robot drones to navigate the world like honeybees using remarkably compact neural network algorithms [1]. The breakthrough addresses a fundamental challenge: conventional robots rely on detailed maps that demand high computational power, limiting the potential for miniaturization in autonomous drones.

How Honeybees Solve Navigation Challenges

The navigation system for insect-sized drones draws directly from honeybee behavior. When leaving their hive, honeybees perform exploratory flights, facing their nest and flying in arcs of gradually increasing radius [1]. Lead author Guido de Croon explains that during these learning flights, bees track the direction and speed of their movements through path integration, while simultaneously memorizing nearby landmarks [2]. This dual approach compensates for navigational errors that accumulate during extended journeys, allowing insect brains to maintain accurate location estimates despite their tiny size.
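
Path integration, and the way its error grows with distance, can be shown with a short simulation. The sketch is illustrative only: the function names and the noise model (independent Gaussian errors on each heading and speed reading) are assumptions, not measurements from the study.

```python
import math
import random


def integrate_home_vector(moves, heading_noise=0.0, speed_noise=0.0, rng=None):
    """Accumulate (heading_rad, speed_m_s, dt_s) readings into a home vector.

    heading_noise is a standard deviation in radians; speed_noise is a
    relative standard deviation. Both noise terms are assumptions.
    """
    if rng is None:
        rng = random.Random(0)
    x = y = 0.0
    for heading, speed, dt in moves:
        h = heading + rng.gauss(0.0, heading_noise)
        s = speed * (1.0 + rng.gauss(0.0, speed_noise))
        x += s * math.cos(h) * dt
        y += s * math.sin(h) * dt
    return (-x, -y)  # points from the estimated position back to the start


def mean_drift(n_steps, trials=50, heading_noise=0.02, speed_noise=0.02):
    """Average home-vector error after flying n_steps metres in a straight line."""
    rng = random.Random(1)
    moves = [(0.0, 1.0, 1.0)] * n_steps  # fly east at 1 m/s in 1 s steps
    total = 0.0
    for _ in range(trials):
        hx, hy = integrate_home_vector(moves, heading_noise, speed_noise, rng)
        total += math.hypot(hx + n_steps, hy)  # true home vector is (-n_steps, 0)
    return total / trials


for metres in (10, 100, 600):
    print(metres, "m flown, mean home-vector error:",
          round(mean_drift(metres), 2), "m")
```

With noise-free readings the home vector is exact; with noisy readings the average error after 600 steps is larger than after 10, which is why a second, view-based cue is needed near home.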

Source: Nature

Neural Network Architecture Mimics Insect Intelligence

The onboard neural network is trained through self-supervised learning, progressively improving its ability to link visual scenes with home vector calculations. During training, the algorithm receives views from a learning flight and estimates the home vector at corresponding points in space. The system also generates simulated rotated views to enhance training data [1]. This creates a "Learned Homing Area", a safe zone where the drone can generate accurate home vector estimates purely from visual input. The neural networks need only a few thousand parameters, whereas state-of-the-art image-recognition systems use billions [1]. They occupy just 3.4 to 42.3 kilobytes of memory, thousands of times less than conventional mapping setups use [2].

Real-World Performance Validates Approach

Tests with a flying robot took the approach beyond simulation. Equipped with an omnidirectional camera and a credit card-sized Raspberry Pi 4 computer, the robot returned to within half a meter of its starting position after circuitous flights of up to 600 meters [1][2]. Outdoors, it homed successfully despite wind gusts and camera-blinding sun glare [2], although lighting variations called for a larger neural network and wind-induced tilting of the robot reduced the success rate [1]. Simulations demonstrated that the neural network could navigate home even when encountering unfamiliar views at distances up to 2.5 times the radius of the learnt homing area [1].

Implications for Miniaturization and Future Deployment

Sarah Bergbreiter, a mechanical engineer at Carnegie Mellon University, notes that the minimal computation required makes serious outdoor deployments plausible for small-scale robots [2]. De Croon claims the Bee-Nav system could easily operate on drones weighing just 50 grams, or even 30 grams, making autonomous drones significantly smaller and more power-efficient [2]. However, Sean Humbert from the University of Colorado Boulder points out that platforms running Bee-Nav will need additional local obstacle avoidance and planning capability in cluttered or dynamic environments [2].

Challenges and Long-Term Vision

The research team continues addressing key limitations, including navigation between multiple memorized locations and operation from landmark-free starting points. Scaling autonomous drones down to actual bee size requires solving fundamental problems like miniaturizing batteries [2]. Yet the lightweight algorithm already extends the navigational capabilities of small, low-power drones while advancing scientific understanding of how insect brains process spatial information [1]. The research demonstrates that bee-inspired navigation can deliver practical solutions for autonomous systems where size, weight, and power consumption constrain conventional approaches.
