Meta signs multi-billion dollar deal with Amazon for millions of AWS Graviton chips for AI

17 Sources

[1]

In another wild turn for AI chips, Meta signs deal for millions of Amazon AI CPUs | TechCrunch

Amazon just scored a major coup with Meta thanks, once again, to Amazon's own homegrown chips. Meta has signed a deal to use millions of AWS Graviton chips to power its growing AI needs, Amazon announced Friday. Note that the AWS Graviton is an ARM-based CPU (a central processing unit, the chip that handles general computing tasks), not a GPU (a graphics processing unit). While GPUs remain the chip of choice for training large models, once those models are trained, AI agents built on top of them are causing a shift in the type of chip that is needed. Agents create compute-intensive workloads like real-time reasoning, writing code, search, and the coordination involved in managing agents through multi-step tasks. AWS's latest version of Graviton was designed specifically to handle AI-related compute needs, the company says. This deal brings more of Meta's cash back to AWS instead of competitors like Google Cloud. Last August, Meta signed a six-year, $10 billion deal with Google Cloud, though Meta had, until then, primarily been an AWS customer that also used Microsoft Azure. We couldn't help but notice that AWS timed the announcement of this deal right as the Google Cloud Next conference wrapped up, like a virtual smirk at its cloud rival. Google, of course, also makes its own custom AI chips and announced new versions of them at the show. True, Amazon makes its own AI accelerator as well: the Trainium, which, despite its name, is used for both training and inference -- the stage that happens after a model is trained, when it's actively processing prompts. But Anthropic had already swooped in with a deal announced earlier this month that commandeered many of those chips for years to come. The Claude maker agreed to spend $100 billion over 10 years to run its workloads on AWS -- with a particular focus on Trainium -- while Amazon agreed to invest another $5 billion (bringing its total to $13 billion of investment) into Anthropic in return. 
Ultimately, the Meta deal is allowing Amazon to showcase a huge AI customer as a proving point for its homegrown CPUs. These are chips that compete with Nvidia's new Vera CPU, which is also ARM-based and designed to handle AI agentic workloads. The difference, of course, is that Nvidia sells its chips and AI systems to enterprises and cloud providers (including AWS). AWS only sells access to its chips through its cloud service. Earlier this month Amazon CEO Andy Jassy took aim at Nvidia and Intel in his annual shareholder letter, saying that enterprises want better price-performance ratios for AI, and that he intends to win deals on that basis. This also means the pressure couldn't be higher on Amazon's internal chip building team to deliver, a team that we visited last month in an exclusive tour of their lab.

[2]

Meta Just Signed a Huge Deal to Use Amazon's Graviton CPU Chips for AI

Despite talk of an impending AI bubble, Amazon is the latest company to benefit from the AI arms race. Meta just inked a deal with Amazon worth billions to deploy the AWS Graviton processors in its 32 data centers over the next three years. While Amazon hasn't disclosed the full value of the deal, we've seen companies spend eye-popping sums to sustain their AI growth. Recently, Meta also signed a six-year, $10 billion deal with Google Cloud, while OpenAI agreed to spend $20 billion with chip startup Cerebras over the next three years to use servers powered by the company's hardware. The Graviton processors support cloud workloads that run on Amazon Elastic Compute Cloud (Amazon EC2), and the company has long said that it offers the best price performance for cloud workloads. What's interesting here is that the AWS Graviton is an ARM-based CPU, rather than a GPU. CPU refers to a computer's Central Processing Unit, the computer's brain, whereas a GPU is its Graphics Processing Unit, commonly used for training AI models. "As we scale the infrastructure behind Meta's AI ambitions, diversifying our compute sources is a strategic imperative," said Santosh Janardhan, Meta's head of infrastructure, in a statement. "AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale." Typically, AI models are trained on GPUs. Once trained, AI agents can use CPUs for more compute-intensive workloads, such as writing code. The Graviton chips are designed to be efficient for AI-agentic tasks. 
According to Amazon, the Graviton 5 chips have 192 cores and a cache that is five times larger than the previous generation, reducing communication delays between cores by 33%. They should also be more energy-efficient, with 25% better performance than previous generations. "This isn't just about chips; it's about giving customers the infrastructure foundation, as well as data and inference services, to build AI that understands, anticipates, and scales efficiently to billions of people worldwide," said Nafea Bshara, AWS vice president, in a statement. Part of the motive behind this may also be that earlier this month, Anthropic signed a deal to spend $100 billion on AWS to run Claude workloads on Amazon's Trainium chips, while Amazon agreed to invest $5 billion back into Anthropic. It's likely that Anthropic has monopolized Amazon's stock of Trainium2 to Trainium4 chips, and the company also has the option to buy future Amazon chips as they become available. In addition to working with Amazon, Meta is developing its own in-house silicon, with work progressing on four iterations of its MTIA chip for AI and an expanded partnership with Broadcom to design and build the chips. Meta has also agreed to spend billions on chips and AI hardware from Nvidia and AMD, as well as another multibillion-dollar deal to use tensor processing units from Alphabet. A Meta representative declined to share specific workloads but said the company will support AI work, including MSL (Meta SuperIntelligence Labs).

[3]

Meta's multi-billion-dollar Graviton deal highlights intensifying CPU shortages in AI infrastructure -- the industry signals a shift to Agentic inference workloads, pushing demand

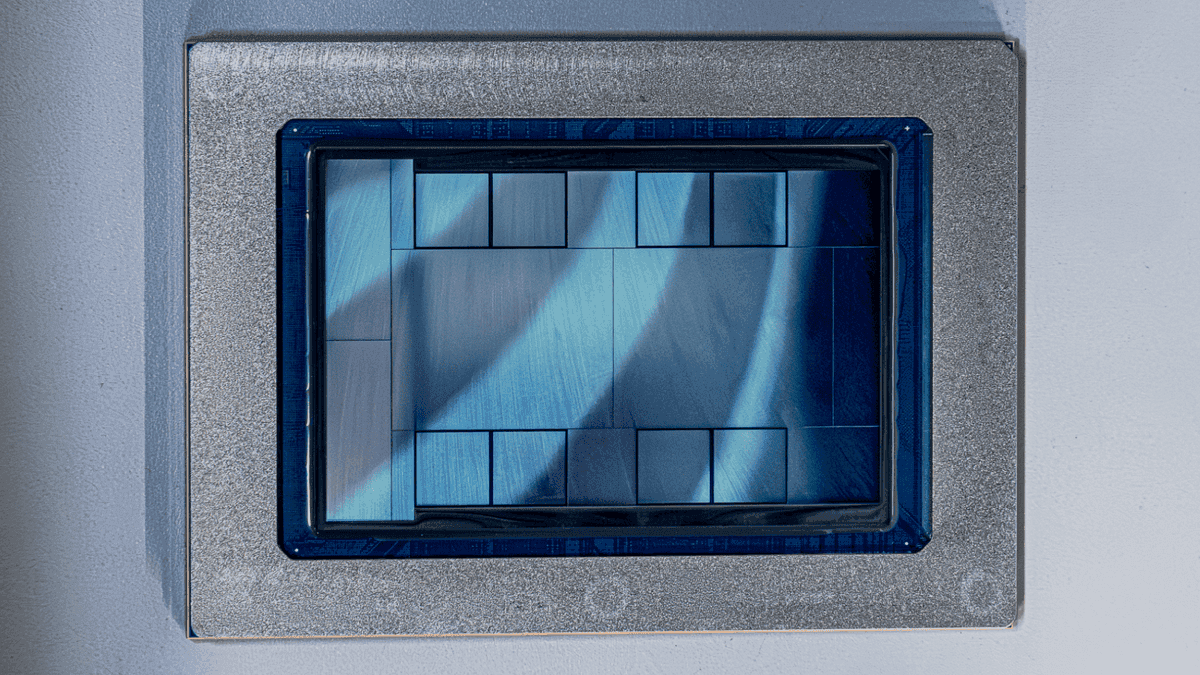

Meta signed a multibillion-dollar, multi-year deal with Amazon Web Services last week to deploy tens of millions of Graviton5 CPU cores across AWS data centers, making Meta one of the five largest Graviton customers worldwide. The deal focuses explicitly on CPU-intensive agentic AI workloads, not GPU training, with Amazon CEO Andy Jassy saying in a post accompanying the announcement that agentic AI is "becoming almost as big a CPU story as a GPU story." Meta already has GPU and accelerator contracts worth hundreds of billions across Nvidia, AMD, Broadcom, Google, CoreWeave, and Nebius, and it went to AWS specifically for general-purpose CPUs. Santosh Janardhan, Meta's head of infrastructure, said in the joint announcement that "diversifying our compute sources is a strategic imperative," and that Graviton allows the company to "run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale." Graviton5, which AWS unveiled at re:Invent in December, packs 192 Arm Neoverse V3 cores on a 3nm process with roughly 180 MB of L3 cache, a fivefold increase over Graviton4. AWS claims a 25% performance lift over its predecessor and 33% lower inter-core latency. AWS vice president Nafea Bshara confirmed that the contract runs for at least three years and that the majority of capacity will be deployed in the U.S.

The CPU-to-GPU ratio

The meteoric rise of agentic AI is driving notable shifts in CPU-to-GPU ratios. While training LLMs relies on large deployments of GPUs, agentic inference is fundamentally different, involving processes like branching control flow, tool invocation, sandbox execution, validation loops, and orchestration across many concurrent sub-agents. All that work falls on CPUs. 
In its recent earnings call, Intel's CFO David Zinsner said that the ratios of CPUs to GPUs in data centers have already moved from 1:8 to 1:4, adding that as workloads continue migrating towards inference and agentic AI, ratios could converge to 1:1 or even tilt further in favor of CPUs. "As you think about the growth rate now going forward, it's [CPU demand] going to become a significant part of the AI [total addressable market]," Zinsner said. Arm has also quantified the rising demand for agentic AI in terms of core counts. At the company's Arm Everywhere event in March, Arm launched its first in-house silicon product, the 136-core AGI CPU, with Meta as lead partner and customer. Arm CEO Rene Haas told the audience that a typical AI data center today requires around 30 million CPU cores per gigawatt of capacity. With agentic workloads, however, that figure rises to roughly 120 million cores per gigawatt, a fourfold increase driven by agents that run continuously, spawn sub-agents, and generate queries at more than 15 times the rate of human chatbot users. Meanwhile, AMD CEO Lisa Su said at the Morgan Stanley TMT Conference in March that "we're seeing a significant CPU demand, frankly, as a result of the inference demand picking up." She added that "the CPU portion of the business has actually far exceeded my expectations in terms of demand."

Supply constraints and rising lead times

The surge in CPU demand is running into a supply chain that planned for a GPU-dominated world, leading to server CPU lead times stretching to roughly six months, up from about two weeks before the agentic demand spike. Intel acknowledged on its Q1 earnings call that unmet Xeon demand "starts with a B," referring to billions of dollars in lost revenue, with CEO Lip-Bu Tan saying that "In recent months, we have seen clear signs that the CPU is reinserting itself as the indispensable foundation of the AI era." 
Revenue would have been higher had Intel been able to produce more chips, the company said: Q1 data center and AI revenue came in at $5.05 billion, up 22% year-over-year. Server CPU prices have climbed 10% to 20% since March, with analysts expecting a further 8% to 10% increase in the second half of the year. Intel raised prices in both February and March, with a third increase reportedly planned for May, bringing the cumulative hike to roughly 30% above 2025 levels. AMD's Lisa Su told the Morgan Stanley audience that AMD's own customers described the demand as something that "was perhaps... under-forecasted," adding: "We are in the process of catching up." The bottleneck extends well beyond CPUs themselves, however, with TrendForce downgrading its full-year server shipment growth forecast from 20% to 13%, per reporting from The Register, because power management ICs and baseboard management controllers needed to assemble complete servers are stretching to 35- to 40-week lead times. Foundries are prioritizing higher-margin AI-specific chips, squeezing capacity for the mature-node components that general-purpose servers require. Samsung's planned closure of its S7 eight-inch wafer fab in Korea will tighten PMIC supply further. Even with all the GPUs and HBM in the world, you can't ship a rack without the host CPUs, PMICs, and BMCs.

Compute diversification

In response to this, Meta is seemingly attempting to spread its CPU procurement across every available source. In addition to the Graviton5 deal, Meta co-developed the Arm AGI CPU announced in March and plans to deploy it alongside its Broadcom-built MTIA inference accelerators, and the company has struck a $100 billion deal with AMD that includes EPYC server CPUs and Instinct GPUs. Nvidia also announced that Meta will deploy standalone Grace CPUs in production, with Vera to follow. 
Intel and Google separately announced a multi-year Xeon collaboration in early April, further demonstrating how x86 supply is being locked up through long-term agreements across the industry. Nvidia's decision to launch its 88-core Vera CPU as a standalone product, separate from its GPU systems, reflects the same dynamic, with Jensen Huang saying at GTC in March that he expects Vera to become a multibillion-dollar business. This, in addition to Arm breaking 35 years of pure IP-licensing precedent to ship finished silicon, and Intel redirecting wafer capacity to Xeon, shows that all the major players are either manufacturing or securing long-term supply of CPUs for agentic workloads. In terms of infrastructure spending, CreditSights projects that the top five hyperscalers will spend roughly $750 billion on capex in 2026, up around 67% year-over-year. Amazon alone has guided to $200 billion, and Meta has set a range of $115 to $135 billion. Most of that is naturally destined for AI, with every gigawatt of agentic capacity requiring four times the CPU cores of traditional AI training clusters. Meta's Graviton deal is a sign, from a company spending more aggressively on AI infrastructure than almost anyone else, that its own supply can't deliver enough general-purpose compute to keep pace.
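
The per-gigawatt core counts and CPU-to-GPU ratios quoted in this article lend themselves to a quick back-of-the-envelope check. The sketch below is illustrative only: the core counts and ratios are the ones attributed to Arm's Rene Haas and Intel's David Zinsner, while the 1,000-GPU cluster and the function names are hypothetical and do not describe any real deployment.

```python
# Cores per gigawatt as quoted from Arm CEO Rene Haas.
CORES_PER_GW_TYPICAL = 30_000_000
CORES_PER_GW_AGENTIC = 120_000_000

# CPU-to-GPU ratios as quoted from Intel CFO David Zinsner, stored as (cpus, gpus).
RATIOS = {"earlier": (1, 8), "current": (1, 4), "possible": (1, 1)}


def agentic_multiplier() -> float:
    """How many times more CPU cores per gigawatt the agentic figure implies."""
    return CORES_PER_GW_AGENTIC / CORES_PER_GW_TYPICAL


def host_cpus(gpus: int, ratio: tuple) -> float:
    """CPUs implied for a given GPU count at a (cpus, gpus) ratio."""
    cpus, per_gpus = ratio
    return gpus * cpus / per_gpus


if __name__ == "__main__":
    print("agentic multiplier:", agentic_multiplier())
    for label, ratio in RATIOS.items():
        # Hypothetical 1,000-GPU cluster, chosen only to make the ratios concrete.
        print(label, host_cpus(1_000, ratio))
```

On these numbers, the same hypothetical 1,000-GPU cluster would go from 125 host CPUs at 1:8 to 250 at 1:4 and 1,000 at 1:1, while the per-gigawatt figures work out to the fourfold increase the article cites.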

[4]

Meta to use millions of AWS Graviton cores

Meta plans to deploy tens of millions of Amazon Web Services' Graviton 5 CPU cores as part of a multi-year collaboration that will make the social network among the largest-ever consumers of the cloud giant's homegrown silicon. This compute will support Meta's agentic AI deployments. While GPUs remain essential for training and running generative AI models, the software frameworks necessary to harness those models still run on CPUs. Amazon's latest Graviton processors feature 192 of Arm's Neoverse V3 cores along with a substantially larger L3 cache and support for memory up to DDR5 8,800 MT/s. That combo delivers a 25 percent performance uplift compared to Graviton 4. In a statement Santosh Janardhan, Meta's head of infrastructure, characterized the collaboration with AWS as an effort to diversify the social networking company's compute fleet. "As we scale the infrastructure behind Meta's AI ambitions, diversifying our compute sources is a strategic imperative. AWS has been a trusted cloud partner for years," he said. Evidence of a diversification strategy is not hard to find, because over the past few months Meta has cozied up to ARM-based CPU designers. In February, the company revealed it was among the first to deploy Nvidia's standalone Grace CPUs at scale. Since then the social networking magnate also announced plans to deploy Nvidia's all-new 88-core Vera CPUs. Then, in March, Arm revealed it worked closely with Meta to design its first branded datacenter silicon - the "AGI CPU" which packs 136 Neoverse V3 cores into a 300 watt part. Arm's new silicon won't make its way into Meta datacenters until later this year. However, the similarities between the AGI CPU and Amazon's Graviton 5 chips mean Meta can probably deploy in AWS for now and then bring those workloads in house again when Arm's silicon is finally ready. 
Adoption of Arm datacenter processors, particularly for AI applications, is expected to drive considerable gains for the British chip designer's market share. Analysts at Counterpoint Research recently predicted that by 2029, Arm-based CPUs will account for 90 percent of the AI ASIC server CPU market. "While x86 architectures currently maintain a significant presence in AI server infrastructure, our generation-by-generation analysis suggests this established stronghold is swiftly transitioning toward proprietary Arm-based designs," Counterpoint analyst David Wu said in a blog post. This shift arguably began with the launch of Nvidia's Grace CPUs in 2023. The Arm-based CPUs have since replaced x86-based parts from Intel and AMD in many of Nvidia's GPU systems. In December, AWS revealed it was swapping out Intel's CPUs in favor of its own in its Trainium 3 AI rack systems, and just this week, Google said it would do the same, replacing the x86 chips found in its TPU clusters with its own Arm-based Axion chips.

[5]

Meta Inks Multibillion-Dollar Deal to Use Amazon Chips for AI

Amazon.com Inc. and Meta Platforms Inc. have struck a multibillion-dollar deal for the social-media giant to rent hundreds of thousands of Amazon's general-purpose chips for its AI efforts. The multiyear deal gives Meta access to the Graviton line of processors, Nafea Bshara, an Amazon vice president and co-founder of the company's Annapurna Labs chips unit, said in an interview. Artificial intelligence models capable of generating text or reasoning are typically built using graphics processing units from Nvidia Corp. But AI developers can use general-purpose central processing units like Graviton for related tasks, including generating the responses to queries after a model is trained, a process known as inference. "The GPUs are useless if you don't have the CPUs next to them," Bshara said. Most CPUs Amazon has deployed in its data centers in recent years have been Graviton processors, an achievement for a company once heavily reliant on Intel Corp. hardware. Amazon Chief Executive Officer Andy Jassy said recently that the company's silicon unit was on pace to generate $20 billion in sales over the course of a year, and that executives were mulling selling the chips -- to date found only in Amazon data centers -- to other companies for use in their server farms. The Meta-Amazon deal announced on Friday is the latest Big Tech tie-up as the industry scrambles to secure sufficient processors to power new and future AI models. OpenAI and Anthropic have said they're increasing their use of Amazon's in-house Trainium chips, AI accelerators the company markets as a cost-effective alternative to Nvidia's GPUs. Meta has taken a broad approach to securing chips for its AI efforts, citing a desire to diversify its partnerships to stay flexible. The company has signed megadeals with chipmakers like Nvidia and Advanced Micro Devices Inc. Meta is also spending aggressively to develop its own silicon to help reduce costs and decrease its dependence on third-party chipmakers. 
The company is currently developing four iterations of its MTIA chip for AI purposes, and recently announced an expanded partnership with Broadcom Inc. for help designing and building those chips. Meta has also agreed to spend billions on chips and other AI hardware from Nvidia and AMD. It recently signed a multibillion-dollar deal to use so-called tensor processing units from Alphabet Inc.'s Google.

[6]

Meta will adopt hundreds of thousands of AWS Graviton chips in latest AI infrastructure grab

Amazon's cloud unit said Friday that Meta has agreed to use Amazon's general-purpose Graviton chips in a deal that will run for at least three years. The arrangement demonstrates Meta's willingness under CEO Mark Zuckerberg to splurge so it can meet high computing demand, alongside technology peers such as Alphabet and Microsoft. In recent weeks Meta has signed deals worth a combined $48 billion with CoreWeave and Nebius, both of which rent out access to Nvidia graphics processing units, or GPUs, that run AI models. Amazon didn't disclose the value of its Meta deal. Meta is counterbalancing infrastructure expansions with head count reductions. On Thursday the company announced plans to lay off around 8,000 employees, or 10% of its workforce. Unlike Nvidia GPUs, Arm-based Graviton processors from top cloud Amazon Web Services can take care of a wide assortment of computing tasks, similar to Intel's or AMD's central processing units, or CPUs. But Graviton can still come in handy for AI workloads, specifically for refinements, or post-training, after models have been trained with large amounts of data using large-scale computing clusters. "Graviton is one of the most used platforms for pre training by a lot of foundation model companies, and Meta is now one the newest one," said Nafea Bshara, an AWS vice president and distinguished engineer. Bshara co-founded chip company Annapurna Labs, which Amazon acquired in 2015. Since then, Amazon has developed special-purpose chips for training and running AI models, among other components. Graviton has become a breakout hit, gaining adoption from Adobe, Apple and Snowflake. Earlier this week, Amazon-backed AI model builder Anthropic announced plans to use Graviton processors as well. AWS says Graviton delivers the best performance for a given price of all computing options available through the EC2 computing service, while using 60% less energy. 
Meta has used Graviton chips on a small scale, and now it will tap hundreds of thousands of the chips, making it one of the top five Graviton customers, Bshara said. The company has rented out Nvidia GPUs from AWS since 2017, he said. On Thursday Intel CEO Lip-Bu Tan told analysts that demand exceeds supply for its Xeon server chips. "For the last few years, the story around high performance computing was almost exclusively about GPU and other accelerators," Tan said. "In recent months, we have seen clear signs that the CPU is reinserting itself as the indispensable foundation of the AI era." But Meta did not choose Graviton because other kinds of CPUs were unavailable, Bshara said. "Expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale," Santosh Janardhan, Meta's head of infrastructure, was quoted as saying in a statement.
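
The sources in this roundup describe the deal's size in two different units: "hundreds of thousands" of chips here, and "tens of millions" of cores elsewhere. A minimal sketch, assuming every chip in the deal is a 192-core Graviton5 (the per-chip core count other sources above cite), shows how the two units convert. The chip and core counts passed in are hypothetical sample points, not disclosed deal figures.

```python
# Assumption: 192 cores per chip, the Graviton5 figure cited by other sources here.
CORES_PER_CHIP = 192


def total_cores(chips: int) -> int:
    """Cores provided by a given number of chips."""
    return chips * CORES_PER_CHIP


def chips_for(cores: int) -> int:
    """Smallest whole number of chips that provides at least `cores` cores."""
    return -(-cores // CORES_PER_CHIP)  # integer ceiling division


if __name__ == "__main__":
    # Hypothetical sample points spanning "hundreds of thousands" of chips.
    for chips in (100_000, 500_000, 900_000):
        print(f"{chips:,} chips -> {total_cores(chips):,} cores")
    # Hypothetical sample points spanning "tens of millions" of cores.
    for cores in (10_000_000, 90_000_000):
        print(f"{cores:,} cores -> {chips_for(cores):,} chips")
```

Under that assumption, 100,000 chips already amount to 19.2 million cores, so "hundreds of thousands of chips" and "tens of millions of cores" can describe the same order of magnitude.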

[7]

Meta signs multibillion-dollar deal for Amazon Graviton5 chips as AI compute demand outstrips $135B capex budget

Summary: Meta signed a multibillion-dollar, multi-year deal to deploy tens of millions of Amazon's Graviton5 ARM CPU cores in AWS data centres for agentic AI workloads. The chips are general-purpose processors, not AI accelerators, handling the CPU-intensive inference and orchestration tasks behind real-time reasoning and multi-step agents. The deal is one piece of a procurement campaign exceeding $200 billion across Nvidia ($50B), AMD ($60B), CoreWeave ($35B), Nebius ($27B), Broadcom (MTIA custom silicon through 2029), and now Amazon, reflecting Meta's conclusion that its AI compute demand exceeds what any single supply chain can deliver. Meta has signed a multibillion-dollar, multi-year deal with Amazon Web Services to deploy tens of millions of Graviton5 processor cores for artificial intelligence workloads, the companies announced on Thursday. The chips are not AI accelerators. They are general-purpose ARM-based CPUs, 192 Neoverse V3 cores per chip, manufactured on a 3-nanometre process, running in AWS data centres across the United States. Meta is not buying them. It is renting the compute capacity. The deal is significant not because of what the chips do, which is handle the CPU-intensive inference and orchestration tasks behind agentic AI, but because of who is selling them. Amazon is a direct competitor to Meta in advertising, in commerce, and increasingly in AI. Meta is paying Amazon billions for infrastructure because the demand for compute to run AI agents has outstripped what any single company can build alone, even one spending $115 billion to $135 billion on capital expenditure this year. The distinction between training and inference has defined the AI chip market since the deep learning boom began. Training, the computationally intensive process of teaching a model, requires GPUs or specialised accelerators. 
Inference, the process of running a trained model to serve users, requires a different mix of compute, and the agentic AI workloads Meta is building require far more CPU capacity than traditional inference. Real-time reasoning, code generation, search, and orchestrating multi-step tasks across multiple models all demand massive general-purpose processing power. Santosh Janardhan, Meta's head of infrastructure, said expanding to Graviton "allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale." Nafea Bshara, AWS vice president and distinguished engineer, said Meta chose Graviton5 "for price performance" despite having "access to so many options from the supply side." The deal starts with tens of millions of Graviton5 cores, with flexibility to expand, and runs for at least three years. The majority of the capacity will be deployed in US data centres. Meta had previously used Graviton on a small scale. This deal transforms that relationship from an experiment into a core infrastructure dependency. The Graviton5, announced earlier this year, delivers a 25% performance uplift over its predecessor with 33% lower inter-core latency despite doubling the core count. It is available through EC2 M9g instances in preview, with C9g and R9g variants coming later in 2026. Meta is effectively becoming one of the largest single customers for Amazon's custom silicon programme, running workloads in a competitor's data centres because the alternative, building equivalent capacity internally, would take longer than the agentic AI roadmap permits. The Graviton deal is one entry in a procurement campaign that has no precedent in the technology industry. In February 2026, Meta committed approximately $50 billion to Nvidia for millions of Blackwell and Rubin GPUs, Grace and Vera CPUs, and Spectrum-X networking equipment. 
In the same month, it signed an approximately $60 billion agreement with AMD for six gigawatts of custom Instinct MI450 GPUs built on the CDNA 5 architecture at 2nm, a deal that includes performance warrants convertible into roughly 10% of AMD's equity. Meta's $35 billion AI cloud commitment with CoreWeave covers dedicated capacity through December 2032, with early deployments of Nvidia's Vera Rubin platform for inference. A $27 billion deal with Nebius adds further AI infrastructure. Meta's extended chip deal with Broadcom through 2029 covers several generations of its custom MTIA processors at 2nm, with over a gigawatt of initial computing capacity. Now comes the multibillion-dollar Graviton contract with Amazon. The total committed spend across these deals exceeds $200 billion, and none of it includes the data centres, power infrastructure, or internal engineering required to absorb the hardware. Meta launched four new MTIA chips in March 2026, the MTIA 300, 400, 450, and 500, all built on RISC-V architecture and manufactured by TSMC in partnership with Broadcom. The company can now release new chip designs every six months or less. The MTIA 400 is the first custom chip Meta describes as having raw performance competitive with leading commercial products. The 450 and 500 target generative AI inference for images and video. Yet even with its own silicon programme accelerating, Meta is simultaneously signing deals with Nvidia, AMD, CoreWeave, Nebius, Broadcom, and Amazon. The implication is that Meta's internal projections for AI compute demand are so large that building everything in-house is not a viable strategy, not because the technology is lacking, but because the timeline is too short. Amazon's custom chip business could be worth $50 billion, according to CEO Andy Jassy's April 2026 shareholder letter, which disclosed that Graviton, Trainium, and Nitro chips collectively generate more than $20 billion in annualised revenue growing at triple-digit rates. 
Jassy hinted that Amazon may begin selling racks of chips to third parties in the future, noting that two large customers asked to buy out all of Amazon's Graviton capacity in 2026 and were refused to protect availability for other customers. The Meta deal keeps the chips within AWS data centres, making it a cloud contract rather than a hardware sale, but the scale of the engagement suggests the boundary between cloud provider and chip supplier is dissolving. Amazon's Annapurna Labs, acquired in 2015, designs all three chip families. Trainium, the AI training and inference accelerator, has attracted Anthropic, which has deployed over a million Trainium2 chips and committed $100 billion in AWS spend over a decade. OpenAI secured two gigawatts of Trainium capacity as part of Amazon's $50 billion investment. Apple is reportedly testing Trainium for AI workloads. Trainium3, generally available in early 2026, is the first 3nm AI chip from AWS and is nearly fully subscribed. Trainium4, roughly 18 months from broad availability, will feature NVLink Fusion interoperability with Nvidia, a concession to the reality that no chip ecosystem can exist in complete isolation from Nvidia's. The Meta deal is for Graviton CPUs, not Trainium accelerators, but it validates Amazon's broader ambition to become a chip company that happens to run a cloud, rather than a cloud company that happens to make chips. Every major hyperscaler now designs its own silicon. Google's TPU programme, now in its eighth generation, projects 4.3 million chip shipments in 2026 scaling to 35 million by 2028. Google's four-partner chip supply chain challenging Nvidia spans Broadcom for training, MediaTek for cost-optimised inference, Marvell for memory processing, and TSMC for fabrication. Microsoft's Maia 200, launched in January 2026, powers OpenAI's GPT-5.2 in production. Meta's MTIA programme is shipping its fourth generation. Amazon's Graviton and Trainium lines are generating $20 billion in revenue. 
The custom ASIC market is growing at 45% in 2026, versus 16% growth in GPU shipments. Nvidia's share of AI accelerator revenue by value has declined from approximately 87% at its peak in 2024 to a projected 75% by the end of this year. The decline is gradual, and Nvidia's absolute revenue continues to grow, but the structural shift is unmistakable: the customers are becoming the competition. Nvidia's strategy to maintain revenue even from custom chip rivals is to make its interconnect fabric indispensable. Its $2 billion investment in Marvell and the NVLink Fusion platform ensure that even custom chips designed for Amazon, Google, Microsoft, and Meta can be integrated into Nvidia's rack-scale infrastructure, generating Nvidia revenue through platform licensing and networking components regardless of whose silicon does the computing. Trainium4's NVLink Fusion compatibility illustrates the point: Amazon is building an alternative to Nvidia's GPUs while simultaneously integrating with Nvidia's interconnects. The AI chip market is not consolidating around a single winner. It is fragmenting into an ecosystem where every participant both competes with and depends on every other participant, and the deals are so large that even direct rivals cannot afford not to trade with each other. Meta's capital expenditure guidance for 2026 is $115 billion to $135 billion, nearly double the $72 billion it spent in 2025, which was itself a record. The company is building Prometheus, a one-gigawatt supercluster in Ohio housing over 1.3 million GPUs, and has announced Hyperion, a facility described as nearly the size of Manhattan. It created Meta Superintelligence Labs to develop frontier models and Meta Compute to manage the data centre buildout, with plans to add tens of gigawatts of capacity this decade. Mark Zuckerberg committed $600 billion to US infrastructure through 2028. These are not projections from an analyst's model. 
They are announced commitments from the company's chief executive, denominated in amounts that would rank among the largest infrastructure programmes in history. The Graviton deal makes sense only in the context of those numbers. Meta is not renting AWS capacity because it lacks the ability to build its own. It is renting AWS capacity because even $135 billion in annual capital expenditure, even its own chip programme, even $50 billion in Nvidia hardware and $60 billion in AMD hardware and $35 billion in CoreWeave cloud and $27 billion in Nebius infrastructure, is not enough. The demand that Meta's AI roadmap generates, for training frontier models, for running inference at the scale of three billion daily active users, for deploying agentic AI across WhatsApp, Instagram, Facebook, and a new generation of products that do not yet exist, exceeds what any single supply chain can deliver. So Meta buys from everyone. It buys from its GPU suppliers. It buys from its cloud competitors. It builds its own chips. It rents from startups. And it signs a multibillion-dollar contract with Amazon for general-purpose CPUs, because the future it has committed to building requires more compute than currently exists on earth, and the only strategy left is to buy it from wherever it can be found.
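
The dollar figures in this article can be tallied directly. The sketch below is a rough check rather than an accounting of the deals: it sums only the four commitments the article gives a number for, and treats the Broadcom and Amazon contracts as undisclosed, as the article does. The variable and function names are illustrative.

```python
# Commitments the article puts a figure on, in billions of US dollars.
NAMED_COMMITMENTS_BN = {
    "Nvidia": 50,
    "AMD": 60,
    "CoreWeave": 35,
    "Nebius": 27,
}

# Capital expenditure figures quoted in the article, in billions of US dollars.
CAPEX_2025_BN = 72
CAPEX_2026_RANGE_BN = (115, 135)


def named_total() -> int:
    """Sum of the commitments with a stated value."""
    return sum(NAMED_COMMITMENTS_BN.values())


def gap_to(threshold_bn: int) -> int:
    """Amount the undisclosed deals would need to cover to reach the threshold."""
    return threshold_bn - named_total()


def capex_growth() -> tuple:
    """2026 guidance as a multiple of 2025 spend, at the low and high ends."""
    low, high = CAPEX_2026_RANGE_BN
    return low / CAPEX_2025_BN, high / CAPEX_2025_BN


if __name__ == "__main__":
    print("named commitments:", named_total())
    print("gap to 200:", gap_to(200))
    print("capex multiples:", capex_growth())
```

The four named commitments sum to $172 billion, so the "exceeds $200 billion" total implies that the Broadcom and Amazon contracts together account for more than $28 billion. The capex guidance works out to roughly 1.6 to 1.9 times the 2025 figure, which matches "nearly double" only at the top of the range.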

[8]

Meta signs multibillion-dollar deal to use Amazon's Graviton chips for agentic AI

Facebook parent Meta signed a deal to use Amazon's Graviton chips for agentic AI, the latest indication of rising demand for the tech giant's growing silicon business. Bloomberg reports that the deal is worth billions of dollars over multiple years. It comes one day after Meta said it would lay off roughly 10% of its workforce, or about 8,000 employees, as companies across the industry cut headcount while pouring billions into AI infrastructure. The deal gives Meta access to tens of millions of Graviton5 processor cores, running in AWS data centers, making Meta one of the largest Graviton customers in the world, the companies said. It builds on Meta's existing use of Amazon Bedrock, the company's platform for AI models. Amazon CEO Andy Jassy said in a LinkedIn post that agentic AI is "becoming almost as big a CPU story as a GPU story." In other words, while graphics processing units (mostly from Nvidia) have dominated the AI hardware conversation, agentic systems need traditional central processing units to handle the reasoning and coordination that happens between steps. Meta has taken a broad approach, signing deals with Nvidia and AMD, recently agreeing to use Google's custom processors, and developing its own in-house silicon with Broadcom. "As we scale the infrastructure behind Meta's AI ambitions, diversifying our compute sources is a strategic imperative," said Santosh Janardhan, head of infrastructure at Meta, in a release. Amazon is establishing itself as a major chipmaker in its own right. CEO Andy Jassy disclosed in his annual shareholder letter that Amazon's custom silicon business is generating more than $20 billion a year in revenue, saying it's "quite possible" Amazon will sell racks of its chips to third parties in the future. That would mean competing more directly with Nvidia. Amazon's roster of chip customers is growing. 
Anthropic committed to running its models on Amazon's Trainium processors as part of a $25 billion expanded partnership announced this week, and OpenAI agreed to use Trainium as part of a $100 billion cloud deal earlier this year.

[9]

Meta picks Amazon's 3nm Graviton chips to power next AI wave

Meta has signed a deal to deploy tens of millions of AWS Graviton processor cores as it expands the computing backbone needed for its next generation of artificial intelligence systems. The agreement deepens Meta's long-running relationship with Amazon Web Services and highlights a growing shift in AI infrastructure. While graphics processors remain central to training large AI models, companies are now seeking more CPU power for inference, real-time reasoning, search, coding tools, and multi-step AI agents.

[10]

Meta inks deal to use more Amazon chips

Why it matters: The move comes as the leading cloud providers, including Amazon, aim to increase adoption of their homegrown chips amid an industrywide shortage of Nvidia graphics chips.

Driving the news: The companies said Amazon will initially provide Meta with tens of millions of Graviton cores, with the potential to expand even further.

* The new pact, which builds on an existing partnership between AWS and Meta, will make Meta one of the largest users of Graviton chips, the companies said.

What they're saying: "The deal reflects a shift in how AI infrastructure gets built," the companies said in a statement.

* "While GPUs remain essential for training large models, the rise of agentic AI is creating massive demand for CPU-intensive workloads -- real-time reasoning, code generation, search, and orchestrating multi-step tasks."

The big picture: Google is also trying to boost adoption of its Tensor chips. On Wednesday the company announced its eighth-generation TPUs, including separate versions for training and inference.

[11]

Meta Agrees to Deploy Millions of Amazon AI Chips in Deal Worth Billions - Decrypt

AWS VP Nafea Bshara said the multi-year deal would be worth billions of dollars. Social media giant Meta signed an agreement with Amazon Web Services to deploy tens of millions of Graviton5 processor cores for its next-generation AI infrastructure, making the company one of AWS's largest Graviton customers globally. The partnership spans three to five years and will be worth billions of dollars, AWS Vice President Nafea Bshara told Reuters. Meta will deploy Amazon's fifth-generation CPUs, which are purpose-built for agentic AI workloads -- applications that can reason, generate code, and orchestrate multi-step tasks independently. Each Graviton5 chip contains 192 cores that can be assigned to different tasks simultaneously, enabling parallel processing for complex AI workflows. "As we scale the infrastructure behind Meta's AI ambitions, diversifying our compute sources is a strategic imperative," said Meta Head of Infrastructure Santosh Janardhan, in a statement. "AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale." The deal underscores how major technology companies are expanding beyond graphics processors that have dominated AI model training. As AI applications mature from research to production, companies increasingly need CPUs optimized for running trained models efficiently -- handling user queries, generating responses, and managing complex reasoning tasks in real time. The deal comes one day after Meta, the parent company behind Facebook and Instagram, confirmed reports of mass layoffs, with 8,000 jobs to be cut and 6,000 open positions to remain unfilled. The shift comes as Meta increasingly positions AI as its north star and attempts to compete with powerful rivals like OpenAI, Anthropic, and Google.

[12]

AWS inks multibillion-dollar AI infrastructure deal with Meta - SiliconANGLE

Amazon Web Services Inc. has inked a multiyear deal to supply Meta Platforms Inc. with cloud infrastructure. Bloomberg reported today that the agreement is worth billions of dollars. The deal centers on AWS' Graviton family of internally-developed central processing units. According to the company, Meta will purchase access to tens of millions of Graviton cores with the option to add more down the line. It will use the chips to power artificial intelligence agents. AWS debuted the newest addition to the Graviton chip line, the Graviton5, last December. It features 192 cores made using a 3-nanometer manufacturing process. The cores implement Arm Holdings plc's ubiquitous instruction set architecture, or ISA. A chip's ISA defines the language in which it expresses computations. The "words" that make up the language are simple computing operations such as arithmetic calculations. Arm's ISA also includes matrix and vector extensions, computations optimized for AI workloads. AWS says that Graviton5 is 25% faster than its previous custom CPU. One of the contributors to the chip's speed is that its L3 cache is 5 times larger. An L3 cache is a memory pool that keeps bits immediately next to a processor's cores. Shortening the distance between two sets of circuits reduces the amount of time it takes data to travel between them, which speeds up processing. CPUs perform a wide range of tasks in AI clusters. They coordinate the graphics cards that perform the bulk of the calculations involved in running a neural network. Additionally, AI agents like those Meta plans to run on Graviton5 can use CPUs to power their tools. Those are the third-party applications an agent uses to automate tasks. Graviton5 is designed to work with a collection of hardware and software modules called the AWS Nitro System. 
It offloads certain infrastructure management tasks from CPUs to specialized accelerators, which leaves more computing capacity for customer applications. Public cloud operators often use a single set of infrastructure assets to power multiple customers' workloads. Those workloads are isolated from one another to minimize cybersecurity risks. According to AWS, Graviton5 uses a module called the Nitro Isolation Engine to verify that different users' workloads are indeed isolated from one another. "AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale," said Meta head of infrastructure Santosh Janardhan.

[13]

Meta and AWS sign agreement to deploy AWS Graviton chips to power Agentic AI

TL;DR: Meta is expanding its Agentic AI capabilities by partnering with Amazon AWS to deploy millions of Graviton5 cores, enhancing compute power with improved latency, bandwidth, and security. This follows Meta's deals with AMD and Arm, positioning it as a major Graviton customer amid rising CPU demand for AI workloads. Meta continues to bet heavily on Agentic AI, as the company has signed an agreement with Amazon to deploy AWS Graviton processors at scale. In late February, Meta signed a deal with AMD to deploy 6 gigawatts of AI hardware. In March, Arm released the Arm AGI CPU for Agentic AI, built in collaboration with Meta. The new partnership with AWS is another step in the same direction for the company. Recently, we have been seeing a significant shift in the compute demands for Agentic AI workloads. While GPUs remain the dominant force behind most AI workloads, CPUs are slowly gaining significance, thereby increasing demand. This is why Meta is now partnering with Amazon's AWS to deploy tens of millions of Graviton cores to its compute portfolio. The Graviton5 chip is a 192-core chip built on the AWS Nitro System and features a cache that is five times larger than its previous generation. AWS claims an "up to 33%" improvement in core-to-core latency, greater bandwidth, and the ability for Meta to run its own virtual machines without compromises to performance or security. The official Amazon AWS announcement goes on to claim that Graviton5 is built on a 3nm production process, with AWS controlling the entire production process from design to the final rollout. This translates into more granular control over the chip's performance, efficiency, compatibility, and optimization, which other competing Agentic AI chips can't match. The claimed performance improvement over the previous generation is 25%, though that will need to be verified by third-party sources first. 
Regardless, this deal is quite significant for both Meta and AWS as it makes Meta one of the largest Graviton customers in the world. We have recently seen Google and Intel join forces to deploy Intel Xeon processors for AI workloads, and now a similar deal has been struck here. Meta has also partnered with Broadcom to develop custom AI silicon and has multiple MTIA accelerators already deployed.

[14]

Meta strikes deal with Amazon's cloud unit to use its CPU chips - The Economic Times

Meta Platforms and Amazon.com on Friday said Meta will use Amazon Web Services' (AWS) Graviton5 central processing unit (CPU) chips, a deal an AWS executive told Reuters would span multiple years and be worth billions of dollars. Meta will use "tens of millions of cores" worth of Graviton chips. Each chip itself contains 192 cores, but they can each be assigned to different tasks. While graphics processing units (GPUs) made by firms such as Nvidia remain essential for training AI models, once they are trained and deployed they often run on CPUs. The CPU market is undergoing an AI-driven renaissance, with Intel saying this week CPU prices were rising as demand soars. AWS has been developing its in-house CPU since 2018 and is now on its fifth generation of the chip, which it buys directly from Taiwan Semiconductor Manufacturing Co. "We pass that savings on to the customers," Nafea Bshara, vice president and distinguished engineer at Amazon Web Services, told Reuters, saying the Meta deal would span multiple years and be worth billions of dollars. Meta has previously signed large chip deals with Nvidia and Advanced Micro Devices, and also has worked closely with Arm Holdings on Arm's new CPU. "As we scale the infrastructure behind Meta's AI ambitions, diversifying our compute sources is a strategic imperative," Santosh Janardhan, head of infrastructure at Meta, said in a statement.
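To put the core counts in perspective, here is a rough sketch of the arithmetic behind "tens of millions of cores" at 192 cores per chip. The deal's exact core count has not been disclosed, so the 10 million and 90 million figures below are illustrative bounds chosen for this example, not reported numbers, and `chips_needed` is a hypothetical helper name.

```python
CORES_PER_CHIP = 192  # Graviton5 core count reported in the coverage


def chips_needed(total_cores: int, cores_per_chip: int = CORES_PER_CHIP) -> int:
    """Smallest whole number of chips that supplies at least total_cores."""
    # Integer ceiling division avoids floating-point rounding.
    return (total_cores + cores_per_chip - 1) // cores_per_chip


# Assumed bounds for "tens of millions" (illustrative only, not disclosed figures).
for assumed_cores in (10_000_000, 90_000_000):
    print(f"{assumed_cores:,} cores -> {chips_needed(assumed_cores):,} chips")
```

Under those assumptions the deployment would involve somewhere between roughly fifty thousand and a few hundred thousand chips, which is why the coverage distinguishes cores from chips.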

[15]

Meta Is Adding Tens of Millions of AWS Graviton Cores To Its Compute Portfolio As Agentic AI Becomes "Almost As Big a CPU Story As A GPU Story"

Meta has partnered with Amazon's AWS to bring tens of millions of Graviton CPU cores to its AI compute portfolio for Agentic AI. As the Agentic AI era rages on, companies are rapidly expanding their AI infrastructure to meet rising compute demands. We have seen multi-gigawatt deals being signed here and there, and CPU usage is on the rise. With all of this happening, Meta has also announced its blockbuster partnership with Amazon's AWS. The new partnership centers around AWS's Graviton CPUs, which Meta will be using for its compute portfolio. In the announcement, Meta says that it will be adding "Tens of Millions" of AWS Graviton CPU cores. That's a huge amount of compute, with each of the latest Graviton5 chips packing 192 Arm Neoverse cores. The following are some of the key highlights of the partnership: With this, Meta will become one of Amazon's largest Graviton customers across the globe. And the use of Graviton chips also showcases just how essential CPUs have become for Agentic AI. CPU makers are seeing massive adoption and interest in their products. Intel, AMD, NVIDIA, Amazon, essentially every firm that makes a CPU is now being approached by AI firms to gain access to as many chips as possible.

"This isn't just about chips; it's about giving customers the infrastructure foundation, as well as data and inference services, to build AI that understands, anticipates, and scales efficiently to billions of people worldwide. Meta's expanded partnership, deploying tens of millions of Graviton cores, shows what happens when you combine purpose-built silicon with the full AWS AI stack to power the next generation of agentic AI." - Nafea Bshara, Vice President and Distinguished Engineer, Amazon

At the same time, AI firms are also preparing their own custom silicon, which will be used for AI. Meta has already partnered with Broadcom on the development of custom AI silicon, which will be used to power a "Multi-Gigawatt" ecosystem. 
Meta already produces several MTIA series accelerators, but with chipmakers such as TSMC, Samsung, and the rest being severely constrained, the next possible route is to go to chip makers who are already producing chips at these semiconductor firms, or just knock on the door of cloud AI providers. And the "tens of millions of cores" is just the first deployment. Meta aims to aggressively scale up its AI resources in the coming years, so as the AI ecosystem expands, we will see even more chips being added.

[16]

Meta Becomes One of World's Largest Customers of Amazon AI Chips | PYMNTS.com

The agreement with Amazon Web Services (AWS) will bring tens of millions of Graviton cores into Meta's compute portfolio, with the flexibility to add more, Meta said in a Friday (April 24) press release. The agreement builds on the companies' longstanding relationship and supports Meta's broader goal of diversifying compute to meet the demands of its AI systems, according to the release. "As we scale the infrastructure behind Meta's AI ambitions, diversifying our compute sources is a strategic imperative," Meta Head of Infrastructure Santosh Janardhan said in the release. "AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale." This announcement came a day after it was reported that Meta plans to lay off about 8,000 employees, or 10% of its workforce, and leave 6,000 open roles unfilled to offset the investments it has been making in AI infrastructure. PYMNTS reported in March that Meta plans to spend between $115 billion and $135 billion this year as it races to construct data centers, chips and other AI infrastructure. This level of spending puts it in the company of some of the biggest investors in AI infrastructure, including Amazon, Google and Microsoft. AWS announced the agreement in its own Friday press release, saying that the deal builds on Meta's longstanding relationship with AWS and use of Amazon Bedrock, a platform for building generative AI applications and agents, at scale to support its AI. The company added that purpose-built chips such as Graviton are the most efficient way to power agentic workloads like code generation, real-time reasoning and frontier model training. 
"This isn't just about chips; it's about giving customers the infrastructure foundation, as well as data and inference services, to build AI that understands, anticipates and scales efficiently to billions of people worldwide," Nafea Bshara, vice president and distinguished engineer at Amazon, said in the release. Amazon CEO Andy Jassy said in a 2025 Letter to Shareholders posted April 9 that Amazon's chips business will be much larger than most people think. The company's annual revenue run rate for its chips business, which includes Graviton, Trainium and Nitro, is over $20 billion and growing triple-digit percentages year over year. "If our chips business was a stand-alone business, and sold chips produced this year to AWS and other third parties (as other leading chips companies do), our annual run rate would be ~$50 billion," Jassy said.

[17]

Meta expands AWS partnership with large-scale deployment of graviton processors

Meta has finalized an agreement to deploy AWS Graviton processors at scale, marking a substantial expansion of its long-standing partnership with Amazon Web Services (AWS). The initial deployment will encompass tens of millions of Graviton cores, with provisions to scale further as Meta develops its next-generation artificial intelligence (AI) infrastructure. This agreement highlights a broader industry shift in how AI infrastructure is architected, balancing the established use of hardware alongside newly optimized processors for emerging workloads. While Graphics Processing Units (GPUs) remain foundational for training large AI models, the increasing prevalence of agentic AI -- autonomous systems designed to reason, plan, and execute complex workflows -- is generating massive demand for CPU-intensive infrastructure. Meta is utilizing the Graviton deployment to support these specific agentic workloads, which include: Purpose-built processors are currently viewed as the most efficient method for powering these CPU-bound operations at scale. To meet the demands of its frontier models, Meta's infrastructure will rely heavily on the AWS Graviton5 processor. Engineered specifically for high-performance computing, the chips offer several structural upgrades over previous generations: As AI computation demands escalate globally, both cost management and environmental impact have become central to infrastructure planning. AWS Graviton5 processors are manufactured using 3-nanometer chip technology. This smaller, more precise manufacturing process inherently yields more efficient processors. Because AWS oversees the entire pipeline -- from fundamental chip design to server architecture integration -- the hardware can be heavily optimized compared to off-the-shelf alternatives. Consequently, Graviton5 delivers up to 25% better performance than the previous generation while maintaining leading energy efficiency. 
This allows organizations like Meta to scale their AI operations and deliver personalized experiences globally while remaining aligned with corporate sustainability targets. Commenting on this, Nafea Bshara, Vice President and Distinguished Engineer, Amazon, said: This isn't just about chips; it's about giving customers the infrastructure foundation, as well as data and inference services, to build AI that understands, anticipates, and scales efficiently to billions of people worldwide. Meta's expanded partnership, deploying tens of millions of Graviton cores, shows what happens when you combine purpose-built silicon with the full AWS AI stack to power the next generation of agentic AI. Santosh Janardhan, Head of Infrastructure, Meta, said:


Meta has inked a major multi-billion dollar agreement with Amazon to deploy tens of millions of AWS Graviton CPU cores across 32 data centers over three years. The deal highlights a critical shift in AI infrastructure as agentic AI workloads drive unprecedented demand for CPUs, not just GPUs, exposing supply constraints across the industry.

Meta Amazon Deal Signals Major Shift in AI Infrastructure

Meta has signed a multi-billion dollar deal with Amazon Web Services to deploy tens of millions of AWS Graviton chip cores across its 32 data centers over the next three years, making the social media giant one of the five largest Graviton customers worldwide [1][3]. The agreement focuses explicitly on ARM-based CPUs rather than GPUs, marking a notable departure from traditional AI chip strategies. Amazon's Graviton 5 processors feature 192 Arm Neoverse V3 cores with roughly 180 MB of L3 cache, delivering a 25% performance lift over its predecessor and 33% lower inter-core latency [3][2]. While Amazon hasn't disclosed the full value, the timing brings more of Meta's cash back to AWS after the company signed a six-year, $10 billion deal with Google Cloud last August [1].

Source: Wccftech

Agentic AI Workloads Drive CPU Demand Beyond GPUs

The Meta Amazon deal underscores how agentic AI workloads are fundamentally reshaping chip requirements in AI infrastructure. While GPUs remain essential for training large AI models, agentic AI creates CPU-intensive workloads like real-time reasoning, writing code, search, and coordination involved in managing agents through multi-step tasks [1]. Amazon CEO Andy Jassy stated that agentic AI is "becoming almost as big a CPU story as a GPU story" [3]. These workloads involve branching control flow, tool invocation, sandbox execution, validation loops, and orchestration across many concurrent sub-agents, all tasks that fall on CPUs [3]. Meta's head of infrastructure, Santosh Janardhan, confirmed that "diversifying our compute sources is a strategic imperative" and that AWS Graviton allows the company to "run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale" [2].

Source: The Register

CPU Shortages Emerge as New Bottleneck in Data Centers

The surge in demand for AI chips, particularly CPUs, has exposed significant supply constraints across the industry. Intel's CFO David Zinsner revealed that CPU-to-GPU ratios in data centers have already shifted from 1:8 to 1:4, with ratios potentially converging to 1:1 or tilting further toward CPUs as workloads migrate toward inference and agentic AI [3]. Arm CEO Rene Haas quantified this shift, explaining that a typical AI data center today requires around 30 million CPU cores per gigawatt of capacity, but with agentic workloads, that figure rises to roughly 120 million cores per gigawatt, a fourfold increase [3]. Server CPU lead times have stretched to roughly six months, up from about two weeks before the agentic demand spike, while server CPU prices have climbed 10% to 20% since March [3]. AMD CEO Lisa Su acknowledged at the Morgan Stanley TMT Conference in March that "we're seeing a significant CPU demand, frankly, as a result of the inference demand picking up," adding that "the CPU portion of the business has actually far exceeded my expectations in terms of demand" [3].
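The ratios quoted by Zinsner and Haas can be turned into a quick back-of-the-envelope calculation. The sketch below only restates their figures as arithmetic; the function names and the 1,000-GPU and 1-gigawatt inputs are hypothetical values chosen for illustration, not numbers from any of the companies.

```python
# Figures attributed to Arm CEO Rene Haas in the text above.
CORES_PER_GW_TODAY = 30_000_000
CORES_PER_GW_AGENTIC = 120_000_000


def cpu_cores_for(gigawatts: float, agentic: bool) -> int:
    """CPU cores implied for a data center of the given capacity."""
    per_gw = CORES_PER_GW_AGENTIC if agentic else CORES_PER_GW_TODAY
    return round(gigawatts * per_gw)


def cpus_for_gpus(gpus: int, gpus_per_cpu: int) -> int:
    """CPUs implied by a 1:gpus_per_cpu CPU-to-GPU ratio."""
    return gpus // gpus_per_cpu


# Hypothetical 1 GW site: the agentic figure is four times the current one.
print(cpu_cores_for(1, agentic=True) // cpu_cores_for(1, agentic=False))

# Hypothetical 1,000-GPU cluster at the 1:8, 1:4 and 1:1 ratios Zinsner cited.
for gpus_per_cpu in (8, 4, 1):
    print(f"1:{gpus_per_cpu} -> {cpus_for_gpus(1000, gpus_per_cpu)} CPUs")
```

Moving from 1:8 to 1:4 doubles the CPU count for the same GPU fleet, and a 1:1 ratio would mean eight times as many CPUs as the 1:8 baseline.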

Compute Diversification Strategy Across Cloud Providers

Meta's agreement with AWS represents part of a broader compute diversification strategy as the company seeks flexibility across multiple chip vendors and cloud providers. The social media company already has GPU and accelerator contracts worth hundreds of billions across Nvidia, AMD, Broadcom, Google, CoreWeave, and Nebius [3]. In February, Meta revealed it was among the first to deploy Nvidia's standalone Grace CPUs at scale, and later announced plans to deploy Nvidia's new 88-core Vera CPUs [4]. In March, Arm revealed it worked closely with Meta to design its first branded datacenter silicon, the "AGI CPU", which packs 136 Neoverse V3 cores into a 300-watt part [4]. Meta is also developing its own in-house silicon, with work progressing on four iterations of its MTIA chip for AI and an expanded partnership with Broadcom to design and build the chips. Amazon's Nafea Bshara emphasized the symbiotic relationship between different chip types, stating that "the GPUs are useless if you don't have the CPUs next to them".

ARM-Based CPUs Gain Ground Against x86 Architecture

The Meta Amazon deal accelerates a broader industry transition toward ARM-based CPUs in AI infrastructure. Analysts at Counterpoint Research predict that by 2029, ARM-based CPUs will account for 90% of the AI ASIC server CPU market [4]. Counterpoint analyst David Wu noted that "while x86 architectures currently maintain a significant presence in AI server infrastructure, our generation-by-generation analysis suggests this established stronghold is swiftly transitioning toward proprietary Arm-based designs" [4]. This shift began with the launch of Nvidia's Grace CPUs in 2023, which have since replaced x86-based parts from Intel and AMD in many of Nvidia's GPU systems [4]. In December, AWS revealed it was swapping out Intel's CPUs in favor of its own in its Trainium 3 AI rack systems, and Google recently announced it would replace x86 chips found in its TPU clusters with its own Arm-based Axion chips [4]. Amazon CEO Andy Jassy has taken aim at Nvidia and Intel in his annual shareholder letter, saying that enterprises want better price-performance ratios for AI, and that he intends to win deals on that basis [1]. Most CPUs Amazon has deployed in its data centers in recent years have been Graviton processors, and Jassy recently said the company's silicon unit was on pace to generate $20 billion in sales over the course of a year.

Source: FoneArena


Summarized by Navi
