Authors Face Career-Ending AI Accusations as Publishing Industry Grapples With Trust Crisis

4 Sources

[1]

Thousands of AI-written, edited or 'polished' books are being sold - an eerie echo of Orwell's 'novel-writing machines'

At some point in the next several months, I am hoping to receive a modest check as a member of the class covered in the class-action settlement Bartz v. Anthropic. In 2025, the artificial intelligence company Anthropic, best known for creating the chatbot Claude, agreed to pay up to US$1.5 billion to thousands of authors after a judge ruled that the company had infringed upon their copyrights. When I first learned about the settlement, I assumed that Anthropic was primarily interested in teaching Claude about the subject of my stolen work, former socialist activist, British Labour politician and feminist Ellen Wilkinson. It did not initially occur to me that Claude might also be learning about how I, Laura Beers, political historian, craft my sentences and translate my voice to the page. Yet there is increasing evidence that chatbots like Claude can be trained not only to regurgitate an author's content, but also to mimic their voice. In March 2026, journalist Julia Angwin filed a class action suit against the owners of Grammarly, alleging that the company misappropriated her and other writers' identities to build its "Expert Review" AI tool, which offers to give editorial feedback in the voices of various authors, living and dead. That a machine might use my writing not only to learn about my subject matter, but also to analyze and ultimately mimic my authorial voice, points to a future that George Orwell envisioned with eerie prescience. In his 1949 dystopian novel "1984," Orwell imagined "novel-writing machines" capable of mass-producing literature, employing programmed mechanical "kaleidoscopes" as substitutes for individual artistic process.

'In place of a human mind, a statistical average'

To what extent, I wondered, could Claude mimic my own voice? I prompted the chatbot to write an essay "in the style of Laura Beers" about an early 20th-century politician. I struggled to recognize myself in the resulting prose.
But maybe Claude, having only consumed one of my books, didn't have enough to go on to really nail my tone. I decided to try again. This time, I would ask it to write a brief essay in the style of Orwell. The subject of my book "Orwell's Ghosts: Wisdom and Warnings for the Twenty-First Century," Orwell is one of the most distinctive literary essayists and prose stylists of the 20th century. Most of Orwell's work is in the public domain, meaning it could have legally been used to train Claude. I prompted Claude to write an essay "in the style of George Orwell about the threat to individual identity and freedom of thought posed by AI and particularly by the ability of 'novel writing machines' to produce literature." Primed with ample source material, the AI did a passable job: "Here is a type of comfort, familiar to anyone who has ever been slowly dispossessed of something, that consists not in denying the loss but in not yet noticing it. The frog does not leap from the gradually heated water. The citizen does not protest the gradual narrowing of the permitted thought. And the reader, it seems, does not mourn the novel until the novel has already been replaced by something that resembles it in every outward particular - same chapters, same characters, same approximate sequence of feeling - yet contains, in place of a human mind, a statistical average of all the human minds that came before it." The final sentence about the statistical average rings false. But Orwell would, I suspect, have liked the image of the slowly boiling frog. "Here is a type of comfort" is also a phrase that Orwell might well have written. I am skeptical that anyone would classify Claude's efforts as indistinguishable from Orwell's prose. But when it comes to machine-produced "literature," perhaps it doesn't really matter whether it can fully approximate original art, as long as it's good enough to function as entertainment and distraction for the masses. 
Jam, bootlaces and books

This was Orwell's own dispirited suggestion in "1984." With the help of "novel-writing machines," the employees of the Ministry of Truth - the government department responsible for controlling information and rewriting history - are able to mass-produce not only novels, but also "newspapers, films, textbooks, telescreen programmes [and] plays." They churn out "rubbishy newspapers containing almost nothing except sport, crime and astrology, sensational five-cent novelettes" and "films oozing with sex," along with cheap pornography intended for the "proles," as the uneducated working classes of Big Brother's Oceania were known. The technology disgusts Orwell's protagonist, Winston Smith, who pointedly decides to purchase a diary and pen to write down his own independent thoughts. But to Julia, Winston's nymphomaniac, anti-intellectual lover who works as a mechanic servicing the machines, "Books were just a commodity that had to be produced, like jam or bootlaces."

'Full-Length Novels in Seconds'

According to estimates, thousands of books for sale on Amazon have been written in whole or in part using AI. In other words, today's AI is also being used to mass-produce literature like jam or bootlaces. Many of these works are not fully machine-written. Instead, they've been, as the AI writing tool Sudowrite advertises, "polished by AI." With its "Rewrite" function, the company promises to give users an opportunity to "refine your prose while staying true to your style, with multiple AI-suggested revisions to choose from." The service is akin to the "touching up" provided by the Ministry of Truth's Rewrite Squad in "1984." Other books for sale on Amazon are, however, entirely machine-generated. The AI writing tool Squibler promises that if you give it an overarching prompt, it can produce "Full-Length Novels in Seconds."
The potential of AI-generated "literature" to turn a quick-and-easy profit ensures that readers will continue to encounter more of this content in the future, especially as AI's large language models become more refined. Already, studies have shown that readers cannot easily distinguish AI-generated forgeries from original prose. Last year, I had lunch with a screenwriter friend in Los Angeles. He told me that his colleagues are particularly nervous about the use of AI to produce sequels. Once you have an established cast of characters for a movie franchise like, say, "Fast & Furious," audiences will likely see the next installment whether it's written by man or machine. Yet my own brief experiments with Claude give me at least some hope for the future of literary art. A chatbot like Claude might be able to absorb and analyze "a statistical average of all the human minds that came before it," but without the input of actual human experience and sensibility, it is hard to envisage them ever producing true art. Whether AI can produce the next George Orwell novel or essay remains to be seen. That it can and will churn out an increasing volume of popular fiction and screenplays like "Fast & Furious 25" seems less in doubt.

[2]

How Authors and Readers Feel About the 'Shy Girl' Cancellation

Major publishing houses risk unwittingly putting out books generated with A.I. tools. Authors and readers are frustrated, nervous and grasping for solutions. Last fall, Antonio Bricio, an engineering consultant who lives in Guadalajara, Mexico, finished a draft of his first novel, a science fiction thriller about a government conspiracy to bury the history of humanity's first contact with alien refugees. After querying 20 literary agents and getting a string of rejections, he spent several months furiously revising it in hopes of one day landing a publisher. Now, Bricio worries that the already taxing process of getting a publishing deal as a debut author has become even more fraught. He fears that agents and publishers will avoid taking risks on unknown authors over concerns that they might have written the book using artificial intelligence. The panic and paranoia over A.I.-generated books exploded last month, when a major publisher, Hachette, decided to cancel the release of a horror novel, "Shy Girl," by Mia Ballard, in the United States over evidence suggesting that it had been partly produced by A.I. Hachette also pulled the book in the United Kingdom, where it released "Shy Girl" last year after Ballard initially self-published it. When Bricio learned about the novel's cancellation on social media, his stomach dropped. He said he does not use A.I. to write, except to occasionally translate a stray word or phrase from his native Spanish into English, in which he is also fluent, using the A.I. translation program DeepL. But he wondered what an A.I. detector would say about his work. So he paid for a subscription to Originality.ai and uploaded a chapter of his novel. The detector was 100 percent confident that he had used A.I. in some way. Bricio searched for the phrases that had tripped up the detector, deleted some sentences and reran it. This time, the program said it was 100 percent certain that a human had written it. 
Eventually, Bricio had a chat conversation with a customer service representative, who told him that if he received results that incorrectly flagged his work as A.I.-generated, he might need a different model of the program. The back and forth only left Bricio more unsettled. The Originality.ai reports on his draft, which he shared with The Times, showed that adding or deleting even just a few sentences produced wildly different results. "What if publishers or agents start running these A.I. tools on everybody?" Bricio said. "Everybody is going to walk on eggshells from now on." As the publishing industry wrestles with the intrusion of A.I. into nearly every aspect of the business, there seems to be little consensus over what publishers can or should do to regulate how writers use the technology. But many agree that the current state of affairs is untenable. A growing number of writers face unfounded suspicions of A.I. use. Others use A.I. without disclosing it. Many readers feel confused and wary, not knowing whether the books they're reading were written by a human or a machine. Quite a few self-published authors have been called out for obvious A.I. use and pilloried by readers and fellow writers as a result. But the "Shy Girl" controversy could prove to be a turning point for the entire book business. In the wake of the novel's cancellation, many readers and authors questioned how a major publishing company failed to catch signs of A.I. writing. Commenters on Goodreads and Reddit had complained for months about what they called obvious evidence of chatbot language. The scandal has prompted some readers to question how much publishing houses vet the work they acquire. "We're reaching this era of distrust, with no easy way to prove the veracity of your own writing," said Andrea Bartz, a thriller writer who was a lead plaintiff in the class-action lawsuit brought by authors against Anthropic, which agreed to a $1.5 billion settlement. 
Bartz recently put some of her own writing into Ace, an A.I. checker, and was startled when the program labeled her work as 82 percent A.I.-generated. The program then offered her a solution: "Would you like to humanize your text?" When Bartz wrote about her experience on Substack, dozens of writers chimed in. "I guess that's what happens when your books were stolen to program A.I.," the novelist Rene Denfeld commented, noting that an A.I. detection program had also falsely determined some of her writing to be A.I.-generated. "It's got to be a wake-up call for the industry," said Jane Friedman, a publishing consultant. Most major publishing houses don't have clear-cut rules around A.I. use for authors, operating instead on trust and the expectation that writers will be transparent. But with the many ways A.I. is seeping into book creation, from research to editing to composing sentences, there is confusion over which forms of A.I. use cross a line -- and a heightened fear that A.I. writing can, and will, steal past professional editors. When Rachel Louise Atkin, who reviews books on Goodreads, Instagram and TikTok for thousands of followers, first heard about "Shy Girl" on social media, it sounded like a book she would love -- a gripping and twisted feminist horror story. She devoured the book in a day and recommended it widely. She said she was shocked to learn that it had been pulled over evidence of A.I. use. "If I knew for definite that something was written with A.I., I would have avoided it," she said. "I think we should be able to make the choice if we want to read something that was written with A.I. or not." The book influencer Stacy Smith found "Shy Girl" on NetGalley, a site where readers can access books to review ahead of publication, and gave it a five-star review on Goodreads. She, too, was dismayed to learn of the accusations. "I would read books written with A.I., but I would like to know they were written by A.I.," she said. 
"It's the dishonesty that hurts." Authors, meanwhile, often feel threatened from all sides. The ever increasing number of books published each year, including those written with A.I., makes it more difficult for writers to find an audience in a fractured and oversaturated entertainment marketplace. On top of that, authors who steer clear of A.I. now feel pressure to prove their human bona fides -- with no great options for doing so short of live-streaming as they type. Some writers are adding a logo to their books and websites that says "human authored." The certification, offered by the Authors Guild, allows authors to attest that they wrote their books without using A.I. to generate or substantially shape prose. While the guild does not independently verify authors' claims, writers may be subject to trademark violation suits if they violate the logo's terms of use. A.M. Dunnewin, a self-published author of horror novels, registered for the certification and put the symbol on her website: "I thought, maybe having that certificate could be a safety net, letting people know that it's my work." Sarina Bowen, an author who has self-published some books and released others with major publishers, was accused of using A.I. to create the cover for one of her novels. It was a charge she easily refuted; the novel was published years before generative A.I. went mainstream. But now, she worries about the cover art she sources online -- which is a fairly common practice among self-published authors -- and whether an artist might have used the technology to produce it. "I don't know where we go from here, but that moment when I was accused of having an A.I. cover was really a downer," she said. "Everyone who publishes books is swimming in this world where we can't be sure of the origin of our content." Readers who picked up "Shy Girl" were among the first to spot signs of A.I. generation in its pages, and they clearly didn't like it. 
Some writers said they found that encouraging. "If they are going to spend money on a book, they want it to come from the author's brain and heart and not a computer that's robbed the writer's brain," said Laura Taylor Namey, who writes young adult fiction. "I applaud that." But others fear that more A.I.-generated books will slip through the cracks. And as technology improves, the telltale signs of chatbot prose might disappear. "I'm really not looking forward to the day when readers can't tell the difference," Bowen said.

[3]

We Talked to a Writer Accused of Publishing An AI-Generated Essay in The New York Times

Was AI used to produce a personal essay that wound up in the pages of the New York Times? The answer is complicated. The writer Kate Gilgan found herself at the center of a literary scandal last month when, on social media, another writer accused her of using AI to write an emotional first-person essay about the experience of losing custody of her young son at the height of her alcoholism. The piece had been published in the NYT's famously competitive "Modern Love" column back in October; the accusations were made without any hard evidence, and the writer who accused Gilgan of using AI, The Lit Mag's Becky Tuch, pointed only to the style of Gilgan's article as evidence. Others quickly piled on, and soon much of literary social media was swarming with speculation and analyses via AI content detectors (which, we should note, are known to be unreliable.) Gilgan is pretty offline, she told Futurism -- so it wasn't until journalists started asking her about the controversy that she realized there was one at all. "I'm actually not on Twitter or X or whatever that is," said Gilgan, who spoke to us from her home in the Western Canadian province of Saskatchewan. But she "wasn't that worried," she said, "because AI wasn't used to generate that content." That contention, it turns out, is a bit semantic. As Gilgan conceded to The Atlantic, she did make use of a variety of chatbots -- ChatGPT, Claude, Copilot, and Perplexity -- for conceptualizing and editing the piece, though she denied copying and pasting anything directly from an AI into her essay. The situation, in other words, is messy.
Though the AI accusations against her were unsubstantiated at first -- they were based simply on certain rhetorical devices that chatbot-generated writing is known to favor, and which the public is clearly starting to be on the lookout for -- it turned out that readers were right to be suspicious, since AI did have a prominent hand in the creation of the piece. The controversy comes at an intensifying moment for the literary world's ongoing struggle with AI. Institutional scandals continue to abound -- within the same two-week span as the allegations against Gilgan emerged, the publishing giant Hachette pulled a buzzy new horror novel over suspicion of substantial AI use, and the NYT cut all ties with a book critic after it was discovered that his usage of AI had resulted in the newspaper publishing a significantly plagiarized book review -- while some writers and journalists are starting to open up about their sometimes very extensive use of AI. To unpack it all, I wanted to talk to Gilgan myself -- about how she used AI, what it means when a machine becomes a collaborator in the creative process, and where writers should draw the line. In an interview, Gilgan maintained that the idea that she published AI slop in "Modern Love" is false. But she did use chatbots to help her craft a piece specifically for publication in the column, and there's no question that it ended up with the distinctive argot of AI. One thing was clear: AI use has turned into one of the most contentious topics in the literary community. "I was going back and reading a lot of my earlier pieces -- I guess, maybe intuitively, I was wondering, 'Oh, my God, has that happened? Has AI changed my voice?'" Gilgan told me. But "I don't think I actually worried about it, because I haven't used it to that extent." 
***

Gilgan began taking publication seriously about ten years ago, she told us, writing about extremely personal topics like an extramarital affair she'd had and her family's experience of being trapped in Bali during the pandemic. And even before that, about 15 years ago, she tried -- and failed -- to write a memoir about the same experience she later explored in her "Modern Love" piece: losing custody of her young son due to alcoholism. The problem? It wasn't any good, she said. "It was so full of self-pity and histrionic emotional grandeur; it was just awful," said Gilgan. "And so I stopped writing it and set it down... it just wasn't working." A few years ago, she decided she wanted to revisit the custody battle and her subsequent path to sobriety, but this time as a novel. "It gave me more freedom," said Gilgan. She finally finished her first draft about a year ago; the non-fiction essay published in "Modern Love," she says, was born from that. "This essay then came out of that novel," Gilgan said. Distilling it into a shorter essay, she thought, might help her get her book published. "I thought, 'Okay, I'm going to try and leverage this. I'm going to try and market the essay to try and help bring my book to publication.'" Gilgan was strategic. She turned to chatbots, which she says she started playing around with about two or so years ago, to help her craft her essay in a way that she believed would appeal to the NYT's "Modern Love" editorial staff. "Rather than sitting on Google reading through tons of other people's articles about how to get published in 'Modern Love' and 'here's what Dan Jones looks for,'" said Gilgan, referring to the column's longtime editor, "I asked AI, 'Okay, boil this down for me. Take everything -- every scrap of information on the internet that you can find -- to help me get this essay published in the Times.'" Gilgan used a mix of chatbots throughout the process, she said.
"We homeschool our kids, so we've always got laptops open around the house," she explained. "One will have ChatGPT on it, and one will have Copilot on it. Or if I'm using my cell phone, whatever happens to be on it is what I'll use. I don't have any go-tos." Though she holds that she didn't use AI to generate any "new ideas," as she put it, she says she did lean on the tech as a "first reader," by running and re-running her writing through chatbots and asking questions about how best to construct her piece to suit her mission: publication. "One of the bits of feedback I got from AI was, 'Okay, you're going to have to really focus on a tight story arc.' Okay, I need to do that. So if I get that feedback, I go back to my essay and I start rewriting, start shifting things around," said Gilgan. "There were a lot of questions I asked it about, 'Does this sound too histrionic? Am I just making my case that my ex-husband was the only problem?'" "I used it to help me stay rational and unemotional about a really emotional topic," she continued, adding that there's a "fine line in writing first-person narrative where you're relatable but you're not 'terminally unique' in your emotions -- I used [AI] to help me balance that." (In that way, Gilgan said, she leaned on AI the same way she asked questions of her Alcoholics Anonymous sponsor throughout the writing process; chatbots, she said, are almost like having her sponsor "available, on my phone with me" at all times.) This process, however, led to some accusing Gilgan of smuggling full-on undisclosed AI slop into the pages of the paper of record.
Asked what she makes of this indictment of her writing style, and if she believes leaning on AI for the "Modern Love" piece significantly altered her voice as a writer, she laughed that the backlash simply speaks to her technical ability -- and insisted that while her writing style has "matured" since she was first published in 2017, she doesn't believe AI has fundamentally transformed her writing. "At first it was like, 'Oh my god,'" she recounted. "And then it's like, 'But I'm just a technically proficient writer.'" "One of the issues seems to be things around disclosure: 'How much was AI used? Did it generate content?' My direct answer to that question is: no more so than an editor would generate content for me," Gilgan contended. "An editor is going to realistically rewrite a sentence or two for me. They're not going to insert a sentence into my piece, but they are going to rephrase. They're going to shift the wording. They're going to use some synonyms in there, that sort of thing. But they're not going to come up with a sentence all on their own. And it was the same with this."

***

In March, asked about the online controversy stemming from Gilgan's "Modern Love" essay, the NYT told Futurism that journalism at the newspaper "is inherently a human endeavor," and "that will not change." "As technology evolves, we are consistently assessing best practices for our newsroom," a spokesperson for the paper added. When we first started emailing, Gilgan referred to AI as a new "tool" in her workflow. She also compared AI to using a typewriter instead of a computer, or relying on a thesaurus. When I suggested that many writers might recoil from the characterization of chatbots as a "tool" like any other, given that it does have the capacity to both wholesale generate and drastically transform a piece of text in a radically different way than any previous technologies have been capable of, she insisted that AI can't replace the role of a human editor.
And if she didn't want to actually write, she added, she just wouldn't be a writer. "Is there a risk with AI? Absolutely," said Gilgan. "If I want to be lazy about my writing, yeah -- AI could do it all for me." But for the sake of her own sense of integrity, she added, "I hope I don't ever get that lazy that I just hand it over to AI."

[4]

A New Kind of Scandal Is Growing Online. It's Ruining Careers -- and Aimed at the Wrong Target.

There's actually a much bigger problem -- and it isn't chatbots generating books and articles. Over the past month, A.I. detection has been at the center of a series of controversies: Hachette pulled the horror novel Shy Girl by Mia Ballard after detectors flagged it as substantially A.I.-generated. The New York Times cut ties with a freelance book critic who admitted that an A.I. editing tool had regurgitated passages from a Guardian article into his draft. The Atlantic reported that a "Modern Love" column had been flagged as more than 60 percent A.I.-generated. In certain corners of social media, A.I.-detector screenshots are shared like mug shots, and pile-ons have the grim energy of public stonings. This may all seem understandable -- people want to know if what they're reading was generated by a bot, and some argue they deserve to know. However, such controversy narrows the issue of A.I.'s steady encroachment to one of process, rather than impact. Drawing a red line around using chatbots to generate prose may make it easier to ignore the way that the technology may be shaping writing before one even types a single word. And a culture of callouts, scandals, and fear may prevent media and publishing from wrestling with much thornier questions of authorship. At the center of many of these controversies is a company called Pangram, whose CEO, Max Spero, has become the go-to authority when A.I. authorship disputes erupt. On Twitter/X, where Spero calls himself a "slop janitor," a user flagged a Guardian sports journalist's writing as A.I.-generated. The publication responded that this was "the same style he's used for 11 years writing for the Guardian, long before LLMs existed. The allegation is preposterous."
Spero quote-tweeted the exchange with a Pangram time-series analysis of 871 articles by the journalist: "It's clear that he is increasingly relying on AI. In two weeks in February he churned out nine articles classified by Pangram as fully AI-generated. Receipts below." Or take Pangram's appearance in the Shy Girl cancellation. Readers on Reddit and YouTube had been flagging the horror novel as suspiciously A.I. for months, but then Spero ran the full manuscript and posted the result (78 percent A.I.-generated). Hachette pulled the book the day the Times piece ran. A story in the Atlantic soon followed. Spero was on LinkedIn, urging publishers to "strictly moderat[e] AI generated content" and "draft and enforce robust AI-use policy." A pattern emerges: The crowd suspects a problem, then Pangram validates the suspicion, stokes the mob, and sells the solution. The impulse to dismiss all this as a detector company drumming up business runs into an issue -- Pangram actually works way better than you might think. Brian Jabarian, a University of Chicago economist who conducted a rigorous independent evaluation of A.I. detectors, told me flatly, "This narrative that we shouldn't use A.I. detection doesn't seem to hold anymore." Jabarian's preprint, co-authored with Alex Imas and with no disclosed financial ties to the company, tested the tool across nearly 2,000 passages and found near-zero false-positive and false-negative rates on medium-to-long texts, the length of a typical op-ed or a verbose Amazon review. Independent benchmarks confirm that Pangram outperforms every other detector tested and is robust against "humanizers," or software designed to smuggle A.I. text past detectors. So when Spero posts a time-series chart of hundreds of articles showing when a journalist's output started sounding fishily like ChatGPT, I am inclined to believe it. That A.I. detection is finally catching up is, on balance, a Good Thing. 
A.I.-generated articles already far outnumber human ones. Social media is flooded with low-effort slop. According to Pangram's own research, a fifth of peer reviews submitted to the A.I. research conference ICLR are fully A.I.-generated, and 9 percent of American newspapers contain undisclosed bot use. In this A.I.-powered asphyxiation of the information ecosystem, Spero has positioned himself on social media as a folk hero hauling in the oxygen tanks. You can tag his company's bot on Twitter/X, and it will tell you whether a post is A.I. On Spero's social media to-do list: a "slop hunter of the week leaderboard." Pangram may be great for A.I. slop, but its performance probably varies in the wild. "If you copy-paste chunks of ChatGPT with minimal edits, then Pangram is fairly accurate," Tuhin Chakrabarty, an assistant professor of computer science at SUNY, told me. "If you significantly edit an A.I.-generated text, then it becomes human, and this is a harder problem in general." That matters because in the real world, A.I. use comes in a spectrum. In Pangram's newspaper study, for example, 86.5 percent of the chatbot use detected in opinion pieces at the Times, the Wall Street Journal, and the Washington Post was classified as "mixed," or some unknown entanglement of human and machine. Did the writer use Claude to help with transitions? Or generate an opinion piece fully formed from ChatGPT and slap a name on it? These distinctions matter. Pangram's latest version now outputs scores on a continuum and is making genuine progress on these gray-zone instances, but those cases remain far less validated than the extremes. To complicate matters further, not everyone may equally bear the burden of false accusations. Liam Dugan, a Ph.D. student at the University of Pennsylvania whose dissertation focuses on A.I. detection and has benchmarked the major commercial detectors, told me: "For most people, they might never, ever get a false positive. 
And for other people, the false positives are sort of disproportionately allocated on them because they just happen to write like A.I." Some A.I. detectors are more likely to flag non-native speakers of English. (According to a Pangram blog post, the company has largely solved this problem, but there is no independent audit of this assertion.) Apart from non-native speakers, there may be other subgroups of writers whose prose has the focus-grouped sheen of ChatGPT output. Opinion writing in major newspapers, in fact, comes to mind. Not only does A.I. keep improving, but humans are also beginning to speak and write like A.I., narrowing the gap that detectors rely on to make their calls. This makes A.I. detection inherently an arms race, so the performance of any given detector will likely fluctuate over time. Academics I spoke with all emphasized that the state of A.I. detection is much better today than it was in 2023 but cautioned against letting the narrative pendulum swing too far in the other direction. Jabarian told me, "Maybe we went from a world where people were not using detection because it was so bad, and now maybe people think it works all the time." And when the technicalities of A.I. detection collide with cancel culture, it does not lead anywhere productive. Take a dustup Spero found himself in a few weeks ago with the Wall Street Journal. Pangram's newspaper study identified specific op-ed writers, including three at the Journal. James Taranto, who edits those pages, responded with a combative piece. He ran the flagged articles through Pangram and got different scores, contacted the accused writers, and concluded that the accusations of "A.I.-generated" didn't hold up. The response is instructive for what it pursued and what it avoided. Taranto investigated Pangram's consistency and found enough variation to dismiss it. But quibbling over individual op-eds let him sidestep some uncomfortable introspection. 
Even if Pangram misfires on a given op-ed, the study's broader pattern -- that A.I. use is showing up across major newspaper opinion pages, his own included -- is impossible to argue with. He did not have to ask how his editorial oversight had failed to spot a discomfiting level of undisclosed A.I. use. Basically, he wrote a hit piece on the thermometer instead of asking why he had a fever. This incident reveals why chatbot callout culture leads nowhere. Spero called out Taranto; Taranto called out Spero. Nothing changed. "I think it may have been a mistake to name names," Spero told me. But the larger issue may be that when it comes to A.I.-assisted writing, red lines perhaps are being drawn around the wrong thing. On Substack recently, Nicholas Thompson, the CEO of the Atlantic, shared a writer's account of using Claude to build a custom editing rubric while instructing the A.I., "You are not a co-writer." Thompson called it "a cool example of how you can use A.I. to help your writing -- without relying on it for any actual writing." Elsewhere, he has deemed the practice of using chatbots to generate sentences "unethical and wrong." This is becoming the standard position: A.I. for everything upstream of prose is acceptable; A.I. for the prose itself is a betrayal. To understand why this is a problem, consider a simple exercise I ask reporters to perform when I run workshops on journalism and A.I. I hand each reporter one of two A.I.-generated research reports on collagen supplements -- same underlying studies, same data, different framing. Report A opens with positive clinical findings and mentions industry funding as a limitation. Report B opens with the funding-bias analysis and loudly labels which results are industry funded. Report A primes a "Does collagen work?" story. Report B primes a "Why you can't trust collagen research" story. 
To be clear, both are reasonable reads of the literature, but the reporters would write different stories because of how the A.I. decided to order the same information. In each case, their writing would sail through a detector. Meanwhile, a reporter who did her own research but asked an A.I. to clean up her prose might get flagged. She was arguably the least influenced of the three, yet the moral intuitions of writers seem to be that she most betrayed the craft. I understand that moral intuition and agree that passing off A.I.-generated prose as one's own breaks the writer-reader contract. (I also acknowledge the aesthetic and moral revulsion of generative A.I., full stop.) But what many in journalism seem most concerned about is what's at stake in newsmaking, that the perspectives of A.I. models shape writing and thus public opinion. A recent piece in Thompson's Atlantic called this prospect "terrifying" and proposed a suite of solutions: disclosure policies, editor training on A.I. tells, detection software, penalties for violators. Every recommendation targets prose, while the upstream-influence problem, something the author herself seemed most concerned with, received no actionable attention at all. It's all about process, not about impact. If the goal is writerly independence, then drawing the red line at writing protects the independence of the final product far less than one might hope. Rather, it protects a feeling of independence. And to be clear, I'm not saying that relying on A.I. for research is even bad. Yes, chatbots are unusually persuasive, and writers pick up model biases without even knowing it, but the baseline isn't some platonic ideal of a perfectly objective journalist. The question is, how does A.I.-based research either reinforce or counteract the biases in information-gathering processes that journalists already use? 
And Thompson's red line happens to align perfectly not only with what companies like Pangram can now measure and sell, but also with what vigilantes can police on social media. This troubles me because A.I. detection, even at its best, is going to be a moving target. Build a culture of accusation on that foundation, and you get something not only brittle but perhaps even falsely reassuring: a system that comforts readers and writers that the A.I. problem has been solved while harder questions go unasked.


The publishing world is in turmoil as AI detection tools flag thousands of books and articles, leading to cancelled deals and destroyed reputations. Major publishers like Hachette pulled books over AI suspicions, while authors discover their own work falsely flagged by unreliable detectors. The controversy reveals a deeper crisis about the future of authorship and creative writing in an AI-saturated landscape.

Publishing Houses Confront Wave of AI-Generated Books

The publishing industry is wrestling with an unprecedented crisis as AI in writing threatens to reshape the landscape of authorship. In March 2026, Hachette pulled the horror novel "Shy Girl" by Mia Ballard in both the United States and United Kingdom over evidence suggesting substantial AI use, marking what many consider a turning point for the entire book business [2]. The "Shy Girl" cancellation came after readers on Goodreads and Reddit complained for months about obvious chatbot language, raising questions about how major publishing houses vet acquired work.

Source: NYT

According to estimates, thousands of AI-generated books are already flooding the market, echoing the "novel-writing machines" capable of mass-producing literature that George Orwell imagined in his 1949 novel "1984" [1]. The New York Times also cut ties with a freelance book critic after discovering that his AI use had resulted in a significantly plagiarized book review [3].

Copyright Infringement Lawsuits Target AI Companies

Authors are fighting back through legal channels as AI companies face mounting copyright challenges. In 2025, Anthropic, the company behind the chatbot Claude, agreed to pay up to $1.5 billion to thousands of authors in the class-action settlement Bartz v. Anthropic after a judge ruled the company had infringed upon their copyrights [1]. Andrea Bartz, a thriller writer who was a lead plaintiff in the lawsuit, expressed concerns about an emerging "era of distrust, with no easy way to prove the veracity of your own writing" [2]. In March 2026, journalist Julia Angwin filed another class action suit against Grammarly's owners, alleging the company misappropriated writers' identities to build its "Expert Review" AI tool, which offers editorial feedback mimicking various authors' voices [1]. These legal battles highlight growing concerns that chatbots are trained not only to regurgitate content but also to mimic individual authorial voices.

Unreliable AI Detectors Threaten Innocent Writers

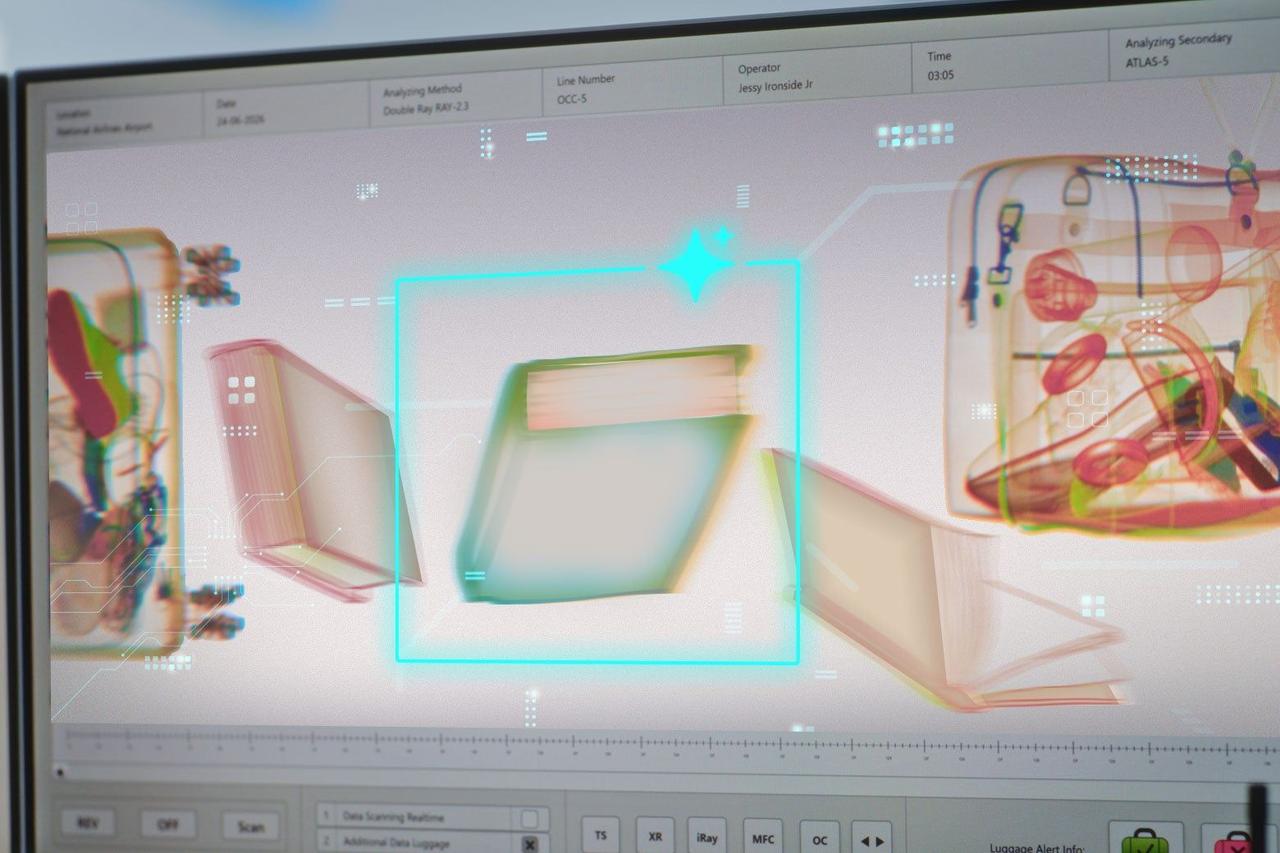

As accusations of using AI proliferate, authors discover that AI detection tools produce wildly inconsistent results that can destroy careers. Antonio Bricio, an engineering consultant working on his debut science fiction novel, paid for a subscription to Originality.ai and uploaded a chapter of his work. The detector initially showed 100 percent confidence that he had used AI, despite Bricio writing entirely by hand except for occasional word translations using DeepL [2].

Source: Slate

After deleting some sentences and rerunning the test, the same program declared 100 percent certainty that a human had written it. "Everybody is going to walk on eggshells from now on," Bricio said, fearing that publishers and agents might start running these tools on all submissions. Andrea Bartz experienced similar frustration when she put her own writing into Ace, an AI content detector, and was startled when the program labeled her work as 82 percent AI-generated [2]. Novelist Rene Denfeld commented that false positives might result from the fact that "your books were stolen to program A.I."

Modern Love Scandal Exposes Murky Boundaries

The case of Kate Gilgan reveals how complicated AI use in creative writing has become, blurring traditional definitions of authorship. Writer Becky Tuch accused Gilgan of using AI to write an emotional first-person essay about losing custody of her son that appeared in The New York Times' competitive Modern Love column in October, pointing only to the article's style as evidence [3].

Source: Futurism

While Gilgan initially insisted that "AI wasn't used to generate that content," she later conceded to The Atlantic that she used ChatGPT, Claude, Copilot, and Perplexity for conceptualizing and editing the piece, though she denied copying and pasting anything directly from AI into her essay [3]. Gilgan admitted she was strategic, using chatbots to help craft her essay in a way that would appeal to Modern Love editorial staff. The Atlantic reported that the column had been flagged as more than 60 percent AI-generated [4]. "I was going back and reading a lot of my earlier pieces -- I guess, maybe intuitively, I was wondering, 'Oh, my God, has that happened? Has AI changed my voice?'" Gilgan reflected.

The Future of Authorship Hangs in Balance

The publishing industry controversy has exposed fundamental questions about what constitutes authentic creative writing in an AI-saturated world. Most major publishing houses don't have clear-cut rules around AI use for authors, operating instead on trust and the expectation that writers will be transparent, but confusion reigns over which forms of AI use cross a line [2]. Jane Friedman, a publishing consultant, called the situation "a wake-up call for the industry." According to Pangram's research, a fifth of peer reviews submitted to the AI research conference ICLR are fully AI-generated, and 9 percent of American newspapers contain undisclosed bot use [4]. The controversy extends beyond simple process questions to deeper concerns about how AI may be shaping writing before authors type a single word. As one analysis noted, chatbots can be trained to analyze and mimic authorial voice, creating what amounts to "a statistical average of all the human minds that came before it" [1]. The current state leaves many readers feeling confused and wary, not knowing whether books they're reading were written by humans or machines, while writers face unfounded suspicions or use AI without disclosure.

References

Summarized by

Navi

[1]
