NVIDIA and Elon Musk race to put AI data centers in space as Earth-based limits bite

3 Sources

[1]

Elon Musk might be right -- here's why putting AI data centers in space isn't as crazy as it sounds

When we upload photos, stream movies and search Google, we often don't think about the consequences. "The cloud" has made the internet feel weightless, but AI is making the physical side of the internet impossible to ignore. As useful as it may be, AI does not just run on software. It runs on land, chips, power plants, cooling systems, transmission lines and data centers so massive they could be the size of 2,000 Walmarts. That's why some communities are now pushing back before these data centers are even built. That may also help explain why one of the strangest-sounding ideas in tech suddenly feels a lot less absurd: putting AI data centers in space. According to Reuters, Google is reportedly in talks with Elon Musk's SpaceX about launching orbital data centers as part of Project Suncatcher, Google's effort to test solar-powered, satellite-based AI cloud infrastructure using its own Tensor Processing Units. Yes, AI is getting so demanding that the answer is apparently... rockets? But the more you look at the problem, the more revealing the idea becomes.

AI is running into the real world

The unfortunate side of the AI boom is that AI's appetite for power, cooling and compute has become so extreme that space is now being discussed as a serious part of the infrastructure conversation. AI has been sold to us as software, but every query and every prompt has to run somewhere. Behind the scenes, AI depends on enormous data centers packed with specialized chips. Those chips use huge amounts of electricity and generate heat that has to be managed. As AI models become more powerful and more widely used, the demand for data center capacity is growing fast. According to the IEA's "Electricity 2024 - Analysis and Forecast to 2026" report (and subsequent updates in May 2026), global data center electricity consumption is projected to exceed 1,000 terawatt-hours (TWh) by the end of 2026.
This figure is roughly equivalent to the entire annual electricity consumption of Japan, which currently ranks as the world's fourth-largest economy. Communities are raising concerns about electricity demand, water use, emissions, land use and rising power bills. The fight over massive AI data center projects in places like Utah shows how quickly "the cloud" can become a local zoning, water and environmental issue.

Why space is appealing

The basic appeal of orbital data centers is solar power. In space, satellites can collect sunlight more consistently than solar panels on Earth. There are no clouds, no night in the same sense, and no local fights over using thousands of acres of land for power infrastructure. Another perk: there is no wildlife to displace. Data centers can disrupt wildlife by converting large stretches of habitat into industrial infrastructure, and in the case of Utah's proposed Stratos project, critics have warned that the 40,000-acre site near the Great Salt Lake could bring major ecological impacts, potentially including a dramatic rise in average temperatures. Google's Project Suncatcher is built around solar-powered satellites equipped with AI chips, linked together to create a kind of orbital AI cloud. The company has described its upcoming early-2027 mission as a learning test designed to see whether its hardware can survive and function in orbit. And while Elon Musk may be the most visible figure talking about AI data centers in space, he is no longer the only one. NVIDIA has announced space-focused AI computing platforms, including its NVIDIA Space-1 Vera Rubin Module, which it says is designed to bring AI compute to orbital data centers, geospatial intelligence and autonomous space operations. Meanwhile, Aetherflux, a startup originally focused on space-based power beaming, reportedly rebranded this week as Cowboy Space and is now pitching a constellation of orbital AI data centers.
Its approach appears to involve turning the upper stage of its planned rockets into the data center itself, rather than launching a separate dedicated satellite. Taken together, these moves suggest the space-based AI infrastructure race is no longer just a Musk moonshot; it may be the start of a much broader orbital data center boom, or even an early-stage bubble.

For AI companies, the dream is obvious

Just last week, Anthropic reportedly agreed to use SpaceX's Colossus 1 infrastructure and explicitly stated interest in gigawatts of orbital compute. It's a signal that the industry is hitting a physical wall. If space-based data centers can be scaled, they could ease the seemingly "impossible" constraints currently choking Earth-based facilities, offering:

* Less pressure on local power grids
* Less land conflict
* Less dependence on water-heavy cooling
* More access to continuous solar energy
* Fewer battles with communities that do not want giant data centers nearby

Everyone wants faster AI, better assistants and more powerful tools. Far fewer people want the infrastructure required to make those tools possible built near their homes.

Space creates new problems

Data centers on Earth already have to manage enormous amounts of waste heat. In space, there is no air to carry heat away, so orbital data centers would need highly advanced thermal systems, likely including large radiators, to keep the hardware from overheating. All of this adds enormous cost. The Wall Street Journal reported that launch costs remain a major obstacle, with current costs around $3,400 per kilogram and experts suggesting that space-based data centers would likely need launch costs closer to $200 per kilogram or less to make economic sense. There are other problems, too, such as radiation, satellite maintenance, high-speed communication, space debris, orbital congestion and the challenge of manufacturing and launching hardware at a scale that would actually matter for AI.
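To see why radiators weigh so heavily on the cost, here is a rough sketch based on the Stefan-Boltzmann law, which sets how much heat a surface can shed by radiation alone. The 1 MW heat load, the 300 K and 350 K radiator temperatures, the 0.9 emissivity and the single radiating face are illustrative assumptions, not figures from any company's design. The sketch also ignores sunlight and Earth's infrared falling on the radiator, both of which would make the required area larger.

```python
"""Rough radiator sizing for an orbital compute module (illustrative only).

Assumptions, not taken from any company's design:
- waste heat leaves only by thermal radiation (no air, so no convection)
- one radiator face sees deep space, treated as a sink near 0 K
- absorbed sunlight and Earth infrared are ignored
"""

STEFAN_BOLTZMANN_W_M2_K4 = 5.670374419e-8  # Stefan-Boltzmann constant


def radiator_area_m2(heat_w: float, radiator_temp_k: float,
                     emissivity: float = 0.9, sink_temp_k: float = 0.0) -> float:
    """Radiator area needed to reject `heat_w` watts at `radiator_temp_k`."""
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its sink")
    # Net radiated flux per square meter of radiating surface.
    flux_w_m2 = emissivity * STEFAN_BOLTZMANN_W_M2_K4 * (
        radiator_temp_k**4 - sink_temp_k**4
    )
    return heat_w / flux_w_m2


if __name__ == "__main__":
    # Hypothetical 1 MW of chip heat, radiator held at 300 K or 350 K.
    for temp_k in (300.0, 350.0):
        area = radiator_area_m2(1e6, temp_k)
        print(f"{temp_k:.0f} K: ~{area:,.0f} m^2 of radiator per megawatt")
```

Under these assumptions, a 300 K radiator needs roughly 2,400 m² per megawatt. Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks it quickly, but the electronics feeding it must then run hotter still.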
Astronomers are also worried. Space.com has reported that scientists have raised concerns about SpaceX's proposed plan for a massive constellation of orbiting AI data centers, warning that large numbers of bright satellites could interfere with astronomy and permanently change the night sky. So, no, space data centers are not an easy fix. While they may solve one set of problems, they could create others.

Musk's idea shows how extreme the AI race has become

Musk's space data center idea shows how much the AI race has come to mean to the U.S. The companies that dominate AI may not simply be the ones with the smartest models. They may be the ones with the most compute, the most power, the best chips, the biggest data centers and the ability to build infrastructure faster than everyone else. According to the Financial Times, SpaceX has reportedly struck major AI infrastructure deals, including one for Anthropic to use compute capacity at SpaceX's Colossus 1 data center in Tennessee. The Financial Times reported that the deal gives Anthropic access to more than 300 megawatts of compute capacity as AI companies scramble for the infrastructure needed to train and run their models. It's clear that AI is becoming an infrastructure race as much as anything else, and Musk is not the only one who sees it. Google's Project Suncatcher, SpaceX's orbital ambitions and the growing rush for data center power all point to the same uncomfortable truth: the next phase of AI may be limited by electricity, cooling, chips and physical capacity.

The takeaway

With so many people shouting "NIMBY" ("not in my back yard") about data centers, Elon Musk and others might be on to something, especially as AI becomes more integrated into our daily lives. Local fights over data centers are likely to grow, making nuclear power, natural gas, grid upgrades and now even space-based computing worth exploring. Musk's idea may sound extreme.
But the fact that companies are even discussing orbital data centers says a lot about where AI is headed.

[2]

Big Tech eyes orbital data centers for "near continuous" solar power

Across the globe, the rapid deployment of AI infrastructure is running up against physical limits. AI data centers are currently constrained less by technology than by access to power, water for cooling, and building permit approvals, with delays that in some cases now stretch to seven years. Orbital data centers offer a potential alternative, and they are moving from purely theoretical to technically feasible. While they won't meet every need, they could offer a way to bypass terrestrial bottlenecks once they come online, which is expected within the next 5-7 years.

Understanding AI data center constraints

The future growth of AI relies on access to sufficient compute power, delivered through global, large-scale data centers. Deploying this infrastructure depends on speed, but three key constraints are dramatically slowing down data center construction.

Given their enormous energy needs, availability of power is the first critical bottleneck. For example, EU data centers are expected to represent 4% of the region's electricity demand (~108 TWh) by 2030, more than the current annual electricity consumption of the Netherlands. Power constraints are dominating data center-building timelines, especially in major hubs. In Northern Virginia, USA, new-connection waits can be up to seven years.

Thermal management introduces the second crucial constraint. Water-based cooling systems substantially increase local water consumption, and water stress affects numerous data center regions, creating operational and reputational risks for developers.

Finally, regulatory hurdles compound these physical limitations. Community resistance to data centers has grown, dramatically extending project timelines and increasing stakeholder management costs.

These constraints matter because AI economics reward speed. AI model generations turn over every 12-18 months, so infrastructure that arrives after the model-refresh cycle delivers diminished returns.
Developers are therefore looking for new options to overcome these challenges, including orbital data centers.

What are orbital data centers?

Orbital data centers are compute hardware (processors, memory, storage) hosted by satellites in Low Earth Orbit (LEO), at altitudes of 400-1,400 km above the Earth's surface, where they circle the Earth every 90-120 minutes. A recent demonstration successfully tested an H100-class GPU payload in space, marking a tangible step toward space-based AI infrastructure.

It is important to understand that the vision for orbital data centers is not hyperscale facilities in space. Rather, it's a modular, networked layer of satellites designed for workloads where orbit provides structural advantages, such as near-continuous solar exposure for power, a passive thermal environment for cooling, lower communication latency than deep space deployments, proximity to space-generated data, and/or geopolitical resilience. For workloads where these factors matter more than millisecond latency, LEO satellites offer a way to bypass terrestrial bottlenecks.

The critical building blocks for orbital data centers

Even as the hardware advances, the success of orbital data centers requires systems engineering rigor across six important building blocks:

1. Continuous solar power at scale

Certain orbital regimes (e.g., dawn-dusk Sun-synchronous orbits) can provide near-continuous solar exposure. However, high-specific-power, radiation-tolerant solar arrays and resilient energy storage are needed to handle transients and contingency eclipse events.

2. Effective thermal management

Even for satellites illuminated by the Sun, a few minutes of shadow occur during each orbit, leading to a temperature spread from +120°C to -250°C. Thermal management, both within the satellite and in releasing heat into space, is therefore critical.
Efficient thermal management, including heat spreading, conservative power density, and intelligent workload scheduling, becomes key to performance.

3. Resilient, modular compute platforms

Radiation hardening, redundancy, and autonomous operation are baseline requirements. Because AI economics depend on a regular cadence of hardware refreshes, platforms need upgrade pathways, swappable units, and servicing strategies to maintain high utilization rates.

4. High-throughput network links

Data needs to move efficiently from orbital data centers. For non-geostationary modules, optical inter-satellite links are needed to exchange data before transmitting it to Earth. Robust, scalable ground gateways are also required to receive large data volumes and route insights securely.

5. Reusable heavy-lift access to bring down launch costs

Launch costs currently account for about 40% of total required investment. Reusable launch systems like SpaceX's Starship, which targets sub-$100/kg versus historical rates of $2,000-$10,000/kg, could fundamentally reshape orbital data center economics by making deployments at scale commercially viable.

6. In-orbit assembly and servicing

Large orbital data centers require robotic assembly of modular units and periodic hardware refresh. This mandates standardized docking interfaces and autonomous operations to scale. Minimizing these in-orbit services may help reduce time to market but may increase the number of satellites needed.

How users will adopt orbital compute

Orbital data centers may sound like science fiction, but their adoption is likely to be more pragmatic than visionary.
Rather than fully migrating to space, operators will deploy a new data channel (much like the one emerging in mobile communications with OneWeb, Starlink, and Kuiper) and use orbital capacity only where it removes a greater bottleneck than it introduces, especially in three areas:

Understanding the challenges and opportunity

While orbital data centers provide a tangible opportunity for AI, constraints remain. First, the combined economics of launch costs, platform mass, utilization rates, and operational lifetime must compare favorably with the cost of terrestrial delays due to power, water, and permitting issues. Second, autonomous fault management, debris management, credible servicing pathways, and upgrade strategies will determine how often hardware requires refreshing and therefore the effective cost per compute hour. Third, orbital availability, spectrum allocation, and cybersecurity frameworks will shape deployment speed, permissible actors, and operational boundaries. Finally, as mentioned previously, latency limits the types of workload that can be deployed in space.

Combining terrestrial and orbital data centers

Increasingly, the data center industry's constraints are not about technology. Orbital compute will not eliminate every bottleneck, but for specific workload types, it offers a way to avoid power queues, heat limits, and permitting timelines by converting physical constraints into architectural opportunities.

This article was produced as part of TechRadar Pro Perspectives, our channel to feature the best and brightest minds in the technology industry today. The views expressed here are those of the author and are not necessarily those of TechRadarPro or Future plc.

[3]

NVIDIA Silently Builds an Orbital AI Empire With 5 Partners, Racing Elon Musk to Put Datacenters in Space

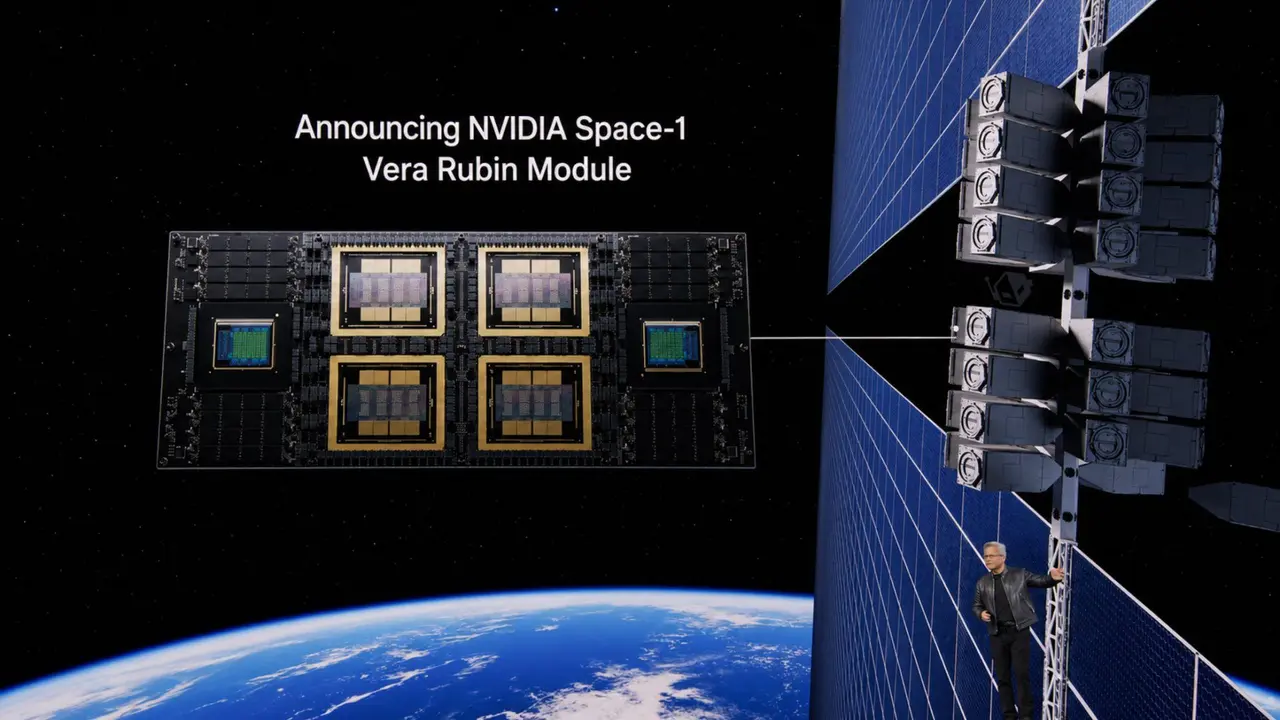

NVIDIA is reaching for the stars, literally, as the company plans to build future AI datacenters in outer space, with terrestrial resources becoming a major constraint. Outer space is becoming the new real estate gold mine for AI datacenters. Building data centers in space can be costly, but as commercial launch vehicles such as SpaceX's Starship and Falcon Heavy become viable, companies are seeing outer space as the new frontier for scaling out their AI capacity. AI datacenters being built on Earth today are worth billions of dollars, and the industry as a whole is spending trillions to get these facilities up and running for its compute needs. Not only is the construction cost high, but the power and cooling requirements are also massive, and these facilities can harm the surrounding flora and fauna. Recently, there has been major backlash against data centers, which are gobbling up local water supplies at an unprecedented rate and pushing up power bills in the towns where they are based, affecting communities and even entire countries. In a sense, then, AI datacenters are a burden not just on everyone around them but also on the planet. NVIDIA aims to sidestep this issue by sending AI datacenters into space. For this purpose, NVIDIA is working with five major firms: Starcloud, Planet Labs, Kepler Communications, Firefly Aerospace, and Sophia Space. Last year, NVIDIA announced that Starcloud, part of its Inception program for startups, would launch an AI-equipped satellite into Earth orbit. Starcloud has proposed a 5-gigawatt orbital data center spanning 4 square kilometers, featuring very large solar arrays for power and using the vacuum of space for cooling. With this approach, NVIDIA projects 10x lower energy costs than Earth-based AI data centers. Proponents argue that power and cooling in space are effectively unlimited, which would solve the two biggest constraints.
Since then, NVIDIA has unveiled its Space-1 Vera Rubin module for data-center-class AI at scale. The module is designed specifically for space, featuring a tightly integrated GPU-CPU design with a high-bandwidth interconnect, and is powered entirely by solar energy. Some features of the NVIDIA Space-1 Vera Rubin module include:

But NVIDIA isn't alone in the race to build AI data centers in outer space. Elon Musk's SpaceXAI has also partnered with Anthropic to build a multi-gigawatt "orbital" AI data center. SpaceXAI believes that an orbital compute installation will address some of the major bottlenecks of terrestrial systems, such as power, land, and cooling. With unlimited power and cooling, AI chips would then become the major bottleneck. Launch logistics could be another: moving many hundreds of thousands of GPUs into orbit on commercial launches will be a major undertaking in itself. But at the pace AI is moving right now, the orbital AI datacenter is looking less like a dream and more like a reality that could arrive very soon.
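A back-of-the-envelope way to relate array size to power output is to multiply the sunlight falling on the array by its conversion efficiency. The sketch below assumes continuous illumination at the solar constant near Earth (about 1,361 W/m²) and a single end-to-end efficiency; the efficiencies tried are hypothetical, and none of the numbers come from NVIDIA or Starcloud.

```python
"""Rough solar-array sizing for an orbital data center (illustrative only).

Assumptions, not taken from NVIDIA or Starcloud:
- the array faces the Sun continuously (e.g. a dawn-dusk sun-synchronous orbit)
- incident flux equals the solar constant near Earth, about 1,361 W/m^2
- one end-to-end efficiency covers cell efficiency and all conversion losses
"""

SOLAR_CONSTANT_W_M2 = 1361.0


def array_power_w(area_m2: float, efficiency: float) -> float:
    """Electrical power delivered by a sun-facing array of `area_m2`."""
    return SOLAR_CONSTANT_W_M2 * area_m2 * efficiency


def array_area_km2(power_w: float, efficiency: float) -> float:
    """Sun-facing array area, in km^2, needed to deliver `power_w` watts."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return power_w / (SOLAR_CONSTANT_W_M2 * efficiency) / 1e6  # m^2 -> km^2


if __name__ == "__main__":
    target_w = 5e9  # the 5-gigawatt figure cited for Starcloud's proposal
    for eff in (0.2, 0.3, 0.4):  # hypothetical end-to-end efficiencies
        print(f"efficiency {eff:.0%}: ~{array_area_km2(target_w, eff):.1f} km^2")
```

Under these assumptions, 5 GW of electrical output corresponds to roughly 9 to 18 km² of sun-facing array for end-to-end efficiencies between 40% and 20%; the area scales linearly with the power target.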


NVIDIA is partnering with five space companies to build orbital AI infrastructure, while Elon Musk's SpaceXAI teams with Anthropic for multi-gigawatt space facilities. The push comes as terrestrial data centers face severe power, water, and land constraints—with some projects facing seven-year permit delays.

NVIDIA Quietly Builds Orbital AI Infrastructure

NVIDIA is advancing plans to deploy AI data centers in space through partnerships with five companies: Starcloud, Planet Labs, Kepler Communications, Firefly Aerospace, and Sophia Space [3]. The chip giant unveiled its Space-1 Vera Rubin module, designed specifically for data-center-class AI at scale in orbit, featuring a tightly integrated GPU-CPU design with a high-bandwidth interconnect and powered entirely by solar energy [3]. Starcloud, part of NVIDIA's Inception program, has proposed a 5-gigawatt facility spanning 4 square kilometers, featuring very large solar arrays and leveraging the vacuum of space for cooling [3].

Source: Wccftech

Elon Musk and Tech Giants Join the Space Race

Elon Musk's SpaceXAI has partnered with Anthropic to build multi-gigawatt orbital data centers, with Anthropic reportedly agreeing to use SpaceX's Colossus 1 infrastructure and expressing interest in gigawatts of orbital compute [1][3]. Google is also reportedly in talks with SpaceX about launching orbital facilities as part of Project Suncatcher, an effort to test solar-powered, satellite-based AI cloud infrastructure using its own Tensor Processing Units [1]. Aetherflux, a startup focused on space-based power beaming, recently rebranded as Cowboy Space and is pitching a constellation of orbital AI data centers built by turning rocket upper stages into the facilities themselves [1].

Source: Tom's Guide

Terrestrial Data Center Challenges Drive Space Push

The shift toward space-based AI reflects mounting resource constraints on Earth. Global data center electricity consumption is projected to exceed 1,000 terawatt-hours by the end of 2026, roughly equivalent to Japan's entire annual consumption [1]. In Northern Virginia, new power connection waits can stretch up to seven years [2]. EU data centers are expected to represent 4% of the region's electricity demand by 2030, exceeding the Netherlands' current annual consumption [2]. Communities are pushing back against facilities that consume thousands of acres and massive water supplies for cooling, with environmental and social challenges becoming zoning and regulatory battles [1].
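Annual energy totals are easier to compare with the gigawatt-scale orbital proposals once they are expressed as average power. The sketch below does that conversion for the 1,000 TWh projection and also backs out the total EU demand implied by the ~108 TWh (4%) figure quoted in the TechRadar piece. It is plain arithmetic and assumes a 365-day year.

```python
"""Convert annual electricity totals into average continuous power.

Plain arithmetic: 1 TWh = 1,000 GWh, and a 365-day year has 8,760 hours.
"""

HOURS_PER_YEAR = 365 * 24  # 8,760; leap years ignored


def average_power_gw(annual_twh: float) -> float:
    """Average power draw (GW) implied by an annual total in TWh."""
    return annual_twh * 1_000 / HOURS_PER_YEAR


def implied_total_twh(part_twh: float, share: float) -> float:
    """Total demand implied when `part_twh` makes up `share` of it."""
    if not 0.0 < share <= 1.0:
        raise ValueError("share must be in (0, 1]")
    return part_twh / share


if __name__ == "__main__":
    print(f"1,000 TWh/yr is ~{average_power_gw(1_000):.0f} GW around the clock")
    print(f"108 TWh at 4% implies ~{implied_total_twh(108, 0.04):,.0f} TWh of EU demand")
```

By this arithmetic, 1,000 TWh a year works out to an average draw of roughly 114 GW, and 108 TWh at a 4% share implies total EU demand of about 2,700 TWh.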

How Orbital Data Centers Solve Earth's Bottlenecks

Orbital data centers hosted in Low Earth Orbit, at altitudes of 400-1,400 km, can draw near-continuous solar power in specific orbital regimes such as dawn-dusk Sun-synchronous orbits [2]. NVIDIA projects 10x lower energy costs than Earth-based facilities, with power and cooling described as essentially unlimited in space [3]. These facilities could relieve pressure on local power grids and reduce land conflicts, dependence on water-heavy cooling, and battles with communities opposed to massive infrastructure projects [1]. They could offer a way to bypass terrestrial bottlenecks once they come online, which is expected within the next 5-7 years [2].

Source: TechRadar
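The 90-120 minute orbital period quoted for this altitude band follows from Kepler's third law for a circular orbit, T = 2π√(a³/μ), where a is Earth's radius plus the altitude and μ is Earth's gravitational parameter. A minimal sketch, treating Earth as a point mass (no oblateness or atmospheric drag) and using standard values for μ and the equatorial radius:

```python
"""Orbital period of a circular orbit from Kepler's third law.

Treats Earth as a point mass (no J2 oblateness, no drag), which is adequate
for a rough check of the 400-1,400 km LEO band.
"""
import math

MU_EARTH_KM3_S2 = 398_600.4418  # Earth's gravitational parameter
R_EARTH_KM = 6_378.137  # equatorial radius (WGS 84)


def period_minutes(altitude_km: float) -> float:
    """Period of a circular orbit at `altitude_km` above the equatorial radius."""
    a_km = R_EARTH_KM + altitude_km  # orbital radius = semi-major axis
    return 2 * math.pi * math.sqrt(a_km**3 / MU_EARTH_KM3_S2) / 60


if __name__ == "__main__":
    for h in (400, 800, 1_400):
        print(f"{h:>5} km: {period_minutes(h):.1f} min per orbit")
```

Both ends of the 400-1,400 km band land inside the quoted range, at roughly 93 and 114 minutes.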

Technical Hurdles and Timeline Realities

Success requires engineering across six critical areas: continuous solar power at scale; effective thermal management that can handle temperature spreads from +120°C to -250°C; resilient compute platforms with radiation hardening and autonomous operation; high-throughput network links, including optical inter-satellite connections; reusable heavy-lift launch to reduce costs; and in-orbit assembly and servicing [2]. Launch costs currently account for roughly 40% of total investment, but reusable systems like SpaceX's Starship, which targets sub-$100 per kg versus historical rates of $2,000-$10,000 per kg, are reshaping the economics [2]. A recent demonstration successfully tested an H100-class GPU payload in space, marking progress toward space-based AI infrastructure [2]. Google's early-2027 mission is designed as a learning test to determine whether its hardware can survive and function in orbit [1].
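The launch-cost figures quoted in these sources can be compared directly. This sketch prices a hypothetical 100-tonne module at the WSJ's current (~$3,400/kg) and break-even (~$200/kg) estimates and at Starship's sub-$100/kg target, then applies the TechRadar piece's 40% launch share to an illustrative $1 billion budget. The module mass, the budget, and the assumption that only the launch portion changes are simplifications for illustration.

```python
"""Launch-cost comparison for a hypothetical orbital compute module.

Per-kilogram prices are the figures quoted in the sources; the module mass,
the $1B budget, and the "only launch costs change" assumption are illustrative.
"""

PRICES_USD_PER_KG = {
    "current (WSJ)": 3_400,
    "break-even estimate (WSJ)": 200,
    "Starship target": 100,
}


def launch_cost_usd(mass_kg: float, usd_per_kg: float) -> float:
    """Cost to launch `mass_kg` at a flat per-kilogram price."""
    return mass_kg * usd_per_kg


def rescaled_budget_usd(total_usd: float, launch_share: float,
                        old_usd_per_kg: float, new_usd_per_kg: float) -> float:
    """Budget after a price change, scaling only the launch portion."""
    launch_usd = total_usd * launch_share
    other_usd = total_usd - launch_usd
    return other_usd + launch_usd * (new_usd_per_kg / old_usd_per_kg)


if __name__ == "__main__":
    mass_kg = 100_000  # hypothetical 100-tonne module
    for label, price in PRICES_USD_PER_KG.items():
        cost_m = launch_cost_usd(mass_kg, price) / 1e6
        print(f"{label:>26}: ${cost_m:,.0f}M to orbit")
    # Launch is 40% of a hypothetical $1B budget at $3,400/kg; price falls to $200/kg.
    print(f"budget: ~${rescaled_budget_usd(1e9, 0.40, 3_400, 200) / 1e6:,.0f}M")
```

With these inputs, launching the module drops from about $340M to $20M, and the illustrative budget falls from $1B to roughly $624M, since the other 60% is unchanged.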

Summarized by Navi
